| Version | Summary | Created by | Modification | Content Size | Created at |
|---|---|---|---|---|---|
| 1 | | Razvan Adrian Covache-Busuioc | -- | 1550 | 2023-10-31 09:48:50 |
| 2 | | Jessie Wu | Meta information modification | 1550 | 2023-11-01 02:15:26 |

Toader, C.; Eva, L.; Tataru, C.; Covache-Busuioc, R.; Bratu, B.; Dumitrascu, D.; Costin, H.P.; Glavan, L.; Ciurea, A.V. Technological Synergies in Cranial Base Surgery. Encyclopedia. Available online: https://encyclopedia.pub/entry/50966 (accessed on 26 March 2026).


Toader, C., Eva, L., Tataru, C., Covache-Busuioc, R., Bratu, B., Dumitrascu, D., Costin, H.P., Glavan, L., & Ciurea, A.V. (2023, October 31). Technological Synergies in Cranial Base Surgery. In Encyclopedia. https://encyclopedia.pub/entry/50966


The genesis of Anterior Skull Base (ASB) surgery as a distinct field is anchored in the innovations of the 1940s. Dandy’s instrumental contributions are emblematic of this era, particularly his surgical strategy via the anterior cranial fossa for the excision of orbital tumors and his subsequent expansion of the resection to incorporate the ethmoidal regions.

cranial base surgery

minimally invasive techniques

intraoperative neuromonitoring

advanced imaging

robotics in neurosurgery

radiosurgery

gamma knife

cyberknife

1. Endoscopy in the New Era: Advanced Imaging, Robotic Assistance, and Augmented Reality Overlays

Advancements in skull base surgery are increasingly leveraging the capabilities of virtual reality (VR) and augmented reality (AR). For instance, color-coded stereotactic VR models can be custom-tailored for individual surgical cases, providing a simulated operating field for surgeons and trainees [1]. These models offer invaluable opportunities for surgical education and preoperative simulations. Furthermore, VR technology can be integrated into real-time operative settings by overlaying 3D images onto microscopic or endoscopic views, thus enhancing spatial navigation capabilities for the surgeon [2].

AR technology appears to offer particular benefits to less experienced medical professionals. These systems serve not just as educational tools but also as potential substitutes for existing neuronavigation technology. AR can offer both contextual information about underlying structures and direct patient perspectives, potentially revolutionizing conventional neuronavigation systems [3].

Beyond surgery, AR also has applications in non-surgical and clinical management at the skull base. For example, it is used for ablating damaged nasal tissue and offers guidance on basic surgical plans and navigational protocols [4]. In cranio-maxillofacial procedures, AR plays a significant role in reconstructing cheekbones and offering data on the underlying structure, albeit without the capability for real-time modifications [5]. Many AR applications superimpose precollected, immersive data onto real endoscopic camera images. However, fields that lie outside the endoscopic view remain hidden to the medical team, necessitating further adaptations to fully realize the technology’s potential.

Moreover, the application of Augmented Reality in clinical settings, particularly in the management of base-of-the-skull pathologies, has been gaining significant attention in the medical community, as evidenced by multiple academic conferences exploring its potential [4][5]. A specific clinical model has been proposed, offering an extended observational perspective of the area under examination [6]. In this model, endoscopic images are displayed centrally, while the projection external to the endoscopic field of view is rendered virtually, utilizing pre-existing computerized tomography data. Such an integrated AR framework suggests that, following technological advancements and methodological refinements, AR applications may become increasingly prevalent across a broader spectrum of clinical scenarios necessitating heightened alertness and precision [7].

When it comes to the design of an ideal AR device for clinical applications, certain rigorous criteria must be met to ensure its functional efficacy and safety. The system should feature a focus marker and device alignment capabilities that are intuitive and minimally intrusive, particularly for the medical professional using it. Calibration adjustments should be undertaken before the initiation of the clinical procedure to minimize undue burden or cognitive load on the healthcare provider [8].

Furthermore, conventional imaging techniques that focus solely on two-dimensional visual data may suffer from limitations in perceived depth, thereby potentially compromising the practitioner’s situational awareness and decision making accuracy. To mitigate such limitations, it is advisable to incorporate depth cues to enhance the perceptual veracity of the rendered images [9]. Additionally, in applications where virtual 3D objects are superimposed onto endoscopic images, it becomes imperative to maintain parallax when the viewing position changes in order to preserve spatial relationships and depth perception.
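The parallax requirement can be illustrated with a simple pinhole-camera model. The following is a minimal sketch under stated assumptions, not a description of any particular AR system: the `project` and `parallax_shift` helpers, the focal length, and the depths in millimetres are all hypothetical values chosen for illustration. It shows that when the viewpoint translates sideways, a virtual object close to the camera must shift further across the image than a distant one if the overlay is to keep its depth cue.

```python
def project(point, camera_x, focal_length=1.0):
    """Pinhole projection of a 3D point (x, y, z) seen from a camera
    translated by camera_x along the x axis and looking down +z."""
    x, y, z = point
    return (focal_length * (x - camera_x) / z, focal_length * y / z)


def parallax_shift(point, camera_dx, focal_length=1.0):
    """Horizontal image shift of a point when the camera moves by camera_dx."""
    before = project(point, 0.0, focal_length)
    after = project(point, camera_dx, focal_length)
    return after[0] - before[0]


# Hypothetical virtual landmarks at two depths (millimetres from the lens).
near_structure = (0.0, 0.0, 20.0)
far_structure = (0.0, 0.0, 60.0)
camera_dx = 2.0  # assumed sideways movement of the endoscope tip

near_shift = parallax_shift(near_structure, camera_dx)
far_shift = parallax_shift(far_structure, camera_dx)

print(f"near shift: {near_shift:.4f}")  # -0.1000
print(f"far shift:  {far_shift:.4f}")   # -0.0333
```

An overlay that moved both landmarks by the same amount would flatten the scene; re-projecting each virtual object from the tracked camera pose on every frame is one way to keep the shifts depth-dependent.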

In terms of data presentation, meticulous attention must be devoted to the structural layout of the AR interface. Inadequate design considerations can obscure critical information or induce visual discomfort, thereby diminishing the user experience and potentially compromising clinical outcomes. Therefore, it is essential to engage in an iterative design process, incorporating user feedback and empirical data, to optimize the AR interface and data presentation for the specialized needs of clinical practice.

2. Data-Driven Neurosurgery: Machine Learning, AI-Assisted Diagnosis, and Surgical Planning

The application of Radiomics in oncological diagnostics has emerged as a transformative approach in recent years, particularly in the preoperative assessment of various neoplastic conditions including prostate cancer, lung cancer, and an array of brain tumors such as gliomas, meningiomas, and brain metastases [8][9][10]. Unlike traditional diagnostic methodologies, which rely predominantly on qualitative assessments made by radiologists based on “visible” features, Radiomics facilitates the quantitative extraction of high-dimensional features as parametric data from radiographic images [11][12].
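To make "quantitative extraction of features" concrete, the sketch below computes three first-order descriptors (mean, variance, and histogram entropy) from the voxels inside a region of interest. It is only an illustration under assumptions: the tiny image, the mask, the `first_order_features` helper, and the choice of four histogram bins are hypothetical, and real Radiomics pipelines extract hundreds of shape, intensity, and texture features with dedicated software.

```python
import math
from collections import Counter


def first_order_features(image, mask, n_bins=4):
    """Compute simple intensity statistics over the voxels where mask is 1."""
    values = [
        image[r][c]
        for r in range(len(image))
        for c in range(len(image[0]))
        if mask[r][c]
    ]
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n

    # Discretise intensities into equal-width bins, then take Shannon entropy.
    lo, hi = min(values), max(values)
    width = ((hi - lo) / n_bins) or 1.0  # avoid zero width for flat regions
    counts = Counter(min(int((v - lo) / width), n_bins - 1) for v in values)
    entropy = -sum((k / n) * math.log2(k / n) for k in counts.values())

    return {"mean": mean, "variance": variance, "entropy": entropy}


# Hypothetical 3x3 image slice and a mask marking a four-voxel lesion.
image = [
    [5, 10, 20],
    [7, 30, 40],
    [6, 8, 9],
]
mask = [
    [0, 1, 1],
    [0, 1, 1],
    [0, 0, 0],
]

features = first_order_features(image, mask)
print(features)  # {'mean': 25.0, 'variance': 125.0, 'entropy': 2.0}
```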

The incorporation of machine learning algorithms further enhances the analytical capabilities of Radiomics, offering unprecedented insights into the pathophysiological characteristics of lesions that are otherwise challenging to discern through conventional visual inspection [13][14]. Several studies have demonstrated the utility of Radiomics-based machine learning in the differential diagnosis of various brain tumors, thus indicating its prospective application in clinical decision making [15].

In the feature selection domain, the Least Absolute Shrinkage and Selection Operator (LASSO) has been noted for its effectiveness in handling high-dimensional Radiomics data, particularly when sample sizes are relatively limited [16][17]. Its L1 penalty shrinks the coefficients of uninformative features to exactly zero, which reduces the risk of overfitting and makes it well suited to robust feature selection in Radiomics analyses.
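A minimal sketch of how LASSO performs feature selection is given below. It is not the pipeline used in the cited studies: the data are synthetic (60 random "features" for 40 "patients", of which only the first two influence the outcome), the penalty `alpha=0.5` is an arbitrary assumption, and the solver is a plain coordinate-descent implementation written for illustration rather than a production library.

```python
import random


def soft_threshold(value, threshold):
    """Shrink value toward zero; anything within the threshold becomes 0."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_coordinate_descent(X, y, alpha, n_sweeps=200):
    """Minimise (1/2n)*||y - Xw||^2 + alpha*||w||_1 by cyclic coordinate descent."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    residual = list(y)  # equals y - Xw while w is all zeros
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(n_sweeps):
        for j in range(p):
            if col_sq[j] == 0.0:
                continue
            # Correlation of feature j with the residual that ignores feature j.
            rho = sum(X[i][j] * (residual[i] + w[j] * X[i][j]) for i in range(n)) / n
            new_wj = soft_threshold(rho, alpha) / col_sq[j]
            delta = new_wj - w[j]
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= delta * X[i][j]
                w[j] = new_wj
    return w


# Synthetic data: more features than samples, only features 0 and 1 matter.
random.seed(0)
n_samples, n_features = 40, 60
X = [[random.gauss(0.0, 1.0) for _ in range(n_features)] for _ in range(n_samples)]
y = [3.0 * row[0] - 2.0 * row[1] + random.gauss(0.0, 0.1) for row in X]

weights = lasso_coordinate_descent(X, y, alpha=0.5)
selected = [j for j, wj in enumerate(weights) if wj != 0.0]
print("selected feature indices:", selected)
```

The features whose weights remain non-zero form the selected subset; raising `alpha` prunes more aggressively, and in practice its value is usually chosen by cross-validation.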

Additionally, Linear Discriminant Analysis (LDA) serves as another valuable machine learning classification algorithm for Radiomics applications. LDA projects the feature vectors of the distinct classes into a lower-dimensional space chosen to maximize class discriminatory power, that is, the separation between class means relative to the spread within each class. Notably, it has been reported to retain substantial class discrimination information while reducing dimensionality [18][19][20].
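The projection idea behind LDA can be sketched for the simplest case of two classes and two features, where Fisher's discriminant direction is the inverse within-class scatter matrix applied to the difference of the class means. The points below are made-up values standing in for two radiomic features per lesion, and the helper names are hypothetical; this is an illustrative sketch, not the implementation used in the cited work.

```python
def mean_vector(points):
    n = len(points)
    return [sum(p[0] for p in points) / n, sum(p[1] for p in points) / n]


def scatter_matrix(points, mean):
    """2x2 scatter (unnormalised covariance) of points around their mean."""
    sxx = sum((p[0] - mean[0]) ** 2 for p in points)
    sxy = sum((p[0] - mean[0]) * (p[1] - mean[1]) for p in points)
    syy = sum((p[1] - mean[1]) ** 2 for p in points)
    return [[sxx, sxy], [sxy, syy]]


def dot(u, v):
    return u[0] * v[0] + u[1] * v[1]


def fisher_lda(class0, class1):
    """Return the projection direction w and a midpoint decision threshold."""
    m0, m1 = mean_vector(class0), mean_vector(class1)
    s0, s1 = scatter_matrix(class0, m0), scatter_matrix(class1, m1)
    # Pooled within-class scatter matrix [[a, b], [b, d]].
    a = s0[0][0] + s1[0][0]
    b = s0[0][1] + s1[0][1]
    d = s0[1][1] + s1[1][1]
    det = a * d - b * b
    diff = [m1[0] - m0[0], m1[1] - m0[1]]
    # w = inverse(S_w) @ (m1 - m0), using the closed-form 2x2 inverse.
    w = [(d * diff[0] - b * diff[1]) / det, (-b * diff[0] + a * diff[1]) / det]
    threshold = 0.5 * (dot(w, m0) + dot(w, m1))
    return w, threshold


# Hypothetical two-feature measurements for two tumor classes.
class0 = [(1.0, 2.0), (2.0, 3.0), (3.0, 3.0), (2.0, 1.5)]
class1 = [(6.0, 5.0), (7.0, 8.0), (8.0, 6.5), (7.5, 7.0)]

w, threshold = fisher_lda(class0, class1)
scores0 = [dot(w, p) for p in class0]  # 1D projections of class 0
scores1 = [dot(w, p) for p in class1]  # 1D projections of class 1
print(all(s < threshold for s in scores0), all(s > threshold for s in scores1))
# True True
```

Each two-dimensional point is reduced to a single score, yet the two classes stay on opposite sides of the threshold, which is the sense in which the projection retains class-discriminating information.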

Radiomics has extended its utility beyond diagnostic applications to prognostic evaluations, as exemplified in its role in both the diagnosis and treatment control rate prediction for chordoma [21]. Chordoma, a disease notorious for its refractory nature necessitating multiple surgical interventions and radiotherapeutic treatments, poses unique challenges for sustained disease control. In this context, Radiomic models built on features describing both the morphological shape and the genomic heterogeneity of the tumor have demonstrated superior performance in predicting the effectiveness of radiotherapy for tumor control. Such predictive capabilities underscore the potential benefits of Radiomics in enabling more targeted, efficient treatment regimens for diseases such as chordoma, thereby potentially reducing the need for repetitive, invasive procedures.

In another application, Radiomics-based machine learning algorithms have been shown to assist significantly in the preoperative differential diagnosis between germinoma and craniopharyngioma [22]. These two types of primary intracranial tumors often present with overlapping clinical manifestations and radiological features, yet they require markedly different treatment modalities. In addressing this diagnostic conundrum, high-performance prediction models have been developed using sophisticated feature selection methodologies and classifiers. These models suggest that Radiomics can offer a non-invasive diagnostic strategy with substantial reliability.

Notably, the application of Radiomics and machine learning in these scenarios holds the promise of revolutionizing the approach to image-based diagnosis and personalized clinical decision making. By leveraging advanced computational techniques to analyze complex, high-dimensional radiographic data, Radiomics provides a more nuanced understanding of tumor characteristics and treatment responses. This computational approach thereby opens avenues for more accurate, timely, and individualized therapeutic strategies, significantly enhancing the quality of patient care in oncological settings.

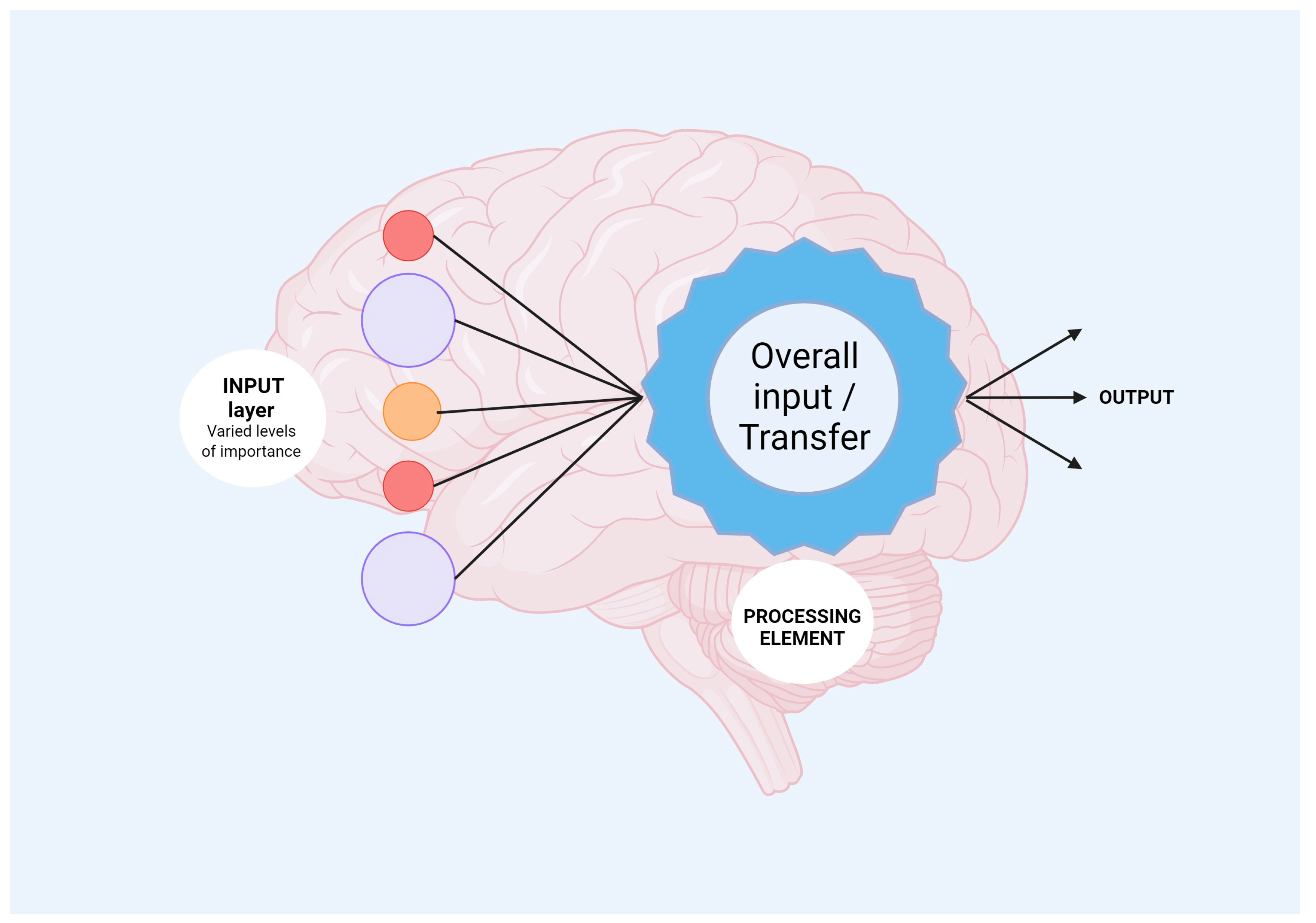

In the realm of skull base neurosurgery, machine learning (ML) methods, including neural network models (NNs) (Figure 1), have been applied to a multi-center, prospective database to predict the occurrence of cerebrospinal fluid rhinorrhoea (CSFR) following endonasal surgical procedures [23]. In that study, NNs outperformed traditional statistical models and other ML techniques in forecasting CSFR events. Notably, NNs also revealed relationships between specific risk factors and surgical repair techniques that influence CSFR, relationships that remained elusive when examined through conventional statistical approaches. As these predictive models continue to evolve through the integration of more extensive and granular datasets, refined NN architectures, and external validation processes, they hold the promise of significantly impacting future surgical decision making. Such next-generation models may provide invaluable support for more personalized patient counseling and tailored treatment plans.

Figure 1. Mechanisms of neural network processing. The input layer receives heterogeneous data, which are analyzed by the algorithms incorporated in the neural network. The resulting output information offers new avenues for biomedical fields.
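The input-to-output flow of a neural network can be sketched as a forward pass through one hidden layer. Everything in the example is an assumption made for illustration: the three input features, the weights, and the biases are invented numbers, not parameters from the cited CSFR study, and a real model would learn its weights from training data.

```python
import math


def relu(x):
    return max(0.0, x)


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(weights @ inputs + biases)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hypothetical, hand-picked parameters (a trained network would learn these).
HIDDEN_WEIGHTS = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]]
HIDDEN_BIASES = [0.0, 0.1]
OUTPUT_WEIGHTS = [1.0, -1.0]
OUTPUT_BIAS = 0.0


def predict_probability(features):
    """Input layer -> hidden layer (ReLU) -> output probability (sigmoid)."""
    hidden = dense(features, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return dense(hidden, [OUTPUT_WEIGHTS], [OUTPUT_BIAS], sigmoid)[0]


# Three hypothetical, already-normalised input features for one patient.
probability = predict_probability([1.0, 0.0, 1.0])
print(round(probability, 4))  # 0.6457
```

The non-linear hidden layer is what lets such a model represent interactions between inputs, for example between a risk factor and a repair technique, that a purely additive statistical model would not capture.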

Regarding automated image segmentation in surgical navigation applications, although there is a high correlation between the automated segmentation and the anatomical landmarks in question, the Dice Coefficient (DC)—a measure commonly used to assess the performance of the segmentation task—was not deemed to be particularly high [24]. Various factors contribute to this finding, including the complexity of anatomical pathways, the absence of clearly delineated contours in certain regions, and inherent variations arising from manual segmentation. These limitations cast doubt on the utility of the DC as a standalone metric for objectively evaluating the performance of this specific task. However, the low average Hausdorff Distance (HD) on the testing dataset better encapsulates the high accuracy of the automated segmentation, bolstering its credibility for applications such as surgical navigation.
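The difference between the two metrics can be seen in a small worked example. The sketch below uses a made-up case, a one-pixel-wide structure whose automated segmentation is offset by a single pixel from the manual one; the function names are hypothetical, and the "average" variant shown is one common definition among several.

```python
import math


def dice_coefficient(a, b):
    """Overlap between two pixel sets: 2|A ∩ B| / (|A| + |B|)."""
    if not a and not b:
        return 1.0
    return 2.0 * len(a & b) / (len(a) + len(b))


def nearest_distances(a, b):
    """For each pixel in a, the distance to its nearest pixel in b."""
    return [min(math.dist(p, q) for q in b) for p in a]


def hausdorff_distance(a, b):
    """Largest nearest-neighbour distance, taken in both directions."""
    return max(max(nearest_distances(a, b)), max(nearest_distances(b, a)))


def average_hausdorff_distance(a, b):
    """Mean nearest-neighbour distance, averaged over both directions."""
    d_ab, d_ba = nearest_distances(a, b), nearest_distances(b, a)
    return 0.5 * (sum(d_ab) / len(d_ab) + sum(d_ba) / len(d_ba))


# A thin vertical structure, segmented manually and (hypothetically)
# by an automated method that is off by one pixel column.
manual = {(row, 5) for row in range(10)}
automated = {(row, 6) for row in range(10)}

print(dice_coefficient(manual, automated))            # 0.0
print(hausdorff_distance(manual, automated))          # 1.0
print(average_hausdorff_distance(manual, automated))  # 1.0
```

For thin or small structures, a one-pixel offset removes all overlap and drives the Dice Coefficient to zero, while the distance-based metrics report that the two contours are only one pixel apart.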

In summary, the application of machine learning, and particularly neural networks, appears to be a game-changer in predicting complex clinical outcomes such as CSFR following skull base neurosurgery. Meanwhile, automated image segmentation remains a challenging task, warranting a more nuanced approach to performance assessment than merely relying on singular statistical measures such as the Dice Coefficient. These advancements signify not only the growing impact of computational methods in medicine but also the necessity for ongoing refinement and validation to ensure these techniques meet the highest standards of clinical efficacy and safety.

References

1. Alaraj, A.; Lemole, M.; Finkle, J.; Yudkowsky, R.; Wallace, A.; Luciano, C.; Banerjee, P.; Rizzi, S.; Charbel, F. Virtual reality training in neurosurgery: Review of current status and future applications. Surg. Neurol. Int. 2011, 2, 52.

2. Rosahl, S.; Gharabaghi, A.; Hubbe, U.; Shahidi, R.; Samii, M. Virtual Reality Augmentation in Skull Base Surgery. Skull Base 2006, 16, 59–66.

3. Liu, W.P.; Azizian, M.; Sorger, J.; Taylor, R.H.; Reilly, B.K.; Cleary, K.; Preciado, D. Cadaveric Feasibility Study of da Vinci Si–Assisted Cochlear Implant With Augmented Visual Navigation for Otologic Surgery. JAMA Otolaryngol. Neck Surg. 2014, 140, 208.

4. Citardi, M.J.; Agbetoba, A.; Bigcas, J.-L.; Luong, A. Augmented reality for endoscopic sinus surgery with surgical navigation: A cadaver study. Int. Forum Allergy Rhinol. 2016, 6, 523–528.

5. Marmulla, R.; Hoppe, H.; Mühling, J.; Hassfeld, S. New Augmented Reality Concepts for Craniofacial Surgical Procedures. Plast. Reconstr. Surg. 2005, 115, 1124–1128.

6. Kawamata, T.; Iseki, H.; Shibasaki, T.; Hori, T. Endoscopic Augmented Reality Navigation System for Endonasal Transsphenoidal Surgery to Treat Pituitary Tumors: Technical Note. Neurosurgery 2002, 50, 1393–1397.

7. Caversaccio, M.; Langlotz, F.; Nolte, L.-P.; Häusler, R. Impact of a self-developed planning and self-constructed navigation system on skull base surgery: 10 years experience. Acta Otolaryngol. 2007, 127, 403–407.

8. Bong, J.H.; Song, H.; Oh, Y.; Park, N.; Kim, H.; Park, S. Endoscopic navigation system with extended field of view using augmented reality technology. Int. J. Med. Robot. 2018, 14, e1886.

9. Kalaiarasan, K.; Prathap, L.; Ayyadurai, M.; Subhashini, P.; Tamilselvi, T.; Avudaiappan, T.; Infant Raj, I.; Alemayehu Mamo, S.; Mezni, A. Clinical Application of Augmented Reality in Computerized Skull Base Surgery. Evid. Based Complement. Alternat. Med. 2022, 2022, 1335820.

10. Grøvik, E.; Yi, D.; Iv, M.; Tong, E.; Rubin, D.; Zaharchuk, G. Deep learning enables automatic detection and segmentation of brain metastases on multisequence MRI. J. Magn. Reson. Imaging 2020, 51, 175–182.

11. Laukamp, K.R.; Thiele, F.; Shakirin, G.; Zopfs, D.; Faymonville, A.; Timmer, M.; Maintz, D.; Perkuhn, M.; Borggrefe, J. Fully automated detection and segmentation of meningiomas using deep learning on routine multiparametric MRI. Eur. Radiol. 2019, 29, 124–132.

12. Lu, C.-F.; Hsu, F.-T.; Hsieh, K.L.-C.; Kao, Y.-C.J.; Cheng, S.-J.; Hsu, J.B.-K.; Tsai, P.-H.; Chen, R.-J.; Huang, C.-C.; Yen, Y.; et al. Machine Learning–Based Radiomics for Molecular Subtyping of Gliomas. Clin. Cancer Res. 2018, 24, 4429–4436.

13. Varghese, B.A.; Cen, S.Y.; Hwang, D.H.; Duddalwar, V.A. Texture Analysis of Imaging: What Radiologists Need to Know. Am. J. Roentgenol. 2019, 212, 520–528.

14. Gillies, R.J.; Kinahan, P.E.; Hricak, H. Radiomics: Images Are More than Pictures, They Are Data. Radiology 2016, 278, 563–577.

15. Bi, W.L.; Hosny, A.; Schabath, M.B.; Giger, M.L.; Birkbak, N.J.; Mehrtash, A.; Allison, T.; Arnaout, O.; Abbosh, C.; Dunn, I.F.; et al. Artificial intelligence in cancer imaging: Clinical challenges and applications. CA Cancer J. Clin. 2019, 69, 127–157.

16. Kickingereder, P.; Burth, S.; Wick, A.; Götz, M.; Eidel, O.; Schlemmer, H.-P.; Maier-Hein, K.H.; Wick, W.; Bendszus, M.; Radbruch, A.; et al. Radiomic Profiling of Glioblastoma: Identifying an Imaging Predictor of Patient Survival with Improved Performance over Established Clinical and Radiologic Risk Models. Radiology 2016, 280, 880–889.

17. Kniep, H.C.; Madesta, F.; Schneider, T.; Hanning, U.; Schönfeld, M.H.; Schön, G.; Fiehler, J.; Gauer, T.; Werner, R.; Gellissen, S. Radiomics of Brain MRI: Utility in Prediction of Metastatic Tumor Type. Radiology 2019, 290, 479–487.

18. Zhang, B.; Tian, J.; Dong, D.; Gu, D.; Dong, Y.; Zhang, L.; Lian, Z.; Liu, J.; Luo, X.; Pei, S.; et al. Radiomics Features of Multiparametric MRI as Novel Prognostic Factors in Advanced Nasopharyngeal Carcinoma. Clin. Cancer Res. 2017, 23, 4259–4269.

19. Wu, S.; Zheng, J.; Li, Y.; Yu, H.; Shi, S.; Xie, W.; Liu, H.; Su, Y.; Huang, J.; Lin, T. A Radiomics Nomogram for the Preoperative Prediction of Lymph Node Metastasis in Bladder Cancer. Clin. Cancer Res. 2017, 23, 6904–6911.

20. Ortega-Martorell, S.; Olier, I.; Julià-Sapé, M.; Arús, C. SpectraClassifier 1.0: A user friendly, automated MRS-based classifier-development system. BMC Bioinform. 2010, 11, 106.

21. Buizza, G.; Paganelli, C.; D’Ippolito, E.; Fontana, G.; Molinelli, S.; Preda, L.; Riva, G.; Iannalfi, A.; Valvo, F.; Orlandi, E.; et al. Radiomics and Dosiomics for Predicting Local Control after Carbon-Ion Radiotherapy in Skull-Base Chordoma. Cancers 2021, 13, 339.

22. Chen, B.; Chen, C.; Zhang, Y.; Huang, Z.; Wang, H.; Li, R.; Xu, J. Differentiation between Germinoma and Craniopharyngioma Using Radiomics-Based Machine Learning. J. Pers. Med. 2022, 12, 45.

23. CRANIAL Consortium. Machine learning driven prediction of cerebrospinal fluid rhinorrhoea following endonasal skull base surgery: A multicentre prospective observational study. Front. Oncol. 2023, 13, 1046519.

24. Neves, C.A.; Tran, E.D.; Blevins, N.H.; Hwang, P.H. Deep learning automated segmentation of middle skull-base structures for enhanced navigation. Int. Forum Allergy Rhinol. 2021, 11, 1694–1697.

Subjects: Surgery

Update Date: 01 Nov 2023
