| Version | Created by | Modification | Content Size | Created at |
|---|---|---|---|---|
| 1 | Luca Pasquini | -- | 3106 | 2022-11-09 01:37:33 |
| 2 | Vivi Li | -13 word(s) | 3093 | 2022-11-09 04:07:13 |


Contrast media are widely used in biomedical imaging because of their relevance in the diagnosis of numerous disorders. However, the risk of adverse reactions, concern about potential damage to sensitive organs, and the recently described brain deposition of gadolinium salts limit their use in clinical practice. The application of artificial intelligence (AI) techniques to biomedical imaging has led to the development of ‘virtual’ and ‘augmented’ contrasts. The idea behind these applications is to generate synthetic post-contrast images through AI computational modeling, starting from the information available in other images acquired during the same scan. In these AI models, non-contrast images (virtual contrast) or low-dose post-contrast images (augmented contrast) are used as input data to generate synthetic post-contrast images, which are often indistinguishable from the native ones.
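
The difference between the two input configurations can be illustrated with a minimal NumPy sketch. Everything in it is an assumption made for illustration: the function names `build_input` and `naive_augmented_contrast`, the 10% dose fraction, and the toy volumes are hypothetical, and the linear rescaling is only a naive baseline standing in for the learned model, not a method taken from the studies discussed here.

```python
import numpy as np

def build_input(pre_contrast, low_dose=None):
    """Stack the available volumes along a leading channel axis.

    Virtual contrast:   only the non-contrast volume is supplied.
    Augmented contrast: a low-dose post-contrast volume is added.
    """
    channels = [pre_contrast] if low_dose is None else [pre_contrast, low_dose]
    return np.stack(channels, axis=0)

def naive_augmented_contrast(pre_contrast, low_dose, dose_fraction=0.10):
    """Hypothetical linear baseline, NOT a learned model.

    It assumes enhancement scales linearly with dose, so the low-dose
    enhancement is divided by the dose fraction. On real data this would
    also amplify noise, which is one reason a trained network is used.
    """
    enhancement = low_dose - pre_contrast
    return pre_contrast + enhancement / dose_fraction

# Toy, noise-free volumes (depth, height, width), purely illustrative.
rng = np.random.default_rng(0)
pre = rng.random((4, 8, 8))
uptake = np.zeros_like(pre)
uptake[1:3, 2:5, 2:5] = 0.5          # simulated enhancing region
full_dose = pre + uptake             # reference post-contrast volume
low_dose = pre + 0.10 * uptake       # simulated 10% dose acquisition

x_virtual = build_input(pre)                 # shape (1, 4, 8, 8)
x_augmented = build_input(pre, low_dose)     # shape (2, 4, 8, 8)
synthetic = naive_augmented_contrast(pre, low_dose)
```

In this idealized setting the baseline recovers the full-dose volume exactly. A CNN or GAN would take `x_virtual` or `x_augmented` as input and learn the mapping from paired examples instead.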

1. Introduction

2. AI Architectures in Synthetic Reconstruction

2.1. Convolutional Neural Networks in Synthetic Reconstruction

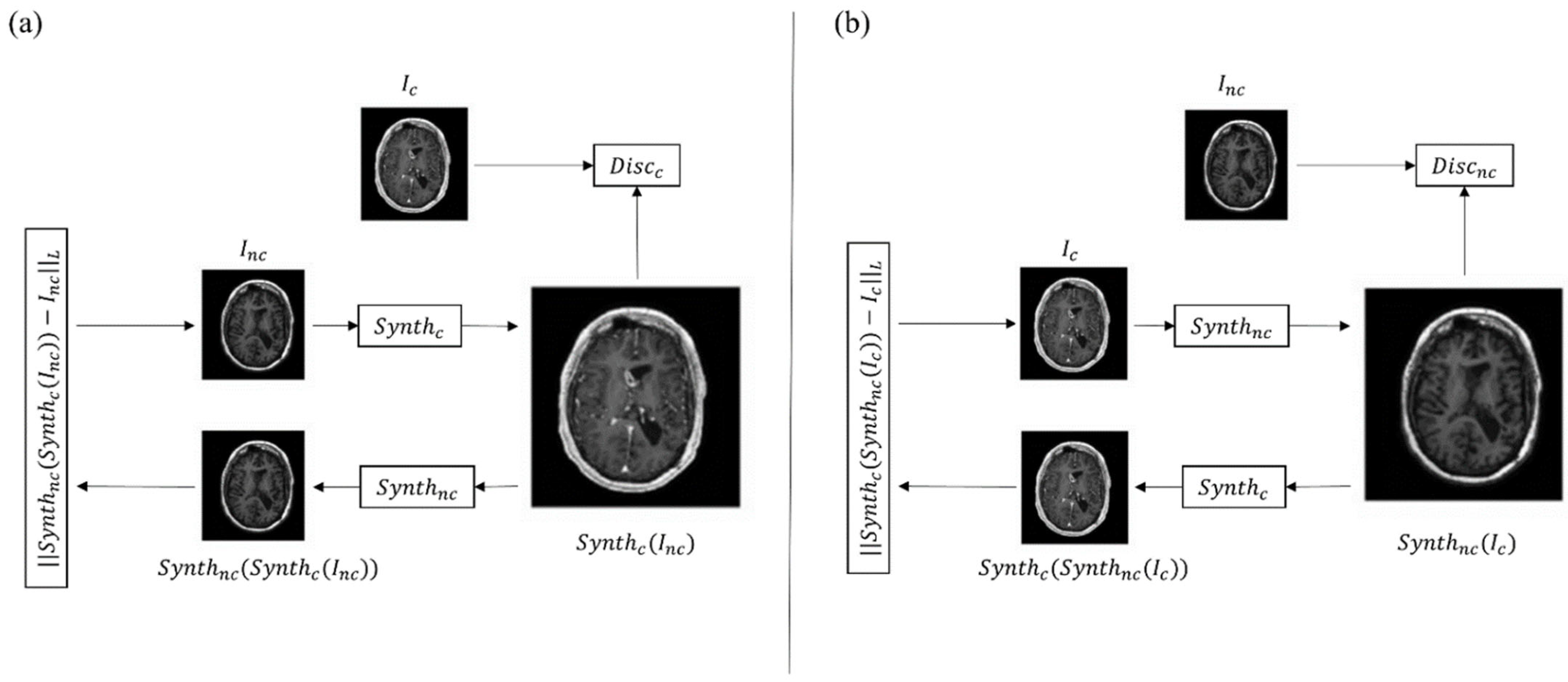

2.2. Generative Adversarial Networks in Synthetic Reconstruction

3. Clinical Applications in Neuroradiology

3.1. AI in Neuro-Oncology Imaging

3.2. AI in Multiple Sclerosis Imaging

| Field of Application | Reference | Database (N) | Task | Results |
|---|---|---|---|---|
| Neuro-oncology | [30] | Private (60) | To obtain 100% full-dose 3D T1-weighted images from 10% low-dose 3D T1-weighted images | SSIM and PSNR increase by 11% and 5 dB |
| | [31] | Private (640) | To obtain 100% full-dose 3D T1-weighted images from 10% low-dose 3D T1-weighted images and pre-contrast 3D T1-weighted images | SSIM 0.92 ± 0.02; PSNR 35.07 ± 3.84 dB |
| | [32] | BraTS2020 (369) | To obtain contrast-enhanced T1-weighted images from non-contrast-enhanced MR images | SSIM and PCC 0.991 ± 0.007 and 0.995 ± 0.006 (whole brain); 0.993 ± 0.008 and 0.999 ± 0.003 |
| | [47] | Private 1 (185); Private 2 (36); BraTS2018 (73 for SR) | To obtain 3D isotropic contrast-enhanced T2 FLAIR from non-contrast-enhanced 2D FLAIR and image super-resolution | SSIM 0.932 (whole brain), 0.851 (tumor region); PSNR 31.25 dB (whole brain), 24.93 dB (tumor region) |
| | [58] | Private (116) | To obtain contrast-enhanced T1-weighted images from non-contrast-enhanced MR images | Sensitivity 91.8%, specificity 91.2% |
| | [59] | Private (400); BraTS2020 (286, external validation) | To obtain contrast-enhanced T1-weighted images from non-contrast-enhanced MR images | SSIM 0.84 ± 0.05 |
| | [63] | Private (83) | To obtain 100% full-dose 3D T1-weighted images from 10% low-dose 3D T1-weighted images | Image quality 0.73; image SNR 0.63; lesion conspicuity 0.89; lesion enhancement 0.87 |
| | [64] | Private (145) | To obtain 100% full-dose 3D T1-weighted images from 25% low-dose 3D T1-weighted images | SSIM 0.871 ± 0.04; PSNR 31.6 ± 2 dB |
| | [68] | Private (250) | To maximize contrast in full-dose 3D T1-weighted images | Sensitivity in lesion detection increased by 16% |
| Multiple Sclerosis | [72] | Private (1970) | To identify MS enhancing lesions from non-enhanced MR images | Sensitivity and specificity 78% ± 4.3 and 73% ± 2.7 (slice-wise); 72% ± 9 and 70% ± 6.3 |
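
The metrics reported in the table can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the evaluation code of any cited study: the function names are hypothetical, intensities are assumed to be scaled to a known `data_range`, and `global_ssim` evaluates the SSIM formula once over the whole image rather than averaging it over local sliding windows as in the original definition, so its values will generally differ from published ones.

```python
import numpy as np

def psnr(reference, synthetic, data_range=1.0):
    """Peak signal-to-noise ratio in dB between two images."""
    mse = np.mean((reference - synthetic) ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(data_range ** 2 / mse)

def global_ssim(reference, synthetic, data_range=1.0):
    """Single-window SSIM over the whole image (simplified variant)."""
    c1 = (0.01 * data_range) ** 2   # stabilizing constants with the
    c2 = (0.03 * data_range) ** 2   # commonly used K1=0.01, K2=0.03
    mu_x, mu_y = reference.mean(), synthetic.mean()
    var_x, var_y = reference.var(), synthetic.var()
    cov_xy = np.mean((reference - mu_x) * (synthetic - mu_y))
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator

def sensitivity_specificity(truth, predicted):
    """Sensitivity and specificity from binary labels (1 = enhancing)."""
    truth = np.asarray(truth, dtype=bool)
    predicted = np.asarray(predicted, dtype=bool)
    tp = np.sum(truth & predicted)
    tn = np.sum(~truth & ~predicted)
    fn = np.sum(truth & ~predicted)
    fp = np.sum(~truth & predicted)
    return tp / (tp + fn), tn / (tn + fp)

# Toy example: a synthetic image offset from the reference by 0.1.
ref = np.linspace(0.0, 0.9, 64).reshape(8, 8)
syn = ref + 0.1
psnr_db = psnr(ref, syn)                  # about 20 dB (MSE = 0.01)
ssim_same = global_ssim(ref, ref)         # identical images give 1.0
sens, spec = sensitivity_specificity([1, 1, 0, 0], [1, 0, 0, 0])
```

PSNR and SSIM compare a synthetic image against the acquired post-contrast reference, whereas sensitivity and specificity summarize a downstream reading task such as lesion detection. This is why the rows of the table are not directly comparable with one another.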

References

- Faucon, A.L.; Bobrie, G.; Clément, O. Nephrotoxicity of Iodinated Contrast Media: From Pathophysiology to Prevention Strategies. Eur. J. Radiol. 2019, 116, 231–241.

- Cowper, S.E.; Robin, H.S.; Steinberg, S.M. Scleromyxoedema-like cutaneous diseases in renal-dialysis patients. Lancet 2000, 356, 1000–1001.

- Pasquini, L.; Napolitano, A.; Visconti, E.; Longo, D.; Romano, A.; Tomà, P.; Espagnet, M.C.R. Gadolinium-Based Contrast Agent-Related Toxicities. CNS Drugs 2018, 32, 229–240.

- Rossi Espagnet, M.C.; Bernardi, B.; Pasquini, L.; Figà-Talamanca, L.; Tomà, P.; Napolitano, A. Signal Intensity at Unenhanced T1-Weighted Magnetic Resonance in the Globus Pallidus and Dentate Nucleus after Serial Administrations of a Macrocyclic Gadolinium-Based Contrast Agent in Children. Pediatr. Radiol. 2017, 47, 1345–1352.

- Pasquini, L.; Rossi Espagnet, M.C.; Napolitano, A.; Longo, D.; Bertaina, A.; Visconti, E.; Tomà, P. Dentate Nucleus T1 Hyperintensity: Is It Always Gadolinium All That Glitters? Radiol. Med. 2018, 123, 469–473.

- Lohrke, J.; Frisk, A.L.; Frenzel, T.; Schöckel, L.; Rosenbruch, M.; Jost, G.; Lenhard, D.C.; Sieber, M.A.; Nischwitz, V.; Küppers, A.; et al. Histology and Gadolinium Distribution in the Rodent Brain after the Administration of Cumulative High Doses of Linear and Macrocyclic Gadolinium-Based Contrast Agents. Invest. Radiol. 2017, 52, 324–333.

- Kanda, T.; Fukusato, T.; Matsuda, M.; Toyoda, K.; Oba, H.; Kotoku, J.; Haruyama, T.; Kitajima, K.; Furui, S. Gadolinium-Based Contrast Agent Accumulates in the Brain Even in Subjects without Severe Renal Dysfunction: Evaluation of Autopsy Brain Specimens with Inductively Coupled Plasma Mass Spectroscopy. Radiology 2015, 276, 228–232.

- McDonald, R.J.; McDonald, J.S.; Kallmes, D.F.; Jentoft, M.E.; Murray, D.L.; Thielen, K.R.; Williamson, E.E.; Eckel, L.J. Intracranial Gadolinium Deposition after Contrast-Enhanced MR Imaging. Radiology 2015, 275, 772–782.

- European Medicines Agency. EMA’s Final Opinion Confirms Restrictions on Use of Linear Gadolinium Agents in Body Scans. Available online: http://www.ema.europa.eu/docs/en_GB/document_library/Referrals_document/gadolinium_contrast_agents_31/European_Commission_final_decision/WC500240575.pdf (accessed on 1 June 2022).

- ESUR Guidelines on Contrast Agents v.10.0. Available online: https://www.esur.org/esur-guidelines-on-contrast-agents/ (accessed on 1 June 2022).

- Bottino, F.; Lucignani, M.; Napolitano, A.; Dellepiane, F.; Visconti, E.; Espagnet, M.C.R.; Pasquini, L. In Vivo Brain GSH: MRS Methods and Clinical Applications. Antioxidants 2021, 10, 1407.

- Akhtar, M.J.; Ahamed, M.; Alhadlaq, H.; Alrokayan, S. Toxicity Mechanism of Gadolinium Oxide Nanoparticles and Gadolinium Ions in Human Breast Cancer Cells. Curr. Drug Metab. 2019, 20, 907–917.

- Romano, A.; Rossi-Espagnet, M.C.; Pasquini, L.; Di Napoli, A.; Dellepiane, F.; Butera, G.; Moltoni, G.; Gagliardo, O.; Bozzao, A. Cerebral Venous Thrombosis: A Challenging Diagnosis; A New Nonenhanced Computed Tomography Standardized Semi-Quantitative Method. Tomography 2022, 8, 1–9.

- Gillies, R.J.; Kinahan, P.E.; Hricak, H. Radiomics: Images Are More than Pictures, They Are Data. Radiology 2016, 278, 563–577.

- Pasquini, L.; Napolitano, A.; Tagliente, E.; Dellepiane, F.; Lucignani, M.; Vidiri, A.; Ranazzi, G.; Stoppacciaro, A.; Moltoni, G.; Nicolai, M.; et al. Deep Learning Can Differentiate IDH-Mutant from IDH-Wild Type GBM. J. Pers. Med. 2021, 11, 290.

- Pasquini, L.; Napolitano, A.; Lucignani, M.; Tagliente, E.; Dellepiane, F.; Rossi-Espagnet, M.C.; Ritrovato, M.; Vidiri, A.; Villani, V.; Ranazzi, G.; et al. AI and High-Grade Glioma for Diagnosis and Outcome Prediction: Do All Machine Learning Models Perform Equally Well? Front. Oncol. 2021, 11, 601425.

- Bottino, F.; Tagliente, E.; Pasquini, L.; Di Napoli, A.; Lucignani, M.; Talamanca, L.F.; Napolitano, A. COVID Mortality Prediction with Machine Learning Methods: A Systematic Review and Critical Appraisal. J. Pers. Med. 2021, 11, 893.

- Verma, V.; Simone, C.B.; Krishnan, S.; Lin, S.H.; Yang, J.; Hahn, S.M. The Rise of Radiomics and Implications for Oncologic Management. J. Natl. Cancer Inst. 2017, 109, 2016–2018.

- Larue, R.T.H.M.; Defraene, G.; de Ruysscher, D.; Lambin, P.; van Elmpt, W. Quantitative Radiomics Studies for Tissue Characterization: A Review of Technology and Methodological Procedures. Br. J. Radiol. 2017, 90, 20160665.

- McCarthy, J.; Minsky, M.L.; Rochester, N.; Shannon, C.E. A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence, August 31, 1955. AI Mag. 2006, 27, 12.

- Hamet, P.; Tremblay, J. Artificial Intelligence in Medicine. Metabolism 2017, 69, S36–S40.

- Yang, R.; Yu, Y. Artificial Convolutional Neural Network in Object Detection and Semantic Segmentation for Medical Imaging Analysis. Front. Oncol. 2021, 11, 638182.

- Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative Adversarial Nets. Adv. Neural Inf. Process. Syst. 2014, 3, 2672–2680.

- Lundervold, A.S.; Lundervold, A. An Overview of Deep Learning in Medical Imaging Focusing on MRI. Z. Med. Phys. 2019, 29, 102–127.

- Akay, A.; Hess, H. Deep Learning: Current and Emerging Applications in Medicine and Technology. IEEE J. Biomed. Health Inform. 2019, 23, 906–920.

- Fukushima, K.; Miyake, S. Neocognitron: A Self-Organizing Neural Network Model for a Mechanism of Visual Pattern Recognition. In Competition and Cooperation in Neural Nets; Lecture Notes in Biomathematics; Springer: Berlin/Heidelberg, Germany, 1982.

- Kumar, A.; Kim, J.; Lyndon, D.; Fulham, M.; Feng, D. An Ensemble of Fine-Tuned Convolutional Neural Networks for Medical Image Classification. IEEE J. Biomed. Health Inform. 2017, 21, 31–40.

- Cai, L.; Gao, J.; Zhao, D. A Review of the Application of Deep Learning in Medical Image Classification and Segmentation. Ann. Transl. Med. 2020, 8, 713.

- Yadav, S.S.; Jadhav, S.M. Deep Convolutional Neural Network Based Medical Image Classification for Disease Diagnosis. J. Big Data 2019, 6, 113.

- Gong, E.; Pauly, J.M.; Wintermark, M.; Zaharchuk, G. Deep Learning Enables Reduced Gadolinium Dose for Contrast-Enhanced Brain MRI. J. Magn. Reson. Imaging 2018, 48, 330–340.

- Pasumarthi, S.; Tamir, J.I.; Christensen, S.; Zaharchuk, G.; Zhang, T.; Gong, E. A Generic Deep Learning Model for Reduced Gadolinium Dose in Contrast-Enhanced Brain MRI. Magn. Reson. Med. 2021, 86, 1687–1700.

- Xie, H.; Lei, Y.; Wang, T.; Roper, J.; Axente, M.; Bradley, J.D.; Liu, T.; Yang, X. Magnetic Resonance Imaging Contrast Enhancement Synthesis Using Cascade Networks with Local Supervision. Med. Phys. 2022, 49, 3278–3287.

- Lee, D.; Yoo, J.; Ye, J. Deep Artifact Learning for Compressed Sensing and Parallel MRI. arXiv Prepr. 2017, arXiv:1703.01120.

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Cham, Switzerland, 2015; pp. 234–241.

- Gong, E.; Pauly, J.; Zaharchuk, G. Improving the PI1CS Reconstruction for Highly Undersampled Multi-Contrast MRI Using Local Deep Network. In Proceedings of the 25th Scientific Meeting ISMRM, Honolulu, HI, USA, 22–27 April 2017; p. 5663.

- Ran, M.; Hu, J.; Chen, Y.; Chen, H.; Sun, H.; Zhou, J.; Zhang, Y. Denoising of 3D Magnetic Resonance Images Using a Residual Encoder–Decoder Wasserstein Generative Adversarial Network. Med. Image Anal. 2019, 55, 165–180.

- Bustamante, M.; Viola, F.; Carlhäll, C.J.; Ebbers, T. Using Deep Learning to Emulate the Use of an External Contrast Agent in Cardiovascular 4D Flow MRI. J. Magn. Reson. Imaging 2021, 54, 777–786.

- Zhang, H.; Li, H.; Dillman, J.R.; Parikh, N.A.; He, L. Multi-Contrast MRI Image Synthesis Using Switchable Cycle-Consistent Generative Adversarial Networks. Diagnostics 2022, 12, 816.

- Zhao, K.; Zhou, L.; Gao, S.; Wang, X.; Wang, Y.; Zhao, X.; Wang, H.; Liu, K.; Zhu, Y.; Ye, H. Study of Low-Dose PET Image Recovery Using Supervised Learning with CycleGAN. PLoS ONE 2020, 15, e0238455.

- Lyu, Q.; You, C.; Shan, H.; Zhang, Y.; Wang, G. Super-Resolution MRI and CT through GAN-CIRCLE. In Developments in X-ray Tomography XII; SPIE: Bellingham, WA, USA, 2019; Volume 11113, pp. 202–208.

- Gregory, S.; Cheng, H.; Newman, S.; Gan, Y. HydraNet: A Multi-Branch Convolutional Neural Network Architecture for MRI Denoising. In Medical Imaging 2021: Image Processing; SPIE: Bellingham, WA, USA, 2021; Volume 11596, pp. 881–889.

- Hiasa, Y.; Otake, Y.; Takao, M.; Matsuoka, T.; Takashima, K.; Carass, A.; Prince, J.L.; Sugano, N.; Sato, Y. Cross-Modality Image Synthesis from Unpaired Data Using CycleGAN. In International Workshop on Simulation and Synthesis in Medical Imaging; Springer: Cham, Switzerland, 2018; Volume 11037, pp. 31–41.

- Chandrashekar, A.; Shivakumar, N.; Lapolla, P.; Handa, A.; Grau, V.; Lee, R. A Deep Learning Approach to Generate Contrast-Enhanced Computerised Tomography Angiograms without the Use of Intravenous Contrast Agents. Eur. Heart J. 2020, 41, ehaa946.0156.

- Sandhiya, B.; Priyatharshini, R.; Ramya, B.; Monish, S.; Sai Raja, G.R. Reconstruction, Identification and Classification of Brain Tumor Using GAN and Faster Regional-CNN. In Proceedings of the 2021 3rd International Conference on Signal Processing and Communication (ICPSC), Coimbatore, India, 13–14 May 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 238–242.

- Yu, B.; Zhou, L.; Wang, L.; Fripp, J.; Bourgeat, P. 3D CGAN Based Cross-Modality MR Image Synthesis for Brain Tumor Segmentation. In Proceedings of the 2018 IEEE 15th International Symposium on Biomedical Imaging (ISBI 2018), Washington, DC, USA, 4–7 April 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 626–630.

- Mori, M.; Fujioka, T.; Katsuta, L.; Kikuchi, Y.; Oda, G.; Nakagawa, T.; Kitazume, Y.; Kubota, K.; Tateishi, U. Feasibility of New Fat Suppression for Breast MRI Using Pix2pix. Jpn. J. Radiol. 2020, 38, 1075–1081.

- Wang, T.; Lei, Y.; Curran, W.J.; Liu, T.; Yang, X. Contrast-Enhanced MRI Synthesis from Non-Contrast MRI Using Attention CycleGAN. In Medical Imaging 2021: Biomedical Applications in Molecular, Structural, and Functional Imaging; SPIE: Bellingham, WA, USA, 2021; Volume 11600, pp. 388–393.

- Zhou, L.; Schaefferkoetter, J.D.; Tham, I.W.K.; Huang, G.; Yan, J. Supervised Learning with CycleGAN for Low-Dose FDG PET Image Denoising. Med. Image Anal. 2020, 65, 101770.

- Jiao, J.; Namburete, A.I.L.; Papageorghiou, A.T.; Noble, J.A. Self-Supervised Ultrasound to MRI Fetal Brain Image Synthesis. IEEE Trans. Med. Imaging 2020, 39, 4413–4424.

- Zahra, M.A.; Hollingsworth, K.G.; Sala, E.; Lomas, D.J.; Tan, L.T. Dynamic Contrast-Enhanced MRI as a Predictor of Tumour Response to Radiotherapy. Lancet Oncol. 2007, 8, 63–74.

- Kappos, L.; Moeri, D.; Radue, E.W.; Schoetzau, A.; Schweikert, K.; Barkhof, F.; Miller, D.; Guttmann, C.R.G.; Weiner, H.L.; Gasperini, C.; et al. Predictive Value of Gadolinium-Enhanced Magnetic Resonance Imaging for Relapse Rate and Changes in Disability or Impairment in Multiple Sclerosis: A Meta-Analysis. Gadolinium MRI Meta-Analysis Group. Lancet 1999, 353, 964–969.

- Miller, D.H.; Barkhof, F.; Nauta, J.J.P. Gadolinium Enhancement Increases the Sensitivity of MRI in Detecting Disease Activity in Multiple Sclerosis. Brain 1993, 116 Pt 5, 1077–1094.

- Di Napoli, A.; Cristofaro, M.; Romano, A.; Pianura, E.; Papale, G.; di Stefano, F.; Ronconi, E.; Petrone, A.; Rossi Espagnet, M.C.; Schininà, V.; et al. Central Nervous System Involvement in Tuberculosis: An MRI Study Considering Differences between Patients with and without Human Immunodeficiency Virus 1 Infection. J. Neuroradiol. 2019, 47, 334–338.

- Di Napoli, A.; Spina, P.; Cianfoni, A.; Mazzucchelli, L.; Pravatà, E. Magnetic Resonance Imaging of Pilocytic Astrocytomas in Adults with Histopathologic Correlation: A Report of Six Consecutive Cases. J. Integr. Neurosci. 2021, 20, 1039–1046.

- Mattay, R.R.; Davtyan, K.; Rudie, J.D.; Mattay, G.S.; Jacobs, D.A.; Schindler, M.; Loevner, L.A.; Schnall, M.D.; Bilello, M.; Mamourian, A.C.; et al. Economic Impact of Selective Use of Contrast for Routine Follow-up MRI of Patients with Multiple Sclerosis. J. Neuroimaging 2022, 32, 656–666.

- Molinaro, A.M.; Hervey-Jumper, S.; Morshed, R.A.; Young, J.; Han, S.J.; Chunduru, P.; Zhang, Y.; Phillips, J.J.; Shai, A.; Lafontaine, M.; et al. Association of Maximal Extent of Resection of Contrast-Enhanced and Non-Contrast-Enhanced Tumor with Survival Within Molecular Subgroups of Patients with Newly Diagnosed Glioblastoma. JAMA Oncol. 2020, 6, 495–503.

- Pasquini, L.; Di Napoli, A.; Napolitano, A.; Lucignani, M.; Dellepiane, F.; Vidiri, A.; Villani, V.; Romano, A.; Bozzao, A. Glioblastoma Radiomics to Predict Survival: Diffusion Characteristics of Surrounding Nonenhancing Tissue to Select Patients for Extensive Resection. J. Neuroimaging 2021, 31, 1192–1200.

- Kleesiek, J.; Morshuis, J.N.; Isensee, F.; Deike-Hofmann, K.; Paech, D.; Kickingereder, P.; Köthe, U.; Rother, C.; Forsting, M.; Wick, W.; et al. Can Virtual Contrast Enhancement in Brain MRI Replace Gadolinium?: A Feasibility Study. Invest. Radiol. 2019, 54, 653–660.

- Calabrese, E.; Rudie, J.D.; Rauschecker, A.M.; Villanueva-Meyer, J.E.; Cha, S. Feasibility of Simulated Postcontrast MRI of Glioblastomas and Lower-Grade Gliomas by Using Three-Dimensional Fully Convolutional Neural Networks. Radiol. Artif. Intell. 2021, 3, e200276.

- Wang, Y.; Wu, W.; Yang, Y.; Hu, H.; Yu, S.; Dong, X.; Chen, F.; Liu, Q. Deep Learning-Based 3D MRI Contrast-Enhanced Synthesis from a 2D Noncontrast T2Flair Sequence. Med. Phys. 2022, 49, 4478–4493.

- Romano, A.; Moltoni, G.; Guarnera, A.; Pasquini, L.; Di Napoli, A.; Napolitano, A.; Espagnet, M.C.R.; Bozzao, A. Single Brain Metastasis versus Glioblastoma Multiforme: A VOI-Based Multiparametric Analysis for Differential Diagnosis. Radiol. Med. 2022, 127, 490–497.

- Romano, A.; Pasquini, L.; Di Napoli, A.; Tavanti, F.; Boellis, A.; Rossi Espagnet, M.C.; Minniti, G.; Bozzao, A. Prediction of Survival in Patients Affected by Glioblastoma: Histogram Analysis of Perfusion MRI. J. Neurooncol. 2018, 139, 455–460.

- Luo, H.; Zhang, T.; Gong, N.-J.; Tamir, J.; Pasumarthi Venkata, S.; Xu, C.; Duan, Y.; Zhou, T.; Zhou, F.; Zaharchuk, G.; et al. Deep Learning-Based Methods May Minimize GBCA Dosage in Brain MRI. Eur. Radiol. 2021, 31, 6419–6428.

- Ammari, S.; Bône, A.; Balleyguier, C.; Moulton, E.; Chouzenoux, É.; Volk, A.; Menu, Y.; Bidault, F.; Nicolas, F.; Robert, P.; et al. Can Deep Learning Replace Gadolinium in Neuro-Oncology? Invest. Radiol. 2022, 57, 99–107.

- Kersch, C.N.; Ambady, P.; Hamilton, B.E.; Barajas, R.F. MRI and PET of Brain Tumor Neuroinflammation in the Era of Immunotherapy, From the AJR Special Series on Inflammation. AJR Am. J. Roentgenol. 2022, 218, 582–596.

- Kaufmann, T.J.; Smits, M.; Boxerman, J.; Huang, R.; Barboriak, D.P.; Weller, M.; Chung, C.; Tsien, C.; Brown, P.D.; Shankar, L.; et al. Consensus Recommendations for a Standardized Brain Tumor Imaging Protocol for Clinical Trials in Brain Metastases. Neuro. Oncol. 2020, 22, 757–772.

- Ye, Y.; Lyu, J.; Hu, Y.; Zhang, Z.; Xu, J.; Zhang, W.; Yuan, J.; Zhou, C.; Fan, W.; Zhang, X. Augmented T1-Weighted Steady State Magnetic Resonance Imaging. NMR Biomed. 2022, 35, e4729.

- Bône, A.; Ammari, S.; Menu, Y.; Balleyguier, C.; Moulton, E.; Chouzenoux, É.; Volk, A.; Garcia, G.C.T.E.; Nicolas, F.; Robert, P.; et al. From Dose Reduction to Contrast Maximization. Invest. Radiol. 2022, 57, 527–535.

- Confavreux, C.; Vukusic, S. Natural History of Multiple Sclerosis: A Unifying Concept. Brain 2006, 129, 606–616.

- Thompson, A.J.; Banwell, B.L.; Barkhof, F.; Carroll, W.M.; Coetzee, T.; Comi, G.; Correale, J.; Fazekas, F.; Filippi, M.; Freedman, M.S.; et al. Diagnosis of Multiple Sclerosis: 2017 Revisions of the McDonald Criteria. Lancet Neurol. 2018, 17, 162–173.

- Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image Quality Assessment: From Error Visibility to Structural Similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

- Narayana, P.A.; Coronado, I.; Sujit, S.J.; Wolinsky, J.S.; Lublin, F.D.; Gabr, R.E. Deep Learning for Predicting Enhancing Lesions in Multiple Sclerosis from Noncontrast MRI. Radiology 2020, 294, 398–404.

- Foroughi, A.A.; Zare, N.; Saeedi-Moghadam, M.; Zeinali-Rafsanjani, B.; Nazeri, M. Correlation between Contrast Enhanced Plaques and Plaque Diffusion Restriction and Their Signal Intensities in FLAIR Images in Patients Who Admitted with Acute Symptoms of Multiple Sclerosis. J. Med. Imaging Radiat. Sci. 2021, 52, 121–126.