| Version | Created by | Modification | Content Size | Created at |
|---|---|---|---|---|
| 1 | Guenther Witzany | + 13560 word(s) | 13560 | 2020-09-23 11:55:06 |
| 2 | Guenther Witzany | Meta information modification | 13560 | 2020-09-23 18:05:23 |
| 3 | Catherine Yang | Meta information modification | 13560 | 2020-09-24 03:06:53 |
| 4 | Guenther Witzany | Meta information modification | 13560 | 2020-09-24 08:44:04 |
| 5 | Guenther Witzany | Meta information modification | 13560 | 2020-09-24 16:29:02 |
| 6 | Guenther Witzany | + 10 word(s) | 13570 | 2020-09-27 13:58:29 |
| 7 | Guenther Witzany | + 21 word(s) | 13591 | 2020-10-03 10:09:35 |
| 8 | Karina Chen | Meta information modification | 13591 | 2020-10-26 09:52:26 |
| 9 | Catherine Yang | Meta information modification | 13591 | 2020-11-05 11:41:03 |
| 10 | Catherine Yang | Meta information modification | 13591 | 2020-11-06 09:57:06 |
| 11 | Catherine Yang | Meta information modification | 13591 | 2020-11-09 03:47:25 |
| 12 | Catherine Yang | Meta information modification | 13591 | 2020-11-09 09:59:01 |


Is there any reason to believe that a modern natural philosophy makes sense? The history of natural philosophy is marked by the search for principles that determine all beings, regardless of whether they are abiotic matter or living organisms. Empirical data on the key features of life contradict even the possibility of finding such principles, because life, in contrast to abiotic matter, has some main characteristics that are completely absent on abiotic planets. This means that if a modern natural philosophy is to be of any benefit, it must be divided into a natural philosophy of physics or cosmology and a natural philosophy of life. If it is possible to give an updated definition of life that is empirically based, non-reductive, non-mechanistic and free of metaphysical assumptions, this would be an appropriate basis for a global consensus on how the future of humans may be shaped in symbiosis with the global biosphere. Considering the billions invested in health and drug research, a new natural philosophy of life could orient future research on health and new drugs and help avoid misinvestments.

1. Introduction

In the last half century there was not only a tremendous expansion of research but also a parallel development of commercial applications for fighting diseases. In the twenty-first century, investment in drug research and development in the United States was more than $500 billion. But, as indicated by official reports, in contrast to investment in research and development of appropriate techniques in the material sciences (increased to 45%), applicable output in the life sciences remained at approximately 15%. Therefore, in addition to the theoretical deficits in the justification of scientific sentences in biology, we also have to evaluate whether the paradigm that has dominated the way we speak about life for the last 60 years was the appropriate tool for the development of new drugs. To initiate a discourse between the natural philosophy of life and the biological disciplines, we have to become clear about the basics and foundations of how to think and speak about living nature.

Let us look at a short overview of classical occidental philosophy as propounded by the ancient Greeks and of the natural philosophies of the last 2000 years until the dawn of the empiricist logic of science in the twentieth century, which wanted to delimit classical metaphysics from the empirical sciences.[1] Linguistic and communicative vocabulary as a crucial tool in scientific foundations and the methodology of philosophy of science has been in use for 70 years. Before this time, empirical descriptions of non-living nature and even living nature derived from metaphysical constructions with a long and complex history embracing the most prominent thinkers in occidental philosophy. All of them tried to give answers to the classical antinomies which derived from the Athenian school of Greek philosophy.

Before I give a short reconstruction of the metaphysics of nature I want to give a synopsis of what the metaphysical thinking opposed. It was a central paradigm shift in human history: the change from a myth-based self-understanding with its focus on cultus and ritus and the strict hierarchy of norms and traditions within which a tribal society was interwoven.

2. Metaphysical vs. Mythological Construction of Nature

Pre-metaphysical hierarchies are mythology-based forces of creation. Nature spoke to humans through animals and plants and through natural forces such as thunder, wind, water and fire. The order of the world was self-evident. Animals were not ranked as inferior to humans but as equals. As animals are different, so are humans different. The self-evident order of the world is a cosmocentric law which rules over animals and humans. The myth of the change from nature before humans to nature with humans does not resolve the status of nature without humans. The mythology of pre-metaphysical tribal societies definitively suppresses the destruction of nature. Nature is a kind of holy being within which non-holy humans are embedded. Therefore humans have to act accordingly. Humans in pre-metaphysical tribal societies were not only ecological experts. Their educational systems were holistic ones, each member being required to be familiar with their surroundings, such as plants, animals, climate, annual cycles and repetitions, and the interdependencies of inner and outer nature. Each member of this kind of human society was also familiar with the ethics and norms of tribal traditions in social affairs.

A different relationship with nature was constructed in the metaphysical thinking of the classical Greek philosophers. At the basis of the western occidental-modern world view and technical-scientific modernity we find Greek cosmology. Its competing metaphysical world views are extensively developed constructions, built according to logic and methodology, which offer completely different answers to the questions of the myth-based lifeworld. The change from a natural being into a society-based being is an irreversible process. The separation of the survival of society from the survival of non-human nature indicates a newly derived hierarchy in which culture (i.e. the inner nature of humans) has primacy over nature (outer nature). The subordination of society to an omnipotent creator god and his plan of creation is followed by the subordination of non-human nature to human nature. The hierarchy is strict and structured: the primacy of gods, followed by humans and, last, the rest of nature. The age of unity between humankind and nature is broken irreparably. The order of the world is no longer self-evident but is given by god and is supernatural. God thinks prime pictures whose depictions are manifested in a great variety here on earth and in the cosmos.

The invention of the general term as a crucial tool of the technique of abstract thinking clearly divides the metaphysical interpretation of nature from the pre-metaphysical one. The rationalisation of world views occurred in parallel with the differentiation and growing complexity of writing. The development and practice of the technique of writing freed the transmission of tradition from the ancient practice of oral tradition. Now it was possible to read about the history and myths of tribal societies even if they were far away or no longer present. From now on, the metaphysical philosophy of nature had to answer the questions of the classical antinomies: the relation between the whole and its parts and between (static) being and (dynamic) becoming. There are three mainstream conceptions within the metaphysical philosophy of nature in the occidental tradition of philosophy of the last 2000 years. All philosophical conceptions of the last 2000 years are part of one of these mainstream paradigms.[2] We differentiate:

• Monistic-organismic world views

• Pluralistic-mechanistic world views

• Organic-morphological world view

2.1. Monistic-Organismic World Views

The main principle of all monistic-organismic world views is holism (all is one). What we experience as a broad variety of things and processes constituting this world is attributed to one main principle. Within this world view the multitude of beings is deduced from one driving force. The cosmos is a whole; the many things are epiphenomena which seem to be many but in reality are only parts of the whole. Inside and outside are two aspects of the one and whole reality. What seem to be many are only different moments of the one and all. According to the different wholes in this monistic-organismic world view (life, soul of the world, world-mind, god) there is a differentiation between physical, metaphysical and pantheistic monism. If the main principle is life we speak of hylozoism, if it is the soul, of panpsychism, if it is divine, of pantheism. In all of these monisms there is one and only one main principle behind all things. In the history of philosophy we can differentiate various developments of these monisms, such as pre-Socratic hylozoism (Thales of Miletus, Anaximenes, Heraclitus), the cosmic pantheism of the Stoa (Seneca, Epictetus, Marcus Aurelius, Cicero), the pantheistic emanationism of Plotinus (Ammonius Saccas, Plotinus, Scotus Eriugena and later on Spinoza, Hegel), the aesthetic pantheism of Giordano Bruno and his monadology, which was further developed by Leibniz and the pre-critical Kant in his metaphysical dynamism, and later on Hamann, Kierkegaard, Schelling, Goethe, Novalis, Hölderlin, Rilke, Rudolf Steiner. In a certain sense, this monistic world view is also exemplified in rationalism with its contention that the whole world can be imagined as, and integrated within, one objective and logical system which we need only investigate long enough to integrate all things into this one and only system, as thought by Spinoza. This thinking also attracted Hegel.
The god of Hegel is living and organismic and emerges as world through dialectical processes of birth, death and the next level of being. In its organismic variation we find monistic evolutionism in Clifford, Huxley, Darwin and Spencer. One law determines the whole universe. This absolute unifying law is the law of development. Differentiation and integration are the everlasting potentials of this law. The emergentism of Samuel Alexander postulates the one and only world matter, which is the material out of which all things are formed. The many parts and processes are events which emerge out of this world matter, which is ultimately identical with god. Another, much more recent philosophy is the pan-vitalistic metaphysics of France with Maine de Biran and Bergson. From their strictly anti-mechanistic and anti-rationalistic view they propagated a self-enforcing power of all living things, or as Bergson called it, the Bios, the principle of creation in the whole of reality which is the driving force of the whole universe. This vitalism is integrated within certain other holisms which tried to unify this world view with the knowledge of modern natural science, with such proponents as Haldane, Meyer-Abich, Wheeler, Whitehead, Bohm and Capra. Another kind of organic monism is the dialectical materialism of Engels, which is a counterpart to Hegel’s idealistic monism. The whole and the one is more than the sum of its parts. The parts per se have no value; only in sum is the whole the main value. The monistic-organismic world view is also present in twentieth-century physics. Searching for the last common invisible matter, or the elementary parts of all matter, or the last and one formula (Stephen Hawking) through which all can be explained or which represents the ultimate law of all being, are variations of ‘all is one’ metaphysics. The particles at the subatomic level are not parts in their own right.
They are parts which are all constituted by lower and smaller parts, and ultimately they are quanta, quantum parts[3] or, as Einstein noted in 1950, electrical field densities which we see as corpuscles but which in reality are condensed out of a universal field of energy.[4]

The driving force of these monistic-organismic world views derives both from the presumption of the unification of thinking and being (without critical reflection on language) and from a kind of idealistic rationalisation of experiences such as separation, transitoriness, contradiction, and fear of reality and of the new and unexplainable. The many parts we experience are ultimately all in one: a common principle, the last and ultimate law and formula or, in its theistic variation, the basis of all religious social orders.

2.2. Pluralistic-Mechanistic World Views

In strict opposition to the monistic-organismic world views there are the pluralistic-mechanistic ones. Their main principle is: all is endless plurality (all is many). In contrast with the holistic one and only of the monisms, in the pluralistic view everything is built of indefinite numbers of corpuscles, the smallest parts. If we look at or experience things, persons or objects, they seem to be entities, but in reality they are the sum of these smallest corpuscles. These last, smallest entities are unchangeable and everlasting. A real becoming out of nothing, i.e. a real de novo emergence, which means a movement from not being into being, may be a construction in our consciousness but has nothing to do with any reality. These ultimate single corpuscles can be brought into forms or can even be mixed, but this does not change anything within them. In their outer nature they can be moved and change their relation to one another, but in their substance they are unchangeable. Any movement is caused from outside and is purely mechanistic. Parmenides was one of the first thinkers to propound this world view. For him movement is also an illusion, because it is a line-up of the smallest unmoved static states of things. A hundred years later the atomistic school changed the philosophy of Parmenides in one crucial aspect. Leucippus and Democritus now believed the experience of multiplicity, changeability and movement to be reality. The hylomorphistic conception of Aristotle displaced this world view until it was revived by Pierre Gassendi in 1649. He constituted the philosophy of mechanistic atomism in a new way. Robert Boyle described this mechanistic atomism. He observed that, in contrast with older forms of atomism, matter is an assembly of different basic elements. His philosophy focused on investigations and research into these basic elements. A hundred and fifty years later Proust and Dalton postulated real atoms in the so-called laws of constant and multiple proportions.
The term ‘molecule’ was then developed, and at the turn of the twentieth century it became increasingly clear that atoms are not atoms, because they are constituted by a variety of dynamic entities which can emerge as corpuscles or waves. This fundamentally contradicted the term ‘atom’. Although atomism was shown to be a misinterpretation of nature, mechanism as such has remained a successful model until today.

René Descartes observed earlier that the concept of indivisible corpuscles is dubious in principle. Rationalistic investigation can experience only mathematical relations, and the reality of matter can only be viewed as proportion and dimension. We can only understand machines because all their functions can be reconstructed by investigating the function of their parts. These parts of the world machine are first thought by god and later produced. The parts of being within Descartes’s thinking are purely dimensional and equal. Behind these qualities of the ultimate parts there is nothing else. They can be differentiated only in their size, geometry and configuration. Every phenomenon in the cosmic universe is a configuration and local movement of these parts. Additionally, all living beings function in the same way, purely mechanistically. Descartes’s strict mechanisation of everything within nature became a broad mainstream world view. The principles of mechanics were applied to the whole of physics and, as a result, this kind of physics became the basic science of all empirical research and investigation. A later figure was Newton, whose philosophy was founded on mechanistic principles. According to Newton, ultimate particles are created by god as massive particles. From this, the next step was the apodictic mechanism of Laplace, who stated that every single state within the world and cosmos is a strict effect of the foregoing causes. If there were a mind which could oversee all forces existing in nature, it would be able to predict every future development from this knowledge, because everything happens according to strict mechanical laws (Laplace’s demon).

Then came universal determinism: the state of every closed system at a certain moment determines its subsequent development for all time. The whole world as well as the universe is a big machine, constituted by an infinite quantity of parts, all of them subject to strict natural laws. With strict rational thinking nature has to be analysed in the minutest detail until all parts of nature are part of scientific knowledge. Then, someday, mechanical nature can be reconstructed completely and even optimised, unlike real nature. This is also valid for all living beings including humans, especially the human mind. This was also a basic conviction of Du Bois-Reymond, one of the main mentors of Sigmund Freud. In the twentieth century, Rudolf Carnap was also convinced that the psychological features of humans are a bundle of single physiological mechanistic processes and should be explained mechanistically.

2.3. Organic-Morphological World View

In between these two completely contradictory world views there is a third world view, which was worked out by Aristotle and the Neo-Thomists. The starting point of the so-called organic-morphological world view is the theory of levels, which includes a categorisation of the delimitations and differences between these levels. Nicolai Hartmann distinguishes four levels of being: the material, the vital, the psychic and the mental. These levels differ in their stages on the way to perfection, which depend on the translation of potentiality into actuality. At the material level potentiality dominates and actuality is low, i.e. the real matter of the world behaves according to natural laws. In the case of nuclear technologies, for example, much more actuality can be drawn out of single atoms: in a nuclear chain reaction actuality is nearly unlimited whereas potentiality approaches zero. The higher level integrates the lower one, although the lower one is not dissolved but gets a new function within the higher one. This means that reality is constituted by many ultimate smallest particles which develop, in real processual reality, into different forms which unite to become such things as the bodies of living beings. This organic-morphological world view strictly contradicts the monistic-organismic as well as the pluralistic-mechanistic world view. The relationships between these levels are determined by a set of laws:

(1) Law of autonomy: according to Hartmann, each layer of being is autonomously structured and the genesis of this autonomy cannot be fully derived from the next lower level. The mental level is therefore independent of the psychic level, the psychic of the vital, and the vital of the inorganic. This does not necessarily mean that the mental level lacks the psychic level, the psychic level lacks the vital one and the vital lacks inorganic elements. Rather it emphasises that each of these levels is characterised by features that can be found here and only here.

Within this law of autonomy there are two subordinate laws.

(a) The law of novelty: in each higher level, features appear which are lacking in the next lower level. These features represent a novelty, something new compared with the lower level. Such new features are neither a logical consequence in the development from the lower to the higher level nor can they be fully derived from the former.

(b) The law of modified, recurrent features: the laws of the lower level reappear in the higher level, never vice versa, but in a modified manner. Specifically, the laws of the lower are structurally and functionally integrated into the higher. For example, the laws of the inorganic level recur in the vital level, but under organisational principles of the vital level, i.e. in a constellation unknown in the inorganic level.

(2) Law of dominance: the laws specific to one level do not merely govern that layer. Within the overall organism, every higher level acts on all levels below it, without dismantling or negating them. Humans, for example, possess a vegetative nervous system whose function is largely independent of mental activity. This mental activity, however, can influence the psychic state and, by destabilising it, e.g. in extreme stress situations, have an effect on the vegetative nervous system.

(3) Law of dependence: each higher level is neither poised above nor determined by the lower ones, although a certain dependence does exist. The mental level functions on the basis of the psychic, this on the vital, and the vital in turn on inorganic substances. In the case of comatose patients, the vital level and the vital organisation of the inorganic matter comprising the body continue to function, but the psychic and mental levels are silenced.

(4) Law of distance: owing to the new, defining quality of a level of being, Nicolai Hartmann recognises a ‘metaphysical discontinuity’ rather than actual transitions between these levels. While representatives of approaches based on continuity theories have always postulated such transitions, no actual transitions have been found or convincingly reconstructed in the field of palaeontology. According to this law, nature, and even evolution, progresses in discrete steps. In contrast with the former two most prominent world views with their ‘all is one’ or ‘all are parts’, in this different world view being is a kind of processual reality with developmental stages from simpler to more complex structures. Also in contrast with the former conceptions, the occurrence of novelty which did not exist before is a special feature of being and cannot be logically or ontologically deduced from former stages. Movement, development and changeability, from the smallest inorganic parts up to the human mind, are an inherent potentiality of being and not a mere epiphenomenon or mixture of unchangeable smallest beings. The differences between the organic-morphological world view and the monistic-organismic and pluralistic-mechanistic world views are fundamental and unbridgeable.

3. Delimitations against Metaphysics

All schools of philosophy from antiquity and the classical Greek age up until the twentieth century tried to solve the classical problems of antinomy, i.e. (i) the relationship of the whole and its parts and (ii) (static) being and (dynamic) becoming. The short overview of the philosophical conceptions, their tendencies and motifs described a kind of philosophy which was to be strictly avoided by the philosophy of science called logical empiricism and, later on, critical rationalism.

The entire vocabulary and language game played in these metaphysical languages was a real nightmare for the proponents of the project of ‘exact science’. They were convinced they could find a language which could both exclude metaphysical language, inexact terms and apodictically claimed truth and, for the future, express empirical sensory data unambiguously and definitively. For logical empiricism and positivism, metaphysical questions have no subject matter; they therefore replace this kind of philosophy with the primacy of empirical scientific knowledge, materialism and naturalism. The absolute spirit as the highest intellectual level of theoretical thinking in German idealism was transformed into absolute objectivism. But, as we will see later, both the idealistic tradition and its materialistic counterpart, and even empiricism, share classical metaphysical positions such as (i) their claim to be an original philosophy and (ii) the identification of being and thinking. The latter in particular constructs an inner relationship between thinking and being: as we think, so being functions. The general, the necessary and the supratemporal can also be found in their terms. Empiricism and nominalism identified this self-misunderstanding. They resolved it into a multitude of entities without qualities. Only by the sensory organs of feeling subjects can these entities be registered and then be reconstructed within their imaginative apparatus.

Modern empiricism wanted to be freed from these metaphysical implications by a substantial and fundamental critique of metaphysics. Therefore the only serious value for science is the rationality of the methods of scientific knowledge, i.e. the formalisable (mathematical) expression of empirical sentences. This is strict objectivism, which restricts itself to a pure observer perspective that confirms its observations by techniques of measurement and subsumes reality in the formalisable depiction of these measurements. Between metaphysics and objectivism there is an unbridgeable gap: what can be found empirically and described in a formalisable way exists. Everything outside these criteria can be believed but is not a subject of the exact sciences. From now on objectivity is the main agenda of the natural sciences, subjectivity the subject of the human sciences. The interesting and ambitious programme of logical empiricism started as no scientific discipline had started before: with a fundamental critique of the sentences with which we describe observations and those with which we construct theories. This scientific approach was a fundamental shift in the history of philosophy. The main focus was no longer on things, the world or being but, conversely, on the medium in which we describe things, our opinions, impressions and experiences: language itself.

3.1. Linguistic Turn

To delimit exact scientific sentences from inexact sentences as they occur in philosophy and theology, the school of logical empiricism in the 1930s tried to construct a formalised language of the exact sciences according to the young Ludwig Wittgenstein and the theoretical construction which he outlined in the Tractatus Logico-Philosophicus.[5][6][7][8][9][10][11][12] With this formalised language of the exact sciences it should be possible to state the empirical results of experimental research exactly and without ambiguity. This means that every sentence with which observations are described, as well as the sentences which are used to construct theories, must fulfil the criterion of formalisability, i.e. they must be expressible as mathematics. Only sentences which fulfil this criterion should be termed scientific. Sentences which are not formalisable have to be excluded from science because they are not scientific sentences. By this procedure natural science should be established as exact science. Because the world functions exclusively according to the laws and principles of physics, this world can be depicted only by sentences of mathematics, which are able to express physical reality in a one-to-one manner. Natural laws expressed within the language of mathematics, i.e. formalisable, represent the inner logic of nature. The central part and most important element of language is therefore the syntax, because only through the logical syntactic structure of language is it possible to depict the logical structure of nature. Language as depiction of the natural laws of reality must therefore be formalisable in all its aspects. Because language is therefore seen as a quantifiable set of signs, it can also be expressed in binary codes (1/0). Meaning functions are therefore deducible solely from this formal syntactic structure.
Similarly to this model of language, cybernetic system theory and information theory investigate the empirical significance of scientific sentences on the basis of a quantifiable set of signs and, additionally, of the information transfer of formalised references between a sender and a receiver (sender-receiver narrative). Information-processing systems are therefore quantifiable themselves. Understanding information is possible because of the logical structure of the universal syntax, i.e. by a process which reverses the construction of meaning. Because of this theorem, information theory is also a mathematical theory of language.[13][14] Both constructions are founded on the assumption that reality can be depicted in a one-to-one manner only by formalisable procedures, i.e. formalised sentences. Exact science means the correspondence of thinking and being. Manfred Eigen adapted these models for biology in the last third of the twentieth century in the description of the genetic code as a language-like structure.[15]
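The view of language as a quantifiable set of signs expressible in binary codes can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions: the function names are hypothetical, the fixed-length code is one arbitrary choice among many possible binary codes, and the entropy measure is the standard Shannon formula from information theory, which the text above does not itself spell out.

```python
import math
from collections import Counter


def fixed_length_code(signs):
    """Assign every distinct sign a binary codeword of equal length (hypothetical helper)."""
    alphabet = sorted(set(signs))
    # Number of bits needed to distinguish all signs; at least one bit.
    width = max(1, math.ceil(math.log2(len(alphabet))))
    return {s: format(i, f"0{width}b") for i, s in enumerate(alphabet)}


def encode(message, code):
    """Sender side: replace each sign by its codeword."""
    return "".join(code[s] for s in message)


def decode(bits, code):
    """Receiver side: reverse the construction by cutting the bit string into codewords."""
    width = len(next(iter(code.values())))
    reverse = {v: k for k, v in code.items()}
    return "".join(reverse[bits[i:i + width]] for i in range(0, len(bits), width))


def entropy_bits_per_sign(message):
    """Shannon entropy of the sign frequencies, in bits per sign."""
    counts = Counter(message)
    n = len(message)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


code = fixed_length_code("abba")
bits = encode("abba", code)      # "0110"
restored = decode(bits, code)    # "abba"
```

Note that the entropy measure depends only on how often each sign occurs: `entropy_bits_per_sign("dog bites man")` and `entropy_bits_per_sign("man bites dog")` give the same value, because both messages consist of the same signs in a different order.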

3.1.1. Gödel’s ‘Incompleteness Theorem’ and Real-life Languages

A similar situation is encountered in the attempt to absolutise mathematics as that pure formal language whose every ramification might become fully transparent. This led Gödel to formulate the Unvollständigkeitssatz (incompleteness theorem) in his work Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme.[16] Gödel investigated a formal system by applying arithmetic and related deduction methodologies. His aim was to convert metatheoretical statements into arithmetical statements by means of a specific allocation procedure. More precisely, he strove to convert the statements formulated in a meta-language into statements of the object language S by using the object language S itself. This led Gödel to two conclusions:

• On the assumption that system S is consistent, it will contain at least one formally indeterminable (undecidable) proposition, i.e. one proposition is inevitably present that can be neither proved nor disproved within the system.

• On the assumption that system S is consistent, this consistency of S cannot be proved within S.
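The ‘allocation procedure’ mentioned above, by which statements about a formal system are turned into numbers the system can itself talk about, can be sketched in a few lines of Python. This is a toy illustration, not Gödel’s original construction: the symbol table `SYMBOLS` and the function names are hypothetical, and only the general idea of coding a symbol sequence as a product of prime powers is taken from the standard presentation of Gödel numbering.

```python
def primes():
    """Yield 2, 3, 5, ... by trial division (sufficient for short formulas)."""
    found = []
    n = 2
    while True:
        if all(n % p for p in found):
            found.append(n)
            yield n
        n += 1


# Hypothetical code table: each symbol of the object language gets a positive integer.
SYMBOLS = {"0": 1, "S": 2, "=": 3, "+": 4, "(": 5, ")": 6}
REVERSE = {v: k for k, v in SYMBOLS.items()}


def godel_number(formula):
    """Encode a symbol string as 2^c1 * 3^c2 * 5^c3 * ..., where ci is the code of symbol i."""
    g = 1
    for p, symbol in zip(primes(), formula):
        g *= p ** SYMBOLS[symbol]
    return g


def decode_godel(g):
    """Recover the symbol string by reading off the exponents of successive primes."""
    out = []
    for p in primes():
        if g == 1:
            break
        exponent = 0
        while g % p == 0:
            g //= p
            exponent += 1
        out.append(REVERSE[exponent])
    return "".join(out)


n = godel_number("0=0")       # 2**1 * 3**3 * 5**1 = 270
formula = decode_godel(n)     # "0=0"
```

Because prime factorisation is unique, every formula receives exactly one number and can be recovered from it. Statements about formulas thereby become statements about natural numbers, which is what allows a sufficiently rich system S to express propositions about its own provability.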

Today, several branches of mathematics are considered indeterminable. Herein lies the consequence of this indeterminability theorem for the automaton theory of A. Turing and J. v. Neumann: a machine can, in principle, calculate only those functions for which an algorithm can be provided. Functions lacking an algorithm are not calculable. Every cybernetic, self-controlling machine is the realisation of a formal system. For each of these machines, as in the case of every organism, there must be an indeterminable formula. It is precisely by means of a non-formal language that this formula can be shown to be true or false; this non-formal language is the very tool that enables the language itself to be discussed. The machine is unable to do this because no algorithm is available with which a cybernetic machine can determine its underlying formal system. Systems theory is, in principle, unable to fulfil these demands. The fact that the paradoxes arising within a formal language cannot be solved with that language led to a differentiation between object language and meta-language. Nonetheless, paradoxes can also appear within meta-language; these can only be solved by being split into meta-language, meta-meta-language and so forth in an infinite number of steps. This unavoidable gradation of meta-languages necessitates resorting to informal speech, developed in the context of social experience, as the ultimate meta-language. It provides the last instance for deciding on the paradoxes emerging from object- and meta-languages. Neither the syntax nor the semantics of a system can be constituted within that particular system without resort to the ultimate meta-language. The ambition to provide logic and mathematics with a priori validity is no longer tenable: an unambiguous linguistic foundation of science, one beyond further inquiry and supporting itself through direct evidence, cannot be secured.
Language proves to be a perpetually open system with regard to its logical structures and cannot guarantee definiteness from within itself. To summarise this chapter:

• There can be no formal system which is entirely reflectable in all its aspects while at the same time being its own metasystem.

• Concrete acts and interactions are basically unlimited in their possibilities. There will always be lines of argumentation that lie outside and have no connection with an existing system. Basically, every system can be transcended argumentatively. Newly-emerging language games and rules may develop as novel structures which are foreign to previous systems and not merely a further step in a series of prevailing elements. These very discontinuities enable totally new language applications.

• The ultimate meta-language, informal language, provides indispensable evidence about the communication practice of subjects in the real environment; the operator of formalisations is itself an integral part of this. Reverting to this everyday type of communication reveals information about the subjects practising this usage. In this sense, pragmatism becomes the theoretical basis both for formal operation and for a non-reductionistic language theory.
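
The diagonal construction behind the indeterminability results discussed in this chapter can be illustrated with a minimal sketch. It is not part of the original argument, and the names `diagonal` and `listed` are hypothetical. The sketch assumes only a finite list of functions on the natural numbers and builds a function that differs from the i-th listed function at input i, so it cannot be any function in the list. For a finite list the diagonal function is of course itself calculable; the sketch only shows the principle that any given list can be transcended from outside.

```python
from typing import Callable, Sequence

IntFn = Callable[[int], int]


def diagonal(functions: Sequence[IntFn]) -> IntFn:
    """Return a function that differs from functions[i] at input i."""
    def d(n: int) -> int:
        if n < len(functions):
            # Change the value on the diagonal so d cannot equal functions[n].
            return functions[n](n) + 1
        # Outside the listed range any value will do; 0 is an arbitrary choice.
        return 0
    return d


# Hypothetical example list of functions on the natural numbers.
listed = [lambda n: 0, lambda n: n, lambda n: n * n, lambda n: 2 ** n]

d = diagonal(listed)

# d disagrees with every listed function at that function's own index.
differs = [d(i) != f(i) for i, f in enumerate(listed)]
print(differs)
```

The construction never inspects how the listed functions are defined; it only uses their values, which is why the same step can be repeated against any extended list.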

3.1.2 The Roots of the Idea of an ‘Exact’ Scientific Language

Logical empiricism and critical rationalism fail in their attempt to construct a pure language of logic and mathematics as a delimitation from non-scientific, metaphysical sentences. The failure is hidden in their own metaphysical concept of language, upon which they cannot reflect because they reduce the main structures of language and communication to syntax alone. Let me reconstruct the developmental history of this misconception of language. Its origin can be clearly identified in Plato’s depiction theory of language. He was convinced that the cosmos and the world can be sufficiently depicted by mathematics (following Pythagorean motifs). This clearly derives from his concept of ideal archetypes (the thoughts of god) which we find in this world in a variety of inexact depictions. With this language (mathematics) mankind can participate in these thoughts of god, i.e. the ideal archetypes. Aristotle shares the view of language as a tool, but in a crucial aspect he changes Plato’s conception: language acts as an expression of the inner conceptions; the logical order of the linguistic sign system we use represents the logical order of nature in general. Here, in the idea of an ideal language which can depict nature one-to-one, lie the foundations of the concept of the exact scientific language. The Aristotelian tool ‘language’, which functions like the tools with which we calculate mathematically, was further developed by Hobbes and Leibniz, who investigated this relationship between language and mathematics. Leibniz’s intention was similar to that of his twentieth-century counterparts: to define a syntactic-semantic construction of our thinking in symbols and thereby to eliminate all misunderstandings and obscurities within the sciences. Even the concept of the young Wittgenstein postulates that behind everyday language hides the logical form of a universal language (as postulated by Leibniz). 
Within this logical form of language we can find the intersubjective and valid depiction of the fundamental facts. We have to express such fundamental facts by using elementary sentences (Elementarsätze). By using these elementary sentences we can logically reconstruct any sensible sentence. The meanings of words within a language are presumed to be non-variable substances which are coherent with material substances. Exactly at this point the modern empirical concept of language is congruent with the metaphysical conception of Aristotle. This is the basis of the theory that language does not transport real contents but exclusively structures: the signs used within a language are variables, which have to be filled (like an empty container) by communication partners from their pool of private experiences. Communication is therefore a process which starts with private encoding, continues with technical transmission via a medium or communication channel, and ends with private decoding by the receiver (sender-receiver narrative). The interpretation of the contents transported in the messages is purely a private matter. The unchangeable thing, the material reality, is only the logical structure of the language used. The starting-point, again, is the Aristotelian logic of subjects and predicates, which is taken to be the real depiction of the order of being. This was picked up by scholasticism: ontology, the order of being, can be depicted in the Latin language. As noted before, according to Leibniz this should produce a pure and logical form of speech, leading to his programme of a characteristica universalis which works, independently of any meaningful content, as a universal language of the sciences. 
Note that here we can find Chomsky’s meaning-independent, syntactical structure of universal syntax.[17][18] This is exactly the purpose defined by the young Wittgenstein: to depict objective reality by one language, the language of mathematics and logic.

3.1.3 The End of Linguistic Turn

In his later work, Philosophical Investigations, Wittgenstein refuted the concept which he had worked out earlier.[19] The main characteristic of this pragmatic turn was the abandonment of the ideal of a world-depicting universal language. In contrast to former concepts, which held that behind any language there is a material reality which determines the visible order of languages (e.g. universal laws, universal syntax), Wittgenstein proved that this is not the case. The most essential background of language is its concrete use by interacting humans. The real use of a language is always the unity of language and actions. This unity of language and actions Wittgenstein called Sprachspiel (language game): a game because, as in every game, certain rules are valid in language too. It is not possible to go behind the practice of a life-form through explanations or foundations. Language itself is the last bastion as the real practice of actions.

Language as practical action is an intersubjective phenomenon. To insist on this fact and to demonstrate that methodological solipsism is unsuccessful in principle, Wittgenstein worked out the proof of the impossibility of a private language. In his analysis of the expression ‘to obey a rule’, Wittgenstein provides proof that the identity of meanings logically depends on the ability to follow intersubjectively valid rules with at least one additional subject; there can be no identical meanings for the lone subject. Speaking is a form of social action. The rules of language games have developed historically as ‘customs’ from real-life usage. Such customs may even function as institutional regulations within societies. The practice of a great variety of language games is therefore the self-regulating practice of societies. To understand the rules, you must play within such a game. Then you can see the meaning of a term, because as a co-player you gain experience of how a term is used within this game, which rules determine its meaning and how the rules may change according to varying situations.

Participation in common language games, as the precondition for the process we term ‘understanding of words and sentences’, replaces the methodological-solipsistic ‘empathy’ by which the former concepts fill logical structures from a private pool of experiences. In the course of further discussions in the philosophy of science it became increasingly clear that the validity claims of the linguistic turn could not be fulfilled.[20][21] Artificially constructed languages such as formalisable mathematical languages are totally different from natural languages such as the everyday language with which humans coordinate and organise their daily routine. A variety of problems of formalisable scientific languages could not be solved in principle: primary as well as boundary conditions, but also terms of disposition such as ‘soluble’, ‘magnetic’, ‘practicability’, ‘progress in the cognition process’ or ‘visible’, are not formalisable. Additionally, neither the verification criteria (Carnap) nor the falsification criteria (Popper) managed to delimit empirical sentences from non-empirical ones.[22]

The attempt of the linguistic turn to install logic and mathematics as the foundation of the real sciences, with unambiguous validity, had to be abandoned. Linguistic turn thinkers were convinced that mathematical languages are unambiguous because they depict reality in a one-to-one manner. In contrast to this, language even in its logical structure is an open system which cannot guarantee freedom from ambiguity. The long-lasting ideal of empiricism, to reduce every sentence to observation, was no longer valid. From then on, empirical theories had a very risky status, because they can be derived only partially and indirectly from hypotheses that rely on observations. We make observations. In the next step we form hypotheses. From these hypotheses we deduce conclusions. But these conclusions are covered by observation not completely, only partially and indirectly. The exclusion of the history of empirical research was also a failure, as Thomas Kuhn proved.[23] The historical set-ups and circumstances of research communities strongly influenced theory building and descriptions of observations. Objectivity from then on was not an unchangeable truth but depended on consensual procedures in a great variety of language games of scientific communities.

3.2. Postmetaphysical Thinking: Wittgenstein’s Pragmatic Turn

The self-definition of the ‘exact’ sciences inherently presumed that every observation can be reduced to this formalisable universal language. Unfortunately this failed. All attempts to translate the terms with which we express observations into terms of the theoretical language showed that this was not possible in an exact way. The universal depicting language remained a postulate that could not be satisfied by real processes. Curiously, metaphysics itself was the basis of the young Wittgenstein’s criticism of metaphysics. The depiction of the world by logical atomism, as in Russell’s and Whitehead’s Principia Mathematica[24], was unmasked as a secret metaphysics of logic itself. The supposition of an ‘identical logical structure of language’ which constitutes intersubjectivity a priori can only be simulated in computerised models, in artificial binary code languages which are based on formalisable procedures. But this has nothing to do with the social praxis and the socially shared lifeworld of human beings. Real-life everyday language can even speak about itself; it is its own meta-language. Exactly this is not possible for artificially constructed languages of science, which, in keeping with their own definition, cannot be their own meta-languages. This is also an inherent deficit of all concepts of artificial intelligence (AI).

3.2.1 The Fundamental Status of Communicative Intersubjectivity

Given the problems outlined above, a theory of communicative intersubjectivity could solve them and thereby provide a good basis for scientific rationality. This includes the withdrawal of reductionism as a formalisable term of language. Intersubjective interactions are characterised by reciprocal validity claims. To speak, make propositions and understand utterances does not function through a private encoding process followed by a private decoding process, but through a shared, rule-governed, sign-mediated reciprocal interaction. The shared competence in semiotic rules and the socialised linguistic competence to build correct sentences enable interaction partners to understand identical meanings of utterances. The only way to decide whether a mathematical formula is true or false is by using a non-formalisable language. You cannot decide this from within the formalisable language itself. With non-formalisable languages you can easily change from formalisable to non-formalisable languages and vice versa. This is impossible for the formalisable language itself. The contradictions within a formalisable language cannot be solved by this language. Therefore you need a meta-language. But some contradictions are also inherent in every meta-language.

The result of this discussion was that solving these problems and paradoxes within formalisable languages and meta-languages needs a non-formalisable everyday language. This non-formalisable everyday language must be postulated as the ultimate meta-language. Everyday language is based on concrete social experience. A further result of this discussion was that the foundation and justification of formalisable scientific languages is possible only through a reflection on communication practice in the concrete social practice of societies.[19] Communication is a kind of social interaction, and communication science therefore has to be seen as a kind of sociology.[25][26] The communicative practice of language game communities not only constitutes meanings in utterances but primarily guarantees self-identities in reciprocal interactions of common processes of social coordination and organisation. Only the analysis of this communicative practice enables us to find essential principles of structure and function of languages in general. Even natural scientists are part of language game communities. The natural scientist does not start speaking and thinking only when he or she starts university. Prior to this the scientist learnt linguistic and communicative competences within social interactions, as do all humans capable of language and communication. In this discussion it became increasingly clear that every language as a sign system depends on communicative agents.[27] The project to found and justify an exact scientific language failed, but it led to a highly differentiated and long-lasting reflection on language and communication which had never occurred before. A further result of this new subject of scientific research was interest in the roots of language and communication: reflection on the inherent historicity of the interacting subjects. 
This means that within science this led to reflection on customs and practice of scientific communities in the light of the history of sciences.[23][28]

Even scientific languages are developmental processes of the practices of historically grown scientific communities. The pragmatic turn replaced the linguistic turn because from then on it was not syntax and semantics that were the central focus of investigations about languages but (i) the subjects which interact with languages as well as (ii) the pragmatic aspects in which these interacting agents are interwoven and which determine how an interactional situation is able to be constituted as such. The complementarity and non-reducibility of the three levels of rules which are at the basis of any language used in communicative actions were commonsense elements.[29] Language therefore is not solely the subject of scientific investigations of a technique for information storage or transport but depends primarily on language-using subjects with linguistic and communicative competences in real social contexts of a real lifeworld.[30][31] On the other hand, it is not possible to develop an exact language of science which functions like natural laws in inorganic matter, because scientific languages are also spoken by real-life subjects, and the validity claim of objectivism to eliminate all inexact parameters of subjects does not function even in the scientific language game. Also, scientific languages depend on utterances which are preliminary; they are as open as any real-life language and therefore can generate real novelties, new sentences which did not exist before, and are thus able to progress in knowledge. Because utterances in scientific languages are subject to the discourses of scientific communities and are constantly under pressure of foundation and justification, they may contribute ‘in the long run’ (Peirce) to this progress in knowledge.[32]

The meaning of words is not the result of syntactic structures solely but depends on the context within which language-using individuals are interwoven. In the realm of this discourse on the role of language and communication in science and society the primacy of pragmatics, the level of contexts within which sign-using subjects are interwoven, became evident. The explaining-understanding controversy was solved by a pragmatic communication theory which lay behind the positions of classical hermeneutics and integrated speech-act theory.[33] In contrast with all former concepts this pragmatic communication theory replaced the subject of knowledge of Kant (solus ipse) by communicative intersubjective consortia of subjects that share communicative competences which enable these consortia to communicate internally as well as externally. Only on this basis of communicative actions is a common understanding of identical meanings of utterances possible. This is valid also for coordination and organisation of societies.

4. Manfred Eigen’s Depiction Theory of Language

Manfred Eigen did not integrate these results into his concept. Eigen compares human language with molecular genetic language explicitly.[34] Both serve as communication mechanisms. The molecular constitution of genes is possible, according to Eigen, because nucleic acids are arranged according to the syntax and semantics of this molecular language. Even the amino acid sequences constitute a linguistic system. Through this comparison Manfred Eigen follows the depiction theory of language within the tradition of empiricism, logic, mathematical language theory, cybernetic systems theory and information theory. The world behaves according to physically determinable natural laws. These natural laws can be expressed only by using the language of mathematics. The formalisable artificial language of mathematics is alone capable of realistically depicting these natural laws. Language in its fundamental sense is language as a formalised sign language. The natural laws are explications of the implicit order of mathematics and nature. Mathematical language depicts this logical order through the logical structure of the linguistic sign system. The essential level of rules of a language therefore is the syntax. Only through the syntax does the logical structure of a language as a depiction of the logical structure of nature come to light. Because both the identity of the logical order of the language in its syntax and the logical order of nature can be expressed in mathematics, this language is quantifiable and can be expressed in binary codes (1/0). The semantic aspect of language initially comprises an incidentally developed or combined sign sequence, a mixture of characters, which only gain significance in the course of specific selection processes. The linguistic signs are variables whose syntax is subject to the natural laws governing the sign-using brain organ. 
The brain of humans, for example, is endowed with these variables and combines them to reflect synapse network logics. The variable sign syntax of the brain then must be filled up with experiences of a personal nature and thus constitutes an individualised evaluation scheme. In messages between communication partners, one side encodes the message in phonetic characters. The receiver must then decode and interpret the message based on empathy and personal experience. Understanding messages shared between sender and receiver is largely possible because the uniform logical form – a universal syntax – lies hidden behind every language. The function of that organ which syntactically combines the language signs according to its own structure most closely corresponds in Eigen’s opinion to cybernetics, i.e. the theory of information-processing systems (while abstracting the manner of its realisation).[35] Functional units like the central nervous system, brain or even macromolecules consist of a definable, limited number of elements and a limited number of relationships between these elements. These systems, along with their description by means of a language, are depictions of a reality, structured by natural laws. Since both the logic of the describing and that of the theory constructing language correspond with the logic of the system, the relationship between these elements of the system can be represented in an abstract, formal and unambiguous manner.

From the perspective of man as a machine, humans clearly represent an optimal model: they fulfil all those preconditions for constructing algorithms that a conventional machine cannot deliver, i.e. criteria for information evaluation based on the real social lifeworld. Humans, and all other biological systems, resemble a learning machine capable of internally producing a syntactically correct depiction of the environment by interacting with this environment, of correcting this depiction through repeated interactions and thus of changing their behaviour according to the environmental circumstances. Such learning systems are able to continuously optimise their adaptability. The differences between nucleic acid language and human language stem from the continuous developmental processes of biological structures, based on the model of a self-reproducing and self-regulating automaton that functions as realisations of algorithms. This enables the steady optimisation of problem-solving strategies in organisms, eventually leading to the constitution of a central nervous system, a precursor ultimately giving rise to the brain and its enormous storage and information-processing capacity. Language enables the implementation of this evolutionary plan (from the amoeba to Einstein): this medium forms, transforms, stores, expands and combines information.

4.1. Falsification of Manfred Eigen’s Depiction Theory of Language

Even formal systems are not closed, as Eigen suggests, nor are they principally fully determinable.[36] Furthermore, language is the result of communicative interactions in dialogue situations rather than the result of constitutive achievements of the individual persons. Communicating with one another, sending messages, understanding expressions is not a private coding and decoding process, but rather an interpretation process arising from a mutual adherence to rules by communicating partners who agree on the rules. The ability to abide by these rules is innate, the skill in complying with particular rules is acquired through interactions and relies on norms of interaction to utilise words in sentences, i.e. a linguistic competence. Information cannot generally be quantified as message content: statements made by social individuals in situational contexts are not closed and, thus, are generally not fully formalisable. The attempt to construct a purely representational language is doomed to failure because formal artificial languages do not exclusively contain terms that are unambiguous. This pertains to terms that cannot be confirmed through observation.

Specifically, scientific statements are not attributable to immediate sensory experience, i.e. the language game used to describe observations does not mirror the brain activity during the perception of reality. A world-depicting exact language must remain a mere postulate because it cannot logically substantiate itself. Too many theoretical concepts, too many scientific criteria that are generally not formalisable (e.g. ‘progress in the cognition process’, ‘practicability’, etc.), point to the limits of formalisability. The very identity between artificial language and its form renders it incapable of reporting on itself, something that presents no problem when informal speech, i.e. everyday language, is used. Language is an intersubjective phenomenon: several individuals can share, alter and reproduce as well as renew the rules of language usage. The basis and aims of this usage are defined by the real social lifeworld of interacting life-forms. The user of a linguistic sign cannot be comprehended according to the speaker-outside world model. Rather, this requires reflection on the interactive circumstances to which the user has always been bound, circumstances which provide an underlying awareness enabling him/her to understand statements made by members of the real lifeworld. The user of formal artificial languages, before appreciating the purpose of the usage, has also developed this prior awareness in the course of interactive processes with members of the real social lifeworld. Speech is a form of action, and I can understand this activity if I understand the rules governing the activity.[30][31] This means I can also understand an act that runs counter to the rules. Everyday language usage reflects everyday social interactions of the constituent individuals. The prerequisite for fully understanding statements is the integration of the understander in customs of social interaction and not merely knowledge of formal syntactic-semantic rules. 
A prior condition for all formalisations in scientific artificial languages is a factual, historically evolved, communicative experience. This very precondition becomes an object of empirically testable hypothesis formation in Eigen’s language model. At this point, however, Eigen’s model becomes paradoxical because he seeks to grasp language theoretically with tools that are themselves linguistically predetermined. Eigen’s language model, which is rooted in information theory, clearly reveals that Eigen equates the form of theory language with the form of language used to describe reality (experience). This implies the equation of formalised scientific languages with the language used to describe observations. Previous attempts to specify all the rules governing the translation of every term in theory language into terms of observational language have been unsuccessful. Not all concepts of theory language can be transposed into concepts of the observational language. Eigen’s concept of language and communication can no longer be founded and justified.

5. Theory of Biocommunication: Biology as a social science

To avoid all the misassumptions presented above I decided to develop a new theory of life: The Theory of Biocommunication. A theory of biocommunication based on a pragmatic philosophy of biology could demonstrate on the basis of empirical data that living nature in its genetic structures is language-like and in its cells, tissues, organs and organisms is communicatively coordinated and organised.[37] Karl von Frisch has proved that the interactions between honey-bees are sign-mediated, based on body behaviours which function as symbols.[38] If this becomes the mainstream coherent description of biological processes then humans could leave their anthropocentric world view for a biocentric one, in which humans would take a new place as part of a universal community of communicative living nature. This could enable biology to leave behind its mechanicism and physicalism, which are unable to differentiate clearly between life and non-living matter. Biology could start to develop as a key science with much more coherence in describing animated nature. In going back to non-reductionistic terms of ‘language’ and ‘communication’, biology could make real progress in knowledge, which would help humans in general to ensure sustainable developmental preconditions for both humans and non-human living nature. This could be a real future option for human societies in the long run. 
Current molecular biology, genetics and cell biology investigate their scientific objects by using key terms such as genetic code, code without commas, misreading of the genetic code, coding, open reading frame, genetic storage medium DNA, genetic information, genetic alphabet, genetic expression, messenger RNA, cell-to-cell communication, immune response, transcription, translation, nucleic acid language, amino acid language, recognition sequences, recognition sites, protein coding sequences, repeat sequences, signalling, signal transduction, signalling codes, signalling pathways, etc. All these terms combine a linguistic and communication-theoretical vocabulary with a biological one, although most biologists are not very familiar with current definitions of ‘language’ and ‘communication’ or with the research results of linguistics, communication science, pragmatic action theory and sociological theories.

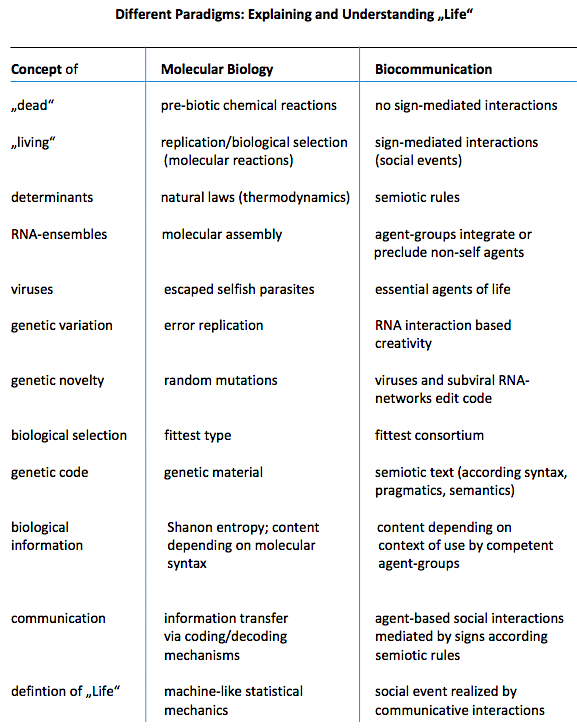

Table 1: The biocommunication approach explains life as a social event realized by communicative interactions of cells, viruses, and RNA consortia (ref. 91)

If we speak about (i) the three categories of signs, index, icon and symbol, (ii) the three complementary non-reducible levels of semiotic rules, syntax, pragmatics and semantics, and (iii) communication as interactions mediated by signs according to three levels of rules, it can easily be seen that all these categories are nearly unknown in biology. Most biologists who use linguistic and communicative vocabulary to describe biological or genetic features do this according to their methodological self-understanding as empirical natural scientists. ‘Language’ and ‘communication’ are investigated in the natural sciences behaviouristically or in the realm of formalisable procedures, i.e. algorithms with information-theoretical, statistical or systems-theoretical conclusions. In contrast with this, linguistics, communication theory, semiotics and especially pragmatics, as well as action theory, have tapped knowledge about language and communication which was unimaginable 40 years ago. It is completely different from the fundamental suppositions of older, mechanistic, behaviouristic and even information-theoretical definitions. In the light of this current empirical and theoretical knowledge it has become increasingly clear that the multiple levels of sign-mediated interactions which we call ‘communication’ cannot be explained or even sufficiently described by older models such as the ‘sender-receiver’ narrative or on the basis of the term ‘information’. Even physicalistic or mathematical definitions of language as quantifiable sets of signs have meanwhile all been falsified.[39] The current theory of biocommunication neither intends nor is able to replace empirical biology.[40][41][42][43] On the contrary, the recent quality of empirical biology is the prerequisite for a theory of biocommunication. 
Because the natural sciences are not familiar with appropriate definitions of ‘language’ and ‘communication’ as both terms are used in current action-theoretical investigations, this theory of biocommunication could act as a complementary tool, e.g. for biology, in the interpretation of available empirical data concerning biological affairs.

5.1. Biocommunication

The first draft of a theory of biocommunication was outlined in 1975.[44] Tembrock exemplified the three semiotic levels of syntax, semantics and pragmatics in great detail for several behavioural patterns within the animal kingdom. He focused on the transport of information via chemical, mechanical (tactile and acoustic) and visual signs. Although his investigations were conducted in a strictly empirical manner, Tembrock justified his approach according to a solipsistic model of knowledge and communication as we came to know it in the depiction theory of language: ‘There are built up inner models, of which the parameters are determined by the features of the circumstances, that are depicted by them’. His biocommunicative approach is therefore coherent with the sender-receiver model of information theory, i.e. a depiction theory similar to that of Manfred Eigen. Tembrock wants to demonstrate ‘exact’ science: he tries to formalise the semioses that he investigates, and sign-mediated interactions would therefore be a kind of mechanistic behaviour. The inherent features of language and communication, especially the possibility of innovative semiosis or the common understanding (and interpretation) of identical meanings, lie outside the realm of formalisable procedures.

In contrast with this empiricist approach, at the end of the 1980s I developed a pragmatic approach to biocommunication based on the results of the philosophy of science discourse in the twentieth century.[45] In this pragmatic conception of biocommunication I integrated the pragmatic turn in its methodological foundation as well as the complementarity of the three levels of semiotic rules. Additionally, and in contrast with theories of knowledge with a solipsistic foundation, it investigates its scientific subject according to the primacy of pragmatics, i.e. the real-life contexts in which communicative, intersubjective sign users are interwoven. The main focus of biocommunicative analysis is on the agents that use and interpret signs in communicative interactions. Because ‘One cannot follow rules only once’ (see above), speech and communication are kinds of social behaviour. It is therefore important to investigate group behaviour and group identity, and the pragmatic contexts in which groups are actively interwoven together with their history and cultural identity. These groups share a repertoire of signs and semiotic rules, with which they coordinate all necessary organisation of life. This biocommunicative approach investigates communicative acts within and between cells, tissues, organs and organisms as sign-mediated interactions. The signs consist in most cases of molecules in crystallised, fluid or gaseous form and are termed semiochemicals (Greek: semeion = sign). In higher animals we additionally find acoustic and visual sign use. Competent sign-using agents follow syntactic, pragmatic and semantic rules in parallel. These rules determine the realm of possible combinations of signs, as well as the interactional circumstances and the meanings of the signs within messages. No level of rules is reducible to another. This is a crucial difference from all similar concepts that bring together linguistics and biology.

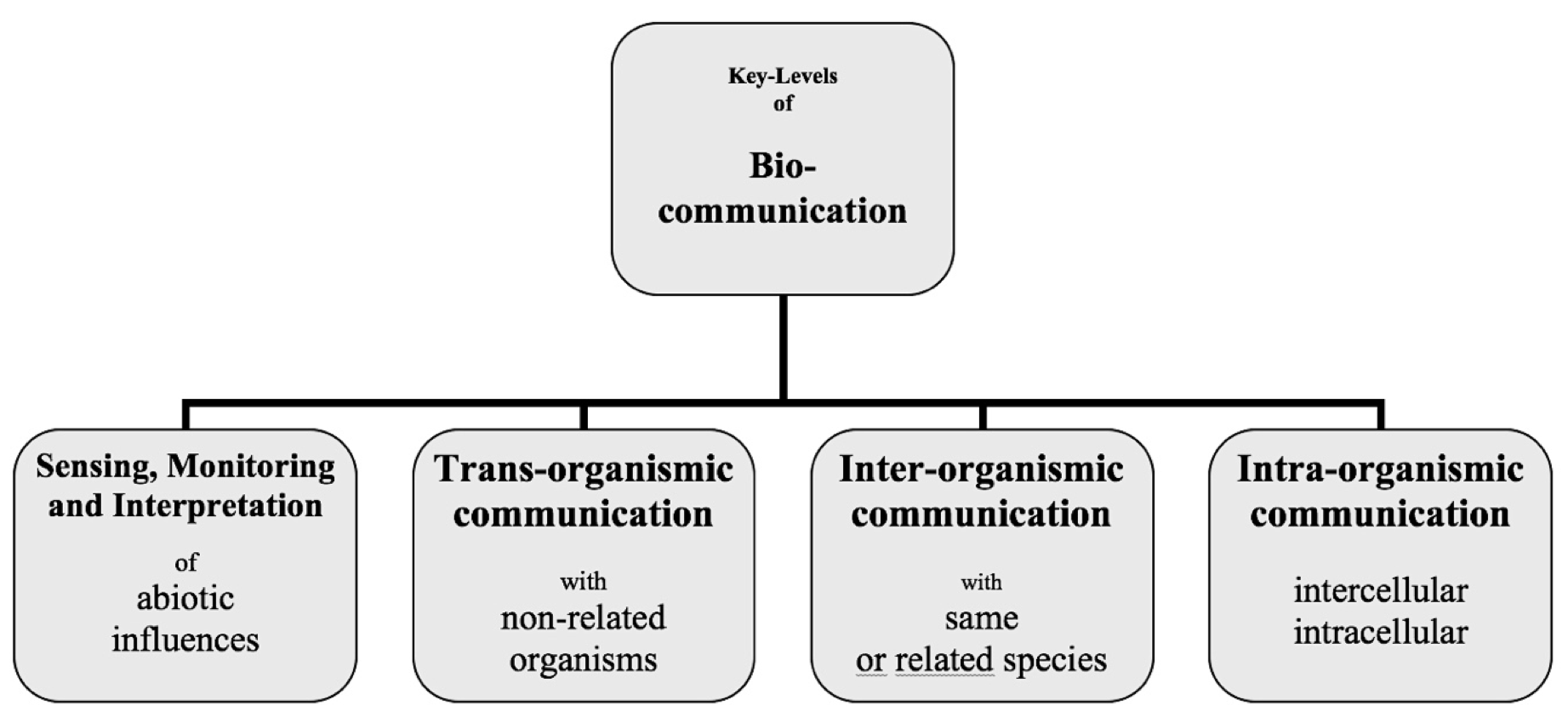

Figure 1: The biocommunication approach identified 4 levels in which cellular organisms are involved during their life.

Biocommunicative investigations concern archaea, bacteria, protoctists (eukaryotic unicellular organisms and their relatives), fungi, animals and plants.[46][47][48][49][50][51] Additionally, the biocommunicative approach investigates DNA/RNA sequences as code, i.e. a linguistic or language-like genetic text that is subject to combinatorial (syntactic), context-sensitive (pragmatic) and content-specific (semantic) rules. From the biocommunicative perspective, the interesting aspects are the linguistic (text-editing) and communicative (interaction-constituting) competences of viruses and virus-like agents such as self-replicating RNA species.[52] The generation of meaningful nucleotide sequences and their integration into pre-existing genetic texts, as well as the capability of these agents to combine, recombine and regulate these genetic texts according to context-specific (adaptational) purposes of their host organisms, are of special interest in biocommunicative research.[53]

5.1.1 Evolutionary History: History of interacting living agents

Communication in general can be understood as rule-governed, sign-mediated interaction. This is crucially different from chemical-physical interactions in inanimate nature, because those interactions are not governed by semiotic rules. This definition is equally valid for human communication and communication in non-human life.[54] Referring back to the rules of communicative rationality provides an opportunity to answer questions of evolutionary logic and dynamics as questions of interaction logic and dynamics.[45] Evolutionary history can then be understood as a developmental history of interacting living agents. A more detailed examination of research results in the biological sciences should yield structures that can unequivocally be interpreted as communication rules. Understanding nature would no longer be a metaphorical expression of reductionistic explanatory models, but rather would mean understanding interaction logic and dynamics in their regulative, constitutive and generative (innovative) dimensions. Unexpectedly, the source of the evolutionary novelty that is essential for diversity and its selection processes is then no longer found in the controversial theoretical concepts of undirected or directed mutation, of teleological vitalist metaphysics (more recently ‘intelligent design’), of the molecular biological self-organisation of matter (Eigen-Schuster narrative) or of increasing complexity as a self-emerging property of systems (Kauffman narrative). It is found instead in natural genetic content organisation by competent microbial/viral agents that cooperate for their survival goals, which may coincide with those of their hosts, as documented in the variety of endosymbiotic evolutionary processes.[54]

5.2. Key levels of biocommunication

At the beginning of the biocommunication approach I investigated signaling and sign use within communicative interactions in plants, animals (corals and bees), fungi, bacteria and viruses with respect to four levels of biocommunication (see Figure 1). Having translated empirical data into a coherent method of investigation to constitute a sustainable integrative biology, I invited leading experts in their fields to publish books on domains of life such as biocommunication in soil microorganisms, biocommunication of plants, biocommunication of fungi, biocommunication of animals, and biocommunication of ciliates and viruses. All this systematized research demonstrated the acceptance and practicability of this method for identifying all relevant signaling processes throughout living nature.[55]

(a) Sensing, memory and interpretation of abiotic indices: This level concerns the sensing, memory and interpretation of recent experiences against the background of memorised experiences with respect to abiotic indices such as temperature, light, gravity, wind exposure, moisture, etc. All these experiences have to be transported into the organism before an appropriate response behavior can be organized. If something goes wrong within these signaling processes, the response behavior may remain rudimentary, deformed or inappropriate for the survival of the organism.

(b) Intraorganismic communication: This level comprises all signaling processes within an organism. The organism must differentiate between sensing experiences that come from abiotic indices and receiving biotic information, i.e. certain behavioral patterns and semiochemicals that are produced not by the organism itself but by others outside it.

(c) Interorganismic communication: There is a repertoire of signaling molecules or tactile behavior which derives from species-specific members of a biological community. This means species communities share the ability to generate signals, sense signals, memorise and interpret such signals according to inherited or acquired background knowledge which is stored genetically or by epigenetic markings. As we know from many animals, and even fungi, plants and bacteria, such organism communities can develop ‘dialects’ which are a commonly shared context-dependent ‘culture’ of an ecological niche or specialized environmental circumstances that slightly differ from those of the same species in other ecofields.

(d) Transorganismic communication: Organisms also communicate outside these levels with other organisms that are not members of their species. We can term this transorganismic. We find such signaling processes in a variety of mutual interactions that range from all levels of symbiosis to parasitic behavior, in which only one participant benefits from the interaction and not the other. In general, no coordinated and organized interaction will take place without signaling, including attack or defense behavior. All of these interactional behavioral patterns have been described as mechanistic in recent decades. However, communicative actions are not mechanistic, although they may appear rather conservative and goal oriented. Such actions can change and adapt, from a slightly different reaction pattern up to a completely new behavior derived out of nothing. This is a key difference from mechanistic and reductionistic explanations.

5.3. Context determines meaning: Examples

Competent agents that generate and use signs to coordinate behavior in most cases use semiochemicals (semeion = sign) to transport messages. The repertoire of these semiochemicals, which are used as signs in signaling pathways or even in combinations as sign sequences, is rather limited in comparison with the variety of behaviors that have to be coordinated. In natural language/code use it is usual to use the same signs and sign sequences to transport different semantic contents (meanings). The context in which interacting living agents are involved decides the meanings of the signs. The following brief examples from plants, fungi, animals and epigenetics will demonstrate this.

Auxin is used in plants' hormonal, morphogenic and transmitter pathways. Because the context of use can be very complex and highly diverse, identifying momentary usage is extremely difficult.[56] For synaptic neuronal-like cell-cell communication, plants use neurotransmitter-like auxin and presumably also neurotransmitters such as glutamate, glycine, histamine, acetylcholine and dopamine, all of which they also produce. Auxin immunolocalization implicates a vesicular, neurotransmitter-like mode of polar auxin transport in root apices. Auxin is detected as an extracellular signal at the plant synapse in order to react to light and gravity.[57] However, it also serves as an extracellular messenger substance for sending electrical signals and functions as a synchronisation signal for cell division.[58] In intracellular signaling, auxin serves in organogenesis, cell development and differentiation. In the organogenesis of roots, for example, auxin enables cells to determine their position and their identity.[59] The cell wall and the organelles it contains help to regulate the signal molecules. Additionally, maternal auxin supply contributes to early embryo patterning. Auxin also serves as a growth hormone. Intracellularly, it mediates cell division and cell elongation. At the intercellular, whole-plant level, it supports cell division in the cambium, and at the tissue level it promotes the maturation of vascular tissue during embryonic development, and organ growth as well as tropic responses and apical dominance.[60]

In fungi, semiochemicals are used to transport certain meanings. Such meanings are subject to change and rely on different behavioral contexts, which differ under different conditions. In fungi such contexts concern, e.g., cell adhesion, pheromone response, calcium/calmodulin pathways, cell integrity, osmotic growth, cell growth and stress response.[61] In this context different modes of behavior can be organized by syntactically identical signaling. For example, in a variety of fungal species cyclic adenosine monophosphate (cAMP) may trigger or inhibit filamentous growth, positively regulate virulence, suppress mating, activate protein kinase, or directly or indirectly induce developmental changes.[62] Additionally, it should be mentioned that, depending on the real-life context, epigenetic regulation can suppress or amplify incoming or transmitted secondary metabolites, a rather important signaling resource in fungal organisms. As a result, a novel sequence does not have to be produced for every message.[63]

It is well known how bees communicate by using signs, especially movement patterns that serve as signs. Such movement patterns are termed bee dances.[38] Here we will look briefly at the waggle dance, which describes the direction of the destination in terms of the respective position of the sun and defines the distance. Although the ability to dance is genetically fixed, the full ability to perform a waggle dance that transports meaning depends on social interactions in the developmental stage of young bees, which means it also relies on the culture of the real lifeworld of the bees. Karl von Frisch demonstrated the existence of bee dialects, in that the same dance behavior can indicate rather different distances depending on the cultural background of the bees and their customs and traditions. As part of the foundation of a new colony, the waggle dance informs bees about appropriate new hives that scouts have found. The same dance behavior is used when the new hive is colonised and workers fly out to gather food for the hive.[38] Thus, in different situational contexts such as (1) searching for a new hive and (2) food gathering, the same sign sequence is used: in the first case to motivate other scouts to check whether the hive is suitable, and in the second to motivate worker bees to gather food. Interestingly, at the genetic level evolution has also found a technique by which certain environmental experiences of organisms can be stored as a memory tool, so that repeated experiences lead to better, faster response behavior, which aids adaptation.

Especially with the rise of epigenetics it has become obvious that it is not the syntax of the genetic text that serves as the primary information pool and blueprint for the development of an organism, but the epigenetic markings.[64][65][66][67][68] Such epigenetic markings arise from methylation patterns on the genome or histone modifications that mark special pathways for gene expression and transcription. These pathways determine long non-coding RNAs that in late transcription are split up into short non-coding RNAs and microRNAs relevant to all cellular processes such as transcription, RNA editing, splicing, translation, immunity and repair. Under extreme stress or dramatic environmental change, for example, epigenetic markings can change.[69] It has been reported that such stressful situations can reactivate the genomic sequences of grandparents and great-grandparents of plants if the genetic features of the parents are not sufficient to react appropriately to the stressful situation.[70] As we know, retroposons are stress-inducible elements that are active not solely in plants but also in animals. During mammalian maternal stress which occurs early in fetal life, such retroposon activation can induce non-Mendelian-inherited epigenetic traits.[71][72]
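The principle running through the examples in this section, that the same sign sequence acquires different meanings in different contexts, can be illustrated with a minimal sketch based on the waggle dance example. This is only an illustration and not a model of bee behavior: the names `WaggleDance`, `interpret`, `CONTEXT_TARGET` and `DIALECT_METRES_PER_SECOND` are hypothetical, and the calibration numbers are invented placeholders, not measured values. The sketch assumes that the situational context decides what the dance refers to, and that the 'dialect' of the colony decides which distance a given dance duration indicates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WaggleDance:
    """A sign sequence; the dance itself does not change between contexts."""
    angle_deg: float   # direction relative to the position of the sun
    duration_s: float  # length of the waggle phase, which indicates distance


# Hypothetical dialect calibrations (metres indicated per second of waggling).
# The numbers are placeholders for illustration only.
DIALECT_METRES_PER_SECOND = {"colony_a": 750.0, "colony_b": 1000.0}

# Situational (pragmatic) context -> what the dance refers to.
CONTEXT_TARGET = {
    "new_hive_search": "candidate hive site",
    "food_gathering": "food source",
}


def interpret(dance: WaggleDance, context: str, dialect: str) -> dict:
    """Derive the meaning of one and the same dance from context and dialect."""
    return {
        "target": CONTEXT_TARGET[context],
        "direction_deg": dance.angle_deg,
        "distance_m": dance.duration_s * DIALECT_METRES_PER_SECOND[dialect],
    }


# The identical sign sequence, interpreted in two different contexts.
dance = WaggleDance(angle_deg=40.0, duration_s=2.0)
print(interpret(dance, "new_hive_search", "colony_a"))
print(interpret(dance, "food_gathering", "colony_b"))
```

The syntax of the sign (`dance`) is identical in both calls; only the context and dialect arguments differ, and with them the meaning that results. A lookup table of this kind is itself a formalisation and therefore cannot capture the generation of new meanings that the text describes as lying outside formalisable procedures.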

5.3.1 Cells, tissues, organs, organisms