Chen, Z., Zhang, Y., Qi, X., Mao, Y., Zhou, X., Wang, L., & Ge, Y. (2024, January 26). Height Estimation with Aerial Images. In Encyclopedia. https://encyclopedia.pub/entry/54388


Height estimation is a key component of 3D scene understanding and has long held a significant position in the domains of remote sensing and computer vision. Initial research predominantly focused on stereo or multi-view image matching. With the advent of large-scale depth datasets, the focus has shifted to estimating distance information from monocular 2D images using supervised learning. Monocular height estimation approaches can generally be categorized into three types: methods based on handcrafted features, methods using convolutional neural networks (CNNs), and methods based on attention mechanisms.

monocular height estimation

multilevel interaction

local attention

1. Introduction

With the advancement of high-resolution sensors in the field of remote sensing [1][2], the horizontal pixel resolution (the horizontal distance represented by a single pixel) of the visible light band for ground observation from satellite/aerial platforms has reached the level of decimeters or even centimeters. This has made various downstream applications such as fine-grained urban 3D reconstruction [3], high-precision mapping [4], and mixed reality (MR) scene interaction [5] increasingly achievable. Among these applications, ground surface height values, represented as a Digital Surface Model (DSM), serve as primary supporting data, and their acquisition methods have been a focal point of research [6].

Numerous studies on height estimation have been published, among which methods based on LiDAR demonstrate the highest measurement accuracy [7]. These techniques measure the time difference between wave emission and reception to determine the distance to each point, and then apply further adjustments to generate height values. However, the high power consumption and costly equipment required for LiDAR significantly constrain its application in satellite/UAV scenarios. Another prevalent method is stereophotogrammetry, as used by Nemmaoui et al. [8], which exploits prior knowledge of the perspective differences between multiple images or viewing angles to fit height information. Furthermore, Hoja et al. [9] and Xiaotian et al. [10] employed multisensor fusion methods combining stereoscopic views with SAR interferometry, further enhancing the quality of the estimates. While stereophotogrammetry significantly reduces both power consumption and equipment costs compared to LiDAR, its high computational demands pose challenges for sensor-side deployment and real-time computation.
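The time-of-flight principle behind LiDAR ranging can be illustrated with a minimal sketch. The function names are hypothetical, and the height conversion assumes a pulse fired straight down from a known sensor altitude, which ignores the scan-angle and attitude corrections ("further adjustments") that a real pipeline applies.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, metres per second


def tof_range_m(round_trip_s: float) -> float:
    """One-way distance to the target from the round-trip pulse travel time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the surface and back, hence the division by two.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def nadir_surface_height_m(sensor_altitude_m: float, round_trip_s: float) -> float:
    """Surface height for a pulse fired straight down (simplifying assumption)."""
    return sensor_altitude_m - tof_range_m(round_trip_s)


if __name__ == "__main__":
    # A 6 microsecond round trip observed from a sensor flying at 1000 m.
    print(round(nadir_surface_height_m(1000.0, 6e-6), 3))
```

Because the range is linear in the measured time, timing errors translate directly into height errors, which is one reason LiDAR hardware is demanding in terms of cost and power.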

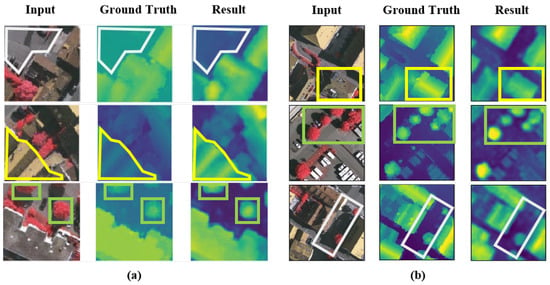

Recently, numerous monocular deep learning algorithms have been proposed that, when combined with AI-capable chips, can achieve a balance between computational power and cost (latency < 100 ms, chip cost < USD 50, chip power consumption < 3 W). Among these, convolutional neural network (CNN) models, widely applied in the field of computer vision, have begun to be used in monocular height estimation [11]. CNNs excel at reconstructing details such as edges and offer controllable computational complexity. However, as monocular height estimation is inherently an ill-posed problem [12], it demands more advanced information extraction. CNNs use a fixed receptive field for information extraction, which makes it difficult for them to exchange information across the entire image. This limitation often leads to issues such as instance-level height deviations (an overall deviation in the height predicted for an individual, homogeneous land parcel), as shown in Figure 1a, which restrict the large-scale application of monocular height estimation.

Figure 1. Typical problems of existing height estimation methods. (a): Instance-level height deviation caused by fixed receptive field. (b): Edge ambiguity (gray box: road; yellow box: building; green box: tree).

The advent of transformers and their attention mechanisms [13], which capture long-distance feature dependencies, has significantly improved the inadequate whole-image information interaction common to CNN models. Transformers have been progressively applied in remote sensing tasks such as object detection [14] and semantic segmentation [15]. Among these developments, the Vision Transformer (ViT) [16] was an early adopter of the transformer approach in the visual domain, yet it encounters two primary issues. The first is high computational complexity and difficulty in model convergence: ViT, following the transformer design used in natural language processing, computes attention among all tokens (small segments of an image). Because height prediction is a dense generation task that requires a height prediction for every pixel, this leads to excessively high overall computational complexity and makes the model hard to converge. The second is that the edge reconstruction quality of the original ViT is mediocre. The transformer's attention mechanism lacks the inductive bias inherent in CNNs. Although ViT achieves a better average relative error (REL) by reducing overall instance errors, it underperforms CNN models in the quality of edge reconstruction between instances, resulting in edge blurring like that shown in Figure 1b.
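To make the cost of attention among all tokens concrete, the following minimal sketch counts tokens and token pairs for global self-attention. The function name and the image and patch sizes are illustrative assumptions, not values taken from the cited works.

```python
from typing import Tuple


def attention_pairs(height: int, width: int, patch: int) -> Tuple[int, int]:
    """Return (token count, token-pair count) for global self-attention."""
    if height % patch or width % patch:
        raise ValueError("image size must be divisible by the patch size")
    tokens = (height // patch) * (width // patch)
    # Every token attends to every token, so the score matrix has tokens**2 entries.
    return tokens, tokens * tokens


if __name__ == "__main__":
    for patch in (16, 8, 4):
        tokens, pairs = attention_pairs(512, 512, patch)
        print(f"patch={patch:2d}  tokens={tokens:6d}  pairs={pairs}")
```

Halving the patch size quadruples the number of tokens and multiplies the number of attention scores by sixteen, which is why dense per-pixel prediction is expensive with global attention.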

2. Overview of Height Estimation

Height estimation is a key component of 3D scene understanding [17] and has long held a significant position in the domains of remote sensing and computer vision. Initial research predominantly focused on stereo or multi-view image matching. These methods [18] typically relied on geometric relationships for keypoint matching between two or more images, followed by triangulation with camera pose data to compute depth information. Recently, with the advent of large-scale depth datasets [19], the research focus has shifted to estimating distance information from monocular 2D images using supervised learning. Present monocular height estimation approaches can generally be categorized into three types [20][21][22][23]: methods based on handcrafted features, methods using convolutional neural networks (CNNs), and methods based on attention mechanisms. Because the datasets contain many types of features, handcrafted features alone struggle to fit the distributions of different datasets, and their effectiveness is generally moderate.
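The triangulation step used by stereo matching methods can be sketched for the simplest case. This example assumes a rectified image pair from two identical pinhole cameras with a known baseline, which is a textbook simplification rather than the procedure of any cited work; the function name and numbers are hypothetical.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a matched keypoint in a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Focal length 1000 px, baseline 0.5 m, keypoint shifted by 20 px between views.
    print(depth_from_disparity(1000.0, 0.5, 20.0))  # 25.0
```

Depth is inversely proportional to disparity, so distant surfaces with small disparities are the most sensitive to matching errors.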

3. Height Estimation Based on Manual Features

Conditional random fields (CRF) and Markov random fields (MRF) have been primarily utilized by researchers to model the local and global structures of images. Recognizing that local features alone are insufficient for predicting depth values, Batra et al. [24] simulated the relationships between adjacent regions and used CRF and MRF to model these structures. To capture global features beyond local ones, Saxena et al. [25] computed features of neighbouring blocks and applied MRF and Laplacian models for area depth estimation. In another study, Saxena et al. [26] introduced superpixels as replacements for pixels during the training process, enhancing the depth estimation approach. Liu et al. [27] previously formulated depth estimation as a discrete–continuous optimization problem, with the discrete component encoding relationships between adjacent pixels and the continuous part representing the depth of superpixels. These variables were interconnected in a CRF for predicting depth values. Lastly, Zhuo et al. [28] introduced a hierarchical approach that combines local depth, intermediate structures, and global structures for depth estimation.

4. Height Estimation Based on CNN

Convolutional neural networks (CNNs) have been extensively used in recent years across various fields of computer vision, including scene classification, semantic segmentation, and object detection [29][30]. Among these applications, ResNet [31] is often employed as the backbone of models. IMG2DSM [32] was an early work to introduce an adversarial loss function to improve the synthesis of Digital Surface Models (DSM), using conditional generative adversarial networks to transform images into DSM elevations. Zhang et al. [33] improved object feature abstraction at various scales through multipath fusion networks for multiscale feature extraction. Li et al. [34] divided height values into intervals with incrementally increasing spacing and reframed the regression problem as an ordinal regression problem, using an ordinal loss for network training. They also developed a postprocessing technique to convert the predicted height maps of individual blocks into seamless height maps. Carvalho et al. [35] conducted in-depth research on various loss functions for depth regression. They combined an encoder–decoder architecture with adversarial loss and proposed D3Net. Zhu et al. [36] focused on reducing processing time and eliminated the fully connected layers before the upsampling process in the Visual Geometry Group (VGG) network. Kuznietsov et al. [12] enhanced network performance by using stereo images with sparse ground truth depths. Their loss function harnessed the predicted depth, the reference depth, and the differences between the image and the generated distorted image. Xiong et al. [37] and Tao et al. [38] attempted to improve existing deformable convolutions by introducing "scaling" mechanisms and authentic deformation mechanisms into the convolutions, respectively, with the aim of enabling convolutions to adapt to the extraction of multiscale information. In conclusion, convolution-based height estimation methods achieve basic dataset fitting, but they still exhibit significant instance-level height prediction deviations due to the limitation of a fixed receptive field.
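The interval scheme attributed to Li et al. [34] above, with spacing that increases with height, can be illustrated with a minimal sketch. The log-uniform thresholds used here are an assumption borrowed from common ordinal depth regression practice; the source does not state the exact formula, and all function names are hypothetical.

```python
import math
from typing import List


def increasing_thresholds(h_min: float, h_max: float, num_bins: int) -> List[float]:
    """num_bins + 1 interval edges, uniform in log space so widths grow with height."""
    if not (0 < h_min < h_max) or num_bins < 1:
        raise ValueError("need 0 < h_min < h_max and at least one bin")
    log_min, log_max = math.log(h_min), math.log(h_max)
    return [math.exp(log_min + (log_max - log_min) * i / num_bins)
            for i in range(num_bins + 1)]


def ordinal_label(height: float, edges: List[float]) -> int:
    """Index of the interval containing height, clamped to the valid range."""
    # Count how many interior thresholds the height has reached.
    return sum(1 for edge in edges[1:-1] if height >= edge)


def ordinal_targets(label: int, num_bins: int) -> List[int]:
    """Binary targets, one per interior threshold: 1 if the height reached it."""
    return [1 if k < label else 0 for k in range(num_bins - 1)]


if __name__ == "__main__":
    edges = increasing_thresholds(1.0, 16.0, 4)
    label = ordinal_label(5.0, edges)
    print([round(e, 3) for e in edges], label, ordinal_targets(label, 4))
```

Each binary target answers the question "is the height above this threshold?", which is what turns the regression into an ordinal problem.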

5. Attention and Transformer in Remote Sensing

5.1. Attention Mechanism and Transformer

The attention mechanism [13], an information processing method that simulates the human visual system, allows for the assignment of different weights to elements in a learning input sequence. By learning to assign higher weights to more essential elements, the attention mechanism enables the model to focus on critical information, thus improving its performance in processing sequential information. In computer vision, this mechanism directs the model’s focus toward key areas of an image, enhancing its performance. It can be seen as a simulation of the human process of image perception, which involves understanding the entire image by focusing on important parts.
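The weighting described above can be sketched as scaled dot-product attention for a single query, following the general formulation in [13]. This is a minimal standard-library illustration with hypothetical function names, not an efficient or batched implementation.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Normalize scores into positive weights that sum to one."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query: Sequence[float],
           keys: Sequence[Sequence[float]],
           values: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Return (attention weights, weighted sum of values) for one query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


if __name__ == "__main__":
    weights, output = attend([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], [[10.0], [20.0]])
    print(weights, output)
```

The key most similar to the query receives the largest weight, so its value dominates the output; this is the sense in which the model "focuses" on essential elements.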

Recently, attention mechanisms have been incorporated into computer vision, inspired by the exceptional performance of Transformer models in natural language processing (NLP) [13]. These models, based on self-attention mechanisms [39], establish global dependencies in the input sequence, enabling better handling of sequential information. The format of input sequences in computer vision, however, varies from NLP and includes vectors, single-channel feature maps, multichannel feature maps, and feature maps from different sources. Consequently, various forms of attention mechanisms, such as spatial attention [40], local attention [41], cross-channel attention [42], and cross-modal attention [13][43], have been adapted for visual sequence modeling.

Concurrently, various visual transformer methods have been proposed. The Vision Transformer (ViT) [16] partitions an image into blocks and computes attention between these block vectors. The Swin Transformer [44] significantly reduces computational burden by establishing three different attention computation scales and optimizes local modeling across various visual tasks.
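The block partitioning that ViT applies before computing attention can be sketched as follows. The example uses a single-channel image stored as nested lists; the function name is hypothetical, and the learned linear projection that ViT applies to each flattened block is omitted.

```python
from typing import List, Sequence


def split_into_patches(image: Sequence[Sequence[float]], patch: int) -> List[List[float]]:
    """Split a 2D image into non-overlapping patch x patch blocks, each flattened."""
    rows, cols = len(image), len(image[0])
    if rows % patch or cols % patch:
        raise ValueError("image size must be divisible by the patch size")
    patches = []
    for r in range(0, rows, patch):          # top-left corner of each block
        for c in range(0, cols, patch):
            patches.append([image[r + i][c + j]
                            for i in range(patch) for j in range(patch)])
    return patches


if __name__ == "__main__":
    image = [[4 * r + c for c in range(4)] for r in range(4)]  # values 0..15
    for token in split_into_patches(image, 2):
        print(token)
```

Each flattened block becomes one token, so the sequence length equals the number of blocks rather than the number of pixels.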

5.2. Transformers Applied in Remote Sensing

Recently, numerous remote sensing tasks have begun incorporating or optimizing transformer networks. Yang et al. [45] used an optimized Vision Transformer (ViT) network for hyperspectral image classification, adjusting the sampling method of ViT to improve local modeling. In object detection, Zhao et al. [14] integrated additional classification tokens for synthetic aperture radar (SAR) images to enhance ViT-based detection accuracy. Chen et al. [46] introduced the MPViT network, combining scene classification, super-resolution, and instance segmentation to significantly improve building extraction. In the realm of self-supervised learning, He et al. [47] were among the first to integrate a pyramid structure into ViT, broadening the application of self-supervised learning to optical remote sensing image interpretation.

In the context of monocular height estimation, Sun et al. [48] built upon Adabins by dividing the decoder into two branches, one for generating classified height values and another for probability map regression, simplifying the complexity of reconstructing both local semantics and semantic modeling in a single branch. The SFFDE model incorporated an Elevation Semantic Globalization (ESG) module, using self-attention between the encoder and decoder to extract global semantics and reduce edge blurring. However, due to the sequential use of local modeling (CNN, encoding), global modeling (attention, ESG), and local modeling (CNN, decoding), a dedicated fusion module to address the coupling problem of features with different granularities is lacking.
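The two-branch idea described above for Sun et al. [48], with one branch producing candidate height values and the other a per-pixel probability over them, can be illustrated with a minimal sketch. The combination rule shown here (a probability-weighted sum of bin centers, in the style of Adabins) is an assumption, as the source does not give the exact formulation; the function names and numbers are hypothetical.

```python
from typing import List, Sequence


def bin_centers(widths: Sequence[float], h_min: float, h_max: float) -> List[float]:
    """Centers of adjacent height bins whose relative widths are given."""
    total = sum(widths)
    edges = [h_min]
    for w in widths:
        # Each bin takes its share of the full height range.
        edges.append(edges[-1] + (h_max - h_min) * w / total)
    return [(lo + hi) / 2.0 for lo, hi in zip(edges, edges[1:])]


def expected_height(centers: Sequence[float], probs: Sequence[float]) -> float:
    """Per-pixel height as the probability-weighted sum of bin centers."""
    if len(centers) != len(probs):
        raise ValueError("one probability per bin is required")
    return sum(c * p for c, p in zip(centers, probs))


if __name__ == "__main__":
    centers = bin_centers([1.0, 1.0, 2.0], 0.0, 40.0)
    print(centers, expected_height(centers, [0.2, 0.3, 0.5]))
```

Separating the two outputs lets one branch describe which heights occur in the scene while the other decides, pixel by pixel, which of those heights applies.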

Overall, in the field of remote sensing, existing methods primarily expand the ViT structure by adding local modeling modules or extending supervision types to adapt to the multiscale nature of remote sensing images. This potentially complicates the modeling in monocular height estimation tasks.

References

- Benediktsson, J.A.; Chanussot, J.; Moon, W.M. Very high-resolution remote sensing: Challenges and opportunities. Proc. IEEE 2012, 100, 1907–1910.

- Sun, X.; Wang, P.; Yan, Z.; Xu, F.; Wang, R.; Diao, W.; Chen, J.; Li, J.; Feng, Y.; Xu, T.; et al. FAIR1M: A benchmark dataset for fine-grained object recognition in high-resolution remote sensing imagery. ISPRS J. Photogramm. Remote. Sens. 2022, 184, 116–130.

- Zhao, L.; Wang, H.; Zhu, Y.; Song, M. A review of 3D reconstruction from high-resolution urban satellite images. Int. J. Remote Sens. 2023, 44, 713–748.

- Mahabir, R.; Croitoru, A.; Crooks, A.T.; Agouris, P.; Stefanidis, A. A critical review of high and very high-resolution remote sensing approaches for detecting and mapping slums: Trends, challenges and emerging opportunities. Urban Sci. 2018, 2, 8.

- Coronado, E.; Itadera, S.; Ramirez-Alpizar, I.G. Integrating Virtual, Mixed, and Augmented Reality to Human–Robot Interaction Applications Using Game Engines: A Brief Review of Accessible Software Tools and Frameworks. Appl. Sci. 2023, 13, 1292.

- Takaku, J.; Tadono, T.; Kai, H.; Ohgushi, F.; Doutsu, M. An Overview of Geometric Calibration and DSM Generation for ALOS-3 Optical Imageries. In Proceedings of the 2021 IEEE International Geoscience and Remote Sensing Symposium IGARSS, Brussels, Belgium, 11–16 July 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 383–386.

- Estornell, J.; Ruiz, L.; Velázquez-Martí, B.; Hermosilla, T. Analysis of the factors affecting LiDAR DTM accuracy in a steep shrub area. Int. J. Digit. Earth 2011, 4, 521–538.

- Nemmaoui, A.; Aguilar, F.J.; Aguilar, M.A.; Qin, R. DSM and DTM generation from VHR satellite stereo imagery over plastic covered greenhouse areas. Comput. Electron. Agric. 2019, 164, 104903.

- Hoja, D.; Reinartz, P.; Schroeder, M. Comparison of DEM generation and combination methods using high resolution optical stereo imagery and interferometric SAR data. Rev. Française Photogramm. Télédétect. 2007, 2006, 89–94.

- Xiaotian, S.; Guo, Z.; Xia, W. High-precision DEM production for spaceborne stereo SAR images based on SIFT matching and region-based least squares matching. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2012, 39, 49–53.

- Li, Q.; Zhu, J.; Liu, J.; Cao, R.; Li, Q.; Jia, S.; Qiu, G. Deep learning based monocular depth prediction: Datasets, methods and applications. arXiv 2020, arXiv:2011.04123.

- Kuznietsov, Y.; Stuckler, J.; Leibe, B. Semi-supervised deep learning for monocular depth map prediction. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 6647–6655.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30, 1–11.

- Zhao, S.; Luo, Y.; Zhang, T.; Guo, W.; Zhang, Z. A domain specific knowledge extraction transformer method for multisource satellite-borne SAR images ship detection. ISPRS J. Photogramm. Remote Sens. 2023, 198, 16–29.

- He, Q.; Sun, X.; Diao, W.; Yan, Z.; Yin, D.; Fu, K. Transformer-induced graph reasoning for multimodal semantic segmentation in remote sensing. ISPRS J. Photogramm. Remote Sens. 2022, 193, 90–103.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.

- Wojek, C.; Walk, S.; Roth, S.; Schindler, K.; Schiele, B. Monocular visual scene understanding: Understanding multi-object traffic scenes. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 35, 882–897.

- Goetz, J.; Brenning, A.; Marcer, M.; Bodin, X. Modeling the precision of structure-from-motion multi-view stereo digital elevation models from repeated close-range aerial surveys. Remote Sens. Environ. 2018, 210, 208–216.

- Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets robotics: The kitti dataset. Int. J. Robot. Res. 2013, 32, 1231–1237.

- Li, X.; Wen, C.; Wang, L.; Fang, Y. Geometry-aware segmentation of remote sensing images via joint height estimation. IEEE Geosci. Remote Sens. Lett. 2021, 19, 1–5.

- Mou, L.; Zhu, X.X. IM2HEIGHT: Height estimation from single monocular imagery via fully residual convolutional-deconvolutional network. arXiv 2018, arXiv:1802.10249.

- Yu, D.; Ji, S.; Liu, J.; Wei, S. Automatic 3D building reconstruction from multi-view aerial images with deep learning. ISPRS J. Photogramm. Remote Sens. 2021, 171, 155–170.

- Mahdi, E.; Ziming, Z.; Xinming, H. Aerial height prediction and refinement neural networks with semantic and geometric guidance. arXiv 2020, arXiv:2011.10697.

- Batra, D.; Saxena, A. Learning the right model: Efficient max-margin learning in laplacian crfs. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Washington, DC, USA, 16–21 June 2012; IEEE: Piscataway, NJ, USA, 2012; pp. 2136–2143.

- Saxena, A.; Chung, S.; Ng, A. Learning depth from single monocular images. Adv. Neural Inf. Process. Syst. 2005, 18, 1–16.

- Saxena, A.; Schulte, J.; Ng, A.Y. Depth Estimation Using Monocular and Stereo Cues. In Proceedings of the IJCAI, Hyderabad, India, 6–12 January 2007; Volume 7, pp. 2197–2203.

- Liu, M.; Salzmann, M.; He, X. Discrete-continuous depth estimation from a single image. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 716–723.

- Zhuo, W.; Salzmann, M.; He, X.; Liu, M. Indoor scene structure analysis for single image depth estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 614–622.

- Zhang, Y.; Yan, Z.; Sun, X.; Lu, X.; Li, J.; Mao, Y.; Wang, L. Bridging the Gap Between Cumbersome and Light Detectors via Layer-Calibration and Task-Disentangle Distillation in Remote Sensing Imagery. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–18.

- Zhang, Y.; Yan, Z.; Sun, X.; Diao, W.; Fu, K.; Wang, L. Learning efficient and accurate detectors with dynamic knowledge distillation in remote sensing imagery. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–19.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 770–778.

- Ghamisi, P.; Yokoya, N. IMG2DSM: Height simulation from single imagery using conditional generative adversarial net. IEEE Geosci. Remote Sens. Lett. 2018, 15, 794–798.

- Zhang, Y.; Chen, X. Multi-path fusion network for high-resolution height estimation from a single orthophoto. In Proceedings of the 2019 IEEE International Conference on Multimedia & Expo Workshops (ICMEW), Shanghai, China, 8–12 July 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 186–191.

- Li, X.; Wang, M.; Fang, Y. Height estimation from single aerial images using a deep ordinal regression network. IEEE Geosci. Remote Sens. Lett. 2020, 19, 1–5.

- Carvalho, M.; Le Saux, B.; Trouvé-Peloux, P.; Almansa, A.; Champagnat, F. On regression losses for deep depth estimation. In Proceedings of the 2018 25th IEEE International Conference on Image Processing (ICIP), Athens, Greece, 7–10 October 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 2915–2919.

- Zhu, J.; Ma, R. Real-Time Depth Estimation from 2D Images. 2016. Available online: http://cs231n.stanford.edu/reports/2016/pdfs/407_Report.pdf (accessed on 1 December 2023).

- Xiong, Z.; Huang, W.; Hu, J.; Zhu, X.X. THE benchmark: Transferable representation learning for monocular height estimation. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5620514.

- Tao, H. A label-relevance multi-direction interaction network with enhanced deformable convolution for forest smoke recognition. Expert Syst. Appl. 2024, 236, 121383.

- Shaw, P.; Uszkoreit, J.; Vaswani, A. Self-attention with relative position representations. arXiv 2018, arXiv:1803.02155.

- Jaderberg, M.; Simonyan, K.; Zisserman, A. Spatial transformer networks. arXiv 2015, arXiv:1506.02025.

- Luong, M.T.; Pham, H.; Manning, C.D. Effective approaches to attention-based neural machine translation. arXiv 2015, arXiv:1508.04025.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7132–7141.

- Huang, Z.; Wang, X.; Huang, L.; Huang, C.; Wei, Y.; Liu, W. Ccnet: Criss-cross attention for semantic segmentation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 603–612.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 10012–10022.

- Yang, J.; Du, B.; Zhang, L. From center to surrounding: An interactive learning framework for hyperspectral image classification. ISPRS J. Photogramm. Remote Sens. 2023, 197, 145–166.

- Chen, S.; Ogawa, Y.; Zhao, C.; Sekimoto, Y. Large-scale individual building extraction from open-source satellite imagery via super-resolution-based instance segmentation approach. ISPRS J. Photogramm. Remote Sens. 2023, 195, 129–152.

- He, Q.; Sun, X.; Yan, Z.; Wang, B.; Zhu, Z.; Diao, W.; Yang, M.Y. AST: Adaptive Self-supervised Transformer for optical remote sensing representation. ISPRS J. Photogramm. Remote Sens. 2023, 200, 41–54.

- Sun, W.; Zhang, Y.; Liao, Y.; Yang, B.; Lin, M.; Zhai, R.; Gao, Z. Rethinking Monocular Height Estimation From a Classification Task Perspective Leveraging the Vision Transformer. IEEE Geosci. Remote Sens. Lett. 2022, 19, 1–5.

