Micucci, M., & Iula, A. (2023, December 07). Fusion of 3D Ultrasound Hand-Geometry and Palmprint. In Encyclopedia. https://encyclopedia.pub/entry/52501


Multimodal biometric systems are used in a wide variety of applications where high security is required. Compared to unimodal systems, they offer better universality and recognition rates. Among the available acquisition technologies, ultrasound has great potential in high-security access applications because it acquires 3D information about the human body and can verify the liveness of the sample.

Keywords: multimodal systems; palmprint; hand-geometry; 3D ultrasound; fusion

1. Introduction

In recent years, biometric recognition has gained popularity in fields where personal security is required, replacing classical authentication methods based on PINs and passwords. Biometric characteristics are mainly employed in commercial applications such as smartphones and access control, as well as in government and forensic applications.

Biometric systems based on the combination of two or more characteristics, referred to as multimodal systems, have several advantages over their unimodal counterparts: they improve recognition rate and universality, and they can authenticate users for whom one of the individual biometric characteristics cannot be detected [1][2][3]. In particular, multimodal systems based on a single sensor are attracting interest because they are cost-effective and more acceptable to users [4].

Multimodal systems often combine human hand characteristics such as hand geometry and palmprint, because both are universal, invariant, acceptable and collectable [5][6].

Over the years, several technologies have been tested for acquiring these two hand modalities. The most commonly employed are optical and infrared [7]. Optical systems are mainly based on CCD cameras and contactless techniques [8][9][10]: CCD cameras collect high-quality images but are limited by the bulkiness of the device, while contactless acquisition improves user acceptability and personal hygiene but is not very reliable when image quality is low. Infrared systems use both Near-Infrared (NIR) and Far-Infrared (FIR) radiation [11][12]. The main limitation of these technologies is that they capture only information present on the external skin surface.

Ultrasound is a technology employed in several fields including sonar [13], motors and actuators [14], Non-Destructive Evaluations (NDE) [15], Indoor Positioning Systems (IPS) [16], medical imaging [17] and therapy [18], and biometric systems [19]. The capability of ultrasound to penetrate the human body is very useful in biometrics because it allows 3D information on the features to be obtained, leading to a more accurate description of the biometric characteristic and hence improved recognition accuracy [10]. Moreover, ultrasound can effectively detect liveness during the acquisition phase by simply checking vein pulsing, which makes the system very difficult to counterfeit, and it is not influenced by oil or ink stains on the skin or by environmental changes in light or temperature. Ultrasound technology has been widely investigated in the biometric field, particularly for the extraction of fingerprint features [20][21], and ultrasound fingerprint sensors have recently been integrated into smartphones [22]. Other characteristics, including hand geometry [23][24], palmprint [25][26][27], and hand veins [28][29][30], have also been investigated.

2. Image Acquisition and Feature Extraction

Ultrasound image acquisition of the human hand [24] is performed with a system composed of an ultrasound scanner [31], a linear array of 192 elements and a numerical pantograph, which controls the movement of the probe on the region of interest (ROI).

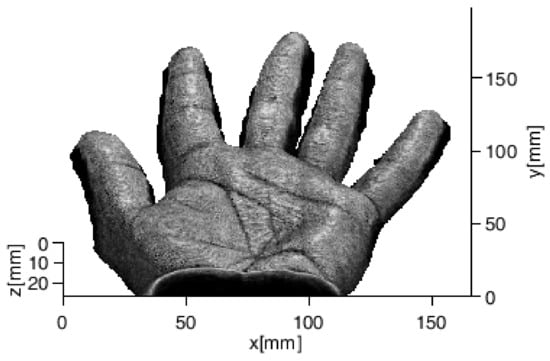

The acoustic coupling between the human body and the probe is created by submerging both in a tank of water. A three-dimensional image is acquired by moving the probe along the elevation direction; during the motion, several B-mode images are collected and regrouped in order to obtain a volume defined by an 8-bit grayscale 3D matrix (416 × 500 × 68 voxels). Figure 1 shows an example of a 3D render of the whole human hand. The resolution of the image is about 400 μm.

Figure 1. Example of 3D rendered human hand.
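The regrouping of B-mode images into a volume can be illustrated with a minimal NumPy sketch. The function name `stack_bmode_frames`, the axis ordering (lateral, elevation, depth) and the synthetic frames are assumptions made only for illustration; the source states the final matrix size (416 × 500 × 68, 8-bit) but not how the axes are arranged.

```python
import numpy as np

def stack_bmode_frames(frames):
    """Stack 2D B-mode frames into one 8-bit volume.

    Assumption: each frame is (lateral, depth) and one frame is acquired
    per probe position along the elevation direction.
    """
    frames = [np.asarray(f) for f in frames]
    if not frames:
        raise ValueError("at least one B-mode frame is required")
    if any(f.shape != frames[0].shape for f in frames):
        raise ValueError("all B-mode frames must share the same shape")
    # New axis 1 indexes the probe position along the elevation direction.
    return np.stack(frames, axis=1).astype(np.uint8)

# Synthetic stand-in for the acquired frames (random grey levels).
rng = np.random.default_rng(0)
frames = [rng.integers(0, 256, size=(416, 68)).astype(np.uint8) for _ in range(500)]
volume = stack_bmode_frames(frames)
print(volume.shape, volume.dtype)
```

With this layout, `volume[:, k, :]` returns the k-th B-mode frame unchanged.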

Subsequently, an interpolation is performed along the z-axis and 2D renderings are extracted from the volume at various depths: the external surface of the hand is first projected on the XY plane to obtain the shallowest 2D image. Then, the surface is translated along the z-axis beneath the skin and projected again on the XY plane, yielding 2D images at increasing depths.

Three-dimensional information is taken into account by collecting fourteen 2D images with a step of 50 μm: the shallowest image is taken at 100 μm and the deepest at 750 μm. Next, 2D and 3D features for both hand geometry and palmprint are extracted from the 2D renderings: for hand geometry, they consist of hand measurements including the size of the palm and the lengths and widths of the fingers, while for palmprint they are the principal lines and main wrinkles.
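The depth schedule and the surface-following extraction can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: the surface is located with a simple intensity threshold, the volume is assumed to be already interpolated so that one voxel along z corresponds to the 50 μm step, and the names `depth_schedule_um`, `find_surface` and `render_at_depth` are hypothetical.

```python
import numpy as np

def depth_schedule_um(first_um=100, step_um=50, count=14):
    """Depths of the 2D renderings: 100, 150, ..., 750 micrometres."""
    return [first_um + k * step_um for k in range(count)]

def find_surface(volume, threshold):
    """Index along z of the first voxel brighter than the threshold.

    Assumption: the skin surface is the first strong echo along z.
    np.argmax returns 0 for columns where no voxel exceeds the threshold.
    """
    return np.argmax(volume > threshold, axis=2)

def render_at_depth(volume, surface, offset_voxels):
    """Sample the volume at a fixed voxel offset beneath the surface."""
    z = np.clip(surface + offset_voxels, 0, volume.shape[2] - 1)
    return np.take_along_axis(volume, z[..., None], axis=2)[..., 0]

# Synthetic volume: empty above z = 3, grey level 100 + z below the surface.
volume = np.zeros((4, 5, 40), dtype=np.uint8)
volume[:, :, 3:] = 100 + np.arange(3, 40, dtype=np.uint8)

depths = depth_schedule_um()
surface = find_surface(volume, threshold=50)
# Assumed 50 um per voxel along z after interpolation.
renders = np.stack([render_at_depth(volume, surface, d // 50) for d in depths])
print(depths[0], depths[-1], renders.shape)
```

The schedule confirms the arithmetic in the text: 100 μm plus thirteen steps of 50 μm gives 750 μm, for fourteen renderings in total.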

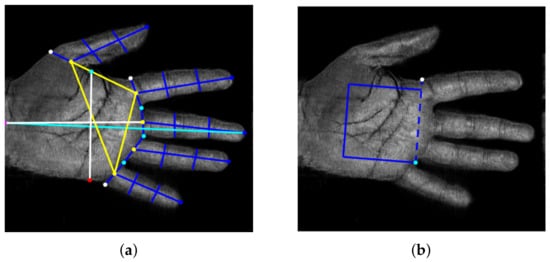

The procedure employed to extract the 2D templates consists of a median filter to reduce noise, binarization with a suitable threshold, and calculation of the Euclidean distance between a reference point, taken as the middle point on the wrist boundary, and each point on the hand contour [24]. Then, several feature points are extracted, shown in Figure 2a with different colours: finger peaks, a middle point, valleys between fingers, other finger base points, and an extra point [32]. From these points, 26 distances are calculated to define a 2D template. Finally, the 2D templates at different depths are combined to obtain the 3D template; three combinations were considered:

Figure 2. (a) Feature points extracted from the hand shape and the 26 distances defining the 2D template; (b) ROI extraction for palmprint from two feature points of (a).

- Mean features (MF): each length computed as the mean value of the lengths obtained at each depth;

- Weighted Mean features (WMF): each length represented by a weighted mean of the lengths obtained at various depths;

- Global features (GF): all lengths computed at every depth.
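The three combinations can be sketched in a few lines of NumPy, assuming the 2D templates are stored as an array with one row per depth and 26 distances per row. The weights used for WMF are not given in the text, so the linearly decreasing weights below are purely hypothetical, as are the function names and the synthetic data.

```python
import numpy as np

def mean_features(templates):
    """MF: mean of each distance over all depths."""
    return templates.mean(axis=0)

def weighted_mean_features(templates, weights):
    """WMF: weighted mean of each distance over all depths."""
    return np.average(templates, axis=0, weights=weights)

def global_features(templates):
    """GF: all distances at every depth, concatenated into one vector."""
    return templates.reshape(-1)

# Synthetic example: 14 depths x 26 distances, growing slightly with depth.
base = np.linspace(20.0, 120.0, 26)
templates = base + 0.1 * np.arange(14)[:, None]

mf = mean_features(templates)
# Hypothetical weights favouring shallow depths; the source does not specify them.
wmf = weighted_mean_features(templates, weights=np.linspace(1.0, 0.5, 14))
gf = global_features(templates)
print(mf.shape, wmf.shape, gf.shape)
```

MF and WMF keep the template at 26 values, whereas GF grows to 14 × 26 = 364 values, which trades compactness for depth-resolved information.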

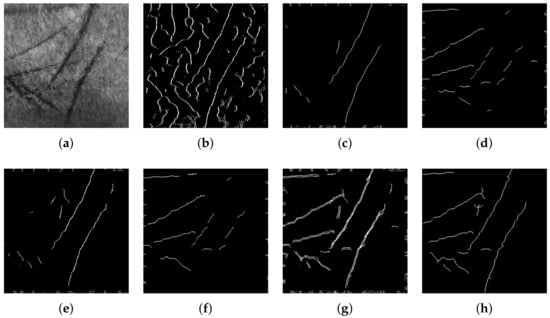

Regarding palmprint, a palm ROI is first extracted by defining a square as indicated in Figure 2b; this guarantees the repeatability of the procedure. After some preprocessing operations, 2D features are extracted with a classical line-based procedure [27], as shown in Figure 3. The image is scanned along four directions (0°, 90°, 180°, 270°). Along each direction, the edges of the principal lines are detected by calculating intensity variations through the first derivative. Short lines and isolated points are then filtered out by using a Laplacian filter. The four images obtained are combined with a logical OR operation. Finally, morphological operations are executed: a closing operation to fill holes and small concavities, a thinning operation, and a pruning operation to remove short lines. The result is the 2D template.

Figure 3. Palmprint feature extraction procedure step by step: (a) 2D grayscale image at 350 μm; (b) image after detection of edges; (c) feature extraction along direction 0°; (d) 90°; (e) 180°; (f) 270°; (g) logical OR of the images after feature extraction along the four directions; (h) final 2D template.
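The directional scan and the OR combination described above can be sketched as follows. This is a simplified illustration, not the procedure of [27]: the first derivative is approximated by a difference between neighbouring pixels, an edge is marked where intensity rises by more than a threshold in the scan direction, and the Laplacian filtering and morphological steps are omitted. The angle convention, the threshold and the function name `directional_edge_maps` are assumptions.

```python
import numpy as np

def directional_edge_maps(image, threshold):
    """Boolean edge map for each scan direction (0, 90, 180, 270 degrees)."""
    img = np.asarray(image, dtype=np.int32)  # signed, so differences can be negative
    d_col = np.zeros_like(img)
    d_row = np.zeros_like(img)
    d_col[:, 1:] = img[:, 1:] - img[:, :-1]  # intensity change moving right
    d_row[1:, :] = img[1:, :] - img[:-1, :]  # intensity change moving down
    return {
        0: d_col > threshold,     # rise while scanning left to right
        90: -d_row > threshold,   # rise while scanning bottom to top
        180: -d_col > threshold,  # rise while scanning right to left
        270: d_row > threshold,   # rise while scanning top to bottom
    }

# Synthetic ROI: uniform background with one bright horizontal line.
roi = np.full((7, 7), 50, dtype=np.uint8)
roi[3, :] = 200

maps = directional_edge_maps(roi, threshold=100)
combined = np.logical_or.reduce(list(maps.values()))
print(combined.astype(int))
```

In this example the 270° scan marks the upper edge of the line (row 3) and the 90° scan marks the lower edge (row 4), so the OR of the four maps keeps both sides of the line.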

Subsequently, the 2D templates at different depths are combined by a dedicated algorithm to obtain a 3D template. The algorithm is based on two operations that are executed iteratively: the first analysed 2D template 𝑇ᵢ is dilated with a structuring element of dimension 𝛽 and stored in a 3D matrix; then, a logical AND is performed between the current dilated template and the 2D template at the adjacent depth level (𝑇ᵢ₋₁ or 𝑇ᵢ₊₁) [33][34], and the result is stored in the 3D matrix. The dilation accounts for the fact that, as the under-skin depth increases, the palm trait may not be orthogonal to the XY plane, while the AND operation filters out spurious traits in each of the two images. The dimension 𝛽 of the structuring element affects the quality of the results: if it is too high, the 3D template may contain spurious traits, while if it is too low, some principal information could be eliminated.
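One plausible reading of this iterative dilate-and-AND scheme is sketched below. It is not the implementation of [33][34]: the square structuring element, the choice to dilate each AND result before comparing it with the next depth, and the per-pixel count of depths at which a trait survives are all assumptions, and the names `dilate`, `build_3d_template` and `trait_persistence` are hypothetical.

```python
import numpy as np

def dilate(mask, beta):
    """Binary dilation with a beta x beta square structuring element (beta odd)."""
    if beta % 2 == 0:
        raise ValueError("beta must be odd")
    mask = np.asarray(mask, dtype=bool)
    r = beta // 2
    padded = np.pad(mask, r, mode="constant", constant_values=False)
    h, w = mask.shape
    out = np.zeros((h, w), dtype=bool)
    for dy in range(beta):
        for dx in range(beta):
            out |= padded[dy:dy + h, dx:dx + w]
    return out

def build_3d_template(templates, beta=5):
    """Stack the dilated first template and the successive AND results."""
    templates = [np.asarray(t, dtype=bool) for t in templates]
    dilated = dilate(templates[0], beta)
    layers = [dilated]
    for nxt in templates[1:]:
        kept = dilated & nxt          # traits at the next depth near the current ones
        layers.append(kept)
        dilated = dilate(kept, beta)  # assumption: propagate the filtered result
    return np.stack(layers, axis=0)

def trait_persistence(volume):
    """Per pixel, number of AND layers in which the trait is present."""
    return volume[1:].sum(axis=0)

# Three synthetic 9 x 9 templates: a line that drifts by one pixel with depth,
# plus one spurious pixel in the second template.
t0 = np.zeros((9, 9), dtype=bool); t0[:, 4] = True
t1 = np.zeros((9, 9), dtype=bool); t1[:, 5] = True; t1[0, 0] = True
t2 = np.zeros((9, 9), dtype=bool); t2[:, 5] = True

volume = build_3d_template([t0, t1, t2], beta=5)
persistence = trait_persistence(volume)
print(persistence)
```

The drifting line survives because it stays inside the dilated mask, while the isolated pixel at (0, 0) is removed by the AND. Under this reading, fourteen templates give thirteen AND layers, so the count ranges from 0 to 13.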

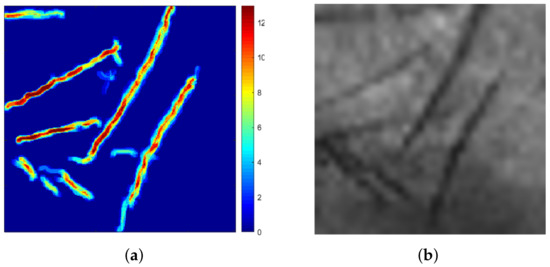

Figure 4a shows an example of a 3D template represented as a colour scale matrix and obtained by setting 𝛽 = 5, where each pixel takes a value from 0 to 13: 0 defines a blue pixel that corresponds to the absence of the trait, while 13 defines a dark red pixel that corresponds to the presence of the trait at all depths. For comparison, Figure 4b shows the corresponding 2D greyscale render.

Figure 4. (a) Three-dimensional template represented as a colour scale matrix where the trait’s depth varies from 0 to 13; (b) 2D greyscale render of the same sample.

References

- Wang, Y.; Shi, D.; Zhou, W. Convolutional Neural Network Approach Based on Multimodal Biometric System with Fusion of Face and Finger Vein Features. Sensors 2022, 22, 6039.

- Ryu, R.; Yeom, S.; Kim, S.H.; Herbert, D. Continuous Multimodal Biometric Authentication Schemes: A Systematic Review. IEEE Access 2021, 9, 34541–34557.

- Haider, S.; Rehman, Y.; Usman Ali, S. Enhanced multimodal biometric recognition based upon intrinsic hand biometrics. Electronics 2020, 9, 1916.

- Bhilare, S.; Jaswal, G.; Kanhangad, V.; Nigam, A. Single-sensor hand-vein multimodal biometric recognition using multiscale deep pyramidal approach. Mach. Vis. Appl. 2018, 29, 1269–1286.

- Kumar, A.; Zhang, D. Personal recognition using hand shape and texture. IEEE Trans. Image Process. 2006, 15, 2454–2461.

- Charfi, N.; Trichili, H.; Alimi, A.; Solaiman, B. Bimodal biometric system for hand shape and palmprint recognition based on SIFT sparse representation. Multimed. Tools Appl. 2017, 76, 20457–20482.

- Gupta, P.; Srivastava, S.; Gupta, P. An accurate infrared hand geometry and vein pattern based authentication system. Knowl. Based Syst. 2016, 103, 143–155.

- Kanhangad, V.; Kumar, A.; Zhang, D. Contactless and pose invariant biometric identification using hand surface. IEEE Trans. Image Process. 2011, 20, 1415–1424.

- Kumar, A. Toward More Accurate Matching of Contactless Palmprint Images under Less Constrained Environments. IEEE Trans. Inf. Forensics Secur. 2019, 14, 34–47.

- Liang, X.; Li, Z.; Fan, D.; Zhang, B.; Lu, G.; Zhang, D. Innovative Contactless Palmprint Recognition System Based on Dual-Camera Alignment. IEEE Trans. Syst. Man Cybern. Syst. 2022, 52, 6464–6476.

- Wu, W.; Elliott, S.; Lin, S.; Sun, S.; Tang, Y. Review of palm vein recognition. IET Biom. 2020, 9, 1–10.

- Palma, D.; Blanchini, F.; Giordano, G.; Montessoro, P.L. A Dynamic Biometric Authentication Algorithm for Near-Infrared Palm Vascular Patterns. IEEE Access 2020, 8, 118978–118988.

- Wang, R.; Müller, R. Bioinspired solution to finding passageways in foliage with sonar. Bioinspir. Biomim. 2021, 16, 066022.

- Iula, A.; Bollino, G. A travelling wave rotary motor driven by three pairs of langevin transducers. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 2012, 59, 121–127.

- Pyle, R.; Bevan, R.; Hughes, R.; Rachev, R.; Ali, A.; Wilcox, P. Deep Learning for Ultrasonic Crack Characterization in NDE. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 2021, 68, 1854–1865.

- Carotenuto, R.; Merenda, M.; Iero, D.; Della Corte, F. An Indoor Ultrasonic System for Autonomous 3-D Positioning. IEEE Trans. Instrum. Meas. 2019, 68, 2507–2518.

- Avola, D.; Cinque, L.; Fagioli, A.; Foresti, G.; Mecca, A. Ultrasound Medical Imaging Techniques. ACM Comput. Surv. 2021, 54.

- Trimboli, P.; Bini, F.; Marinozzi, F.; Baek, J.H.; Giovanella, L. High-intensity focused ultrasound (HIFU) therapy for benign thyroid nodules without anesthesia or sedation. Endocrine 2018, 61, 210–215.

- Iula, A. Ultrasound systems for biometric recognition. Sensors 2019, 19, 2317.

- Schmitt, R.; Zeichman, J.; Casanova, A.; Delong, D. Model based development of a commercial, acoustic fingerprint sensor. In Proceedings of the IEEE International Ultrasonics Symposium, IUS, Dresden, Germany, 7–10 October 2012; pp. 1075–1085.

- Lamberti, N.; Caliano, G.; Iula, A.; Savoia, A. A high frequency cMUT probe for ultrasound imaging of fingerprints. Sens. Actuator A Phys. 2011, 172, 561–569.

- Jiang, X.; Tang, H.Y.; Lu, Y.; Ng, E.J.; Tsai, J.M.; Boser, B.E.; Horsley, D.A. Ultrasonic fingerprint sensor with transmit beamforming based on a PMUT array bonded to CMOS circuitry. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 2017, 64, 1401–1408.

- Iula, A.; Hine, G.; Ramalli, A.; Guidi, F. An Improved Ultrasound System for Biometric Recognition Based on Hand Geometry and Palmprint. Procedia Eng. 2014, 87, 1338–1341.

- Iula, A. Biometric recognition through 3D ultrasound hand geometry. Ultrasonics 2021, 111, 106326.

- Iula, A.; Nardiello, D. Three-dimensional ultrasound palmprint recognition using curvature methods. J. Electron. Imaging 2016, 25, 033009.

- Nardiello, D.; Iula, A. A new recognition procedure for palmprint features extraction from ultrasound images. Lect. Notes Electr. Eng. 2019, 512, 113–118.

- Iula, A.; Nardiello, D. 3-D Ultrasound Palmprint Recognition System Based on Principal Lines Extracted at Several under Skin Depths. IEEE Trans. Instrum. Meas. 2019, 68, 4653–4662.

- De Santis, M.; Agnelli, S.; Nardiello, D.; Iula, A. 3D Ultrasound Palm Vein recognition through the centroid method for biometric purposes. In Proceedings of the 2017 IEEE International Ultrasonics Symposium (IUS), Washington, DC, USA, 6–9 September 2017.

- Iula, A.; Vizzuso, A. 3D Vascular Pattern Extraction from Grayscale Volumetric Ultrasound Images for Biometric Recognition Purposes. Appl. Sci. 2022, 12, 8285.

- Micucci, M.; Iula, A. Ultrasound wrist vein pattern for biometric recognition. In Proceedings of the 2022 IEEE International Ultrasonics Symposium, IUS, Venice, Italy, 10–13 October 2022; Volume 2022.

- Tortoli, P.; Bassi, L.; Boni, E.; Dallai, A.; Guidi, F.; Ricci, S. ULA-OP: An advanced open platform for ultrasound research. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 2009, 56, 2207–2216.

- Sharma, S.; Dubey, S.; Singh, S.; Saxena, R.; Singh, R. Identity verification using shape and geometry of human hands. Expert Syst. Appl. 2015, 42, 821–832.

- Iula, A.; Micucci, M. Multimodal Biometric Recognition Based on 3D Ultrasound Palmprint-Hand Geometry Fusion. IEEE Access 2022, 10, 7914–7925.

- Iula, A.; Micucci, M. A Feasible 3D Ultrasound Palmprint Recognition System for Secure Access Control Applications. IEEE Access 2021, 9, 39746–39756.
