| Version | Summary | Created by | Modification | Content Size | Created at | Operation |
|---|---|---|---|---|---|---|
| 1 | | Pethuru Raj Chelliah | -- | 2272 | 2023-02-09 07:17:38 | |
| 2 | | Catherine Yang | Meta information modification | 2272 | 2023-02-09 07:29:49 | |


Surianarayanan, C.; Lawrence, J.J.; Chelliah, P.R.; Prakash, E.; Hewage, C. Edge Artificial Intelligence. Encyclopedia. Available online: https://encyclopedia.pub/entry/41013 (accessed on 22 May 2026).


Artificial Intelligence (AI) models are being produced and used to solve a variety of current and future business and technical problems. Therefore, AI model engineering processes, platforms, and products are acquiring special significance across industry verticals. To achieve deeper automation, a large number of data features are used when generating promising and productive AI models, and hence the resulting models are bulky. Such heavyweight models consume a lot of computation, storage, networking, and energy resources. On the other hand, AI models are increasingly being deployed in IoT devices to ensure real-time knowledge discovery and dissemination.

artificial intelligence

AI model optimization

edge AI

federated learning

1. Introduction

The Internet of Things (IoT) has grown rapidly and generates a huge amount of data. Depending on the domain and application (for example, smart traffic, smart home, smart city, or smart transport), the acquired data must be processed immediately to produce meaningful insights and actionable decisions. In these cases, sending data to a centralized server and analyzing it there introduces latency [1] that can defeat the purpose of the application itself. Cloud computing alone is not adequate to meet the diverse data analysis needs of today’s intelligent society, and so edge computing has evolved [2][3]. Edge computing brings the processing of data to the point of acquisition by pushing applications, storage, and processing power away from the centralized data center and toward the edge itself [4].

Centralized processing requires a massive amount of data to be transferred to the cloud for analysis. This not only requires more network bandwidth but also consumes time. In reality, every network has limited bandwidth, so centralized processing suffers from latency, bandwidth-related issues, and high transmission energy, which cannot be tolerated in applications such as augmented reality, video conferencing, and streaming. In addition, transmitting data to the cloud inherently prevents real-time analysis, yet many applications need it. For example, in the healthcare domain, consider a vital parameter-monitoring system that monitors parameters related to, say, COVID-19. Instead of sending the monitored data to a centralized cloud server, to which the physician or hospital is connected and which analyzes the patient’s health condition upon receiving the data, the monitoring system itself should be equipped with processing capability so that it can produce actionable and meaningful insights without latency. It can then direct the required action itself and avoid transferring data to a centralized infrastructure. In scenarios where analysis at the edge becomes necessary, it should be conducted at the edge itself without centralized analytics.

2. Motivating Use Cases of Edge AI

Real-time analysis and decision making without latency: In contrast to cloud AI, edge AI can provide many benefits to the healthcare domain. For example, consider an individual performing a routine workout. Vital parameters, namely pulse rate, blood glucose level, and blood pressure, are monitored by wearable sensors and updated in the edge application running on the individual’s mobile phone. Here, the mobile phone is the edge device: it analyzes the monitored parameters with the help of an AI-based model and immediately takes a decision based on that analysis, without sending the data to a central server such as a cloud. The emphasis is that the data are analyzed locally and the decision is taken immediately, without added network latency. This matters most in healthcare when the vital parameters fall outside the normal threshold ranges. In such instances, the edge application immediately notifies the physician and books an ambulance to a hospital.

Edge AI in remote robotic surgery: In a medical emergency, robotic surgery can be carried out under the remote supervision of a surgeon. In this situation, the robots are fully equipped with AI-based models and perform the surgery with guidance from, and in conversation with, a remote physician. The key point to note is that evolving 5G communication makes such surgery easier and safer.

Edge AI integrated into cameras in airport security systems: When the video cameras installed in airports are integrated with video analytics applications (for example, detection of terrorist attacks), attacks can be detected without latency. In conventional security applications, a series of video cameras capture what is happening in the airport and send the video to cloud servers, where AI-based models run to detect or predict terrorist attacks. When the video analytics models run in the camera itself, the device (i.e., the video camera) can, depending on the severity of what the analysis finds, call the police immediately to prevent any detected terrorist from escaping.

Edge AI in predictive maintenance: In manufacturing and similar sectors, predictive maintenance is used to determine potential faults and abnormalities in processes. Conventionally, heartbeat signals that indicate the health of various sensors and machines are continuously collected and sent to a centralized cloud setup, where AI-based analytics are carried out to predict faults. Nowadays, however, the IoT devices themselves are equipped with AI models to predict the possibility of faults, so the proper maintenance process can be carried out immediately, with no latency, which ultimately leads to increased production at reduced cost.

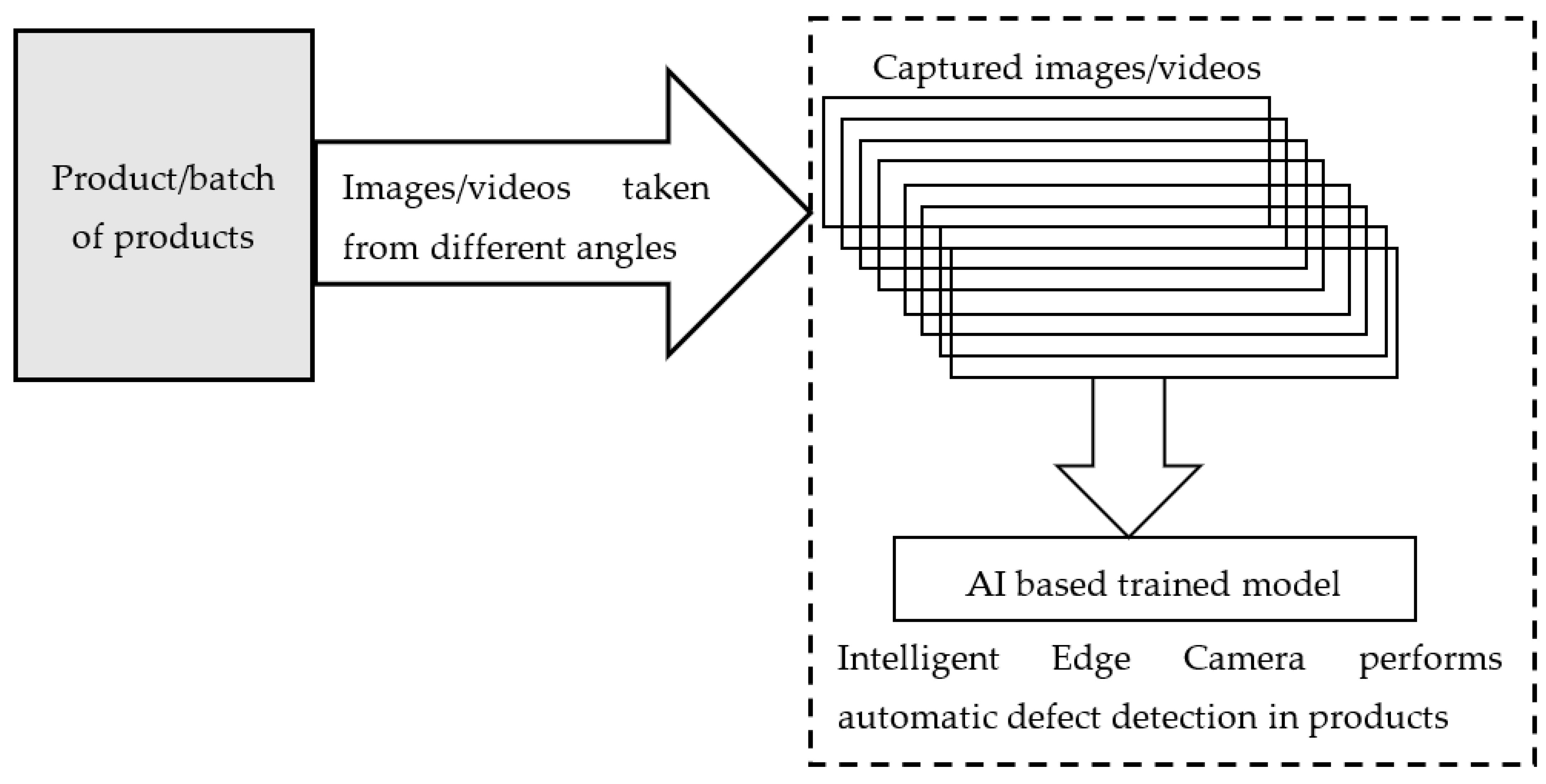

Edge AI in quality assurance: Existing cameras and devices incorporate intelligent, state-of-the-art neural network-based video analytics models and are capable of executing trillions of operations per second (TOPS). These devices have advanced computer vision capabilities; they scan a single product or huge batches of products at a time and find faulty products with accuracy exceeding human capability. In addition to product inspection, edge devices are also equipped with relevant models for continuous and detailed factory monitoring. The complete assembly line of a factory is thoroughly inspected by the installed cameras with the aim of zero-defect production, as shown in Figure 1.

Figure 1. Block diagram of edge AI-based defect detection.

Edge AI and mobile augmented reality: Mobile augmented reality applications have to process huge amounts of data arriving from various sources, such as a camera, GPS, and other video and audio inputs, within the stipulated latency of around 15 to 20 milliseconds in order to show the augmented reality to the user [5]. The next-generation IoT devices are equipped with reinforcement learning-based AI models implemented with the help of customized processors and 5G communication technologies. The combination of edge AI and next-generation IoT enables practical implementation and brings greater autonomy along with real-time analysis on the edge devices themselves. This combination not only reduces latency but also conserves energy.

Edge AI in drone applications: Traditional PID-based drone flight is limited in the number of parameters it can handle and hence tends to fail more often in situations that were not considered in the design. In contrast, deep neural networks are trained with many example and simulated situations containing various disturbances, which makes AI-powered drone flight easier. AI-powered drones are used for many applications, such as (i) delivery of medicine to COVID-19-infected patients, (ii) delivery of food packets to affected people during natural disasters such as storms, (iii) tracking of vehicles, (iv) traffic monitoring, (v) air, noise, and pollution monitoring, and (vi) monitoring and exploring dangerous areas.

3. Learning-Related Strategies

3.1. Federated Learning

Federated learning is a distributed and collaborative learning method that allows different edge devices with different datasets to work together to train a global model. In this approach, a single global model is stored in a centralized cloud infrastructure. First, the global model is shared with the devices along with its initial weights. Each edge device then collects real-time data and trains the model locally on the new data for one or several iterations, updating the weights so that the loss function is minimized [6]. The updated weights are sent to the centralized server. The data themselves are not sent; only the weights are sent to the server, with encryption [4]. The centralized server receives the updated weights from several edge devices, computes their average, and updates the weights of the global model. The global model is then shared with the edge devices again. The concept of federated learning is shown in Figure 2. Federated learning can cater to the needs of modern IoT-based applications and is turning out to be the basis for next-generation artificial intelligence [7].

Figure 2. Concept of federated learning in edge servers (shown with four nodes).
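The round described above (share the global weights, train locally, average on the server) can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the one-parameter linear model, the learning rate, the four toy client datasets, and the function names `local_update`, `federated_average`, and `run_rounds` are all assumptions made for the example, and the encryption of the transmitted weights is not modelled.

```python
def local_update(weights, data, lr=0.1, epochs=1):
    """Client side: gradient descent on squared error for y ~ w . x (toy model)."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / len(data)
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return w


def federated_average(client_weights):
    """Server side: plain (unweighted) average of the client weight vectors."""
    n = len(client_weights)
    dim = len(client_weights[0])
    return [sum(ws[j] for ws in client_weights) / n for j in range(dim)]


def run_rounds(global_w, clients, rounds=50):
    """Repeat: broadcast global weights, train locally, average the updates."""
    for _ in range(rounds):
        updates = [local_update(global_w, data) for data in clients]
        global_w = federated_average(updates)  # raw data never leaves a client
    return global_w


# Four hypothetical clients, each holding private samples of y = 2 * x.
clients = [
    [([1.0], 2.0), ([2.0], 4.0)],
    [([0.5], 1.0)],
    [([1.5], 3.0), ([1.0], 2.0)],
    [([2.0], 4.0)],
]
final_w = run_rounds([0.0], clients)
print(final_w)  # close to [2.0]
```

Only weight vectors cross the client/server boundary here, which mirrors the point in the text that the local data stay on the device.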

As discussed above, in centralized federated learning, the server sends a model, which is initially trained on the server, to the edge devices. On each edge device, the model is trained with local data, and the updated model parameters are sent to the server. The server aggregates all the parameters and resends the updated model to the devices. In decentralized federated learning, such as peer-to-peer [8] or ring topologies [9], each device, in addition to training the model with local data, updates its parameter values by exchanging them with other devices through a gossip algorithm [10][11]. As multiple devices are involved in training the model simultaneously, the training time is reduced [12]. Moreover, in federated learning, the privacy and security of data are maintained [13]. Federated learning is well suited to running low-cost machine learning models on devices and sensors [14].

When the data in different clients are homogeneous, that is, when the feature space of the local data is the same across participating clients, updating the global model with the clients’ updated weights is straightforward. Such federated learning is called horizontal learning, and it leads to an effective global solution [15]. However, updating the global model becomes a challenge when the data on the devices are non-independent and identically distributed (non-IID), which makes convergence of the global model difficult [16]. This issue can be addressed by developing a personalized local model for each client [17]; the personalized local models can then be merged into a shared global model with the help of the Bayesian fusion rule, as discussed in [18][19]. In another work [20], a branch-wise averaging-based aggregation method has been proposed, which guarantees convergence of the global model. In yet another work [21], a feature-oriented regulation method has been proposed to establish firm structure-information alignment across collaborative models.
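To make the decentralized case more concrete, the following sketch shows one common form of gossip: randomized pairwise averaging between neighbours on a ring. It illustrates only the parameter-exchange step, not the local training that each device also performs, and it is not taken from the cited works. The function names, the four example parameter vectors, the number of rounds, and the fixed random seed are assumptions made for the example.

```python
import random


def gossip_round(params, rng):
    """Pick a random ring edge (i, i+1); both nodes adopt their pairwise average."""
    n = len(params)
    i = rng.randrange(n)
    j = (i + 1) % n  # ring topology: each node talks to its next neighbour
    avg = [(a + b) / 2 for a, b in zip(params[i], params[j])]
    params[i] = list(avg)
    params[j] = list(avg)


def gossip_average(params, rounds=200, seed=0):
    """Run repeated gossip rounds on a copy of the node parameters."""
    rng = random.Random(seed)
    params = [list(p) for p in params]
    for _ in range(rounds):
        gossip_round(params, rng)
    return params


# Four hypothetical devices, each starting from its own locally trained parameters.
nodes = [[1.0, 0.0], [3.0, 2.0], [5.0, 4.0], [7.0, 6.0]]
result = gossip_average(nodes)
print(result)  # every node ends up near the overall mean [4.0, 3.0]
```

Because each exchange preserves the sum of the parameters, the nodes drift toward the same average that a central server would have computed, without any node acting as a coordinator.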

Federated learning is of two kinds, centralized [22] and decentralized (Figure 3).

Figure 3. Federated learning in a peer-to-peer model.

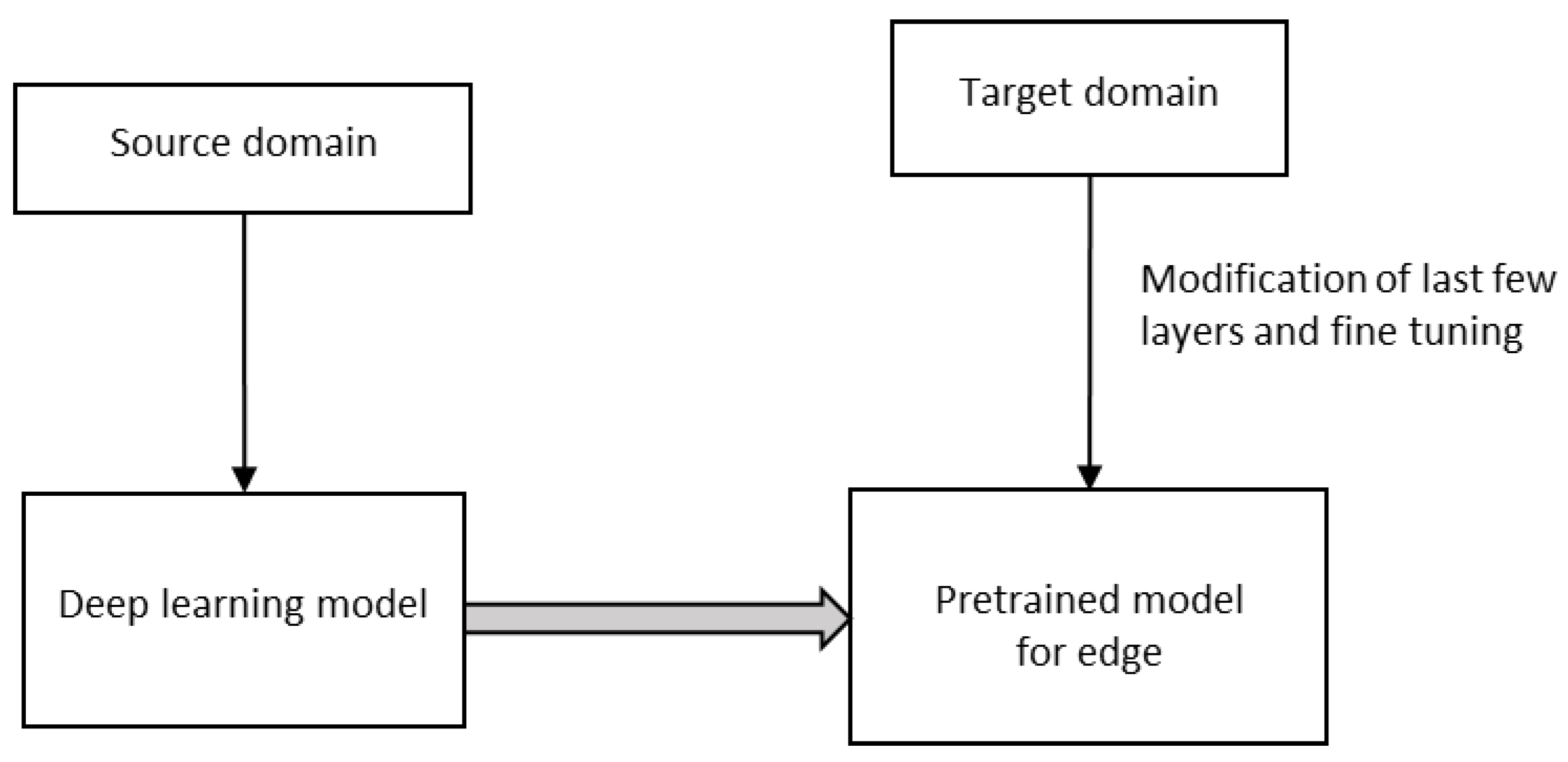

3.2. Deep Transfer Learning (DTL)

Edge devices such as IoT devices, webcams, robots, and intelligent medical equipment are very useful for many healthcare applications during a pandemic such as COVID-19. However, the shortage of reliable datasets, together with the limited hardware and power of edge devices, hinders the use of deep learning models on them. In Deep Transfer Learning (DTL), the knowledge of an already trained model is used to solve a new task, as in Figure 4. DTL significantly reduces the training time and the resources required for a target domain-specific task, whether through fixed feature extraction or fine-tuning [23][24].

Figure 4. Deep transfer learning.
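The fixed feature extraction variant can be illustrated with a toy example: a frozen extractor stands in for the already trained model, and only a small new classifier head is trained on the target data. Everything in the sketch is hypothetical, including the hand-written `pretrained_features` function (a real extractor would be a trained deep network), the logistic-regression head, the hyperparameters, and the four-sample target dataset.

```python
import math


def pretrained_features(x):
    """Stand-in for a frozen, already trained feature extractor (hypothetical)."""
    return [x[0] + x[1], x[0] - x[1]]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, labels, lr=0.1, epochs=1000):
    """Train only a new logistic-regression head on top of the frozen features."""
    feats = [pretrained_features(x) for x in samples]  # extractor is never updated
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            p = sigmoid(w[0] * f[0] + w[1] * f[1] + b)
            g = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * g * fi for wi, fi in zip(w, f)]
            b -= lr * g
    return w, b


def predict(x, w, b):
    f = pretrained_features(x)
    return 1 if sigmoid(w[0] * f[0] + w[1] * f[1] + b) >= 0.5 else 0


# Small target-domain dataset: the label is 1 when x[0] > x[1].
samples = [(2.0, 1.0), (1.0, 2.0), (3.0, 0.5), (0.5, 3.0)]
labels = [1, 0, 1, 0]
w, b = train_head(samples, labels)
predictions = [predict(x, w, b) for x in samples]
print(predictions)
```

Fine-tuning would differ in that the extractor’s own parameters are also updated, usually with a small learning rate; the sketch keeps them fixed, which is the cheaper option on constrained edge hardware.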

In contrast to conventional machine learning, where learning takes place in isolation, transfer learning uses knowledge learned from other existing domains while learning a new task. Transfer learning is of two types, homogeneous and heterogeneous. In homogeneous transfer learning, the source and target domains share the same feature space, whereas in heterogeneous transfer learning, the feature spaces differ. Different methods, namely instance-based, feature-based, parameter-based, and relation-based methods, are used for homogeneous transfer learning, whereas for heterogeneous domains, only feature-based methods are used. Heterogeneous transfer learning has two types of methods: asymmetric and symmetric feature transformation. In asymmetric feature transformation, one of the domains is transformed into the other, and this method is found to be effective when both domains share the same label space. In symmetric feature transformation, both domains are transformed into a common feature space.
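The difference between the two heterogeneous approaches comes down to which feature space the samples end up in. The sketch below shows this with plain linear maps. The dimensions (three source features, two target features) and all matrix entries are made up for illustration; in practice such transformations would be learned from data rather than written by hand.

```python
def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# Hypothetical feature spaces: source samples have 3 features, target samples have 2.
source_x = [1.0, 2.0, 3.0]
target_x = [4.0, 5.0]

# Asymmetric: map the source sample into the target feature space (3 -> 2).
SOURCE_TO_TARGET = [[1.0, 0.0, 0.5],
                    [0.0, 1.0, 0.5]]
source_in_target_space = matvec(SOURCE_TO_TARGET, source_x)

# Symmetric: map both samples into a shared space (here also 2-dimensional).
SOURCE_TO_COMMON = [[0.5, 0.5, 0.0],
                    [0.0, 0.5, 0.5]]
TARGET_TO_COMMON = [[1.0, 0.0],
                    [0.5, 0.5]]
source_common = matvec(SOURCE_TO_COMMON, source_x)
target_common = matvec(TARGET_TO_COMMON, target_x)

print(source_in_target_space, source_common, target_common)
```

In the asymmetric case only the source sample is transformed and the target data are used as they are; in the symmetric case neither domain keeps its original representation.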

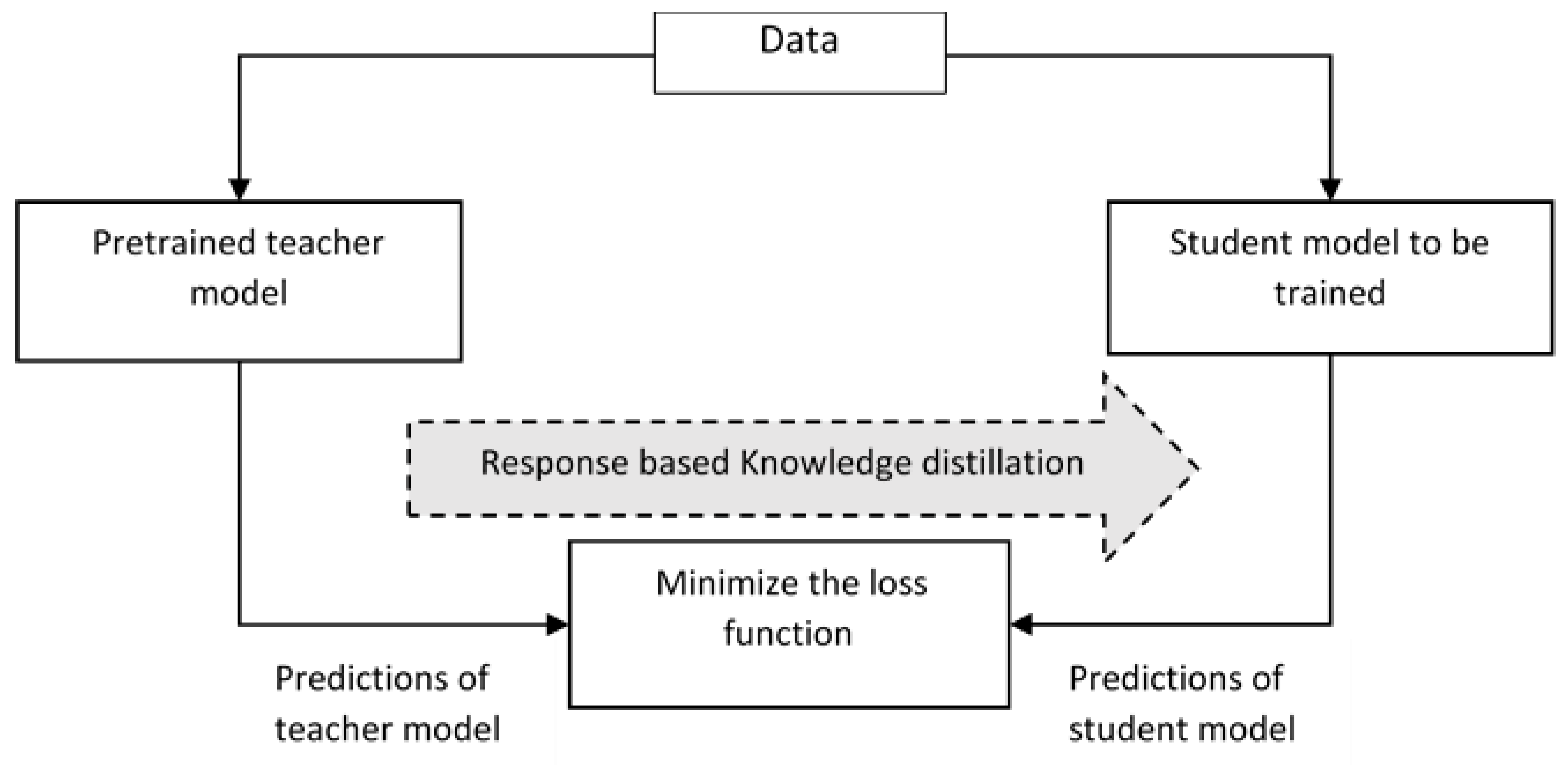

3.3. Knowledge Distillation

Large machine learning models have millions of parameters, which makes deploying them on edge devices infeasible. So, the knowledge gained by large models is transferred to small models that run on edge devices. Here, the large model serves as the teacher model, and the small model serves as the student model. Only the teacher model is pre-trained. The knowledge learned by the teacher model is transferred to the student model through knowledge distillation, as in Figure 5. Knowledge distillation helps improve the accuracy of the student model despite the constrained hardware [25]. There are different knowledge distillation techniques. In response-based distillation, the predictions of the output layers of the teacher and student models are compared using a loss function that measures the difference between the models, and this loss is minimized so that the accuracy of the student model approaches that of the teacher model. In contrast, in feature-based distillation, the student model learns from the intermediate features of the teacher model; that is, the position of distillation is moved to before the output layer [26][27]. The student mimics the teacher by minimizing a loss function computed on the intermediate layers. Relation-based distillation is one in which the relationships between different feature maps are captured as a Gram matrix, and the loss on the difference between the teacher’s and student’s matrices is minimized. The student model reduces the computation cost and memory usage [28].

Figure 5. Knowledge distillation.
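Two of these losses are easy to write down for small inputs. The sketch below is illustrative only. For the response-based loss it assumes a temperature-softened softmax and a KL divergence, a common formulation that the text above does not specify. For the relation-based loss it assumes that teacher and student expose the same number of flattened feature maps and compares their Gram matrices with a mean squared error. All function names and example values are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the maximum for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def response_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence between softened teacher and student output distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def gram(feature_maps):
    """Gram matrix: inner products between every pair of flattened feature maps."""
    return [[sum(a * b for a, b in zip(f, g)) for g in feature_maps]
            for f in feature_maps]


def relation_loss(teacher_maps, student_maps):
    """Mean squared difference between the teacher and student Gram matrices."""
    gt, gs = gram(teacher_maps), gram(student_maps)
    n = len(gt)
    return sum((gt[i][j] - gs[i][j]) ** 2
               for i in range(n) for j in range(n)) / (n * n)


# Hypothetical output logits for one input, three classes.
teacher_logits = [4.0, 1.0, 0.5]
student_logits = [2.5, 1.5, 0.5]
print(response_loss(teacher_logits, student_logits))

# Hypothetical flattened feature maps (two maps of two values each).
print(relation_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [1.0, 0.0]]))
```

Both losses are zero when the student reproduces the teacher exactly and grow as the two diverge, which is what makes them usable as training objectives for the student.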

References

- Vahid Dastjerdi, A.; Buyya, R. Fog Computing: Helping the Internet of Things Realize. IEEE Comput. Soc. 2016, 49, 112–116.

- Cao, K.; Liu, Y.; Meng, G.; Sun, Q. An Overview on Edge Computing Research. IEEE Access 2020, 8, 85714–85728.

- Cui, L.; Yang, S.; Chen, F.; Ming, Z.; Lu, N.; Qin, J. A survey on application of machine learning for Internet of Things. Int. J. Mach. Learn. Cybern. 2018, 9, 1399–1417.

- Pooyandeh, M.; Sohn, I. Edge Network Optimization Based on AI Techniques: A Survey. Electronics 2021, 10, 2830.

- Chen, M.; Liu, W.; Wang, T.; Liu, A.; Zeng, Z. Edge intelligence computing for mobile augmented reality with deep reinforcement learning approach. Comput. Netw. 2021, 195, 108186.

- Xia, Q.; Ye, W.; Tao, Z.; Wu, J.; Li, Q. A survey of federated learning for edge computing: Research problems and solutions. High-Confid. Comput. 2021, 1, 100008.

- Abreha, H.G.; Hayajneh, M.; Serhani, M.A. Federated Learning in Edge Computing: A Systematic Survey. Sensors 2022, 22, 450.

- Wink, T.; Nochta, Z. An Approach for Peer-to-Peer Federated Learning. In Proceedings of the 2021 51st Annual IEEE/IFIP International Conference on Dependable Systems and Networks Workshops (DSN-W), Taipei, Taiwan, 21–24 June 2021; pp. 150–157.

- Lian, X.; Zhang, C.; Zhang, H.; Hsieh, C.-J.; Zhang, W.; Liu, J. Can decentralized algorithms outperform centralized algorithms? A case study for decentralized parallel stochastic gradient descent. In Proceedings of the Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, Long Beach, CA, USA, 4–9 December 2017; pp. 5330–5340.

- Truong, N.; Sun, K.; Wang, S.; Guitton, F.; Guo, Y.K. Privacy preservation in federated learning: An insightful survey from the GDPR perspective. Comput. Secur. 2021, 110, 102402.

- Brecko, A.; Kajati, E.; Koziorek, J.; Zolotova, I. Federated Learning for Edge Computing: A Survey. Appl. Sci. 2022, 12, 9124.

- Li, Q.; Wen, Z.; Wu, Z.; Hu, S.; Wang, N.; Li, Y.; Liu, X.; He, B. A Survey on Federated Learning Systems: Vision, Hype and Reality for Data Privacy and Protection. IEEE Trans. Knowl. Data Eng. 2021.

- Makkar, A.; Ghosh, U.; Rawat, D.B.; Abawajy, J.H. FedLearnSP: Preserving Privacy and Security Using Federated Learning and Edge Computing. IEEE Consum. Electron. Mag. 2022, 11, 21–27.

- Aledhari, M.; Razzak, R.; Parizi, R.M.; Saeed, F. Federated Learning: A Survey on Enabling Technologies, Protocols, and Applications. IEEE Access 2020, 8, 140699–140725.

- Zhu, H.; Xu, J.; Liu, S.; Jin, Y. Federated Learning on Non-IID Data: A Survey. Neurocomputing 2021, 465, 371–390.

- Hsu, T.M.-H.; Qi, H.; Brown, M. Measuring the effects of nonidentical data distribution for federated visual classification. arXiv 2019, arXiv:1909.06335.

- Kulkarni, V.; Kulkarni, M.; Pant, A. Survey of personalization techniques for federated learning. In Proceedings of the 2020 Fourth World Conference on Smart Trends in Systems, Security and Sustainability (WorldS4), London, UK, 27–28 July 2020; pp. 794–797.

- Wu, P.; Imbiriba, T.; Park, J.; Kim, S.; Closas, P. Personalized Federated Learning over non-IID Data for Indoor Localization. arXiv 2021, arXiv:2107.04189v2.

- Huang, Y.; Chu, L.; Zhou, Z.; Wang, L.; Liu, J.; Pei, J.; Zhang, Y. Personalized cross-silo federated learning on non-IID data. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021.

- Wang, C.-H.; Huang, K.-Y.; Chen, J.-C.; Shuai, H.-H.; Cheng, W.-H. Heterogeneous Federated Learning Through Multi-Branch Network. In Proceedings of the 2021 IEEE International Conference on Multimedia and Expo (ICME), Shenzhen, China, 5–9 July 2021; pp. 1–6.

- Yu, F.; Zhang, W.; Qin, Z.; Xu, Z.; Wang, D.; Liu, C.; Tian, Z.; Chen, X. Heterogeneous Federated Learning. arXiv 2020, arXiv:2008.06767.

- Khan, L.U.; Saad, W.; Han, Z.; Hossain, E.; Hong, C.S. Federated Learning for Internet of Things: Recent Advances, Taxonomy, and Open Challenges. IEEE Commun. Surv. Tutor. 2021, 23, 1759–1799.

- Sufian, A.; Ghosh, A.; Sadiq, A.S.; Smarandache, F. A Survey on Deep Transfer Learning to Edge Computing for Mitigating the COVID-19 Pandemic. J. Syst. Archit. 2020, 108, 101830.

- Chen, Q.; Zheng, Z.; Hu, C.; Wang, D.; Liu, F. On-edge multi-task transfer learning: Model and practice with data-driven task allocation. IEEE Trans. Parallel Distrib. Syst. 2020, 31, 1357–1371.

- Alkhulaifi, A.; Alsahli, F.; Ahmad, I. Knowledge distillation in deep learning and its applications. PeerJ Comput. Sci. 2021, 7, e474.

- Heo, B.; Kim, J.; Yun, S.; Park, H.; Kwak, N.; Choi, J.Y. A comprehensive overhaul of feature distillation. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) 2019, Seoul, Republic of Korea, 27 October–2 November 2019.

- Wang, L.; Yoon, K. Knowledge Distillation and Student-Teacher Learning for Visual Intelligence: A Review and New Outlooks. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 44, 3048–3068.

- Tao, Z.; Xia, Q.; Li, Q. Neuron Manifold Distillation for Edge Deep Learning. In Proceedings of the 2021 IEEE/ACM 29th International Symposium on Quality of Service (IWQOS), Tokyo, Japan, 25–28 June 2021; pp. 1–10.

- Li, D.; Wang, J. FedMD: Heterogenous federated learning via model distillation. arXiv 2019, arXiv:1910.03581.

- Jiang, D.; Shan, C.; Zhang, Z. Federated Learning Algorithm Based on Knowledge Distillation. In Proceedings of the 2020 International Conference on Artificial Intelligence and Computer Engineering (ICAICE), Beijing, China, 23–25 October 2020; pp. 163–167.


Update Date: 09 Feb 2023
