
Shahria, M.T.; Sunny, M.S.H.; Zarif, M.I.I.; Ghommam, J.; Ahamed, S.I.; Rahman, M.H. Vision-Based Robotic Applications. Encyclopedia. Available online: https://encyclopedia.pub/entry/39811 (accessed on 07 June 2026).


Shahria, M.T., Sunny, M.S.H., Zarif, M.I.I., Ghommam, J., Ahamed, S.I., & Rahman, M.H. (2023, January 05). Vision-Based Robotic Applications. In Encyclopedia. https://encyclopedia.pub/entry/39811


Robotic manipulation is an emerging technology that has advanced tremendously thanks to developments ranging from sensors to artificial intelligence. Over the decades, it has also advanced in terms of the versatility and flexibility of mobile robot platforms, so robots are now capable of interacting with the world around them. To interact with the real world, robots require various sensory inputs from their surroundings, and the use of vision is increasing rapidly because vision is a rich source of information for a robotic system.

computer vision

robot manipulation

sensors

vision-based control

1. Introduction

Robotic manipulation refers to the manner in which robots directly and indirectly interact with surrounding objects. Such interaction includes picking and grasping objects [1][2][3], moving objects from place to place [4][5], folding laundry [6], packing boxes [7], and operating according to user requirements. Object manipulation is considered a pivotal task in robotics. Over time, robot manipulation has undergone considerable changes that have driven technological development in both industry and academia.

Manual robot manipulation was one of the initial steps of automation [8][9]. A manual robot refers to a manipulation system that requires continuous human involvement to operate [10]. In the beginning, researchers explored spatial algebra [11], forward kinematics [12][13][14], differential kinematics [15][16][17], inverse kinematics [18][19][20][21][22], etc. for pick-and-place tasks, which are not the only application of robotic manipulation systems but a stepping-stone to a wide range of possibilities [23]. The capability of gripping, holding, and manipulating objects requires dexterity, a sense of touch, and a coordinated response from eyes and muscles; mimicking all these attributes is a complex and tedious task [24]. Thus, researchers have explored a wide range of algorithms to adopt and design more efficient and appropriate models for this task. Over time, manual manipulators became more advanced and acquired individual control systems according to their specifications and applications [25][26].
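The forward and inverse kinematics mentioned above can be illustrated with a minimal sketch for a planar two-link arm. This is a textbook simplification, not a method taken from the cited works; the function names, the unit link lengths `l1` and `l2`, and the choice of the solution with a non-negative elbow angle are assumptions made for illustration.

```python
import math


def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """Return the (x, y) end-effector position of a planar two-link arm.

    theta1 is the shoulder angle and theta2 the elbow angle, both in radians.
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def inverse_kinematics(x, y, l1=1.0, l2=1.0):
    """Return one joint solution (theta1, theta2) that reaches (x, y).

    Only the solution with a non-negative elbow angle is returned
    (an illustrative choice); unreachable targets raise ValueError.
    """
    cos_theta2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_theta2) > 1.0 + 1e-12:
        raise ValueError("target is outside the arm's workspace")
    # Clamp to guard against tiny floating-point overshoot at the boundary.
    cos_theta2 = max(-1.0, min(1.0, cos_theta2))
    theta2 = math.acos(cos_theta2)
    theta1 = math.atan2(y, x) - math.atan2(
        l2 * math.sin(theta2), l1 + l2 * math.cos(theta2)
    )
    return theta1, theta2


# Pick-and-place style usage: find joint angles for a hypothetical target
# point, then confirm them with forward kinematics.
target = (1.2, 0.5)
angles = inverse_kinematics(*target)
reached = forward_kinematics(*angles)
```

Feeding the inverse solution back through `forward_kinematics` is a simple consistency check; a real manipulator would also need joint limits and a rule for choosing between the two elbow configurations.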

Beyond their individual use, robotic manipulation systems now have a wide range of industrial applications, as they can be applied to complex and diverse tasks [27]. Hence, conventional manipulative devices have become less suitable [28]. New technologies, such as wireless communication and augmented reality [29], are being adopted and applied in manipulation systems to find the most suitable and user-friendly human–robot collaboration model for specific tasks [30]. To make the process more efficient and productive and to achieve successful execution, researchers have introduced automation in this field [31].

To move toward automated systems, researchers first introduced automation in motion planning techniques [3][32], which eventually contributed to automated robotic manipulation systems. Automated and semi-automated manipulation systems not only boost the performance of industrial robots but also contribute to other fields of robotics such as mobile robots [33], assistive robots [34], and swarm robots [35]. In the design of automated systems, the use of vision is increasing rapidly because vision is a rich source of information [36][37][38]. By properly utilizing vision-based data, a robot can identify, map, localize, and calculate various measurements of any object and respond accordingly to complete its tasks [39][40][41][42]. Various studies report that vision-based approaches are well suited to different fields of robotics such as swarm robotics [35], fruit-picking robots [1], robotic grasping [43], mobile robots [33][44][45], aerial robotics [46], and surgical robots [47]. Researchers are introducing different approaches to process vision-based data. Learning-based approaches are at the center of such autonomous approaches because the real world presents too many variations, and learning algorithms help the robot gain knowledge from its experience with the environment [48][49][50]. Among the different learning methods, neural network-based models [51][52][53][54], deep learning-based models [49][50][54][55][56], and transfer learning models [57][58][59][60] are the most widely used by experts in manipulation systems, while filter-based approaches are also popular among researchers [61][62][63].
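As one illustration of how vision data can be used to localize an object and calculate measurements, the sketch below back-projects pixels with a known depth into 3D points using the standard pinhole camera model. The intrinsic parameters (`FX`, `FY`, `CX`, `CY`), the pixel coordinates, and the depth values are hypothetical, and lens distortion is ignored.

```python
import math


def pixel_to_camera_point(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) at a known depth into camera coordinates.

    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    Returns (x, y, z) in the same unit as depth.
    """
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def distance(p, q):
    """Euclidean distance between two 3D points."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


# Hypothetical intrinsics for a 640x480 camera (assumed, not from the source).
FX, FY, CX, CY = 500.0, 500.0, 320.0, 240.0

# Two detected edge pixels of an object, both measured at 2.0 m depth.
left_edge = pixel_to_camera_point(270, 240, 2.0, FX, FY, CX, CY)
right_edge = pixel_to_camera_point(370, 240, 2.0, FX, FY, CX, CY)
object_width = distance(left_edge, right_edge)  # metric width of the object
```

In practice the depth would come from a stereo pair or an RGB-D sensor, and the intrinsics from a camera calibration step.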

2. Current State

A common structural assumption in robotic manipulation tasks is that the robot is trying to manipulate an object or set of objects in the environment. Because of this, generalization via objects—both across different objects and between similar (or identical) objects in different task instances—is an important aspect of learning to manipulate.

Commonly used object-centric manipulation skills and task model representations are often sufficient to generalize across tasks and objects, but adapting to differences in shape, properties, and appearance is still required. A wide range of robotic manipulation problems can be solved using vision-based approaches because vision serves as a rich sensory source for the system. For this reason, and because fast processing power is now available, vision-based approaches have become very popular among researchers working on robotic manipulation problems. Table 1 compiles a chronological overview of researchers' contributions, organized by the problems addressed and their outcomes.

Table 1. Chronological progression of the vision-based approach.

| Year | Addressed Problems | Contributions | Outcomes |

|---|---|---|---|

| 2016 [64][65][66] | Manipulation [64] and grasping control strategies [66] using eye-tracking and sensory-motor fusion [65]. | Object detection, path planning, and navigation [64]; Control of an endoscopic manipulator [65]; Sensory-motor fusion-based manipulation and grasping control strategy for a robotic hand–eye system [66]. | The proposed approach has improved performance in calibration, task completion, and navigation [64]; Shows better performance than endoscope manipulation by an assistant [65]; Demonstrates responsiveness and flexibility [66]. |

| 2017 [67][68][69][70][71][72][73] | Following a human user with a robotic blimp [70]; Deformable object manipulation [67]; Tracking and navigation for aerial vehicles [68][73]; Object detection without GPU support [69]; Automated object recognition for assistive robots [71]; Path-finding for a humanoid robot [72]. | Robotic rope manipulation using a vision-based learning model [67]; Robust vision-based tracking system for a UAV [68]; Real-time robotic object detection and recognition model [69]; Behavioral stability in humanoid robots and path-finding algorithms [72]; Robust real-time navigation [70] and long-range object tracking system [70][73]. | Robot successfully manipulates a rope [67]; System achieves robust tracking in real time [68] and proves to be efficient in object detection [69]; Robotic blimp can follow humans [70]; System was able to detect and recognize objects [71]; Algorithm successfully finds a path to guide the robot [72]; System arrived at an operational stage for lighting and weather conditions [73]. |

| 2018 [74][75][76][77][78][79][80][81] | Real-time mobile robot controller [74]; Target detection for safe UAV landing [75]; Vision-based grasping [76], object sorting [79], and dynamic manipulation [77]; Multi-task learning [78]; Learn complex skills from raw sensory inputs [80]; Autonomous landing of a quadrotor on moving targets [81]. | Sensor-independent controller for real-time mobile robots [74]; Detection and landing system for drones [75]; GDRL-based grasping benchmark [76]; Effective robotic framework for extensible RL [77]; Complete controller for generating robot arm trajectories [78]; Successfully inaugurate a camera-robot system [79]; Successful framework to learn a deep dynamics model on images [80]; Autonomous NN-based landing controller of UAVs on moving targets in search and secure applications [81]. | The mobile robot reaches its goal [74]; The system finds targets and lands safely [75]; System grasps better than other algorithms [76]; Real-world reinforcement learning can handle large datasets and models [77]; Method is a versatile manipulator that can accurately correct errors [78]; Placement of objects by the robot gripper [79]; Generalization to a wide range of tasks [80]; Successful autonomous quadrotor landing on fixed and moving platforms [81]. |

| 2019 [82][83][84][85][86][87][88][89] | Nonlinear approximation for mobile robots [82]; Control of cable-driven robots [83]; Leader–follower formation control [84]; Motion control for a free-floating robot [85]; Control of soft robots [86]; Approach an object when obstacles are present [87]; Needle-based percutaneous procedures using robotic technologies [88]; Natural interaction control of surgical robots [89]. | Effective recurrent neural network-based controller for robots [82]; Robust method for analyzing the stability of the cable-driven robots [83]; Effective formation control for a multi-agent system [84]; Efficient vision-based system for a free-floating robot [85]; Stable framework for soft robots [86]; Useful system to increase the autonomy of people with upper-body disabilities [87]; Accurate system to identify the needle position and orientation [88]; Smooth model to use eye movements to control a robot [89]. | System outperforms existing ones [82]; Vision-based control is a good alternative to model-based control [83]; Control protocol completes formation tasks with visibility constraints [84]; Method eliminates false targets and improves positioning precision [85]; System maintained an acceptable accuracy and stability [86]; A person successfully controlled the robotic arm using the system [87]; Framework shows the proposed robotic hardware’s efficiency [88]; Movement was feasible and convenient [89]. |

| 2020 [90][91][92][93][94][95] | Grasping under occlusion [90]; Recognition and manipulation of objects [91]; Controllers for decentralized robot swarms [92]; Robot manipulation via human demonstration [93]; Robot manipulator using Iris tracking [94]; Object tracking of visual servoing [95]. | Robust grasping method for a robotic system [90]; Effective stereo algorithm for manipulation of objects [91]; Successful framework to control decentralized robot swarms [92]; Generalized framework for activity recognition from human demonstrations [93]; Real-time iris tracking method for the ophthalmic robotic system [94]; Successful method for conventional template matching [95]. | Method’s effectiveness validated through experiments [90]; R-CNN method is very stable [91]; Architecture shows promising performance for large-sized swarms [92]; Proposed approach achieves good generalized performance [93]; Tracker is suitable for the ophthalmic robotic system [94]; Control system demonstrates significant improvement to feature tracking and robot motion [95]. |

| 2021 [96][97][98][99][100][101][102] | Human–robot handover applications [96]; Imitation learning for robotic manipulation [97]; Reaching and grasping objects using a robotic arm [98]; Integration of libraries for real-time computer vision [99]; Mobility and key challenges for various construction applications [100]; Obtaining the spatial information of operated target [101]; Training actor–critic methods in RL [102]. | Efficient human–robot hand-over control strategy [96]; Intelligent vision-guided imitation learning framework for robotic exactitude manipulation [97]; Robotic hand–eye coordination system to achieve robust reaching ability [98]; Upgraded vision of a real-time computer vision system [99]; Mobile robotic system for object manipulation using autonomous navigation and object grasping [100]; Calibration-free monocular vision-based robot manipulation [101]; Attention-driven robot manipulation for discretization of the translation space [102]. | Control shows promising and effective results [96]; Object can reach the goal positions smoothly and intelligently using the framework [97]; Dual neural-network-based controller leads to higher success rate and better control performance [98]; Successfully implemented and tested on the latest technologies [99]; UGV autonomously navigates toward a selected location [100]; Performance of the method has been successfully evaluated [101]; Algorithm achieves state-of-the-art performance on several difficult robotics tasks [102]. |

| 2022 [103][104][105][106][107][108][109] | Micro-manipulation on cells [103]; Collision-free navigation [104]; Highly nonlinear continuum manipulation [105]; Complexity of RL in a broad range of robotic manipulation tasks [106]; Uncertainty in DNN-based prediction for robotic grasping [107]; Path planning for a robotic arm in a 3D workspace [108]; Object tracking and control of a robotic arm in real time [109]. | Path planning for magnetic micro-robots [103]; Neural radiance fields (NeRFs) for navigation in 3D environment [104]; Aerial continuum manipulation systems (ACMSs) [105]; Attention-driven robotic manipulation [106]; Robotic grabbing in distorted RGB-D data [107]; Real-time path generation with lower computational cost [108]; Real-time object tracking with reduced stress load and a high rate of success [109]. | Magnetic micro-robots performed accurately in complex environment [103]; NeRFs outperform the dynamically informed INeRF baseline [104]; Simulation demonstrates good results [105]; ARM was successful on a range of RLBench tasks [106]; System performs better than end-to-end networks in difficult conditions [107]; System significantly eased the limitations of prior research [108]; System effectively locates the robotic arm in the desired location with very high accuracy [109]. |

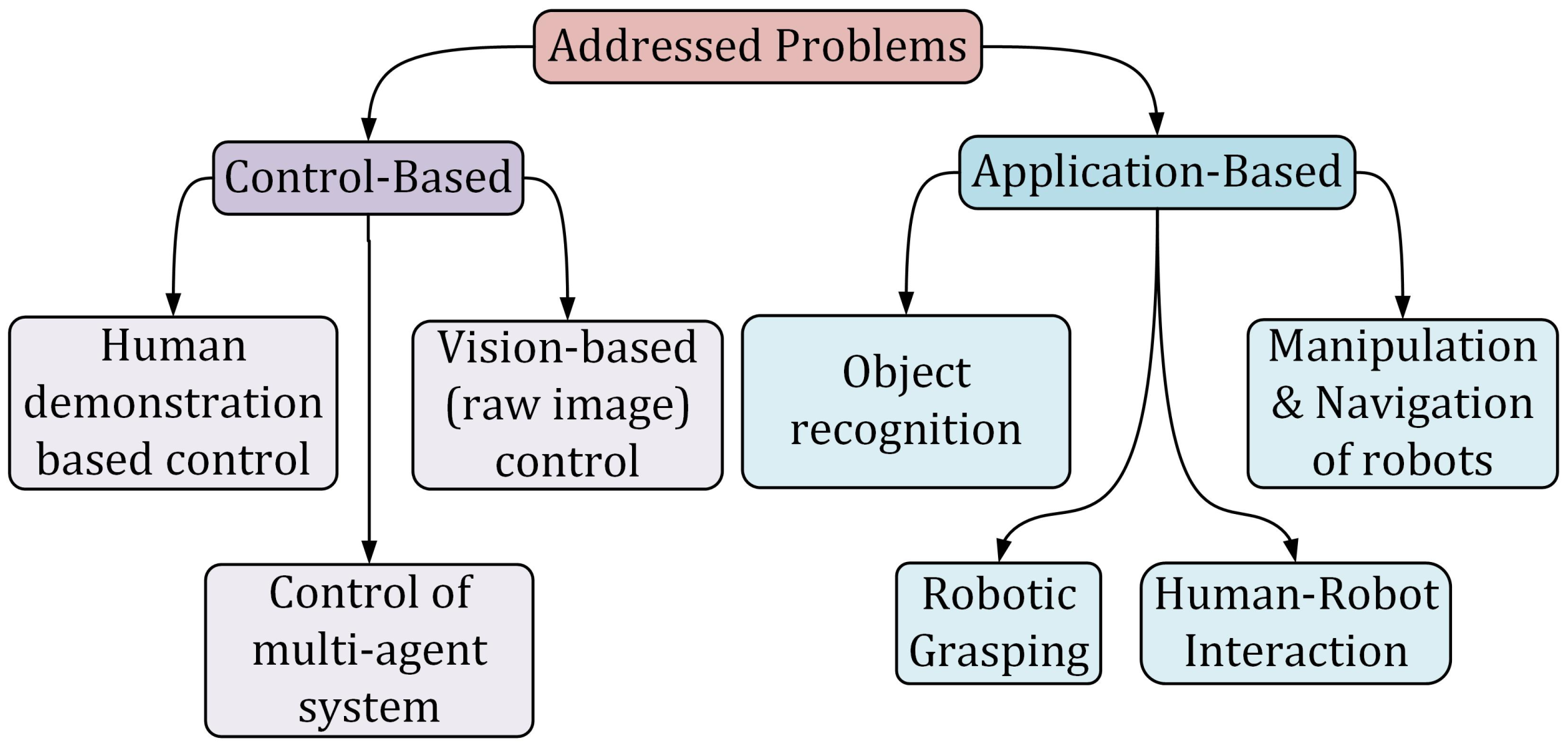

Figure 1 shows the basic categorization of the problems addressed by the researchers. The problems are primarily divided into two categories: control-based problems and application-based problems, each of which is further divided into several sub-categories. Researchers have successfully applied vision-based approaches to control-based problems such as human demonstration-based control [78][93][97], vision (raw images)-based control [74][82][83][85][86], and multi-agent system control [84][92][100][105]. The addressed control-based problems are designing a vision-based real-time mobile robot controller [74], multi-task learning from demonstration [78][102][106], nonlinear approximation in the control and monitoring of mobile robots [82], control of cable-driven robots [83], leader–follower formation control [84], motion control for a free-floating robot [85], control of soft robots [86], controllers for decentralized robot swarms [92], robot manipulation via human demonstrations [93], and imitation learning for robotic manipulation [97].

Figure 1. Categorization of problems addressed by the researchers.

Similarly, researchers have successfully applied vision-based approaches to application-based problems such as object recognition and manipulation [67][69][70][71][77][79][80][91][101], navigation of robots [68][72][73][75][99][104][108][109], robotic grasping [76][90][107], and human–robot interaction [96], and have obtained very promising results. The addressed application-based problems are the manipulation of deformable objects such as ropes [67], a vision-based tracking system for aerial vehicles [68], object detection without graphics processing unit (GPU) support for robotic applications [69], detecting and following a human user with a robotic blimp [70], object detection and recognition for autonomous assistive robots [71], path-finding for a humanoid robot [72] or robotic arms [108], navigation of an unmanned surface vehicle [73], vision-based target detection for the safe landing of UAVs on both fixed [75] and moving platforms [81], vision-based grasping for robots [76], vision-based dynamic manipulation [77], a vision-based object-sorting robot manipulator [79], learning complex robotic skills from raw sensory inputs [80], grasping under occlusion for manipulating a robotic system [90], recognition and manipulation of objects [91], human–robot handover applications [96], targeted drug delivery in biological research [103], uncertainty in DNN-based robotic grasping [107], and object tracking via a robotic arm in a real-time 3D environment [109].

3. Applications

Vision-based autonomous robot manipulation for various applications has received a lot of attention in the past decade. Vision-based manipulation occurs when a robot manipulates an item using computer vision, with feedback from the data of one or more camera sensors. Advances in computer vision and artificial intelligence have increased the complexity of the jobs that fully autonomous robots can perform. Computer vision is an active research field, and it may provide more natural, non-contact solutions in the future. Human intelligence is still required for robot decision-making and control in situations in which the environment is mainly unstructured, the objects are unfamiliar, and the motions are unknown. A human–robot interface is fundamental to teleoperation solutions because it serves as a link between the human intellect and the actual motions of the remote robot. Current teleoperation approaches for robot manipulators, which make use of vision-based tracking, allow tasks to be communicated to the robot manipulator in a natural way, often using the same hand gestures that would ordinarily be used for the task. The use of direct position control of the robot end-effector in vision-based robot manipulation allows for greater precision in manipulating robots.
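The idea of closing the control loop on camera feedback can be sketched with a proportional image-based visual servoing step for a single point feature. This is a deliberately simplified illustration, not the controller of any cited work: it assumes a camera that only translates parallel to the image plane, a known constant feature depth, normalized image coordinates, and hypothetical values for `gain`, `dt`, and the starting feature position.

```python
import math


def servo_step(feature, target, depth, gain, dt):
    """Advance a single-point visual servo loop by one time step.

    feature, target: (x, y) in normalized image coordinates.
    Returns the new feature position and the commanded camera velocity.
    """
    error_x = feature[0] - target[0]
    error_y = feature[1] - target[1]
    # For camera translation parallel to the image plane, a static point
    # moves in the image as x_dot = -v_x / depth. Commanding
    # v = gain * depth * error therefore yields error_dot = -gain * error.
    velocity = (gain * depth * error_x, gain * depth * error_y)
    new_feature = (
        feature[0] - velocity[0] / depth * dt,
        feature[1] - velocity[1] / depth * dt,
    )
    return new_feature, velocity


def run_servo(feature, target, depth=0.5, gain=1.0, dt=0.1, steps=50):
    """Iterate the servo law and return the final feature position."""
    for _ in range(steps):
        feature, _velocity = servo_step(feature, target, depth, gain, dt)
    return feature


# Hypothetical scenario: drive a tracked feature to the image center.
start = (0.2, -0.1)
final = run_servo(start, target=(0.0, 0.0))
initial_error = math.hypot(*start)
final_error = math.hypot(*final)
```

With these settings the image error shrinks by a constant factor at every step, which is the exponential convergence that a proportional law is expected to give under the stated assumptions. A full system would estimate depth, handle rotation, and track several features.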

Some well-known applications of vision-based robot manipulation are the manipulation of deformable objects, autonomous vision-based tracking systems, tracking of moving objects of interest, vision-based real-time robot control, vision-based target detection and object recognition, leader–follower formation control of multi-agent systems using a vision-based tracking scheme, and vision-based grasping of a target object for manipulation. Researchers have classified the applications of vision-based works into six categories: manipulation of the object, vision-based tracking, object detection, pathfinding/navigation, real-time remote control, and robotic arm/grasping. Recent vision-based applications are summarized in Table 2.

Table 2. Application of vision-based works.

| Study | Manipulation of Object | Vision-Based Tracking | Object Detection | Path Finding/ Navigation | Real-Time Remote Control | Robotic Arm/ Grasping |

|---|---|---|---|---|---|---|

| [67] | ✓ | |||||

| [68] | ✓ | |||||

| [69] | ✓ | |||||

| [70] | ✓ | ✓ | ||||

| [71] | ✓ | |||||

| [72] | ✓ | ✓ | ||||

| [73] | ✓ | ✓ | ||||

| [74] | ✓ | ✓ | ||||

| [75] | ✓ | ✓ | ||||

| [76] | ✓ | |||||

| [77] | ✓ | ✓ | ✓ | |||

| [78] | ✓ | ✓ | ✓ | ✓ | ||

| [79] | ✓ | ✓ | ✓ | ✓ | ||

| [80] | ✓ | ✓ | ||||

| [81] | ✓ | ✓ | ||||

| [82] | ✓ | ✓ | ||||

| [83] | ✓ | ✓ | ||||

| [84] | ✓ | ✓ | ||||

| [85] | ✓ | ✓ | ||||

| [86] | ✓ | ✓ | ✓ | |||

| [90] | ✓ | ✓ | ||||

| [91] | ✓ | ✓ | ||||

| [92] | ✓ | ✓ | ✓ | |||

| [93] | ✓ | ✓ | ||||

| [96] | ✓ | ✓ | ||||

| [97] | ✓ | ✓ | ||||

| [64] | ✓ | ✓ | ||||

| [94] | ✓ | ✓ | ||||

| [65] | ✓ | ✓ | ||||

| [87] | ✓ | ✓ | ✓ | ✓ | ||

| [95] | ✓ | ✓ | ||||

| [88] | ✓ | ✓ | ||||

| [89] | ✓ | ✓ | ✓ | ✓ | ||

| [66] | ✓ | ✓ | ||||

| [98] | ✓ | ✓ | ✓ | |||

| [99] | ✓ | |||||

| [100] | ✓ | ✓ | ||||

| [101] | ✓ | ✓ | ||||

| [102] | ✓ | |||||

| [103] | ✓ | ✓ | ||||

| [104] | ✓ | |||||

| [105] | ✓ | |||||

| [106] | ✓ | |||||

| [107] | ✓ | |||||

| [108] | ✓ | |||||

| [109] | ✓ | ✓ |

References

- Tang, Y.; Chen, M.; Wang, C.; Luo, L.; Li, J.; Lian, G.; Zou, X. Recognition and localization methods for vision-based fruit picking robots: A review. Front. Plant Sci. 2020, 11, 510.

- Zhang, H.; Zhou, X.; Lan, X.; Li, J.; Tian, Z.; Zheng, N. A real-time robotic grasping approach with oriented anchor box. IEEE Trans. Syst. Man Cybern. Syst. 2019, 51, 3014–3025.

- Bertolucci, R.; Capitanelli, A.; Maratea, M.; Mastrogiovanni, F.; Vallati, M. Automated planning encodings for the manipulation of articulated objects in 3d with gravity. In Proceedings of the International Conference of the Italian Association for Artificial Intelligence, Rende, Italy, 19–22 November 2019; Springer: Berlin/Heidelberg, Germany, 2019; pp. 135–150.

- Marino, H.; Ferrati, M.; Settimi, A.; Rosales, C.; Gabiccini, M. On the problem of moving objects with autonomous robots: A unifying high-level planning approach. IEEE Robot. Autom. Lett. 2016, 1, 469–476.

- Kong, S.; Tian, M.; Qiu, C.; Wu, Z.; Yu, J. IWSCR: An intelligent water surface cleaner robot for collecting floating garbage. IEEE Trans. Syst. Man Cybern. Syst. 2020, 51, 6358–6368.

- Miller, S.; Van Den Berg, J.; Fritz, M.; Darrell, T.; Goldberg, K.; Abbeel, P. A geometric approach to robotic laundry folding. Int. J. Robot. Res. 2012, 31, 249–267.

- Do, H.M.; Choi, T.; Park, D.; Kyung, J. Automatic cell production for cellular phone packing using two dual-arm robots. In Proceedings of the 2015 15th International Conference on Control, Automation and Systems (ICCAS), Busan, Republic of Korea, 13–16 October 2015; IEEE: Piscataway Township, NJ, USA, 2015; pp. 2083–2086.

- Kemp, C.C.; Edsinger, A. Robot manipulation of human tools: Autonomous detection and control of task relevant features. In Proceedings of the Fifth International Conference on Development and Learning, Bloomington, IN, USA, 31 May–3 June 2006; Volume 42.

- Edsinger, A. Robot Manipulation in Human Environments; CSAIL Technical Reports: Cambridge, MA, USA, 2007.

- What Are Manual Robots? Bright Hub Engineering: Albany, NY, USA, 11 December 2009.

- Van Pham, H.; Asadi, F.; Abut, N.; Kandilli, I. Hybrid spiral STC-hedge algebras model in knowledge reasonings for robot coverage path planning and its applications. Appl. Sci. 2019, 9, 1909.

- Merlet, J.P. Solving the forward kinematics of a Gough-type parallel manipulator with interval analysis. Int. J. Robot. Res. 2004, 23, 221–235.

- Kucuk, S.; Bingul, Z. Robot Kinematics: Forward and Inverse Kinematics; INTECH Open Access Publisher: London, UK, 2006.

- Seng Yee, C.; Lim, K.B. Forward kinematics solution of Stewart platform using neural networks. Neurocomputing 1997, 16, 333–349.

- Lee, B.J. Geometrical derivation of differential kinematics to calibrate model parameters of flexible manipulator. Int. J. Adv. Robot. Syst. 2013, 10, 106.

- Ye, S.; Wang, Y.; Ren, Y.; Li, D. Robot calibration using iteration and differential kinematics. J. Phys. Conf. Ser. 2006, 48, 1.

- Park, I.W.; Lee, B.J.; Cho, S.H.; Hong, Y.D.; Kim, J.H. Laser-based kinematic calibration of robot manipulator using differential kinematics. IEEE/ASME Trans. Mechatron. 2011, 17, 1059–1067.

- D’Souza, A.; Vijayakumar, S.; Schaal, S. Learning inverse kinematics. In Proceedings of the 2001 IEEE/RSJ International Conference on Intelligent Robots and Systems. Expanding the Societal Role of Robotics in the the Next Millennium (Cat. No. 01CH37180), Maui, Hawaii, USA, 29 October–3 November 2001; IEEE: Piscataway Township, NJ, USA, 2001; Volume 1, pp. 298–303.

- Grochow, K.; Martin, S.L.; Hertzmann, A.; Popović, Z. Style-based inverse kinematics. In Proceedings of the ACM SIGGRAPH 2004 Papers, Los Angeles, CA, USA, 8–12 August 2004; ACM: New York, NY, USA, 2004; pp. 522–531.

- Manocha, D.; Canny, J.F. Efficient inverse kinematics for general 6R manipulators. IEEE Trans. Robot. Autom. 1994, 10, 648–657.

- Goldenberg, A.; Benhabib, B.; Fenton, R. A complete generalized solution to the inverse kinematics of robots. IEEE J. Robot. Autom. 1985, 1, 14–20.

- Wang, L.C.; Chen, C.C. A combined optimization method for solving the inverse kinematics problems of mechanical manipulators. IEEE Trans. Robot. Autom. 1991, 7, 489–499.

- Tedrake, R. Robotic Manipulation Course Notes for MIT 6.4210. 2022. Available online: https://manipulation.csail.mit.edu/ (accessed on 2 November 2022).

- Billard, A.; Kragic, D. Trends and challenges in robot manipulation. Science 2019, 364, eaat8414.

- Harvey, I.; Husbands, P.; Cliff, D.; Thompson, A.; Jakobi, N. Evolutionary robotics: The Sussex approach. Robot. Auton. Syst. 1997, 20, 205–224.

- Belta, C.; Kumar, V. Abstraction and control for groups of robots. IEEE Trans. Robot. 2004, 20, 865–875.

- Arents, J.; Greitans, M. Smart industrial robot control trends, challenges and opportunities within manufacturing. Appl. Sci. 2022, 12, 937.

- Su, Y.H.; Young, K.Y. Effective manipulation for industrial robot manipulators based on tablet PC. J. Chin. Inst. Eng. 2018, 41, 286–296.

- Su, Y.; Liao, C.; Ko, C.; Cheng, S.; Young, K.Y. An AR-based manipulation system for industrial robots. In Proceedings of the 2017 11th Asian Control Conference (ASCC), Gold Coast, Australia, 17–20 December 2017; IEEE: Piscataway Township, NJ, USA, 2017; pp. 1282–1285.

- Inkulu, A.K.; Bahubalendruni, M.R.; Dara, A.; SankaranarayanaSamy, K. Challenges and opportunities in human robot collaboration context of Industry 4.0—A state of the art review. In Industrial Robot: The International Journal of Robotics Research and Application; Emerald Publishing: Bingley, UK, 2021.

- Balaguer, C.; Abderrahim, M. Robotics and Automation in Construction; BoD—Books on Demand: Elizabeth, NJ, USA, 2008.

- Capitanelli, A.; Maratea, M.; Mastrogiovanni, F.; Vallati, M. Automated planning techniques for robot manipulation tasks involving articulated objects. In Proceedings of the Conference of the Italian Association for Artificial Intelligence, Bari, Italy, 14–17 November 2017; Springer: Berlin/Heidelberg, Germany, 2017; pp. 483–497.

- Finžgar, M.; Podržaj, P. Machine-vision-based human-oriented mobile robots: A review. Stroj. Vestn. J. Mech. Eng. 2017, 63, 331–348.

- Zlatintsi, A.; Dometios, A.; Kardaris, N.; Rodomagoulakis, I.; Koutras, P.; Papageorgiou, X.; Maragos, P.; Tzafestas, C.S.; Vartholomeos, P.; Hauer, K.; et al. I-Support: A robotic platform of an assistive bathing robot for the elderly population. Robot. Auton. Syst. 2020, 126, 103451.

- Shahria, M.T.; Iftekhar, L.; Rahman, M.H. Learning-Based Approaches in Swarm Robotics: In A Nutshell. In Proceedings of the International Conference on Mechanical, Industrial and Energy Engineering 2020, Khulna, Bangladesh, 19–21 December 2020.

- Martinez-Martin, E.; Del Pobil, A.P. Vision for robust robot manipulation. Sensors 2019, 19, 1648.

- Budge, B. 1.1 Computer Vision in Robotics. In Deep Learning Approaches for 3D Inference from Monocular Vision; Queensland University of Technology: Brisbane, Australia, 2020; p. 4.

- Wang, X.; Wang, X.L.; Wilkes, D.M. An automated vision based on-line novel percept detection method for a mobile robot. Robot. Auton. Syst. 2012, 60, 1279–1294.

- Robot Vision-Sensor Solutions for Robotics; SICK: Minneapolis, MN, USA; Available online: https://www.sick.com/cl/en/robot-vision-sensor-solutions-for-robotics/w/robotics-robot-vision/ (accessed on 2 November 2022).

- Gao, Y.; Spiteri, C.; Pham, M.T.; Al-Milli, S. A survey on recent object detection techniques useful for monocular vision-based planetary terrain classification. Robot. Auton. Syst. 2014, 62, 151–167.

- Baerveldt, A.J. A vision system for object verification and localization based on local features. Robot. Auton. Syst. 2001, 34, 83–92.

- Garcia-Fidalgo, E.; Ortiz, A. Vision-based topological mapping and localization methods: A survey. Robot. Auton. Syst. 2015, 64, 1–20.

- Du, G.; Wang, K.; Lian, S.; Zhao, K. Vision-based robotic grasping from object localization, object pose estimation to grasp estimation for parallel grippers: A review. Artif. Intell. Rev. 2021, 54, 1677–1734.

- Gupta, M.; Kumar, S.; Behera, L.; Subramanian, V.K. A novel vision-based tracking algorithm for a human-following mobile robot. IEEE Trans. Syst. Man Cybern. Syst. 2016, 47, 1415–1427.

- Zhang, K.; Chen, J.; Yu, G.; Zhang, X.; Li, Z. Visual trajectory tracking of wheeled mobile robots with uncalibrated camera extrinsic parameters. IEEE Trans. Syst. Man Cybern. Syst. 2020, 51, 7191–7200.

- Lin, L.; Yang, Y.; Cheng, H.; Chen, X. Autonomous vision-based aerial grasping for rotorcraft unmanned aerial vehicles. Sensors 2019, 19, 3410.

- Yang, L.; Etsuko, K. Review on vision-based tracking in surgical navigation. IET Cyber-Syst. Robot. 2020, 2, 107–121.

- Kroemer, O.; Niekum, S.; Konidaris, G. A Review of Robot Learning for Manipulation: Challenges, Representations, and Algorithms. J. Mach. Learn. Res. 2021, 22, 30–31.

- Ruiz-del Solar, J.; Loncomilla, P. Applications of deep learning in robot vision. In Deep Learning in Computer Vision; CRC Press: Boca Raton, FL, USA, 2020; pp. 211–232.

- Watt, N. Deep Neural Networks for Robot Vision in Evolutionary Robotics; Nelson Mandela University: Gqeberha, South Africa, 2021.

- Jiang, Y.; Yang, C.; Na, J.; Li, G.; Li, Y.; Zhong, J. A brief review of neural networks based learning and control and their applications for robots. Complexity 2017, 2017, 1895897.

- Vemuri, A.T.; Polycarpou, M.M. Neural-network-based robust fault diagnosis in robotic systems. IEEE Trans. Neural Netw. 1997, 8, 1410–1420.

- Prabhu, S.M.; Garg, D.P. Artificial neural network based robot control: An overview. J. Intell. Robot. Syst. 1996, 15, 333–365.

- Köker, R.; Öz, C.; Çakar, T.; Ekiz, H. A study of neural network based inverse kinematics solution for a three-joint robot. Robot. Auton. Syst. 2004, 49, 227–234.

- Pierson, H.A.; Gashler, M.S. Deep learning in robotics: A review of recent research. Adv. Robot. 2017, 31, 821–835.

- Liu, H.; Fang, T.; Zhou, T.; Wang, Y.; Wang, L. Deep learning-based multimodal control interface for human–robot collaboration. Procedia CIRP 2018, 72, 3–8.

- Liu, Y.; Li, Z.; Liu, H.; Kan, Z. Skill transfer learning for autonomous robots and human–robot cooperation: A survey. Robot. Auton. Syst. 2020, 128, 103515.

- Nakashima, K.; Nagata, F.; Ochi, H.; Otsuka, A.; Ikeda, T.; Watanabe, K.; Habib, M.K. Detection of minute defects using transfer learning-based CNN models. Artif. Life Robot. 2021, 26, 35–41.

- Tanaka, K.; Yonetani, R.; Hamaya, M.; Lee, R.; Von Drigalski, F.; Ijiri, Y. Trans-am: Transfer learning by aggregating dynamics models for soft robotic assembly. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; IEEE: Piscataway Township, NJ, USA, 2021; pp. 4627–4633.

- Zarif, M.I.I.; Shahria, M.T.; Sunny, M.S.H.; Rahaman, M.M. A Vision-based Object Detection and Localization System in 3D Environment for Assistive Robots’ Manipulation. In Proceedings of the 9th International Conference of Control, Dynamic Systems, and Robotics (CDSR’22), Niagara Falls, Canada, 2–4 June 2022.

- Janabi-Sharifi, F.; Marey, M. A Kalman-filter-based method for pose estimation in visual servoing. IEEE Trans. Robot. 2010, 26, 939–947.

- Wu, B.F.; Jen, C.L. Particle-filter-based radio localization for mobile robots in the environments with low-density WLAN APs. IEEE Trans. Ind. Electron. 2014, 61, 6860–6870.

- Zhao, D.; Li, C.; Zhu, Q. Low-pass-filter-based position synchronization sliding mode control for multiple robotic manipulator systems. Proc. Inst. Mech. Eng. Part I J. Syst. Control Eng. 2011, 225, 1136–1148.

- Eid, M.A.; Giakoumidis, N.; El Saddik, A. A novel eye-gaze-controlled wheelchair system for navigating unknown environments: Case study with a person with ALS. IEEE Access 2016, 4, 558–573.

- Cao, Y.; Miura, S.; Kobayashi, Y.; Kawamura, K.; Sugano, S.; Fujie, M.G. Pupil variation applied to the eye tracking control of an endoscopic manipulator. IEEE Robot. Autom. Lett. 2016, 1, 531–538.

- Hu, Y.; Li, Z.; Li, G.; Yuan, P.; Yang, C.; Song, R. Development of sensory-motor fusion-based manipulation and grasping control for a robotic hand-eye system. IEEE Trans. Syst. Man Cybern. Syst. 2016, 47, 1169–1180.

- Nair, A.; Chen, D.; Agrawal, P.; Isola, P.; Abbeel, P.; Malik, J.; Levine, S. Combining self-supervised learning and imitation for vision-based rope manipulation. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; IEEE: Piscataway Township, NJ, USA, 2017; pp. 2146–2153.

- Cheng, H.; Lin, L.; Zheng, Z.; Guan, Y.; Liu, Z. An autonomous vision-based target tracking system for rotorcraft unmanned aerial vehicles. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; IEEE: Piscataway Township, NJ, USA, 2017; pp. 1732–1738.

- Lu, K.; An, X.; Li, J.; He, H. Efficient deep network for vision-based object detection in robotic applications. Neurocomputing 2017, 245, 31–45.

- Yao, N.; Anaya, E.; Tao, Q.; Cho, S.; Zheng, H.; Zhang, F. Monocular vision-based human following on miniature robotic blimp. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; IEEE: Piscataway Township, NJ, USA, 2017; pp. 3244–3249.

- Martinez-Martin, E.; Del Pobil, A.P. Object detection and recognition for assistive robots: Experimentation and implementation. IEEE Robot. Autom. Mag. 2017, 24, 123–138.

- Abiyev, R.H.; Arslan, M.; Gunsel, I.; Cagman, A. Robot pathfinding using vision based obstacle detection. In Proceedings of the 2017 3rd IEEE International Conference on Cybernetics (CYBCONF), Exeter, UK, 21–23 June 2017; IEEE: Piscataway Township, NJ, USA, 2017; pp. 1–6.

- Shin, B.S.; Mou, X.; Mou, W.; Wang, H. Vision-based navigation of an unmanned surface vehicle with object detection and tracking abilities. Mach. Vis. Appl. 2018, 29, 95–112.

- Dönmez, E.; Kocamaz, A.F.; Dirik, M. A vision-based real-time mobile robot controller design based on Gaussian function for indoor environment. Arab. J. Sci. Eng. 2018, 43, 7127–7142.

- Rabah, M.; Rohan, A.; Talha, M.; Nam, K.H.; Kim, S.H. Autonomous vision-based target detection and safe landing for UAV. Int. J. Control. Autom. Syst. 2018, 16, 3013–3025.

- Quillen, D.; Jang, E.; Nachum, O.; Finn, C.; Ibarz, J.; Levine, S. Deep reinforcement learning for vision-based robotic grasping: A simulated comparative evaluation of off-policy methods. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; IEEE: Piscataway Township, NJ, USA, 2018; pp. 6284–6291.

- Kalashnikov, D.; Irpan, A.; Pastor, P.; Ibarz, J.; Herzog, A.; Jang, E.; Quillen, D.; Holly, E.; Kalakrishnan, M.; Vanhoucke, V.; et al. Qt-opt: Scalable deep reinforcement learning for vision-based robotic manipulation. arXiv 2018, arXiv:1806.10293.

- Rahmatizadeh, R.; Abolghasemi, P.; Bölöni, L.; Levine, S. Vision-based multi-task manipulation for inexpensive robots using end-to-end learning from demonstration. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; IEEE: Piscataway Township, NJ, USA, 2018; pp. 3758–3765.

- Ali, M.H.; Aizat, K.; Yerkhan, K.; Zhandos, T.; Anuar, O. Vision-based robot manipulator for industrial applications. Procedia Comput. Sci. 2018, 133, 205–212.

- Ebert, F.; Finn, C.; Dasari, S.; Xie, A.; Lee, A.; Levine, S. Visual foresight: Model-based deep reinforcement learning for vision-based robotic control. arXiv 2018, arXiv:1812.00568.

- Almeshal, A.M.; Alenezi, M.R. A vision-based neural network controller for the autonomous landing of a quadrotor on moving targets. Robotics 2018, 7, 71.

- Fang, W.; Chao, F.; Yang, L.; Lin, C.M.; Shang, C.; Zhou, C.; Shen, Q. A recurrent emotional CMAC neural network controller for vision-based mobile robots. Neurocomputing 2019, 334, 227–238.

- Zake, Z.; Chaumette, F.; Pedemonte, N.; Caro, S. Vision-based control and stability analysis of a cable-driven parallel robot. IEEE Robot. Autom. Lett. 2019, 4, 1029–1036.

- Liu, X.; Ge, S.S.; Goh, C.H. Vision-based leader–follower formation control of multiagents with visibility constraints. IEEE Trans. Control Syst. Technol. 2018, 27, 1326–1333.

- Shangguan, Z.; Wang, L.; Zhang, J.; Dong, W. Vision-based object recognition and precise localization for space body control. Int. J. Aerosp. Eng. 2019, 2019, 7050915.

- Fang, G.; Wang, X.; Wang, K.; Lee, K.H.; Ho, J.D.; Fu, H.C.; Fu, D.K.C.; Kwok, K.W. Vision-based online learning kinematic control for soft robots using local Gaussian process regression. IEEE Robot. Autom. Lett. 2019, 4, 1194–1201.

- Cio, Y.S.L.K.; Raison, M.; Ménard, C.L.; Achiche, S. Proof of concept of an assistive robotic arm control using artificial stereovision and eye-tracking. IEEE Trans. Neural Syst. Rehabil. Eng. 2019, 27, 2344–2352.

- Guo, J.; Liu, Y.; Qiu, Q.; Huang, J.; Liu, C.; Cao, Z.; Chen, Y. A novel robotic guidance system with eye gaze tracking control for needle based interventions. IEEE Trans. Cogn. Dev. Syst. 2019, 13, 178–188.

- Li, P.; Hou, X.; Duan, X.; Yip, H.; Song, G.; Liu, Y. Appearance-based gaze estimator for natural interaction control of surgical robots. IEEE Access 2019, 7, 25095–25110.

- Yu, Y.; Cao, Z.; Liang, S.; Geng, W.; Yu, J. A novel vision-based grasping method under occlusion for manipulating robotic system. IEEE Sens. J. 2020, 20, 10996–11006.

- Du, Y.C.; Muslikhin, M.; Hsieh, T.H.; Wang, M.S. Stereo vision-based object recognition and manipulation by regions with convolutional neural network. Electronics 2020, 9, 210.

- Hu, T.K.; Gama, F.; Chen, T.; Wang, Z.; Ribeiro, A.; Sadler, B.M. VGAI: End-to-end learning of vision-based decentralized controllers for robot swarms. In Proceedings of the ICASSP 2021—2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; IEEE: Piscataway Township, NJ, USA, 2021; pp. 4900–4904.

- Jia, Z.; Lin, M.; Chen, Z.; Jian, S. Vision-based robot manipulation learning via human demonstrations. arXiv 2020, arXiv:2003.00385.

- Qiu, H.; Li, Z.; Yang, Y.; Xin, C.; Bian, G.B. Real-Time Iris Tracking Using Deep Regression Networks for Robotic Ophthalmic Surgery. IEEE Access 2020, 8, 50648–50658.

- Wang, X.; Fang, G.; Wang, K.; Xie, X.; Lee, K.H.; Ho, J.D.; Tang, W.L.; Lam, J.; Kwok, K.W. Eye-in-hand visual servoing enhanced with sparse strain measurement for soft continuum robots. IEEE Robot. Autom. Lett. 2020, 5, 2161–2168.

- Melchiorre, M.; Scimmi, L.S.; Mauro, S.; Pastorelli, S.P. Vision-based control architecture for human–robot hand-over applications. Asian J. Control 2021, 23, 105–117.

- Li, Y.; Qin, F.; Du, S.; Xu, D.; Zhang, J. Vision-Based Imitation Learning of Needle Reaching Skill for Robotic Precision Manipulation. J. Intell. Robot. Syst. 2021, 101, 1–13.

- Fang, W.; Chao, F.; Lin, C.M.; Zhou, D.; Yang, L.; Chang, X.; Shen, Q.; Shang, C. Visual-Guided Robotic Object Grasping Using Dual Neural Network Controllers. IEEE Trans. Ind. Inform. 2020, 17, 2282–2291.

- Roland, C.; Choi, D.; Kim, M.; Jang, J. Implementation of Enhanced Vision for an Autonomous Map-based Robot Navigation. In Proceedings of the Korean Institute of Information and Communication Sciences Conference, Yeosu, Republic of Korea, 3 October 2021; The Korea Institute of Information and Communication Engineering: Seoul, Republic of Korea, 2021; pp. 41–43.

- Asadi, K.; Haritsa, V.R.; Han, K.; Ore, J.P. Automated object manipulation using vision-based mobile robotic system for construction applications. J. Comput. Civ. Eng. 2021, 35, 04020058.

- Luo, Y.; Dong, K.; Zhao, L.; Sun, Z.; Cheng, E.; Kan, H.; Zhou, C.; Song, B. Calibration-free monocular vision-based robot manipulations with occlusion awareness. IEEE Access 2021, 9, 85265–85276.

- James, S.; Wada, K.; Laidlow, T.; Davison, A.J. Coarse-to-Fine Q-attention: Efficient Learning for Visual Robotic Manipulation via Discretisation. arXiv 2021, arXiv:2106.12534.

- Tang, X.; Li, Y.; Liu, X.; Liu, D.; Chen, Z.; Arai, T. Vision-Based Automated Control of Magnetic Microrobots. Micromachines 2022, 13, 337.

- Adamkiewicz, M.; Chen, T.; Caccavale, A.; Gardner, R.; Culbertson, P.; Bohg, J.; Schwager, M. Vision-only robot navigation in a neural radiance world. IEEE Robot. Autom. Lett. 2022, 7, 4606–4613.

- Samadikhoshkho, Z.; Ghorbani, S.; Janabi-Sharifi, F. Vision-based reduced-order adaptive control of aerial continuum manipulation systems. Aerosp. Sci. Technol. 2022, 121, 107322.

- James, S.; Davison, A.J. Q-attention: Enabling Efficient Learning for Vision-based Robotic Manipulation. IEEE Robot. Autom. Lett. 2022, 7, 1612–1619.

- Yin, R.; Wu, H.; Li, M.; Cheng, Y.; Song, Y.; Handroos, H. RGB-D-Based Robotic Grasping in Fusion Application Environments. Appl. Sci. 2022, 12, 7573.

- Abdi, A.; Ranjbar, M.H.; Park, J.H. Computer vision-based path planning for robot arms in three-dimensional workspaces using Q-learning and neural networks. Sensors 2022, 22, 1697.

- Montoya Angulo, A.; Pari Pinto, L.; Sulla Espinoza, E.; Silva Vidal, Y.; Supo Colquehuanca, E. Assisted Operation of a Robotic Arm Based on Stereo Vision for Positioning Near an Explosive Device. Robotics 2022, 11, 100.

Subjects:

Robotics

Update Date:

08 Jan 2023
