| Version | Created by | Modification | Content Size | Created at |
|---|---|---|---|---|
| 1 | Pierpaolo Dini | -- | 1897 | 2023-07-05 15:12:00 |
| 2 | Wendy Huang | Meta information modification | 1897 | 2023-07-06 05:32:01 |


An Intrusion Detection System (IDS) is an effective cybersecurity tool for detecting and identifying intrusion attacks. Traditional IDS methods rely heavily on signature-based approaches, which match traffic against known attack patterns and are therefore limited in their ability to detect novel and sophisticated attacks. To overcome these limitations, researchers and practitioners have begun integrating machine learning techniques into IDS design. Machine learning (ML) has demonstrated remarkable success in various domains, including natural language processing, computer vision, and pattern recognition. Leveraging ML algorithms in network cybersecurity offers promising opportunities to improve the accuracy and efficiency of intrusion detection systems.

1. Introduction

With the rapid growth of networking technologies and the increasing number of cyber threats, ensuring effective cybersecurity has become a paramount concern. One crucial aspect of cybersecurity is the detection and prevention of unauthorized access and malicious activity within computer networks. Intrusion Detection Systems (IDS) play a vital role in monitoring network traffic and identifying potential security breaches. ML-based IDS models can learn from large volumes of network data, detect anomalous patterns, and adapt to evolving attack strategies [1][2][3]. This approach has the potential to improve the overall security posture by reducing false positives and detecting previously unknown attacks.

The design of an IDS that exploits machine learning for network cybersecurity involves several key components. First, a robust and comprehensive dataset is required for training and evaluating the ML models. Such a dataset should cover a wide range of network traffic patterns, including both normal and malicious activity, to enable effective learning. Second, suitable feature selection and extraction techniques are needed to capture the relevant information in the network data [4]. These features serve as input to the ML algorithms, enabling them to distinguish normal traffic from potential intrusions.

Third, the choice of ML algorithm plays a vital role in IDS design. Various algorithms, such as support vector machines, random forests, and deep learning architectures, have been investigated for intrusion detection. Each has its strengths and weaknesses, and the selection depends on factors such as the complexity of the problem, the availability of data, and the desired trade-off between detection accuracy and computational efficiency.
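As a concrete illustration of the feature-extraction step described above, the following sketch aggregates raw packet records into per-flow statistics of the kind commonly fed to ML-based IDS models. It is a minimal example under stated assumptions: the `Packet` record, the 5-tuple flow key, and the chosen features (packet count, byte count, mean packet size, duration, packet rate) are hypothetical illustrations, not the feature set used in the cited works.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    # Hypothetical packet record; a real IDS would obtain these from a capture or flow exporter.
    src: str
    dst: str
    sport: int
    dport: int
    proto: str
    ts: float  # arrival time in seconds
    size: int  # packet length in bytes


def extract_flow_features(packets):
    """Group packets by 5-tuple and compute a few per-flow statistical features."""
    flows = defaultdict(list)
    for p in packets:
        flows[(p.src, p.dst, p.sport, p.dport, p.proto)].append(p)

    features = {}
    for key, pkts in flows.items():
        times = [p.ts for p in pkts]
        sizes = [p.size for p in pkts]
        duration = max(times) - min(times)
        # Treat single-packet (zero-duration) flows as lasting 1 s so the rate stays finite.
        rate_window = duration if duration > 0 else 1.0
        features[key] = {
            "packet_count": len(pkts),
            "total_bytes": sum(sizes),
            "mean_packet_size": sum(sizes) / len(sizes),
            "duration": duration,
            "packet_rate": len(pkts) / rate_window,
        }
    return features


# Usage with made-up packets: one three-packet web flow and one single-packet SSH flow.
packets = [
    Packet("10.0.0.5", "10.0.0.1", 40000, 80, "tcp", 0.0, 60),
    Packet("10.0.0.5", "10.0.0.1", 40000, 80, "tcp", 0.5, 1500),
    Packet("10.0.0.5", "10.0.0.1", 40000, 80, "tcp", 1.0, 540),
    Packet("10.0.0.9", "10.0.0.1", 51000, 22, "tcp", 0.2, 60),
]
feats = extract_flow_features(packets)
web = feats[("10.0.0.5", "10.0.0.1", 40000, 80, "tcp")]
print(web)
```

Each resulting dictionary is one row of a feature matrix; feature selection would then keep only the columns that best separate normal from malicious flows.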

2. Motivations

3. Advantages of Machine Learning in Network Security

- Threat detection: Machine learning can be used to build predictive models that identify suspicious behavior or cyber attacks in communication networks. These models can analyze large amounts of data from various sources, such as network logs, packet traffic, and user behavior, often in real time, in order to identify anomalies and patterns associated with malicious activity.

- Automation of attack responses: Automation is a key aspect of communication network security. Machine learning algorithms can help automate the response to an attack, allowing the system to react quickly and effectively to threats. For example, a machine learning system can be trained to recognize certain types of attacks and automatically trigger appropriate countermeasures, such as isolating compromised devices or changing security rules.

- Detection of new types of attacks: As cyber threats evolve, new types of attacks constantly emerge. The traditional signature-based approach may not be enough to detect these threats, because no signature exists for an attack that has not yet been observed. Machine learning algorithms can help recognize anomalous patterns or behavior that could indicate the emergence of new types of attacks, even in the absence of specific signatures.

- Reduction of false positives: Traditional security systems often generate large numbers of false positives, that is, they wrongly report normal activity as an attack. This wastes time and valuable resources on irrelevant alerts. Machine learning models can help reduce false positives, increasing the efficiency of security operations and enabling more accurate identification of real threats.

- Adaptation and continuous learning: Machine learning models can be retrained or updated, in some cases continuously, to address new threats and changing network conditions. With continuous learning, models can improve over time, gaining greater knowledge of threats and their variants.
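The threat-detection, new-attack, and continuous-learning points above can be illustrated with a very simple anomaly detector. The sketch below is a swapped-in statistical baseline rather than any specific model from the literature: it learns the mean and variance of each traffic feature from samples assumed to be normal, updates them incrementally with Welford's online algorithm, and flags samples whose z-score exceeds a threshold. The class name, the feature choice, and the threshold of 3.0 are all hypothetical.

```python
import math


class OnlineAnomalyDetector:
    """Per-feature Gaussian baseline of normal traffic, updated online with Welford's algorithm.

    A sample is flagged when its largest absolute z-score exceeds `threshold`.
    """

    def __init__(self, n_features, threshold=3.0):
        self.n = 0
        self.mean = [0.0] * n_features
        self.m2 = [0.0] * n_features  # running sum of squared deviations from the mean
        self.threshold = threshold

    def update(self, x):
        """Fold one sample that is believed to be normal into the baseline."""
        self.n += 1
        for i, v in enumerate(x):
            delta = v - self.mean[i]
            self.mean[i] += delta / self.n
            self.m2[i] += delta * (v - self.mean[i])

    def score(self, x):
        """Largest absolute z-score of x across all features."""
        if self.n < 2:
            raise ValueError("need at least two baseline samples")
        worst = 0.0
        for i, v in enumerate(x):
            std = math.sqrt(self.m2[i] / (self.n - 1))  # sample standard deviation
            if std > 0:
                z = abs(v - self.mean[i]) / std
            else:
                z = 0.0 if v == self.mean[i] else math.inf
            worst = max(worst, z)
        return worst

    def is_anomalous(self, x):
        return self.score(x) > self.threshold


# Hypothetical features per time window: (packets per second, mean packet size in bytes).
detector = OnlineAnomalyDetector(n_features=2)
for sample in [(100, 500), (110, 520), (95, 480), (105, 510), (90, 490), (100, 500)]:
    detector.update(sample)

print(detector.is_anomalous((102, 505)))  # False: close to the learned baseline
print(detector.is_anomalous((5000, 64)))  # True: e.g., a flood of small packets
```

No attack signature is involved: the flood is flagged only because it deviates from the learned baseline. Updating the baseline solely with traffic believed to be benign is a design assumption; feeding it unvetted traffic would let an attacker gradually shift what counts as normal.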
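Automated response, the second point above, can be sketched as a mapping from the IDS model's output to a countermeasure. In this hypothetical example the playbook entries, the action names, and the 0.9 confidence threshold are assumptions for illustration; a real system would call the APIs of its firewall or network controller instead of returning strings.

```python
from dataclasses import dataclass

# Hypothetical playbook: predicted attack class -> countermeasure name.
# A real deployment would invoke firewall, SDN, or endpoint APIs; here actions are plain strings.
RESPONSE_PLAYBOOK = {
    "ddos": "rate_limit_source",
    "port_scan": "block_source_ip",
    "botnet": "isolate_host",
}


@dataclass
class Detection:
    host: str
    label: str         # class predicted by the IDS model
    confidence: float  # model score in [0, 1]


def plan_response(det, min_confidence=0.9):
    """Choose an automatic countermeasure, or escalate to an analyst when unsure."""
    if det.label == "benign":
        return ("none", det.host)
    if det.confidence < min_confidence or det.label not in RESPONSE_PLAYBOOK:
        # Acting on a false positive could cut off legitimate users, so defer to a human.
        return ("alert_analyst", det.host)
    return (RESPONSE_PLAYBOOK[det.label], det.host)


print(plan_response(Detection("10.0.0.7", "botnet", 0.97)))    # ('isolate_host', '10.0.0.7')
print(plan_response(Detection("10.0.0.8", "port_scan", 0.60)))  # ('alert_analyst', '10.0.0.8')
```

Gating automatic actions on model confidence is one way to limit the damage a false positive can cause, since isolating a host by mistake disrupts legitimate traffic.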

-

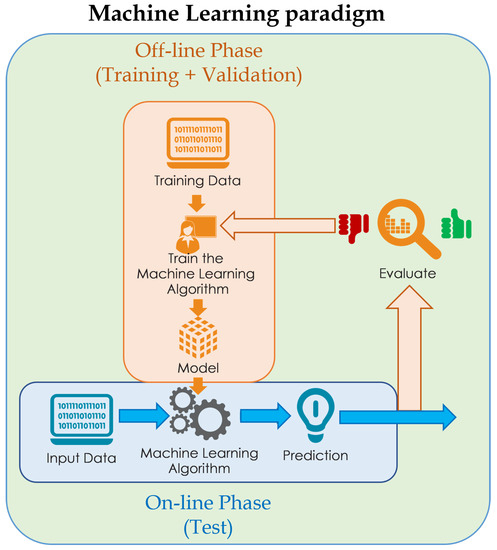

Data Collection: The initial phase involves the collection of training data. This data consists of labeled examples, i.e., pairs of matching inputs and outputs. For example, if researchers are trying to build a model to recognize images of cats, the data will contain images of cats labeled “cat” and different images labeled “not cat”.

-

Data preparation: This phase involves cleaning, normalizing, and transforming the training data to make it suitable for processing by the machine learning model. This can include eliminating missing data, handling categorical characteristics, and normalizing numeric values.

-

Model selection and training: In this phase, you select the appropriate machine learning model for the problem at hand. The model is then trained on the training data, which consists of making the model learn the patterns and relationships present in the data. During training, the model is iteratively adjusted to minimize the error between its predictions and the corresponding output labels in the training data.

-

Model Evaluation: After training, the model is evaluated using separate test data, which was not used during the training. This allows you to evaluate the effectiveness of the model in generalizing patterns to new data. Several metrics, such as accuracy, precision, and area under the ROC curve, are used to evaluate model performance.

-

Model Usage: Once the model has been trained and evaluated, it can be used to make predictions on new input data. The model applies the relationships learned during training to make predictions about new input instances.
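The five phases listed above can be traced end to end in a small, self-contained sketch. It assumes synthetic, hypothetical traffic features (packet rate and mean packet size) rather than a real IDS dataset, and it uses logistic regression trained by batch gradient descent only because it fits in a few lines; it is not one of the support vector machine, random forest, or deep learning models mentioned in the introduction. The learning rate, epoch count, and 150/50 train/test split are arbitrary choices.

```python
import math
import random


def make_dataset(n, seed=0):
    """Data collection (simulated): labeled (features, label) pairs, label 1 = attack.

    Features are hypothetical: (packets per second, mean packet size in bytes).
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        if rng.random() < 0.5:
            data.append(((rng.gauss(100, 10), rng.gauss(500, 50)), 0))   # normal traffic
        else:
            data.append(((rng.gauss(1000, 100), rng.gauss(80, 10)), 1))  # flood-like attack
    return data


def fit_minmax(rows):
    """Data preparation: learn per-feature (min, max) from the training rows only."""
    return [(min(col), max(col)) for col in zip(*rows)]


def apply_minmax(row, bounds):
    return [(v - lo) / (hi - lo) if hi > lo else 0.0 for v, (lo, hi) in zip(row, bounds)]


def sigmoid(z):
    # Numerically stable logistic function (avoids overflow in math.exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(X, y, lr=0.5, epochs=500):
    """Training: batch gradient descent on the logistic (cross-entropy) loss."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


data = make_dataset(200, seed=42)
train, test = data[:150], data[150:]  # test rows are never seen during training
bounds = fit_minmax([x for x, _ in train])
w, b = train_logreg([apply_minmax(x, bounds) for x, _ in train], [y for _, y in train])


def predict(x):
    """Usage: classify a new, unseen flow with the trained model."""
    z = sum(wj * xj for wj, xj in zip(w, apply_minmax(x, bounds))) + b
    return 1 if sigmoid(z) >= 0.5 else 0


accuracy = sum(predict(x) == y for x, y in test) / len(test)  # evaluation on held-out data
print(f"test accuracy: {accuracy:.2f}")
print(predict((950.0, 90.0)))  # a flood-like flow
```

Fitting the min-max bounds on the training split alone mirrors the requirement that the test data play no part in training; computing them on the full dataset would leak information from the test set into the preparation step.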
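The evaluation metrics mentioned in the list can be computed directly from a model's test-set outputs. The sketch below uses eight made-up labels and scores; the false-positive rate is included because it quantifies the false-positive problem discussed earlier, and the ROC AUC is computed through its probabilistic interpretation (equivalent to the normalized Mann-Whitney U statistic) rather than by tracing the curve. Function names are hypothetical.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives/negatives, treating label 1 (attack) as positive."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn


def classification_metrics(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


def roc_auc(y_true, scores):
    """Area under the ROC curve, computed as the probability that a randomly chosen
    attack receives a higher score than a randomly chosen normal sample (ties count 1/2)."""
    pos = [s for s, t in zip(scores, y_true) if t == 1]
    neg = [s for s, t in zip(scores, y_true) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Made-up labels (1 = attack) and model scores for eight flows.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.6, 0.2, 0.1, 0.4, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # decision threshold 0.5

print(classification_metrics(y_true, y_pred))
print(round(roc_auc(y_true, scores), 3))  # 0.867
```

Here one normal flow scores above the threshold, giving a false-positive rate of 0.2; raising the threshold would lower that rate at the cost of missing more attacks, which is the trade-off the ROC curve summarizes. The pairwise AUC loop is quadratic in the number of samples, which is fine for illustration; a sort-based computation scales better on large test sets.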

References

- Musa, U.S.; Chhabra, M.; Ali, A.; Kaur, M. Intrusion Detection System using Machine Learning Techniques: A Review. In Proceedings of the 2020 International Conference on Smart Electronics and Communication (ICOSEC), Trichy, India, 10–12 September 2020; pp. 149–155.

- Aljabri, M.; Altamimi, H.S.; Albelali, S.A.; Maimunah, A.H.; Alhuraib, H.T.; Alotaibi, N.K.; Alahmadi, A.A.; Alhaidari, F.; Mohammad, R.M.A.; Salah, K. Detecting malicious URLs using machine learning techniques: Review and research directions. IEEE Access 2022, 10, 121395–121417.

- Okey, O.D.; Maidin, S.S.; Adasme, P.; Lopes Rosa, R.; Saadi, M.; Carrillo Melgarejo, D.; Zegarra Rodríguez, D. BoostedEnML: Efficient technique for detecting cyberattacks in IoT systems using boosted ensemble machine learning. Sensors 2022, 22, 7409.

- Htun, H.H.; Biehl, M.; Petkov, N. Survey of feature selection and extraction techniques for stock market prediction. Financ. Innov. 2023, 9, 26.

- Bhuyan, M.H.; Bhattacharyya, D.K.; Kalita, J.K. Network Traffic Anomaly Detection and Prevention: Concepts, Techniques, and Tools; Springer: Berlin/Heidelberg, Germany, 2017.

- Liu, J.; Dong, Y.; Zha, L.; Tian, E.; Xie, X. Event-based security tracking control for networked control systems against stochastic cyber-attacks. Inf. Sci. 2022, 612, 306–321.

- Zha, L.; Liao, R.; Liu, J.; Xie, X.; Tian, E.; Cao, J. Dynamic event-triggered output feedback control for networked systems subject to multiple cyber attacks. IEEE Trans. Cybern. 2021, 52, 13800–13808.

- Qu, F.; Tian, E.; Zhao, X. Chance-Constrained H-infinity State Estimation for Recursive Neural Networks Under Deception Attacks and Energy Constraints: The Finite-Horizon Case. IEEE Trans. Neural Netw. Learn. Syst. 2022.

- Chen, H.; Jiang, B.; Ding, S.X.; Huang, B. Data-driven fault diagnosis for traction systems in high-speed trains: A survey, challenges, and perspectives. IEEE Trans. Intell. Transp. Syst. 2020, 23, 1700–1716.

- Elhanashi, A.; Lowe Sr, D.; Saponara, S.; Moshfeghi, Y. Deep learning techniques to identify and classify COVID-19 abnormalities on chest X-ray images. In Proceedings of the Real-Time Image Processing and Deep Learning 2022; SPIE: Bellingham, WA, USA, 2022; Volume 12102, pp. 15–24.

- Zheng, Q.; Zhao, P.; Wang, H.; Elhanashi, A.; Saponara, S. Fine-grained modulation classification using multi-scale radio transformer with dual-channel representation. IEEE Commun. Lett. 2022, 26, 1298–1302.

- Elhanashi, A.; Gasmi, K.; Begni, A.; Dini, P.; Zheng, Q.; Saponara, S. Machine Learning Techniques for Anomaly-Based Detection System on CSE-CIC-IDS2018 Dataset. In Applications in Electronics Pervading Industry, Environment and Society: APPLEPIES 2022; Springer: Berlin/Heidelberg, Germany, 2023; pp. 131–140.