| Version | Summary | Created by | Modification | Content Size | Created at | Operation |
|---|---|---|---|---|---|---|
| 1 | | Beatrix Zheng | -- | 9318 | 2022-11-07 01:36:07 | |
| 2 | | Beatrix Zheng | Meta information modification | 9318 | 2022-11-10 02:20:17 | |


Hockey stick graphs present the global or hemispheric mean temperature record of the past 500 to 2000 years as shown by quantitative climate reconstructions based on climate proxy records. These reconstructions have consistently shown a slow long-term cooling trend changing into relatively rapid warming in the 20th century, with the instrumental temperature record by 2000 exceeding earlier temperatures. The term "hockey stick graph" was popularized by the climatologist Jerry Mahlman to describe the pattern shown by the Mann, Bradley & Hughes 1999 (MBH99) reconstruction, envisaging a graph that is relatively flat with a downward trend to 1900 as forming an ice hockey stick's "shaft", followed by a sharp, steady increase corresponding to the "blade" portion. The reconstructions have featured in Intergovernmental Panel on Climate Change (IPCC) reports as evidence of global warming. Arguments over the reconstructions have been taken up by fossil-fuel-industry-funded lobbying groups attempting to cast doubt on climate science. Paleoclimatology dates back to the 19th century, and the concept of examining varves in lake beds and tree rings to track local climatic changes was suggested in the 1930s. In the 1960s, Hubert Lamb generalised from historical documents and temperature records of central England to propose a Medieval Warm Period from around 900 to 1300, followed by the Little Ice Age. This was the basis of a "schematic diagram" featured in the IPCC First Assessment Report of 1990 alongside cautions that the medieval warming might not have been global. Methods of using proxy indicators to obtain quantitative estimates of the temperature record of past centuries were developed, and by the late 1990s a number of competing teams of climatologists had found indications that recent warming was exceptional. Bradley & Jones 1993 introduced the "Composite Plus Scaling" (CPS) method which, as of 2009, was still being used by most large-scale reconstructions.
Their study was featured in the IPCC Second Assessment Report of 1995. In 1998 Michael E. Mann, Raymond S. Bradley and Malcolm K. Hughes developed new statistical techniques to produce Mann, Bradley & Hughes 1998 (MBH98), the first eigenvector-based climate field reconstruction (CFR). This showed global patterns of annual surface temperature, and included a graph of average hemispheric temperatures back to 1400 with shading emphasising that uncertainties (to two standard error limits) were much greater in earlier centuries. Jones et al. 1998 independently produced a CPS reconstruction extending back for a thousand years, and Mann, Bradley & Hughes 1999 (MBH99) used the MBH98 methodology to extend their study back to 1000. A version of the MBH99 graph was featured prominently in the 2001 IPCC Third Assessment Report (TAR), which also drew on Jones et al. 1998 and three other reconstructions to support the conclusion that, in the Northern Hemisphere, the 1990s was likely to have been the warmest decade and 1998 the warmest year during the past 1,000 years. The graph became a focus of dispute for those opposed to the strengthening scientific consensus that late 20th century warmth was exceptional. In 2003, as lobbying over the 1997 Kyoto Protocol intensified, a paper claiming greater medieval warmth was quickly dismissed by scientists in the Soon and Baliunas controversy. Later in 2003, Stephen McIntyre and Ross McKitrick published McIntyre & McKitrick 2003b disputing the data used in the MBH98 paper. In 2004 Hans von Storch published criticism of the statistical techniques as tending to underplay variations in earlier parts of the graph, though this was disputed and he later accepted that the effect was very small. In 2005 McIntyre and McKitrick published criticisms of the principal components analysis methodology as used in MBH98 and MBH99.
Their analysis was subsequently disputed by published papers including Huybers 2005 and Wahl & Ammann 2007, which pointed to errors in the McIntyre and McKitrick methodology. Political disputes led the United States National Research Council to convene a panel of scientists; its 2006 North Report supported Mann's findings with some qualifications, agreeing that there were some statistical failings but that these had little effect on the result. More than two dozen reconstructions, using various statistical methods and combinations of proxy records, support the broad consensus shown in the original 1998 hockey-stick graph, with variations in how flat the pre-20th century "shaft" appears. The 2007 IPCC Fourth Assessment Report cited 14 reconstructions, 10 of which covered 1,000 years or longer, to support its strengthened conclusion that it was likely that Northern Hemisphere temperatures during the 20th century were the highest in at least the past 1,300 years. Further reconstructions, including Mann et al. 2008 and PAGES 2k Consortium 2013, have supported these general conclusions.

1. Origins: the First Paleoclimate Reconstructions

Paleoclimatology influenced the 19th-century physicists John Tyndall and Svante Arrhenius, who found the greenhouse gas effect of carbon dioxide (CO₂) in the atmosphere to explain how past ice ages had ended.[1] From 1919 to 1923, Alfred Wegener did pioneering work on reconstructing the climate of past eras in collaboration with Milutin Milanković,[2] publishing Die Klimate der geologischen Vorzeit ("The Climates of the Geological Past") together with Wladimir Köppen in 1924.[3] In the 1930s Guy Stewart Callendar compiled temperature records to look for changes. Wilmot H. Bradley showed that annual varves in lake beds recorded climate cycles, and A. E. Douglass found that tree rings could track past climatic changes, but these were thought to show only random variations in the local region. It was only in the 1960s that accurate use of tree rings as climate proxies for reconstructions was pioneered by Harold C. Fritts.[1]

In 1965 Hubert Lamb, a pioneer of historical climatology, generalised from temperature records of central England by using historical, botanical and archeological evidence to popularise the idea of a Medieval Warm Period from around 900 to 1300, followed by a cold epoch culminating between 1550 and 1700.[4][5] In 1972 he became the founding director of the Climatic Research Unit (CRU) in the University of East Anglia (UEA), which aimed to improve knowledge of climate history in both the recent and far distant past, monitor current changes in global climate, identify processes causing changes at different timescales, and review the possibility of advising about future trends in climate.[6] During the cold years of the 1960s, Lamb had anticipated that natural cycles were likely to lead over thousands of years to a future ice age, but after 1976 he supported the emerging view that greenhouse gas emissions caused by humanity would cause detectable global warming "by about A.D. 2000".[5]

The first quantitative reconstruction of Northern Hemisphere (NH) annual mean temperatures was published in 1979 by Brian Groveman and Helmut Landsberg. They used "a short-cut method" based on their earlier paper, which showed that 9 instrumental stations could adequately represent an extensive gridded instrumental series, and reconstructed temperatures from 1579 to 1880 on the basis of their compilation of 20 time series. These records were largely instrumental but also included some proxy records, including two tree-ring series. Their method used nested multiple regression to allow for records covering different periods, and produced measures of uncertainty. The reconstruction showed a cool period extending beyond the Maunder Minimum, and warmer temperatures in the 20th century.[7][8] Around a decade then elapsed before Gordon Jacoby and Rosanne D'Arrigo produced the next quantitative NH reconstruction, published in 1989. This was the first based entirely on non-instrumental records, and used tree rings.[7] They reconstructed Northern Hemisphere annual temperatures since 1671 on the basis of boreal North American tree ring data from 11 distinct regions. From this, they concluded that recent warming was anomalous over the 300-year period, and went as far as speculating that these results supported the hypothesis that recent warming had human causes.[9]

1.1. IPCC First Assessment Report, 1990, Supplement, 1992

Publicity over the concerns of scientists about the implications of global warming led to increasing public and political interest, and the Reagan administration, concerned in part about the political impact of scientific findings, successfully lobbied for the 1988 formation of the Intergovernmental Panel on Climate Change to produce reports subject to detailed approval by government delegates.[10] The IPCC First Assessment Report in 1990 noted evidence that the Holocene climatic optimum around 5,000-6,000 years ago had been warmer than the present (at least in summer) and that in some areas there had been exceptional warmth during "a shorter Medieval Warm Period (which may not have been global)", the "Medieval Climatic Optimum" from the "late tenth to early thirteenth centuries (about AD 950-1250)", followed by the cooler period of the Little Ice Age, which ended only in the middle to late nineteenth century. The report discussed the difficulties with proxy data, "mainly pollen remains, lake varves and ocean sediments, insect and animal remains, glacier termini", but considered that tree ring data were "not yet sufficiently easy to assess nor sufficiently integrated with indications from other data to be used in this report." A "schematic diagram" of global temperature variations over the last thousand years[11] has been traced to a graph based loosely on Lamb's 1965 paper, nominally representing central England, modified by Lamb in 1982.[4] Mike Hulme describes this schematic diagram as "Lamb's sketch on the back of an envelope", a "rather dodgy bit of hand-waving".[12]

In Bradley 1991, a working group of climatologists including Raymond S. Bradley, Malcolm K. Hughes, Jean Jouzel, Wibjörn Karlén, Jonathan Overpeck and Tom Wigley proposed a project to improve understanding of natural climatic variations over the last two thousand years so that their effect could be allowed for when evaluating human contributions to climate change. Climate proxy temperature data was needed at seasonal or annual resolution covering a wide geographical area to provide a framework for testing the part climate forcings had played in past variations, look for cycles in climate, and find if debated climatic events such as the Little Ice Age and Medieval Warm Period were global. Reconstructions were to be made of key climate systems, starting with three climatically sensitive regions: the Asian monsoon region, the El Niño–Southern Oscillation region and the Atlantic region. Areas where more data was needed were to be identified, and there was a need for improved data exchange with computer-based archiving and translation to give researchers access to worldwide paleoclimate information.[13]

The IPCC supplementary report, 1992, reviewed progress on various proxies. These included a study of 1,000 years of tree ring data from Tasmania which, like similar studies, did not allow for a possible overestimate of warming due to increased CO₂ levels having a fertilisation effect on tree growth. It noted the suggestion of Bradley et al. 1991 that instrumental records in specific areas could be combined with paleoclimate data for increased detail back to the 18th century.[14]

2. Composite Plus Scaling (CPS) Reconstructions

2.1. Bradley and Jones 1993

Archives of climate proxies were developed: in 1993 Raymond S. Bradley and Phil Jones composited historical records, tree rings and ice cores for the Northern Hemisphere from 1400 up to the 1970s to produce a decadal reconstruction.[15] Like later reconstructions, including the MBH "hockey stick" studies, the Bradley & Jones 1993 reconstruction indicated a slow cooling trend followed by an exceptional temperature rise in the 20th century.[16] Their study also used the modern instrumental temperature record to evaluate how well the regions covered by proxies represented the Northern Hemisphere average, and compared the instrumental record with the proxy reconstruction over the same period. It concluded that the "Little Ice Age" period was complex, with evidence suggesting the influence of volcanic eruptions. It showed that temperatures since the 1920s were higher than at any earlier point in the 500-year period, indicating the influence of other factors, most probably human activities increasing the levels of greenhouse gases.[17]

This paper introduced the "Composite Plus Scaling" (CPS) method which was subsequently used by most large-scale climate reconstructions of hemispheric or global average temperatures. In this method, also known as "Composite Plus Scale", selected climate proxy records were standardized before being averaged (composited), and then centred and scaled to provide a quantitative estimate of the target temperature series for the climate of the region or hemisphere over time. This method was implemented in various ways, including different selection processes for the proxy records, and averaging could be unweighted, or could be weighted in relation to an assessment of reliability or of area represented. There were also different ways of finding the scaling coefficient used to scale the proxy records to the instrumental temperature record.[18]
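The CPS steps described above (standardize each proxy, average them into a composite, then centre and scale the composite against the instrumental record over a calibration period) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. The function names, the equal default weights, the use of population standard deviations and the two scaling options (matching variances, or an ordinary least-squares slope) are all choices made for the example. They are not the exact procedure of Bradley & Jones 1993 or of any later study.

```python
from statistics import fmean, pstdev


def standardize(series, cal):
    """Convert a series to z-scores using its mean and spread over the calibration slice."""
    ref = series[cal]
    mu, sd = fmean(ref), pstdev(ref)
    return [(x - mu) / sd for x in series]


def cps(proxies, target_cal, cal, weights=None, method="variance"):
    """Composite Plus Scaling (illustrative sketch).

    proxies:    equal-length proxy series, one value per year
    target_cal: instrumental temperatures for the years selected by cal
    cal:        slice of years overlapping the instrumental record
    weights:    optional per-proxy weights (e.g. area or reliability); equal if None
    method:     "variance" matches the composite's spread to the target;
                "regression" uses the least-squares slope of target on composite
    """
    # 1. Standardize each proxy so that different units can be averaged.
    z = [standardize(p, cal) for p in proxies]
    # 2. Composite: (weighted) average of the standardized proxies, year by year.
    w = weights if weights is not None else [1.0] * len(proxies)
    total = sum(w)
    composite = [sum(wi * zi[t] for wi, zi in zip(w, z)) / total
                 for t in range(len(z[0]))]

    # 3. Find the scaling coefficient over the calibration period.
    comp_cal = composite[cal]
    c_mu, t_mu = fmean(comp_cal), fmean(target_cal)
    if method == "variance":
        scale = pstdev(target_cal) / pstdev(comp_cal)
    elif method == "regression":
        cov = fmean([(c - c_mu) * (t - t_mu) for c, t in zip(comp_cal, target_cal)])
        scale = cov / pstdev(comp_cal) ** 2
    else:
        raise ValueError(f"unknown method: {method!r}")
    # 4. Centre on the calibration mean and rescale into temperature units.
    return [t_mu + scale * (c - c_mu) for c in composite]
```

Because each proxy is standardized before averaging, records in different units can be composited. The final scaling step is the only point at which temperature units enter, which is why the choice of scaling coefficient matters.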

John A. Eddy had earlier tried to relate the rarity of sunspots during the Maunder Minimum to Lamb's estimates of past climate, but had insufficient information to produce a quantitative assessment. Bradley reexamined the problem in collaboration with solar physicists Judith Lean and Juerg Beer, using the findings of Bradley & Jones 1993. The Lean, Beer & Bradley 1995 paper concluded that the drop in solar output appeared to have caused a temperature drop of almost 0.5 °C during the Little Ice Age, and that increased solar output might explain the rise in early 20th-century temperatures. A reconstruction of Arctic temperatures over four centuries by Overpeck et al. 1997 reached similar conclusions. Both studies, however, came up against the limitations of the climate reconstructions of that time. These resolved temperature fluctuations only on a decadal basis rather than showing individual years, and they produced a single time series, so they did not show a spatial pattern of relative temperatures for different regions.[19]

2.2. IPCC Second Assessment Report

The IPCC Second Assessment Report (SAR) of 1996 featured Figure 3.20 showing the Bradley & Jones 1993 decadal summer temperature reconstruction for the Northern Hemisphere, overlaid with a 50-year smoothed curve and with a separate curve plotting instrumental thermometer data from the 1850s onwards. It stated that in this record, warming since the late 19th century was unprecedented. The section proposed that "The data from the last 1000 years are the most useful for determining the scales of natural climate variability". Recent studies, including the 1994 reconstruction by Hughes and Diaz, had questioned how widespread the Medieval Warm Period had been at any one time, so it was not possible "to conclude that global temperatures in the Medieval Warm Period were comparable to the warm decades of the late 20th century." The SAR concluded, "it appears that the 20th century has been at least as warm as any century since at least 1400 AD. In at least some areas, the recent period appears to be warmer than has been the case for a thousand or more years".[20]

Tim Barnett of the Scripps Institution of Oceanography was working towards the next IPCC assessment with Phil Jones, and in 1996 told journalist Fred Pearce "What we hope is that the current patterns of temperature change prove distinctive, quite different from the patterns of natural variability in the past".[21]

A divergence problem affecting some tree ring proxies after 1960 had been identified in Alaska by Taubes 1995 and Jacoby & d'Arrigo 1995. Tree ring specialist Keith Briffa's February 1998 study showed that this problem was more widespread at high northern latitudes, and warned that it had to be taken into account to avoid overestimating past temperatures.[22]

3. Climate Field Reconstruction (CFR) Methods; MBH 1998 and 1999

Variations on the "Composite Plus Scale" (CPS) method continued to be used to produce hemispheric or global mean temperature reconstructions. From 1998 this was complemented by Climate Field Reconstruction (CFR) methods which could show how climate patterns had developed over large spatial areas, making the reconstruction useful for investigating natural variability and long term oscillations as well as for comparisons with patterns produced by climate models. The CFR method made more use of climate information embedded in remote proxies, but was more dependent than CPS on assumptions that relationships between proxy indicators and large-scale climate patterns remained stable over time.[23]

Related rigorous statistical methods had been developed for tree ring data, with Harold C. Fritts publishing a 1991 study and a 1991 book showing the methodology, together with examples of how to produce maps showing climate developments in North America over time. These methods had been used for regional reconstructions of temperatures and of other aspects such as rainfall.[24]

As part of his PhD research, Michael E. Mann worked with seismologist Jeffrey Park on developing statistical techniques to find long-term oscillations of natural variability in the instrumental record of global surface temperatures over the last 140 years. Mann & Park 1993 showed patterns relating to the El Niño–Southern Oscillation, and Mann & Park 1994 found what was later termed the Atlantic multidecadal oscillation. They then teamed up with Raymond S. Bradley to use these techniques on the dataset from his Bradley & Jones 1993 study, with the aim of finding long-term oscillations of natural variability in global climate. The resulting reconstruction went back to 1400, and was published in November as Mann, Park & Bradley 1995. They were able to detect that the multiple proxies were varying in a coherent oscillatory way, indicating both the multidecadal pattern in the North Atlantic and a longer-term oscillation of roughly 250 years in the surrounding region. Their study did not calibrate these proxy patterns against a quantitative temperature scale, and a new statistical approach was needed to find how they related to surface temperatures in order to reconstruct past temperature patterns.[25][26]

3.1. Mann, Bradley and Hughes 1998

For his postdoctoral research Mann joined Bradley and tree ring specialist Malcolm K. Hughes to develop a new statistical approach to reconstruct underlying spatial patterns of temperature variation combining diverse datasets of proxy information covering different periods across the globe, including a rich resource of tree ring networks for some areas and sparser proxies such as lake sediments, ice cores and corals, as well as some historical records.[27]

Their global reconstruction was a major breakthrough in evaluation of past climate dynamics, and the first eigenvector-based climate field reconstruction (CFR) incorporating multiple climate proxy data sets of different types and lengths into a high-resolution global reconstruction.[28] To relate this data to measured temperatures, they used principal component analysis (PCA) to find the leading patterns, or principal components, of instrumental temperature records during the calibration period from 1902 to 1980. Their method was based on separate multiple regressions between each proxy record (or summary) and all of the leading principal components of the instrumental record. The least squares simultaneous solution of these multiple regressions used covariance between the proxy records. The results were then used to reconstruct large-scale patterns over time in the spatial field of interest (defined as the empirical orthogonal functions, or EOFs) using both local relationships of the proxies to climate and distant climate teleconnections.[18] Temperature records for almost 50 years prior to 1902 were analysed using PCA for the important step of validation calculations, which showed that the reconstructions were statistically meaningful, or skillful.[29]
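The following much-simplified NumPy sketch covers the calibration, reconstruction and validation steps just described. It makes several assumptions. The gridded instrumental data are taken to be complete for the calibration years. The proxy series are assumed to be annual and gap-free. The regressions use plain, unweighted least squares. The original method's use of covariance between proxies, its choice of how many PCs to retain and its area weighting are not reproduced. The reduction-of-error (RE) score stands in for the validation step; treat it as one common skill measure, not a statement of MBH98's exact statistics. All function names are hypothetical.

```python
import numpy as np


def field_eofs(field_cal, n_modes):
    """PCA of the instrumental field over the calibration years.

    field_cal: array (n_years, n_gridpoints) of temperatures.
    Returns the time mean, the leading PC series (n_years, n_modes) and the
    matching EOF spatial patterns (n_modes, n_gridpoints).
    """
    mean = field_cal.mean(axis=0)
    u, s, vt = np.linalg.svd(field_cal - mean, full_matrices=False)
    return mean, u[:, :n_modes] * s[:n_modes], vt[:n_modes]


def calibrate(proxies_cal, pcs_cal):
    """One multiple regression per proxy, on all of the leading PCs at once.

    Returns the proxy means and g (n_proxies, n_modes) such that, for each
    year, proxy anomalies are approximately g @ pcs.
    """
    proxy_mean = proxies_cal.mean(axis=0)
    coef, *_ = np.linalg.lstsq(pcs_cal, proxies_cal - proxy_mean, rcond=None)
    return proxy_mean, coef.T


def reconstruct(proxies, proxy_mean, g, field_mean, eofs):
    """For each year, solve all proxy equations jointly (least squares) for the
    PC amplitudes, then rebuild the spatial field from the EOFs."""
    pcs_hat, *_ = np.linalg.lstsq(g, (proxies - proxy_mean).T, rcond=None)
    return field_mean + pcs_hat.T @ eofs


def reduction_of_error(observed, reconstructed, cal_mean):
    """RE skill score: 1 is perfect; values above 0 beat the calibration mean."""
    sse = np.sum((observed - reconstructed) ** 2)
    ss_ref = np.sum((observed - cal_mean) ** 2)
    return 1.0 - sse / ss_ref
```

A positive RE over years withheld from calibration means the reconstruction does better than simply assuming the calibration-period mean. That is the sense in which such a reconstruction can be called skillful.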

A balance was required over the whole globe, but most of the proxy data came from tree rings in the Northern mid-latitudes, largely in dense proxy networks. Since using all of the large numbers of tree ring records in the analysis would have overwhelmed the sparse proxies from the polar regions and the tropics, they used principal component analysis (PCA) to produce PC summaries representing these large datasets, and then treated each summary as a proxy record in their CFR analysis. Networks represented in this way included the North American tree ring network (NOAMER) and Eurasia.[30]
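The summarising step can be illustrated with a short NumPy sketch. Each chronology in a dense network is standardized, and the network is then replaced by its few leading principal-component series, which enter the reconstruction as if they were individual proxies. The function name and the `ref` argument are illustrative assumptions. `ref` fixes the years over which each series is centred and scaled, and its default here is the whole record. The choice of that window is a methodological decision of the kind later debated over the PCA used in MBH98.

```python
import numpy as np


def network_pc_summaries(network, n_keep, ref=slice(None)):
    """Summarise a dense proxy network by its leading principal components.

    network: array (n_years, n_sites), one column per tree-ring chronology.
    n_keep:  number of PC series to retain as stand-in proxies.
    ref:     rows (years) used to centre and scale each series; whole record by default.
    Returns the leading PC series (n_years, n_keep) and the fraction of the total
    sum of squares each explains (equal to variance fractions when ref is the
    whole record).
    """
    window = network[ref]
    z = (network - window.mean(axis=0)) / window.std(axis=0)
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    return u[:, :n_keep] * s[:n_keep], explained[:n_keep]
```

A network of 40 sites thus collapses to a handful of columns. This keeps a single dense region from outweighing isolated records such as ice cores or corals when all of them are fed into the field reconstruction.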

The primary aim of CFR methods was to provide the spatially resolved reconstructions essential for coherent geophysical understanding of how parts of the climate system varied and responded to radiative forcing, so hemispheric averages were a secondary product.[31] The CFR method could also be used to reconstruct Northern Hemisphere mean temperatures, and the results closely resembled the earlier CPS reconstructions including Bradley & Jones 1993.[28] Mann describes this as the least scientifically interesting thing they could do with the rich spatial patterns, but also the aspect that got the most attention. Their original draft ended in 1980 as most reconstructions only went that far, but an anonymous peer reviewer of the paper suggested that the curve of instrumental temperature records should be shown up to the present to include the considerable warming that had taken place between 1980 and 1998.[32]

The Mann, Bradley & Hughes 1998 (MBH98) multiproxy study on "Global-scale temperature patterns and climate forcing over the past six centuries" was submitted to the journal Nature on 9 May 1997, accepted on 27 February 1998 and published on 23 April 1998. The paper announced a new statistical approach to find patterns of climate change in both time and global distribution, building on previous multiproxy reconstructions. The authors concluded that "Northern Hemisphere mean annual temperatures for three of the past eight years are warmer than any other year since (at least) AD1400", and estimated empirically that greenhouse gases had become the dominant climate forcing during the 20th century.[33] In a review in the same issue, Gabriele C. Hegerl described their method as "quite original and promising", which could help to verify model estimates of natural climate fluctuations and was "an important step towards reconstructing space–time records of historical temperature patterns".[34]

Publicity and controversy on publication of MBH98

Release of the paper on 22 April 1998 was given exceptional media coverage, including questioning as to whether it proved that human influences were responsible for global warming. Mann would only agree that it was "highly suggestive" of that inference.[35] He said that "Our conclusion was that the warming of the past few decades appears to be closely tied to emission of greenhouse gases by humans and not any of the natural factors". Most proxy data are inherently imprecise, and Mann said "We do have error bars. They are somewhat sizable as one gets farther back in time, and there is reasonable uncertainty in any given year. There is quite a bit of work to be done in reducing these uncertainties." Climatologist Tom Wigley welcomed the progress made in the study, but doubted if proxy data could ever be wholly convincing in detecting the human contribution to changing climate.[36]

Phil Jones of the UEA Climatic Research Unit told the New York Times he was doubtful about adding the 150-year thermometer record to extend the proxy reconstruction, and compared this with putting together apples and oranges; Mann et al. said they used a comparison with the thermometer record to check that recent proxy data were valid. Jones thought the study would provide important comparisons with the findings of climate modeling, which showed a "pretty reasonable" fit to proxy evidence.[36] A commentary on MBH98 by Jones was published in Science on 24 April 1998. He noted that it used almost all the available long term proxy climate series, "and if the new multivariate method of relating these series to the instrumental data is as good as the paper claims, it should be statistically reliable." He discussed some of the difficulties, and emphasised that "Each paleoclimatic discipline has to come to terms with its own limitations and must unreservedly admit to problems, warts and all."[37]

The study was disputed by contrarian Pat Michaels with the claim that all of the warming took place between 1920 and 1935, before increased human greenhouse gas emissions.[38] The George C. Marshall Institute alleged that MBH98 was deceptive in only going back to 1400, and so not covering the Medieval Warm Period which predated industrial greenhouse gas emissions.[39] The same criticisms were made by Willie Soon and Sallie Baliunas.[40]

3.2. Pollack, Huang and Shen, Jones et al. 1998

In October 1998 the borehole reconstruction published by Pollack, Huang and Shen gave independent support to the conclusion that 20th century warmth was exceptional for the past 500 years.[41]

Jones, Keith Briffa, Tim P. Barnett and Simon Tett had independently produced a "Composite Plus Scale" (CPS) reconstruction extending back for a thousand years, comparing tree ring, coral layer, and glacial proxy records, but not specifically estimating uncertainties. Jones et al. 1998 was submitted to The Holocene on 16 October 1997; their revised manuscript was accepted on 3 February and published in May 1998. As Bradley recalls, Mann's initial view was that there was too little information and too much uncertainty to go back so far, but Bradley said "Why don't we try to use the same approach we used in Nature, and see if we could push it back a bit further?" Within a few weeks, Mann responded that to his surprise, "There is a certain amount of skill. We can actually say something, although there are large uncertainties."[42][43]

3.3. Mann, Bradley and Hughes 1999

In considering the 1998 Jones et al. reconstruction, which went back a thousand years, Mann, Bradley and Hughes reviewed their own research and reexamined 24 proxy records which extended back before 1400. Mann carried out a series of statistical sensitivity tests, removing each proxy in turn to see the effect its removal had on the result. He found that certain proxies were critical to the reliability of the reconstruction, particularly one tree ring dataset collected by Gordon Jacoby and Rosanne D'Arrigo in a part of North America that Bradley's earlier research had identified as a key region.[44] This dataset only extended back to 1400. Another proxy dataset from the same region (in the International Tree-Ring Data Bank) went further back and should have given reliable proxies for earlier periods, yet validation tests only supported their reconstruction after 1400. To find out why, Mann compared the two datasets and found that they tracked each other closely from 1400 to 1800, then diverged until around 1900, when they again tracked each other. He found a likely reason in the CO₂ "fertilisation effect" affecting tree rings as identified by Graybill and Idso, with the effect ending once CO₂ levels had increased to the point where warmth again became the key factor controlling tree growth at high altitude. Mann used comparisons with other tree ring data from the region to produce a corrected version of this dataset. Their reconstruction using this corrected dataset passed the validation tests for the extended period, but they were cautious about the increased uncertainties.[45]
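The sensitivity test described above removes each proxy in turn and re-checks the result. In effect it is a leave-one-out loop around whatever reconstruction method is in use. In the sketch below, the averaging reconstruction (`mean_reconstruction`) and the error-based score (`negative_mae`) are hypothetical stand-ins, chosen only to keep the example self-contained. They are not the method or validation statistic Mann used.

```python
from statistics import fmean


def leave_one_out(proxies, reconstruct, score):
    """Drop each proxy in turn and measure how much the skill score falls.

    proxies:     dict mapping a proxy name to its series
    reconstruct: function turning such a dict into a reconstructed series
    score:       function rating a reconstruction (higher is better)
    Returns {name: baseline score minus score without that proxy}. Large
    positive values flag proxies the reconstruction depends on.
    """
    baseline = score(reconstruct(proxies))
    return {
        name: baseline - score(reconstruct({k: v for k, v in proxies.items() if k != name}))
        for name in proxies
    }


# Hypothetical stand-in for a real reconstruction method: a plain average.
def mean_reconstruction(proxies):
    series = list(proxies.values())
    return [fmean(values) for values in zip(*series)]


# Hypothetical stand-in for a validation score: minus the mean absolute error.
def negative_mae(truth):
    return lambda recon: -fmean(abs(r - t) for r, t in zip(recon, truth))
```

With a real method, a proxy whose removal makes the validation score collapse is one the reconstruction depends on. The Jacoby and D'Arrigo dataset described above played that role in MBH99.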

The Mann, Bradley and Hughes reconstruction covering 1,000 years (MBH99) was submitted in October 1998 to Geophysical Research Letters which published it in March 1999 with the cautious title Northern Hemisphere temperatures during the past millennium: inferences, uncertainties, and limitations to emphasise the increasing uncertainty involved in reconstructions of the period before 1400 when fewer proxies were available.[42][46] A University of Massachusetts Amherst news release dated 3 March 1999 announced publication in the 15 March issue of Geophysical Research Letters, "strongly suggesting that the 1990s were the warmest decade of the millennium, with 1998 the warmest year so far." Bradley was quoted as saying "Temperatures in the latter half of the 20th century were unprecedented", while Mann said "As you go back farther in time, the data becomes sketchier. One can’t quite pin things down as well, but, our results do reveal that significant changes have occurred, and temperatures in the latter 20th century have been exceptionally warm compared to the preceding 900 years. Though substantial uncertainties exist in the estimates, these are nonetheless startling revelations." While the reconstruction supported theories of a relatively warm medieval period, Hughes said "even the warmer intervals in the reconstruction pale in comparison with mid-to-late 20th-century temperatures."[47] The New York Times report had a colored version of the graph, distinguishing the instrumental record from the proxy evidence and emphasising the increasing range of possible error in earlier times, which MBH said would "preclude, as yet, any definitive conclusions" about climate before 1400.[48]

The reconstruction found significant variability around a long-term cooling trend of –0.02 °C per century, as expected from orbital forcing, interrupted in the 20th century by rapid warming which stood out from the whole period, with the 1990s "the warmest decade, and 1998 the warmest year, at moderately high levels of confidence." This was illustrated by the time series line graph Figure 2(a) which showed their reconstruction from AD 1000 to 1980 as a thin line, wavering around a thicker dark 40-year smoothed line. This curve followed a downward trend (shown as a thin dot-dashed line) from a Medieval Warm Period (about as warm as the 1950s) down to a cooler Little Ice Age before rising sharply in the 20th century. Thermometer data shown with a dotted line overlapped the reconstruction for a calibration period from 1902 to 1980, then continued sharply up to 1998. A shaded area showed uncertainties to two standard error limits, in medieval times rising almost as high as recent temperatures.[46][49][50] When Mann gave a talk about the study to the National Oceanic and Atmospheric Administration's Geophysical Fluid Dynamics Laboratory, Jerry Mahlman nicknamed the graph the "hockey stick",[42] with the slow cooling trend the "stick", and the anomalous 20th century warming the "blade".[16]
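Three quantities appear in Figure 2(a): a linear trend in °C per century, a 40-year smoothed curve, and a band of two standard errors. Each is simple to compute. The sketch below is illustrative only. The trend is an ordinary least-squares slope. The smoothing is a plain moving average, used here as a stand-in because the exact form of MBH99's smoothing filter is not given. The code also assumes per-year standard errors are already available.

```python
from statistics import fmean


def trend_per_century(years, temps):
    """Ordinary least-squares slope of temps on years, in degrees per century."""
    y_bar, t_bar = fmean(years), fmean(temps)
    sxy = sum((y - y_bar) * (t - t_bar) for y, t in zip(years, temps))
    sxx = sum((y - y_bar) ** 2 for y in years)
    return 100.0 * sxy / sxx


def moving_average(values, window):
    """Moving average over `window` points, centred (to within one point) on each year.

    Returns None where the window would run past either end of the record.
    """
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo, hi = i - half, i - half + window
        smoothed.append(fmean(values[lo:hi]) if lo >= 0 and hi <= len(values) else None)
    return smoothed


def two_se_band(values, standard_errors):
    """Lower and upper limits at two standard errors either side of each value."""
    return [(v - 2 * se, v + 2 * se) for v, se in zip(values, standard_errors)]
```

A record falling steadily by 0.0002 °C per year from 1000 to 1980 gives `trend_per_century` of about -0.02. That is the size of the long-term cooling trend quoted for MBH99.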

Critique and independent reconstructions

Briffa and Tim Osborn critically examined MBH99 in a May 1999 detailed study of the uncertainties of various proxies. They raised questions later adopted by critics of Mann's work, including the point that bristlecone pines from the Western U.S. could have been affected by pollution such as rising CO₂ levels as well as by temperature. The temperature curve was supported by other studies, but most of these shared the limited well-dated proxy evidence then available, and so few were truly independent. The uncertainties in earlier times rose as high as those in the reconstruction at 1980, but did not reach the temperatures of later thermometer data. They concluded that although the 20th century was almost certainly the warmest of the millennium, the amount of anthropogenic warming remained uncertain.[51][52]

With work progressing on the next IPCC report, Chris Folland told researchers on 22 September 1999 that a figure showing temperature changes over the millennium "is a clear favourite for the policy makers' summary". Two graphs competed: Jones et al. (1998) and MBH99. In November, Jones produced a simplified figure for the cover of the short annual World Meteorological Organization report, which lacks the status of the more important IPCC reports. Two fifty-year smoothed curves going back to 1000 were shown, from MBH99 and Jones et al. (1998), with a third curve to 1400 from Briffa's new paper, combined with modern temperature data bringing the lines up to 1999; in 2010 the lack of clarity about this change of data was criticised as misleading.[53]

Briffa's paper as published in the January 2000 issue of Quaternary Science Reviews showed the unusual warmth of the last century, but cautioned that the impact of human activities on tree growth made it subtly difficult to isolate a clear climate message.[54] In February 2000 Thomas J. Crowley and Thomas S. Lowery's reconstruction incorporated data not used previously. It reached the conclusion that peak Medieval warmth only occurred during two or three short periods of 20 to 30 years, with temperatures around 1950s levels, refuting claims that 20th century warming was not unusual.[55] An analysis by Crowley published in July 2000 compared simulations from an energy balance climate model with reconstructed mean annual temperatures from MBH99 and Crowley & Lowery (2000). While earlier reconstructed temperature variations were consistent with volcanic and solar irradiation changes plus residual variability, very large 20th-century warming closely agreed with the predicted effects of greenhouse gas emissions.[56]

Reviewing twenty years of progress in palaeoclimatology, Jones noted the reconstructions by Jones et al. (1998), MBH99, Briffa (2000) and Crowley & Lowery (2000) showing good agreement using different methods, but cautioned that use of many of the same proxy series meant that they were not independent, and more work was needed.[57]

3.4. IPCC Third Assessment Report, 2001

The Working Group 1 (WG1) part of the IPCC Third Assessment Report (TAR) included a subsection on multi-proxy synthesis of recent temperature change. This noted five earlier large-scale palaeoclimate reconstructions, then discussed the Mann, Bradley & Hughes 1998 reconstruction going back to 1400 AD and its extension back to 1000 AD in Mann, Bradley & Hughes 1999 (MBH99), while emphasising the substantial uncertainties in the earlier period. The MBH99 conclusion that the 1990s were likely to have been the warmest decade, and 1998 the warmest year, of the past millennium in the Northern Hemisphere, with "likely" defined as "66-90% chance", was supported by reconstructions by Crowley & Lowery 2000 and by Jones et al. 1998 using different data and methods. The Pollack, Huang & Shen 1998 reconstruction covering the past 500 years gave independent support for this conclusion, which was compared against the independent (extra-tropical, warm-season) tree-ring density NH temperature reconstruction of Briffa 2000.[58]

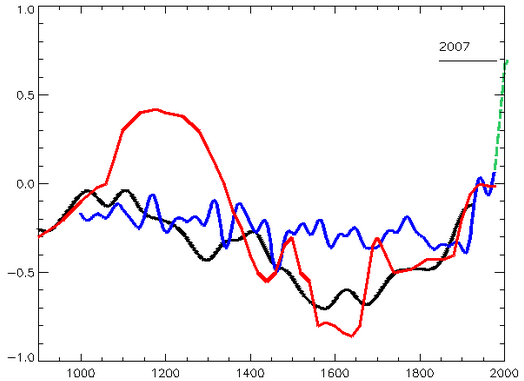

Its Figure 2.21 showed smoothed curves from the MBH99, Jones et al. and Briffa reconstructions, together with modern thermometer data as a red line and the grey shaded 95% confidence range from MBH99. Above it, Figure 2.20 was adapted from MBH99.[58] Figure 5 in WG1 Technical Summary B (shown above) repeated this figure without the linear trend line declining from AD 1000 to 1850.[59]

This iconic graph adapted from MBH99 was featured prominently in the WG1 Summary for Policymakers under a graph of the instrumental temperature record for the past 140 years. The text stated that it was "likely that, in the Northern Hemisphere, the 1990s was the warmest decade and 1998 the warmest year" in the past 1,000 years.[60] Versions of these graphs also featured less prominently in the short Synthesis Report Summary for Policymakers, which included a sentence stating that "The increase in surface temperature over the 20th century for the Northern Hemisphere is likely to have been greater than that for any other century in the last thousand years", and the Synthesis Report - Questions.[61]

The Working Group 1 scientific basis report was agreed unanimously by all member government representatives in January 2001 at a meeting held in Shanghai, China. A large poster of the IPCC illustration based on the MBH99 graph formed the backdrop when Sir John T. Houghton, as Co-Chair of the working group, presented the report in an announcement shown on television, leading to wide publicity.[42][62]

4. Scientific Debates

The Huang, Pollack & Shen 2000 borehole temperature reconstruction covering the past five centuries supported the conclusion that 20th century warming was exceptional.[63]

In a perspective commenting on MBH99, Wallace Smith Broecker argued that the Medieval Warm Period (MWP) was global. He attributed recent warming to a roughly 1500-year cycle which he suggested related to episodic changes in the Atlantic's conveyor circulation.[64]

A March 2002 tree ring reconstruction by Jan Esper et al. noted the debate, and Broecker's criticism that MBH99 did not show a clear MWP. They concluded that the MWP was likely to have been widespread in the extratropical northern hemisphere, and seemed to have approached late 20th century temperatures at times.[65] In an interview, Mann said the study did not contradict MBH as it dealt only with extratropical land areas, and stopped before the late 20th century. He reported that Edward R. Cook, a co-author on the paper, had confirmed agreement with these points,[66] and a later paper by Cook, Esper and D'Arrigo reconsidered the earlier paper's conclusions along these lines.[67]

Lonnie Thompson published a paper on "Tropical Glacier and Ice Core Evidence of Climate Change" in January 2003, featuring Figure 7 showing graphs based on ice cores closely resembling a graph based on the MBH99 reconstruction, combined with thermometer readings from Jones et al. 1999.[68]

4.1. RegEM Climate Field Reconstruction

In March 2001 Tapio Schneider published his regularized expectation–maximization (RegEM) technique for analysis of incomplete climate data.[69] The original MBH98 and MBH99 papers avoided undue representation of large numbers of tree ring proxies by using a principal component analysis step to summarise these proxy networks, but from 2001 Mann stopped using this method and introduced a multivariate Climate Field Reconstruction (CFR) technique based on the RegEM method which did not require this PCA step. In May 2002 Mann and Scott Rutherford published a paper on testing methods of climate reconstruction which discussed this technique. By adding artificial noise to actual temperature records or to model simulations they produced synthetic datasets which they called "pseudoproxies". When the reconstruction procedure was used with these pseudoproxies, the result was then compared with the original record or simulation to see how closely it had been reconstructed. The paper discussed the issue that regression methods of reconstruction tended to underestimate the amplitude of variation.[70]
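The pseudoproxy test described above, and the amplitude problem it exposed, can be illustrated with a toy experiment. The sketch below is not Mann and Rutherford's procedure (they worked with real instrumental records, model simulations and a climate field reconstruction method). It assumes a hypothetical sinusoidal "true" temperature series, a single pseudoproxy made by adding white noise, and a plain least-squares calibration over the final 200 "years"; the function names and parameter values are illustrative only. Because the fitted regression slope shrinks as the proxy noise grows, the reconstruction recovers only part of the true amplitude.

```python
import math
import random
import statistics


def ols_fit(x, y):
    """Ordinary least squares fit of y = a + b*x; returns (a, b)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sxy / sxx
    return my - b * mx, b


def pseudoproxy_amplitude_ratio(n_years=1000, n_calib=200, noise_sd=1.0, seed=0):
    """Hypothetical pseudoproxy test: fraction of the true amplitude a regression reconstruction recovers."""
    rng = random.Random(seed)
    # Assumed "true" temperature: a 200-year sinusoid
    # (with the default sizes the calibration window spans exactly one full cycle).
    truth = [math.sin(2 * math.pi * t / 200) for t in range(n_years)]
    # Pseudoproxy = truth + white noise; the noise level is an assumption.
    proxy = [temp + rng.gauss(0.0, noise_sd) for temp in truth]
    # Calibrate on the last n_calib values, standing in for the instrumental period.
    a, b = ols_fit(proxy[-n_calib:], truth[-n_calib:])
    recon = [a + b * p for p in proxy]
    # Slope of reconstruction on truth over the whole record: 1.0 means full amplitude recovered.
    return ols_fit(truth, recon)[1]


print(round(pseudoproxy_amplitude_ratio(), 2))
print(round(pseudoproxy_amplitude_ratio(noise_sd=0.01), 2))
```

In expectation the recovered fraction is roughly var(truth) / (var(truth) + var(noise)), about one third for the default settings, and it approaches one as `noise_sd` approaches zero.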

5. Controversy after IPCC Third Assessment Report

While the IPCC Third Assessment Report (TAR) drew on five reconstructions to support its conclusion that recent Northern Hemisphere temperatures were the warmest in the past 1,000 years, it gave particular prominence to an IPCC illustration based on the MBH99 paper.[60][62] The hockey stick graph was subsequently seen by mass media and the public as central to the IPCC case for global warming, which had actually been based on other unrelated evidence.[42] From an expert viewpoint the graph was, like all newly published science, preliminary and uncertain, but it was widely used to publicise the issue of global warming,[49][71] and it was targeted by those opposing ratification of the Kyoto Protocol on global warming.[42]

A literature review by Willie Soon and Sallie Baliunas, published in the relatively obscure journal Climate Research on 31 January 2003, used data from previous papers to argue that the Medieval Warm Period had been warmer than the 20th century, and that recent warming was not unusual. In March they published an extended paper in Energy & Environment, with additional authors.[72][73] The Bush administration's Council on Environmental Quality chief of staff Philip Cooney inserted references to the papers in the draft first Environmental Protection Agency Report on the Environment, and removed all references to reconstructions showing world temperatures rising over the last 1,000 years.[74] In the Soon and Baliunas controversy, two scientists cited in the papers said that their work was misrepresented,[72][73] and the Climate Research paper was criticised by many other scientists, including several of the journal's editors.[75] On 8 July Eos featured a detailed rebuttal of both papers by 13 scientists including Mann and Jones, presenting strong evidence that Soon and Baliunas had used improper statistical methods. Responding to the controversy, the publisher of Climate Research upgraded Hans von Storch from editor to editor in chief, but von Storch decided that the Soon and Baliunas paper was seriously flawed and should not have been published as it was. He proposed a new editorial system, and though the publisher of Climate Research agreed that the paper should not have been published uncorrected, he rejected von Storch's proposals to improve the editorial process, and von Storch with three other board members resigned.[72][76] Senator James M. Inhofe stated his belief that "manmade global warming is the greatest hoax ever perpetrated on the American people",[75][77] and a hearing of the United States Senate Committee on Environment and Public Works which he convened on 29 July 2003 heard the news of the resignations.[72][78]

Stephen McIntyre downloaded datasets for MBH99, and obtained MBH98 datasets by request to Mann in April 2003.[79] At the suggestion of Sonja Boehmer-Christiansen, editor of the social science journal Energy & Environment, McIntyre wrote an article with the assistance of University of Guelph economics professor Ross McKitrick,[80][81] which Energy & Environment published on 27 October 2003.[82][83] The McIntyre & McKitrick 2003b paper (MM03) said that the Mann, Bradley & Hughes 1998 (MBH98) "hockey stick" shape was "primarily an artefact of poor data handling and use of obsolete proxy records."[84] Their criticism was comprehensively refuted by Wahl & Ammann 2007,[85] which showed errors in the methods used by McIntyre and McKitrick.[86]

The statistical methods used in the MBH reconstruction were questioned in a 2004 paper by Hans von Storch with a team including Eduardo Zorita,[87] which said that the methodology used to average the data and the wide uncertainties might have hidden abrupt climate changes, possibly as large as the 20th century spike in measured temperatures.[50] They used the pseudoproxy method which Mann and Rutherford had developed in 2002, and like them found that regression methods of reconstruction tended to underestimate the amplitude of variation, a problem covered by the wide error bars in MBH99. It was a reasonable critique of nearly all the reconstructions at that time, but MBH were singled out.[70] Other researchers subsequently found that the von Storch paper had an undisclosed additional step which, by detrending data before estimating statistical relationships, had removed the main pattern of variation.[88] The von Storch et al. view that the graph was defective overall was refuted by Wahl, Ritson and Ammann (2006).[85][89]

In 2004 McIntyre and McKitrick tried unsuccessfully to get an extended analysis of the hockey stick into the journal Nature.[90][91] At this stage Nature contacted Mann, Bradley and Hughes about minor errors in the online supplement to MBH98. In a corrigendum published on 1 July 2004 they acknowledged that McIntyre and McKitrick had pointed out errors in proxy data that had been included as supplementary information, and supplied a full corrected listing of the data. They included a documented archive of all the data used in MBH98, and expanded details of their methods. They stated that "None of these errors affect our previously published results."[92]

The McIntyre and McKitrick comment was accepted for publication by Geophysical Research Letters. McIntyre & McKitrick 2005 (MM05) reported a technical statistical error in the Mann, Bradley & Hughes 1998 (MBH98) method, which they said would produce hockey stick shapes from random data. This claim was given widespread publicity and political spin. Scientists found that the issues raised by McIntyre and McKitrick were minor and did not affect the main conclusions of MBH98 or Mann, Bradley & Hughes 1999.[85][90] Mann himself had already stopped using the criticised statistical method in 2001, when he changed over to the RegEM climate field reconstruction method.[93] To balance dense networks of tree-ring proxies against sparse proxy temperature records such as lake sediments, ice cores or corals, MBH98 (and MBH99) used principal component analysis (PCA) to find the leading patterns of variation (PC1, PC2, PC3 etc.), with an objective method to establish how many significant principal components should be kept so that the patterns put together characterized the original dataset.[94] MBH98 centered the data on the 1902–1980 period. McIntyre and McKitrick highlighted the effect of this choice: centering over the whole 1400–1980 period instead changed the order of the principal components, so that the warming pattern of high-altitude tree ring data was demoted from PC1 to PC4.[95] However, instead of reapplying the objective selection rule, which would have increased the number of significant PCs from two to five, they kept only PC1 and PC2. This removed the significant 20th century warming pattern of PC4, discarding data that produced the "hockey stick" shape.[96][97] Subsequent investigation showed that the "hockey stick" shape remained when the correct selection rule was used.[97]

The MM05 paper claimed that 1902–1980 centering would produce hockey stick shapes from "persistent red noise",[95] but their methods exaggerated the effect.[98] Tests of the MBH98 methodology on pseudoproxies formed with noise varying from red noise to white noise found that this effect caused only very small differences which were within the uncertainty range and had no significance for the final reconstruction.[99] McIntyre and McKitrick's code selected 100 simulations with the highest "hockey stick index" from the 10,000 simulations they had carried out, and their illustrations were taken from this pre-selected 1%.[100]
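The centering issue can be made concrete with a small simulation of pure noise. This sketch is an illustration under stated assumptions, not MM05's code or MBH98's method: it uses AR(1) red noise rather than the persistent noise model MM05 used, arbitrary (hypothetical) sizes and persistence, PC1 computed by power iteration, and a hockey stick index taken here as the absolute difference between the calibration-period mean and the full-period mean, divided by the full-period standard deviation (treat this definition as an assumption). It compares PC1 after conventional full-period centering with PC1 after centering on the final calibration segment only. It says nothing about the PC selection rule or the final reconstruction, where the tests cited above found the effect to be small.

```python
import random
import statistics


def red_noise(n, phi, rng):
    """AR(1) red noise: x[t] = phi * x[t-1] + unit Gaussian white noise."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


def leading_pc(columns, n_iter=200):
    """PC1 time series of centered columns, via power iteration on the cross-product matrix X^T X."""
    k, n = len(columns), len(columns[0])
    gram = [[sum(a * b for a, b in zip(columns[i], columns[j])) for j in range(k)]
            for i in range(k)]
    v = [1.0] * k
    for _ in range(n_iter):
        w = [sum(gram[i][j] * v[j] for j in range(k)) for i in range(k)]
        norm = sum(c * c for c in w) ** 0.5
        v = [c / norm for c in w]
    return [sum(v[j] * columns[j][t] for j in range(k)) for t in range(n)]


def hockey_stick_index(series, n_calib):
    """|calibration mean - full mean| / full SD; absolute value because a PC's sign is arbitrary."""
    diff = statistics.fmean(series[-n_calib:]) - statistics.fmean(series)
    return abs(diff) / statistics.pstdev(series)


def compare_centering(n_series=20, n_years=400, n_calib=60, phi=0.9, trials=10, seed=1):
    """Mean PC1 hockey stick index of pure red noise under full vs. short centering."""
    rng = random.Random(seed)
    full_hsi, short_hsi = [], []
    for _ in range(trials):
        data = [red_noise(n_years, phi, rng) for _ in range(n_series)]
        full_c, short_c = [], []
        for s in data:
            m_full, m_calib = statistics.fmean(s), statistics.fmean(s[-n_calib:])
            full_c.append([x - m_full for x in s])    # conventional full-period centering
            short_c.append([x - m_calib for x in s])  # centering on the calibration segment only
        full_hsi.append(hockey_stick_index(leading_pc(full_c), n_calib))
        short_hsi.append(hockey_stick_index(leading_pc(short_c), n_calib))
    return statistics.fmean(full_hsi), statistics.fmean(short_hsi)


full_mean, short_mean = compare_centering()
print(f"full centering: {full_mean:.2f}, short centering: {short_mean:.2f}")
```

The bias arises because centering on the calibration mean adds a term proportional to the outer product of each series' offset (full-period mean minus calibration-period mean) to the cross-product matrix, so series that happen to drift during the final segment gain weight in PC1.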

On 23 June 2005, Rep. Joe Barton, chairman of the House Committee on Energy and Commerce, wrote joint letters with Ed Whitfield, chairman of the Subcommittee on Oversight and Investigations, referring to the publicity and demanding full records on climate research, as well as personal information about their finances and careers, from the three scientists Mann, Bradley and Hughes.[101][102] Sherwood Boehlert, chairman of the House Science Committee, told his fellow Republican Joe Barton it was a "misguided and illegitimate investigation" apparently aimed at intimidating scientists. The U.S. National Academy of Sciences (NAS) president Ralph J. Cicerone proposed that the NAS should appoint an independent panel to investigate. Barton dismissed this offer,[103][104] but following Boehlert's November 2005 request, the National Academy of Sciences arranged for its National Research Council to set up a special committee chaired by Gerald North, to investigate and report.[105]

The North Report went through a rigorous review process,[106] and was published on 22 June 2006.[107] It concluded "with a high level of confidence that global mean surface temperature was higher during the last few decades of the 20th century than during any comparable period during the preceding four centuries", justified by consistent evidence from a wide variety of geographically diverse proxies, but "Less confidence can be placed in large-scale surface temperature reconstructions for the period from 900 to 1600".[63] It broadly agreed with the basic findings of the original MBH studies which had subsequently been supported by other reconstructions and proxy records, while emphasising uncertainties over earlier periods.[108] The contested principal component analysis methodology had a small tendency to bias results and so was not recommended, but it had little influence on the final reconstructions, and other methods produced similar results.[109][110]

A member of Barton's staff contacted statistician Edward Wegman, who produced the Wegman report with his graduate student Yasmin H. Said and statistician David W. Scott, all statisticians with no expertise in climatology or other physical sciences.[111][112] The Wegman report was announced on 14 July 2006 in the Wall Street Journal,[113] and discussed at hearings of the United States House Energy Subcommittee on Oversight and Investigations on 19 July 2006,[113][114] and 27 July 2006.[115] The report was not properly peer reviewed. It reiterated McIntyre and McKitrick's claims on statistical failings in the MBH studies, but did not quantify whether correcting these points had any significant effect.[116] It included a social network analysis to allege a lack of independent peer review of Mann's work: this analysis has been discredited by expert opinion and found to have issues of plagiarism.[117][118]

6. Reconstructions 2003–2006

Using various high-resolution proxies including tree rings, ice cores and sediments, Mann and Jones published reconstructions in August 2003 which indicated that "late 20th century warmth is unprecedented for at least roughly the past two millennia for the Northern Hemisphere. Conclusions for the Southern Hemisphere and global mean temperature are limited by the sparseness of available proxy data in the Southern Hemisphere at present." They concluded that "To the extent that a ‘Medieval’ interval of moderately warmer conditions can be defined from about AD 800 – 1400, any hemispheric warmth during that interval is dwarfed in magnitude by late 20th century warmth."[119]

Borehole climate reconstructions in a paper by Pollack and Smerdon, published in June 2004, supported estimates of a surface warming of around 1 °C (1.8 °F) over the period from 1500 to 2000.[120]

In a study published in November 2004 Edward R. Cook, Jan Esper and Rosanne D'Arrigo re-examined their 2002 paper, and now supported MBH. They concluded that "annual temperatures up to AD 2000 over extra-tropical NH land areas have probably exceeded by about 0.3 °C the warmest previous interval over the past 1162 years".[67]

A study by Anders Moberg et al. published on 10 February 2005 used a wavelet transform technique to reconstruct Northern Hemisphere temperatures over the last 2,000 years, combining low-resolution proxy data such as lake and ocean sediments for century-scale or longer changes, with tree ring proxies only used for annual to decadal resolution. They found there had been a peak of temperatures around AD 1000 to 1100 similar to those reached in the years before 1990,[121] and supported the basic conclusion of MBH99 by stating "We find no evidence for any earlier periods in the last two millennia with warmer conditions than the post-1990 period".[122]

At the end of April 2005 Science published a reconstruction by J. Oerlemans based on glacier length records from different parts of the world, and found consistent independent evidence for the period from 1600 to 1990 supporting other reconstructions regarding magnitude and timing of global warming.[123]

On 28 February 2006 Wahl & Ammann 2007 was accepted for publication, and an "in press" copy was made available on the internet. Two more reconstructions were published, using different methodologies and supporting the main conclusions of MBH. Rosanne D'Arrigo, Rob Wilson and Gordon Jacoby suggested that medieval temperatures had been almost 0.7 °C cooler than the late 20th century but less homogeneous;[124] Osborn and Briffa found the spatial extent of recent warmth more significant than that during the medieval warm period.[125][126] They were followed in April by a third reconstruction led by Gabriele C. Hegerl.[127]

7. IPCC Fourth Assessment Report, 2007

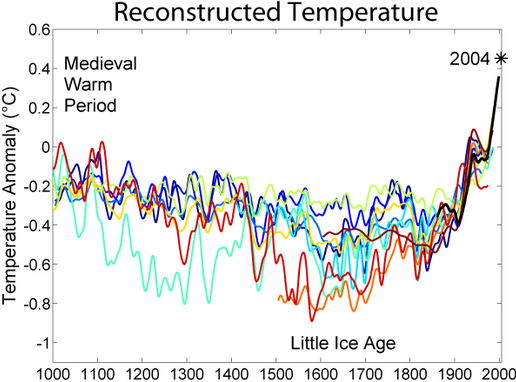

The IPCC Fourth Assessment Report (AR4) published in 2007 included a chapter on Paleoclimate, with a section on the last 2,000 years. This featured a graph showing 12 proxy-based temperature reconstructions, including the three highlighted in the IPCC Third Assessment Report (TAR): Mann, Bradley & Hughes 1999 as before, and Jones et al. 1998 and Briffa 2000, both of which had been calibrated by newer studies. In addition, analysis of the Medieval Warm Period cited reconstructions by Crowley & Lowery 2000 (as cited in the TAR) and Osborn & Briffa 2006. Ten of these 14 reconstructions covered 1,000 years or longer. Most reconstructions shared some data series, particularly tree ring data, but newer reconstructions used additional data and covered a wider area, using a variety of statistical methods. The section discussed the divergence problem affecting certain tree ring data.[128]

It concluded that "The weight of current multi-proxy evidence, therefore, suggests greater 20th-century warmth, in comparison with temperature levels of the previous 400 years, than was shown in the TAR. On the evidence of the previous and four new reconstructions that reach back more than 1 kyr, it is likely that the 20th century was the warmest in at least the past 1.3 kyr."[128] The SPM statement in the IPCC TAR of 2001 had been that it was "likely that, in the Northern Hemisphere, the 1990s was the warmest decade and 1998 the warmest year" in the past 1,000 years.[60] The AR4 SPM statement was that "Average Northern Hemisphere temperatures during the second half of the 20th century were very likely higher than during any other 50-year period in the last 500 years and likely the highest in at least the past 1,300 years. Some recent studies indicate greater variability in Northern Hemisphere temperatures than suggested in the TAR, particularly finding that cooler periods existed in the 12th to 14th, 17th and 19th centuries. Warmer periods prior to the 20th century are within the uncertainty range given in the TAR."[129]

8. Mann et al., 2008 and 2009

Further reconstructions were published, using additional proxies and different methodology. Juckes et al. 2007 and Lee, Zwiers & Tsao 2008 compared and evaluated the various statistical approaches.[130] In July 2008 Huang, Pollack and Shen published a suite of borehole reconstructions covering 20,000 years. They showed warm episodes in the mid-Holocene and the Medieval period, a little ice age and 20th century warming reaching temperatures higher than Medieval Warm Period peak temperatures in any of the reconstructions: they described this finding as consistent with the IPCC AR4 conclusions.[131]

In a paper published by PNAS on 9 September 2008, Mann and colleagues produced updated reconstructions of Earth surface temperature for the past two millennia.[23] This reconstruction used a more diverse dataset of more than 1,200 proxy records, significantly larger than that of the original tree-ring study. They used two complementary methods, both of which produced a similar "hockey stick" graph showing that recent increases in Northern Hemisphere surface temperature are anomalous relative to at least the past 1,300 years. Mann said, "Ten years ago, the availability of data became quite sparse by the time you got back to 1,000 AD, and what we had then was weighted towards tree-ring data; but now you can go back 1,300 years without using tree-ring data at all and still get a verifiable conclusion."[132] In a PNAS response, McIntyre and McKitrick said that they perceived a number of problems, including that Mann et al. used some data with the axes upside down.[133] Mann et al. replied that McIntyre and McKitrick "raise no valid issues regarding our paper" and the "claim that 'upside down' data were used is bizarre", as the methods "are insensitive to the sign of predictors." They also said that excluding the contentious datasets has little effect on the result.[134]

A study of the changing climate of the Arctic over the last 2,000 years, by an international consortium led by Darrell Kaufman of Northern Arizona University, was published on 4 September 2009. They examined sediment core records from 14 Arctic lakes, supported by tree ring and ice core records. Their findings showed a long term cooling trend consistent with cycles in the Earth's orbit which would be expected to continue for a further 4,000 years but had been reversed in the 20th century by a sudden rise attributed to greenhouse gas emissions. The decline had continued through the Medieval period and the Little Ice Age. The most recent decade, 1999–2008, was the warmest of the period, and four of the five warmest decades occurred between 1950 and 2000. Scientific American described the graph as largely replicating "the so-called 'hockey stick,' a previous reconstruction".[135]

Further support for the "hockey stick" graph came from a new method of analysis using Bayesian statistics developed by Martin Tingley and Peter Huybers of Harvard University, which produced the same basic shape, albeit with more variability in the past, and found the 1990s to have been the warmest decade in the 600-year period the study covered.[136]

9. 2010 Onwards

A 2,000 year extratropical Northern Hemisphere reconstruction by Ljungqvist published by Geografiska Annaler in September 2010 drew on additional proxy evidence to show both a Roman Warm Period and a Medieval Warm Period with decadal mean temperatures reaching or exceeding the reference 1961–1990 mean temperature level. Instrumental records of the period 1990–2010 were possibly above any temperature in the reconstruction period, though this did not appear in the proxy records. They concluded that their "reconstruction agrees well with the reconstructions by Moberg et al. (2005) and Mann et al. (2008) with regard to the amplitude of the variability as well as the timing of warm and cold periods, except for the period c. ad 300–800, despite significant differences in both data coverage and methodology."[137]

A 2010 opinion piece by David Frank, Jan Esper, Eduardo Zorita and Rob Wilson (Frank et al. 2010) noted that by then over two dozen large-scale climate reconstructions had been published, showing a broad consensus that there had been exceptional 20th century warming after earlier climatic phases, notably the Medieval Warm Period and Little Ice Age. There were still issues of large-scale natural variability to be resolved, especially for the lowest frequency variations, and they called for further research to improve expert assessment of proxies and to develop reconstruction methods explicitly allowing for structural uncertainties in the process.[7]

As several studies had noted, regression-based reconstruction methods tended to underestimate low-frequency variability. Bo Christiansen designed a new method (LOC) to overcome this problem, and with Ljungqvist used LOC to produce a 1,000 year reconstruction published in 2011. This showed more low frequency variability and a colder Little Ice Age than previous studies.[138] They then extended the LOC reconstruction back using selected proxies which had a documented relation to temperature and passed a screening procedure. This 2,000 year reconstruction, published in 2012, again showed more variability than earlier reconstructions. It found a homogeneous Little Ice Age from 1580–1720 showing colder conditions in all areas, and a well defined but possibly less homogeneous Medieval Warm Period peak around 950–1050, reaching or slightly exceeding mid 20th century temperatures as indicated by previous studies including Mann et al. 2008 and 2009.[139]

Ljungqvist et al. 2012 used a larger network of proxies than previous studies, including the use of low-resolution proxy data with as few as two data points per century, to produce a reconstruction showing centennial patterns of temperature variability in space and time for Northern Hemisphere land areas over the last 1,200 years. At this broad scale, they found widespread warmth from the 9th to 11th centuries approximating to the 20th century mean, with dominant cooling from the 16th to 18th centuries. The greatest warming occurred from the 19th to the 20th centuries, and they noted that instrumental records of recent decades were much warmer than the 20th century mean. Their spatial reconstruction showed similarities to the Mann et al. 2009 climate field reconstruction, though the different resolution meant these were not directly comparable. The results were robust, even when significant numbers of proxies were removed.[140]

Marcott et al. 2013 used seafloor and lake bed sediment proxies, which were completely independent of those used in earlier studies, to reconstruct global temperatures over the past 11,300 years, covering the entire Holocene, and showing over the last 1,000 years confirmation of the original MBH99 hockey stick graph.[141] Temperatures had slowly risen from the last ice age to reach a level which lasted from 10,000 to 5,000 years ago, then in line with Milankovitch cycles had begun a slow decline, interrupted by a small rise during the Medieval Warm Period, to the Little Ice Age. That decline had then been interrupted by a uniquely rapid rise in the 20th century to temperatures which were already the warmest for at least 4,000 years, within the range of uncertainties of the highest temperatures in the whole period, and on current estimates were likely to exceed those temperatures by 2100.[142]

References

- Weart 2013

- introduction to the English edition, The Climates of the Geological Past, (2015), retrieved August 28, 2015 http://borntraeger-cramer.com/9783443010881

- Köppen, W. & Wegener, A. (1924): Die Klimate der geologischen Vorzeit, Borntraeger Science Publishers. In English as The Climates of the Geological Past (2015).

- Jones et al. 2009, pp. 34, 36.

- Weart 2011c, Hubert Lamb; Lamb 1965 http://www.aip.org/history/climate/20ctrend.htm#L_0358

- Lamb, Clayton & Wigley 1997, pp. xxvii—xxix

- Frank et al. 2010.

- Landsberg, Groveman & Hakkarinen 1978, Groveman & Landsberg 1979

- Mann 2012, pp. 274–275; Jacoby & D'Arrigo 1989.

- Pearce 2010, pp. 37–39; Weart 2011o, Global Warming as a Political Issue (1980s) http://www.aip.org/history/climate/Govt.htm#s3

- Folland 1990, pp. 199–202.

- Pearce 2010, pp. 45–46.

- Bradley 1991

- Folland et al. 1992

- Jones et al. 2009, p. 36.

- Wahl & Ammann 2007.

- Bradley & Jones 1993.

- Jones et al. 2009, pp. 21–22.

- Mann 2012, pp. 40–41

- Nicholls 1996, pp. 173–176, fig 3.20; Hughes & Diaz 1994.

- Pearce 2010, p. 44.

- Briffa et al. 1998; D'Arrigo et al. 2008

- Mann et al. 2008

- Jones & Mann 2004

- Mann 2012, pp. 28–34, 270

- Mann, Park & Bradley 1995.

- Mann 2012, pp. 40–48

- Wahl & Ammann 2007

- Mann 2012, pp. 47–48

- Mann 2012, pp. 43, 45

- Ammann & Wahl 2007

- Mann 2012, pp. 43, 48

- Mann, Bradley & Hughes 1998

- Hegerl 1998

- Mann 2012, pp. 48–50

- Stevens 1998.

- Jones 1998.

- Mann 2012, p. 106, Michaels 1998

- Marshall Institute 1998.

- Mann 2012, p. 106, Soon & Baliunas 1998, Mann 1998.

- Pollack, Huang & Shen 1998

- Monastersky 2006, p. 10.

- Jones et al. 1998

- Mann 2012, pp. 50–51; Jacoby & D'Arrigo 1989; Bradley 1996.

- Mann 2012, pp. 51–53; Graybill & Idso 1993.

- Mann, Bradley & Hughes 1999

- UMass press release, 3 March 1999.

- Stevens 1999.

- Weart 2011c, The Hockey Stick and Beyond http://www.aip.org/history/climate/20ctrend.htm#S6

- Weart 2011c, The influential 1999 "hockey stick" reconstruction http://www.aip.org/history/climate/20ctrend.htm#b_hockey

- Pearce 2010, pp. 50–51

- Briffa & Osborn 1999.

- Pearce 2010, p. 48; Jones and Davies, quoted in UEA press release, 24 Nov. 2009; ICCER 2010, pp. 59–60: "The WMO report is a short document produced annually. It does not have the status or importance of the IPCC reports."

- Briffa 2000

- Crowley & Lowery 2000

- Crowley 2000

- Jones 2000.

- Folland 2001, 2.3.2.2 Multi-proxy synthesis of recent temperature change http://www.grida.no/publications/other/ipcc_tar/?src=/climate/ipcc_tar/wg1/069.htm#fig220

- Albritton 2001, Technical Summary B, Figure 5. http://www.grida.no/publications/other/ipcc_tar/?src=/climate/ipcc_tar/vol4/english/082.htm#surface

- IPCC WG1 SPM 2001, Figure 1(b). http://www.grida.no/publications/other/ipcc_tar/?src=/climate/ipcc_tar/wg1/005.htm#figspm1

- IPCC TAR SYR 2001 Summary for Policymakers: Question 2, Figure SPM-10b, Question 9; Figure 9-1b, Figure 2-3. http://www.grida.no/climate/ipcc_tar/vol4/english/index.htm

- Kirby, 22 Jan. 2001 (BBC News)

- North et al. 2006, pp. 1–3 http://books.nap.edu/openbook.php?record_id=11676&page=1

- Broecker 2001

- Esper, Cook & Schweingruber 2002

- Mann, interviewed by Flatow, 29 March 2002 (NPR).

- Cook, Esper & D'Arrigo 2004.

- Thompson et al. 2003.

- Schneider 2001

- Mann 2012, pp. 104–105, 306; Mann & Rutherford 2002

- Pearce 2010, Part four: "Climate change debate overheated after sceptics grasped 'hockey stick'". https://www.theguardian.com/environment/2010/feb/09/hockey-stick-michael-mann-steve-mcintyre

- Monastersky 2003.

- Soon & Baliunas 2003.

- Revkin 2005, Waxman 2007

- Revkin, 5 August 2003 (NYT).

- Mann et al. 2003.

- Inhofe, 4 Jan. 2005 (speech); quoted in Congressional Record, 28 July 2003.

- HCEPW, 29 July 2003 (hearing).

- McIntyre, 28 Oct. 2003 (blog).

- McKitrick, Ross; McIntyre, Stephen (November 2003), Ross McKitrick, archived from the original on 27 November 2003, https://web.archive.org/web/20031127041544/http://www.uoguelph.ca/~rmckitri/research/trc.html, retrieved 10 September 2012

- Thacker 2005b; Mann 2012, pp. 122–123.

- Appell, David (2004), Quark Soup: Timing of M&M in E&E, archived from the original on 2 December 2003, https://web.archive.org/web/20031202005513/http://www.davidappell.com/, retrieved 10 September 2012

- Mann 2012, p. 122

- McIntyre & McKitrick 2003a.

- Weart 2011c, footnote 48, (p. 19, n1 in pdf). http://www.aip.org/history/climate/20ctrend.htm#N_48_

- Jansen 2007, Sec. 6.6.1.1: What Do Reconstructions Based on Palaeoclimatic Proxies Show?, p. 466. http://www.ipcc.ch/publications_and_data/ar4/wg1/en/ch6s6-6.html#6-6-1

- ICCER 2010, von Storch et al. 2004.

- Mann 2012, pp. 105, 295

- Wahl, Ritson & Ammann 2006.

- Pearce 2010, pp. 93–96

- McIntyre, Stephen (2004), Welcome to Climate2003, archived from the original on 2 September 2004, https://web.archive.org/web/20040902191048/http://www.climate2003.com/, retrieved 10 September 2012; McIntyre, Stephen; McKitrick, Ross (1 July 2004), M&M03 Page, archived from the original on 12 September 2004, https://web.archive.org/web/20040912085554/http://www.uoguelph.ca/~rmckitri/research/trc.html, retrieved 10 September 2012

- Mann, Bradley & Hughes 2004, Mann 2012, pp. 304–305.

- Mann 2012, pp. 306–307

- Mann 2012, pp. 131–136

- McIntyre & McKitrick 2005.

- Mann 2012, pp. 137, 306–307

- Wahl & Ammann 2007

- Huybers 2005

- von Storch & Zorita 2005.

- Mann 2012, pp. 242–243, 362–363

- HCEC, 23 June 2005; Barton & Whitfield 2005.

- Washington Post editorial, 23 July 2005, "Hunting Witches". https://www.washingtonpost.com/wp-dyn/content/article/2005/07/22/AR2005072201658.html

- Eilperin, 18 July 2005 (Washington Post).

- Waxman, 1 July 2005 (letter).

- Revkin, 22 June 2006 (NYT).

- North et al. 2006, pp. viii, xi http://books.nap.edu/openbook.php?record_id=11676&page=R8

- NAS press release, 22 June 2006.

- North et al. 2006, pp. 3–4 http://books.nap.edu/openbook.php?record_id=11676&page=3

- North et al. 2006, pp. 112–116 http://books.nap.edu/openbook.php?record_id=11676&page=112

- NAS press conference, 22 June 2006.

- Said 2007

- Mann 2012, pp. 160, 357, Said 2005

- WSJ editorial, 14 July 2006, "Hockey Stick Hokum". https://www.wsj.com/articles/SB115283824428306460

- HCEC Hearings, 19 July 2006, p. 1.

- HCEC Hearings, 19 July 2006, p. III.

- HCEC Hearings, 19 July 2006, pp. 697–698 (27 July).

- Vergano, 15 May 2011.

- Vergano, 16 May 2011.

- Mann & Jones 2003.

- Pollack & Smerdon 2004.

- Moberg et al. 2005.

- Weart 2009 (graph).

- Oerlemans 2005.

- D'Arrigo, Wilson & Jacoby 2006.

- Osborn & Briffa 2006.

- North et al. 2006, pp. 113–115.

- Hegerl et al. 2006.

- Jansen 2007, Section 6.6: The Last 2,000 Years. http://www.ipcc.ch/publications_and_data/ar4/wg1/en/ch6s6-6.html

- AR4 WG1 SPM A Palaeoclimatic Perspective http://www.ipcc.ch/publications_and_data/ar4/wg1/en/spmsspm-a-palaeoclimatic.html

- Mann 2012, pp. 140, 308

- Huang, Pollack & Shen 2008

- Black, 1 Sep. 2008 (BBC News).

- McIntyre & McKitrick 2009.

- Mann, Bradley & Hughes 2009.

- Kaufman et al. 2009; UCAR news release, 3 Sept. 2009; Bello 2009.

- Appell 2009; Tingley & Huybers 2010a

- Ljungqvist 2010

- Christiansen & Ljungqvist 2011

- Christiansen & Ljungqvist 2012

- Ljungqvist et al. 2012

- Lemonick 2013

- Gillis 2013