| Version | Created by | Modification | Content Size | Created at |
|---|---|---|---|---|
| 1 | Pedro Roque Martins | -- | 1721 | 2022-08-19 19:12:12 |
| 2 | Jason Zhu | Meta information modification | 1721 | 2022-08-25 03:48:21 |

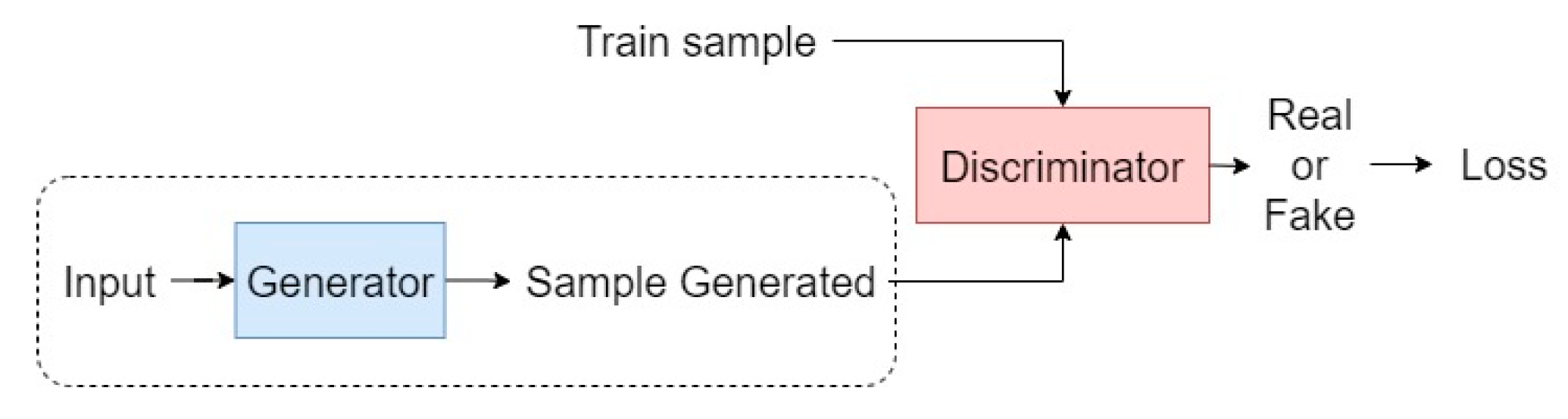

Face recognition systems operating in uncontrolled environments have improved markedly in recent years. However, most rely on a single band in the visible spectrum. Multispectral images capture information that the visible spectrum cannot provide when certain occlusions are present (e.g., fog or plastic materials) and in low- or no-light conditions. A review of the state of the art in face recognition in uncontrolled environments shows that image synthesis methods, mainly based on generative adversarial networks (GANs), are used to counter intrapersonal variations such as differences in pose and facial expression. In multispectral face recognition, among the many solutions proposed, fusion methods make the fullest use of images captured in different spectral bands when reaching a decision. The main obstacle is the limited number of images (and subjects) in multispectral databases collected in uncontrolled environments, which makes it difficult to train convolutional neural networks, the most widely used method for feature extraction.
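To make the idea of fusing evidence from several spectral bands concrete, the following is a minimal sketch of one common variant, score-level fusion, and is not the method of any particular system surveyed here. It assumes that a feature extractor (for example, a CNN) has already produced one embedding per band for both the probe and the gallery face. The band names (`VIS`, `NIR`), the helper names, the weights, and the decision threshold are all hypothetical choices made for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fuse_scores(scores_by_band, weights=None):
    """Score-level fusion: weighted average of per-band match scores.

    With weights=None every band contributes equally (assumption).
    """
    if weights is None:
        weights = {band: 1.0 for band in scores_by_band}
    total = sum(weights[band] for band in scores_by_band)
    weighted = sum(weights[band] * score for band, score in scores_by_band.items())
    return weighted / total


def same_person(probe, gallery, weights=None, threshold=0.5):
    """Compare per-band embeddings, fuse the scores, and threshold the result.

    probe and gallery map a band name to an embedding (list of floats).
    The threshold of 0.5 is an illustrative value, not a tuned one.
    """
    scores = {
        band: cosine_similarity(probe[band], gallery[band]) for band in probe
    }
    fused = fuse_scores(scores, weights)
    return fused >= threshold, fused, scores


if __name__ == "__main__":
    # Toy embeddings; real ones would come from a trained network.
    probe = {"VIS": [1.0, 0.0], "NIR": [0.6, 0.8]}
    gallery = {"VIS": [0.0, 1.0], "NIR": [0.6, 0.8]}
    # Give the NIR band more weight, e.g. for a low-light capture (assumption).
    decision, fused, scores = same_person(probe, gallery, weights={"VIS": 1.0, "NIR": 3.0})
    print(decision, fused, scores)
```

In this toy case the visible-band embeddings disagree while the near-infrared embeddings match, so the fused decision depends on how much weight each band receives. In practice the weights and threshold would be chosen on validation data.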

1. Introduction

2. Deep Neural Networks

2.1. Face Recognition

2.2. Multispectral Imaging in an Uncontrolled Environment

3. Current Work

3.1. Face Recognition in an Uncontrolled Environment

3.2. Multispectral Face Recognition

3.3. Gaps
