| Version | Summary | Created by | Modification | Content Size | Created at | Operation |
|---|---|---|---|---|---|---|
| 1 | | Javier Marín-Morales | +1089 word(s) | 1089 | 2020-09-22 14:48:58 | |
| 2 | | Felix Wu | -120 word(s) | 969 | 2020-10-20 12:06:51 | |


Emotions play a critical role in our daily lives, so understanding and recognising emotional responses is crucial for human research. Affective computing research has mostly used non-immersive two-dimensional (2D) images or videos to elicit emotional states. However, immersive virtual reality, which allows researchers to simulate environments in controlled laboratory conditions with high levels of sense of presence and interactivity, is becoming more popular in emotion research. Moreover, its synergy with implicit measurements and machine-learning techniques has the potential to influence many research areas, opening new opportunities for the scientific community. This paper presents a systematic review of the emotion recognition research undertaken with physiological and behavioural measures using head-mounted displays as elicitation devices. The results highlight the evolution of the field, give a clear perspective using aggregated analysis, reveal the current open issues and provide guidelines for future research.

1. Introduction

Emotions play an essential role in rational decision-making, perception, learning and a variety of other functions that affect both human physiological and psychological status [1]. Therefore, understanding and recognising emotions are very important aspects of human behaviour research. To study human emotions, affective states need to be evoked in laboratory environments, using elicitation methods such as images, audio, videos and, recently, virtual reality (VR). VR has experienced an increase in popularity in recent years in scientific and commercial contexts [2]. Its general applications include gaming, training, education, health and marketing. This increase is based on the development of a new generation of low-cost headsets which has democratised global purchases of head-mounted displays (HMDs) [3]. Nonetheless, VR has been used in research since the 1990s [4]. The scientific interest in VR is due to the fact that it provides simulated experiences that create the sensation of being in the real world [5]. In particular, environmental simulations are representations of physical environments that allow researchers to analyse reactions to common concepts [6]. They are especially important when what they depict cannot be physically represented. VR makes it possible to study these scenarios under controlled laboratory conditions [7]. Moreover, VR allows the time- and cost-effective isolation and modification of variables, unfeasible in real space [8].

2. Emotions Analysed

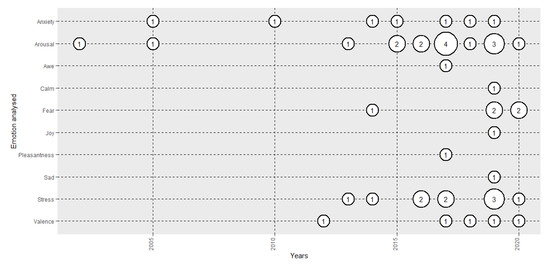

Figure 1 depicts the evolution in the number of papers analysed in the review based on the emotion under analysis. Until 2015, the majority of the papers analysed arousal-related emotions, mostly arousal, anxiety and stress. From that year, some experiments started to analyse valence-related emotions, such as valence, joy, pleasantness and sadness, but the analysis of arousal-related emotions still predominated. Some 50% of the studies used the circumplex model of affect (CMA) (arousal 38.1% [9] and valence 11.9% [10]), and the other 50% used basic or complex emotions (stress 23.8% [11], anxiety 16.7% [12], fear 11.9% [13], awe 2.4% [14], calmness 2.4% [15], joy 2.4% [15], pleasantness 2.4% [16] and sadness 2.4% [15]).

Figure 1. Evolution of the number of papers published each year based on emotion analysed.

3. Implicit Technique, Features Used and Participants

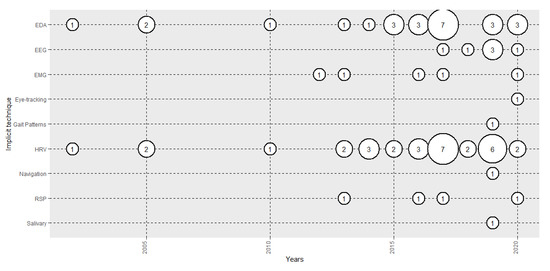

Figure 2 shows the evolution of the number of papers analysed in terms of the implicit measures used. The majority used heart rate variability (HRV, 73.8%) and electrodermal activity (EDA, 59.5%); most studies therefore relied on autonomic nervous system (ANS) measures to analyse emotions. However, most of the studies that used HRV extracted only a few time-domain features, such as heart rate (HR) [17][18]. Very few studies used frequency-domain features, such as high-frequency (HF) power, low-frequency (LF) power or the HF/LF ratio [19][20], and two used non-linear features, such as entropy and Poincaré [21][22]. Of the studies that used EDA, the majority used total skin conductance (SC) [23], but some used tonic (SCL) [9] or phasic activity (SCR) [24]. In recent years, the use of electroencephalography (EEG) has increased, with six papers published (14.3%), so central nervous system (CNS) measures have started to be used, in combination with HMDs, to recognise emotions. The EEG analyses used are ERP [25], power spectral density [26] and functional connectivity [21]. Electromyography (EMG, 11.9%) and respiration (RSP, 9.5%) were also used, mostly in combination with HRV. Other implicit measures used were eye-tracking, gait patterns, navigation and salivary cortisol responses. The average number of participants varied by signal: 75.34 (σ = 73.57) for EDA, 68.58 (σ = 68.35) for HRV and 33.67 (σ = 21.80) for EEG.

Figure 2. Evolution of the number of papers published each year based on the implicit measure used.
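To illustrate the difference between the time-domain and non-linear HRV features mentioned in this section, the following minimal sketch computes a few of them from a list of RR intervals. It is not taken from any of the reviewed studies: the function name `hrv_features`, the choice of features (mean HR, SDNN, RMSSD, Poincaré SD1/SD2) and the sample intervals are assumptions made purely for illustration. Frequency-domain features (HF, LF) are omitted because they require resampling and spectral estimation.

```python
import math
import statistics


def hrv_features(rr_ms):
    """Return a few HRV features from successive RR intervals (milliseconds).

    Hypothetical helper for illustration only; real pipelines also need
    artefact removal and ectopic-beat correction, which are skipped here.
    """
    if len(rr_ms) < 3:
        raise ValueError("need at least three RR intervals")

    # Consecutive pairs (RR_n, RR_n+1) are the points of the Poincaré plot.
    pairs = list(zip(rr_ms, rr_ms[1:]))
    diffs = [b - a for a, b in pairs]

    mean_rr = statistics.fmean(rr_ms)
    root2 = math.sqrt(2.0)

    return {
        # Time-domain features
        "mean_hr_bpm": 60000.0 / mean_rr,          # heart rate implied by mean RR
        "sdnn_ms": statistics.stdev(rr_ms),        # overall variability
        "rmssd_ms": math.sqrt(statistics.fmean([d * d for d in diffs])),
        # Non-linear (Poincaré) features: spread across and along the identity line
        "sd1_ms": statistics.stdev([(b - a) / root2 for a, b in pairs]),
        "sd2_ms": statistics.stdev([(b + a) / root2 for a, b in pairs]),
    }


if __name__ == "__main__":
    # Invented RR series, not data from any reviewed study.
    sample_rr = [800, 820, 840, 830, 810, 790, 780, 800]
    for name, value in hrv_features(sample_rr).items():
        print(f"{name}: {value:.2f}")
```

A study reporting only HR would use the first entry alone; the remaining entries show how little extra computation the richer descriptors need once RR intervals are available.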

4. Data Analysis

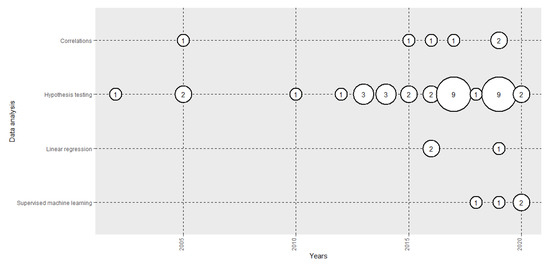

Figure 3 shows the evolution of the number of papers published in terms of the data analysis performed. The vast majority analysed the implicit responses of the subjects in different emotional states using hypothesis testing (83.33%), correlations (14.29%) or linear regression (4.76%). However, in recent years, supervised machine-learning algorithms (11.90%), such as SVM [22], Random Forest [27] and kNN [26], have been introduced to build automatic emotion recognition models. They have been used in combination with EEG [21], HRV [22] and EDA [26].

Figure 3. Evolution of the number of papers published each year by data analysis method used.
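As a rough illustration of the supervised approach described in this section, the sketch below implements a k-nearest-neighbours (kNN) classifier with leave-one-out validation in plain Python. It does not reproduce the pipeline of any reviewed study: the two features (mean HR and skin conductance level), the labels and every data value in `TOY_X` and `TOY_Y` are invented, and the function names are hypothetical.

```python
import math
from collections import Counter

# Invented toy data: (mean HR in bpm, skin conductance level in microsiemens).
# These values are assumptions for illustration, not measurements.
TOY_X = [
    (88.0, 7.1), (92.0, 6.8), (85.0, 7.5), (90.0, 8.0),   # labelled "high" arousal
    (66.0, 3.1), (70.0, 2.8), (64.0, 3.5), (68.0, 2.5),   # labelled "low" arousal
]
TOY_Y = ["high", "high", "high", "high", "low", "low", "low", "low"]


def knn_predict(train_x, train_y, query, k=3):
    """Predict the label of `query` by majority vote among its k nearest points."""
    # Euclidean distance is scale-sensitive; real features should be
    # standardised first, which this sketch skips for brevity.
    ranked = sorted(
        (math.dist(x, query), label) for x, label in zip(train_x, train_y)
    )
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]


def leave_one_out_accuracy(xs, ys, k=3):
    """Hold out each sample once, train on the rest, and return the hit rate."""
    hits = 0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        if knn_predict(train_x, train_y, xs[i], k) == ys[i]:
            hits += 1
    return hits / len(xs)


if __name__ == "__main__":
    print("leave-one-out accuracy:", leave_one_out_accuracy(TOY_X, TOY_Y))
    print("prediction for (89, 7.0):", knn_predict(TOY_X, TOY_Y, (89.0, 7.0)))
```

Leave-one-out validation is used here only because the toy set is tiny; with real participants, validation would normally hold out whole subjects so that a model is never tested on someone it was trained on.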

5. VR Set-Ups Used: HMDs and Formats

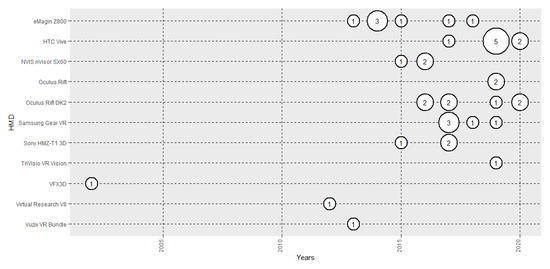

Figure 4 shows the evolution of the number of papers published based on HMD used. In the first years of the 2010s, eMagin was the most used. In more recent years, advances in HMD technologies have positioned HTC Vive as the most used (19.05%). In terms of formats, 3D environments are the most used [25] (85.71%), with 360° panoramas following far behind [28] (16.67%). One study used both formats [16].

Figure 4. Evolution of the number of papers published each year based on head-mounted display (HMD) used.

6. Validation of VR

Table 1 shows the percentage of the papers that presented analyses of the validation of VR in emotion research. Some 83.33% of the papers did not present any type of validation. Three papers included direct comparisons of results between VR environments and the physical world [16][21][12], and three compared, in terms of the formats used, the emotional reactions evoked in 3D VR with those evoked by photos [12], 360° panoramas [16] and augmented reality [29]. Finally, one paper analysed the influence of immersion [14], one examined the similarity of VR results with previous datasets [30], and one compared its results with a previous version of the study performed in the real world [31].

Table 1. Previous research that included analyses of the validation of virtual reality (VR).

| Type of Validation | % of Papers | Number of Papers |
|---|---|---|
| No validation | 83.33% | 35 |
| Real | 7.14% | 3 |
| Format | 7.14% | 3 |
| Immersivity | 2.38% | 1 |
| Previous datasets | 2.38% | 1 |
| Replication | 2.38% | 1 |
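The percentages in Table 1 can be checked against the paper counts with a short calculation. The sketch below assumes a total of 42 reviewed papers; that figure is not stated in the table itself but is inferred from the fact that 35 papers correspond to 83.33%. The names `TOTAL_PAPERS`, `VALIDATION_COUNTS` and `percentages` are introduced here for illustration.

```python
# Assumption: 42 papers in total, inferred from 35 papers = 83.33%.
TOTAL_PAPERS = 42

# Paper counts copied from Table 1.
VALIDATION_COUNTS = {
    "No validation": 35,
    "Real": 3,
    "Format": 3,
    "Immersivity": 1,
    "Previous datasets": 1,
    "Replication": 1,
}


def percentages(counts, total):
    """Convert per-category paper counts into percentages of the total."""
    return {name: round(100 * n / total, 2) for name, n in counts.items()}


if __name__ == "__main__":
    for name, pct in percentages(VALIDATION_COUNTS, TOTAL_PAPERS).items():
        print(f"{name}: {pct}%")
    # The counts add up to more than the total because a paper can appear
    # in more than one category (for example, [12] and [16] are cited under
    # both the real-world and the format comparisons).
    print("sum of counts:", sum(VALIDATION_COUNTS.values()))
```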

References

- Picard, R.W. Affective Computing; MIT Press: Cambridge, MA, USA, 1997.

- Cipresso, P.; Chicchi, I.A.; Alcañiz, M.; Riva, G. The Past, Present, and Future of Virtual and Augmented Reality Research: A network and cluster analysis of the literature. Front. Psychol. 2018, 9, 2086.

- Castelvecchi, D. Low-cost headsets boost virtual reality’s lab appeal. Nature 2016, 533, 153–154.

- Slater, M.; Usoh, M. Body centred interaction in immersive virtual environments. Artif. Life Virtual Real. 1994, 1, 125–148.

- Giglioli, I.A.C.; Pravettoni, G.; Martín, D.L.S.; Parra, E.; Alcañiz, M. A novel integrating virtual reality approach for the assessment of the attachment behavioral system. Front. Psychol. 2017, 8, 1–7.

- Kwartler, M. Visualization in support of public participation. In Visualization in Landscape and Environmental Planning: Technology and Applications; Bishop, I., Lange, E., Eds.; Taylor & Francis: London, UK, 2005; pp. 251–260.

- Vince, J. Introduction to Virtual Reality; Springer: Berlin/Heidelberg, Germany, 2004.

- Alcañiz, M.; Baños, R.; Botella, C.; Rey, B. The EMMA Project: Emotions as a Determinant of Presence. PsychNology J. 2003, 1, 141–150.

- Anna Felnhofer; Oswald D. Kothgassner; Mareike Schmidt; Anna-Katharina Heinzle; Leon Beutl; Helmut Hlavacs; Ilse Kryspin-Exner; Is virtual reality emotionally arousing? Investigating five emotion inducing virtual park scenarios. International Journal of Human-Computer Studies 2015, 82, 48-56, 10.1016/j.ijhcs.2015.05.004.

- Maryam Banaei; Javad Hatami; Abbas Yazdanfar; Klaus Gramann; Walking through Architectural Spaces: The Impact of Interior Forms on Human Brain Dynamics. Frontiers in Human Neuroscience 2017, 11, 477, 10.3389/fnhum.2017.00477.

- Federica Pallavicini; Pietro Cipresso; Simona Raspelli; Alessandra Grassi; Silvia Serino; Cinzia Vigna; Stefano Triberti; Marco Villamira; Andrea Gaggioli; Giuseppe Riva; et al. Is virtual reality always an effective stressors for exposure treatments? some insights from a controlled trial. BMC Psychiatry 2013, 13, 52, 10.1186/1471-244x-13-52.

- Alessandra Gorini; Eric Griez; Anna Petrova; Giuseppe Riva; Assessment of the emotional responses produced by exposure to real food, virtual food and photographs of food in patients affected by eating disorders. Annals of General Psychiatry 2010, 9, 30, 10.1186/1744-859x-9-30.

- Henrik M. Peperkorn; Georg W. Alpers; Andreas Mühlberger; Triggers of Fear: Perceptual Cues Versus Conceptual Information in Spider Phobia. Journal of Clinical Psychology 2013, 70, 704-714, 10.1002/jclp.22057.

- Alice Chirico; Pietro Cipresso; David B. Yaden; Federica Biassoni; Giuseppe Riva; Andrea Gaggioli; Effectiveness of Immersive Videos in Inducing Awe: An Experimental Study. Scientific Reports 2017, 7, 1-11, 10.1038/s41598-017-01242-0.

- Young Kim; Junyoung Moon; Nak-Jun Sung; Min Hong; Correlation between selected gait variables and emotion using virtual reality. Journal of Ambient Intelligence and Humanized Computing 2019, 8, 1-8, 10.1007/s12652-019-01456-2.

- Juan Luis Higuera-Trujillo; Juan López-Tarruella Maldonado; Carmen Llinares Millán; Psychological and physiological human responses to simulated and real environments: A comparison between Photographs, 360° Panoramas, and Virtual Reality. Applied Ergonomics 2017, 65, 398-409, 10.1016/j.apergo.2017.05.006.

- Cade McCall; Lea K. Hildebrandt; Boris Bornemann; Tania Singer; Physiophenomenology in retrospect: Memory reliably reflects physiological arousal during a prior threatening experience. Consciousness and Cognition 2015, 38, 60-70, 10.1016/j.concog.2015.09.011.

- Youssef Shiban; Julia Diemer; Simone Brandl; Rebecca Zack; Andreas Mühlberger; Stefan Wüst; Trier Social Stress Test in vivo and in virtual reality: Dissociation of response domains. International Journal of Psychophysiology 2016, 110, 47-55, 10.1016/j.ijpsycho.2016.10.008.

- Yulong Bian; Chenglei Yang; Fengqiang Gao; Hui-Yu Li; Shisheng Zhou; Hanchao Li; Xiaowen Sun; Xiangxu Meng; A framework for physiological indicators of flow in VR games: construction and preliminary evaluation. Personal and Ubiquitous Computing 2016, 20, 821-832, 10.1007/s00779-016-0953-5.

- Allison P. Anderson; Michael D. Mayer; Abigail M. Fellows; Devin R. Cowan; Mark T. Hegel; Jay C. Buckey; Relaxation with Immersive Natural Scenes Presented Using Virtual Reality. Aerospace Medicine and Human Performance 2017, 88, 520-526, 10.3357/amhp.4747.2017.

- Javier Marín-Morales; Juan Luis Higuera-Trujillo; Alberto Greco; Jaime Guixeres; Carmen Llinares; Claudio Gentili; Enzo Pasquale Scilingo; Mariano Alcañiz; Gaetano Valenza; Real vs. immersive-virtual emotional experience: Analysis of psycho-physiological patterns in a free exploration of an art museum. PLOS ONE 2019, 14, e0223881, 10.1371/journal.pone.0223881.

- Javier Marín-Morales; Juan Luis Higuera-Trujillo; Alberto Greco; Jaime Guixeres; Carmen Llinares; Enzo Pasquale Scilingo; Mariano Alcañiz; Gaetano Valenza; Affective computing in virtual reality: emotion recognition from brain and heartbeat dynamics using wearable sensors. Scientific Reports 2018, 8, 1-15, 10.1038/s41598-018-32063-4.

- Swantje Notzon; S. Deppermann; Andreas J Fallgatter; Julia Diemer; A. Kroczek; Katharina Domschke; Peter Zwanzger; Ann-Christine Ehlis; Psychophysiological effects of an iTBS modulated virtual reality challenge including participants with spider phobia. Biological Psychology 2015, 112, 66-76, 10.1016/j.biopsycho.2015.10.003.

- Mascha Van ’T Wout; Christopher M. Spofford; William S. Unger; Elizabeth B. Sevin; M. Tracie Shea; Skin Conductance Reactivity to Standardized Virtual Reality Combat Scenes in Veterans with PTSD. Applied Psychophysiology and Biofeedback 2017, 42, 209-221, 10.1007/s10484-017-9366-0.

- Christopher Stolz; Dominik Endres; Erik M. Mueller; Threat‐conditioned contexts modulate the late positive potential to faces—A mobile EEG/virtual reality study. Psychophysiology 2018, 56, e13308, 10.1111/psyp.13308.

- Oana Bălan; Gabriela Moise; Alin Moldoveanu; Marius Leordeanu; Florica Moldoveanu; An Investigation of Various Machine and Deep Learning Techniques Applied in Automatic Fear Level Detection and Acrophobia Virtual Therapy. Sensors 2020, 20, 496, 10.3390/s20020496.

- Marco Granato; Davide Gadia; Dario Maggiorini; Laura A. Ripamonti; An empirical study of players’ emotions in VR racing games based on a dataset of physiological data. Multimedia Tools and Applications 2020, 1-30, 10.1007/s11042-019-08585-y.

- Qiuyun Huang; Minyan Yang; Hao-Ann Jane; Shuhua Li; Nicole Bauer; Trees, grass, or concrete? The effects of different types of environments on stress reduction. Landscape and Urban Planning 2020, 193, 103654, 10.1016/j.landurbplan.2019.103654.

- Chai-Fen Tsai; Shih-Ching Yeh; Yanyan Huang; Zhengyu Wu; Jianjun Cui; Lirong Zheng; The Effect of Augmented Reality and Virtual Reality on Inducing Anxiety for Exposure Therapy: A Comparison Using Heart Rate Variability. Journal of Healthcare Engineering 2018, 2018, 1-8, 10.1155/2018/6357351.

- Frank H. Wilhelm; Monique C. Pfaltz; James J. Gross; Iris B. Mauss; Sun I. Kim; Brenda K. Wiederhold; Mechanisms of Virtual Reality Exposure Therapy: The Role of the Behavioral Activation and Behavioral Inhibition Systems. Applied Psychophysiology and Biofeedback 2005, 30, 271-284, 10.1007/s10484-005-6383-1.

- Patrick Zimmer; C. Carolyn Wu; Gregor Domes; Same same but different? Replicating the real surroundings in a virtual trier social stress test (TSST-VR) does not enhance presence or the psychophysiological stress response. Physiology & Behavior 2019, 212, 112690, 10.1016/j.physbeh.2019.112690.