Eugene Garfield introduced information systems that made the discovery of scientific information much more efficient. The Institute for Scientific Information (ISI), which he founded, developed innovative information products and provided current scientific information to researchers all over the world. Garfield introduced the citation as a qualitative measure of academic impact and propelled the concepts of “citation indexing” and “citation linking”, paving the way for today’s search engines. He created the Science Citation Index (SCI), which provided a new way of retrieving, organizing, disseminating, and using scientific information; triggered the development of new disciplines (scientometrics, informetrics, webometrics); and became the foundation for building important new information products. The Journal Impact Factor, initially established to select journals for the SCI, became the most widely accepted tool for measuring academic impact. Garfield actively promoted English as the international language of science and became a powerful force in the globalization of research. His ideas revolutionized science and dramatically influenced the culture of research. This Encyclopedia entry is based on the following article:

Eugene Garfield

I had always envisaged a time when scholars would become citation conscious, and to a large extent they have, for information retrieval, evaluation, and measuring impact…I did not imagine that the worldwide scholarly enterprise would grow to its present size, or that bibliometrics would become so widespread.

Eugene Garfield

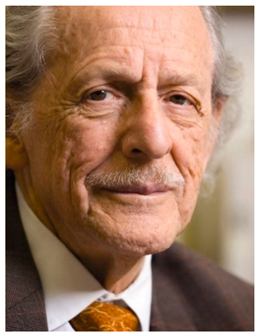

Eugene Garfield advanced the theory and practice of information science, raised awareness about citations and academic impact, actively promoted English as the lingua franca of science, and became a powerful force in the globalization of research. He founded the Institute for Scientific Information (ISI) (Figure 1), which developed innovative information products and provided current scientific information to researchers all over the world. The creation of the Science Citation Index (SCI) is one of the most important events in modern science. Garfield’s idea of using citations in articles to index scientific literature offered a new and more efficient way of collecting, analyzing, disseminating, and discovering scientific information. The SCI laid the foundation for building important new information products such as Web of Science (one of the most widely used databases for finding scientific literature today), Essential Science Indicators, and Journal Citation Reports. It triggered the development of new disciplines (such as scientometrics, informetrics, and webometrics) and preceded search engines, which use “citation linking”, a core concept of the SCI, to connect and rank documents. Some have called Garfield “The Grandfather of Google”. “Citation linking” was probably considered by Sergey Brin and Larry Page for the article in which they first mentioned Google. Many articles, books, conference presentations, and interviews discuss and analyze Garfield’s ideas and legacy [1][2][3][4][5][6][7][8][9][10][11][12][13][14][15].

Figure 1. Institute for Scientific Information (ISI) at 3501 Market Street, Philadelphia. Courtesy of Eugene Garfield’s personal archive.

1. Revolutionizing Scientific Information

The citation becomes the subject … It was a radical approach to retrieving information.

Eugene Garfield (interviewed by Eric Ramsay) [13]

While working at the Welch Medical Library at Johns Hopkins University in the early 1950s, Garfield studied the role and importance of review articles and their linguistic structure. He concluded that each sentence in a review article could be an indexing statement and that that there should be a way to structure it. About that time, he also became interested in the idea of creating an equivalent to Shepard’s Citations (a legal index for case law) for science. Garfield described in an interview how these events led him to conceive the SCI

[1]. The SCI is a multidisciplinary directory to scientific literature, which facilitates interdisciplinary research. It provided a new way of organizing, disseminating, and retrieving scientific information. Garfield introduced the SCI as a “new dimension in indexing”

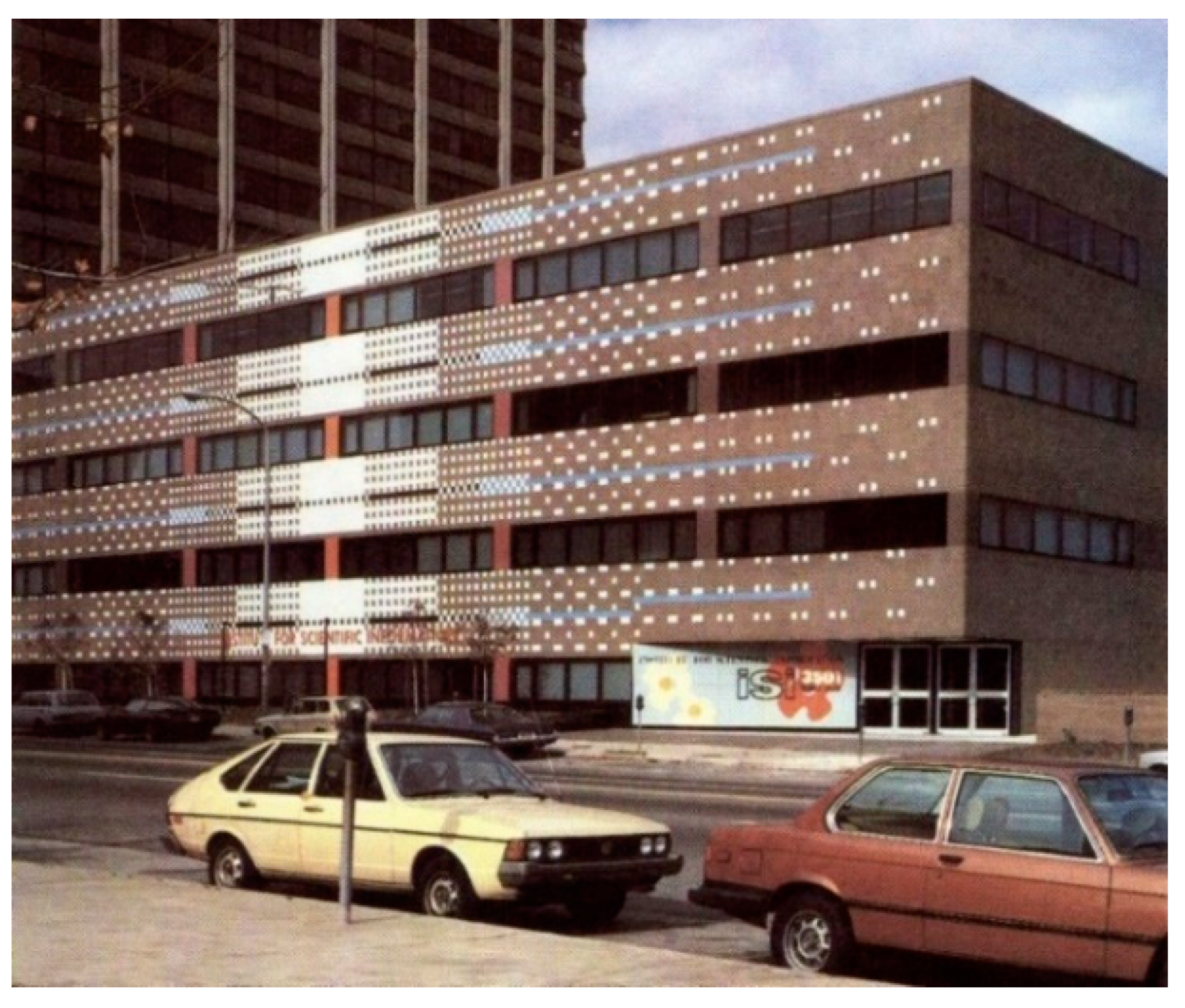

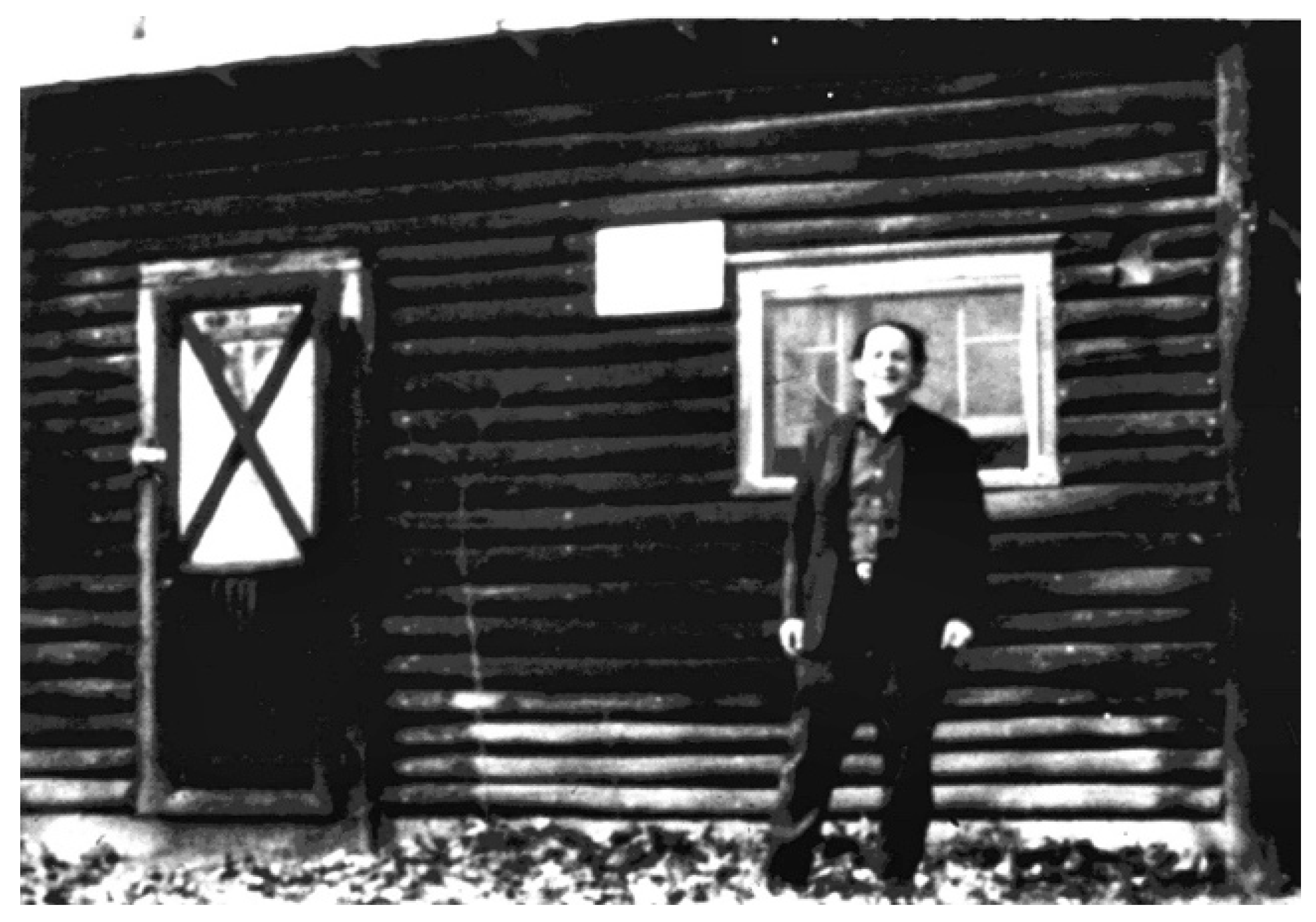

[2]. Citation indexing uses the concept that the literature cited in an article represents the most significant research performed before. It stipulates that, when an article cites another article, there should be something in common between the two. In this case, each bibliographic citation acts as a descriptor or symbol that defines a question (treated from a certain aspect in the cited work). It is strange to imagine today that one of the most important resources for modern science was created in a converted chicken coop in rural New Jersey (Figure 2). The citation indexes produced by ISI were the most important sources of bibliometric information before Reed Elsevier launched Scopus in 2004. Most of the products associated with Garfield’s legacy are now part of the Clarivate Analytics’ platform Web of Science (WoS).
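The citation indexing idea described in this section, in which each cited work acts as an index entry pointing to the papers that cite it, can be illustrated with a minimal sketch. This is not the SCI's actual implementation; the function name `build_citation_index` and the paper labels are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def build_citation_index(references):
    """Invert a {citing paper: [cited papers]} mapping.

    Returns {cited paper: sorted list of the papers that cite it}.
    """
    index = defaultdict(set)
    for citing, cited_list in references.items():
        for cited in cited_list:
            index[cited].add(citing)
    return {cited: sorted(citing) for cited, citing in index.items()}


# Toy reference lists; the paper labels are invented for illustration.
references = {
    "paper_C": ["paper_A", "paper_B"],
    "paper_D": ["paper_A"],
    "paper_E": ["paper_C", "paper_A"],
}

citation_index = build_citation_index(references)
print(citation_index["paper_A"])  # ['paper_C', 'paper_D', 'paper_E']
```

Looking up a known paper in such an index returns later work that cites it, which is the "citation becomes the subject" approach to retrieval that the quotation at the start of this section refers to.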

Figure 2. Eugene Garfield in front of the converted chicken coop in Thorofare, NJ, where he started the Science Citation Index. With permission from Eugene Garfield’s personal archive.

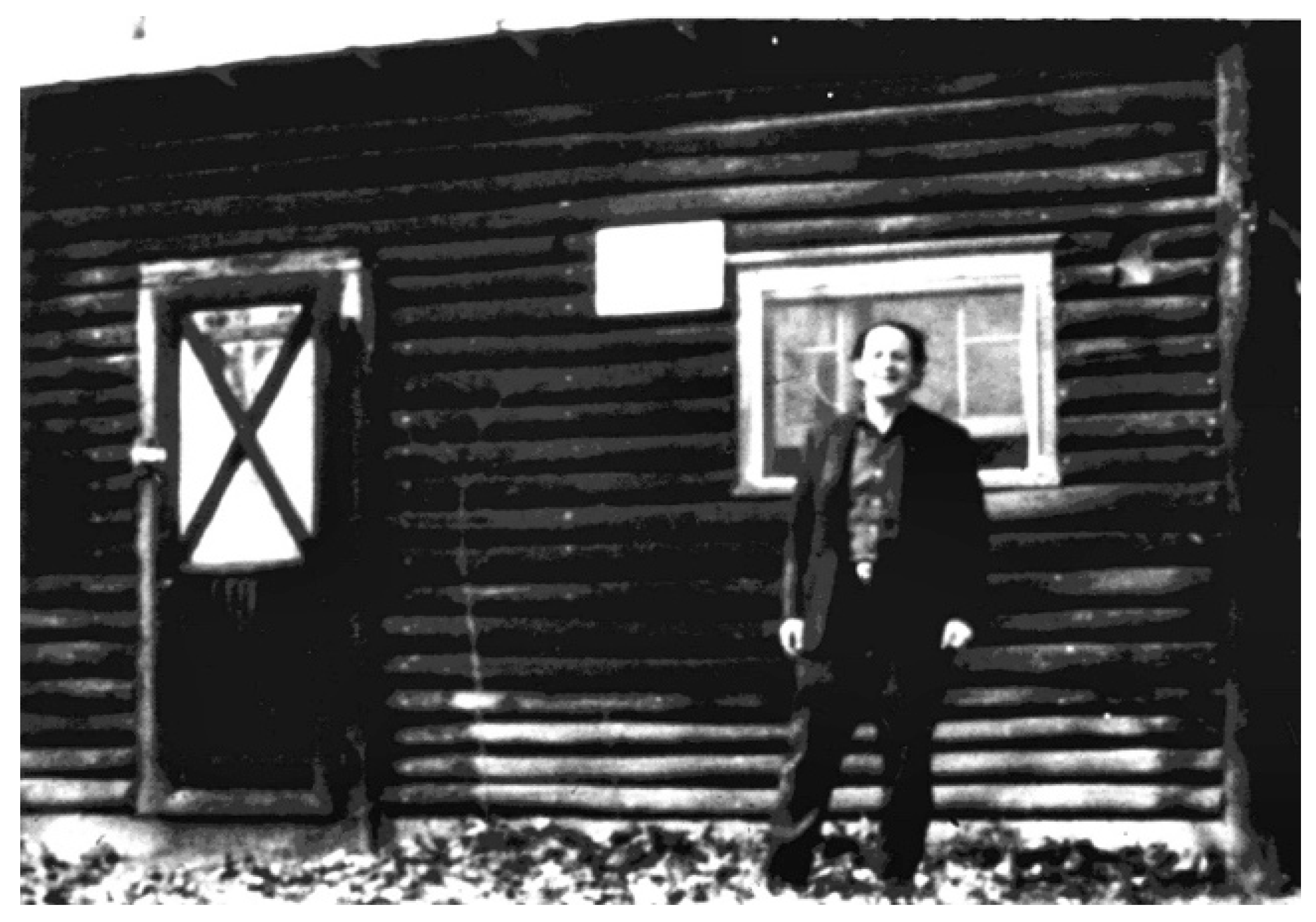

Some critical developments prepared the ground for the emergence of a tool such as the SCI. In the years after World War II, there was a significant increase in funding for research, which led to a rapid expansion of science and of the scientific literature. There was widespread dissatisfaction with the traditional indexing and abstracting services, because they were discipline-focused and took a long time to reach users. Researchers wanted recognition, and there was a need for quantifiable tools to evaluate journals and individuals’ work. The Journal Impact Factor (IF) has become the most widely accepted tool for measuring the quality of journals. A by-product of the SCI, it was originally designed as a tool to select journals for coverage in the SCI. It has since been used, largely inappropriately, to measure the quality of individual researchers’ work, as well as in science policy and research funding. As researchers are evaluated, hired, promoted, and funded based on the impact of their work, the importance of publishing in high-impact journals has created an extremely competitive environment, where the “publish or perish” philosophy dominates academic life. In his book, "Citation Indexing—Its Theory and Application in Science, Technology, and Humanities", Garfield presented the core concepts on which the SCI was built [16] (Figure 3). Garfield will be remembered mostly for the Current Contents and the Science Citation Index (SCI), but other important information products are also part of his legacy (Table 1).

Figure 3. Garfield’s book on citation indexing that laid out the core concepts of the SCI.

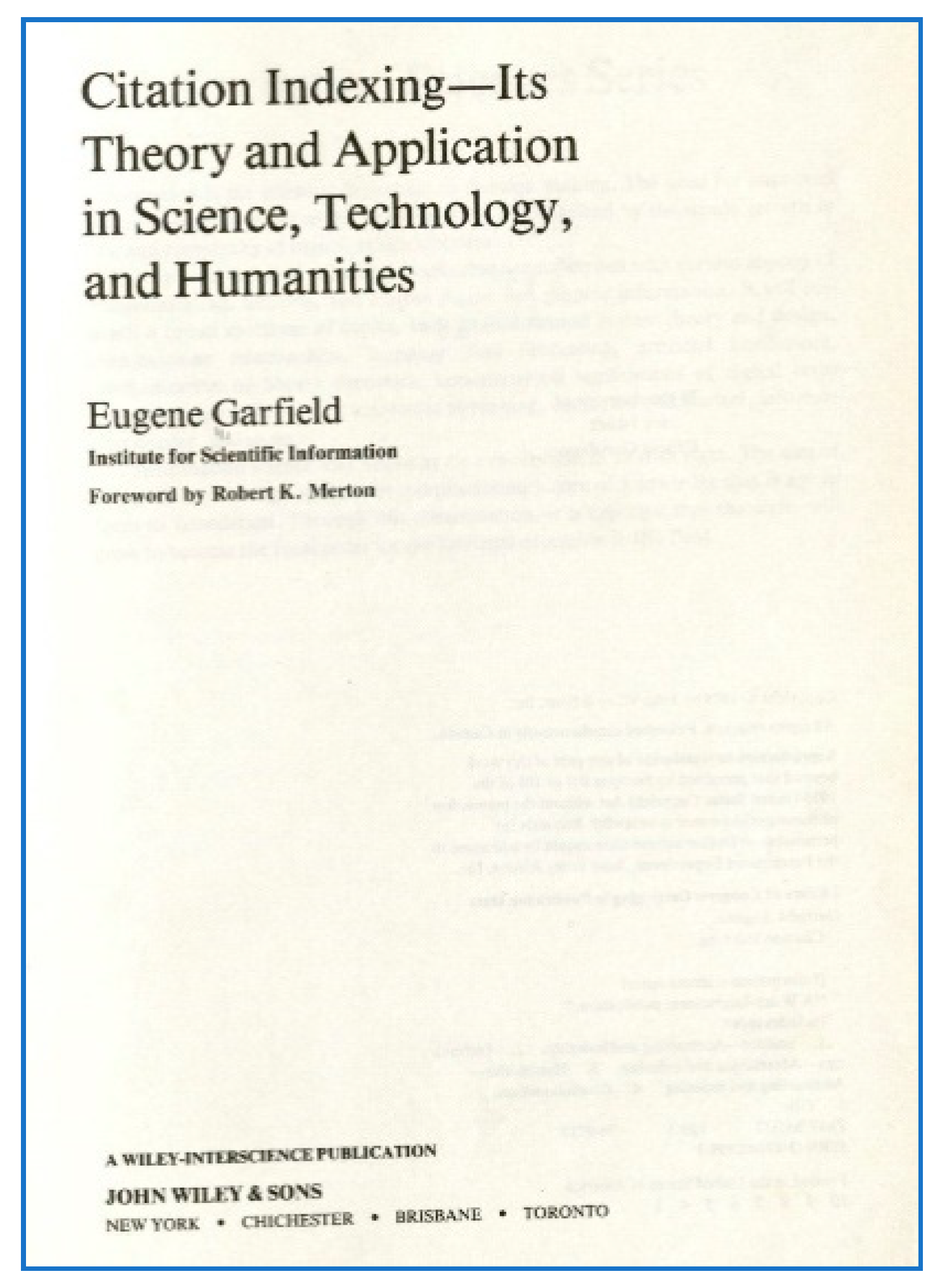

Table 1. Information products associated with the legacy of Eugene Garfield.

| Product | Features |
| --- | --- |
| Science Citation Index (SCI) * | A citation database launched by ISI in 1964. Science Citation Index Expanded covers over 8500 major journals, across 150 disciplines, from 1900 to the present. |
| Social Sciences Citation Index (SSCI) * | A citation database, covering over 3200 of the world’s leading academic journals in the social sciences across more than 55 disciplines, as well as selected items from 3500 of the world’s leading scientific and technical journals, from 1900 to present. |
| Arts & Humanities Citation Index (A&HCI) * | A citation database covering over 1700 arts and humanities fully indexed journals, as well as selected items from over 250 scientific and social sciences journals, from 1975 to present. |
| Index Chemicus * | A text- and substructure-searchable database, offering full graphical summaries, important reaction diagrams, and complete bibliographic information from over 100 of the world’s leading organic chemistry journals. Included in Clarivate Analytics’ Web of Science platform. |
| Current Chemical Reactions * | A database containing single- and multi-step new synthetic methods. The overall reaction flow is provided for each method, along with a detailed and accurate graphical representation of each reaction step. Included in Clarivate Analytics’ Web of Science platform. |
| Current Contents | A weekly journal (first published in print) reproducing the table of contents from journal issues of major peer-reviewed scientific journals published only a few weeks ago—a shorter time lag than any service then available. Contained an author index and a keyword subject index. Author addresses were provided so readers could send reprint requests for copies of the actual articles. Current status: Still published in print, it is available as one of the databases included in Clarivate Analytics’ platform. |
| Essays of an Information Scientist | Eugene Garfield, Essays of an Information Scientist, Volumes 1–15, Philadelphia: ISI Press. A 15-volume series, which includes the original essays of Eugene Garfield published in the Current Contents, as well as some of his articles published elsewhere. |
| Web of Science Core Collection * | Includes the Science Citation Index Expanded, Social Sciences Citation Index, Arts & Humanities Citation Index, Emerging Sources Citation Index, Book Citation Index, and Conference Proceedings Citation Index. |
| Journal Citation Reports (JCR) * | Publishes the annual journal impact factors of scientific journals and provides metrics and analysis for them. As of 1 June 2019, it covered 11,500+ indexed journals, 230+ disciplines, 80 countries/regions, and 2.2 million articles, reviews, and other source items. |
| InCites Essential Science Indicators (ESI) * | A compilation of science performance statistics and science trends data based on journal article publication counts and citation data from Clarivate Analytics’ databases, which shows the influential individuals, institutions, papers, publications, and countries in their field of study, as well as emerging research areas that can impact their work. |
| The Scientist * | A newspaper for scientists. |