Since the end of the 20th century, supercomputer operating systems have undergone major transformations, driven by fundamental changes in supercomputer architecture. While early operating systems were custom-tailored to each supercomputer to gain speed, the trend has moved away from in-house operating systems and toward some form of Linux, which ran on all of the supercomputers on the TOP500 list in November 2017. Because modern massively parallel supercomputers typically separate computation from other services by using multiple types of nodes, they usually run different operating systems on different nodes, e.g., a small and efficient lightweight kernel such as Compute Node Kernel (CNK) or Compute Node Linux (CNL) on compute nodes, but a larger system such as a Linux derivative on server and input/output (I/O) nodes. In a traditional multi-user computer system, job scheduling is in effect a task scheduling problem for processing and peripheral resources. In a massively parallel system, however, the job management system must manage the allocation of both computational and communication resources, and must also deal gracefully with the hardware failures that are inevitable when tens of thousands of processors are present. Although most modern supercomputers use Linux, each manufacturer has made its own specific changes to the Linux derivative it uses, and no industry standard exists, partly because differences in hardware architecture require the operating system to be optimized for each hardware design.

- massively parallel

- job scheduling

- supercomputer

1. Context and Overview

In the early days of supercomputing, the basic architectural concepts were evolving rapidly, and system software had to keep pace with hardware innovations that often changed direction quickly.[1] In the early systems, operating systems were custom-tailored to each supercomputer to gain speed, yet in the rush to develop them, serious software quality problems surfaced, and in many cases the cost and complexity of system software development became as much an issue as that of hardware.[1]

In the 1980s, Cray's software development costs came to equal its hardware spending, and that trend was partly responsible for a move away from in-house operating systems toward adapting generic software.[2] The first wave of operating system changes came in the mid-1980s, as vendor-specific operating systems were abandoned in favor of Unix. Despite early skepticism, this transition proved successful.[1][2]

By the early 1990s, major changes were occurring in supercomputing system software.[1] By this time, the growing use of Unix had begun to change the way system software was viewed. Implementing the operating system in a high-level language (C) and relying on standardized interfaces contrasted with the assembly-language-oriented approaches of the past.[1] As hardware vendors adapted Unix to their systems, they added new and useful features to it, e.g., fast file systems and tunable process schedulers.[1] However, every company that adapted Unix made its own unique changes, rather than collaborating on an industry standard to create a "Unix for supercomputers". This was partly because differences in their architectures required these changes to optimize Unix for each architecture.[1]

Thus, as general-purpose operating systems became stable, supercomputers began to borrow and adapt critical system code from them and to rely on the rich set of secondary functions that came with them, rather than reinventing the wheel.[1] At the same time, however, the code base of general-purpose operating systems was growing rapidly. By the time Unix-based code had reached 500,000 lines, maintaining and using it had become a challenge.[1] This led to a move toward microkernels, which implement only a minimal set of operating system functions. Mach at Carnegie Mellon University and ChorusOS at INRIA were examples of early microkernels.[1]

Splitting the operating system into separate components became necessary as supercomputers developed different types of nodes, e.g., compute nodes versus I/O nodes. Thus modern supercomputers usually run different operating systems on different nodes, e.g., a small and efficient lightweight kernel such as CNK or CNL on compute nodes, but a larger system such as a Linux derivative on server and I/O nodes.[3][4]

2. Early Systems

The CDC 6600, generally considered the first supercomputer in the world, ran the Chippewa Operating System, which was then deployed on various other CDC 6000 series computers.[6] Chippewa was a rather simple, job-control-oriented system derived from the earlier CDC 3000, but it influenced the later KRONOS and SCOPE systems.[6][7]

The first Cray-1 was delivered to the Los Alamos Lab with no operating system or any other software.[8] Los Alamos developed both the application software and the operating system for it.[8] The main timesharing system for the Cray-1, the Cray Time Sharing System (CTSS), was then developed at the Livermore Labs as a direct descendant of the Livermore Time Sharing System (LTSS), the CDC 6600 operating system from twenty years earlier.[8]

In developing supercomputers, rising software costs soon became dominant, as evidenced by Cray's software development costs growing in the 1980s to equal its hardware costs.[2] That trend was partly responsible for a move away from the in-house Cray Operating System to UNICOS, a Unix-based system.[2] In 1985, the Cray-2 became the first system to ship with the UNICOS operating system.[9]

Around the same time, ETA Systems developed the EOS operating system for its ETA10 supercomputers.[10] Written in Cybil, a Pascal-like language from Control Data Corporation, EOS highlighted the difficulty of developing stable operating systems for supercomputers, and eventually a Unix-like system was offered on the same machine.[10][11] The lessons learned from developing the ETA system software included the high level of risk associated with developing a new supercomputer operating system, and the advantages of using Unix with its large existing base of system software libraries.[10]

By the mid-1990s, despite the existing investment in older operating systems, the trend was toward Unix-based systems, which also facilitated the use of interactive graphical user interfaces (GUIs) for scientific computing across multiple platforms.[12] The move toward a commodity OS had opponents, who cited the fast pace and focus of Linux development as a major obstacle to adoption.[13] As one author wrote, "Linux will likely catch up, but we have large-scale systems now." Nevertheless, the trend continued to gain momentum, and by 2005 virtually all supercomputers used some Unix-like OS.[14] These Unix-like systems included IBM AIX, the open-source Linux system, and other adaptations such as UNICOS from Cray.[14] By the end of the 20th century, Linux was estimated to command the highest share of the supercomputing pie.[1][15]

3. Modern Approaches

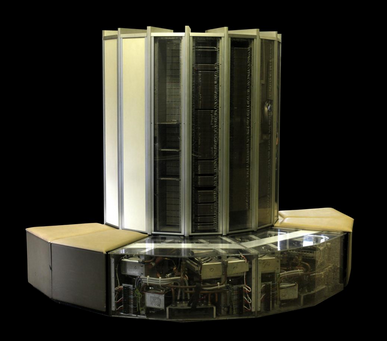

The IBM Blue Gene supercomputer uses the CNK operating system on the compute nodes, but uses a modified Linux-based kernel called I/O Node Kernel (INK) on the I/O nodes.[3][16] CNK is a lightweight kernel that runs on each node and supports a single application running for a single user on that node. For the sake of efficient operation, the design of CNK was kept simple and minimal: physical memory is statically mapped, and CNK neither needs nor provides scheduling or context switching.[3] CNK does not even implement file I/O on the compute node, but delegates it to dedicated I/O nodes.[16] However, because multiple compute nodes on the Blue Gene share a single I/O node, the I/O node operating system does require multitasking, hence the choice of a Linux-based operating system.[3][16]
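
To make this division of labor concrete, here is a minimal Python sketch of I/O forwarding under a greatly simplified model. The names `ComputeNode`, `IONode`, `WriteRequest` and the in-memory `files` dictionary are hypothetical stand-ins, not CNK or INK interfaces. Compute nodes contain no file-system code and only ship requests; the single I/O node drains requests arriving concurrently from several compute nodes, which is the reason it needs a multitasking OS.

```python
import queue
import threading
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical model of I/O forwarding: compute nodes have no file-system
# code and forward every write to a shared I/O node, whose multitasking
# OS services requests from many compute nodes.


@dataclass
class WriteRequest:
    compute_node: int
    path: str
    data: bytes


@dataclass
class IONode:
    # Requests from all compute nodes arrive on one shared queue.
    requests: "queue.Queue[Optional[WriteRequest]]" = field(default_factory=queue.Queue)
    # Stand-in for a real file system: path -> list of (node id, data).
    files: dict = field(default_factory=dict)

    def serve(self) -> None:
        # Daemon loop: handle forwarded requests until a None sentinel arrives.
        while True:
            req = self.requests.get()
            if req is None:
                break
            self.files.setdefault(req.path, []).append((req.compute_node, req.data))


class ComputeNode:
    def __init__(self, node_id: int, io_node: IONode) -> None:
        self.node_id = node_id
        self.io_node = io_node

    def write(self, path: str, data: bytes) -> None:
        # No local file I/O: package the call and ship it to the I/O node.
        self.io_node.requests.put(WriteRequest(self.node_id, path, data))


def run(num_compute_nodes: int = 4) -> dict:
    io_node = IONode()
    daemon = threading.Thread(target=io_node.serve)
    daemon.start()
    # Each compute node writes concurrently, modeled as a separate thread.
    workers = [
        threading.Thread(
            target=ComputeNode(i, io_node).write,
            args=("/out/result.dat", f"rank {i}".encode()),
        )
        for i in range(num_compute_nodes)
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    io_node.requests.put(None)  # all writes are queued; tell the daemon to stop
    daemon.join()
    return io_node.files


if __name__ == "__main__":
    print(run())
```

Because the compute-node threads run concurrently, requests may reach the I/O node in any order. A real I/O node kernel would also have to handle reads, errors, and many open files, all of which this sketch omits.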

In traditional multi-user computer systems and early supercomputers, job scheduling was in effect a task scheduling problem for processing and peripheral resources; in a massively parallel system, by contrast, the job management system must manage the allocation of both computational and communication resources.[17] Task scheduling, and the operating system itself, must therefore be tuned for the different configurations of a supercomputer. A typical parallel job scheduler has a master scheduler that instructs some number of slave schedulers to launch, monitor, and control parallel jobs, and that periodically receives status reports from them on job progress.[17]
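
The master/slave structure described above can be sketched as a small, single-process Python simulation. This is a hypothetical illustration, not the design of any particular scheduler: `MasterScheduler`, `SlaveScheduler`, the per-partition node counts, and the first-fit placement policy are all assumptions, and time advances in discrete steps rather than through real process monitoring.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Job:
    job_id: str
    nodes: int        # nodes requested
    runtime: int      # simulated time steps
    remaining: int = field(init=False)

    def __post_init__(self) -> None:
        self.remaining = self.runtime


class SlaveScheduler:
    """Hypothetical per-partition agent: launches, monitors, and reports on jobs."""

    def __init__(self, name: str, nodes: int) -> None:
        self.name = name
        self.free = nodes
        self.running: List[Job] = []

    def launch(self, job: Job) -> None:
        self.free -= job.nodes
        self.running.append(job)

    def monitor(self) -> None:
        # Advance every running job by one simulated time step.
        for job in self.running:
            job.remaining -= 1

    def report(self) -> dict:
        # Release the nodes of finished jobs and summarize status for the master.
        done = [j for j in self.running if j.remaining <= 0]
        self.running = [j for j in self.running if j.remaining > 0]
        self.free += sum(j.nodes for j in done)
        return {"partition": self.name, "free": self.free,
                "finished": [j.job_id for j in done]}


class MasterScheduler:
    def __init__(self, slaves: List[SlaveScheduler]) -> None:
        self.slaves = slaves
        self.pending: List[Job] = []
        self.completed: List[str] = []

    def submit(self, job: Job) -> None:
        self.pending.append(job)

    def _place(self, job: Job) -> Optional[SlaveScheduler]:
        # First fit: the first partition with enough free nodes.
        return next((s for s in self.slaves if s.free >= job.nodes), None)

    def step(self) -> None:
        # 1. Instruct slaves to launch whichever pending jobs fit.
        waiting = []
        for job in self.pending:
            slave = self._place(job)
            if slave is None:
                waiting.append(job)
            else:
                slave.launch(job)
        self.pending = waiting
        # 2. Slaves monitor their jobs, then 3. report back to the master.
        for s in self.slaves:
            s.monitor()
        for s in self.slaves:
            self.completed.extend(s.report()["finished"])


if __name__ == "__main__":
    master = MasterScheduler([SlaveScheduler("A", 4), SlaveScheduler("B", 2)])
    for job in (Job("j1", 4, 2), Job("j2", 2, 1), Job("j3", 4, 1)):
        master.submit(job)
    for _ in range(3):
        master.step()
    print(master.completed)  # ['j2', 'j1', 'j3']
```

In the usage example, `j3` cannot start until `j1` releases partition A, so jobs complete in the order `j2`, `j1`, `j3`. Because placement scans the whole pending list, a small job may start ahead of a larger one that does not yet fit; production schedulers usually constrain this with reservations.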

Some, but not all, supercomputer schedulers attempt to maintain locality of job execution. The PBS Pro scheduler used on the Cray XT3 and Cray XT4 systems does not attempt to optimize locality on its three-dimensional torus interconnect, but simply uses the first available processor.[18] IBM's scheduler on the Blue Gene supercomputers, on the other hand, aims to exploit locality and minimize network contention by assigning tasks from the same application to one or more midplanes of an 8×8×8 node group.[18] The Slurm Workload Manager scheduler uses a best-fit algorithm and performs Hilbert curve scheduling to optimize the locality of task assignments.[18] Several modern supercomputers, such as the Tianhe-2, use Slurm, which arbitrates contention for resources across the system. Slurm is open source, Linux-based, and highly scalable, and it can manage thousands of nodes in a computer cluster with a sustained throughput of over 100,000 jobs per hour.[19][20]
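
The difference between taking the first available processors and a locality-aware best-fit policy can be illustrated with a small Python sketch. It assumes a 2D n×n grid without wraparound instead of a 3D torus, and it only loosely imitates the approach attributed to Slurm above: nodes are ordered along a Hilbert curve, which keeps consecutive positions physically adjacent, and a request is placed in the smallest run of consecutive free nodes that can hold it. All function names are hypothetical, and Slurm's actual implementation differs in many details.

```python
from itertools import combinations
from typing import List, Optional, Set, Tuple

Cell = Tuple[int, int]


def _rot(s: int, x: int, y: int, rx: int, ry: int) -> Cell:
    # Rotate/flip a quadrant so that the sub-curves join up correctly.
    if ry == 0:
        if rx == 1:
            x, y = s - 1 - x, s - 1 - y
        x, y = y, x
    return x, y


def hilbert_d2xy(n: int, d: int) -> Cell:
    # Map distance d along a Hilbert curve to (x, y) on an n x n grid
    # (n must be a power of two). Standard iterative construction.
    x = y = 0
    s, t = 1, d
    while s < n:
        rx = 1 & (t // 2)
        ry = 1 & (t ^ rx)
        x, y = _rot(s, x, y, rx, ry)
        x += s * rx
        y += s * ry
        t //= 4
        s *= 2
    return x, y


def hilbert_order(n: int) -> List[Cell]:
    return [hilbert_d2xy(n, d) for d in range(n * n)]


def first_available(n: int, busy: Set[Cell], k: int) -> Optional[List[Cell]]:
    # Take the first k free nodes in plain row-major order, ignoring topology.
    free = [(x, y) for y in range(n) for x in range(n) if (x, y) not in busy]
    return free[:k] if len(free) >= k else None


def free_runs(order: List[Cell], busy: Set[Cell]) -> List[Tuple[int, int]]:
    # Maximal runs [start, end) of consecutive free positions along the curve.
    runs, start = [], None
    for i, cell in enumerate(order):
        if cell in busy:
            if start is not None:
                runs.append((start, i))
                start = None
        elif start is None:
            start = i
    if start is not None:
        runs.append((start, len(order)))
    return runs


def best_fit_hilbert(n: int, busy: Set[Cell], k: int) -> Optional[List[Cell]]:
    # Best fit: the smallest run of free curve positions that holds k nodes.
    order = hilbert_order(n)
    fits = [(s, e) for s, e in free_runs(order, busy) if e - s >= k]
    if not fits:
        return None  # a real scheduler might fall back to a scattered allocation
    s, _ = min(fits, key=lambda r: r[1] - r[0])
    return order[s:s + k]


def max_hops(cells: List[Cell]) -> int:
    # Largest Manhattan distance between two allocated nodes: a rough proxy
    # for communication distance (no torus wraparound).
    return max((abs(a[0] - b[0]) + abs(a[1] - b[1])
                for a, b in combinations(cells, 2)), default=0)


if __name__ == "__main__":
    print(max_hops(first_available(4, set(), 4)))   # 3: a 1x4 row
    print(max_hops(best_fit_hilbert(4, set(), 4)))  # 2: a 2x2 block
```

On an empty 4×4 grid, a 4-node request under `first_available` receives a row of four nodes (maximum distance 3), whereas `best_fit_hilbert` receives a 2×2 block (maximum distance 2). Choosing the smallest fitting run, rather than the first, leaves larger contiguous regions free for later, larger jobs.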

The content is sourced from: https://handwiki.org/wiki/Software:Supercomputer_operating_systems

References

- [1] Encyclopedia of Parallel Computing, by David Padua, 2011. ISBN 0-387-09765-1, pages 426-429.

- [2] Knowing Machines: Essays on Technical Change, by Donald MacKenzie, 1998. ISBN 0-262-63188-1, pages 149-151.

- [3] Euro-Par 2004 Parallel Processing: 10th International Euro-Par Conference, 2004, by Marco Danelutto, Marco Vanneschi and Domenico Laforenza. ISBN 3-540-22924-8, page 835.

- [4] Sadaf R. Alam et al., "An Evaluation of the Oak Ridge National Laboratory Cray XT3", International Journal of High Performance Computing Applications, vol. 22, no. 1, February 2008, pages 52-80.

- [5] Targeting the Computer: Government Support and International Competition, by Kenneth Flamm, 1987. ISBN 0-8157-2851-4, page 82.

- [6] The Computer Revolution in Canada, by John N. Vardalas, 2001. ISBN 0-262-22064-4, page 258.

- [7] Design of a Computer: The Control Data 6600, by James E. Thornton, Scott, Foresman Press, 1970, page 163.

- [8] Targeting the Computer: Government Support and International Competition, by Kenneth Flamm, 1987. ISBN 0-8157-2851-4, pages 81-83.

- [9] Lester T. Davis, "The Balance of Power: A Brief History of Cray Research Hardware Architectures", in High Performance Computing: Technology, Methods, and Applications, by J. J. Dongarra, 1995. ISBN 0-444-82163-5, page 126.

- [10] Lloyd M. Thorndyke, "The Demise of the ETA Systems", in Frontiers of Supercomputing II, by Karyn R. Ames and Alan Brenner, 1994. ISBN 0-520-08401-2, pages 489-497.

- [11] Past, Present, Parallel: A Survey of Available Parallel Computer Systems, by Arthur Trew, 1991. ISBN 3-540-19664-1, page 326.

- [12] Frontiers of Supercomputing II, by Karyn R. Ames and Alan Brenner, 1994. ISBN 0-520-08401-2, page 356.

- [13] Ron Brightwell, Rolf Riesen and Arthur Maccabe, "On the Appropriateness of Commodity Operating Systems for Large-Scale, Balanced Computing Systems". http://www.sandia.gov/~rbbrigh/slides/conferences/commodity-os-ipdps03-slides.pdf. Retrieved January 29, 2013.

- [14] Getting Up to Speed: The Future of Supercomputing, by Susan L. Graham, Marc Snir and Cynthia A. Patterson, National Research Council, 2005. ISBN 0-309-09502-6, page 136.

- [15] "Linux Rules Supercomputers", Forbes magazine, March 15, 2005. https://www.forbes.com/2005/03/15/cz_dl_0315linux.html

- [16] Euro-Par 2006 Parallel Processing: 12th International Euro-Par Conference, 2006, by Wolfgang E. Nagel, Wolfgang V. Walter and Wolfgang Lehner. ISBN 3-540-37783-2, page

- [17] Yariv Aridor et al., "Open Job Management Architecture for the Blue Gene/L Supercomputer", in Job Scheduling Strategies for Parallel Processing, by Dror G. Feitelson, 2005. ISBN 978-3-540-31024-2, pages 95-101.

- [18] Job Scheduling Strategies for Parallel Processing, by Eitan Frachtenberg and Uwe Schwiegelshohn, 2010. ISBN 3-642-04632-0, pages 138-144.

- [19] SLURM at SchedMD. http://slurm.schedmd.com/

- [20] M. Jette and M. Grondona, "SLURM: Simple Linux Utility for Resource Management", in Proceedings of ClusterWorld Conference, San Jose, California, June 2003.