

Subjects: Imaging Science & Photographic Technology | Engineering, Electrical & Electronic | Computer Science, Interdisciplinary Applications

The successful investigation and prosecution of cases involving child pornography, insurance fraud, movie piracy, traffic monitoring, and scientific fraud hinge largely on the availability of solid evidence that establishes the case beyond reasonable doubt. When digital images or videos serve as evidence in such investigations, there is a critical need to conclusively prove the source camera/device of the questioned image.

- source camera identification

- camera brand source identification

- camera model source identification

- image source camera identification

- Photo Response Non-Uniformity

- computer forensic

- image forensic

1. Introduction

Research interest in digital image forensics has grown significantly in the last few years. Advanced and affordable devices have made the acquisition and manipulation of digital images, once a professional job, easily accessible to the public, which leaves room for untrusted images and videos to circulate. According to Su, Zhang, and Ji [1], the advancement in digital technology and the increasing number of image- and video-sharing platforms such as YouTube, Facebook, and Twitter have helped spread various kinds of less trusted images from individual sources on the internet. Digital forensic investigation is therefore more complex than ever: digital devices advance rapidly, virtually all human activities rely on them, and users exploit them for both good and malicious purposes. Unlike other forensic evidence, image and video recordings provide a real-time eyewitness account that lets investigators, prosecutors, and the jury see or hear exactly what transpired. A technology capable of reliably identifying the digital device that captured an image is therefore crucial. This capability supports law enforcement officers and prosecutors in criminal investigations covering areas such as child pornography, insurance claims, movie piracy, traffic monitoring, and financial fraud. The challenge then becomes: can it be genuinely established that the digital image came from the alleged camera? The need to tackle this and related challenges gave rise to what is today known as image forensics. Identifying the source of an image is a vital aspect of digital forensics, as highlighted by Chen et al. [2], who emphasized that determining the acquisition device of an image presented as evidence in court is as crucial as the digital image itself. The goal of source camera identification is to determine whether a given image was captured with a specific camera, including details about the camera model/brand and the imaging mechanism employed (such as camera, scanner, computer graphics, or smartphone). According to Thai, Retraint, and Cogranne [3], current active approaches such as digital signatures and digital watermarking have drawbacks, as they require specialized information to be embedded during image generation. Elaborating on these limitations, Chio, Lam, and Wong [4] noted that many camera images contain an exchangeable image file format (EXIF) header, which includes information such as the type of digital camera, exposure, date, and time. This information, however, can be maliciously altered and can be destroyed when the image is edited. The drawbacks of active techniques gave rise to passive techniques, which Thai et al. [3] argued have received significant attention in the last decade because they impose no constraints and require no prior knowledge. Only the suspicious digital image is available to forensic analysts, who can extract meaningful information from it to gather forensic evidence, track down the capture device, or discover any alteration. According to Bernacki [5], the internal traces or unique artefacts that the digital camera leaves in each image serve as the camera fingerprints used in passive techniques, and investigating the image acquisition pipeline can reveal these traces.

2. Image Source Camera Identification Techniques

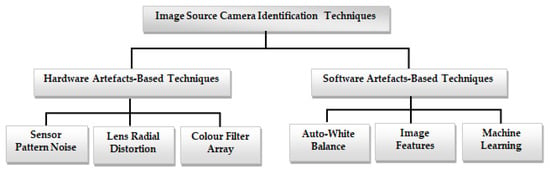

To help with image forensic investigations, researchers have introduced different methods for image source camera identification [9,10]. This section gives a comprehensive overview of these methods, covering those based on intrinsic hardware artefacts resulting from manufacturing imperfections and those that exploit software-related properties. Intrinsic hardware-related flaws that can be exploited in image source camera identification include sensor pattern noise, lens radial distortion, and sensor dust, among others. Software artefact-based methods extract the camera fingerprint from the characteristics and artefacts left by camera software, such as auto white balance approximation and colour filter array interpolation, among others. Figure 1 shows the taxonomy of image source camera identification techniques.

Figure 1. Taxonomy of image source camera identification techniques.

2.1. Sensor Pattern Noise-Based Techniques

A flaw in the manufacturing process of the image sensor chip, which creates pixel-to-pixel sensitivity variation in the imaging sensor, is the source of sensor pattern noise (SPN). This pattern noise has a distinctive quality that ties it to a specific camera imaging sensor and therefore provides a “fingerprint” of that digital camera. The main component of SPN is the photo response non-uniformity (PRNU) noise. Analyzing the PRNU noise, which is treated as a unique camera fingerprint, is therefore one of the trustworthy techniques for image source camera identification using SPN. After the sensing process, the image undergoes further processing stages such as demosaicking, interpolation, and gamma correction. Even after all of this, the image still carries the pattern noise, much like the scratches left on a fired bullet, because none of these processes removes it.

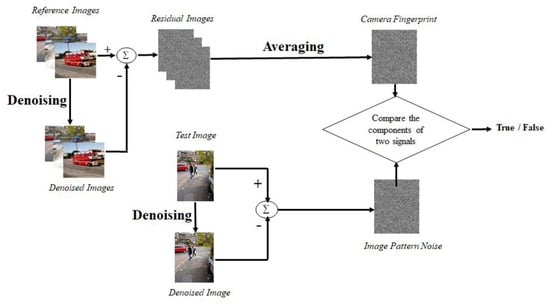

In the paper widely regarded as a benchmark for image source camera identification using SPN, Lukas et al. [14] introduced a technique that uses the discrete wavelet transform to decompose the original image into four sub-bands. It then applies a Wiener denoising filter to the three high-frequency wavelet sub-bands and reconstructs the image from the smoothed sub-bands. Subtracting the resulting denoised image from the input image gives the reference pattern noise of the image. The camera fingerprint is computed by averaging the reference pattern noise of a few images taken by the camera under different conditions. Then, to determine whether an image comes from the reference camera, they compute the normalized cross-correlation between the pattern noise of the query image and the pattern noise of the camera. Although this method appears likely to increase computational complexity and cannot be used for large-scale processing, its reliability tends to be high. The experiments were conducted on roughly 320 images captured by nine consumer digital cameras, and the outcomes were assessed using the false acceptance rate (FAR) and false rejection rate (FRR). Even for cameras of the same model, the camera recognition is 99.8% accurate. Jaiswal and Srivastava [15] highlighted that image scenes may highly contaminate the extracted PRNU, resulting in wrong camera identification. Therefore, they proposed a framework based on frequency and spatial features to increase the size of the image dataset used to train and estimate the camera PRNU. The proposed framework uses the discrete wavelet transform (DWT) and local binary pattern (LBP) to extract features from the images. 
These features are then used to train a multi-class classifier, e.g., support vector machine (SVM), linear discriminant analysis (LDA), or K-nearest neighbor (KNN). The trained classifier is then used to identify the image camera source. Soobhany et al. [16] proposed a technique similar to [14] in which a non-decimated wavelet transform decomposes the input image into four wavelet sub-bands. To calculate the SPN from the image, the coefficients of the resulting high-frequency wavelet sub-bands are de-noised. The image SPN signature is then compared with the camera reference SPN signature to identify the image source camera. An advantage of this technique is that the non-decimated wavelet transform maintains all the details of the wavelet sub-bands during decomposition, allowing more information to be preserved. In addition, the SPN signature can be retrieved after the first level of wavelet decomposition, whereas the decimated approach requires four levels of wavelet decomposition to obtain a credible SPN. The proposed method was tested using images from ten different cameras from the Dresden image dataset. Results demonstrate that the suggested method outperforms the state-of-the-art wavelet-based image source camera identification method with relatively low computational cost. Al-Athamneh et al. [17] suggested using only the green component of an RGB image for PRNU extraction, with a method similar to that of [14]. This is because human eyes are most sensitive to green, and the green channel of the sensor carries twice the information of the red and blue channels. The green colour channel of the video frames was examined to create G-PRNU (green photo response non-uniformity). The technique demonstrated a good level of reliability in identifying digital video cameras and outperformed PRNU in identifying the source of digital videos. 
Images from six cameras were used to test the technique (two mobile phones and four consumer cameras). A total of 290 videos were recorded over the course of four months in a variety of settings. The 2-D correlation coefficient detection test was used to determine the source of each of the 290 test videos. Their results show an average prediction accuracy of 99.15%. Akshatha et al. [18] proposed an image source camera identification technique that uses a high-order wavelet statistics (HOWS) method to remove the camera noise from the input image and extract the camera signature. To determine the originating source camera for the given image, the features were fed to support vector machine classifiers, and the results were validated using ten-fold cross-validation. Images taken with different cell phone cameras were used, and the algorithm identified the source camera of the provided image with 96.18% accuracy on average, irrespective of camera model or brand. Georgievska et al. [19] proposed an image source camera identification method in which images are clustered based on the peak-to-correlation energy (PCE) similarity scores of their PRNU patterns. The image is first converted to grayscale. The initial estimate of the PRNU pattern is obtained using the first step total variation (FSTV) algorithm. After that, zero-mean and Wiener filtering steps are performed to filter out any artefacts produced by colour interpolation, on-sensor signal transfer, imaging sensor design, and JPEG compression. Then, PCE is computed as the ratio between the height of the peak and the energy of the cross-correlation between two PRNU patterns. Their technique uses graphics processing units (GPUs) to extract the PRNU patterns from large sets of images and to compute the PCE scores within a reasonable timeframe. The performance of the proposed method was evaluated using the Dresden image dataset. 
Their results showed that this technique is highly effective.
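The residual, averaging, and correlation steps attributed to Lukas et al. [14] above can be illustrated with a small sketch. It is not the authors' implementation: a 3 × 3 mean filter stands in for the wavelet-domain Wiener filter, the sensor pattern is added to a synthetic scene rather than modelled as true PRNU, and every function name and parameter below is hypothetical.

```python
import math
import random

def box_denoise(img):
    """3x3 mean filter with edge replication.
    Assumption: a simple stand-in for the wavelet/Wiener denoiser in the text."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            out[y][x] = total / 9.0
    return out

def noise_residual(img):
    """Pattern noise estimate: input image minus its denoised version."""
    den = box_denoise(img)
    return [[p - d for p, d in zip(row, drow)] for row, drow in zip(img, den)]

def estimate_fingerprint(images):
    """Camera reference pattern: pixel-wise average of the residuals."""
    residuals = [noise_residual(im) for im in images]
    n, h, w = len(residuals), len(residuals[0]), len(residuals[0][0])
    return [[sum(r[y][x] for r in residuals) / n for x in range(w)]
            for y in range(h)]

def ncc(a, b):
    """Normalized cross-correlation of two equally sized 2-D arrays."""
    fa = [v for row in a for v in row]
    fb = [v for row in b for v in row]
    ma, mb = sum(fa) / len(fa), sum(fb) / len(fb)
    num = sum((p - ma) * (q - mb) for p, q in zip(fa, fb))
    den = math.sqrt(sum((p - ma) ** 2 for p in fa) *
                    sum((q - mb) ** 2 for q in fb))
    return num / den if den else 0.0

def synthetic_shot(pattern, rng):
    """Hypothetical test image: smooth gradient scene + fixed sensor
    pattern + fresh random noise (an additive toy model)."""
    h, w = len(pattern), len(pattern[0])
    a, b, c = rng.uniform(0, 1), rng.uniform(0, 1), rng.uniform(50, 150)
    return [[c + a * x + b * y + pattern[y][x] + rng.gauss(0, 0.5)
             for x in range(w)] for y in range(h)]

rng = random.Random(0)
size = 24
pattern_a = [[rng.gauss(0, 2.0) for _ in range(size)] for _ in range(size)]
pattern_b = [[rng.gauss(0, 2.0) for _ in range(size)] for _ in range(size)]
fp_a = estimate_fingerprint([synthetic_shot(pattern_a, rng) for _ in range(8)])
fp_b = estimate_fingerprint([synthetic_shot(pattern_b, rng) for _ in range(8)])

# Query image taken with "camera A": it should correlate with fp_a only.
query = noise_residual(synthetic_shot(pattern_a, rng))
score_a, score_b = ncc(query, fp_a), ncc(query, fp_b)
print(f"camera A: {score_a:.3f}  camera B: {score_b:.3f}")
```

In a real pipeline the decision would come from comparing the correlation against a threshold chosen for target FAR/FRR values; the sketch only shows that the matching camera yields the clearly larger score.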

Rodríguez-Santos et al. [20] proposed a digital camera identification technique that employs the Jensen–Shannon divergence (JSD) to statistically compare the PRNU-based fingerprint of each candidate source camera against the noise residual of the disputed image. Zhang et al. [21] proposed an iterative algorithm, tri-transfer learning (TTL), for source camera identification; it combines transfer learning with tri-training learning. The transfer learning module in TTL transfers knowledge obtained from training sets to improve identification performance. Combining the two modules allows the framework to achieve superior efficiency and performance on mismatched camera model identification compared to other state-of-the-art techniques. Zeng et al. [22] proposed a dual tree complex wavelet transform (DTCWT)-based approach for extracting the SPN from a given image that performs better near strong edges. Symmetric boundary extension rather than periodized boundary extension was used to improve the quality of the SPN, including at the picture border. Balamurugan et al. [23] proposed an image source camera identification technique that uses an improved locally adaptive discrete cosine transform (LADCT) filter followed by a weighted averaging method to exploit the content of images carrying PRNU efficiently. LADCT is believed to perform well on images with strong image-dependent noise such as multiplicative noise, of which PRNU is one example. The technique divides the image into fixed-size blocks of pixels that can be shifted in single steps either horizontally or vertically. A discrete cosine transform (DCT) is applied to each block to obtain its DCT coefficients, and a threshold is then applied to the coefficients of each block. Applying the inverse DCT (IDCT) to the thresholded coefficients reconstructs the blocks in the spatial domain. 
Then the estimates that the overlapping blocks give for the same spatial position are averaged to determine the final estimate of the pixel. The weighted average provides weight to every coefficient of the blocks with the same weights, providing a greater averaging value than the simple average. The Dresden image dataset was used to evaluate the performance of the proposed technique. Their experimental results demonstrated its significant effectiveness. Qian et al. [24] introduced a source camera identification technique for web images that uses neural-network augmented sensor pattern noise to trace web images easily while maintaining confidentiality. Their technique includes three stages: initial device fingerprint registration, fingerprint extraction and secure connection establishment during image collection, and verification of the relationship between images and their source devices. By adding metric learning and frequency consistency to the deep network design, the technique provides cutting-edge performance for dependable source identification in modern smartphone images. It also offers several optimisation sub-modules to reduce fingerprint leakage while improving accuracy and efficiency. It uses two cryptographic techniques, the fuzzy extractor and zero-knowledge proof, to securely establish the correlation between registered and validated image fingerprints.
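The block-wise DCT filtering described for [23] above (sliding blocks, thresholding of DCT coefficients, inverse DCT, averaging of overlapping estimates) can be sketched as follows. This is a simplified illustration under stated assumptions, not the published LADCT filter: the threshold is a fixed constant rather than locally adaptive, overlapping estimates are combined by a plain average rather than the weighted average, and the function names are hypothetical.

```python
import math

def dct_matrix(n):
    """Orthonormal DCT-II basis as an n x n matrix (rows are basis vectors)."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                     for i in range(n)])
    return rows

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(col) for col in zip(*a)]

def dct_threshold_denoise(img, block=4, threshold=1.0):
    """Slide a block over the image one pixel at a time, zero small AC
    coefficients in the DCT domain, invert, and average the overlapping
    reconstructions. Assumes the image is at least block x block."""
    h, w = len(img), len(img[0])
    d = dct_matrix(block)
    dt = transpose(d)
    acc = [[0.0] * w for _ in range(h)]
    cnt = [[0] * w for _ in range(h)]
    for y in range(h - block + 1):
        for x in range(w - block + 1):
            patch = [row[x:x + block] for row in img[y:y + block]]
            coef = matmul(matmul(d, patch), dt)       # forward 2-D DCT
            for i in range(block):
                for j in range(block):
                    # Keep the DC term; drop AC terms below the threshold.
                    if (i, j) != (0, 0) and abs(coef[i][j]) < threshold:
                        coef[i][j] = 0.0
            rec = matmul(matmul(dt, coef), d)         # inverse 2-D DCT
            for i in range(block):
                for j in range(block):
                    acc[y + i][x + j] += rec[i][j]
                    cnt[y + i][x + j] += 1
    return [[acc[y][x] / cnt[y][x] for x in range(w)] for y in range(h)]

# Flat scene with a small checkerboard disturbance.
noisy = [[5.0 + 0.1 * (-1) ** (x + y) for x in range(8)] for y in range(8)]
denoised = dct_threshold_denoise(noisy, block=4, threshold=1.0)
residual = [[n - d for n, d in zip(nr, dr)] for nr, dr in zip(noisy, denoised)]
```

The `residual` array plays the role of the noise estimate from which a PRNU fingerprint would be built; with `threshold=0.0` no coefficient is removed and the filter returns the input unchanged.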

Lawgaly and Khelifi [25] proposed a similar technique that uses locally adaptive DCT (LADCT) for image source camera identification. Their technique applies an enhanced locally adaptive DCT filter before the weighted averaging (WA) approach, as in [23], to effectively exploit the content of images conveying the PRNU. Because the physical PRNU is present in all colour planes, the estimated colour PRNUs were concatenated for better matching. The system was thoroughly evaluated via extensive experiments on two separate image datasets with varied image sizes, and the gain obtained with each of its components was highlighted. To produce denoised estimates of neighboring and overlapping blocks, they used a sliding block window. The local block mean and the local noise variance both influence each block’s threshold. The algorithm was evaluated using images from the Dresden dataset, and the results demonstrated superior performance against cutting-edge techniques. Chen and Thing [26] adopted block matching and 3D filtering (BM3D), which is known as a collaborative filtering process. The technique groups similar blocks extracted from the image, and each group is stacked to form a 3D cylinder-like shape. Filtering is performed on every block group: a linear transform is applied before Wiener filtering, and the transform is then inverted to reproduce all filtered blocks before the image is transformed back to its 2D form. Their results show that PRNU-based methods can provide a certain level of capability in terms of verifying the integrity of images.

Yaqub [27] proposed a simple scaling-based technique for image source camera identification that applies when the questioned image is either at full resolution or cropped from an unidentified source. The technique is a simple, effective, and efficient approach based on a hierarchy of scaled camera fingerprints. Lower levels of the hierarchy, which contain scaled-down fingerprints, allow many candidate cameras to be eliminated, which reduces computation time. Test results show that the technique, while applicable to both full-resolution and cropped query images, requires significantly less computation. A test with 500 cameras showed that for non-cropped images, the technique has 55 times less run time overhead than the conventional full-resolution correlation, while for cropped images, the overhead is decreased by a factor of 13.35. Kulkarni and Mane [28] proposed a hybrid system for extracting sensor noise that combines the best results of gradient-based operators and Laplacian operators to generate a third image, which reveals the noise and edges present in the image. To obtain the noise present in the image, a threshold is applied to remove the edges.

This noisy image is then passed to the gray level co-occurrence matrix (GLCM) in the feature extraction module. Numerous features are extracted from the GLCM based on its properties: homogeneity, contrast, correlation, and entropy. To obtain an exact match, the SPN is retrieved from the GLCM features and used for matching with the test set. The hybrid method, which combines GLCM feature extraction with SPN extraction, improves the results. Using the Dresden image dataset, the technique’s accuracy is found to be 97.59% on average. Figure 2 shows the flow chart for source camera identification using large components of sensor pattern noise.

Figure 2. SPN processing pipeline using large components of sensor pattern noise.
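The four GLCM properties named above for the method of [28] can be made concrete with a short sketch. It assumes an image already quantized to integer gray levels and a single horizontal pixel offset; the function names, the symmetric counting, and the homogeneity definition with a `1 + (i - j)^2` denominator are choices made here for illustration, not details taken from the paper.

```python
import math

def glcm(img, levels, dx=1, dy=0):
    """Normalized, symmetric gray level co-occurrence matrix for one offset.
    `img` holds integers in [0, levels)."""
    counts = [[0.0] * levels for _ in range(levels)]
    h, w = len(img), len(img[0])
    for y in range(h):
        for x in range(w):
            yy, xx = y + dy, x + dx
            if 0 <= yy < h and 0 <= xx < w:
                a, b = img[y][x], img[yy][xx]
                counts[a][b] += 1      # count the pair in both directions
                counts[b][a] += 1
    total = sum(map(sum, counts))
    if total == 0:
        raise ValueError("image too small for the chosen offset")
    return [[c / total for c in row] for row in counts]

def glcm_features(p):
    """Contrast, homogeneity, correlation, and entropy of a normalized GLCM."""
    idx = range(len(p))
    mu_i = sum(i * p[i][j] for i in idx for j in idx)
    mu_j = sum(j * p[i][j] for i in idx for j in idx)
    sd_i = math.sqrt(sum((i - mu_i) ** 2 * p[i][j] for i in idx for j in idx))
    sd_j = math.sqrt(sum((j - mu_j) ** 2 * p[i][j] for i in idx for j in idx))
    contrast = sum((i - j) ** 2 * p[i][j] for i in idx for j in idx)
    homogeneity = sum(p[i][j] / (1 + (i - j) ** 2) for i in idx for j in idx)
    entropy = -sum(v * math.log2(v) for row in p for v in row if v > 0)
    if sd_i > 0 and sd_j > 0:
        correlation = sum((i - mu_i) * (j - mu_j) * p[i][j]
                          for i in idx for j in idx) / (sd_i * sd_j)
    else:
        correlation = 1.0  # convention used here for a constant image
    return {"contrast": contrast, "homogeneity": homogeneity,
            "correlation": correlation, "entropy": entropy}

# Small 4-level example image.
example = [[0, 0, 1, 1],
           [0, 0, 1, 1],
           [0, 2, 2, 2],
           [2, 2, 3, 3]]
features = glcm_features(glcm(example, levels=4))
print(features)
```

A feature vector for a classifier would typically concatenate these values over several offsets (horizontal, vertical, diagonal).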

The effect of wavelet transform on the performance of the conventional wavelet-based image camera source identification technique was reported in [29]. The authors used plane images from the VISION image dataset captured using eleven different camera brands to generate the experimental results. They reported that the conventional wavelet-based technique achieves its highest performance when it uses a sym2 wavelet.

2.2. Intrinsic Lens Radial Distortion

In a camera, a lens is a device that directs light toward a fixed focal point. The symmetric distortion caused by flaws in the lens’s curvature during the grinding process is known as radial lens distortion. Most imaging devices, as mentioned by Choi et al. [30], have lenses with spherical surfaces, and their intrinsic radial distortions act as a distinctive fingerprint for recognizing source cameras. In that paper, the authors introduced two kinds of features, based on pixel intensities and distortion measurements, which enable measurement of the radial distortion that causes straight lines to appear curved in the images. Four sets of experiments were performed. The first set uses lens radial distortion for image classification as a feasibility test. The second set demonstrates that the technique outperforms those that solely use image intensities in terms of accuracy by a statistically significant margin. The third set examines how the suggested features work when more cameras and testing images are considered. The fourth set examines how the focal length of zoom lenses affects error rates. The SVM classifier included in the LibSVM package was used in the tests, and the average accuracy obtained was 91.5%, with the confusion matrix as the assessment criterion.

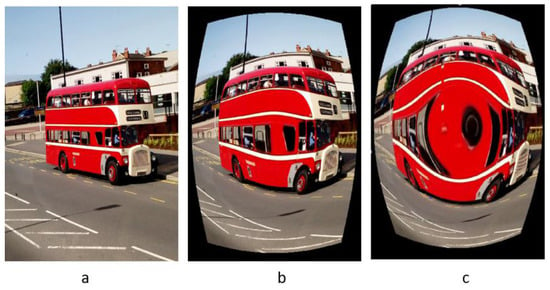

Bernacki [5] reported a digital camera identification technique using a real-time image processing system based on the investigation of vignetting and distortion flaws. The technique eliminates the need for a wavelet-based denoising filter or the creation of camera fingerprints, both of which have a significant impact on the image processing speed. Instead, the technique separates the red colour band from the input image and filters it using a median filter. After that, the absolute difference between the red colour channel and its median-filtered version is computed. The size of the picture sections to be examined at the four corners is determined, and the mean value of their pixel intensities is computed to provide the value used as the camera signature. Their findings suggest that vignetting defect analysis can be used to identify camera brands with less computational effort. The technique also calculates the distortion parameter $k$ of the model $p_u = p_d (1 + k r^2)$ for a collection of images taken with various cameras to see whether there are any patterns that could be used to identify an individual camera. Brand identification accuracy on smartphones and the Dresden image dataset is 72% and 52%, respectively. The accuracy is therefore lower than that of the algorithm presented in [13], but the vignetting-CT algorithm outperforms it in terms of speed. Figure 3 displays an example of lens radial distortion highlighting the original scene, barrel, and pincushion distortions.

Figure 3. Sample of lens radial distortion: (a) original, (b) barrel distortion, (c) pincushion distortion.
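The one-parameter radial model quoted above can be explored numerically. The sketch below assumes that `p_d` is a distorted point, `p_u` its undistorted counterpart, and `r` the distance of `p_d` from the distortion centre; it also assumes that matched point pairs are available, which in practice they usually are not (methods typically rely on straight-line cues instead). The function names are hypothetical.

```python
import math

def undistort(pd, k, center=(0.0, 0.0)):
    """Apply p_u = p_d * (1 + k * r^2), with r measured from `center`."""
    x, y = pd[0] - center[0], pd[1] - center[1]
    scale = 1.0 + k * (x * x + y * y)
    return (center[0] + x * scale, center[1] + y * scale)

def estimate_k(distorted, undistorted, center=(0.0, 0.0)):
    """Least-squares estimate of k from matched point pairs.
    From r_u = r_d * (1 + k * r_d^2) it follows that r_u - r_d = k * r_d^3,
    so k = sum(r_d^3 * (r_u - r_d)) / sum(r_d^6)."""
    num = den = 0.0
    for pd, pu in zip(distorted, undistorted):
        rd = math.hypot(pd[0] - center[0], pd[1] - center[1])
        ru = math.hypot(pu[0] - center[0], pu[1] - center[1])
        num += rd ** 3 * (ru - rd)
        den += rd ** 6
    if den == 0.0:
        raise ValueError("need at least one point away from the centre")
    return num / den

# Points in normalized image coordinates (centre at the origin).
k_true = -0.05
observed = [(0.2, 0.1), (-0.5, 0.4), (0.9, -0.7), (1.0, 1.0), (-0.3, -0.8)]
ideal = [undistort(p, k_true) for p in observed]
k_hat = estimate_k(observed, ideal)
print(f"estimated k = {k_hat:.6f}")
```

Repeating such an estimate over many images per device is one way to look for the per-camera patterns in `k` that the text mentions.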

2.3. Colour Filter Array Interpolation

Colour filter array (CFA) interpolation is a demosaicing method used in digital cameras. It is also known as colour reconstruction and is used to reconstruct a full digital colour image from the colour samples generated by an image sensor overlaid with a CFA. The traces of this demosaicing can be extracted and used as a camera fingerprint.

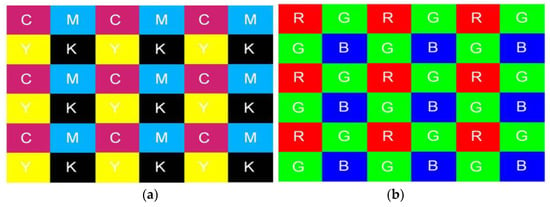

To discern the correlation structure present in each colour band for image classification purposes, Bayram et al. [31] investigated the CFA interpolation procedure. The underlying assumption is that each device manufacturer’s interpolation algorithm and CFA filter pattern design are distinct, leading to discernible correlation structures in captured images. Using the iterative expectation maximization (EM) algorithm, two sets of features are derived for classification: the interpolation coefficients estimated from the images, and the peak locations and magnitudes within the frequency spectrum of the probability maps. Two camera models with a resolution of two megapixels, the Sony DSC-P51 and Nikon E-2100, are used in the dataset. Using the confusion matrix for assessment, the classification accuracy is 95.71% for two separate cameras with a 5 × 5 interpolation kernel; however, it decreases to 83.33% when three cameras are compared. The effect of a larger number of cameras on the classification accuracy was not investigated. The technique has not been tested with cameras of the same model, but failure could be anticipated because such cameras often use the same CFA filter pattern and interpolation algorithm. The technique may also not perform well where compressed images are involved. Figure 4 shows the Bayram RGB interpolation values.

Figure 4. CFA pattern using (a) CMYK values and (b) RGB values.

Lia and Lin [32] introduced an algorithm that uses image interpolation to determine image characteristic values and a support vector machine (SVM) to classify them, lowering the required processing power while attaining a high true positive rate. The algorithm uses colour interpolation methods including bilinear interpolation, adaptive colour plane interpolation, effective colour interpolation, and highly effective iterative demosaicking. Cameras of various brands and models were used for classification in the study, and the results showed a good identification rate, with a recognition rate of up to 90% only when a wave filter was additionally introduced. Chen and Stamm [33] proposed a camera brand identification technique. Their method first re-samples the colour components of the input image according to a predetermined CFA pattern and applies M different baseline demosaicing algorithms to re-demosaic the missing colour components. It then subtracts each resulting re-demosaiced image from the input image, generating M demosaicing residual images. The residual images are then represented as a set of co-occurrence matrices computed using K different geometric patterns. The method then uses a multi-class ensemble classifier to extract the camera brand signature. They used the relative error reduction (RER) criterion to measure performance and reported an accuracy of 98% for camera model identification using images from the Dresden image dataset.
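The re-sampling and re-demosaicing step described for [33] above can be illustrated on a single colour plane. The sketch assumes a Bayer-type layout in which green is sampled where `(x + y)` is odd, uses bilinear interpolation as the only baseline demosaicing algorithm (M = 1), and stops at the residual image; the co-occurrence and classification stages are omitted. The function names are hypothetical.

```python
import random

def bilinear_green(green, parity=1):
    """Keep green samples where (x + y) % 2 == parity and re-estimate every
    other position as the mean of its in-bounds 4-neighbours, which are all
    sampled sites in a Bayer layout. Assumes at least two pixels."""
    h, w = len(green), len(green[0])
    out = [row[:] for row in green]
    for y in range(h):
        for x in range(w):
            if (x + y) % 2 == parity:
                continue  # CFA sample: kept as captured
            nbrs = [green[yy][xx]
                    for yy, xx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                    if 0 <= yy < h and 0 <= xx < w]
            out[y][x] = sum(nbrs) / len(nbrs)
    return out

def demosaic_residual(green, parity=1):
    """Input plane minus its re-demosaiced version."""
    redone = bilinear_green(green, parity)
    return [[g - r for g, r in zip(grow, rrow)]
            for grow, rrow in zip(green, redone)]

def energy(residual):
    return sum(v * v for row in residual for v in row)

rng = random.Random(1)
raw = [[rng.uniform(0, 255) for _ in range(6)] for _ in range(6)]

# Plane produced by a camera that (hypothetically) uses bilinear demosaicing.
camera_bilinear = bilinear_green(raw)
# Plane whose missing samples were filled by some other process.
camera_other = raw

e_same = energy(demosaic_residual(camera_bilinear))
e_other = energy(demosaic_residual(camera_other))
print(e_same, e_other)
```

The residual vanishes when the baseline algorithm matches the one that produced the image and carries structure otherwise, which is the kind of cue the subsequent feature extraction would exploit.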

This entry is adapted from the peer-reviewed paper 10.3390/jimaging10020031
