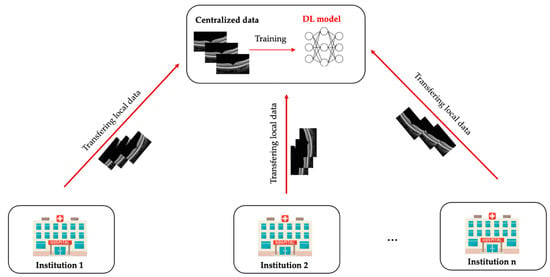

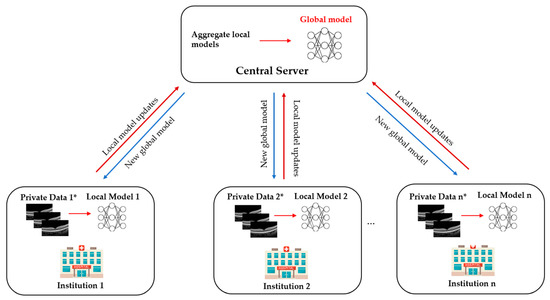

Advances in artificial intelligence (AI), particularly deep learning (DL), have had a tremendous impact on the field of ocular imaging over the last few years. Specifically, DL has been utilised to detect and classify various ocular diseases on retinal photographs, optical coherence tomography (OCT) images, and OCT-angiography (OCTA) images. To achieve robust and generalisable model performance, DL training strategies traditionally require extensive and diverse training datasets from various sites to be transferred and pooled into a “centralised location”. However, such data transfer raises practical concerns related to data security and patient privacy. Federated learning (FL) is a distributed collaborative learning paradigm that enables the coordination of multiple collaborators without the need to share confidential data. This distributed training approach has great potential to protect data privacy among different institutions and to reduce the risk of data leakage from data pooling or centralisation.

- ocular imaging

- federated learning

- deep learning

- ophthalmology

- data security

- patient privacy

1. What Is Federated Learning?

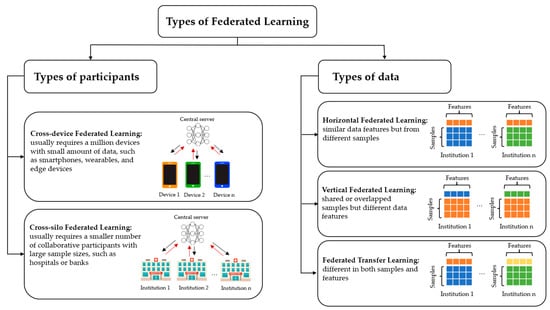
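
The coordination described in the abstract, in which each collaborator trains locally and shares only model parameters, can be illustrated with a minimal sketch. It assumes federated averaging (FedAvg, the algorithm introduced by McMahan et al. in the reference list) as the aggregation rule; this is an illustrative choice, not necessarily the rule used in the studies reviewed below. The function names, the one-feature linear model, and the two synthetic "sites" are hypothetical and stand in for real institutions and DL models.

```python
import random


def local_update(weights, data, lr=0.05, epochs=5):
    """Train on one site's private data with plain gradient descent.

    The model is the line y = w * x + b. Only the updated (w, b) leaves
    the site; the raw (x, y) pairs never do.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error on the local data only.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(client_weights, client_sizes):
    """Server step: average client models, weighted by local sample count."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)


def run_fedavg(sites, rounds=300):
    """Repeat: broadcast the global model, train locally, aggregate."""
    global_weights = (0.0, 0.0)
    sizes = [len(data) for data in sites]
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in sites]
        global_weights = federated_average(updates, sizes)
    return global_weights


def make_site_data(n, x_low, x_high, rng):
    """Hypothetical synthetic site: y = 2x + 1 plus small Gaussian noise."""
    data = []
    for _ in range(n):
        x = rng.uniform(x_low, x_high)
        data.append((x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.001)))
    return data


if __name__ == "__main__":
    rng = random.Random(0)
    # Two sites with different sample sizes and non-overlapping input ranges.
    sites = [make_site_data(40, 0.0, 1.0, rng),
             make_site_data(60, 1.0, 2.0, rng)]
    w, b = run_fedavg(sites)
    print(f"global model: w={w:.3f}, b={b:.3f}")
```

In this toy setting the global model should approach the underlying slope of 2 and intercept of 1, even though neither site sees the other's data or the full input range. Weighting by sample count is one common design choice; it lets larger sites contribute proportionally more to the shared model.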

2. Types of Federated Learning

3. Current Federated Learning Applications in Ophthalmology

3.1. Diabetic Retinopathy

Yu et al. [5] utilised the FL framework for referable diabetic retinopathy (RDR) classification using OCT and OCTA images from two different institutions. The performance of the FL model was compared with that of models trained on data from the same institution and on data from the other institution. The FL model performed comparably to the models trained on local data and outperformed those trained on the other institution's data. This study demonstrated the potential for FL to be applied to the classification of DR and to facilitate collaboration between different institutions in the real world. The study also designed a robust FL framework for microvasculature segmentation in OCTA images and evaluated it on multiple datasets in a simulated environment. The image datasets were acquired from four different OCT devices. The FL framework in this experiment achieved performance comparable to the internal model and the model trained with combined datasets, showing that FL can be used to improve generalisability by including diverse data from different OCT devices. However, despite the promising performance of the FL approach, it is essential to consider the potential application scenario. Using OCTA images to classify RDR may not be feasible in real-world DR screening programmes. Retinal fundus photography is a widely accepted imaging modality for identifying RDR, whereas OCTA images would be helpful for detecting diabetic macular ischaemia. In addition, although the images used for microvasculature segmentation were obtained from different OCT devices, the sample size of the datasets was small. Therefore, sample size justification would be needed to make the results of the FL approach more meaningful.

3.2. Retinopathy of Prematurity

Retinopathy of prematurity (ROP), a leading cause of childhood blindness worldwide, is a condition characterised by the growth of abnormal fibrovascular retinal structures in preterm infants. Hanif et al. [6] and Lu et al. [7] explored the FL approach for developing a DL model for ROP. Lu et al. [7] trained and validated a model on 5245 ROP retinal photographs from 1686 eyes of 867 premature infants in the neonatal intensive care units of seven hospital centres in the United States. The images were labelled with clinical diagnoses of plus disease (plus, pre-plus, or no plus) and a reference standard diagnosis (RSD) based on three image-based ROP graders and the clinical diagnosis. In most DL model comparisons, the models trained via the FL approach achieved performance comparable with those trained via the centralised learning approach, with the AUROC ranging from 0.93 to 0.96. In addition, the FL model performed better than the locally trained model using only a single-institution data set in 4 of 7 sites in terms of AUROC. Moreover, the FL model in this study remained consistent and accurate across heterogeneous clinical data sets from different institutions, which varied in sample size, disease prevalence and patient demographics.

In the second study, Hanif et al. [6] demonstrated the potential ability of FL to harmonise differences in clinical diagnoses of ROP severity between institutions. Instead of using the consensus RSD, an FL model was developed based on the ROP vascular severity score (VSS). In this study, there was a significant difference between institutions in the level of VSS in eyes with no plus disease. Clinical diagnosis can be subjective, with considerable variation between experts in clinical settings that may affect clinical or epidemiological research [8]. However, according to this study, the FL model could standardise the differences in clinical diagnoses across institutions without centralised data collection or expert consensus. Based on the results of this study, the FL model provides a generalisable approach to assessing clinical diagnostic paradigms and disease severity for epidemiological evaluation without sharing patient information. These two studies demonstrated the utility of the FL framework in ROP, allowing collaboration between different institutions while protecting data privacy. However, these studies were still conducted in a simulated environment. Practical issues during clinical implementation, such as communication efficiency or data bias among participating centres, could not be identified in these studies. Such challenges will be further discussed in the section below.

4. Future Directions

Ophthalmology is a medical speciality driven by imaging that has unique opportunities for implementing DL systems. Ocular imaging is not only fast and cheap compared to other imaging modalities such as CT or MRI scans but contains essential information on ocular and systemic diseases. Utilising FL in prior DR and ROP studies illustrates the potential ability to overcome privacy challenges and inspires further deployment of FL in other ophthalmic diseases. In the future, FL applications and developments in real-world clinics are warranted.

4.1. Multi-Modal Federated Learning

In ophthalmology, there are diverse data from different modalities such as fundus photography, OCT, OCTA, and visual field (VF) testing, each with different protocols. With such a wide range of modalities, using one modality alone is often insufficient to detect alterations and diagnose diseases. Glaucoma, for instance, is diagnosed based on a combination of intraocular pressure measurement, colour fundus photography, VF examination and peripapillary retinal nerve fibre layer (RNFL) thickness evaluation. A DL algorithm developed based on RNFL thickness without reference to the VF or other relevant clinical diagnostic data may not be enough to diagnose glaucoma in a real-world setting. Recently, Xiong et al. trained and validated a bimodal DL algorithm to detect glaucomatous optic neuropathy (GON) from both OCT images and VF [9]. The diagnostic performance of the proposed DL algorithm reached an AUROC of 0.950 and outperformed two single-modality models trained on only VF or OCT data (AUROC, 0.868 and 0.809, respectively). In addition, the model achieved comparable performance to experienced glaucoma specialists, suggesting that this multi-modal DL system could be valuable in detecting GON. Apart from glaucoma, OCT and OCTA have become necessary non-invasive imaging modalities for quantitative and qualitative assessment of retinal features (e.g., retinal thickness and retinal fluid) in many retinal diseases such as age-related macular degeneration (AMD) and DR. A recent study by Jin et al. demonstrated the efficacy of a multi-modal DL model using OCT and OCTA images for the assessment of choroidal neovascularisation in neovascular AMD, which achieved comparable performance to retinal specialists with an accuracy of 95.5% and an AUROC of 0.979 [10]. In addition to ocular imaging data, electronic health records (EHRs) also contain various information, including past medical history and systemic features. Incorporating EHR data offers an outstanding opportunity to better understand complex relationships between systemic and ocular diseases. Data from medical history or laboratory tests, such as blood pressure and glycated haemoglobin, can be used to improve the predictive power of AI systems. Therefore, it is necessary to build and implement FL systems that support data from different modalities to enhance the performance of DL in early detection and disease management. Several studies have proposed multi-modal FL frameworks with promising results. Recently, Zhao et al. proposed a multi-modal framework that enables FL systems to work better with collaborators holding local data from different modalities and with clients that have varying device setups, compared with a single-modality approach [11]. Another study by Qayyum et al. suggested a framework using clustered FL-based methods for the automatic diagnosis of COVID-19 that would allow remote hospitals to utilise multi-modal data, including chest X-rays and ultrasound images [12]. Additionally, the clustered FL approach handled divergence in data distribution better than conventional FL.

4.2. Federated Learning and Rare Ocular Diseases

In addition, FL is expected to help in diagnosing, predicting, and treating rare or geographically uncommon diseases such as ocular tumours or inherited retinal diseases, where low incidence rates and small datasets currently pose challenges [13][14]. Connecting multiple institutions on a global scale could improve clinical decisions regardless of patients’ location and demographic environment. Fujinami-Yokokawa et al. [15] trained and validated a DL system for automated classification among ABCA4-, EYS-, and RP1L1-associated retinal dystrophies using a Japan Eye Genetics Consortium dataset of 417 images (fundus photographs and FAF images). Although the DL system could provide an accurate diagnosis of three inherited retinal diseases, there is limited phenotypic heterogeneity within each group, and the dataset is from a specific ethnic population. Recently, FL has shown its feasibility and effectiveness for weakly supervised classification of carcinoma in histopathology and for survival prediction using thousands of gigapixel whole slide images from multiple institutions [16]. The study demonstrated the potential of the FL framework to be applied in rare diseases where datasets are limited or in countries that lack access to pathology and laboratory services. Therefore, FL is a promising approach for greater international collaboration to develop valuable and robust DL algorithms for rare ocular diseases.

4.3. Blockchain-Based Federated Learning

FL could be further combined with next-generation technologies, potentially blockchain, to strengthen its privacy mechanisms. Blockchain is a decentralised ledger technology predicated on privacy, openness, and immutability, which has been used in the healthcare system to manage genetic information and EHRs [17][18]. A blockchain network has also been applied in ophthalmology to detect myopic macular degeneration and high myopia using retinal photographs from diverse multi-ethnic cohorts in different countries [19]. The study suggested that adopting blockchain technology could increase the validity and transparency of DL algorithms in medicine. With its immutability and traceability, blockchain can be an effective tool to prevent malicious attacks in FL. The intermediate model updates, either local weights or gradients, can be cryptographically chained using blockchain technology to maintain their integrity and confidentiality. Thus, integrating FL and blockchain could allow the processing of the vast amounts of data generated in real healthcare settings and improve data security and privacy by offering secure and effective points for deploying the model [20].

4.4. Decentralised Federated Learning

In an FL system, a central server usually orchestrates the learning process and updates the model based on the training results from clients. However, such a star-shaped server-client architecture decreases fault tolerance, does not solve the problem of information governance, and requires a powerful central server, which may not always be available in real-life scenarios with a very large number of clients [21][22]. Thus, fully decentralised FL, which replaces communication between the central server and each client with peer-to-peer communication among interconnected clients, was proposed to address these problems. Recently, swarm learning, a decentralised learning system without a central server, was introduced to build models independently on private data at each individual site and to support data sovereignty, security, and confidentiality by utilising edge computing and blockchain-based peer-to-peer networking and coordination [21]. Swarm learning has been used to detect COVID-19, tuberculosis, leukaemia and lung pathologies [21], and Saldanha et al. showed that it can also predict clinical biomarkers in solid tumours and yield high-performing models for pathology-based prediction of BRAF mutation and microsatellite instability (MSI) status [23].

4.5. Federated Learning and Fifth Generation (5G) and Beyond Technology

Wireless communications have advanced considerably over the past few decades, and the recently launched 5G and beyond technology provides lower latency, higher transmission rates, and higher reliability than existing networks [24]. An efficient 5G network could address the issues of communication latency and network bandwidth in the FL framework. Moreover, 5G networks have been used to manage COVID-19 patients through real-time video telemedicine [25]. In ophthalmology, 5G technology has been applied to conduct real-time tele-retinal laser photocoagulation for the treatment of DR [26]. This evidence suggests the potential of integrating FL and 5G technology to allow data pre-processing, training, and processing in real time.

5. Conclusions

FL enables reliable, collaborative development of DL models across multiple institutions without compromising data privacy, which will be critical in ophthalmic healthcare, especially in ocular image analysis. More research is warranted in the field of ophthalmology to investigate how to apply FL efficiently and effectively in real-time and real-world clinical settings.

References

- McMahan, B.; Moore, E.; Ramage, D.; Hampson, S.; y Arcas, B.A. Communication-efficient learning of deep networks from decentralized data. In Proceedings of the Artificial Intelligence and Statistics, Fort Lauderdale, FL, USA, 20–22 April 2017; pp. 1273–1282.

- Yang, Q.; Liu, Y.; Chen, T.; Tong, Y. Federated Machine Learning: Concept and Applications. ACM Trans. Intell. Syst. Technol. 2019, 10, 12.

- Jin, Y.; Wei, X.; Liu, Y.; Yang, Q. Towards utilizing unlabeled data in federated learning: A survey and prospective. arXiv 2020, arXiv:2002.11545.

- Yang, Q.; Liu, Y.; Cheng, Y.; Kang, Y.; Chen, T.; Yu, H. Federated Learning. Synth. Lect. Artif. Intell. Mach. Learn. 2019, 13, 1–207.

- Yu, T.T.L.; Lo, J.; Ma, D.; Zang, P.; Owen, J.; Wang, R.K.; Lee, A.Y.; Jia, Y.; Sarunic, M.V. Collaborative Diabetic Retinopathy Severity Classification of Optical Coherence Tomography Data through Federated Learning. Investig. Ophthalmol. Vis. Sci. 2021, 62, 1029.

- Hanif, A.; Lu, C.; Chang, K.; Singh, P.; Coyner, A.S.; Brown, J.M.; Ostmo, S.; Chan, R.V.P.; Rubin, D.; Chiang, M.F.; et al. Federated Learning for Multicenter Collaboration in Ophthalmology: Implications for Clinical Diagnosis and Disease Epidemiology. Ophthalmol. Retina 2022, 6, 650–656.

- Lu, C.; Hanif, A.; Singh, P.; Chang, K.; Coyner, A.S.; Brown, J.M.; Ostmo, S.; Chan, R.V.P.; Rubin, D.; Chiang, M.F.; et al. Federated Learning for Multicenter Collaboration in Ophthalmology: Improving Classification Performance in Retinopathy of Prematurity. Ophthalmol. Retina 2022, 6, 657–663.

- Fleck, B.W.; Williams, C.; Juszczak, E.; Cocker, K.; Stenson, B.J.; Darlow, B.A.; Dai, S.; Gole, G.A.; Quinn, G.E.; Wallace, D.K.; et al. An international comparison of retinopathy of prematurity grading performance within the Benefits of Oxygen Saturation Targeting II trials. Eye 2018, 32, 74–80.

- Xiong, J.; Li, F.; Song, D.; Tang, G.; He, J.; Gao, K.; Zhang, H.; Cheng, W.; Song, Y.; Lin, F.; et al. Multimodal Machine Learning Using Visual Fields and Peripapillary Circular OCT Scans in Detection of Glaucomatous Optic Neuropathy. Ophthalmology 2022, 129, 171–180.

- Jin, K.; Yan, Y.; Chen, M.; Wang, J.; Pan, X.; Liu, X.; Liu, M.; Lou, L.; Wang, Y.; Ye, J. Multimodal deep learning with feature level fusion for identification of choroidal neovascularization activity in age-related macular degeneration. Acta Ophthalmol. 2022, 100, e512–e520.

- Zhao, Y.; Barnaghi, P.; Haddadi, H. Multimodal federated learning. arXiv 2021, arXiv:2109.04833.

- Qayyum, A.; Ahmad, K.; Ahsan, M.A.; Al-Fuqaha, A.; Qadir, J. Collaborative federated learning for healthcare: Multi-modal covid-19 diagnosis at the edge. arXiv 2021, arXiv:2101.07511.

- Sadilek, A.; Liu, L.; Nguyen, D.; Kamruzzaman, M.; Serghiou, S.; Rader, B.; Ingerman, A.; Mellem, S.; Kairouz, P.; Nsoesie, E.O.; et al. Privacy-first health research with federated learning. NPJ Digit. Med. 2021, 4, 132.

- Sheller, M.J.; Edwards, B.; Reina, G.A.; Martin, J.; Pati, S.; Kotrotsou, A.; Milchenko, M.; Xu, W.; Marcus, D.; Colen, R.R.; et al. Federated learning in medicine: Facilitating multi-institutional collaborations without sharing patient data. Sci. Rep. 2020, 10, 12598.

- Fujinami-Yokokawa, Y.; Ninomiya, H.; Liu, X.; Yang, L.; Pontikos, N.; Yoshitake, K.; Iwata, T.; Sato, Y.; Hashimoto, T.; Tsunoda, K.; et al. Prediction of causative genes in inherited retinal disorder from fundus photography and autofluorescence imaging using deep learning techniques. Br. J. Ophthalmol. 2021, 105, 1272–1279.

- Lu, M.Y.; Chen, R.J.; Kong, D.; Lipkova, J.; Singh, R.; Williamson, D.F.K.; Chen, T.Y.; Mahmood, F. Federated learning for computational pathology on gigapixel whole slide images. Med. Image Anal. 2022, 76, 102298.

- Haleem, A.; Javaid, M.; Singh, R.P.; Suman, R.; Rab, S. Blockchain technology applications in healthcare: An overview. Int. J. Intell. Netw. 2021, 2, 130–139.

- Ng, W.Y.; Tan, T.-E.; Xiao, Z.; Movva, P.V.H.; Foo, F.S.S.; Yun, D.; Chen, W.; Wong, T.Y.; Lin, H.T.; Ting, D.S.W. Blockchain Technology for Ophthalmology: Coming of Age? Asia-Pac. J. Ophthalmol. 2021, 10, 343–347.

- Tan, T.-E.; Anees, A.; Chen, C.; Li, S.; Xu, X.; Li, Z.; Xiao, Z.; Yang, Y.; Lei, X.; Ang, M.; et al. Retinal photograph-based deep learning algorithms for myopia and a blockchain platform to facilitate artificial intelligence medical research: A retrospective multicohort study. Lancet Digit. Health 2021, 3, e317–e329.

- Wang, Z.; Hu, Q. Blockchain-based Federated Learning: A Comprehensive Survey. arXiv 2021, arXiv:2110.02182.

- Warnat-Herresthal, S.; Schultze, H.; Shastry, K.L.; Manamohan, S.; Mukherjee, S.; Garg, V.; Sarveswara, R.; Händler, K.; Pickkers, P.; Aziz, N.A.; et al. Swarm Learning for decentralized and confidential clinical machine learning. Nature 2021, 594, 265–270.

- Kairouz, P.; McMahan, H.B.; Avent, B.; Bellet, A.; Bennis, M.; Bhagoji, A.N.; Bonawitz, K.; Charles, Z.; Cormode, G.; Cummings, R. Advances and open problems in federated learning. Found. Trends® Mach. Learn. 2021, 14, 1–210.

- Saldanha, O.L.; Quirke, P.; West, N.P.; James, J.A.; Loughrey, M.B.; Grabsch, H.I.; Salto-Tellez, M.; Alwers, E.; Cifci, D.; Ghaffari Laleh, N. Swarm learning for decentralized artificial intelligence in cancer histopathology. Nat. Med. 2022, 28, 1232–1239.

- Simkó, M.; Mattsson, M.O. 5G Wireless Communication and Health Effects-A Pragmatic Review Based on Available Studies Regarding 6 to 100 GHz. Int. J. Environ. Res. Public Health 2019, 16, 3406.

- Hong, Z.; Li, N.; Li, D.; Li, J.; Li, B.; Xiong, W.; Lu, L.; Li, W.; Zhou, D. Telemedicine During the COVID-19 Pandemic: Experiences From Western China. J. Med. Internet Res. 2020, 22, e19577.

- Chen, H.; Pan, X.; Yang, J.; Fan, J.; Qin, M.; Sun, H.; Liu, J.; Li, N.; Ting, D.; Chen, Y. Application of 5G Technology to Conduct Real-Time Teleretinal Laser Photocoagulation for the Treatment of Diabetic Retinopathy. JAMA Ophthalmol. 2021, 139, 975–982.