Underwater video images, as the primary carriers of underwater information, play a vital role in human exploration and development of the ocean. Because water absorbs and scatters incident light, underwater video images generally appear blue-green and show a pronounced haze-like effect. In addition, blur, low contrast, color distortion, heavy noise, unclear details, and a limited visual range are typical problems that degrade the quality of underwater video images. Underwater vision enhancement uses computer processing to convert degraded, low-quality underwater images into high-quality ones. Vision enhancement techniques can effectively address the color bias, low contrast, and haze of raw underwater video images. Enhanced video images improve visual perception and benefit subsequent vision tasks. Therefore, underwater video image enhancement technology has important scientific significance and application value.

- underwater vision

- video/image enhancement

- deep learning

1. Introduction

Visual information, which plays an essential role in detecting and perceiving the environment, is easy for underwater vehicles to obtain. However, because of the many uncertainties of the aquatic environment and the absorption and scattering of light by water, the quality of directly captured underwater images can degrade significantly. Large amounts of dissolved substances, particulate matter, and other inhomogeneous media in the water cause less light to reach the camera than in air. According to the Beer–Lambert–Bouguer law, light intensity decays exponentially with the distance it travels through an attenuating medium. Therefore, the attenuation of light during underwater propagation is expressed as:

$$E(r) = E(0)\,e^{-(a+b)r} \tag{1}$$

In Equation (1), E(r) is the illuminance at distance r, E(0) is the illuminance at r = 0, a is the absorption coefficient of the water body, and b is its scattering coefficient. The sum a + b is the total attenuation coefficient of the medium.
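
Equation (1) can be evaluated directly. The sketch below computes the surviving illuminance after a given path length and, inversely, the distance at which a chosen fraction of the light remains. The coefficient values a = 0.4 m⁻¹ and b = 0.1 m⁻¹ are hypothetical, chosen only for illustration, and are not measurements from the text.

```python
import math

def irradiance(e0: float, r: float, a: float, b: float) -> float:
    """Illuminance after travelling r metres, per Equation (1): E(r) = E(0) * exp(-(a + b) * r)."""
    c = a + b  # total attenuation coefficient of the medium
    return e0 * math.exp(-c * r)

def distance_to_fraction(fraction: float, a: float, b: float) -> float:
    """Distance at which E(r) / E(0) falls to `fraction` (Equation (1) solved for r)."""
    return -math.log(fraction) / (a + b)

# Hypothetical coefficients in 1/m, for illustration only.
a, b = 0.4, 0.1
print(irradiance(1.0, 5.0, a, b))        # exp(-2.5), about 0.082
print(distance_to_fraction(0.01, a, b))  # ln(100) / 0.5, about 9.2 m
```

Because the decay is exponential, each additional metre removes the same fraction of the remaining light, which is why wavelengths with larger coefficients (such as red) vanish within a few metres.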

The process of underwater imaging is shown in Figure 1. As light travels through water, it is absorbed and scattered, and water absorbs light of different wavelengths to different degrees. As shown in Figure 1, red light attenuates fastest and disappears at a depth of about 5 m, whereas blue and green light attenuate more slowly, with blue light disappearing at about 60 m. Scattering by suspended particles and other media changes the direction of light during transmission and spreads it unevenly; the scattering process is influenced by the properties of the medium, the light, and its polarization. McGlamery et al. [1][2] presented a computational model of underwater camera systems: from the imaging geometry, the source properties, and the optical properties of the water, it computes the irradiance of non-scattered, scattered, and backscattered light, from which parameters such as contrast, transmittance, and signal-to-noise ratio can be obtained. The classical Jaffe–McGlamery [2][3] underwater imaging model was then proposed. It states that the total irradiance entering the camera is a linear superposition of the direct component, the forward-scatter component, and the backscatter component:

$$E_T = E_d + E_f + E_b \tag{2}$$

In Equation (2), $E_T$ is the total irradiance received by the camera, and $E_d$, $E_f$, and $E_b$ are the direct, forward-scatter, and backscatter components, respectively. The direct component is light reflected from the object surface straight into the receiver. The forward-scatter component is light reflected by the target object that is deflected at small angles by suspended particles on its way to the receiver. The backscatter component is illumination light that reaches the receiver after being scattered by the water body itself. In general, forward scattering attenuates more light energy than backscattering.
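
A widely used simplification of Equation (2) drops the forward-scatter term and models each color channel as a direct component attenuated by a transmission t = exp(-c·d) plus a backscatter (veiling light) term B·(1 − t). The sketch below synthesizes one degraded pixel under that assumption. The function name, the per-channel coefficients, and the background light values are hypothetical and only mimic the faster attenuation of red light.

```python
import math

def degrade_pixel(j, depth, c, bg):
    """Simplified formation per channel: I = J * t + B * (1 - t), with t = exp(-c * depth).

    j     -- clear-scene RGB values in [0, 1]
    depth -- camera-to-object distance in metres
    c     -- per-channel total attenuation coefficients in 1/m (hypothetical)
    bg    -- per-channel background (veiling) light (hypothetical)
    """
    out = []
    for jc, cc, bc in zip(j, c, bg):
        t = math.exp(-cc * depth)     # transmission of the direct component
        direct = jc * t               # analogue of E_d
        backscatter = bc * (1.0 - t)  # analogue of E_b; forward scatter E_f is ignored
        out.append(direct + backscatter)
    return out

# Hypothetical values: red attenuates fastest, so a white object drifts toward blue-green.
print(degrade_pixel([1.0, 1.0, 1.0], 5.0, [0.6, 0.12, 0.08], [0.05, 0.45, 0.55]))
```

Omitting $E_f$ is the usual trade-off in restoration work: forward scatter mainly blurs, while the direct and backscatter terms explain most of the color cast and contrast loss.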

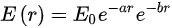

Because water absorbs and scatters incident light, underwater video images generally appear blue-green and show a pronounced haze-like effect. In addition, blur, low contrast, color distortion, heavy noise, unclear details, and a limited visual range are typical problems that degrade the quality of underwater video images [3][4]. Figure 1 shows some low-quality underwater images.

Figure 1. Some low-quality underwater images.


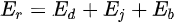

Existing underwater image enhancement techniques are classified and summarized in Figure 2. Current algorithms are mainly divided into traditional methods and deep learning-based methods. Traditional methods include model-based and non-model-based methods. Non-model-based enhancement methods, such as histogram algorithms, directly improve the visual effect by modifying pixel values without considering the imaging principle. Model-based enhancement, also known as image restoration, estimates the relationship between the clear image, the degraded image, and the transmission map according to an imaging model and then derives the clear image, as in the dark channel prior (DCP) algorithm [4][10]. With the rapid development of deep learning and its excellent performance in computer vision, deep learning-based underwater image enhancement is also developing rapidly. Deep learning-based methods can be divided into those based on convolutional neural networks (CNNs) [5][11] and those based on generative adversarial networks (GANs) [6][12]. Most existing underwater video enhancement techniques are extensions of single-image enhancement techniques to the video domain. Because underwater video enhancement technology is not yet fully mature, it is not classified separately here.

Figure 2. Classification of underwater image enhancement methods.


2. Traditional Underwater Image Enhancement Methods

2.1. Non-Physical Model Enhancement Methods

Because of the unique underwater optical environment, traditional image enhancement methods have limitations when applied directly to underwater images, so many targeted algorithms have been proposed, including histogram-based, retinex-based, and image fusion-based algorithms.

(1) Histogram-based methods

Image enhancement based on the histogram equalization (HE) algorithm [7][19] transforms a narrow, unimodal image histogram into a more uniform distribution, so that the enhanced image has roughly the same number of pixels at most gray levels (Table 1).

Table 1. Histogram-based underwater image enhancement methods.

| Author | Algorithm | Contribution |
|---|---|---|
| Iqbal et al. [8][22] | Unsupervised color correction method | Effectively removes blue bias and boosts the weak red channel and brightness |
| Ahmad et al. [9][23] | Adaptive histogram with Rayleigh stretching and contrast limiting | Enhances detail and reduces over-enhancement, oversaturated areas, and introduced noise |
| Ahmad et al. [10][24] | Recursive adaptive histogram modification combined with HSV-model color correction | Better contrast in background areas |
| Li et al. [11][25] | Contrast enhancement algorithm combining dehazing and a histogram prior | Improves contrast and brightness |
| Li et al. [12][26] | Underwater white balance algorithm combined with a histogram stretching stage | Better color correction, haze removal, and detail clarity |
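
The HE mapping described above can be sketched for a single grayscale channel: build the histogram, accumulate it into a cumulative distribution, and remap each level so the output distribution is approximately flat. This is plain global HE, shown as an illustration; it is not the adaptive or clipped variant used by any specific method in Table 1, and the function name is hypothetical.

```python
def equalize_histogram(pixels, levels=256):
    """Global histogram equalization of a non-empty flat list of integer gray levels in [0, levels)."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    # Cumulative distribution of gray levels.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    n = len(pixels)
    cdf_min = next(c for c in cdf if c > 0)  # CDF value at the darkest occupied level
    if n == cdf_min:  # constant image: nothing to spread out
        return list(pixels)
    # Lookup table that makes the output CDF approximately linear (flat histogram).
    lut = [round((c - cdf_min) / (n - cdf_min) * (levels - 1)) for c in cdf]
    return [lut[p] for p in pixels]

# A low-contrast "image" squeezed into gray levels 100-103.
img = [100, 100, 101, 101, 102, 102, 103, 103]
print(equalize_histogram(img))  # [0, 0, 85, 85, 170, 170, 255, 255]
```

Applied per channel to an underwater image, this global mapping can over-amplify noise in flat regions, which is the motivation for the adaptive and contrast-limited variants listed in Table 1.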

(2) Retinex-based methods

Retinex theory, based on color constancy, recovers the true appearance of the scene by eliminating the influence of the illumination component on object color and removing uneven illumination (Table 2).

Table 2. Underwater image enhancement methods based on retinex theory.

| Author | Algorithm | Contribution |
|---|---|---|
| Fu et al. [13][31] | Retinex-based variational framework that decomposes the image into reflectance and illumination and optimizes both | Addresses color distortion, underexposure, and blurring |
| Bianco et al. [14][32] | Chromatic components are shifted so that their distributions center on the white point (white balancing), and the luminance component undergoes histogram cutoff and stretching | Improves image contrast and is suitable for real-time implementation |
| Zhang et al. [15][33] | Extended multiscale retinex for underwater image enhancement | Suppresses halo artifacts |
| Mercado et al. [16][34] | Multiscale retinex combined with reverse color loss (MSRRCL) | Overcomes uneven lighting; colors are more vivid |
| Li et al. [17][35] | Color correction based on the MSR algorithm combined with histogram quantization of each color channel | Enhances underwater image contrast and removes color bias |
| Zhang et al. [18][36] | Multiscale retinex with color restoration based on multi-channel convolution (MC) | Enhances global contrast and detail information, reduces noise, and eliminates the influence of illumination |
| Tang et al. [19][37] | Underwater image and video enhancement method based on multiscale retinex (IMSRCP) | Improves image contrast and color; suitable for underwater video |
| Hu et al. [20][38] | Uses the gravitational search algorithm (GSA) to optimize an MSR-based underwater image enhancement algorithm with the NIQE index | Improves adaptability to environmental changes |
| Tang et al. [21][39] | Underwater image enhancement algorithm based on adaptive feedback and retinex | Reduces underwater image processing time and improves color saturation and richness |
| Zhuang et al. [22][40] | Bayesian retinex algorithm for single underwater image enhancement with multiorder gradient priors on reflectance and illumination | Addresses color correction, naturalness preservation, structure and detail promotion, and artifact and noise suppression |
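
The retinex idea can be illustrated with single-scale retinex (SSR) on one channel: a Gaussian-blurred copy of the image serves as the illumination estimate, and the log reflectance is the log image minus the log of that estimate. Multiscale retinex (MSR) averages SSR outputs over several scales. This is a minimal pure-Python sketch, not the method of any paper in Table 2; the function names are hypothetical, and the default scales (15, 80, 250) are a commonly used choice assumed here.

```python
import math

def gaussian_kernel(sigma):
    """Normalized 1-D Gaussian kernel truncated at 3 sigma."""
    radius = max(1, int(3 * sigma))
    k = [math.exp(-(x * x) / (2.0 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(k)
    return [v / total for v in k]

def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2-D list (one channel), clamping at the borders."""
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    h, w = len(img), len(img[0])
    rows = [[sum(k[i] * img[y][min(max(x + i - r, 0), w - 1)] for i in range(len(k)))
             for x in range(w)] for y in range(h)]
    return [[sum(k[i] * rows[min(max(y + i - r, 0), h - 1)][x] for i in range(len(k)))
             for x in range(w)] for y in range(h)]

def single_scale_retinex(img, sigma, eps=1e-6):
    """SSR: log R = log I - log(G_sigma * I), with the blur serving as the illumination estimate."""
    illum = gaussian_blur(img, sigma)
    return [[math.log(p + eps) - math.log(q + eps) for p, q in zip(row, irow)]
            for row, irow in zip(img, illum)]

def multi_scale_retinex(img, sigmas=(15.0, 80.0, 250.0)):
    """MSR: equally weighted average of SSR outputs over several surround scales."""
    outs = [single_scale_retinex(img, s) for s in sigmas]
    h, w = len(img), len(img[0])
    return [[sum(o[y][x] for o in outs) / len(outs) for x in range(w)] for y in range(h)]
```

Because the output is a log ratio, multiplying the whole image by a constant illumination factor leaves it essentially unchanged; practical MSR variants then rescale the log output to a display range and add a color restoration step, which this sketch omits.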

(3) Fusion-based methods

The image fusion algorithm fuses multiple images of the same scene so that their complementary information yields richer and more accurate image information after enhancement (Table 3).

Table 3. Underwater image enhancement methods based on image fusion algorithms.

| Author | Algorithm | Contribution |
|---|---|---|
| Ancuti et al. [23][41] | White balancing and histogram equalization produce two input images, which a multiscale fusion algorithm integrates | Reduces noise, improves global contrast, and significantly enhances edges and details; applied to underwater video enhancement |
| Ancuti et al. [24][25][42,43] | Image is synthesized from complementary information in multiple images, with optimized generation of the fused inputs and definition of the weights | More informative and clearer images with improved exposure and preserved edges |
| Pan et al. [26][44] | Laplacian pyramid fusion of a dehazed image and a color-corrected image | Enhances underwater image contrast and removes color bias |
| Chang et al. [27][45] | Transmission map fusion based on optical properties and image knowledge | Foreground clarity improves, while the background remains somewhat blurred and looks more natural |
| Gao et al. [28][46] | Local contrast correction (LCC) combined with multiscale fusion: locally contrast-corrected images are fused with sharpened images | Addresses color distortion, low contrast, and indistinct details in underwater images |
| Song et al. [29][47] | White balancing and global stretching combined with an updated saliency-weight strategy that uses contrast and spatial cues for high-quality fusion | Eliminates color deviation, achieves high-quality fusion, and gives a better dehazing effect |
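
The core of the fusion step is a per-pixel weighted average of several derived inputs, with weights normalized to sum to one at each pixel. The sketch below uses a single well-exposedness weight (favoring mid-range intensities) and fuses at a single scale. Both are simplifying assumptions: the methods in Table 3 combine several weight maps (contrast, saliency, saturation) and blend them with a multiscale Laplacian pyramid to avoid halos. The function names are hypothetical.

```python
import math

def exposedness(v, sigma=0.25):
    """Weight favoring mid-range intensities (an assumed, simplified quality cue)."""
    return math.exp(-((v - 0.5) ** 2) / (2 * sigma ** 2))

def fuse(inputs, eps=1e-12):
    """Single-scale fusion of same-sized grayscale images given as flat lists in [0, 1].

    Each output pixel is a weighted average of the inputs at that pixel, with the
    per-pixel weights normalized to sum to 1.
    """
    fused = []
    for values in zip(*inputs):  # the same pixel across all input images
        weights = [exposedness(v) for v in values]
        total = sum(weights) + eps
        fused.append(sum(w * v for w, v in zip(weights, values)) / total)
    return fused

# Two hypothetical derived inputs of the same scene, e.g. white-balanced and contrast-enhanced.
a = [0.1, 0.5, 0.9]
b = [0.5, 0.5, 0.5]
print(fuse([a, b]))
```

Blending directly in the pixel domain, as here, can leave visible seams where weights change abruptly; pyramid-based fusion blends low frequencies over large regions and fine details locally, which is why the published methods use it.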

2.2. Physical Model-Based Enhancement Algorithms

Unlike non-physical model enhancement algorithms, physical model-based algorithms analyze the imaging process and invert the imaging model to obtain a clear image, improving image quality at the level of the imaging principle. This approach is also known as image restoration. Underwater imaging models therefore play a crucial role in physical model-based methods, and the Jaffe–McGlamery underwater imaging model is one of the most widely used restoration models.

(1) Polarization-based methods

Underwater image restoration methods based on polarization imaging exploit the polarization characteristics of scattered light to separate scene light from scattered light, estimate the intensity and transmission coefficient of the scattered light, and thereby restore the image (Table 4).

Table 4. Underwater image restoration algorithms based on polarization.

| Author | Algorithm | Contribution |
|---|---|---|
| Schechner et al. [30][51] | Uses the polarization of underwater scattering to recover underwater images | Improved visibility and contrast |
| Namer et al. [31][52] | Degree of polarization and intensity of background light are estimated from polarized images | More accurate depth-map estimation |
| Chen et al. [32][53] | Compensates artificially illuminated areas when present | Eliminates the effects of artificial lighting on underwater images |
| Han et al. [33][54] | Considers backscattering and changes the light source to alleviate the scattering effect | Proposes point spread function estimation based on light polarization |
| Ferreira et al. [34][55] | Polarization parameters are estimated by bio-inspired optimization, with a no-reference quality measure as the restoration cost function | Better visual quality and adaptability |
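
A worked sketch of polarization-based descattering for one pixel and channel, following the model popularized by Schechner and Karpel: two frames are captured through a polarizer at the orientations giving maximum and minimum intensity, the backscatter is assumed partially polarized with degree p while the object signal is assumed unpolarized, and the backscatter at infinity B∞ is assumed known (in practice it is estimated from a water-only background region). The function name and parameter names are hypothetical.

```python
def polarization_descatter(i_max, i_min, p, b_inf, t_min=0.1):
    """Recover object radiance from two polarizer-filtered intensities (one pixel, one channel).

    i_max, i_min -- intensities at the polarizer orientations of maximum and minimum brightness
    p            -- degree of polarization of the backscatter (assumed known)
    b_inf        -- backscatter at infinite distance (assumed known)
    """
    i_total = i_max + i_min                    # total intensity without a polarizer
    backscatter = (i_max - i_min) / p          # polarized part attributed to backscatter
    t = max(1.0 - backscatter / b_inf, t_min)  # transmission, clamped to avoid blow-up
    return (i_total - backscatter) / t         # object radiance with attenuation undone

# Synthetic check: radiance 0.6, t = 0.5, B_inf = 0.8, p = 0.5 gives i_max = 0.45, i_min = 0.25.
print(polarization_descatter(0.45, 0.25, p=0.5, b_inf=0.8))  # about 0.6
```

The estimated transmission also encodes relative distance (t = exp(-c·d)), which is how polarization methods such as Namer et al. obtain depth maps as a by-product.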

(2) Dark channel prior–based methods

He et al. [4][10] proposed the dark channel prior (DCP) algorithm. Statistics of haze-free images show that in most local regions at least one color channel contains pixels with very low intensity; the minimum over the color channels within a local patch is called the dark channel. The dark channel prior is used to estimate the transmission map and the atmospheric light, and the atmospheric scattering model is then inverted to restore the image.

The DCP algorithm has excellent dehazing performance. When it is applied to underwater images, however, the dark channel is distorted because water strongly absorbs red light, so the red channel is dark almost everywhere. Underwater DCP algorithms are therefore usually modified to account for this property. Table 5 lists underwater-specific DCP algorithms.

Table 5. Underwater image restoration algorithms based on the DCP algorithm.

| Author | Algorithm | Contribution |
|---|---|---|
| Yang et al. [35][57] | Median filtering is used to estimate the depth of field, and a color correction method is introduced | Improves computation speed and contrast |
| Chiang et al. [36][58] | Combined wavelength compensation and image dehazing (WCID) | Corrects image blurring where artificial light is present |
| Drews et al. [37][38][59,60] | Underwater dark channel prior (UDCP) that considers only the blue and green channels | More pronounced dehazing effect on underwater images |
| Galdran et al. [39][61] | DCP improved by minimizing over the inverted red channel together with the blue and green channels | Handles artificially lit areas and improves color fidelity |
| Li et al. [40][62] | Red channel corrected with the gray world algorithm; blue and green channels restored with the DCP algorithm | Significantly improves visibility and contrast |
| Meng et al. [41][63] | Different strategies (color balance or DCP) selected to restore the RGB channels, combined with maximum a posteriori (MAP) sharpening | Removes underwater color casts, reduces blur, improves visibility, and better retains foreground textures |
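
The dark channel computation and the resulting transmission estimate t(x) = 1 − ω · dark(I/A) can be sketched as below, with ω = 0.95 as in He et al. Passing `channels=(1, 2)` restricts the minimum to green and blue, as in UDCP-style methods. The background light A is assumed already known (in practice it is estimated from the brightest dark-channel pixels), and the function names are hypothetical.

```python
def dark_channel(img, radius=1, channels=(0, 1, 2)):
    """Minimum over a (2r+1) x (2r+1) patch of the minimum over the selected color channels.

    img is an H x W list of [R, G, B] values in [0, 1]; channels=(1, 2) ignores red (UDCP-style).
    """
    h, w = len(img), len(img[0])
    per_pixel = [[min(img[y][x][c] for c in channels) for x in range(w)] for y in range(h)]
    return [[min(per_pixel[yy][xx]
                 for yy in range(max(0, y - radius), min(h, y + radius + 1))
                 for xx in range(max(0, x - radius), min(w, x + radius + 1)))
             for x in range(w)] for y in range(h)]

def estimate_transmission(img, background, radius=1, omega=0.95, channels=(0, 1, 2)):
    """t(x) = 1 - omega * dark_channel(I / A), with the background light A assumed known."""
    normalized = [[[px[c] / background[c] for c in range(3)] for px in row] for row in img]
    dark = dark_channel(normalized, radius, channels)
    return [[1.0 - omega * d for d in row] for row in dark]

# A hazy blue-green patch whose red channel is almost fully absorbed.
patch = [[[0.02, 0.5, 0.6], [0.03, 0.55, 0.65]],
         [[0.01, 0.6, 0.7], [0.02, 0.5, 0.6]]]
print(dark_channel(patch))                 # near zero everywhere: plain DCP sees "no haze"
print(dark_channel(patch, channels=(1, 2)))  # green-blue dark channel reveals the haze
```

The example shows the failure described above: with red included, the near-zero red values make the dark channel vanish, so standard DCP would estimate a transmission close to 1 and leave the haze in place.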

(3) Integral imaging-based methods

Integral imaging is based on a multi-lens stereo vision system: a lens array or camera array quickly captures information about the target from different perspectives, and all elemental images (each recording the three-dimensional object from a different perspective) are combined into an elemental image array (EIA) (Table 6).

Table 6. Underwater image restoration algorithms based on the integral imaging.

| Author | Algorithm | Contribution |
|---|---|---|
| Cho et al. [42][64] | Uses statistical image processing and computational 3D reconstruction algorithms to remedy the effects of scattering | First report of 3D reconstruction of objects in turbid water using integral imaging |
| Lee et al. [43][65] | Applies spectral analysis and introduces a signal model with a visibility parameter to analyze the scattered signal | Reconstructed images show better color, edges, and details |
| Satoruet et al. [44][66] | Bayesian scattering suppression combined with maximum a posteriori estimation | Achieves a higher structural similarity index measure |
| Neumann et al. [45][67] | Three-dimensional reconstruction combined with the gray-world assumption applied in the Ruderman opponent color space | Accounts for locally changing luminance and chrominance |
| Bar et al. [46][68] | Combines single-shot multi-view circularly polarized speckle images captured by a lens array with a deconvolution algorithm based on multiple medium sub-PSFs across viewpoints | Improves recovery of objects hidden in turbid liquids |
| Li et al. [47][69] | Reconstructs images captured in a high-loss underwater environment with photon-limited computational algorithms | Improves PSNR in the high-noise regime |
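
The reconstruction step shared by integral imaging methods can be illustrated with computational integral imaging reconstruction (CIIR) by shift-and-average back-projection: each elemental image is shifted by a disparity proportional to its lens index and the overlapping pixels are averaged. Points on the chosen depth plane line up and reinforce one another, while uncorrelated scattering noise is averaged down. This sketch assumes a single row of lenses and an integer pixel disparity already known for the target depth; real systems use 2-D arrays and depth-dependent magnification. The function name is hypothetical.

```python
def reconstruct_plane(elemental, shift):
    """Shift-and-average reconstruction of one depth plane from a row of elemental images.

    elemental -- list of K elemental images, each an H x W list of floats
    shift     -- pixel disparity between neighboring lenses for the chosen depth (assumed known)
    """
    k = len(elemental)
    h, w = len(elemental[0]), len(elemental[0][0])
    out_w = w + (k - 1) * shift
    acc = [[0.0] * out_w for _ in range(h)]
    cnt = [[0] * out_w for _ in range(h)]
    for i, img in enumerate(elemental):
        off = i * shift  # lens i sees the depth plane displaced by i * shift pixels
        for y in range(h):
            for x in range(w):
                acc[y][x + off] += img[y][x]
                cnt[y][x + off] += 1
    # Average the overlapping contributions at each output pixel.
    return [[a / c if c else 0.0 for a, c in zip(arow, crow)] for arow, crow in zip(acc, cnt)]

# A point at the chosen depth appears one pixel further left in each successive lens.
views = [[[0, 0, 0, 1, 0]], [[0, 0, 1, 0, 0]], [[0, 1, 0, 0, 0]]]
print(reconstruct_plane(views, 1))
```

Repeating the reconstruction for a range of `shift` values yields a focal stack, from which the depth of each object can be read as the plane where it appears sharpest.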

3.1. Convolutional Neural Network Methods

LeCun et al. [5][11] first proposed the convolutional neural network architecture LeNet. A convolutional neural network is a deep feedforward artificial neural network composed of multiple convolutional layers that extract feature representations ranging from low-level details to high-level semantics, and it is widely used in computer vision. CNN-based underwater image enhancement algorithms can be divided into methods combined with a physical model and non-physical model methods, according to whether they use a physical imaging model for restoration.

(1) Combined physical methods

Traditional model-based underwater image enhancement methods usually estimate the transmission map and other parameters of the underwater image from prior knowledge and hand-crafted strategies, so the estimates adapt poorly to varied scenes. Methods combined with a physical model instead use the strong feature extraction ability of CNNs to solve for the parameters of the imaging model, such as the transmission map. In this process, the CNN replaces the assumptions or prior knowledge used in traditional methods, such as the dark channel prior (Table 7).

Table 7. Underwater image enhancement method combined with physical model CNN.

| Author | Algorithm | Contribution |
|---|---|---|
| Shin et al. [48][71] | Learns the transmission map and background light of the underwater image simultaneously | Good dehazing performance |
| Ding et al. [49][72] | Adaptive color correction for color compensation of underwater images, combined with a CNN model | Reduces image blur |
| Wang et al. [50][73] | Color correction and dehazing trained simultaneously; a pixel disrupting strategy optimizes training | Improves convergence speed and accuracy of the learning process |
| Barbosa et al. [51][74] | A set of image quality metrics guides the restoration process, and the network is trained on simulated data | Avoids the difficulty of measuring real scene data |
| Hou et al. [52][75] | Underwater residual CNN built on residual learning | Combines data-driven and model-driven approaches |
| Cao et al. [53][76] | CNN learns background light and transmission maps directly from input images | Reveals more image detail |
| Wang et al. [54][77] | Parallel CNNs estimate the transmission map and background light | Prevents halos and preserves edge features |
| Li et al. [55][78] | Underwater image enhancement network with medium transmission-guided multi-color space embedding | Exploits multi-color-space embedding and combines the advantages of physical model-based and learning-based methods |
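
Once a network has predicted the transmission and background light, methods combined with a physical model recover the clear image by inverting the simplified formation model I = J·t + B·(1 − t), that is J = (I − B)/t + B. The sketch below performs only this inversion for one RGB pixel; the transmission and background values are supplied by the caller and stand in for network outputs (an assumption for illustration). The lower bound `t0` is a common safeguard, and the function name is hypothetical.

```python
def restore_pixel(observed, transmission, background, t0=0.1):
    """Invert I = J * t + B * (1 - t) per channel: J = (I - B) / max(t, t0) + B.

    observed, transmission, background are per-channel lists; in CNN-based methods the
    last two would be network predictions, here they are plain inputs.
    """
    # Clamping t keeps noise from being amplified where the transmission is tiny.
    return [(i - b) / max(t, t0) + b for i, t, b in zip(observed, transmission, background)]

# Round trip: degrade a known pixel with the forward model, then restore it.
clear = [0.8, 0.4, 0.2]
t = [0.3, 0.7, 0.8]
bg = [0.1, 0.5, 0.6]
observed = [j * tt + b * (1 - tt) for j, tt, b in zip(clear, t, bg)]
print(restore_pixel(observed, t, bg))  # recovers approximately [0.8, 0.4, 0.2]
```

The quality of the result depends entirely on how accurately t and B are estimated, which is exactly the part the CNNs in Table 7 learn in place of hand-crafted priors.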

(2) Non-physical model methods

In non-physical model methods, the raw underwater image is fed directly into the network, relying on the strong learning ability of CNNs, and the enhanced image is output after convolution, pooling, deconvolution, and other operations (Table 8).

Table 8. Underwater image enhancement methods of non-physical model CNNs.

| Author | Algorithm | Contribution |
|---|---|---|
| Perez et al. [56][79] | Deep learning is used to learn a mapping between degraded and clear images | First use of deep learning for underwater image enhancement |
| Sun et al. [57][80] | Underwater image enhancement with an encoder–decoder structure | Significant denoising effect and enhanced image details |
| Li et al. [58][81] | Gated fusion network with inputs derived by white balance, histogram equalization, and gamma correction | Reference model for underwater image enhancement with good generalization performance |
| Li et al. [59][82] | Training data synthesized by combining an underwater imaging physical model with the optical characteristics of underwater scenes | Retains the original structure and texture while reconstructing a clear underwater image |
| Naik et al. [60][83] | Shallow neural network built from convolutional blocks with skip connections | Maintains performance with fewer parameters and faster speed |
| Han et al. [61][84] | Deep supervised residual dense network that uses residual dense blocks, adds residual path blocks between the encoder and decoder, and employs deep supervision to guide training | Retains local image details while performing color restoration and dehazing |
| Yang et al. [62][85] | A non-parametric layer for preliminary color correction and parametric layers for self-adaptive refinement form a trainable end-to-end model | Better details, contrast, and colorfulness |
| Wang et al. [63][86] | Integrates the RGB and HSV color spaces in a single CNN | Addresses the insensitivity of RGB color space to properties such as luminance and saturation |
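
The building block behind these end-to-end networks can be sketched on a single-channel image: a zero-padded convolution, a ReLU nonlinearity, and a residual (skip) connection that adds the input back so the layer only has to learn a correction. The kernel and bias here are hand-picked placeholders rather than trained weights, and real networks stack many multichannel layers learned from paired data; the function names are hypothetical.

```python
def conv2d_same(img, kernel, bias=0.0):
    """Odd-sized 2-D cross-correlation with zero padding (output size equals input size)."""
    h, w = len(img), len(img[0])
    r = len(kernel) // 2
    out = [[bias] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for ky in range(-r, r + 1):
                for kx in range(-r, r + 1):
                    yy, xx = y + ky, x + kx
                    if 0 <= yy < h and 0 <= xx < w:  # pixels outside the image count as zero
                        out[y][x] += kernel[ky + r][kx + r] * img[yy][xx]
    return out

def residual_block(img, kernel, bias=0.0):
    """out = input + ReLU(conv(input)): the layer learns a correction, not the whole image."""
    feat = conv2d_same(img, kernel, bias)
    return [[v + max(0.0, f) for v, f in zip(row, frow)] for row, frow in zip(img, feat)]

# Placeholder 3x3 kernel (identity), so the block simply doubles the input.
identity = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
print(residual_block([[1.0, 2.0], [3.0, 4.0]], identity))
```

The skip connection means that a block whose convolution outputs nothing useful (all negative before the ReLU) passes the image through unchanged, which is one reason residual designs train stably and suit shallow enhancement networks.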

3.2. Generative Adversarial Network-Based Methods

The generative adversarial network (GAN) was proposed by Goodfellow et al. [6][12]. A GAN produces better outputs through adversarial training of a generator and a discriminator. The generator learns to produce images as similar to real images as possible, so that the discriminator cannot tell real images from generated ones, while the discriminator learns to judge whether an image is generated or real. As long as the discriminator is not fooled, the generator keeps learning. The process is shown in Figure 5. In enhancement, the input of the generator is a low-quality image and its output is a generated image. The discriminator takes generated images and real samples as input and outputs the probability, between 0 and 1, that an image is real. As an excellent generative model, GAN is widely used in image generation, image enhancement and restoration, and image style transfer (Tables 9 and 10).

Table 9. Underwater image enhancement methods based on CGAN.

| Author | Algorithm | Contribution |
|---|---|---|
| Li et al. [64][91] | WaterGAN, a generative adversarial network that synthesizes underwater images from in-air images and depth maps to build a training dataset | Builds a two-stage deep learning network using raw underwater images, real in-air color images, and depth maps |
| Guo et al. [65][92] | New multiscale dense generative adversarial network (UWGAN) | Multiscale operations, dense cascading, and residual learning improve performance, render more detail, and make full use of features |
| Liu et al. [66][93] | Multiscale feature fusion network for underwater image color correction (MLFcGAN) that fuses multiscale global and local features in the generator | Enables more effective and faster online learning |
| Yang et al. [67][89] | Dual discriminator captures local and global semantic information to constrain the multiscale generator | More realistic and natural generated images |
| Li et al. [68][94] | Simple and effective fusion adversarial network that uses a fusion method and combines four different losses | Corrects color and performs well in both qualitative and quantitative evaluations |
| Liu et al. [69][95] | Combines the Akkaynak–Treibitz model with a generative adversarial network | Clear results with good white balance, visually close to the ground-truth images |
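
The adversarial game described above is usually trained with binary cross-entropy: the discriminator is pushed toward outputting 1 for real (clear) images and 0 for generated ones, while the generator is rewarded when the discriminator labels its output as real. The sketch below computes these losses for single probabilities, using the non-saturating generator loss from Goodfellow et al.; the function names are hypothetical, and the underwater methods in Tables 9 and 10 add further terms (pixel, perceptual, or gradient losses) that are not shown.

```python
import math

def bce(prob, label, eps=1e-12):
    """Binary cross-entropy for a probability in (0, 1) and a 0/1 label."""
    prob = min(max(prob, eps), 1.0 - eps)  # clamp to keep log() finite
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))

def discriminator_loss(d_real, d_fake):
    """D should output 1 for real (clear) images and 0 for generated ones."""
    return bce(d_real, 1) + bce(d_fake, 0)

def generator_loss(d_fake):
    """Non-saturating generator loss: G is rewarded when D labels its output as real."""
    return bce(d_fake, 1)

print(discriminator_loss(0.5, 0.5))  # 2 ln 2, about 1.386: D cannot tell real from generated
print(generator_loss(0.9), generator_loss(0.1))  # G's loss is lower when it fools D
```

In a conditional GAN (CGAN), both networks additionally receive the degraded underwater image as input, so the discriminator judges whether an output is a plausible enhancement of that particular input rather than just a plausible image.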

Table 10. Underwater image enhancement and restoration based on CycleGAN.

| Author | Algorithm | Contribution |
|---|---|---|
| Fabbri et al. [70][96] | Trained on unpaired underwater images; generated clear images and the corresponding degraded images then form a training set | Adds absolute-error and gradient losses to the loss function |
| Lu et al. [71][97] | Underwater image restoration with a multiscale CycleGAN; builds an adaptive restoration process that uses the dark channel prior to obtain depth information | Improves image quality and detail structure; performs well in contrast enhancement and color correction |
| Park et al. [72][98] | Adds a pair of discriminators to CycleGAN and introduces adaptive weighting to limit the losses of the two discriminators | Stable training process |
| Islam et al. [73][99] | Supervises training on global content, image content, local texture, and style information | Good color restoration and sharpening; fast enough for underwater video enhancement |
| Hu et al. [74][100] | Adds the natural image quality evaluator (NIQE) index to the GAN | Produces images with higher contrast and attempts to surpass the ground-truth images of existing datasets |
| Zhang et al. [75][101] | End-to-end dual generative adversarial network (DuGAN) whose two discriminators perform adversarial training on different image regions | Restores detail textures and color degradation |
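
Training on unpaired images relies on the cycle-consistency constraint of CycleGAN, used alongside the adversarial losses: a generator G maps degraded images to clear ones, a second generator F maps clear images back to degraded ones, and both round trips should return the original input. The sketch below computes this L1 cycle loss; plain Python callables stand in for the two networks (an assumption for illustration), and the function names are hypothetical.

```python
def l1(a, b):
    """Mean absolute error between two equal-length flat images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def cycle_consistency_loss(x_under, y_clear, g, f):
    """CycleGAN cycle loss: F(G(x)) should return x, and G(F(y)) should return y.

    g maps degraded -> clear and f maps clear -> degraded; both are stand-in callables here.
    """
    return l1(f(g(x_under)), x_under) + l1(g(f(y_clear)), y_clear)

# Toy "networks": g brightens with clipping at 1.0, f darkens.
g = lambda img: [min(1.0, 2 * v) for v in img]
f = lambda img: [v / 2 for v in img]
print(cycle_consistency_loss([0.1, 0.2, 0.4], [0.6, 0.8], g, f))  # 0.0: both cycles are exact
print(cycle_consistency_loss([0.8], [0.6], g, f))  # > 0: clipping in g loses information
```

The second call shows why the constraint helps: any mapping that discards information (here, clipping bright values) cannot be undone, so the cycle loss penalizes it even though no paired ground truth is available.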

4. Underwater Video Enhancement

With the development of underwater video acquisition and data communication technology, real-time underwater video transmission has become possible. Because underwater video carries spatiotemporal information and motion characteristics, it has broader application prospects in ocean development than underwater images. Owing to the same optical properties, underwater video suffers from problems similar to those of underwater images, such as color bias, blur, low contrast, and uneven illumination. In addition, water flow disturbs the acquisition equipment, so the texture features and details of moving objects are weakened or lost. These problems seriously impair the ability of underwater video systems to capture scene and object features accurately. Unlike atmospheric video enhancement, which tends to address blur and jitter, underwater video enhancement focuses on counteracting the effects of the unique optical environment on color and visibility.

Underwater video enhancement is more complicated than underwater image enhancement, and research in this direction has not yet reached a mature stage. Most existing underwater video enhancement methods are extensions of single-image enhancement algorithms: each frame is enhanced independently, and the frames are then reassembled into a new video. Because the transmission maps and background light estimated for consecutive frames differ, the continuity of the enhanced video is poorly maintained, and temporal artifacts and inter-frame flicker can occur (Table 11).

Table 11. Underwater video enhancement algorithms.

| Author | Algorithm | Contribution |
|---|---|---|
| Tang et al. [19][37] | Extracts features from subsampled low-resolution images; subsampling and an IIR Gaussian filter form a fast filter that performs fast two-dimensional convolution | Effectively relieves the computational load that grows with filter scale |
| Li et al. [59][82] | Each convolutional layer in UWCNN is not connected to the other convolutional layers of the same block, and the network uses no fully connected layers or batch normalization | The network is only 10 layers deep, which reduces computational cost and eases training |
| Islam et al. [73][99] | The generator learns only 256 feature maps of size 8 × 8, and the discriminator judges only patch-level information | The whole network requires only 17 MB of memory and computes more efficiently |
| Ancuti et al. [23][41] | Temporal bilateral filtering is applied to the white-balanced versions of the video frames, adding temporal information to the bilateral filter | Enhances sharpness, stabilizes smooth regions, smooths transitions between frames, and maintains temporal coherence |
| Li et al. [76][105] | Depth cues from stereo matching and fog information reinforce each other; the fog transmission of each pixel is computed directly from the scene depth and the estimated fog density | Eliminates the airlight–albedo ambiguity in defogging and maintains the temporal consistency of the defogged video |
| Qing et al. [77][106] | Subsequent frames are guided by the grayscale image of the first frame and combined with the current frame's background light estimate to avoid frequent changes in the atmospheric light value | Reduces computational complexity and the flicker caused by changes in the transmission map and atmospheric light |
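
Several methods in Table 11 reduce flicker by keeping per-frame estimates of background (atmospheric) light consistent over time. A simple way to illustrate the idea, not the specific scheme of Qing et al. or any other cited work, is to smooth the per-frame RGB estimates with an exponential moving average before restoring each frame. The smoothing factor `alpha` and the function name are hypothetical choices.

```python
def smooth_background_light(per_frame_estimates, alpha=0.1):
    """Exponential moving average of per-frame background-light estimates (RGB triples).

    A small alpha lets the estimate follow slow scene changes while suppressing the
    frame-to-frame jumps that cause flicker when each frame is restored independently.
    """
    smoothed, state = [], None
    for est in per_frame_estimates:
        if state is None:
            state = list(est)  # initialize with the first frame's estimate
        else:
            state = [(1 - alpha) * s + alpha * e for s, e in zip(state, est)]
        smoothed.append(state)
    return smoothed

# Hypothetical jittery estimates alternating between two values.
frames = [[0.2, 0.6, 0.7], [0.3, 0.5, 0.8], [0.2, 0.6, 0.7], [0.3, 0.5, 0.8]]
for est in smooth_background_light(frames):
    print([round(v, 4) for v in est])
```

The trade-off is lag: a smaller `alpha` gives steadier output but responds more slowly when the camera genuinely moves into water with different optical properties.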

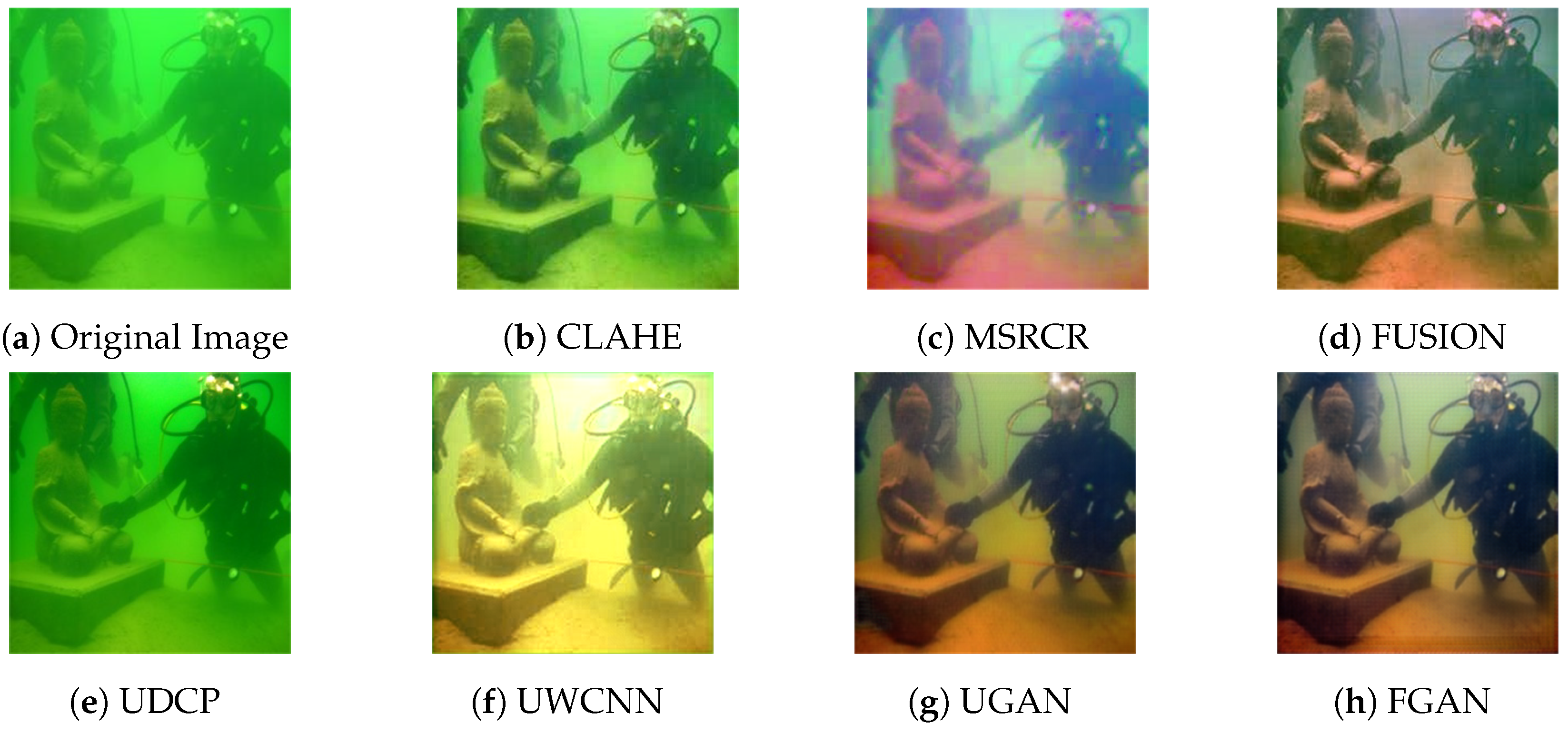

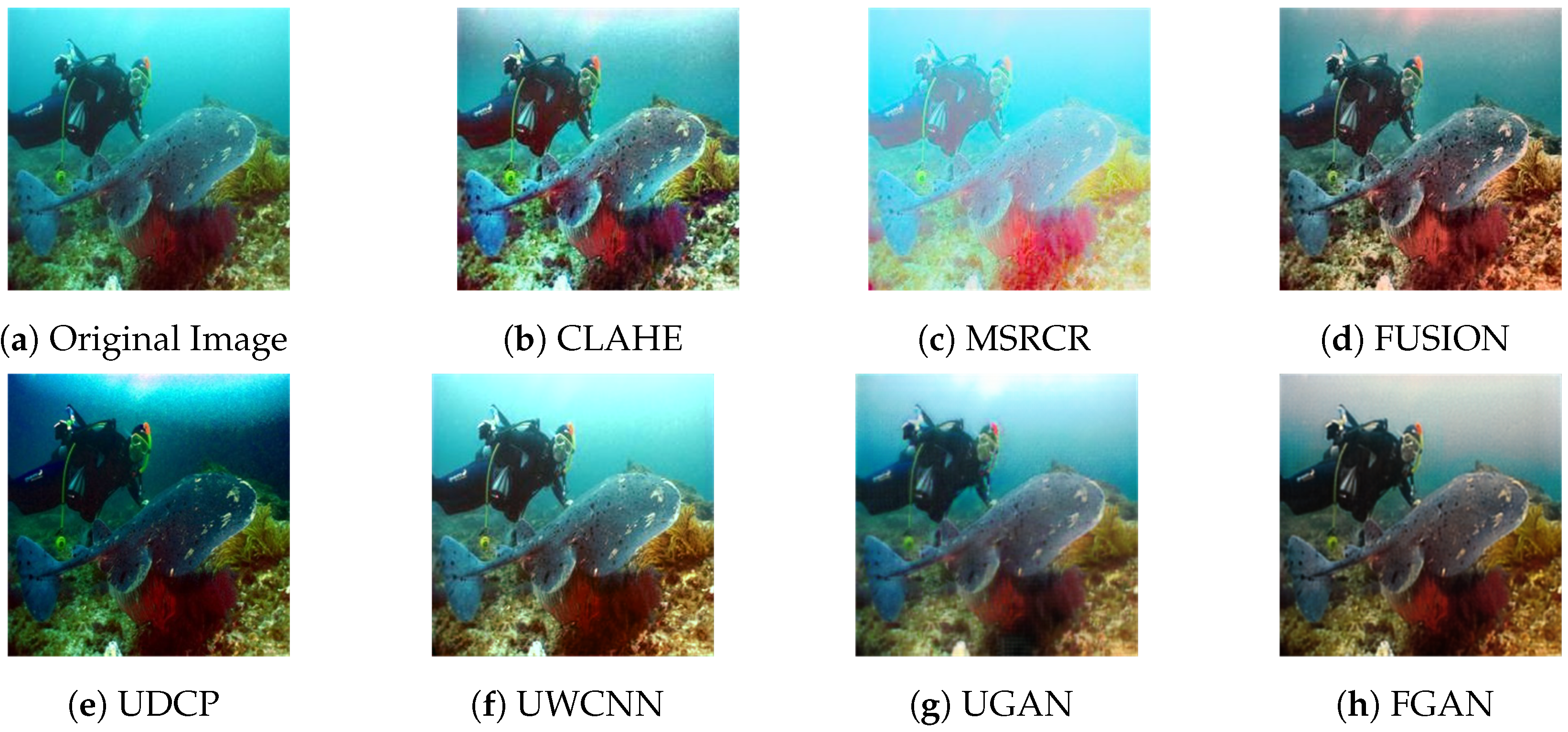

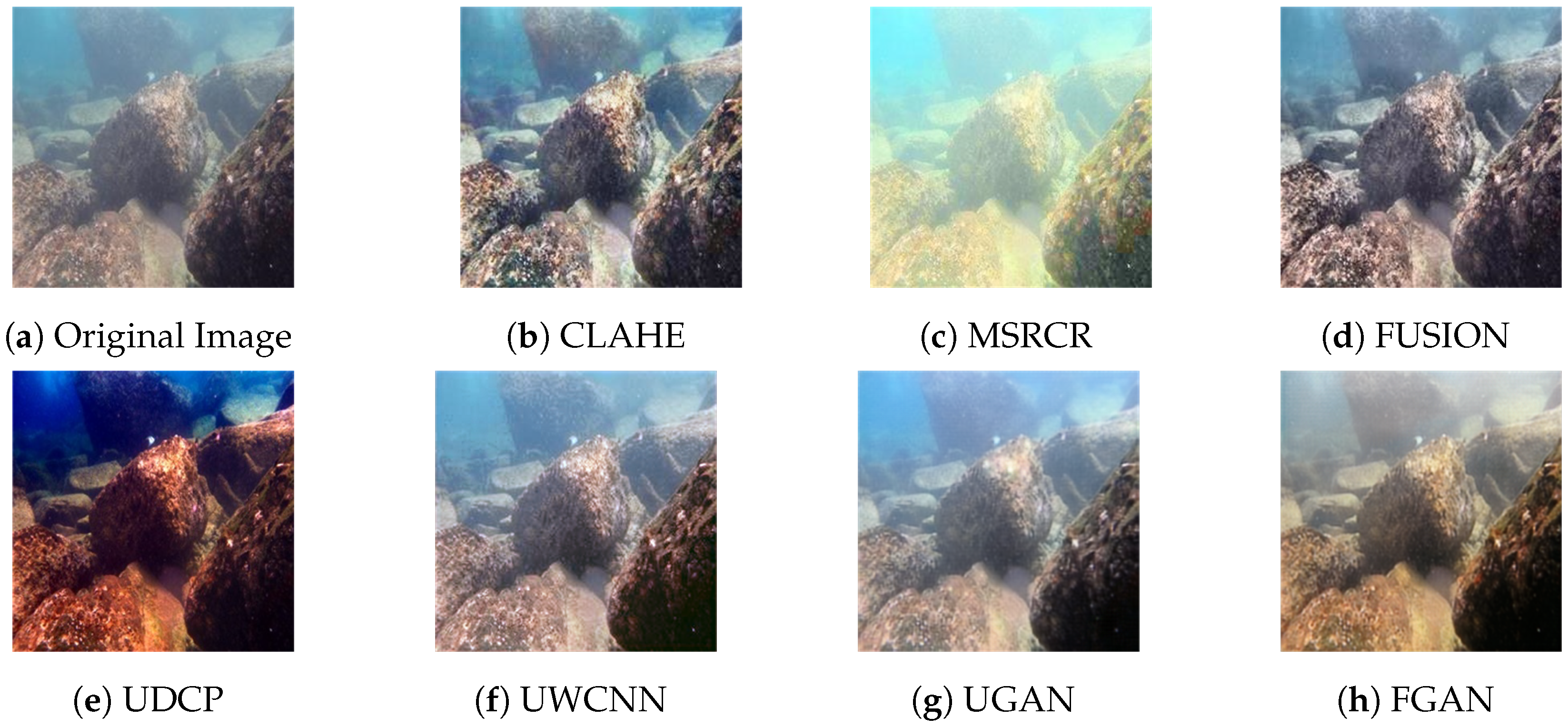

To verify the performance of these algorithms, typical algorithms from different categories were selected, including CLAHE [78][21], MSRCR [16][34], FUSION [24][42], UDCP [37][59], UWCNN [59][82], UGAN [70][96], and FGAN [73][99], and tested on a public underwater test dataset (U45) [79][134]. U45 covers the color casts, low contrast, and haze-like effects that typify low-quality underwater images. The results are shown in Figure 3, Figure 4, and Figure 5.

Figure 3. Enhanced results of color casts.


Figure 4. Enhanced results of low contrast.


Figure 5. Enhanced results of haze.
