
Please note this is a comparison between Version 2 by Vivi Li and Version 1 by Yosoon Choi.

Smart glasses are a type of wearable device that can be worn on the face, and they meet the original objective of enabling clearer vision in addition to functioning as a computer. Since Google released “Google Glass” in 2012, companies such as Sony, Microsoft, and Epson have launched their own smart glass products.

- smart glasses

- head-mounted display

- wearable device

- augmented reality

- mixed reality

1. Introduction

With the development of information and communication technology, various forms of wearable devices that can replace smartphones are receiving attention. Wearable devices are defined as “all devices capable of computing that can be worn on the body, including for applications that entail computing functions” [1]. Wearable devices can be used for diverse purposes through the installation of applications available on a mobile operating system (OS), thereby providing various functionalities in addition to those related to fashion and health.

Smart glasses are a type of wearable device that can be worn on the face, and they meet the original objective of enabling clearer vision in addition to functioning as a computer. Since Google released “Google Glass” in 2012, companies such as Sony, Microsoft, and Epson have launched their own smart glass products. Figure 1 shows an example of smart glasses, which provide the desired information to users through a display in the form of external glasses or binoculars. They support wireless communication technologies, such as Bluetooth and Wi-Fi, and can be used to search and share information in real time through an internet connection. In addition, by providing a location tracking function through the Global Positioning System (GPS), it is possible to develop various applications based on location information. As an interface for communication between smart glasses and users, a touch button or a natural language command processing method based on voice recognition is used. By using a camera mounted in front of the device, it is possible to acquire photographs or video data of the surrounding environment in real time [2].

Figure 1. Example of smart glasses (Moverio BT-350, Epson, Suwa, Japan).

In [3], the concept of virtual reality (VR) was introduced, and in [4], a head-mounted display (HMD) was first presented. Smart glasses are a technology based on optical HMDs (OHMDs), which comprise plastic objects placed at eye level, allowing the user to view an online digital world, an offline world, and the physical world [5]. Unlike smartphones and other wearable devices, smart glasses enable users to conduct tasks without extensive physical effort; for example, it is not necessary to use one’s hands or eyes repeatedly to interact with smart glasses [6]. In addition, smart glasses are used in a variety of applications, including information visualization, image data analysis, data processing, navigation, information transmission and sharing, and risk detection and warning [7][8][9][10][11][12].

2. Smart Glasses Research Trend

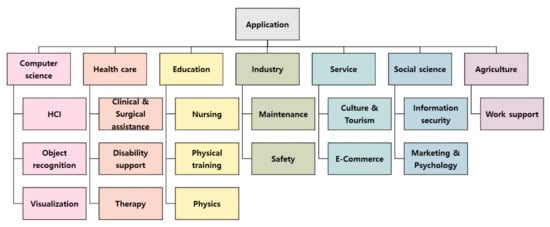

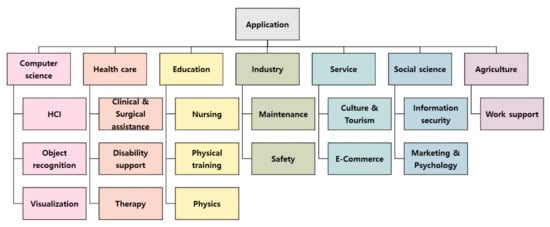

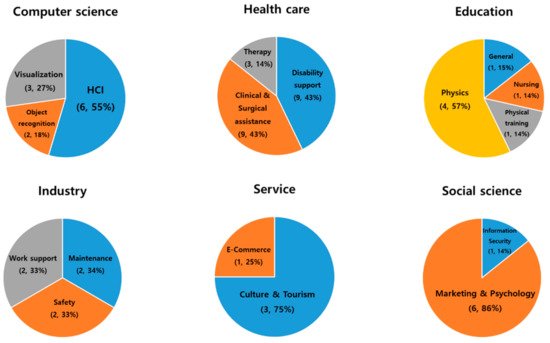

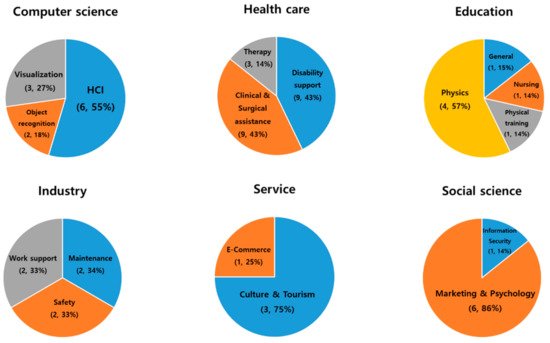

To analyze the areas in which smart glasses are actively researched, the 57 filtered studies were classified in two stages. In the first stage, they were classified into seven fields of application: computer science, healthcare, education, industry, service, social science, and agriculture. In the second stage, computer science was divided into human–computer interaction (HCI), object recognition, and visualization; healthcare into clinical and surgical assistance, disability support, and therapy; education into nursing, physical training, and physics; industry into maintenance and safety; service into culture and tourism and e-commerce; and social science into information security, marketing, and psychology. Finally, agriculture was further categorized as work support (Figure 2).

Figure 2. Structure of applied research fields and subfields.

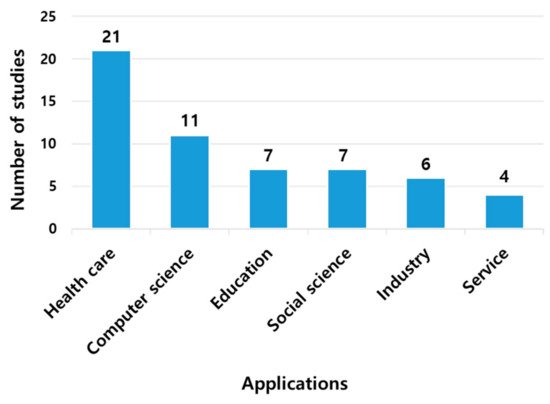

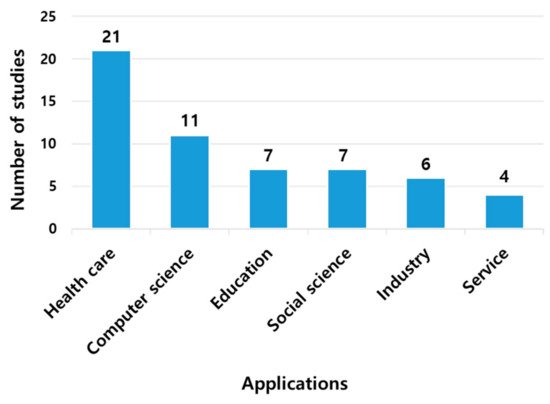

In the first-stage classification of the 57 studies, healthcare was the most active of the seven fields, with 21 studies (36%). Computer science accounted for 11 studies and social science for 7. Industry had six studies and service had four, placing them among the less-studied fields (Figure 3). In the second stage, the subfields in which smart glasses were employed and the corresponding ratios were analyzed (Figure 4).

Figure 3. Number of studies by application field.

Figure 4. Detailed distribution of smart glass applications.
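The field counts reported above can be turned into shares with a few lines of Python. This is only an illustrative sketch: the counts for healthcare, computer science, social science, industry, and service come from the text, the education count (seven) comes from the education discussion, and the agriculture count is an assumption derived as the remainder of the 57 studies. The helper name `shares` is hypothetical.

```python
# Study counts per application field, as reported in the text.
TOTAL_STUDIES = 57
field_counts = {
    "healthcare": 21,
    "computer science": 11,
    "social science": 7,
    "education": 7,  # stated in the education discussion
    "industry": 6,
    "service": 4,
}
# Assumption: agriculture accounts for whatever remains of the 57 studies.
field_counts["agriculture"] = TOTAL_STUDIES - sum(field_counts.values())


def shares(counts):
    """Return each category's share of the total as a percentage."""
    total = sum(counts.values())
    return {name: 100.0 * n / total for name, n in counts.items()}


field_shares = shares(field_counts)
for name, pct in sorted(field_shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<17}{field_counts[name]:>3} studies  {pct:5.1f}%")
```

Under these assumptions, healthcare comes out at 21/57, or roughly 36.8%, which corresponds to the 36% quoted in the text when truncated. The same helper could be applied to the subfield counts of any single field.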

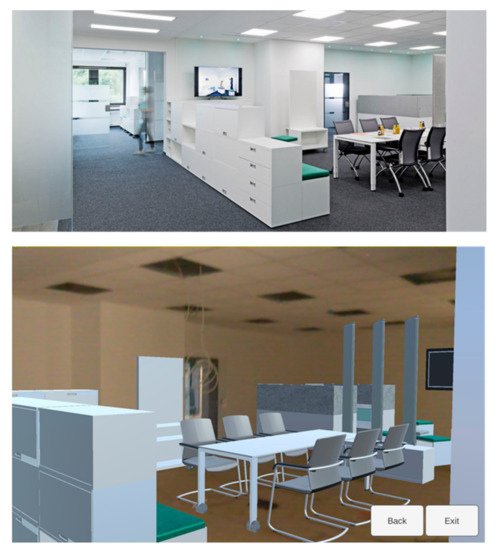

In computer science, research addresses HCI [13][14][15][16][17][18], the interaction technology between humans and computers; object recognition [19][20], which uses a camera to recognize what the user sees; and visualization [8][21][22], which presents data visually to users (Table 1). There are several research cases in the field of visualization. Riedlinger et al. [22] compared Google’s Tango tablet with Microsoft’s HoloLens smart glasses for visualizing building information modeling data. Sixteen participants solved four tasks using the two devices, and their evaluations of the interior design visualization and the visualization of the modeling data were analyzed. The analysis found that most users preferred the tablet, which allows one screen to be shared among multiple people. Although the smart glasses provided additional hands-free functionality and stability, the tablet left a more positive impression because users did not feel isolated in the virtual world. Figure 5 shows an augmented reality (AR) view of a space for socializing, which was completed for the comparison between tablets and smart glasses. Among the subfields of computer science, HCI had the highest proportion of studies at 55%, followed by visualization at 27% and object recognition at 18%. This distribution suggests that technologies that make interaction between smart glasses and users more convenient and efficient are being actively researched and developed, and that interest in the various forms of interaction between users and devices is particularly high.

Table 1. Summary of smart glass applications in the field of computer science.

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| HCI (Human–Computer Interaction) | Belkacem et al. [13] | 2019 | Inputting text into smart glasses using touch fiber |
| | Zhang et al. [14] | 2014 | Exploring the user’s visual interest through smart glasses |
| | Lee et al. [15] | 2019 | Development of technology to enter text through fingertip detection technology in the air without using a keyboard |
| | Lee et al. [16] | 2019 | |
| | Lee et al. [17] | 2020 | |
| | Lee et al. [18] | 2020 | |
| Object recognition | Park et al. [19] | 2020 | Effective object detection and object segmentation verification through wearable AR technology based on deep learning technology |
| | Chauhan et al. [20] | 2016 | Verification of biometric accuracy of smart glasses |
| Visualization | Pamparau [21] | 2020 | Development of technology to visualize digital contents |
| | Riedlinger et al. [22] | 2019 | Visualization comparison of hands-free (smart glass) versus non-hands-free (tablet) devices |
| | Aiordǎchioae et al. [8] | 2019 | Data cloud formation through tags extracted from images recorded with cameras in smart glasses |

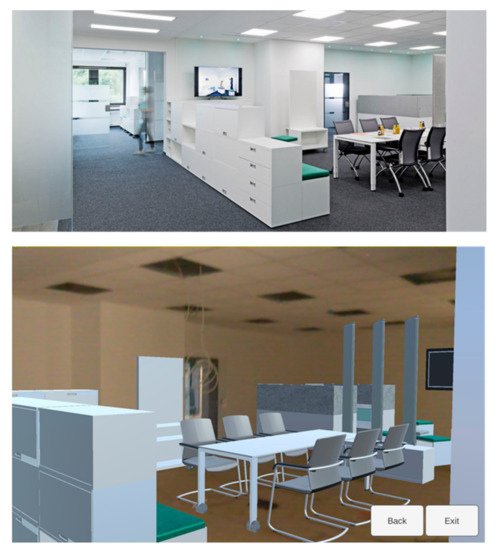

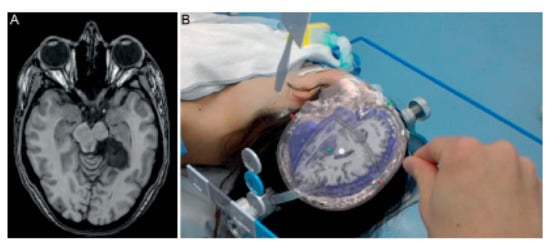

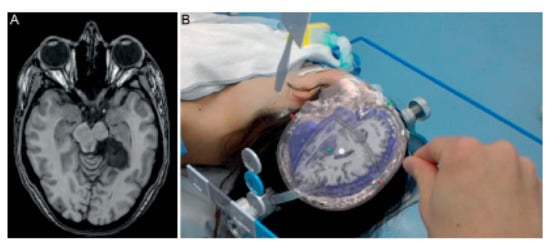

The field of healthcare is subdivided into clinical and surgical assistance [7][23][24][25][26][27][28][29][30], which develops technologies that use smart glasses to help workers at medical institutions; disability support [10][31][32][33][34][35][36][37][38], which assists people with physical or mental disabilities; and therapy [39][40][41], which supports the effective treatment of patients (Table 2). The following research case is from clinical and surgical assistance. van Doormaal et al. [24] evaluated the feasibility and accuracy of holographic neuronavigation (HN) using AR smart glasses. They programmed a neuronavigation system and evaluated its accuracy and feasibility in an operating room. The conventional neuronavigation fiducial registration error (CN FRE) was measured in a plastic head model with marked points, and the HN and CN FREs were measured in three patients (Figure 6). Although the accuracy of holographic navigation using commercially available smart glasses has not yet reached a clinically acceptable level, it was judged that the accuracy can be improved and that AR neuronavigation has significant potential. Clinical and surgical assistance and disability support each accounted for 9 of the 21 healthcare papers (43%), and three studies were conducted in the therapy field. This analysis indicates that smart glasses are applied fairly evenly across healthcare and assist users reliably; however, they are still difficult to employ in direct evaluation, and further technological development is therefore required.

Table 2. Summary of smart glass applications in the field of healthcare.

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Clinical and Surgical assistance | Yong et al. [23] | 2020 | Enhancing the learning experience for trainees practicing microsurgery techniques for science and surgery |
| | van Doormaal et al. [24] | 2019 | Determining the feasibility and accuracy of holographic neuronavigation (HN) using AR smart glasses |
| | Salisbury et al. [25] | 2018 | Objective measurement of concussion-related disorders through smart glasses |
| | Maruyama et al. [26] | 2018 | Assessing the accuracy of visualizing 3D graphics in neurosurgery |
| | Klueber et al. [27] | 2019 | Multiple patient monitoring |
| | García-Cruz et al. [28] | 2018 | Potential evaluation of smart eyeglasses in urology |
| | Ruminski et al. [29] | 2016 | Data exchange between medical information systems |
| | Seifabadi et al. [7] | 2020 | Verification of the AR system accuracy of smart glasses in percutaneous needle interventions |
| | Nag et al. [30] | 2020 | Analysis of emotional awareness of autistic children |
| Disability support | Rowe [31] | 2019 | Assisting people with peripheral vision loss through a risk detection and tracking system |
| | Meza-de-Luna et al. [32] | 2019 | Supporting social interaction (conversation) of people with visual impairments |
| | Schipor et al. [33] | 2020 | Improved convenience for visually impaired users through voice command input and control |
| | Chang et al. [34] | 2020 | Development of a system to improve the safety of the visually impaired when using drugs |
| | Lausegger et al. [35] | 2017 | Visual aid for people with color blindness |
| | Miller et al. [10] | 2017 | To improve the understanding of lectures for the hearing impaired |
| | Janssen et al. [36] | 2017 | Validation of gait assist effect in Parkinson’s disease patients |
| | Sandnes [37] | 2016 | Investigating the features that people with low vision need in smart glasses |
| | Ruminski et al. [38] | 2015 | Identifying the potential use of smart glasses in medical activities |
| Therapy | Machado et al. [39] | 2019 | Assisting people with autism to acquire daily life skills |
| | Liu et al. [40] | 2017 | System application using smart glasses for coaching to improve autism disorder |
| | Vahabzadeh et al. [41] | 2018 | Socio-emotional and behavioral function treatment of autistic students by intervening using smart glasses |

The service category was divided into culture and tourism [9][47][48], which enhances the understanding of artwork through vivid presentation using smart glasses, and e-commerce [49], which covers the purchasing of products through the internet or PC communication (Table 4). In culture and tourism, research has been conducted on museum visitors. To explore the use of smart glasses in museums, Mason [48] designed and implemented a glassware prototype that can provide visitors with a new interpretation experience. The interaction between the glasses and users was tested in a field experiment with 12 visitors to the robot gallery at the MIT Museum, who were observed and interviewed during the experiment. An analysis of the qualitative data collected through the interviews indicated that the use of smart glasses makes it possible to better appreciate art. Culture and tourism accounted for 75% of the research in the service field, whereas research on smart glasses in e-commerce was judged to be still inactive in comparison, owing to security issues.

Table 4. Summary of smart glass applications in the service field.

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Culture and Tourism | Han et al. [9] | 2019 | Providing a framework for adopting smart glasses in cultural tourism |
| | tom Dieck et al. [47] | 2015 | Improving the understanding of art and analyzing the effects of using smart glasses in museums |
| | Mason [48] | 2016 | |
| E-Commerce | Ho et al. [49] | 2018 | Ticketing without input after automatically recognizing the license plate using smart glasses |

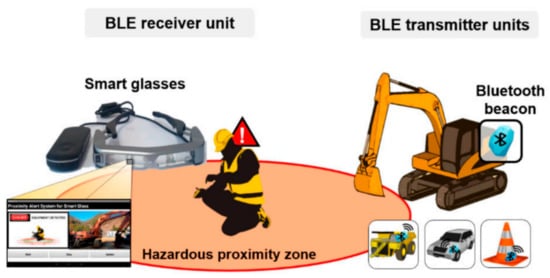

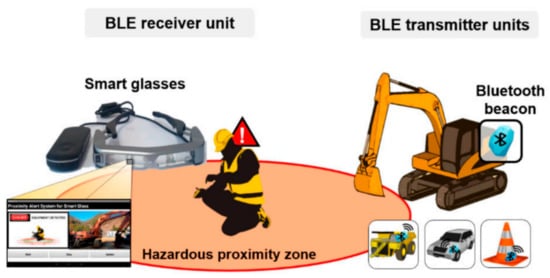

The industry field includes technical research on maintenance [42][43], safety [11][44], and work support [45][46] (Table 3). The following study is from the safety field. Baek and Choi [11] developed a smart glass-based wearable personal proximity warning system (PWS) for the safety of pedestrians at construction and mining sites. The smart glasses receive a signal transmitted by a Bluetooth beacon attached to a heavy machine or vehicle and provide a visual warning to the wearer based on the signal strength (Figure 7). Regardless of the direction in which the pedestrian is looking, all warnings are issued normally over a distance of 10 m or more. In addition, the workload index of 10 subjects was evaluated under the same conditions using a smartphone-based PWS and a smart glass-based PWS. The mental, temporal, and physical demand scores were lower when the smart glass-based PWS was used. The evaluation results showed that a PWS based on smart glasses can free both hands of pedestrians, improve work efficiency, and help enhance the safety of pedestrians at construction and mining sites. In addition to safety, two studies each were conducted on maintenance and work support. The detailed analysis shows that research in the industry field is not biased toward any subfield, with two studies in each of the three.

Figure 7. Conceptual view of the personal proximity warning system (PWS) comprising a Bluetooth low energy (BLE) receiver unit and BLE transmitter units [11].
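The signal-strength-based warning described for the PWS can be illustrated with a minimal Python sketch. It is not the implementation from [11]: the threshold values, the two warning levels, the `BeaconReading` type, and the function names are all hypothetical and chosen only to show how a received signal strength indicator (RSSI) could be mapped to a visual warning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BeaconReading:
    beacon_id: str   # hypothetical identifier of the beacon on a machine or vehicle
    rssi_dbm: float  # received signal strength; closer to 0 means a stronger signal


# Hypothetical thresholds for illustration only; real values need field calibration.
CAUTION_RSSI_DBM = -80.0
DANGER_RSSI_DBM = -65.0


def warning_level(rssi_dbm: float) -> str:
    """Map a single RSSI value to a warning level."""
    if rssi_dbm >= DANGER_RSSI_DBM:
        return "danger"
    if rssi_dbm >= CAUTION_RSSI_DBM:
        return "caution"
    return "none"


def strongest_warning(readings):
    """Pick the strongest beacon signal and return (level, beacon_id)."""
    if not readings:
        return ("none", None)
    nearest = max(readings, key=lambda r: r.rssi_dbm)
    return (warning_level(nearest.rssi_dbm), nearest.beacon_id)


readings = [BeaconReading("excavator", -90.0), BeaconReading("truck", -60.0)]
print(strongest_warning(readings))
```

Because the warning depends only on signal strength, a sketch like this does not need to know which way the wearer is facing, in line with the direction-independent behavior reported in the text. In practice, RSSI fluctuates, so some smoothing over successive readings would likely be needed.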

Table 3. Summary of smart glass applications in the industry field.

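| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Maintenance | Wolfartsberger et al. [42] | 2020 | Development of technology that can support maintenance work on the job site |
| | Siltanen and Heinonen [43] | 2020 | |
| Safety | Chang et al. [44] | 2018 | Design and implementation of drowsiness fatigue monitoring system to improve road safety |
| | Baek and Choi [11] | 2020 | Development of smart glass-based proximity warning system for pedestrians at a mining site |
| Work support | Kirks et al. [45] | 2019 | Development of distributed control system based on smart glasses |
| | Gensterblum [46] | 2020 | Examine the possibility that commercial drones and smart glasses are disruptive technologies in the construction industry |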

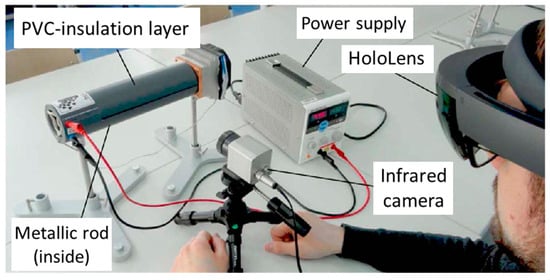

The education field was divided into nursing [50], for the education of medical workers using AR; physical training [51], such as cycling training; a general category [52], for future informatization in experimental education; and physics [53][54][55][56], for teaching physical theories effectively using visual methods (Table 5). The following research case is from physics. Strzys et al. [56] conducted experiments to improve the understanding of physical concepts by directly showing invisible physical quantities through AR-based learning (Figure 8). In a metal heat conduction experiment with 59 subjects, the temperature of the object was visualized in color, in AR form, directly on the actual object. A questionnaire survey found that mixed reality (MR) improved learners’ understanding of basic physics concepts. The detailed analysis in the education field therefore suggests that AR makes complex experiments easier to understand. Of the seven education studies, four were in physics. The visual representation of physical phenomena that cannot otherwise be observed was judged to be the most useful application of smart glasses for learning physics.

Table 5. Summary of smart glass applications in the field of education.

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Physical training | Berkemeier et al. [51] | 2018 | Consumer perception survey for application to cycling training |
| General | Cao et al. [52] | 2019 | Development of new augmented reality learning system for future informatization experiment |
| Physics | Spitzer et al. [53] | 2018 | Distance learning and support using smart glasses |
| | Kapp et al. [54] | 2018 | Enhance understanding of physics experiments through smart glasses |

In social science, one study addressed user information security associated with the use of smart glasses [57], and marketing and psychology studies analyzed the factors that influence consumers’ social perception of smart glasses and device selection [58][59][60][61][62][63]. In the field of social science (Table 6), six of the seven papers (86%) focused on marketing and psychology. Consumer awareness of smart glasses was found to be increasing as their commercialization progresses.

Table 6. Summary of smart glass applications in the field of social science.

| Sub-Field | References | Year | Aim of Study |
|---|---|---|---|
| Information security | Rauschnabel et al. [57] | 2018 | Research on consumer perception of smart glasses and personal information risk |
| Marketing and Psychology | Adapa et al. [58] | 2018 | Investigating factors influencing the selection of smart wearable devices |
| | Rauschnabel et al. [59] | 2015 | |
| | Rauschnabel et al. [60] | 2016 | Consumer perception of smart glasses as a fashion |
| | Rallapalli et al. [61] | 2014 | Physical analysis of (indoor) retail stores using smart glasses |
| | Hoogsteen et al. [62] | 2020 | Recognition of smart glasses by low-visibility persons |
| | Ok et al. [63] | 2015 | Analysis of harmfulness and social perception in purchasing smart glasses |

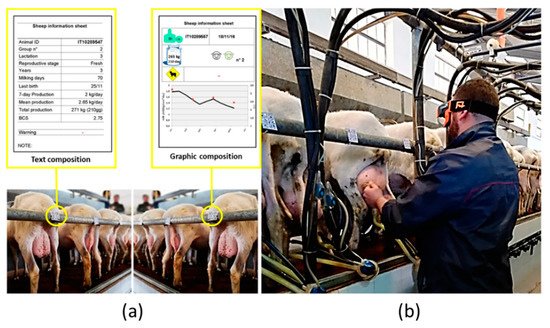

In the field of agriculture, research has been conducted to improve the performance of livestock farming operations [64]. Caria et al. [64] conducted experiments on how smart glasses interact with the agricultural environment. Sixteen participants carried out a test in milking parlors in which they read, identified, and grouped various types of content through smart glasses (Figure 9). A questionnaire was administered to evaluate the workload imposed by the device and its ease of use. It was found that smart glasses provided a positive opportunity for livestock management in terms of animal data consultation and information evaluation. Smart glasses can help improve human cognition and usefulness in agricultural fields. However, only a few studies appear to have been conducted in this area, and more active research is therefore needed relative to other fields.

3. Sensors Depending on the Application Purpose

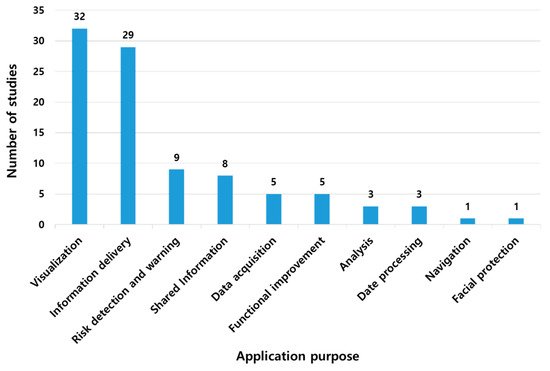

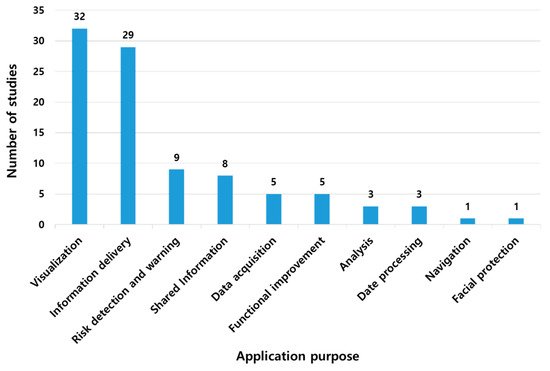

In this study, smart glasses were found to be used in various fields, although the purpose of their application may differ, and a single study may use them for more than one purpose. Therefore, the research was categorized based on the purpose of using the smart glasses, independent of the field of use (Figure 10). Because the role of smart glasses is primarily visual, most research aims to present information in the user’s line of sight. Accordingly, 32 studies aimed to convey the information obtained from smart glasses and then to visualize it. Visualization and information transmission corresponded to 32 and 29 studies, respectively, accounting for 65% of the total utilization purpose. In addition, nine studies were aimed at notifying users of dangers using acquired data, and eight studies were aimed at sharing information.

Figure 10. Number of studies based on research purpose.

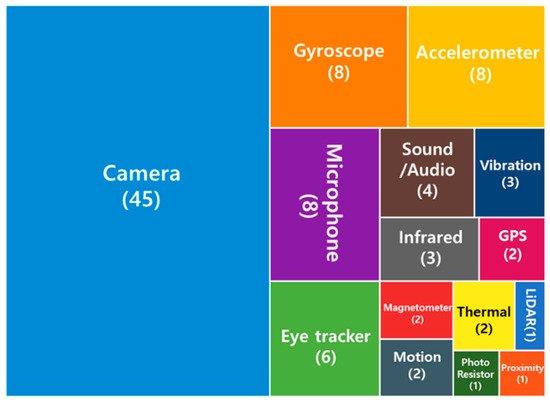

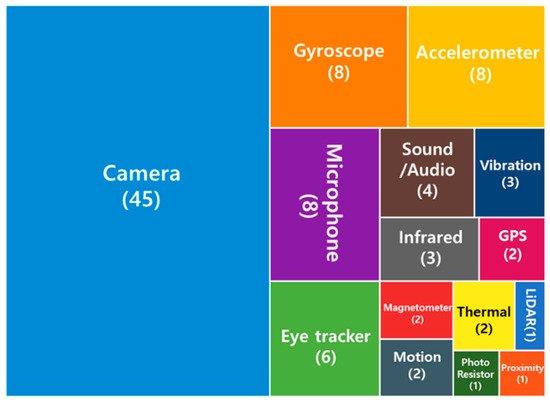

The studies reviewed used various sensors built into smart glasses, depending on the research aim. Therefore, the sensors used in the research were classified, and the most frequently applied sensor types were analyzed. Figure 11 shows the frequency of the sensors used as a percentage. More than half of the studies (45) used camera sensors, which are the most suitable for the original role of smart glasses. Smart glasses have the disadvantage that data entry is inconvenient because there is no input device, such as a keyboard. Therefore, a microphone was used in eight of the studies to solve the input problem. Sensors needed to calculate motion (gyroscope, accelerometer, GPS, and motion sensor) were also used. In addition, sound/audio and vibration sensors were used for information transmission through sound and vibration.

Figure 11. Frequency and ratio of each sensor used in the study.

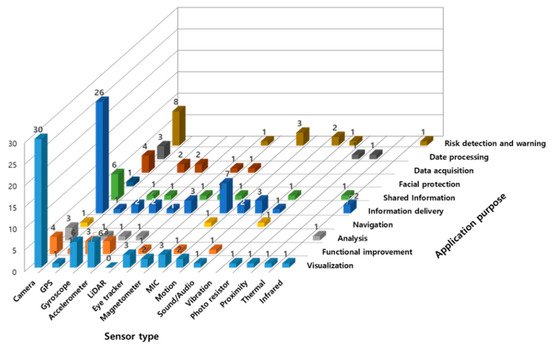

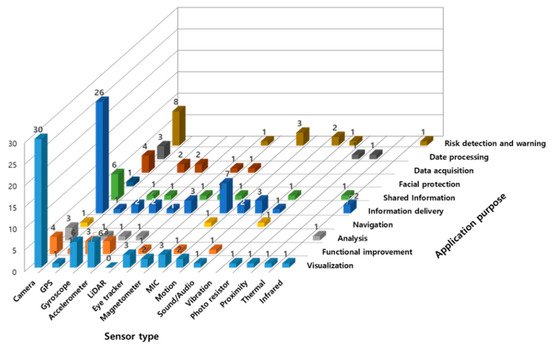

The use of each sensor was categorized based on the aim of use (Figure 12). As the classification results show, a camera was used for all research purposes. Among the 32 studies aimed at visualization and information transmission, 30 used camera sensors. Among the studies investigated, those aimed at visualization used the widest range of sensors, such as gyroscopes, accelerometers, eye trackers, and microphones, compared to studies conducted for other purposes. In addition, 36 of the 39 studies aimed at information transmission used camera sensors to obtain and transmit information, and multiple other sensors, such as a GPS, motion sensor, and infrared device, were also used.

Figure 12. Use of sensors according to the purpose of using smart glasses.

References

- Kang, J.Y. Study on the Content Design for Wearable Device—Focus on User Centered Wearable Infotainment Design. J. Digit. Des. 2015, 15, 325–333.

- Michalski, R.S.; Carbonell, J.G.; Mitchell, T.M. Machine learning: An artificial intelligence approach. Artif. Intell. 1985, 25, 236–238.

- Sutherland, I.E. The Ultimate Display. In Proceedings of the IFIP Congress, New York, NY, USA, 24–28 May 1965; pp. 506–508.

- Sutherland, I.E. A head-mounted three dimensional display. In Proceedings of the AFIPS 68, San Francisco, CA, USA, 9–11 December 1968.

- Kress, B.; Starner, T. A Review of Head-Mounted Displays (HMD) Technologies and Applications for Consumer Electronics. In Photonic Applications for Aerospace, Commercial, and Harsh Environments IV; International Society for Optics and Photonics: Bellingham, WA, USA, 2013; Volume 8720, p. 87200A.

- Due, B.L. The Future of Smart Glasses: An Essay about Challenges and Possibilities with Smart Glasses; Centre of Interaction Research and Communication Design, University of Copenhagen: København, Denmark, 2014; Volume 1, pp. 1–21.

- Seifabadi, R.; Li, M.; Long, D.; Xu, S.; Wood, B.J. Accuracy Study of Smartglasses/Smartphone AR Systems for Percutaneous Needle Interventions. In Proceedings of the SPIE Medical Imaging, Houston, TX, USA, 16 March 2020; Volume 11315.

- Aiordǎchioae, A.; Vatavu, R.-D. Life-Tags: A Smartglasses-Based System for Recording and Abstracting Life with Tag Clouds. ACM Hum. Comput. Interact. 2019, 3, 1–22.

- Han, D.-I.D.; Tom Dieck, M.C.; Jung, T. Augmented Reality Smart Glasses (ARSG) Visitor Adoption in Cultural Tourism. Leis. Stud. 2019, 38, 618–633.

- Miller, A.; Malasig, J.; Castro, B.; Hanson, V.L.; Nicolau, H.; Brandão, A. The Use of Smart Glasses for Lecture Comprehension by Deaf and Hard of Hearing Students. In Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems, CHI EA ’17, Denver, CO, USA, 6–11 May 2017; pp. 1909–1915.

- Baek, J.; Choi, Y. Smart Glasses-Based Personnel Proximity Warning System for Improving Pedestrian Safety in Construction and Mining Sites. Int. J. Environ. Res. Public Health 2020, 17, 1422.

- Aiordachioae, A.; Schipor, O.-A.; Vatavu, R.-D. An Inventory of Voice Input Commands for Users with Visual Impairments and Assistive Smartglasses Applications. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 146–150.

- Belkacem, I.; Pecci, I.; Martin, B.; Faiola, A. TEXTile: Eyes-Free Text Input on Smart Glasses Using Touch Enabled Textile on the Forearm. In Human-Computer Interaction—INTERACT 2019; Lamas, D., Loizides, F., Nacke, L., Petrie, H., Winckler, M., Zaphiris, P., Eds.; Computer Science, Lecture Notes; Springer International Publishing: Cham, Switzerland, 2019; pp. 351–371.

- Zhang, L.; Li, X.-Y.; Huang, W.; Liu, K.; Zong, S.; Jian, X.; Feng, P.; Jung, T.; Liu, Y. It Starts with Igaze: Visual Attention Driven Networking with Smart Glasses. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking, Maui, HI, USA, 7–11 September 2014; pp. 91–102.

- Lee, L.H.; Yung Lam, K.; Yau, Y.P.; Braud, T.; Hui, P. HIBEY: Hide the Keyboard in Augmented Reality. In Proceedings of the 2019 IEEE International Conference on Pervasive Computing and Communications (PerCom), Kyoto, Japan, 11–15 March 2019.

- Lee, L.H.; Braud, T.; Bijarbooneh, F.H.; Hui, P. Tipoint: Detecting Fingertip for Mid-Air Interaction on Computational Resource Constrained Smartglasses. In Proceedings of the 23rd ACM Annual International Symposium on Wearable Computers (ISWC2019), London, UK, 11–13 September 2019; pp. 118–122.

- Lee, L.H.; Braud, T.; Lam, K.Y.; Yau, Y.P.; Hui, P. From Seen to Unseen: Designing Keyboard-Less Interfaces for Text Entry on the Constrained Screen Real Estate of Augmented Reality Headsets. Pervasive Mob. Comput. 2020, 64, 101148.

- Lee, L.H.; Braud, T.; Bijarbooneh, F.H.; Hui, P. UbiPoint: Towards Non-Intrusive Mid-Air Interaction for Hardware Constrained Smart Glasses. In Proceedings of the 11th ACM Multimedia Systems Conference, Istanbul, Turkey, 8–11 June 2020; pp. 190–201.

- Park, K.-B.; Kim, M.; Choi, S.H.; Lee, J.Y. Deep Learning-Based Smart Task Assistance in Wearable Augmented Reality. Robot. Comput. Integr. Manuf. 2020, 63, 101887.

- Chauhan, J.; Asghar, H.J.; Mahanti, A.; Kaafar, M.A. Gesture-Based Continuous Authentication for Wearable Devices: The Smart Glasses Use Case. In Applied Cryptography and Network Security; Manulis, M., Sadeghi, A.-R., Schneider, S., Eds.; Computer Science, Lecture Notes; Springer International Publishing: Cham, Switzerland, 2016; pp. 648–665.

- Pamparau, C.I. A System for Hierarchical Browsing of Mixed Reality Content in Smart Spaces. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 194–197.

- Riedlinger, U.; Oppermann, L.; Prinz, W. Tango vs. Hololens: A Comparison of Collaborative Indoor AR Visualisations Using Hand-Held and Hands-Free Devices. Multimodal Technol. Interact. 2019, 3, 23.

- Yong, M.; Pauwels, J.; Kozak, F.K.; Chadha, N.K. Application of Augmented Reality to Surgical Practice: A Pilot Study Using the ODG R7 Smartglasses. Clin. Otolaryngol. 2020, 45, 130–134.

- van Doormaal, T.P.C.; van Doormaal, J.A.M.; Mensink, T. Clinical Accuracy of Holographic Navigation Using Point-Based Registration on Augmented-Reality Glasses. Oper. Neurosurg. 2019, 17, 588–593.

- Salisbury, J.P.; Keshav, N.U.; Sossong, A.D.; Sahin, N.T. Concussion Assessment with Smartglasses: Validation Study of Balance Measurement toward a Lightweight, Multimodal, Field-Ready Platform. JMIR mHealth uHealth 2018, 6, e15.

- Maruyama, K.; Watanabe, E.; Kin, T.; Saito, K.; Kumakiri, A.; Noguchi, A.; Nagane, M.; Shiokawa, Y. Smart Glasses for Neurosurgical Navigation by Augmented Reality. Oper. Neurosurg. 2018, 15, 551–556.

- Klueber, S.; Wolf, E.; Grundgeiger, T.; Brecknell, B.; Mohamed, I.; Sanderson, P. Supporting Multiple Patient Monitoring with Head-Worn Displays and Spearcons. Appl. Ergon. 2019, 78, 86–96.

- García-Cruz, E.; Bretonnet, A.; Alcaraz, A. Testing Smart Glasses in Urology: Clinical and Surgical Potential Applications. Actas Urológicas Españolas 2018, 42, 207–211.

- Ruminski, J.; Bujnowski, A.; Kocejko, T.; Andrushevich, A.; Biallas, M.; Kistler, R. The Data Exchange between Smart Glasses and Healthcare Information Systems Using the HL7 FHIR Standard. In Proceedings of the 2016 9th International Conference on Human System Interactions (HSI), Portsmouth, UK, 6–8 July 2016; pp. 525–531.

- Nag, A.; Haber, N.; Voss, C.; Tamura, S.; Daniels, J.; Ma, J.; Chiang, B.; Ramachandran, S.; Schwartz, J.; Winograd, T. Toward Continuous Social Phenotyping: Analyzing Gaze Patterns in an Emotion Recognition Task for Children with Autism through Wearable Smart Glasses. J. Med. Internet Res. 2020, 22, e13810.

- Rowe, F. A Hazard Detection and Tracking System for People with Peripheral Vision Loss Using Smart Glasses and Augmented Reality. Int. J. Adv. Comput. Sci. Appl. 2019, 10, 1–9.

- Meza-de-Luna, M.E.; Terven, J.R.; Raducanu, B.; Salas, J. A Social-Aware Assistant to Support Individuals with Visual Impairments during Social Interaction: A Systematic Requirements Analysis. Int. J. Hum. Comput. Stud. 2019, 122, 50–60.

- Schipor, O.; Aiordăchioae, A. Engineering Details of a Smartglasses Application for Users with Visual Impairments. In Proceedings of the 2020 International Conference on Development and Application Systems (DAS), Suceava, Romania, 21–23 May 2020; pp. 157–161.

- Chang, W.; Chen, L.; Hsu, C.; Chen, J.; Yang, T.; Lin, C. MedGlasses: A Wearable Smart-Glasses-Based Drug Pill Recognition System Using Deep Learning for Visually Impaired Chronic Patients. IEEE Access 2020, 8, 17013–17024.

- Lausegger, G.; Spitzer, M.; Ebner, M. OmniColor—A Smart Glasses App to Support Colorblind People. Int. J. Interact. Mob. Technol. 2017, 11, 161–177.

- Janssen, S.; Bolte, B.; Nonnekes, J.; Bittner, M.; Bloem, B.R.; Heida, T.; Zhao, Y.; van Wezel, R.J.A. Usability of Three-Dimensional Augmented Visual Cues Delivered by Smart Glasses on (Freezing of) Gait in Parkinson’s Disease. Front. Neurol. 2017, 8, 279.

- Sandnes, F.E. What Do Low-Vision Users Really Want from Smart Glasses? Faces, Text and Perhaps No Glasses at All. In Computers Helping People with Special Needs; Miesenberger, K., Bühler, C., Penaz, P., Eds.; Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2016; pp. 187–194.

- Ruminski, J.; Smiatacz, M.; Bujnowski, A.; Andrushevich, A.; Biallas, M.; Kistler, R. Interactions with Recognized Patients Using Smart Glasses. In Proceedings of the 2015 8th International Conference on Human System Interaction (HSI), Warsaw, Poland, 25–27 June 2015; pp. 187–194.

- Machado, E.; Carrillo, I.; Saldana, D.; Chen, F.; Chen, L. An Assistive Augmented Reality-Based Smartglasses Solution for Individuals with Autism Spectrum Disorder. In Proceedings of the 2019 IEEE Intl Conf on Dependable, Autonomic and Secure Computing, Intl Conf on Pervasive Intelligence and Computing, Intl Conf on Cloud and Big Data Computing, Intl Conf on Cyber Science and Technology Congress (DASC/PiCom/CBDCom/CyberSciTech), Fukuoka, Japan, 5–8 August 2019; pp. 245–249.

- Liu, R.; Salisbury, J.P.; Vahabzadeh, A.; Sahin, N.T. Feasibility of an Autism-Focused Augmented Reality Smartglasses System for Social Communication and Behavioral Coaching. Front. Pediatr. 2017, 5, 145.

- Vahabzadeh, A.; Keshav, N.U.; Abdus-Sabur, R.; Huey, K.; Liu, R.; Sahin, N.T. Improved Socio-Emotional and Behavioral Functioning in Students with Autism Following School-Based Smartglasses Intervention: Multi-Stage Feasibility and Controlled Efficacy Study. Behav. Sci. 2018, 8, 85.

- Wolfartsberger, J.; Zenisek, J.; Wild, N. Data-Driven Maintenance: Combining Predictive Maintenance and Mixed Reality-Supported Remote Assistance. Procedia Manuf. 2020, 45, 307–312.

- Siltanen, S.; Heinonen, H. Scalable and Responsive Information for Industrial Maintenance Work: Developing XR Support on Smart Glasses for Maintenance Technicians. In Proceedings of the 23rd International Conference on Academic Mindtrek, AcademicMindtrek ’20, Tampere, Finland, 29–30 January 2020; pp. 100–109.

- Chang, W.-J.; Chen, L.-B.; Chiou, Y.-Z. Design and Implementation of a Drowsiness-Fatigue-Detection System Based on Wearable Smart Glasses to Increase Road Safety. IEEE Trans. Consum. Electron. 2018, 64, 461–469.

- Kirks, T.; Jost, J.; Uhlott, T.; Püth, J.; Jakobs, M. Evaluation of the Application of Smart Glasses for Decentralized Control Systems in Logistics. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; pp. 4470–4476.

- Gensterblum, C. An Analysis of Smart Glasses and Commercial Drones’ Ability to Become Disruptive Technologies in the Construction Industry. Ph.D. Thesis, Kalamazoo College, Kalamazoo, MI, USA, 1 January 2020.

- tom Dieck, M.C.; Jung, T.; Han, D.I. Mapping Requirements for the Wearable Smart Glasses Augmented Reality Museum Application. J. Hosp. Tour. Technol. 2016, 7, 230–253.

- Mason, M. The MIT Museum Glassware Prototype: Visitor Experience Exploration for Designing Smart Glasses. J. Comput. Cult. Herit. 2016, 9, 12:1–12:28.

- Ho, C.C.; Tseng, B.-Y.; Ho, M.-C. Typingless Ticketing Device Input by Automatic License Plate Recognition Smartglasses. ICIC Express Lett. Part B Appl. 2018, 9, 325–330.

- Kopetz, J.P.; Wessel, D.; Jochems, N. User-Centered Development of Smart Glasses Support for Skills Training in Nursing Education. i-com 2019, 18, 287–299.

- Berkemeier, L.; Menzel, L.; Remark, F.; Thomas, O. Acceptance by Design: Towards an Acceptable Smart Glasses-Based Information System Based on the Example of Cycling Training. In Proceedings of the Multikonferenz Wirtschaftsinformatik, Lüneburg, Germany, 6–9 March 2018; p. 12.

- Cao, Y.; Tang, Y.; Xie, Y. A Novel Augmented Reality Guidance System for Future Informatization Experimental Teaching. In Proceedings of the 2018 IEEE International Conference on Teaching, Assessment, and Learning for Engineering (TALE), Wollongong, Australia, 4–7 December 2018; pp. 900–905.

- Spitzer, M.; Nanic, I.; Ebner, M. Distance Learning and Assistance Using Smart Glasses. Educ. Sci. 2018, 8, 21.

- Kapp, S.; Thees, M.; Strzys, M.P.; Beil, F.; Kuhn, J.; Amiraslanov, O.; Javaheri, H.; Lukowicz, P.; Lauer, F.; Rheinländer, C.; et al. Augmenting Kirchhoff’s Laws: Using Augmented Reality and Smartglasses to Enhance Conceptual Electrical Experiments for High School Students. Phys. Teach. 2019, 57, 52–53.

- Thees, M.; Kapp, S.; Strzys, M.P.; Beil, F.; Lukowicz, P.; Kuhn, J. Effects of Augmented Reality on Learning and Cognitive Load in University Physics Laboratory Courses. Comput. Hum. Behav. 2020, 108, 106316.

- Strzys, M.P.; Kapp, S.; Thees, M.; Klein, P.; Lukowicz, P.; Knierim, P.; Schmidt, A.; Kuhn, J. Physics Holo.Lab Learning Experience: Using Smartglasses for Augmented Reality Labwork to Foster the Concepts of Heat Conduction. Eur. J. Phys. 2018, 39, 035703.

- Rauschnabel, P.A.; He, J.; Ro, Y.K. Antecedents to the Adoption of Augmented Reality Smart Glasses: A Closer Look at Privacy Risks. J. Bus. Res. 2018, 92, 374–384.

- Adapa, A.; Nah, F.F.-H.; Hall, R.H.; Siau, K.; Smith, S.N. Factors Influencing the Adoption of Smart Wearable Devices. Int. J. Hum. Comput. Interact. 2018, 34, 399–409.

- Rauschnabel, P.A.; Brem, A.; Ivens, B.S. Who Will Buy Smart Glasses? Empirical Results of Two Pre-Market-Entry Studies on the Role of Personality in Individual Awareness and Intended Adoption of Google Glass Wearables. Comput. Hum. Behav. 2015, 49, 635–647.

- Rauschnabel, P.A.; Hein, D.W.E.; He, J.; Ro, Y.K.; Rawashdeh, S.; Krulikowski, B. Fashion or Technology? A Fashnology Perspective on the Perception and Adoption of Augmented Reality Smart Glasses. i-com 2016, 15, 179–194.

- Rallapalli, S.; Ganesan, A.; Chintalapudi, K.; Padmanabhan, V.N.; Qiu, L. Enabling Physical Analytics in Retail Stores Using Smart Glasses. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking, Maui, HI, USA, 7–11 September 2014; pp. 115–126.

- Hoogsteen, K.M.P.; Osinga, S.A.; Steenbekkers, B.L.P.A.; Szpiro, S.F.A. Functionality versus Inconspicuousness: Attitudes of People with Low Vision towards OST Smart Glasses. In Proceedings of the 22nd International ACM SIGACCESS Conference on Computers and Accessibility, Virtual Event, Greece, 26–28 October 2020; pp. 1–4.

- Ok, A.E.; Basoglu, N.A.; Daim, T. Exploring the Design Factors of Smart Glasses. In Proceedings of the 2015 Portland International Conference on Management of Engineering and Technology (PICMET), Portland, OR, USA, 2–6 August 2015; pp. 1657–1664.

- Caria, M.; Todde, G.; Sara, G.; Piras, M.; Pazzona, A. Performance and Usability of Smartglasses for Augmented Reality in Precision Livestock Farming Operations. Appl. Sci. 2020, 10, 2318.
