

Tang, S. (2023, June 15). Fog-Based IoT Platform Performance Modeling and Optimization. In Encyclopedia. https://encyclopedia.pub/entry/45674


A fog-based IoT platform model involving three layers, i.e., IoT devices, fog nodes, and the cloud, was proposed using an open Jackson network with feedback. The system performance was analyzed for the individual subsystems and for the overall system under different input parameters. Performance metrics of interest were derived from the analytical results. A resource optimization problem was formulated and solved to determine the optimal service rates at individual fog nodes under given constraints.

fog computing

IoT

queueing network

Jackson network

performance modeling

1. Introduction

The Internet of Things (IoT) describes the network of physical objects embedded with sensors, microcontrollers, software, and other technologies for the purpose of connecting and exchanging data with other devices and systems over the Internet [1][2]. A typical IoT platform works by continuously sending, receiving, and processing data in a feedback loop. IoT devices are nonstandard computing devices, such as smart TVs, smart sensors, smartphones, and smart security robots, that connect wirelessly to a network and can transmit data. Fog devices are where the data are collected and processed.

Fog computing, a term often used interchangeably with edge computing, is an architecture that uses fog nodes to receive tasks from IoT devices and to perform a large amount of computation, storage, and communication locally; where necessary, processed data are routed over the Internet to the cloud for further processing. The goal of fog computing is to improve the efficiency of local and cloud data storage. Fog computing can handle the massive data generated by IoT devices at the edge of the network. Because of characteristics such as low latency, mobility, and heterogeneity, fog computing is often considered the best platform for IoT applications, and the combination is sometimes called a fog-based IoT platform. For example, fog computing reduces the amount of data that needs to be sent to the cloud and keeps latency to a minimum, which is a key requirement for time-sensitive applications such as IoT-based healthcare services [3]. As another example, in a fog-based IoT platform for smart buildings, information about the indoor ambience is collected in real time by IoT devices and sent to the fog for aggregation and preprocessing before being passed to the cloud for storage and analysis. Appropriate decisions are then made and sent back to the relevant actuators to adjust the ambience accordingly or to raise an alarm [4]. The essential components of fog-based architectures for IoT systems and their implementation approaches are surveyed in [5].
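The smart-building example can be made concrete with a minimal sketch of fog-side preprocessing. The function name `fog_preprocess`, the window length, the alarm threshold, and the sample values are illustrative assumptions and are not taken from the platform described in [4]; the sketch only shows how a fog node might forward window averages to the cloud while deciding alarms locally.

```python
from statistics import mean


def fog_preprocess(readings, window=5, alarm_threshold=60.0):
    """Summarize raw sensor readings at a fog node (hypothetical helper).

    Returns (summaries, alarms): one mean per window to forward to the cloud,
    and the indices of readings that reached the alarm threshold.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    summaries = []
    alarms = []
    for start in range(0, len(readings), window):
        chunk = readings[start:start + window]
        # Only the window mean is forwarded, which cuts upstream traffic.
        summaries.append(mean(chunk))
        # Alarms are decided locally so they do not wait for a cloud round trip.
        for offset, value in enumerate(chunk):
            if value >= alarm_threshold:
                alarms.append(start + offset)
    return summaries, alarms


# Ten made-up temperature samples collapse into two summaries for the cloud.
samples = [21, 22, 23, 22, 22, 24, 70, 25, 24, 23]
summaries, alarms = fog_preprocess(samples)
```

In this sketch the cloud receives one value per window instead of every raw sample, while the out-of-range reading is flagged at the fog node itself.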

The fog and cloud concepts are very similar; however, there are some differences. A brief comparison of fog computing and cloud computing follows: (1) Cloud architecture is centralized and consists of large data centers located around the globe; fog architecture is distributed and consists of many small nodes located as close to client devices as possible. (2) Data processing in cloud computing takes place in remote data centers, whereas fog processing and storage are done at the edge of the network, close to the source of information, which is crucial for real-time control. (3) The cloud is more powerful than the fog in terms of computing capability and storage capacity, but the fog is regarded as more secure because of its distributed architecture. (4) The cloud is suited to long-term, in-depth analysis because of its slower responsiveness, while the fog targets short-term analysis at the edge because of its near-instant responsiveness. (5) A cloud system is unusable without an Internet connection, whereas fog computing has a much lower risk of failure because it uses various protocols and standards. Overall, while both cloud and fog computing have their respective advantages, fog computing does not replace cloud computing but complements it. Choosing between the two depends largely on the specific needs and goals of the user or developer.

Fog computing is especially important in IoT deployment because it frees resource-constrained IoT devices from having to access the resource-rich cloud frequently [6]. Since the IoT tasks that request fog computing resources vary in both demand and service type, dynamically provisioning fog computing resources so that utilization is optimized while a given constraint is met is not an easy task. To optimize the fog computing resources and assure the required system performance, system modeling and performance analysis of a fog-based IoT platform is an important development step for commercial IoT network deployment.
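To illustrate what provisioning under a constraint can look like, the sketch below solves one simple, classical version of the problem. It is not the formulation used in the source: it assumes each fog node behaves as an independent M/M/1 queue with a known arrival rate, that the sum of all service rates is fixed by a budget `total_capacity`, and that the objective is to minimize the mean number of tasks in the system. Under those assumptions the optimum has a closed form (the square-root capacity assignment rule). All function names are hypothetical.

```python
import math


def allocate_service_rates(arrival_rates, total_capacity):
    """Split a service-rate budget across M/M/1 nodes (assumed model).

    Minimizes sum(lam_i / (mu_i - lam_i)) subject to sum(mu_i) == total_capacity.
    Closed form: mu_i = lam_i + spare * sqrt(lam_i) / sum_j sqrt(lam_j).
    """
    if any(lam <= 0 for lam in arrival_rates):
        raise ValueError("arrival rates must be positive")
    spare = total_capacity - sum(arrival_rates)
    if spare <= 0:
        raise ValueError("budget must exceed the total arrival rate")
    root_sum = sum(math.sqrt(lam) for lam in arrival_rates)
    return [lam + spare * math.sqrt(lam) / root_sum for lam in arrival_rates]


def mean_tasks_in_system(arrival_rates, service_rates):
    """Total mean number of tasks across independent M/M/1 nodes."""
    return sum(lam / (mu - lam) for lam, mu in zip(arrival_rates, service_rates))


# Made-up arrival rates (tasks per second) and a made-up budget.
arrivals = [1.0, 4.0, 9.0]
budget = 26.0
optimal = allocate_service_rates(arrivals, budget)

# Baseline for comparison: capacity shared in proportion to load.
proportional = [lam * budget / sum(arrivals) for lam in arrivals]
```

Comparing `mean_tasks_in_system(arrivals, optimal)` with the same metric for `proportional` shows why the allocation matters: the proportional split gives every node the same utilization, whereas the square-root rule shifts spare capacity toward lightly loaded nodes and yields a lower total.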

2. Related Work on Fog-Based IoT Platform Performance Modeling and Optimization

The modeling, analysis, and validation of fog computing systems, particularly for IoT applications, have been extensively studied in the literature. A brief summary of the different research directions follows. In [7], a set of new fall detection algorithms was investigated to facilitate the fall detection process, and a real-time fall detection system employing the fog computing paradigm was designed to distribute the analytics throughout the network by splitting the detection task between the edge devices. In [8], a conceptual model of fog computing was presented, and its relation to cloud-based computing models for IoT was investigated. In [9], a new fog computing model was presented that inserts a management layer between the fog nodes and the cloud data center to manage and control resources and communication. This layer addresses the heterogeneous nature of fog computing and its complex connectivity. Simulation results showed that the management layer reduces bandwidth consumption and execution time. In [10], a health monitoring system was proposed, with electrocardiogram (ECG) feature extraction as the case study, which exploits fog computing at smart gateways to provide advanced techniques and services. ECG signals are analyzed in the smart gateways, and the extracted features include the heart rate, P wave, and T wave. The experimental results showed that fog computing helps achieve more than 90% bandwidth efficiency and offers a low-latency real-time response at the edge of the network.

In [11], a charging mechanism called “FogSpot” was introduced to study the emerging market of provisioning low-latency applications over the fog infrastructure. In FogSpot, cloudlets offer their resources in the form of virtual machines (VMs) via markets. FogSpot associates each cloudlet with a price that aims to maximize the cloudlet’s resource utilization. In [12], a distributed dataflow (DDF) programming model was proposed for IoT applications in the fog. The model was evaluated by implementing a DDF framework based on Node-RED, an open-source flow-based run-time and visual programming tool, showing that the proposed approach eases the development process and is scalable. In [13], a fog architecture based on complex event processing (CEP) was proposed for real-time IoT applications that use a publish-subscribe protocol. A testbed was developed to assess the effectiveness and cost of the proposal in terms of latency and resource usage. In [14], an alternative to the hierarchical approach was proposed using self-organizing compute nodes. These nodes organize themselves into a flat model, which leverages the network properties to provide improved performance.

In [15], a dynamic computation offloading framework was proposed for fog computing to determine how many tasks from IoT devices should be run on servers and how many should be run locally in a dynamic environment. The proposed algorithm makes offloading decisions dynamically according to CPU usage, delay sensitivity, residual energy, task size, and bandwidth, sending time-sensitive tasks to local devices or fog nodes for processing and resource-intensive tasks to the cloud. In [16], a distributed machine learning model was proposed to investigate the benefits of fog computing for industrial IoT. The proposed framework was implemented and tested in a real-world testbed to make quantitative measurements and evaluate the system. In [17], the resource-aware placement of a data analytics platform was studied in a fog computing architecture, seeking to deploy the analytics platform adaptively and thus reduce the network costs and response time for the user.
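The kind of decision described for [15] can be sketched as a small rule-based function. This is not the algorithm from [15]: the class names, the rule order, and every threshold below are assumptions chosen only to show how the five inputs (CPU usage, delay sensitivity, residual energy, task size, and bandwidth) could drive a choice between the local device, a fog node, and the cloud.

```python
from dataclasses import dataclass


@dataclass
class Task:
    size_mb: float          # payload that would have to be uploaded
    delay_sensitive: bool   # True for time-critical work


@dataclass
class DeviceState:
    cpu_usage: float        # fraction in [0, 1]
    residual_energy: float  # battery fraction in [0, 1]
    bandwidth_mbps: float   # uplink rate to the fog node, must be > 0


def choose_target(task, device, cpu_limit=0.8, energy_floor=0.2,
                  heavy_task_mb=50.0, upload_budget_s=0.5):
    """Return 'local', 'fog', or 'cloud' (all thresholds are hypothetical)."""
    if device.bandwidth_mbps <= 0:
        raise ValueError("bandwidth must be positive")
    device_ok = (device.cpu_usage < cpu_limit
                 and device.residual_energy > energy_floor)
    upload_s = task.size_mb * 8.0 / device.bandwidth_mbps
    if task.delay_sensitive:
        # Stay on the device if it has headroom or the uplink is too slow.
        if device_ok or upload_s > upload_budget_s:
            return "local"
        return "fog"
    if task.size_mb >= heavy_task_mb:
        # Resource-intensive, delay-tolerant work goes to the cloud.
        return "cloud"
    return "local" if device_ok else "fog"


idle = DeviceState(cpu_usage=0.3, residual_energy=0.9, bandwidth_mbps=100.0)
busy = DeviceState(cpu_usage=0.95, residual_energy=0.9, bandwidth_mbps=100.0)
```

A real framework would presumably re-evaluate these inputs continuously and tune the thresholds; the fixed defaults here are placeholders.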

In [18], a joint optimization framework was proposed for computing resource allocation in three-tier IoT fog networks involving fog nodes (FNs), data service operators (DSOs), and data service subscribers (DSSs). The proposed framework allocates the limited computing resources of the FNs to all the DSSs to achieve optimal and stable performance in a distributed fashion. In [19], a container migration mechanism for fog computing was presented that supports performance and latency optimization through an autonomic architecture based on the MAPE-K control loop, providing a foundation for the analysis and optimization design of IoT applications. In [20], a market-based framework was proposed for a fog computing network that enables the cloud layer and the fog layer to allocate resources through pricing, payment, and supply–demand relationships. Utility optimization models were investigated to achieve optimal payment and optimal resource allocation via convex optimization techniques, and a gradient-based iterative algorithm was proposed to optimize the utilities.

Although fog computing is considered to be the best platform for IoT applications, it has major issues in authentication, privacy, and security. Authentication is one of the most pressing concerns, since fog services are offered at a large scale, which complicates the overall structure and the trust relationships of the fog. A rogue fog node may pretend to be legitimate and coax end users into connecting to it. Once a user connects, the rogue node can manipulate the traffic between the user and the cloud and can easily launch attacks. Since fog computing relies mainly on wireless technology, network privacy has also attracted much attention. Because there are many fog nodes and every end user can access them, more sensitive information passes from end users to fog nodes. Security concerns also arise because many IoT devices are connected to fog nodes. Every device has a different IP address, and an attacker can forge a device's IP address to gain access to personal information stored on a particular fog node. In [21], a lightweight anonymous authentication and secure communication scheme was proposed for fog computing services, which uses a one-way hash function and bitwise XOR operations for authentication among the cloud, the fog, and the user, with a session key agreed upon by both fog-based participants to encrypt the subsequent communication messages. More references can be found in [22][23], which survey the main security and privacy challenges of fog and edge computing and the effect of these security issues on the implementation of fog computing.
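The two primitives mentioned for [21], a one-way hash and bitwise XOR, can be combined as in the toy exchange below. This is emphatically not the scheme from [21] and is not secure enough for real use (for example, it has no replay protection and reuses the same mask across sessions). It assumes the user and the fog node already share a secret, and all function names are hypothetical; the sketch only shows how a nonce can be hidden with an XOR mask, checked with a hash, and turned into a session key.

```python
import hashlib
import hmac
import secrets


def h(*parts: bytes) -> bytes:
    """One-way hash (SHA-256) over length-prefixed parts."""
    digest = hashlib.sha256()
    for part in parts:
        digest.update(len(part).to_bytes(4, "big"))
        digest.update(part)
    return digest.digest()


def xor(a: bytes, b: bytes) -> bytes:
    """Bitwise XOR of two equal-length byte strings."""
    if len(a) != len(b):
        raise ValueError("inputs must have equal length")
    return bytes(x ^ y for x, y in zip(a, b))


def session_key(nonce: bytes, shared_secret: bytes) -> bytes:
    """Both sides derive the same key from the nonce and the secret."""
    return h(b"session", nonce, shared_secret)


def user_request(user_id: bytes, shared_secret: bytes):
    """User side: hide a fresh nonce behind a hash-derived mask."""
    nonce = secrets.token_bytes(32)
    masked = xor(nonce, h(shared_secret, user_id))
    proof = h(nonce, user_id, shared_secret)
    return nonce, masked, proof


def fog_verify(user_id: bytes, shared_secret: bytes, masked: bytes, proof: bytes):
    """Fog side: unmask the nonce, check the proof, return the key or None."""
    nonce = xor(masked, h(shared_secret, user_id))
    expected = h(nonce, user_id, shared_secret)
    if not hmac.compare_digest(expected, proof):
        return None
    return session_key(nonce, shared_secret)


nonce, masked, proof = user_request(b"device-17", b"pre-shared secret")
fog_key = fog_verify(b"device-17", b"pre-shared secret", masked, proof)
```

A fog node that holds the wrong secret recovers a different nonce, so the proof check fails and no session key is produced.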

3. A Fog-Based IoT Platform Architecture

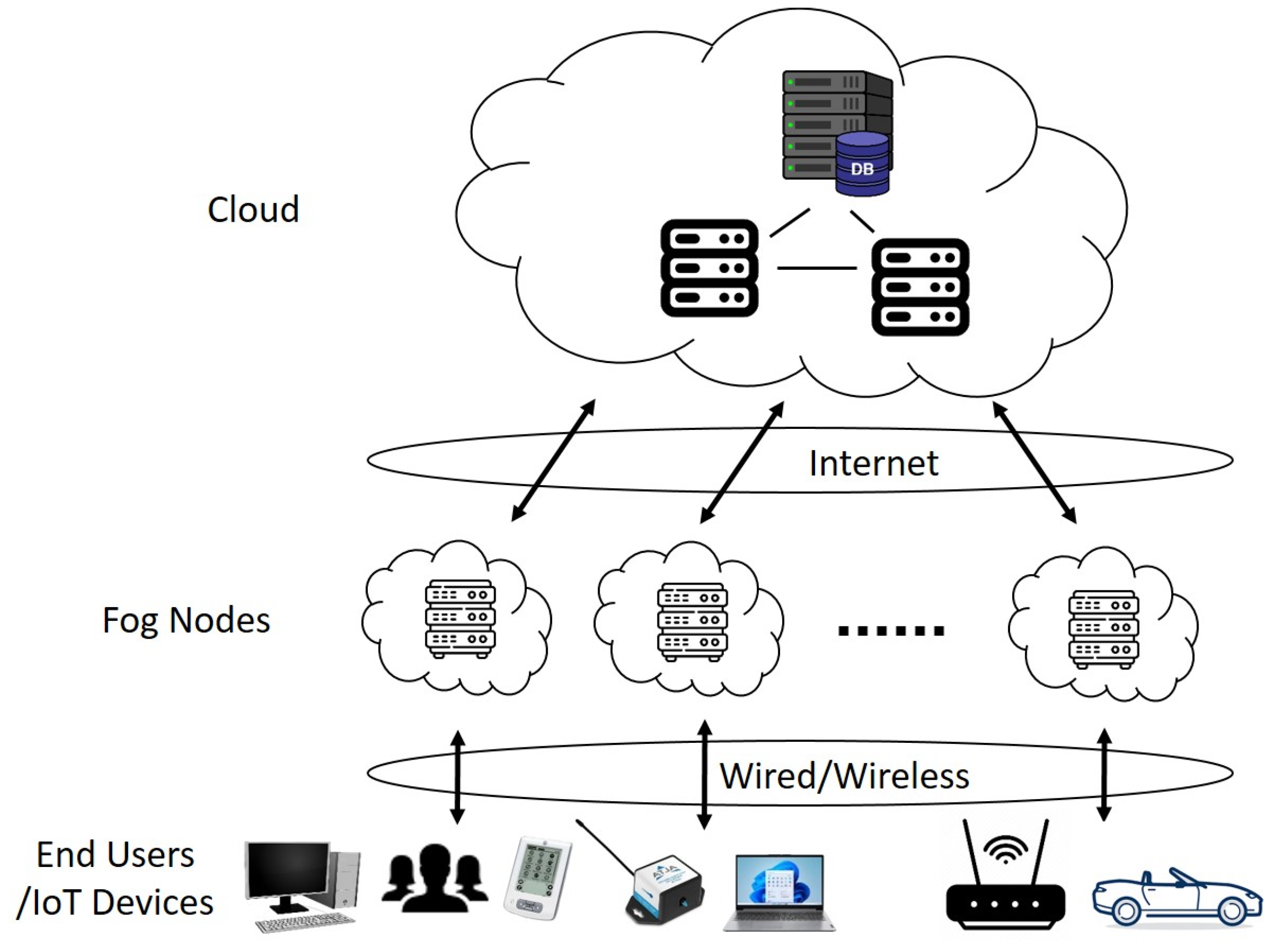

Fog computing is a decentralized computing infrastructure in which data, computing, storage, and applications are located somewhere between the data source (end user) and the cloud. Thus, fog computing brings the advantages and power of the cloud closer to end users. The proposed fog computing architecture (i.e., the fog-based IoT platform) comprises three layers: IoT devices, fog nodes, and the cloud, as shown in Figure 1. The need for smart control and decision-making at each layer depends on the time sensitivity of the IoT application.

Figure 1. The fog computing system architecture.

The lowest layer is the end user layer, which consists of a large number of IoT devices, such as robots, smart security devices, smartphones, wearable devices, smart watches, smart glasses, laptops, and autonomous vehicles. Some of these devices may have computing capability, while others may only collect raw data through intelligent sensing of objects or events and send the collected data to the upper layer for processing and storage.

The middle layer is the fog layer, which consists of a group of fog nodes, such as routers, gateways, switches, access points, base stations, and fog servers. Fog nodes are independent devices that compute on, transmit, and temporarily store the data generated by IoT devices, while a fog server also processes the data to decide on an action. Other fog devices are usually linked to fog servers. Fog nodes can be deployed in a fixed position or on a moving vehicle and are linked to IoT devices to provide intelligent services. Because fog nodes are located closer to the IoT devices than the cloud is, they allow real-time analysis and delay-sensitive applications to be performed within the fog layer. Fog nodes are usually involved when an IoT device has no data processing capability, or when the amount of generated data is too large for an IoT device (with computing capability) to process locally. Fog nodes are also connected to the cloud data centers in the upper layer through the Internet, and can thereby obtain more powerful computing and storage capabilities for large and complex data processing tasks.

The upper layer is the cloud layer, which includes many servers and storage devices with powerful computing and storage capabilities to provide intelligent application services. This layer can support a wide range of computational analysis and storage of large amounts of data. However, unlike the traditional cloud computing architecture, fog computing does not handle all computing and storage through the cloud. Fog nodes themselves have appropriate computing and storage capabilities. According to the data processing load and quality of service (QoS) requirements, some control strategies can be used to effectively manage and schedule the processing tasks between fog nodes and the cloud, to improve the utilization rate of the overall system resources.
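The flow of tasks between fog nodes and the cloud can be illustrated with the open Jackson network with feedback named in the summary. The sketch below is only an example under stated assumptions, not the source's model: every node is treated as a single-server (M/M/1) station, "feedback" is represented as a task re-entering the same fog node with a fixed probability, and the topology, rates, and function names are made up. It solves the traffic equations $\lambda_j = \gamma_j + \sum_i \lambda_i p_{ij}$ by fixed-point iteration and then applies the product-form M/M/1 results per node.

```python
def solve_traffic_equations(gamma, routing, tol=1e-12, max_iter=10_000):
    """Solve lam_j = gamma_j + sum_i lam_i * routing[i][j] by iteration."""
    n = len(gamma)
    lam = list(gamma)
    for _ in range(max_iter):
        new = [gamma[j] + sum(lam[i] * routing[i][j] for i in range(n))
               for j in range(n)]
        if max(abs(a - b) for a, b in zip(new, lam)) < tol:
            return new
        lam = new
    raise RuntimeError("no convergence; the network may not be open")


def jackson_metrics(gamma, routing, mu):
    """Per-node rates, utilizations, mean queue sizes, and mean sojourn time."""
    lam = solve_traffic_equations(gamma, routing)
    rho = [l / m for l, m in zip(lam, mu)]
    if any(r >= 1.0 for r in rho):
        raise ValueError("unstable: some node has utilization >= 1")
    jobs = [r / (1.0 - r) for r in rho]   # M/M/1 mean number in each node
    delay = sum(jobs) / sum(gamma)        # Little's law for the whole network
    return lam, rho, jobs, delay


# Hypothetical topology: nodes 0 and 1 are fog nodes, node 2 is the cloud.
gamma = [4.5, 9.0, 0.0]        # external task arrivals per second
routing = [
    [0.1, 0.0, 0.2],           # fog 0: 10% feedback, 20% forwarded to cloud
    [0.0, 0.1, 0.3],           # fog 1: 10% feedback, 30% forwarded to cloud
    [0.0, 0.0, 0.0],           # cloud: tasks leave the system
]
mu = [10.0, 20.0, 8.0]         # service rates per second
lam, rho, jobs, delay = jackson_metrics(gamma, routing, mu)
```

Each row of `routing` sums to less than one; the remainder is the probability that a task leaves the system after service at that node, which is what makes the network open.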

Data transmission between the end users and the fog nodes may be wired or wireless. The choice of connectivity mode depends mostly on the IoT application. Wired connectivity is generally considered faster and more secure than wireless connectivity. The major wireless technologies for IoT communication include Bluetooth Low Energy (BLE) [24], Zigbee [25], Z-Wave [26], Wi-Fi [27], cellular (GSM, 4G LTE, 5G), NFC [28], and LoRaWAN [29]. These IoT communication protocols cater to the specific functional requirements of IoT systems. The Internet connection acts as the bridge between the fog layer and the cloud layer and establishes the interaction and communication between them.

References

1. Sun, Y.; Song, H.; Jara, A.J.; Bie, R. Internet of Things and Big Data Analytics for Smart and Connected Communities. IEEE Access 2016, 4, 766–773.

2. Shafiq, M.; Gu, Z.; Cheikhrouhou, O.; Alhakami, W.; Hamam, H. The Rise of “Internet of Things”: Review and Open Research Issues Related to Detection and Prevention of IoT-Based Security Attacks. Wirel. Commun. Mob. Comput. 2022, 2022, 8669348.

3. Bonomi, F.; Milito, R.; Zhu, J.; Addepalli, S. Fog computing and its role in the internet of things. In Proceedings of the First Edition of the MCC Workshop on Mobile Cloud Computing, Helsinki, Finland, 17 August 2012; pp. 13–16.

4. Alsuhli, G.; Khattab, A. A Fog-based IoT Platform for Smart Buildings. In Proceedings of the 2019 International Conference on Innovative Trends in Computer Engineering (ITCE), Aswan, Egypt, 2–4 February 2019; pp. 174–179.

5. Sadatacharapandi, T.P.; Padmavathi, S. Survey on Service Placement, Provisioning, and Composition for Fog-Based IoT Systems. Int. J. Cloud Appl. Comput. 2022, 12, 1–14.

6. Zhai, Z.; Xiang, K.; Zhao, L.; Cheng, B.; Qian, J. IoT-RECSM-Resource-Constrained Smart Service Migration Framework for IoT Edge Computing Environment. Sensors 2020, 20, 2294.

7. Cao, Y.; Chen, S.; Hou, P.; Brown, D. FAST: A fog computing assisted distributed analytics system to monitor fall for stroke mitigation. In Proceedings of the 2015 IEEE International Conference on Networking, Architecture and Storage (NAS), Boston, MA, USA, 6–7 August 2015; pp. 2–11.

8. Iorga, M.; Goren, N.; Feldman, L.; Barton, R.; Martin, M.; Mahmoudi, C. Fog Computing Conceptual Model. Natl. Inst. Stand. Technol. Spec. Publ. 2018, 1–8.

9. Alraddady, S.; Soh, B.; AlZain, M.A.; Li, A.S. Fog Computing: Strategies for Optimal Performance and Cost Effectiveness. Electronics 2022, 11, 3597.

10. Gia, T.N.; Jiang, M.; Rahmani, A.-M.; Westerlund, T.; Liljeberg, P.; Tenhunen, H. Fog Computing in Healthcare Internet of Things: A Case Study on ECG Feature Extraction. In Proceedings of the 2015 IEEE International Conference on Computer and Information Technology; Ubiquitous Computing and Communications; Dependable, Autonomic and Secure Computing; Pervasive Intelligence and Computing, Liverpool, UK, 26–28 October 2015; pp. 356–363.

11. Tasiopoulos, A.G.; Ascigil, O.; Psaras, I.; Toumpis, S.; Pavlou, G. FogSpot: Spot Pricing for Application Provisioning in Edge/Fog Computing. IEEE Trans. Serv. Comput. 2021, 14, 1781–1795.

12. Giang, N.K.; Blackstock, M.; Lea, R.; Leung, V.C.M. Developing IoT applications in the Fog: A Distributed Dataflow approach. In Proceedings of the 2015 5th International Conference on the Internet of Things (IoT), Seoul, Korea, 26–28 October 2015; pp. 155–162.

13. Mondragón-Ruiz, G.; Tenorio-Trigoso, A.; Castillo-Cara, M.; Caminero, B.; Carrión, C. An experimental study of fog and cloud computing in CEP-based Real-Time IoT applications. J. Cloud Comput. 2021, 10, 32.

14. Karagiannis, V.; Schulte, S.; Leitão, J.; Preguiça, N. Enabling Fog Computing using Self-Organizing Compute Nodes. In Proceedings of the 2019 IEEE 3rd International Conference on Fog and Edge Computing (ICFEC), Larnaca, Cyprus, 14–17 May 2019; pp. 1–10.

15. Yadav, J.; Sangwan, S. Dynamic Offloading Framework in Fog Computing. Int. J. Eng. Trends Technol. 2022, 70, 32–42.

16. Lavassani, M.; Forsström, S.; Jennehag, U.; Zhang, T. Combining Fog Computing with Sensor Mote Machine Learning for Industrial IoT. Sensors 2018, 18, 1532.

17. Taneja, M.; Davy, A. Resource aware placement of data analytics platform in fog computing. Procedia Comput. Sci. 2016, 97, 153–156.

18. Zhang, H.; Xiao, Y.; Bu, S.; Niyato, D.; Yu, F.R.; Han, Z. Computing Resource Allocation in Three-Tier IoT Fog Networks: A Joint Optimization Approach Combining Stackelberg Game and Matching. IEEE Internet Things J. 2017, 4, 1204–1215.

19. Silva, D.S.; Machado, J.D.S.; Ribeiro, A.D.R.L.; Ordonez, E.D.M. Towards self-optimisation in fog computing environments. Int. J. Grid Util. Comput. 2020, 11, 755–768.

20. Li, S.; Liu, H.; Li, W.; Sun, W. Optimal cross-layer resource allocation in fog computing: A market-based framework. J. Netw. Comput. Appl. 2023, 209, 103528.

21. Weng, C.-Y.; Li, C.-T.; Chen, C.-L.; Lee, C.-C.; Deng, Y.-Y. A Lightweight Anonymous Authentication and Secure Communication Scheme for Fog Computing Services. IEEE Access 2021, 9, 145522–145537.

22. Adel, A. Utilizing technologies of fog computing in educational IoT systems: Privacy, security, and agility perspective. J. Big Data 2020, 7, 99.

23. Alwakeel, A.M. An Overview of Fog Computing and Edge Computing Security and Privacy Issues. Sensors 2021, 21, 8226.

24. Gomez, C.; Oller, J.; Paradells, J. Overview and Evaluation of Bluetooth Low Energy: An Emerging Low-Power Wireless Technology. Sensors 2012, 12, 11734–11753.

25. Wang, C.; Jiang, T.; Zhang, Q. ZigBee Network Protocols and Applications, 1st ed.; Auerbach Publications: New York, NY, USA, 2014.

26. Badenhop, C.W.; Graham, S.R.; Ramsey, B.W.; Mullins, B.E.; Mailloux, L.O. The Z-Wave routing protocol and its security implications. Comput. Secur. 2017, 68, 112–129.

27. Khalili, A.; Soliman, A.-H.; Asaduzzaman, M.; Griffiths, A. Wi-Fi sensing: Applications and challenges. J. Eng. 2020, 3, 87–97.

28. Jain, G.; Dahiya, S. NFC: Advantages, limits and future scope. Int. J. Cybern. Inform. 2015, 4, 1–12.

29. Chiani, M.; Elzanaty, A. On the LoRa Modulation for IoT: Waveform Properties and Spectral Analysis. IEEE Internet Things J. 2019, 6, 8463–8470.


Subjects:

Computer Science, Hardware & Architecture; Computer Science, Information Systems; Engineering, Electrical & Electronic


Update Date:

19 Jun 2023
