Last year, a mid-sized European retailer unveiled an AI-powered demand-forecasting engine with palpable excitement. The board had seen the dazzling demos, the promise of a 20% reduction in stock-outs, and a sleek dashboard pulsing with predictive insight. Eight months later, the system was quietly shelved. The data lakes turned out to be full of inconsistent product codes, store managers did not trust the recommendations, and no one had defined how a forecast should actually change a replenishment order. The algorithm, brilliant in a sandbox, met a messy, human organization and lost.

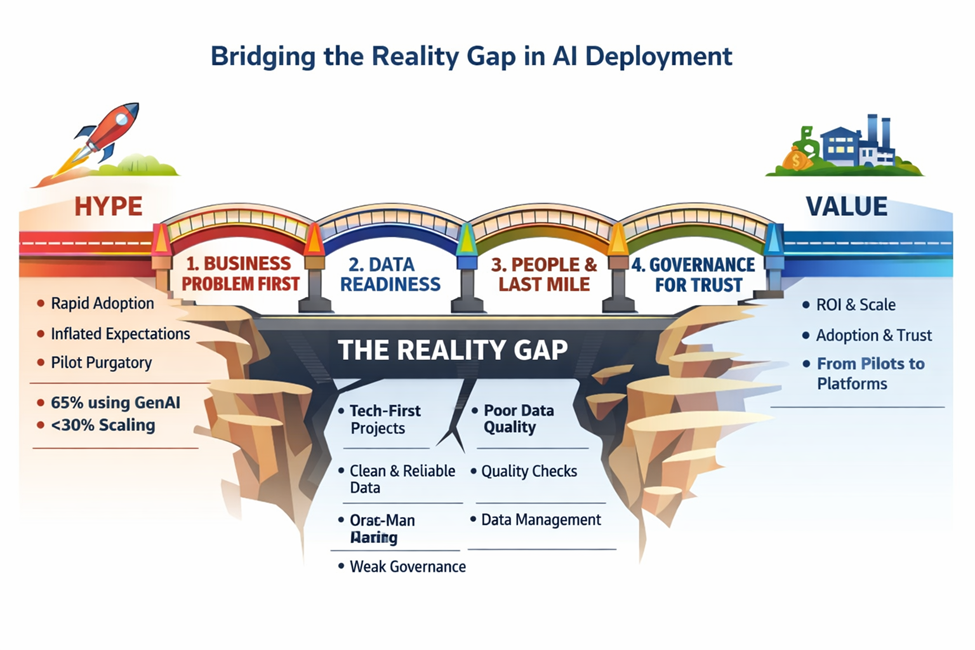

This story is far from unique. Across industries, the chasm between a compelling proof of concept and sustained, scaled value remains stubbornly wide. Research firm Gartner captured the zeitgeist bluntly, predicting that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, not because the technology failed, but because of poor data quality, inadequate risk controls, escalating costs, or unclear business value [1]. The question for leaders today is no longer “Can we build it?” but “How do we bridge the reality gap and turn AI hype into durable returns?” (Figure 1).

Figure 1. A visual flowchart showing how organizations move from AI hype to sustained value by building four bridges: business alignment, data readiness, last‑mile adoption, and governance.

1. The Hype Machine

Artificial intelligence is enjoying its latest and loudest moment in the sun. From boardroom mandates to government task forces, the narrative is one of inevitability: automate everything, personalize every interaction, predict every failure. A McKinsey global survey found that 65% of organizations are now regularly using generative AI, nearly double the figure from just ten months prior [2]. Venture capital pours billions into AI startups, and conference halls echo with tales of autonomous enterprises.

Yet behind the curtain, the same survey revealed that fewer than a third of companies have managed to shrink the gap between experimentation and enterprise-wide deployment. Most are stuck in “pilot purgatory”, running dozens of small experiments that never accumulate into a meaningful bottom-line shift. The hype has outpaced the operational muscle needed to digest it.

2. The Reality Gap: Where AI Stumbles

The reality gap is not a single fault line; it is a collection of cracks that widen under pressure. First, many projects start with technology in search of a problem. An IT team acquires a state-of-the-art model, only to discover that the business team needed a simple rules-based automation. Second, data, the lifeblood of any AI system, is often fragmented, biased, or locked in legacy systems. A model trained on pristine historical data can produce nonsense when fed noisy, real-time operational feeds. Third, the human element, the “last mile” of AI deployment, is routinely underestimated. Employees revert to gut instinct if they do not understand why a recommendation was made, or if it seems to threaten their expertise. Finally, governance and ethical safeguards arrive as an afterthought, triggering regulatory or reputational fire alarms that freeze adoption.

3. Bridge #1: Anchor in Business Problems, Not Technology

The first bridge back to reality is a disciplined refusal to start with the algorithm. High-performing organizations begin with a sharp, measurable business problem: reducing customer churn in a specific segment, cutting energy consumption on a particular production line, or slashing invoice-processing time by 50%. They then identify the minimum viable prediction, classification, or generation task that would materially move that metric. This demand-led approach forces the team to articulate the expected ROI before a single line of code is written, and it creates a natural scorecard for the project’s success.

Equally important, it clarifies whether AI is even the right tool. In many cases, a well-designed business rule or a process simplification delivers most of the benefit with zero model risk. By anchoring in value, companies avoid the siren song of “AI for AI’s sake” and preserve credibility with stakeholders who will ultimately fund the next, more ambitious project.

4. Bridge #2: Data, The Unsexy Bedrock

If business alignment is the compass, data readiness is the terrain. Bridging the gap requires an honest reckoning with what data actually exists, what state it is in, and who owns it. A model trained on a perfectly curated dataset in a cloud sandbox can fail the moment it encounters a misspelled product entry or a date field typed in three different formats. The unglamorous work of data engineering (building reliable pipelines, establishing master data management, and embedding real-time quality checks) is arguably the single biggest determinant of whether an AI system will survive contact with reality.
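To make “real-time quality checks” more concrete, here is a minimal Python sketch of a record-level validator for the two failure modes just mentioned: date fields arriving in several formats and product codes that do not match master data. The field names (`sale_date`, `product_code`), the list of accepted date formats, and the code-normalization rule are hypothetical assumptions for illustration; a real pipeline would derive them from profiling its own sources.

```python
from __future__ import annotations

from datetime import date, datetime

# Hypothetical formats seen in source systems. Order matters: an ambiguous
# value such as "04/03/2025" is read day-first because "%d/%m/%Y" is listed.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")


def parse_date(raw: str) -> date | None:
    """Return the first successful parse across the known formats, or None."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    return None


def normalize_code(raw: str) -> str:
    """Canonicalize a product code: trim, uppercase, drop spaces and hyphens (assumed rule)."""
    return raw.strip().upper().replace(" ", "").replace("-", "")


def check_record(record: dict[str, str], master_codes: set[str]) -> list[str]:
    """List the data-quality issues in one sales record; an empty list means it passed."""
    issues: list[str] = []
    raw_date = record.get("sale_date", "")
    raw_code = record.get("product_code", "")
    if parse_date(raw_date) is None:
        issues.append(f"unparseable date: {raw_date!r}")
    if normalize_code(raw_code) not in master_codes:
        issues.append(f"unknown product code: {raw_code!r}")
    return issues
```

With a master list of `{"SKU1001"}`, a record dated `2025-03-04` with code `sku-1001` passes cleanly, while one dated `March 4` with code `SKU9999` is flagged twice. Because formats are tried in a fixed order, which date convention wins is effectively a business decision; it belongs with whoever owns the data, not with a parser guessing silently.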

Forward-thinking companies appoint “data product” owners who are accountable for the availability, accuracy, and accessibility of critical data assets, treating them with the same rigor as physical products. They also invest in robust data labeling and feedback loops, ensuring that the model continues to learn from operational ground truth rather than drifting into irrelevance.
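One simple form of such a feedback loop is to compare each forecast with the actual outcome once it is known, and to flag the model for retraining when recent accuracy degrades. The Python sketch below assumes a mean-absolute-error metric, a 28-observation rolling window, and a 25% tolerance over the error measured at validation time; the class name and all three settings are hypothetical choices for illustration, not recommendations.

```python
from __future__ import annotations

from collections import deque
from statistics import mean


class ForecastMonitor:
    """Compare forecasts with observed outcomes and flag sustained accuracy loss."""

    def __init__(self, baseline_mae: float, tolerance: float = 1.25, window: int = 28) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self.baseline_mae = baseline_mae  # error measured at validation time (assumed known)
        self.tolerance = tolerance  # flag when live error exceeds baseline by this factor
        self.errors: deque[float] = deque(maxlen=window)  # keeps only the latest `window` errors

    def record(self, forecast: float, actual: float) -> None:
        """Feed back one outcome from operations (the ground truth for that forecast)."""
        self.errors.append(abs(forecast - actual))

    def live_mae(self) -> float | None:
        """Mean absolute error over the current window, or None before any feedback."""
        return mean(self.errors) if self.errors else None

    def needs_retraining(self) -> bool:
        """True only once the window is full and its error exceeds the tolerated level."""
        if len(self.errors) < self.errors.maxlen:
            return False
        return mean(self.errors) > self.baseline_mae * self.tolerance
```

Waiting for a full window before raising a flag trades detection speed for fewer false alarms; a team that needs earlier warning might also watch the input data itself, since outcomes can arrive days or weeks after the forecast.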

5. Bridge #3: People, Process, and the Last Mile

Even a technically perfect model is worthless if a frontline worker ignores its output. The last mile of AI deployment is profoundly human. This necessitates designing an interface that makes the recommendation interpretable, explaining, for example, that the forecast suggests ordering 200 units because the upcoming holiday plus a competitor’s stock-out raises demand probability to 85%. It also means co-creating the system with the people who will use it, not tossing it over a wall from the data science lab. Additionally, it means redesigning the corresponding workflows so that the insight translates seamlessly into action: an alert that triggers an automated purchase order, a suggested next-best-offer that appears inside the customer-relationship-management tool a salesperson already uses.
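As a hedged illustration of pairing a recommendation with its reasons and routing it into an existing workflow, the Python sketch below attaches plain-language drivers to an order suggestion and drafts a purchase-order payload from it. The `Recommendation` class, its fields, and the payload schema are hypothetical; a real system would take the drivers from whatever explanation method its model supports and the schema from the ordering system it feeds.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Recommendation:
    """An order suggestion that carries the reasons a store manager will see."""
    sku: str
    order_units: int
    probability: float  # model's estimated probability of elevated demand, 0..1
    drivers: list[str] = field(default_factory=list)  # plain-language reasons, most important first

    def explain(self) -> str:
        """Render a one-line, human-readable justification for the suggestion."""
        reasons = " and ".join(self.drivers) if self.drivers else "no specific drivers recorded"
        return (
            f"Order {self.order_units} units of {self.sku}: {reasons} "
            f"(estimated probability {self.probability:.0%})."
        )

    def to_purchase_order(self) -> dict:
        """Draft a purchase-order payload (hypothetical schema) so the insight lands in the ordering workflow."""
        return {"sku": self.sku, "quantity": self.order_units, "note": self.explain()}
```

Mirroring the example in the text, `Recommendation("SKU1001", 200, 0.85, ["upcoming public holiday", "competitor stock-out nearby"]).explain()` yields a sentence a manager can check against local knowledge, and the same explanation travels with the purchase order rather than staying behind in a separate dashboard.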

Change management is not a soft accessory; it is the engine of adoption. Davenport and Ronanki [3] emphasized that companies capturing real ROI from cognitive technologies had “redesigned the processes to take advantage of machine learning’s speed and scale, rather than simply overlaying AI on existing workflows”. That redesign often requires a new breed of “translator”, someone who speaks both business and data science, to bridge the two worlds continuously.

6. Bridge #4: Govern for Trust and Scale

Without trust, AI stalls. Trust is built on transparency, fairness, and reliability. A governance framework that clearly defines data usage policies, model validation protocols, and human-in-the-loop oversight is not a bureaucratic drag; it is the scaffolding that allows AI to scale safely. When a bank’s credit model exhibits bias, or a hospital’s triage algorithm makes an inexplicable recommendation, the entire program can be mothballed overnight. Organizations that embed ethics and compliance from the outset, through impact assessments, bias audits, and explainability dashboards, avoid the costly stop–start cycles that doom so many initiatives.

Moreover, governance includes financial discipline. Tracking actual costs versus benefits, and being willing to kill a project that does not meet its pre-agreed thresholds, keeps the portfolio healthy. McKinsey’s research shows that the organizations deriving the most value from AI are those that have moved beyond ad hoc experimentation to a factory model, where projects are run through a standardized “AI product lifecycle” with clear stage gates [2].
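The stop-or-continue logic of stage gates with pre-agreed thresholds fits in a few lines. The Python sketch below assumes each gate is judged on a single benefit-to-cost ratio computed from measured, not projected, figures; the gate names, the thresholds, and the use of that one ratio as the only criterion are hypothetical simplifications of what a real AI product lifecycle would track.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    """A lifecycle stage and the benefit-to-cost ratio agreed before work began."""
    stage: str
    min_benefit_cost_ratio: float


@dataclass(frozen=True)
class Actuals:
    """Measured (not projected) benefit and cost for one stage, in the same currency."""
    benefit: float
    cost: float


def review(gates: list[Gate], actuals_by_stage: dict[str, Actuals]) -> tuple[str, str]:
    """Walk the gates in order and return (decision, stage).

    "stop"    - a stage with reported actuals missed its pre-agreed threshold
    "pending" - the next stage has no measured actuals yet
    "scale"   - every gate has been passed
    """
    if not gates:
        raise ValueError("at least one gate is required")
    for gate in gates:
        actuals = actuals_by_stage.get(gate.stage)
        if actuals is None:
            return ("pending", gate.stage)
        if actuals.cost <= 0:
            raise ValueError(f"stage {gate.stage!r} must report a positive cost")
        if actuals.benefit / actuals.cost < gate.min_benefit_cost_ratio:
            return ("stop", gate.stage)
    return ("scale", gates[-1].stage)
```

With hypothetical gates `pilot` (ratio 1.0) and `rollout` (ratio 1.5), a pilot returning 120,000 on a cost of 100,000 passes its gate, but the same 1.2 ratio at rollout returns `("stop", "rollout")`. The point of writing the thresholds down before the project starts is that the kill decision becomes a lookup rather than a negotiation.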

7. From Pilots to Platforms: A New Mindset

Ultimately, bridging the reality gap demands a shift in mindset: AI is not a magic box but an operational capability that must be industrialized. That means adopting platforms, reusable components, and MLOps practices that bring the same rigor to machine learning that DevOps brought to software. It means celebrating not just the brilliance of a model’s accuracy, but the grit of making it run reliably at 2 a.m., and the discipline of measuring whether it actually saved money or grew revenue.

The retailer that shelved its demand-forecasting engine eventually found its footing, not by building a better algorithm but by fixing product master data, involving store managers in feature selection, and starting with a single product category where the link between forecast and order was unambiguous. The pilot worked, and the proof was in the profit margin, not the PowerPoint slide.

The path from hype to value is rarely a straight line. It is a deliberate journey of problem-first thinking, data discipline, human-centric design, and unwavering governance. The organizations that walk it will stop asking rhetorical questions about AI’s potential and start pointing to earnings statements. The gap is real, but so is the bridge.

References

- [1] Gartner. Gartner Predicts 30% of Generative AI Projects Will Be Abandoned After Proof of Concept By End of 2025. Gartner Press Release, 29 July 2024. Available online: https://www.gartner.com/en/newsroom/press-releases/2024-07-29-gartner-predicts-30-percent-of-generative-ai-projects-will-be-abandoned-after-proof-of-concept-by-end-of-2025 (Accessed on 29 April 2026).

- [2] McKinsey & Company. The state of AI in early 2024: Gen AI adoption spikes and starts to generate value. McKinsey Digital, 2024. Available online: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai (Accessed on 29 April 2026).

- [3] Davenport, T.H.; Ronanki, R. Artificial Intelligence for the Real World. Harv. Bus. Rev. 2018, 96, 108–116.

Biography

Dr. Hamed Taherdoost is an award-winning researcher, educator, and R&D leader with over two decades of international experience across academia and industry. He is a Professor at University Canada West and holds academic affiliations with Westcliff University (USA), GISMA University of Applied Sciences (Germany), and Victorian Institute of Technology (Australia). He is a GUS Institute Fellow (UK), a Westcliff Faculty Fellow, and a Fellow at the National Kaohsiung University of Science and Technology, Taiwan. His work spans digital transformation, cybersecurity, AI, and technology innovation, with hundreds of high-impact publications. Dr. Taherdoost serves as Book Series Editor for Routledge’s Mastering Academic Excellence and holds editorial roles with leading international journals.