Please note this is an old version of this entry, which may differ significantly from the current revision.

Sensors are devices that convert physical phenomena into output signals and are widely used across industrial production and daily life. Continuously recording physical parameters makes it possible to analyze the operational status of the monitored system and to predict unknown risks.

- Sensors

1. Introduction

Sensors capture physical parameters from the observed environment and convert them into observable electrical signals [1][2][3][4]. Many kinds of sensors are used in manufacturing and machinery [5][6][7], transportation [8][9][10][11][12][13], healthcare [14][15][16][17][18], and many other aspects of daily life. In mechanical engineering, for example, accelerometers placed around gearboxes or bearings capture the vibration signals of a machine, which can be used to predict impending failures [7]. In healthcare, voltage sensors placed on a patient’s head capture voltage signals from the brain, which are used to identify the patient’s commands [16]. By continuously recording physical parameters over a period of time, the current operating state of the monitored system can be analyzed and unknown risks can be assessed.

In recent years, analysis techniques for time series data have developed rapidly. Commonly used models for processing time series data include spectral and wavelet analysis [19][20][21], 1D Convolutional Neural Networks (CNNs) [22][23][24], Recurrent Neural Networks (RNNs) such as Long Short-Term Memory (LSTM) networks [25][26][27] and Gated Recurrent Units (GRUs) [28][29], and the more recent Transformer [30]. Deep models have a large number of parameters and powerful feature extraction capabilities; their parameters are optimized by minimizing a loss function with gradient backpropagation [31][32].

However, deep models usually assume that the training and test data follow similar distributions, and in practice this assumption often does not hold. For example, in the fault diagnosis of rolling bearings, the vibration signals collected by accelerometers under different operating conditions (e.g., rotation speed or load) have different patterns. If a deep network is trained on vibration signals collected under one operating condition and tested on data collected under another, its performance may degrade significantly. As another example, in brain-computer interfaces, a model trained on a large number of signals collected from one person often performs poorly when tested on another person. In both cases, the model is trained on one scene (the source domain) and tested on data collected in another scene (the target domain), whose distribution differs. One way to solve this problem is to label a large amount of data in the new scene and retrain the deep model. However, relabeling data for each new scene requires significant human resources.

Because unlabeled data in the target domain are easy to obtain, many researchers have adopted unsupervised domain adaptation (UDA) techniques to improve model performance in the target domain. That is, the model is trained using labeled data from the source domain and a large amount of unlabeled data from the target domain.

2. Basic Concept

2.1. Sensors

A sensor converts physical phenomena into a measurable digital signal, which can then be displayed, read, or processed further [1]. Classified by the physical quantity they sense, common sensors include temperature sensors, acceleration sensors that sense motion, infrared sensors that sense infrared radiation, and so on. Classified by how they sense signals, sensors can be divided into active and passive sensors. Active sensors need an external excitation signal or power signal, whereas passive sensors produce an output response without any external power. LiDAR is an example of an active sensor: it relies on a light source to emit a laser beam, receives the returned beam, and calculates the time delay between emission and return to determine the distance to an object. Passive sensors, such as temperature sensors, acceleration sensors, and infrared sensors, do not require external excitation and can directly measure the physical characteristics of the system being monitored. A wide variety of sensors are used in different industries and have greatly increased productivity.
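The LiDAR ranging principle described above can be sketched in a few lines. This is a minimal illustration, assuming an idealized sensor in which the pulse travels at the vacuum speed of light and the measured delay covers the full round trip; the function name `tof_distance_m` is hypothetical and not taken from any LiDAR library.

```python
# Idealized time-of-flight ranging: distance = (speed of light * delay) / 2.
# Assumption: the delay is the full round trip (emission to return).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_delay_s: float) -> float:
    """Return the one-way distance in meters for a round-trip delay in seconds."""
    if round_trip_delay_s < 0:
        raise ValueError("delay must be non-negative")
    # The beam travels to the object and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_delay_s / 2.0


# A delay of one microsecond corresponds to roughly 150 m.
distance = tof_distance_m(1e-6)
```

Real devices also have to handle timing jitter, multiple returns, and atmospheric effects, none of which are modeled here.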

2.2. Time Series Sensor Data

The time series of length $n$ observed by the sensor can be expressed as

$$x = \{x_1, x_2, \ldots, x_n\},$$

where the data point $x_t$ is the data observed by the sensor at moment $t$. When $x$ is a univariate time series, $x_t$ is a real value, $x_t \in \mathbb{R}$. When $x$ is a multivariate time series, $x_t$ is a vector, $x_t \in \mathbb{R}^d$, where $d$ is the dimension of $x_t$. Much of the time series data collected in practical applications is multivariate and may be obtained from multiple attributes of one sensor or from multiple sensors. For example, in the fault diagnosis of rolling bearings, a single accelerometer can simultaneously provide acceleration time series along the three axes X, Y, and Z. Another example is the fault diagnosis of power plant thermal systems, where multidimensional time series data are obtained simultaneously from multiple sensors, such as temperature sensors, pressure sensors, and flow rate sensors.
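To make the univariate and multivariate cases concrete, the following sketch stores a time series as a plain Python sequence and reports its length n and per-step dimension d. The helper `series_shape` and the sample readings are illustrative assumptions, not data from the source.

```python
from typing import Sequence, Tuple, Union

# A univariate step is a real number; a multivariate step is a vector in R^d.
Step = Union[float, Sequence[float]]


def series_shape(x: Sequence[Step]) -> Tuple[int, int]:
    """Return (n, d): the number of time steps and the dimension of each step."""
    n = len(x)
    if n == 0:
        return (0, 0)
    if isinstance(x[0], (int, float)):
        return (n, 1)  # univariate: each x_t is a single real value
    d = len(x[0])
    if any(len(x_t) != d for x_t in x):
        raise ValueError("every x_t must have the same dimension d")
    return (n, d)


# Hypothetical readings: one temperature channel, and a three-axis accelerometer.
temperature = [21.0, 21.5, 22.1, 22.0]
acceleration_xyz = [(0.01, -0.02, 9.81), (0.03, -0.01, 9.79), (0.02, 0.00, 9.80)]

temperature_shape = series_shape(temperature)        # n = 4, d = 1
acceleration_shape = series_shape(acceleration_xyz)  # n = 3, d = 3
```

In practice such data are usually held in array libraries with shape (n, d); plain sequences are used here only to keep the sketch dependency-free.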

2.3. Domain Gap

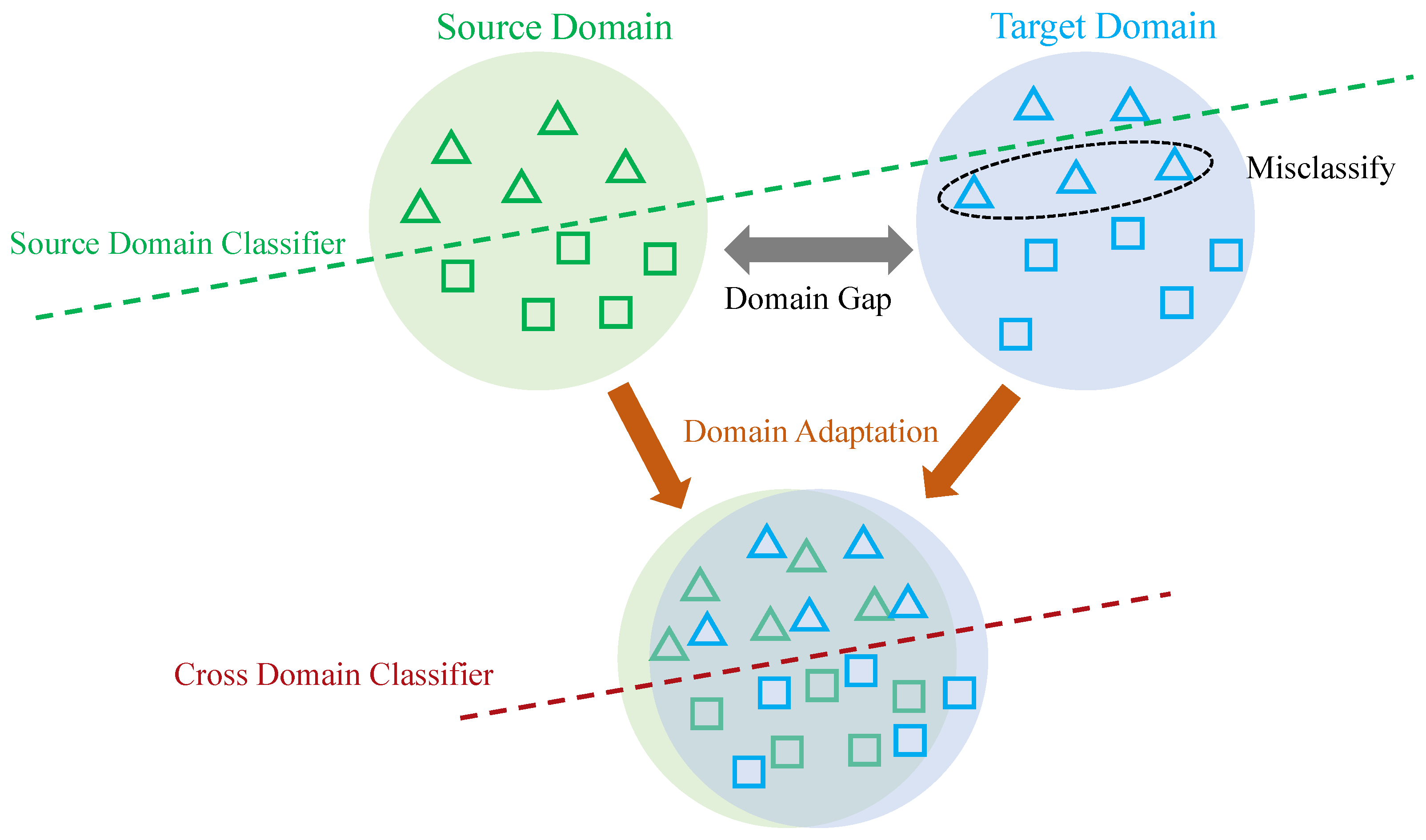

Data collected under a certain distribution is referred to as a domain, and this distribution can be understood as a specific data collection scenario [33]. For example, in human behavior recognition, inertial sensor data collected at the arm is referred to as one domain, while inertial sensor data collected at the leg is referred to as another domain. The data collected in different scenarios differ in distribution, and this difference is known as the domain gap (see Figure 1).

Figure 1. Illustration of source and target data with the original feature distributions (top) and the new feature distributions (bottom) after domain adaptation, where domain adaptation techniques help to alleviate the “domain shift” problem between source and target domains.
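One way to put a number on the domain gap is to compare samples from the two domains with a distribution distance. The source does not prescribe a specific measure; the sketch below assumes the maximum mean discrepancy (MMD) with an RBF kernel, a statistic often used for this purpose, and the feature values and `gamma` setting are made up for illustration.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def rbf_kernel(a: Vector, b: Vector, gamma: float = 1.0) -> float:
    """Gaussian (RBF) kernel exp(-gamma * ||a - b||^2)."""
    squared_distance = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * squared_distance)


def mmd_squared(source: Sequence[Vector], target: Sequence[Vector],
                gamma: float = 1.0) -> float:
    """Biased estimate of the squared MMD between two sample sets."""
    def mean_kernel(xs: Sequence[Vector], ys: Sequence[Vector]) -> float:
        total = sum(rbf_kernel(x, y, gamma) for x in xs for y in ys)
        return total / (len(xs) * len(ys))

    return (mean_kernel(source, source)
            + mean_kernel(target, target)
            - 2.0 * mean_kernel(source, target))


# Hypothetical 2-D features; the target is the source shifted by a constant offset.
source_features = [(0.0, 0.1), (0.2, -0.1), (-0.1, 0.0), (0.1, 0.2)]
shifted_target = [(x + 1.5, y + 1.5) for x, y in source_features]

gap_same = mmd_squared(source_features, source_features)    # no gap
gap_shifted = mmd_squared(source_features, shifted_target)  # clearly positive
```

A value near zero suggests the two sample sets are hard to tell apart under the chosen kernel, while larger values indicate a larger gap; the result depends on `gamma`, which would need tuning on real data.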

2.4. Deep Unsupervised Domain Adaptation

In domain adaptation, the training set is usually referred to as the source domain and the test set as the target domain. If a model is trained with data collected at the arm (source domain) and later tested with data collected at the leg (target domain), it does not perform as well, because deep learning models generalize reliably only to test data whose distribution matches that of the training data. One way to improve the performance of the model in the target domain is to label a large amount of data in the target domain and fine-tune the model trained in the source domain using a supervised approach. However, labeling a large amount of data requires significant human resources.

Domain adaptation (see Figure 1) refers to adapting a model trained in the source domain to the target domain, i.e., reducing the domain gap between the source and target domains and improving performance on the target domain. Domain adaptation includes semi-supervised domain adaptation and unsupervised domain adaptation. Semi-supervised domain adaptation requires that some samples from the target domain are labeled. In contrast, unsupervised domain adaptation does not require the target samples to have labels. Deep unsupervised domain adaptation specifically refers to improving the adaptation ability of deep learning models in the target domain. Due to the complexity and nonlinearity of deep learning models, a large number of algorithms for deep unsupervised domain adaptation have emerged in recent years, including mapping-based algorithms, adversarial learning-based algorithms, etc.
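The idea of using unlabeled target data to reduce the domain gap can be illustrated with a deliberately small, non-deep toy example. It assumes the target domain differs from the source only by a constant offset with the same class balance, uses a nearest-centroid classifier in place of a deep network, and aligns the two domains by subtracting each domain's own mean (a simple stand-in for the mapping-based idea, not a method taken from the source). All names and numbers are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def fit_centroids(features: List[float], labels: List[int]) -> Dict[int, float]:
    """Per-class mean of the labeled source features."""
    return {c: mean([f for f, y in zip(features, labels) if y == c])
            for c in sorted(set(labels))}


def predict(centroids: Dict[int, float], features: List[float]) -> List[int]:
    """Assign each feature to the class with the nearest centroid."""
    return [min(centroids, key=lambda c: abs(f - centroids[c])) for f in features]


def center(features: List[float]) -> List[float]:
    """Subtract the domain's own mean; this step needs no labels."""
    m = mean(features)
    return [f - m for f in features]


def accuracy(predicted: List[int], true: List[int]) -> float:
    return sum(p == t for p, t in zip(predicted, true)) / len(true)


# Labeled source domain: class 0 lies near 0.0 and class 1 near 2.0.
source_x = [-0.2, 0.0, 0.2, 1.8, 2.0, 2.2]
source_y = [0, 0, 0, 1, 1, 1]
# Target domain: same classes with a constant offset; labels used for evaluation only.
target_x = [x + 1.5 for x in source_x]
target_y = list(source_y)

# Without adaptation: source-trained centroids applied to raw target data.
acc_without = accuracy(predict(fit_centroids(source_x, source_y), target_x), target_y)

# With adaptation: both domains are centered before training and prediction.
adapted = fit_centroids(center(source_x), source_y)
acc_with = accuracy(predict(adapted, center(target_x)), target_y)
```

Under these assumptions the unadapted model assigns every target sample to class 1, while centering restores correct predictions. Real sensor data rarely shift this simply, which is why deep methods learn the alignment instead of assuming it.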

This entry is adapted from the peer-reviewed paper 10.3390/s22155507

References

- Javaid, M.; Haleem, A.; Rab, S.; Singh, R.P.; Suman, R. Sensors for daily life: A review. Sens. Int. 2021, 2, 100121.

- Heikenfeld, J.; Jajack, A.; Rogers, J.; Gutruf, P.; Tian, L.; Pan, T.; Li, R.; Khine, M.; Kim, J.; Wang, J. Wearable sensors: Modalities, challenges, and prospects. Lab Chip 2018, 18, 217–248.

- Arampatzis, T.; Lygeros, J.; Manesis, S. A survey of applications of wireless sensors and wireless sensor networks. In Proceedings of the International Symposium on, Mediterrean Conference on Control and Automation Intelligent Control, Limassol, Cyprus, 27–29 June 2005; pp. 719–724.

- Fleming, W.J. New automotive sensors: A review. Sens. J. 2008, 8, 1900–1921.

- Kalsoom, T.; Ramzan, N.; Ahmed, S.; Ur-Rehman, M. Advances in sensor technologies in the era of smart factory and industry 4.0. Sensors 2020, 20, 6783.

- Angelopoulos, A.; Michailidis, E.T.; Nomikos, N.; Trakadas, P.; Hatziefremidis, A.; Voliotis, S.; Zahariadis, T. Tackling faults in the industry 4.0 era: A survey of machine-learning solutions and key aspects. Sensors 2019, 20, 109.

- Hasan, M.J.; Islam, M.M.; Kim, J.M. Bearing fault diagnosis using multidomain fusion-based vibration imaging and multitask learning. Sensors 2021, 22, 56.

- Fayyad, J.; Jaradat, M.A.; Gruyer, D.; Najjaran, H. Deep learning sensor fusion for autonomous vehicle perception and localization: A review. Sensors 2020, 20, 4220.

- Boukabache, H.; Escriba, C.; Fourniols, J.Y. Toward smart aerospace structures: Design of a piezoelectric sensor and its analog interface for flaw detection. Sensors 2014, 14, 20543–20561.

- Pytka, J.; Budzyński, P.; Łyszczyk, T.; Józwik, J.; Michałowska, J.; Tofil, A.; Błażejczak, D.; Laskowski, J. Determining wheel forces and moments on aircraft landing gear with a dynamometer sensor. Sensors 2019, 20, 227.

- Kocić, J.; Jovičić, N.; Drndarević, V. Sensors and sensor fusion in autonomous vehicles. In Proceedings of the Telecommunications Forum, Belgrade, Serbia, 20–21 November 2018; pp. 420–425.

- Varghese, J.Z.; Boone, R.G. Overview of autonomous vehicle sensors and systems. In Proceedings of the International Conference on Operations Excellence and Service Engineering, Orlando, FL, USA, 10–11 September 2015; pp. 178–191.

- Van Brummelen, J.; O’Brien, M.; Gruyer, D.; Najjaran, H. Autonomous vehicle perception: The technology of today and tomorrow. Transp. Res. Part C Emerg. Technol. 2018, 89, 384–406.

- Voinea, G.D.; Butnariu, S.; Mogan, G. Measurement and geometric modelling of human spine posture for medical rehabilitation purposes using a wearable monitoring system based on inertial sensors. Sensors 2016, 17, 3.

- Arikumar, K.; Prathiba, S.B.; Alazab, M.; Gadekallu, T.R.; Pandya, S.; Khan, J.M.; Moorthy, R.S. FL-PMI: Federated learning-based person movement identification through wearable devices in smart healthcare systems. Sensors 2022, 22, 1377.

- Kwon, Y.H.; Shin, S.B.; Kim, S.D. Electroencephalography based fusion two-dimensional convolution neural networks model for emotion recognition system. Sensors 2018, 18, 1383.

- Leal-Junior, A.G.; Diaz, C.A.; Avellar, L.M.; Pontes, M.J.; Marques, C.; Frizera, A. Polymer optical fiber sensors in healthcare applications: A comprehensive review. Sensors 2019, 19, 3156.

- Baskar, S.; Shakeel, P.M.; Kumar, R.; Burhanuddin, M.; Sampath, R. A dynamic and interoperable communication framework for controlling the operations of wearable sensors in smart healthcare applications. Comput. Commun. 2020, 149, 17–26.

- Ghaderpour, E.; Vujadinovic, T.; Hassan, Q.K. Application of the least-squares wavelet software in hydrology: Athabasca River basin. J. Hydrol. Reg. Stud. 2021, 36, 100847.

- Ghaderpour, E.; Pagiatakis, S.D.; Hassan, Q.K. A survey on change detection and time series analysis with applications. Appl. Sci. 2021, 11, 6141.

- Ghaderpour, E.; Pagiatakis, S.D. Least-squares wavelet analysis of unequally spaced and non-stationary time series and its applications. Math. Geosci. 2017, 49, 819–844.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–6 December 2012; Volume 1.

- Wu, G.; Liu, Y.; Fang, L.; Chai, T. Revisiting light field rendering with deep anti-aliasing neural network. arXiv 2021, arXiv:2104.06797.

- Ismail Fawaz, H.; Forestier, G.; Weber, J.; Idoumghar, L.; Muller, P.A. Deep learning for time series classification: A review. Data Min. Knowl. Discov. 2019, 33, 917–963.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

- Li, M.; Zhang, M.; Luo, X.; Yang, J. Combined long short-term memory based network employing wavelet coefficients for MI-EEG recognition. In Proceedings of the International Conference on Mechatronics and Automation, Harbin, China, 7–10 August 2016; pp. 1971–1976.

- Du, X.; Ma, C.; Zhang, G.; Li, J.; Lai, Y.K.; Zhao, G.; Deng, X.; Liu, Y.J.; Wang, H. An efficient LSTM network for emotion recognition from multichannel EEG signals. Trans. Affect. Comput. 2020.

- Cho, K.; Van Merriënboer, B.; Gulcehre, C.; Bahdanau, D.; Bougares, F.; Schwenk, H.; Bengio, Y. Learning phrase representations using RNN encoder-decoder for statistical machine translation. arXiv 2014, arXiv:1406.1078.

- Yan, X.; Yang, J.; Song, L.; Liu, Y. PSA-GRU: Modeling person-social twin-attention based on GRU for pedestrian trajectory prediction. In Proceedings of the Chinese Control Conference, Shanghai, China, 26–28 July 2021; pp. 8151–8157.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Volume 30.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Ruder, S. An overview of gradient descent optimization algorithms. arXiv 2016, arXiv:1609.04747.

- Daumé, H., III. Frustratingly easy domain adaptation. arXiv 2009, arXiv:0907.1815.
