Visual organs are important for animals to obtain information about and understand the outside world, and robots likewise cannot do so without a visual system. Artificial-intelligence vision technology has achieved automation and relatively simple intelligence, but bionic vision equipment is still not as dexterous or intelligent as the human eye. Robots are increasingly expected to function as smartly as human beings, yet existing reviews of robot bionic vision remain limited. Robot bionic vision has been explored on the basis of the visual principles and motion characteristics of humans and animals.

- artificial intelligence

- robot bionic vision

- optical devices

- bionic eye

- intelligent camera

1. Human Visual System

2. Differences and Similarities between Human Vision and Bionic Vision

3. Advantages and Disadvantages of Robot Bionic Vision

- High accuracy: Human vision normally distinguishes only about 64 gray levels, and its ability to resolve and identify small targets is weak [55]. Machine vision can identify significantly more gray levels and resolve micron-scale targets. Human vision is highly adaptable and can identify a target in a complex and changing environment, but human color identification is easily influenced by a person's psychology and cannot be made quantitative.

- Fast: According to Potter [56], the human brain can process an image seen by the eye within 13 ms, which corresponds to approximately 75 frames per second. This is much shorter than the 100 ms reported in earlier studies [57]. Bionic vision can run at 1000 frames per second or higher to recognize fast-moving images, which is impossible for human beings.

- High stability: Bionic vision detection equipment does not suffer from fatigue or emotional fluctuations. It executes the same algorithm and requirements every time with high efficiency and stability. By contrast, the human eye performs poorly on large volumes of images and on high-speed or small-object detection, with a relatively high rate of missed detections owing to fatigue or inability.

- Information integration and retention: The information obtained by bionic vision is comprehensive and traceable, and it can be easily retained and integrated.
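
The frame-rate and gray-level figures in the list above follow from simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the 8-bit sensor depth used for comparison is an assumption, not a figure from the source.

```python
def frames_per_second(time_per_image_ms: float) -> float:
    """Convert a per-image processing time (milliseconds) to frames per second."""
    if time_per_image_ms <= 0:
        raise ValueError("time_per_image_ms must be positive")
    return 1000.0 / time_per_image_ms


def bits_for_gray_levels(levels: int) -> int:
    """Smallest number of bits that can encode `levels` distinct gray levels."""
    if levels < 1:
        raise ValueError("levels must be at least 1")
    # (levels - 1).bit_length() is an exact integer form of ceil(log2(levels)).
    return max(1, (levels - 1).bit_length())


if __name__ == "__main__":
    # Per-image times quoted in the text: 13 ms [56] and 100 ms [57].
    for ms in (13.0, 100.0):
        print(f"{ms:.0f} ms per image -> {frames_per_second(ms):.1f} frames/s")
    # A 1000 frames/s camera leaves 1 ms per frame.
    print(f"1000 frames/s -> {1000.0 / 1000.0:.1f} ms per frame")
    # 64 gray levels (human eye, per the text) versus an assumed 8-bit sensor.
    print(f"64 gray levels need {bits_for_gray_levels(64)} bits")
    print(f"an 8-bit sensor encodes {2 ** 8} gray levels")
```

Computed exactly, 13 ms per image is about 77 frames per second, which is of the same order as the approximately 75 quoted above. The 64 gray levels attributed to human vision fit in 6 bits, whereas the assumed 8-bit sensor encodes 256.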

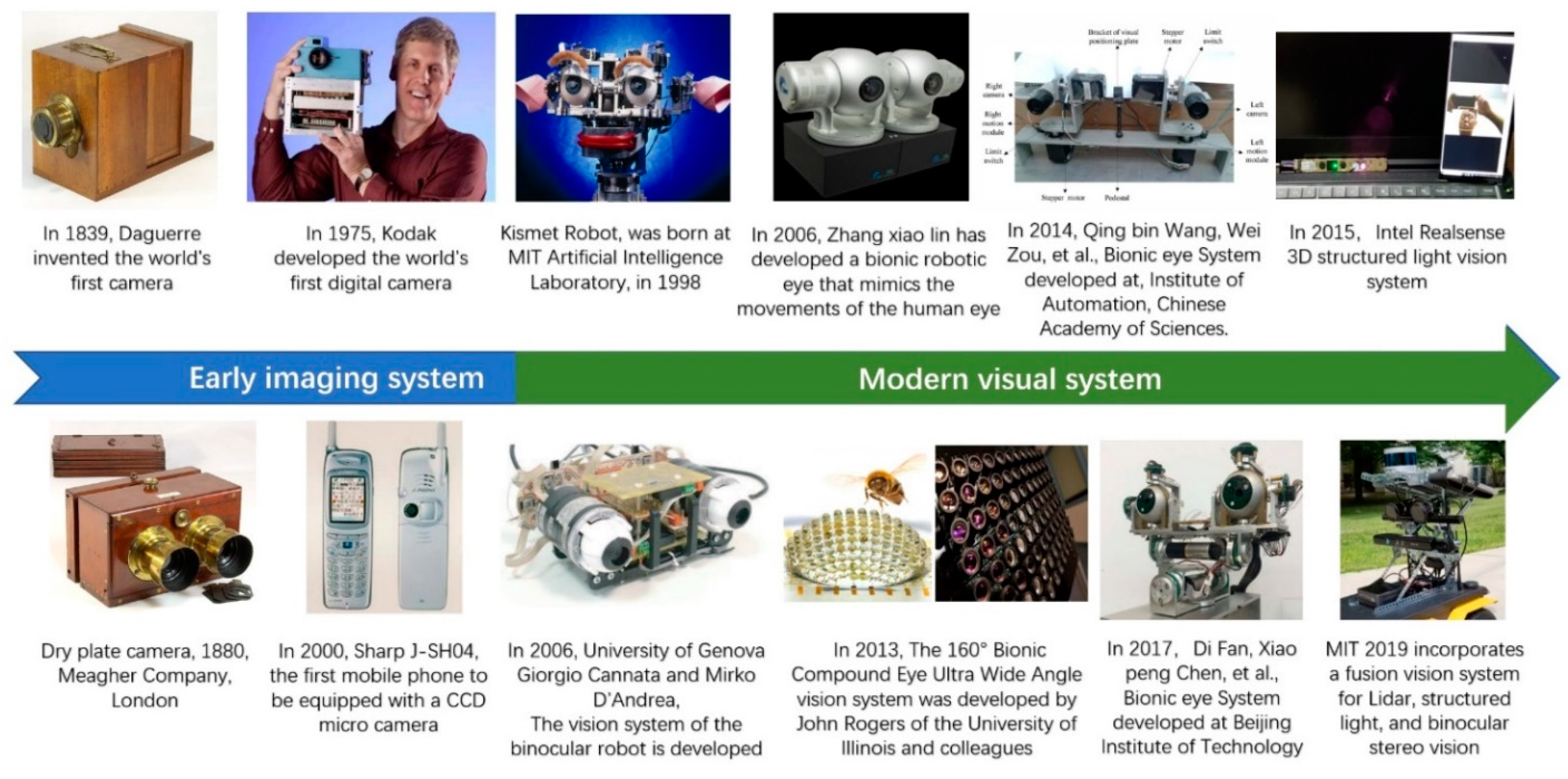

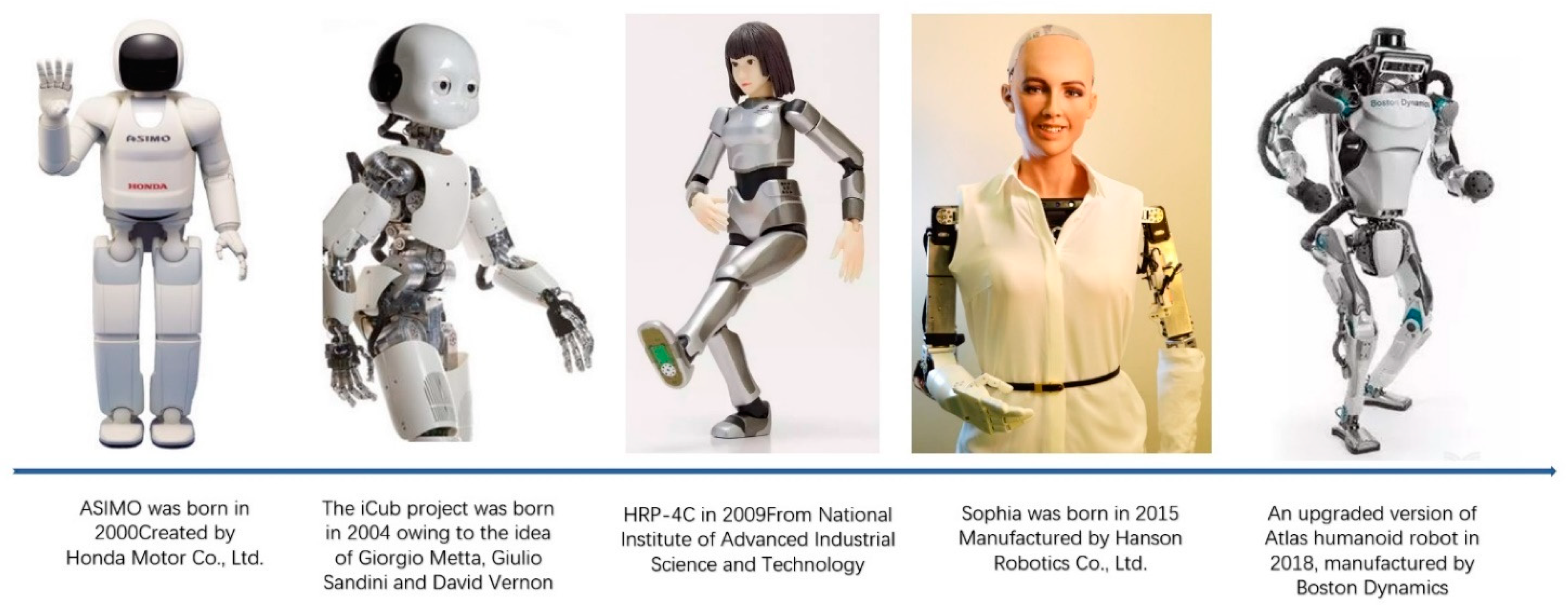

4. The Development Process of Bionic Vision

5. Common Bionic Vision Techniques

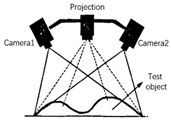

5.1. Binocular Stereo Vision

5.2. Structured Light

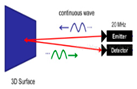

5.3. TOF (Time of Flight)

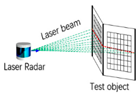

| Technology Category | Monocular Vision | Binocular Stereo Vision | Structured Light | TOF | Optical Laser Radar |
|---|---|---|---|---|---|
| Principle of work | Single camera | Dual camera | Camera and infrared projection patterns | Infrared reflection time difference | Time difference of laser pulse reflection |
| Response time | Fast | Medium | Slow | Medium | Medium |
| Weak light | Weak | Weak | Good | Good | Good |
| Bright light | Good | Good | Weak | Medium | Medium |
| Identification precision | Low | Low | Medium | Low | Medium |
| Resolving capability | High | High | Medium | Low | Low |
| Identification distance | Medium | Medium | Very short | Short | Far |
| Operation difficulty | Low | High | Medium | Low | High |
| Cost | Low | Medium | High | Medium | High |
| Power consumption | Low | Low | Medium | Low | High |
| Disadvantages | Low recognition accuracy, poor in dark light | Features are not obvious in dark light | High requirements for ambient light, short recognition distance | Low resolution, short recognition distance, limited by light intensity | Affected by cloud, rain, fog, and other weather interference |
| Representative company | Cognex, Honda, Keyence | Leap Motion, IIT | Intel, Microsoft, PrimeSense | Intel, TI, ST, Pmd | Velodyne, Boston Dynamics |
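
The "Principle of work" row can be made concrete with the standard textbook range equations. These are not stated in the source and are given here only as a hedged sketch. For TOF and laser radar, range follows from the round-trip time of the light pulse, d = c·t/2. For a rectified binocular pair under a pinhole camera model, depth follows from disparity, Z = f·B/d. All function names, parameter names, and example numbers below are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by SI definition


def tof_distance_m(round_trip_time_s: float) -> float:
    """Range from the round-trip time of a light pulse: d = c * t / 2."""
    if round_trip_time_s < 0:
        raise ValueError("round_trip_time_s must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def stereo_depth_m(focal_length_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth for a rectified stereo pair (pinhole model): Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity_px must be positive (zero means infinity)")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical example: a 20 ns round trip is roughly 3 m of range.
    print(f"TOF range: {tof_distance_m(20e-9):.3f} m")
    # Hypothetical example: f = 700 px, baseline = 0.10 m, disparity = 35 px.
    print(f"Stereo depth: {stereo_depth_m(700.0, 0.10, 35.0):.3f} m")
```

Because stereo depth is inversely proportional to disparity, a fixed one-pixel matching error costs more depth accuracy at long range. Under these assumptions, that is one way to read the "Medium" identification distance and "Low" identification precision listed for binocular stereo vision.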

This entry is adapted from the peer-reviewed paper 10.3390/app12167970

References

- Yau, J.M.; Pasupathy, A.; Brincat, S.L.; Connor, C.E. Curvature Processing Dynamics in Macaque Area V4. Cereb. Cortex 2013, 23, 198–209.

- Grill-Spector, K.; Malach, R. The human visual cortex. Annu. Rev. Neurosci. 2004, 27, 649–677.

- Hari, R.; Kujala, M.V. Brain Basis of Human Social Interaction: From Concepts to Brain Imaging. Physiol. Rev. 2009, 89, 453–479.

- Hao, Q.; Tao, Y.; Cao, J.; Tang, M.; Cheng, Y.; Zhou, D.; Ning, Y.; Bao, C.; Cui, H. Retina-like Imaging and Its Applications: A Brief Review. Appl. Sci. 2021, 11, 7058.

- Ayoub, G. On the Design of the Vertebrate Retina. Orig. Des. 1996, 17, 1.

- Williams, G.C. Natural Selection: Domains, Levels, and Challenges; Oxford University Press: Oxford, UK, 1992; Volume 72.

- Navarro, R. The Optical Design of the Human Eye: A Critical Review. J. Optom. 2009, 2, 3–18.

- Schiefer, U.; Hart, W. Functional Anatomy of the Human Visual Pathway; Springer: Berlin/Heidelberg, Germany, 2007; pp. 19–28.

- Horton, J.C.; Hedley-Whyte, E.T. Mapping of cytochrome oxidase patches and ocular dominance columns in human visual cortex. Philos. Trans. R. Soc. B Biol. Sci. 1984, 304, 255–272.

- Choi, S.; Jeong, G.; Kim, Y.; Cho, Z. Proposal for human visual pathway in the extrastriate cortex by fiber tracking method using diffusion-weighted MRI. Neuroimage 2020, 220, 117145.

- Welsh, D.K.; Takahashi, J.S.; Kay, S.A. Suprachiasmatic nucleus: Cell autonomy and network properties. Annu. Rev. Physiol. 2010, 72, 551–577.

- Ottes, F.P.; Van Gisbergen, J.A.M.; Eggermont, J.J. Visuomotor fields of the superior colliculus: A quantitative model. Vis. Res. 1986, 26, 857–873.

- Lipari, A. Somatotopic Organization of the Cranial Nerve Nuclei Involved in Eye Movements: III, IV, VI. Euromediterr. Biomed. J. 2017, 12, 6–9.

- Deangelis, G.C.; Newsome, W.T. Organization of disparity-selective neurons in macaque area MT. J. Neurosci. 1999, 19, 1398–1415.

- Larsson, J.; Heeger, D.J. Two Retinotopic Visual Areas in Human Lateral Occipital Cortex. J. Neurosci. 2006, 26, 13128–13142.

- Borra, E.; Luppino, G. Comparative anatomy of the macaque and the human frontal oculomotor domain. Neurosci. Biobehav. Rev. 2021, 126, 43–56.

- França De Barros, F.; Bacqué-Cazenave, J.; Taillebuis, C.; Courtand, G.; Manuel, M.; Bras, H.; Tagliabue, M.; Combes, D.; Lambert, F.M.; Beraneck, M. Conservation of locomotion-induced oculomotor activity through evolution in mammals. Curr. Biol. 2022, 32, 453–461.

- McLoon, L.K.; Willoughby, C.L.; Andrade, F.H. Extraocular Muscle Structure and Function; McLoon, L., Andrade, F., Eds.; Craniofacial Muscles; Springer: New York, NY, USA, 2012.

- Horn, A.K.E.; Straka, H. Functional Organization of Extraocular Motoneurons and Eye Muscles. Annu. Rev. Vis. Sci. 2021, 7, 793–825.

- Adler, F.H.; Fliegelman, M. Influence of Fixation on the Visual Acuity. Arch. Ophthalmol. 1934, 12, 475–483.

- Carter, B.T.; Luke, S.G. Best practices in eye tracking research. Int. J. Psychophysiol. 2020, 155, 49–62.

- Cazzato, D.; Leo, M.; Distante, C.; Voos, H. When I Look into Your Eyes: A Survey on Computer Vision Contributions for Human Gaze Estimation and Tracking. Sensors 2020, 20, 3739.

- Kreiman, G.; Serre, T. Beyond the feedforward sweep: Feedback computations in the visual cortex. Ann. N. Y. Acad. Sci. 2020, 1464, 222–241.

- Müller, T.J. Augenbewegungen und Nystagmus: Grundlagen und klinische Diagnostik. HNO 2020, 68, 313–323.

- Golomb, J.D.; Mazer, J.A. Visual Remapping. Annu. Rev. Vis. Sci. 2021, 7, 257–277.

- Tzvi, E.; Koeth, F.; Karabanov, A.N.; Siebner, H.R.; Krämer, U.M. Cerebellar—Premotor cortex interactions underlying visuomotor adaptation. NeuroImage 2020, 220, 117142.

- Banks, M.S.; Read, J.C.; Allison, R.S.; Watt, S.J. Stereoscopy and the Human Visual System. SMPTE Motion Imaging J. 2012, 121, 24–43.

- Einspruch, N. (Ed.) Application Specific Integrated Circuit (ASIC) Technology; Academic Press: Cambridge, MA, USA, 2012; Volume 23.

- Verri Lucca, A.; Mariano Sborz, G.A.; Leithardt, V.R.Q.; Beko, M.; Albenes Zeferino, C.; Parreira, W.D. A Review of Techniques for Implementing Elliptic Curve Point Multiplication on Hardware. J. Sens. Actuator Netw. 2021, 10, 3.

- Zhao, C.; Yan, Y.; Li, W. Analytical Evaluation of VCO-ADC Quantization Noise Spectrum Using Pulse Frequency Modulation. IEEE Signal Proc. Lett. 2014, 11, 249–253.

- Jouppi, N.P.; Young, C.; Patil, N.; Patterson, D.; Agrawal, G.; Bajwa, R.; Bates, S.; Bhatia, S.; Boden, N.; Borchers, A.; et al. In-datacenter performance analysis of a tensor processing unit. In Proceedings of the 44th Annual International Symposium on Computer Architecture (ISCA '17), Toronto, ON, Canada, 24–28 June 2017.

- Huang, H.; Liu, Y.; Hou, Y.; Chen, R.C.-J.; Lee, C.; Chao, Y.; Hsu, P.; Chen, C.; Guo, W.; Yang, W.; et al. 45nm High-k/Metal-Gate CMOS Technology for GPU/NPU Applications with Highest PFET Performance. In Proceedings of the 2007 IEEE International Electron Devices Meeting, Washington, DC, USA, 10–12 December 2007.

- Kim, S.; Oh, S.; Yi, Y. Minimizing GPU Kernel Launch Overhead in Deep Learning Inference on Mobile GPUs. In Proceedings of the HotMobile ‘21: The 22nd International Workshop on Mobile Computing Systems and Applications, Virtual, 24–26 February 2021.

- Shah, N.; Olascoaga, L.I.G.; Zhao, S.; Meert, W.; Verhelst, M. DPU: DAG Processing Unit for Irregular Graphs With Precision-Scalable Posit Arithmetic in 28 nm. IEEE J. Solid-state Circuits 2022, 57, 2586–2596.

- He, K.; Gkioxari, G.; Dollar, P.; Girshick, R. Mask R-CNN. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2980–2988.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- Ren, J.; Wang, Y. Overview of Object Detection Algorithms Using Convolutional Neural Networks. J. Comput. Commun. 2022, 10, 115–132.

- Kreiman, G. Biological and Computer Vision; Cambridge University Press: Cambridge, UK, 2021.

- Sabour, S.; Frosst, N.; Hinton, G.E. Dynamic routing between capsules. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NIPS'17), Curran Associates Inc., Red Hook, NY, USA, 4–9 December 2017; pp. 3859–3869.

- Schwarz, M.; Behnke, S. Stillleben: Realistic Scene Synthesis for Deep Learning in Robotics. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 10502–10508, ISSN: 2577-087X.

- Piga, N.A.; Bottarel, F.; Fantacci, C.; Vezzani, G.; Pattacini, U.; Natale, L. MaskUKF: An Instance Segmentation Aided Unscented Kalman Filter for 6D Object Pose and Velocity Tracking. Front. Robot. AI 2021, 8, 594593.

- Bottarel, F.; Vezzani, G.; Pattacini, U.; Natale, L. GRASPA 1.0: GRASPA is a Robot Arm graSping Performance BenchmArk. IEEE Robot. Autom. Lett. 2020, 5, 836–843.

- Bottarel, F. Where’s My Mesh? An Exploratory Study on Model-Free Grasp Planning; University of Genova: Genova, Italy, 2021.

- Yamins, D.L.K.; Dicarlo, J.J. Using goal-driven deep learning models to understand sensory cortex. Nat. Neurosci. 2016, 19, 356–365.

- Breazeal, C. Socially intelligent robots: Research, development, and applications. In Proceedings of the 2001 IEEE International Conference on Systems, Man and Cybernetics. e-Systems and e-Man for Cybernetics in Cyberspace, Tucson, AZ, USA, 7–10 October 2001.

- Shaw-Garlock, G. Looking Forward to Sociable Robots. Int. J. Soc. Robot. 2009, 1, 249–260.

- Gokturk, S.B.; Yalcin, H.; Bamji, C. A Time-Of-Flight Depth Sensor—System Description, Issues and Solutions. In Proceedings of the 2004 Conference on Computer Vision and Pattern Recognition Workshop, Washington, DC, USA, 27 June–2 July 2004.

- Gibaldi, A.; Canessa, A.; Chessa, M.; Sabatini, S.P.; Solari, F. A neuromorphic control module for real-time vergence eye movements on the iCub robot head. In Proceedings of the 2011 11th IEEE-RAS International Conference on Humanoid Robots, Bled, Slovenia, 26–28 October 2011.

- Xiaolin, Z. A Novel Methodology for High Accuracy Fixational Eye Movements Detection. In Proceedings of the 4th International Conference on Bioinformatics and Biomedical Technology, Singapore, 26–28 February 2012.

- Song, Y.; Xiaolin, Z. An Integrated System for Basic Eye Movements. J. Inst. Image Inf. Telev. Eng. 2012, 66, J453–J460.

- Dorrington, A.A.; Kelly, C.D.B.; McClure, S.H.; Payne, A.D.; Cree, M.J. Advantages of 3D time-of-flight range imaging cameras in machine vision applications. In Proceedings of the 16th Electronics New Zealand Conference (ENZCon), Dunedin, New Zealand, 18–20 November 2009.

- Jain, A.K.; Dorai, C. Practicing vision: Integration, evaluation and applications. Pattern Recogn. 1997, 30, 183–196.

- Ma, Z.; Ling, H.; Song, Y.; Hospedales, T.; Jia, W.; Peng, Y.; Han, A. IEEE Access Special Section Editorial: Recent Advantages of Computer Vision. IEEE Access 2018, 6, 31481–31485.

- Rebecq, H.; Ranftl, R.; Koltun, V.; Scaramuzza, D. Events-to-Video: Bringing Modern Computer Vision to Event Cameras. arXiv 2019, arXiv:1904.08298.

- Silva, A.E.; Chubb, C. The 3-dimensional, 4-channel model of human visual sensitivity to grayscale scrambles. Vis. Res. 2014, 101, 94–107.

- Potter, M.C.; Wyble, B.; Hagmann, C.E.; Mccourt, E.S. Detecting meaning in RSVP at 13 ms per picture. Atten. Percept. Psychophys. 2013, 76, 270–279.

- Al-Rahayfeh, A.; Faezipour, M. Enhanced frame rate for real-time eye tracking using circular hough transform. In Proceedings of the 2013 IEEE Long Island Systems, Applications and Technology Conference (LISAT), Farmingdale, NY, USA, 3 May 2013.

- Wilder, K. Photography and Science; Reaktion Books: London, UK, 2009.

- Smith, G.E. The invention and early history of the CCD. Nucl. Instrum. Methods Phys. Res. Sect. A Accel. Spectrometers Detect. Assoc. Equip. 2009, 607, 1–6.

- Marcandali, S.; Marar, J.F.; de Oliveira Silva, E. Através da Imagem: A Evolução da Fotografia e a Democratização Profissional com a Ascensão Tecnológica. In Perspectivas Imagéticas; Ria Editorial: Aveiro, Portugal, 2019.

- Kucera, T.E.; Barrett, R.H. A History of Camera Trapping. In Camera Traps in Animal Ecology; Springer: Tokyo, Japan, 2011.

- Boyle, W.S. CCD—An extension of man’s view. Rev. Mod. Phys. 2010, 85, 2305.

- Sabel, A.B.; Flammer, J.; Merabet, L.B. Residual Vision Activation and the Brain-eye-vascular Triad: Dysregulation, Plasticity and Restoration in Low Vision and Blindness—A Review. Restor. Neurol. Neurosci. 2018, 36, 767–791.

- Rosenfeld, J.V.; Wong, Y.T.; Yan, E.; Szlawski, J.; Mohan, A.; Clark, J.C.; Rosa, M.; Lowery, A. Tissue response to a chronically implantable wireless intracortical visual prosthesis (Gennaris array). J. Neural Eng. 2020, 17, 46001.

- Gu, L.; Poddar, S.; Lin, Y.; Long, Z.; Zhang, D.; Zhang, Q.; Shu, L.; Qiu, X.; Kam, M.; Javey, A.; et al. A biomimetic eye with a hemispherical perovskite nanowire array retina. Nature 2020, 581, 278–282.

- Gu, L.; Poddar, S.; Lin, Y.; Long, Z.; Zhang, D.; Zhang, Q.; Shu, L.; Qiu, X.; Kam, M.; Fan, Z. Bionic Eye with Perovskite Nanowire Array Retina. In Proceedings of the 2021 5th IEEE Electron Devices Technology & Manufacturing Conference (EDTM), Chengdu, China, 8–11 April 2021.

- Cannata, G.; D’Andrea, M.; Maggiali, M. Design of a Humanoid Robot Eye: Models and Experiments. In Proceedings of the 2006 6th IEEE-RAS International Conference on Humanoid Robots, Genova, Italy, 4–6 December 2006.

- Cannata, G.; Maggiali, M. Models for the Design of a Tendon Driven Robot Eye. In Proceedings of the IEEE International Conference on Robotics and Automation, Rome, Italy, 10–14 April 2007; pp. 10–14.

- Hirai, K. Current and future perspective of Honda humanoid robot. In Proceedings of the 1997 IEEE/RSJ International Conference on Intelligent Robot and Systems (IROS '97), Grenoble, France, 11 September 1997.

- Goswami, A.V.P. ASIMO and Humanoid Robot Research at Honda; Springer: Berlin/Heidelberg, Germany, 2007.

- Kajita, S.; Kaneko, K.; Kaneiro, F.; Harada, K.; Morisawa, M.; Nakaoka, S.I.; Miura, K.; Fujiwara, K.; Neo, E.S.; Hara, I.; et al. Cybernetic Human HRP-4C: A Humanoid Robot with Human-Like Proportions; Springer: Berlin, Heidelberg, 2011; pp. 301–314.

- Faraji, S.; Pouya, S.; Atkeson, C.G.; Ijspeert, A.J. Versatile and robust 3D walking with a simulated humanoid robot (Atlas): A model predictive control approach. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, 31 May–7 June 2014.

- Kaehler, A.; Bradski, G. Learning OpenCV 3: Computer Vision in C++ with the OpenCV Library; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2016.

- Karve, P.; Thorat, S.; Mistary, P.; Belote, O. Conversational Image Captioning Using LSTM and YOLO for Visually Impaired. In Proceedings of 3rd International Conference on Communication, Computing and Electronics Systems; Springer: Berlin/Heidelberg, Germany, 2022.

- Kornblith, S.; Shlens, J.; Le, Q.V. Do better ImageNet models transfer better? In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019.

- Jacobstein, N. NASA’s Perseverance: Robot laboratory on Mars. Sci. Robot. 2021, 6, eabh3167.

- Zou, Y.; Zhu, Y.; Bai, Y.; Wang, L.; Jia, Y.; Shen, W.; Fan, Y.; Liu, Y.; Wang, C.; Zhang, A.; et al. Scientific objectives and payloads of Tianwen-1, China’s first Mars exploration mission. Adv. Space Res. 2021, 67, 812–823.

- Jung, B.; Sukhatme, G.S. Real-time motion tracking from a mobile robot. Int. J. Soc. Robot. 2010, 2, 63–78.

- Brown, J.; Hughes, C.; DeBrunner, L. Real-time hardware design for improving laser detection and ranging accuracy. In Proceedings of the Conference Record of the Forty Sixth Asilomar Conference on Signals, Systems and Computers (ASILOMAR), Pacific Grove, CA, USA, 4–7 November 2012; pp. 1115–1119.

- Min Seok Oh, H.J.K.T. Development and analysis of a photon-counting three-dimensional imaging laser detection and ranging (LADAR) system. Opt. Soc. Am. 2011, 28, 759–765.

- Rezaei, M.; Yazdani, M.; Jafari, M.; Saadati, M. Gender differences in the use of ADAS technologies: A systematic review. Transp. Res. Part F Traffic Psychol. Behav. 2021, 78, 1–15.

- Maybank, S.J.; Faugeras, O.D. A theory of self-calibration of a moving camera. Int. J. Comput. Vis. 1992, 8, 123–151.

- Murphy-Chutorian, E.; Trivedi, M.M. Head Pose Estimation in Computer Vision: A Survey. IEEE Trans. Pattern Anal. 2009, 31, 607–626.

- Jarvis, R.A. A Perspective on Range Finding Techniques for Computer Vision. IEEE Trans. Pattern Anal. 1983, PAMI-5, 122–139.

- Grosso, E. On Perceptual Advantages of Eye-Head Active Control; Springer: Berlin, Heidelberg, 2005; pp. 123–128.

- Binh Do, P.N.; Chi Nguyen, Q. A Review of Stereo-Photogrammetry Method for 3-D Reconstruction in Computer Vision. In Proceedings of the 19th International Symposium on Communications and Information Technologies (ISCIT), Ho Chi Minh City, Vietnam, 25–27 September 2019.

- Mattoccia, S. Stereo Vision Algorithms Suited to Constrained FPGA Cameras; Springer International Publishing: Cham, Switzerland, 2014; pp. 109–134.

- Mattoccia, S. Stereo Vision Algorithms for FPGAs. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Portland, OR, USA, 23–28 June 2013.

- Park, J.; Kim, H.; Tai, Y.W.; Brown, M.S.; Kweon, I. High quality depth map upsampling for 3D-TOF cameras. Int. Conf. Comput. Vis. 2011, 1623–1630.

- Foix, S.; Alenya, G.; Torras, C. Lock-in Time-of-Flight (ToF) Cameras: A Survey. IEEE Sensors J. 2011, 11, 1917–1926.

- Li, J.; Yu, L.; Wang, J.; Yan, M. Obstacle information detection based on fusion of 3D LADAR and camera; Technical Committee on Control Theory, CAA. In Proceedings of the 2017 36th Chinese Control Conference (CCC), Dalian, China, 26–28 July 2017.

- Gill, T.; Keller, J.M.; Anderson, D.T.; Luke, R.H. A system for change detection and human recognition in voxel space using the Microsoft Kinect sensor. In Proceedings of the 2011 IEEE Applied Imagery Pattern Recognition Workshop (AIPR), Washington, DC, USA, 11–13 October 2011.

- Atmeh, G.M.; Ranatunga, I.; Popa, D.O.; Subbarao, K.; Lewis, F.; Rowe, P. Implementation of an Adaptive, Model Free, Learning Controller on the Atlas Robot; American Automatic Control Council: New York, NY, USA, 2014.