This entry covers a cloud-based intelligent power management system that uses analytics as a control signal, processes a balance achievement pointer, and describes operator acknowledgments that must be shared quickly, accurately, and safely. Current studies aim to introduce a conceptual and systematic structure with three main components: demand power (direct current (DC) devices), the power mix between renewable energy (RE) and other power sources, and a cloud-based intelligent power optimization system.

- power management

- big data

- battery aging

- cloud computing

- renewable energy

1. Introduction

In the last decade of industrial progress, the world economy has shifted from cheap energy to expensive fuel consumption. However, industrialization necessitates an increasing amount of energy, which is a condition for humanity’s economic prosperity and sustainability [1]. Awareness of the constraints imposed by the exhaustion of traditional energy resources is essential, as is awareness of the restricted energy supply from RE sources. These two factors influence not only energy and economic development but also the environment. A power grid is a cyber-physical system in which electrical components are controlled by a computer and connected to a network of other computer-controlled physical equipment [2]. The power grid includes the movement of electricity and information between the grid and control centers [3]. Safe and reliable grid operation requires controlling the energy flow so that supply and demand are well balanced in real time [4]. Information must also flow as intended, because any disruption in information flow affects the correct conduct of energy flow and the system’s safe and dependable functioning [5,6]. In traditional power networks, supply and demand are generally balanced by adjusting the output of centralized generating units [5]. When consumption rises, production must increase to keep up. Similarly, as demand falls, production must be reduced [7].

The power system has witnessed significant modifications in recent years due to the rapid growth of distributed generation (DG). DG units, unlike centralized generators, are mostly weather-dependent and hence have limited controllability to meet demand. Because of their various sizes and the network tiers to which they are attached, they also add unpredictability to the entire operation [8]. Recent environmental worries about growing carbon dioxide emissions (CDE), expanding energy needs, and the liberalization of the electrical industry have drawn the world’s attention to renewable energy technology [9]. Although the integration of intermittent RE generation into electrical power systems is still relatively new in the evolution of electrical systems, it is popular all over the world due to its technical advantages, such as improved voltage profile, power quality (PQ), voltage stability, reliability, and grid support [8]. According to the modern grid initiative study from the United States Department of Energy (USDOE), a modern smart grid (SG) must be capable of self-healing and of distributing high-quality power in order to avoid wasting money due to outages [9].

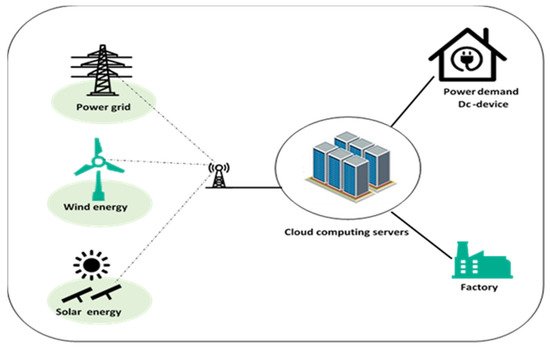

Societies on a global scale have reached a tipping point from fossil fuel power generation to sustainable alternatives. Wireless connectivity plays a critical role in this transformation by enabling innovative smart energy systems (SESs) [9]. An SES is a novel solution that integrates energy generation and storage technologies with ‘intelligent’ applications that regulate and optimize their usage. Cloud computing can be used to manage multiple energy sources combined with storage systems [10]. Furthermore, improving SESs requires real-time performance decisions based on technical features and climatic data, surplus renewable power generation, and decentralized energy systems with excellent efficiency and lower cost [11]. In addition, to reduce rising environmental hazards such as increasing global mean temperature and greenhouse gas emissions, energy systems are experiencing a rapid transition toward low-carbon intelligent systems [12]. Unlike traditional energy systems, which dispatch various generators to meet changing demand, future energy systems include two-way energy flows between providers and consumers and the active engagement of customers as prosumers in various electrical markets [13]. In one study, under a suggested micro-market, loads that were not completely controllable were rescheduled by changing specific lectures and research timelines, with optimization by a self-crossover genetic algorithm (GA) [14]. The numerical findings revealed that the suggested micro-market and algorithm efficiently increased load flexibility and resulted in increased cost savings for intelligent energy systems [15,16], as shown in Figure 1.

2. Smart Grids System

An electrical device transforms electricity (the proper voltage, current, and frequency) from a source to an electrical load [63]. Researchers have described the relationship between power and energy, and their management techniques, as seen in Equations (4) and (5). Both power and energy are defined in terms of the work that a system accomplishes, and it is critical to understand the distinction between them. A reduction in power consumption does not always imply a reduction in the amount of energy used. For example, reducing central processing unit (CPU) performance by lowering voltage and frequency reduces power consumption, but the program may then take longer to execute. The amount of energy consumed may therefore not be reduced even though power usage is lower [64]. As explained in the next parts, energy consumption may be decreased by implementing static power management (SPM), dynamic power management (DPM), or a combination of the two solutions and services [65,66]. Furthermore, electricity consumption may be divided into three categories:

First: The energy consumed by parts of the system due to leakage current is the static component, which SPM addresses. It is unaffected by clock rates and does not depend on usage scenarios; it is dictated by the device type and the architecture used in the service’s CPU [67].
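The distinction between power and energy described above can be illustrated with a short calculation. The following is a minimal sketch, assuming the simple relation energy = average power × runtime and a split of power into a static part (unaffected by clock rate) and a dynamic part. The function name `task_energy_joules` and all wattage and runtime figures are hypothetical and chosen only for illustration.

```python
def task_energy_joules(static_w: float, dynamic_w: float, runtime_s: float) -> float:
    """Energy used by one task: (static + dynamic power) multiplied by runtime."""
    return (static_w + dynamic_w) * runtime_s


# Hypothetical CPU at full speed: 5 W static + 15 W dynamic, task finishes in 10 s.
full_speed_power = 5.0 + 15.0
full_speed_energy = task_energy_joules(5.0, 15.0, 10.0)

# Hypothetical CPU with lowered voltage and frequency: dynamic power drops to 7 W,
# static power is unchanged, and the same task now needs 18 s.
scaled_power = 5.0 + 7.0
scaled_energy = task_energy_joules(5.0, 7.0, 18.0)

print(f"full speed: {full_speed_power} W, {full_speed_energy} J")
print(f"scaled:     {scaled_power} W, {scaled_energy} J")
```

With these assumed figures, power falls from 20 W to 12 W, yet the energy for the task rises from 200 J to 216 J, because the static component keeps drawing power for the longer runtime.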

Distributed energy resources (DERs) are energy generating and storage systems that supply power where it is required. DER systems, which produce less than 10 megawatts (MW) of power, can generally be scaled to fit specific needs and can be installed on-site. A single source is limited and can be costly, whereas efficient energy storage requires a combination of technologies. Power conversion systems for storage purposes must also be considered [120]. This is required to increase their control and dependability, as well as to ensure that storage systems are properly integrated into power networks [121]. A next-generation SG without energy storage is similar to a computer without a hard drive: severely limited [122]. A suitable energy management system (EMS) is required to obtain the optimum performance for clusters of DERs. The multi-agent systems (MASs) paradigm may be used to organize distributed control methods [123]. Some of the benefits of employing MASs for successful, intelligent grid operation in the energy market are discussed in [124,125]. The MAS application reduces the overall cost of power system production in integrated microgrids comprising dispersed resources and lumped loads [126]. To maximize the hybrid RE production system’s economic performance and energy quality, a hybrid immune-system-based particle swarm optimization (PSO) was presented and applied to reduce fuel cost in the generating process [126].

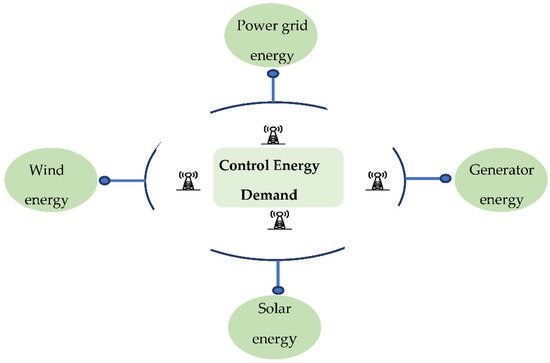

Conversely, when the distribution system operator (DSO) can dispatch at least a portion of the DERs, a coordinated integration of the various DERs calls for a centralized method. In centralized control methods, the best operating strategy of the DER system is generally analyzed using a multi-objective linear programming methodology [127]. Combining energy costs with the reduction of environmental effects suggests reducing operational costs, including energy losses, curtailed energy, reactive support, and shed energy [128,129]. A two-stage short-term scheduling process is also implemented. The first stage is a day-ahead scheduler that optimizes DER production for the next day. The second stage is an intra-day scheduler that modifies the schedule every 15 min and considers the distribution network’s operational needs and restrictions, as shown in Figure 2 [130].
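The two-stage scheduling idea can be sketched in a few lines. This is a minimal illustration, not the method of [127] or [130]: it substitutes a cheapest-first (merit-order) dispatch for the multi-objective linear program, ignores network constraints, and treats every unit capacity as fixed. The `Unit` class, the unit names, costs, capacities, and demand figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    name: str
    capacity_kw: float
    cost_per_kwh: float


def dispatch(units, demand_kw):
    """Cheapest-first dispatch. Returns ({unit name: kW}, shed kW)."""
    remaining = demand_kw
    plan = {}
    for unit in sorted(units, key=lambda u: u.cost_per_kwh):
        power = min(unit.capacity_kw, max(remaining, 0.0))
        plan[unit.name] = power
        remaining -= power
    return plan, max(remaining, 0.0)


def day_ahead(units, hourly_forecast_kw):
    """Stage 1: one dispatch per forecast hour of the next day."""
    return [dispatch(units, demand) for demand in hourly_forecast_kw]


def intra_day(units, day_ahead_plan, quarter_hour_demand_kw):
    """Stage 2: re-dispatch every 15 min and report the change per unit."""
    results = []
    for slot, demand in enumerate(quarter_hour_demand_kw):
        baseline, _ = day_ahead_plan[slot // 4]  # four 15 min slots per hour
        plan, shed = dispatch(units, demand)
        delta = {name: plan[name] - baseline[name] for name in plan}
        results.append((plan, delta, shed))
    return results


# Hypothetical DER cluster and demand figures.
units = [
    Unit("pv", 30.0, 0.00),
    Unit("battery", 20.0, 0.05),
    Unit("diesel", 50.0, 0.30),
]
plan_da = day_ahead(units, [40.0, 60.0])
plan_id = intra_day(units, plan_da, [40.0, 45.0, 38.0, 40.0, 60.0, 70.0, 110.0, 55.0])

for slot, (plan, delta, shed) in enumerate(plan_id):
    print(slot, plan, delta, f"shed={shed}")
```

In this sketch the intra-day stage simply recomputes the dispatch for the updated demand; a fuller treatment would limit how far each unit may move from its day-ahead set point and would include network limits.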

3. Cloud Computing

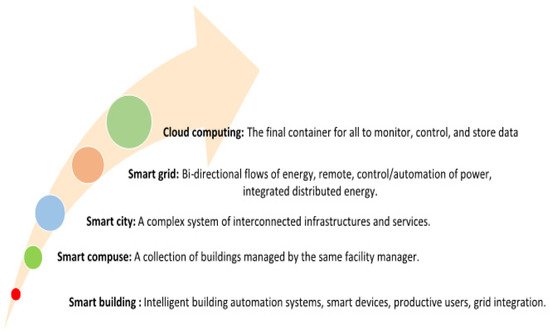

Cloud computing is a useful computing paradigm that provides on-demand access to facilities and shared resources over the Internet [163]. Infrastructure as a service (IaaS), platform as a service (PaaS), and software as a service (SaaS) are three notable services it offers, while storage, virtualization, computing, and networking are supported [164,165]. Implementing cloud computing applications is a top priority, especially in today’s environment, for things such as providing appropriate financing for social services and purchasing programs. Grids are geographically distributed platforms for computation. They provide high computational power and merge extremely heterogeneous physical resources into a single virtual resource [166,167]. Grid computing relies on a set of resources; the primary resource is the central processing unit (CPU), which is mainly used to perform massive and complicated calculations. Cloud computing technology is used by the majority of existing information technology (IT)-based enterprises. It is a rapidly evolving technology, and companies are constantly adding new services to their cloud environments to stay competitive and fulfill customers’ expanding demands [168]. Furthermore, many organizations are moving their IT-based systems to cloud-based models [169]. Customers can use cloud computing resources in the form of virtual machines (VMs) that are deployed and run in data centers. The data centers are composed of several physical servers, each with its own set of resources [114]. The cloud computing ecosystem for energy management is described in Figure 3.

The IaaS model of cloud computing provides consumers with storage services. People have begun to save their data on clouds due to the large storage capacity [170,171]. Through virtualization, the issues around storing the user data of IoT applications can be solved by providing storage, processing, and networking resources [172]. In mission development, two measures, CPU usage and storage capacity, are the most typical capabilities of the cloud for reducing local storage overheads [173]. Through task scheduling, attention to these parameters may reduce computation cost, communication, CPU usage, and battery consumption, and may eliminate data redundancy in storage and computing. Research on storage techniques has gained momentum due to the significant advantages of quick storage services in the cloud. Still, these techniques face specific challenges because there is a higher demand for quick access and secure storage. Cisco predicted that, by 2021, cloud computing systems would account for around 94 percent of all computing. Furthermore, by 2025, the size of data created and altered is expected to reach 175 zettabytes, according to International Data Corporation (IDC) [174]. These trends require cloud suppliers to establish and simplify additional services [114,169].

Cloud computing using virtualization technology offers end-users a range of services, including computational resources, on-demand resources, flexibility, dependability, dynamism, scalability, and better availability anywhere and at any time [175]. Elasticity, one of the key characteristics of cloud computing, refers to the system’s capacity to respond to changes in workload [176]. Cloud services are now employed in most applications via the internet, which has become the contemporary economy’s backbone. As a result, resource scheduling has become a hot topic in the cloud because ineffective scheduling techniques can lead to a variety of issues, including long computation times, reduced profit, poorer throughput, higher cost, and inappropriate resource usage, all of which are examples of an uneven workload on resources (over-utilization or under-utilization) [177]. Resource usage in cloud computing is directly related to power consumption, particularly when resources are not used properly (over-utilization or under-utilization) because of high processing demand from end users and the expectation of no service delays from the cloud. Integrating energy-sensitive servers has become a popular topic in the cloud world [178]. Therefore, future research is required to address the challenges and meet end-user demand within a reasonable timeframe. Reducing power consumption by switching underused hosts to sleep or hibernation without violating service level agreements (SLAs), which are digital contracts between end users and cloud services, ensures quality of service while resources are ready. Several energy-conscious server integration methods have therefore been proposed in the last decade [179]. Either of the two scenarios is intended to achieve server consolidation. Most of the suggested scheduling methods must strive toward greater resource utilization and energy efficiency. However, most available algorithms are still in their infancy due to constraints [180]. Most algorithms focus on a single parameter (energy) and ignore other factors such as cost, reaction time, and elasticity during run time [181].
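The consolidation idea described above (pack workloads onto fewer hosts, then put the emptied hosts to sleep) can be sketched with a first-fit decreasing heuristic. This is a minimal sketch under stated assumptions, not one of the methods surveyed in [179]: a fixed utilization cap stands in for the SLA, every host has the same capacity, and load is a single number per VM. The function name `consolidate`, the VM names, and the load figures are hypothetical.

```python
def consolidate(vm_loads, host_capacity, max_utilization=0.8):
    """Pack VM loads onto as few hosts as possible (first-fit decreasing).

    The utilization cap is a crude stand-in for an SLA: no host is filled
    beyond max_utilization of its capacity. Returns a list of hosts, each
    given as a list of VM names.
    """
    limit = host_capacity * max_utilization
    hosts = []  # each entry: {"used": load placed so far, "vms": [names]}
    by_load = sorted(vm_loads.items(), key=lambda item: item[1], reverse=True)
    for name, load in by_load:
        if load > limit:
            raise ValueError(f"{name} does not fit on any host under the cap")
        for host in hosts:
            if host["used"] + load <= limit:
                host["used"] += load
                host["vms"].append(name)
                break
        else:
            # No active host had room, so one more host must stay awake.
            hosts.append({"used": load, "vms": [name]})
    return [host["vms"] for host in hosts]


# Hypothetical starting point: five hosts, each running one lightly loaded VM.
vm_loads = {"vm1": 30, "vm2": 20, "vm3": 25, "vm4": 10, "vm5": 15}
total_hosts = 5

active = consolidate(vm_loads, host_capacity=100)
sleeping = total_hosts - len(active)
print(active, f"hosts that can sleep: {sleeping}")
```

Because this heuristic optimizes only the number of active hosts, it shares the limitation noted above: it ignores cost, reaction time, and run-time elasticity.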

Locating a data center near local or green power sources, where the electricity is generated, is considered an excellent way to conserve energy because it reduces transmission losses [182]. Shutdown, hibernation, and entering various low-power states are examples of cloud computing approaches. At the same time, cloud computing energy consumption should be managed to optimize the energy consumed for a specific computing task. In terms of energy usage per unit of work, cloud computing is a more energy-efficient option [183]. According to studies, employing the cloud might result in a 38 percent reduction in global data center energy expenditures by 2020 and a 31 percent reduction in data center power usage (from 201.8 terawatt-hours (TWh) in 2010 to 139.8 TWh in 2020). According to another report [183], cloud computing might help businesses save billions of dollars on their energy expenses. This equates to a reduction in carbon emissions of millions of metric tons each year [184].

4. The Framework of the Charge Controller System

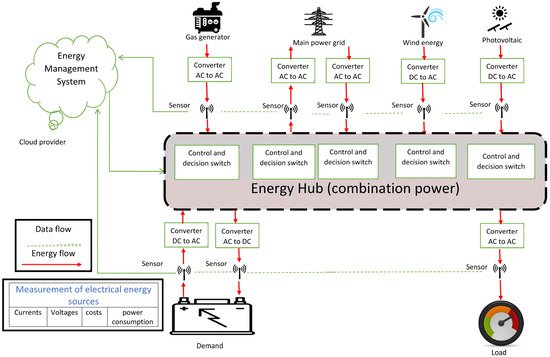

Overall, following the review presented in this paper, the proposed framework contains an EMS hosted on a cloud computing service. This system serves three goals. The first is to monitor and combine different energy sources in order to obtain the best optimized system. The second is to control the switches in the energy hub, and the third is to manage the charging and discharging process. The system is expected to yield several benefits: reduce the carbon footprint by including renewable energy sources (RESs) such as photovoltaic solar plants and wind turbines (WTs); enhance the demand power by monitoring and controlling the power balance at the same time; and introduce an intelligent system and cloud computing to the power management field, making the system manageable.
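To make the three goals concrete, the following minimal sketch shows one control interval of a rule-based charge controller. It is not the optimization algorithm of the proposed framework, which the source leaves to future work; it only illustrates how monitoring the power balance could drive the battery and grid decisions. The function name `control_step`, the state-of-charge thresholds, and the battery power limit are hypothetical.

```python
def control_step(re_kw, demand_kw, soc, soc_min=0.2, soc_max=0.9, battery_kw=5.0):
    """Decide battery and grid action for one interval.

    re_kw: renewable power available, demand_kw: load, soc: battery state of
    charge in [0, 1]. In the result, positive battery_kw means charging and
    negative means discharging.
    """
    surplus = re_kw - demand_kw
    if surplus >= 0:
        if soc < soc_max:
            charge = min(surplus, battery_kw)
            return {"mode": "charge", "battery_kw": charge,
                    "grid_kw": 0.0, "curtailed_kw": surplus - charge}
        # Battery is full: nothing to store, so the surplus is curtailed.
        return {"mode": "idle", "battery_kw": 0.0,
                "grid_kw": 0.0, "curtailed_kw": surplus}
    deficit = -surplus
    if soc > soc_min:
        discharge = min(deficit, battery_kw)
        return {"mode": "discharge", "battery_kw": -discharge,
                "grid_kw": deficit - discharge, "curtailed_kw": 0.0}
    # Battery is at its lower limit: the grid covers the whole deficit.
    return {"mode": "grid", "battery_kw": 0.0,
            "grid_kw": deficit, "curtailed_kw": 0.0}


sunny = control_step(re_kw=8.0, demand_kw=5.0, soc=0.5)
evening = control_step(re_kw=2.0, demand_kw=9.0, soc=0.5)
depleted = control_step(re_kw=2.0, demand_kw=9.0, soc=0.1)
print(sunny, evening, depleted, sep="\n")
```

A cloud-hosted EMS would replace these fixed rules with an optimizer fed by forecasts and measured parameters, but the inputs and outputs of each step would look similar.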

It is difficult to implement an actual optimizing charge controller for an intelligent power system via cloud computing, as it is based on numerous nonlinear parameters and is subject to many real constraints and limitations. Furthermore, because many real characteristics are stochastic, handling a power system as a plant (dynamic system) is problematic. Therefore, there are two suggestions: the first is to design the optimization algorithm for the charge controller based on real parameters; the second is to implement the proposed algorithm as a practical system that offers optimal solutions for all protection requirements. This study therefore focuses on presenting a final chart of the model that considers three aspects: power demand management, RE, and cloud computing, which will be the main contribution of the future study, as shown in Figure 4.

This entry is adapted from the peer-reviewed paper 10.3390/app11219820