A number of robot-assisted MIS systems have been developed to product level and are now well-established clinical tools, Intuitive Surgical’s very successful da Vinci Surgical System being a prime example. The majority of these surgical systems are based on the traditional rigid-component robot design that was instrumental in the third industrial revolution, especially within the manufacturing sector. However, the use of this approach for surgical procedures on or around soft tissue has come under increasing criticism. The dangers of operating with a robot made from rigid components both near and within a patient are considerable.

- soft robotics

- soft pose sensing

- sensor integration

- robot-assisted minimally invasive surgery

- EU project STIFF-FLOP

1. Introduction

The use of robotics in minimally invasive surgery has advanced considerably over the last twenty years. The da Vinci surgical system, in particular, is now well established in clinical practice and has been used widely in surgical procedures, although for reasons discussed later, it is predominantly in relation to prostatectomy that it remains the ‘go to’ tool.

Although the da Vinci system has, for some time, dominated the market, extensive research continues in this area. Much of it is focused on rigid-component robots (as per the da Vinci system) on the basis that robotics principles successfully adopted for automating the manufacturing industry are equally applicable to surgery. This has proven not to be the case, and while rigid robot applications in industry continue to grow exponentially, they have stagnated somewhat in surgery. The principal reason for this is the problem of mitigating the risk of injury, both to patients and to clinical staff. While manipulators can be kept away from humans in a factory setting, this is clearly not the case within an operating theatre, and therefore complex control algorithms are required to ensure that any forces applied to the patient or their anatomy are kept below tolerable thresholds.

While successful in many cases, sheer cost, lack of dexterity, and safety concerns limit the da Vinci system’s widespread use. Above all, it is these last two points that restrict the application of rigid-component robots in surgical endoscopy for many pathologies [1].

As a rule, current robot devices use straight-line tools that are conjoined at a single base. This allows the device to handle complex operations, provided that the operating area is highly localised – the prostate being an example. When the operating area spreads over larger areas of the patient's body, current robotic devices are presented with a significant challenge. Certain colorectal and other abdominal based procedures are examples that lie beyond the capability of the da Vinci and similar systems. It is principally the robot’s lack of dexterity that limits its application, along with the risk factor of using a rigid metal tool in and around delicate human organs and other anatomy. Indeed, injuries and bruises have been reported due to excessive force applied by robot-assisted minimally invasive surgery systems to soft tissue [2]. These injuries often go undetected because no haptic feedback is provided to the surgeon when using these systems.

There is also a further risk that software malfunction can affect the behaviour of the robot, with potentially disastrous repercussions. Any small ‘unwanted’ movement on the part of the robot can cause significant injury to the patient. Although clinical data is only available for the da Vinci system, it is likely that market competitors, such as the Senhance™ Surgical System (Morrisville, NC, USA) and Versius™ (Cambridge Medical Robotics, Cambridge, UK), are affected by the same limitations given the similarities of their hardware architecture.

The various shortcomings of rigid MIS robots prompted researchers to explore alternative approaches. The first large-scale project looking into soft robotics in MIS was the EU project STIFF-FLOP (2012 to 2015). The project's ambition was to radically part from traditional approaches and pursue the idea of creating robotic structures out of soft materials in a bid to overcome the shortcomings presented by rigid devices.

Although soft robots prove superior in terms of safety and dexterity, they present their own challenges within an MIS setting. Above all else, pneumatically actuated robot structures made from soft materials are notoriously difficult to model and control. The very factors that make them useful (high deformability and low stiffness of the materials used, typically silicone), along with the high compressibility of the actuation medium, make them potentially far more articulate but, as a consequence, considerably more complex to control, and make accurate positioning of the instrument tip harder to achieve.

It is important to acknowledge that visualisation during MIS is usually limited to the target organ and the end effectors of the surgical instrument; indeed, the entire monitor will often be focussed on a viewing field of just a few square centimetres inside the abdominal cavity. In such circumstances, the surgeon is solely concentrating on the procedure at hand, e.g., a delicate dissection, at the tip of the instrument. In most surgical procedures, the instrument shaft is not visualised at all, and thus, not observed. With rigid devices, an instrument’s location and movement are usually predictable, effectively following the surgeon's movements. This, however, is not the case in soft robotics. This fact, together with limited visualisation, mandates a complete understanding of the instrument’s location and pose within its environment. This can be achieved by embedding soft sensors within the robot’s body, the feedback from which determines the robot’s pose and shape.

In traditional articulated instruments made from rigid components, the end effector can be manipulated using one pivotal hinge (at the trocar port through which the shaft is inserted into the patient’s abdominal cavity), offering limited mobility. In soft robotic manipulators, the arm can deform along its entire length and, because its softness makes contact safe, can also leverage contact with body parts to achieve greater dexterity. Advanced control software is therefore required to ensure that the desired end-effector position and angle are achieved in the most efficient way and with minimal body displacement.

In robotic surgery, the correct placement of trocars on the abdominal wall is of utmost importance. Choosing the correct location for the insertion of rigid instruments into the abdominal cavity and creating the fulcrum point is crucial in that it enables the surgeon to perform the various tasks that are demanded by the procedure. This pivot point on the abdominal wall is not as critical in soft robotics as instruments can bend, and their manoeuvrability is therefore less dependent upon the specific location of the fulcrum point.

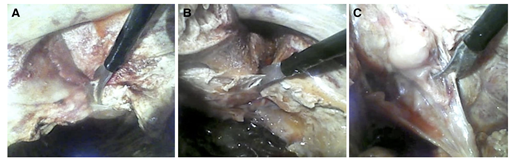

Figure 1: Mesorectal excision restricted by rigid straight-line laparoscopic tool. Lateral completion on the right side (A), the left side (B) and the anterior side (C) [3].

With far more freedom in relation to trocar placement, cosmetic factors can be brought into the equation, with trocar port placements kept to areas that are not usually exposed. That said, by increasing the distance from trocar to target, and consequently the length of the route that the soft robot has to take, we also increase the complexity of pose perception and control.

Speed of robotic action is also a consideration. Rigid instruments (especially those that are manually operated) work at the speed of a surgeon’s hands, which is vital to ensure that the intuitiveness of a surgeon’s actions is not compromised. Any response delay between surgeon hand movement and soft robot end effector movement therefore needs to be mitigated – a challenge given the complex kinematics of soft robot arms and the requisite computational software.

Soft robots, because of their compliance and infinite degrees of freedom, have low positional accuracy. This is clearly a major handicap in surgical work where precision is absolutely key. To achieve the required precision, we need to enhance the robot’s pose (position and orientation) accuracy by embedding appropriate soft shape sensors into the body of the soft robot arm. These sensors, combined with camera-based observation of the space, will allow us to obtain a good appreciation of the robot within its work environment. Unwanted contact with healthy organs can thus be detected and avoided. Additionally, soft tactile sensors could be integrated into the robot’s outer surface to help estimate contact point and interactional forces with the environment. That said, the need for “environment-interaction” sensors is less critical as the proposed soft devices compliantly adapt to their soft environments without exerting the excessive force that their rigid-bodied counterparts might. Although tactile perception has its merits, including the provision of haptic feedback to the surgeon, it is of lesser importance in the context of MIS and beyond the scope of this paper. The interested reader may refer to [4]–[7] for a more in-depth treatment of tactile perception in soft robotics.

It is noted that, even today, surgeons are generally more accustomed to performing MIS with standard surgical instruments. Despite the tremendous hype – and indeed research – in robotic solutions for MIS over the past twenty years or so, the technology has, in the main, failed to achieve more substantial outcomes than standard laparoscopy in the treatment of a range of diseases [8]. There have been many comparative studies between traditional laparoscopy and robotic surgery, from both patients’ and surgeons’ perspectives and the findings largely suggest that the much hoped for advantages of robotic technologies have not translated into tangible results [1], [9]–[11]. Although there has been recent progress, there is general consensus that the treatment of diseases requiring more complex procedures is not achievable with existing systems, to a large extent, because they are made of rigid parts [1].

2. Robotic Requirements in MIS

Although hand-held robots are commercially available, the standard instrument for MIS is a traditional hand-held laparoscopic device, characterised by its end effector, of which there are several types. The most commonly used ones are graspers, with curved jaws or fenestrated plates, needle holders, scissors, or, more recently, thanks to advances in laser technology, contact-less laser-based dissectors. Irrespective of the type of end effector, the standard instrument's tip has limited operating flexibility and often only possesses opening and closing options. Increased dexterity would constitute a major step forward, and to this end, we have distilled, from background research and clinicians’ perspectives, the key characteristics that such an instrument would require.

- Accurate positioning of the end effector tool at the target point (operating site). This is key to all precision surgery, not least of all extended lymphatic dissection, which increasingly appears to be improving overall survival of cancer patients [12]–[14].

- Increased dexterity. There is a clear need for instruments to have enhanced dexterity in order to reach further into a patient's abdomen, pelvic and other more obscure areas, while at the same time being able to circumvent organs and other "obstacles". It is therefore not simply the end effector that needs greater flexibility and manoeuvrability, but the entire robot arm itself [3], [15].

- Intuitive operation and control of the robot. When MIS was introduced into the clinical setting, surgeons, to an extent, had to reinvent themselves. They were now working without the direct 3D vision they had grown accustomed to, and without the ability to directly manipulate objects (tissue, organs, etc.) with their hands. Ever since then, efforts have been made to restore the intuitive nature of surgical activity, through more advanced tools and technologies. That intuitiveness would need to be retained as new soft robotic systems are developed.

- Feedback on the interaction forces for any point of contact between the robot and patient. The lack of force feedback remains one of the principal limitations of current devices, as there is little way to mitigate the risk of impact and friction between the device and tissue or organs around it [16]–[19]. It is noted that this is a particular issue when using rigid-component robots as they can exert excessive forces onto tissue. Employing soft robots for surgical procedures dramatically reduces this risk, and therefore the need for tactile sensing as these robots are compliant by nature.

- Visualisation of the entire surgical field. With visual sensors currently located at the end effector, the wider field is only seen on entry and on exit; for the duration of the procedure, visualisation is restricted to the target area. More generalised image feedback would allow us to better understand the impact of any and every part of the device upon its immediate environment. This extra set of eyes is likely to contribute to overall MIS safety, although, as already noted, being highly compliant and adaptable, soft robots are somewhat less dependent on this feature.

Having distilled the key requirements, the task now in hand is to develop solutions that address these issues. These solutions, based on the principles of soft and flexible robotics, will need to build upon the platform that already exists in rigid robotics, enhancing outcomes for MIS and meeting the demands of ever increasingly complex surgery. Alongside any advances in this field, the overarching objective of patient safety will remain forefront.

3. Soft Robot Design Overcoming Challenges in Sensor Integration

3.1. Considerations for the design of soft surgical robots integrated with sensory capabilities

Flexible and soft manipulators have promising applications in minimally invasive surgery as they allow surgical tools to reach targets that are inaccessible with conventional rigid surgical instruments. However, a principal challenge in the implementation of such devices is controlling their pose and it is with this in mind, that we discuss our approach to creating a soft robotic device for use in minimally invasive surgery (MIS). Our designs aim to address the requirements outlined in Section 2, a key feature being the robot’s capacity to have sensors embedded within it without impacting on the robot’s “softness”. Our soft robot manipulator is based on designs developed during the EU project STIFF-FLOP. It is made of silicone rubber and equipped with pneumatic actuation chambers to enable bending in any direction away from the longitudinal robot arm axis.

What makes a soft robot ‘soft’ is the selection of materials used in the production of its main body. (Rigid-bodied robots are sometimes called “soft” when in fact they would be better described as “compliant”. Compliance can be achieved through various compensatory mechanisms using smart sensors and compensatory algorithms. For the purposes of this paper, however, “soft” means physically soft.) Traditional robots are made of rigid materials, their stiff parts interacting with each other in a well-defined way, and within well-defined constraints, by way of mechanisms composed of rigid links and stiff joints. Most roboticists are well versed in determining the dynamics and kinematics of rigid robotic systems and are able to create rigid robots that are both precise and reliable. These are not, however, infallible: accidents still happen, and the stiffness of these devices’ components is often a factor [20]. Soft robots offer certain advantages, in particular in relation to safety and physical adaptability or deformability, a characteristic that clearly reduces their impact upon an external object during any collision [21]. This natural compliance also helps to compensate for any minor control inadequacies on the part of the robot [22], [23], see Figure 2.

Figure 2. A soft robotic hand with a simple binary controller is naturally able to adapt when handling delicate objects.

With a soft robotic device, motion can be distributed across its entire body. Because of the compliant properties of the material, an entirely new level of dexterity becomes achievable. It is of course not without its challenges. The lack of stiffness, for example, makes the kinematics and dynamics of the soft robot significantly more complex than that of its rigid counterpart, especially during the interaction with the environment [24]. Similarly, precise control of a soft robot’s movement and its positional tracking are also rendered more difficult. The reason for this is that while the rigid robot has a strictly limited number of degrees of freedom, its soft equivalent, thanks to its distributed deformation properties, has a virtually infinite number. The inverse kinematics required for calculation of input control parameters therefore become considerably more complex, and numerical solutions can be slow and difficult to compute.

One possible solution to these problems is to use simplified kinematic models, such as constant curvature or beam-based models [25] and to carefully track the state of the soft robot, collecting a constant stream of data with which to create a feedback loop. This however is dependent on integrating greater sensing capabilities into the robot. An immediate problem arises, as current sensors that have been created for use in rigid structures, cannot be transposed into soft structures. They are themselves rigid devices that are usually attached to the body of a rigid robot, collecting data on joint and associated link movements. Soft robots require soft, deformable sensors that measure bending rather than rotation, stretch rather than translation, tactile contact pressure distribution rather than force. As one of the ‘holy grails’ of soft robotics, much work has been directed into this area, but despite a degree of progress [26], [27], reliable and robust sensors are few and far between.
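To illustrate why the constant-curvature simplification mentioned above is attractive, the following minimal sketch computes the tip position of a single segment in closed form. It is an illustration under stated assumptions, not the model used in the STIFF-FLOP project: the function name `tip_position`, the frame convention (base at the origin, unbent segment along +z) and the parameter names are all hypothetical choices made for this example.

```python
import math


def tip_position(theta, beta, length):
    """Tip position (x, y, z) of one constant-curvature segment.

    theta  : bending angle in radians (0 means straight)
    beta   : bending direction in radians, measured about the z axis
    length : arc length of the segment's central axis
    Assumed frame: base at the origin, unbent segment along +z.
    """
    if abs(theta) < 1e-9:
        # Straight segment: the arc degenerates to a line along z.
        return (0.0, 0.0, length)
    radius = length / theta                    # radius of the circular arc
    reach = radius * (1.0 - math.cos(theta))   # tip offset from the z axis
    return (
        reach * math.cos(beta),
        reach * math.sin(beta),
        radius * math.sin(theta),
    )
```

Only three numbers (bending angle, bending direction and arc length) describe the whole segment here, which is what makes such models cheap enough to evaluate inside a feedback loop, at the cost of ignoring any deformation that is not a circular arc.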

3.1.1. Integrative Robot Design and Fabrication

Moving from rigid robot technologies to soft robotic solutions within the realm of MIS brings with it a host of challenges. This is because these technologies are fundamentally different, not just in terms of materials, but in the way they operate. Soft robots have fused parts rather than rigid components linked by discrete joints; motion is therefore achieved through deformation of the body material. As a consequence, the design and fabrication of soft robots is entirely different from that of their rigid relatives.

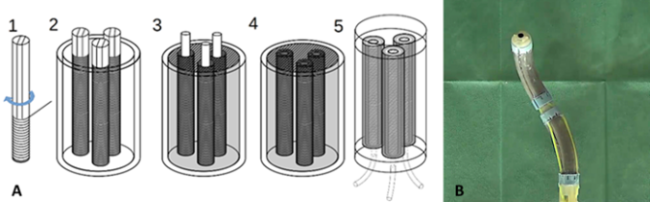

Figure 3. (A) Schematic showing the fabrication stages for a STIFF-FLOP arm segment made of fibre-reinforced pneumatic chambers in a cylindrical silicone structure [28], (B) STIFF-FLOP robot arm prototype comprising two segments and a camera integrated at the tip.

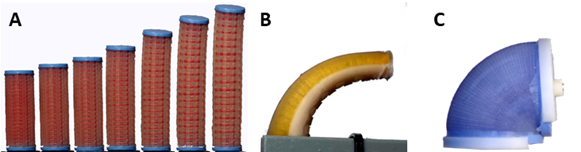

In the STIFF-FLOP project, the aim was to create a soft robot manipulator capable of complex motions including bending and elongation. Fluidic actuation was chosen as the ideal medium for an MIS tool [29], an incidental benefit being its compatibility with magnetic resonance imaging (MRI). The principle behind fluidic actuation is the transfer of a pressurised fluid into an actuation chamber that expands in response. As the pressure on the elastic material of the robot’s body increases, there is a corresponding amount of resultant distention in the body. By altering the geometry of the chamber and the robot’s body, and by using materials of varying degrees of stiffness and flexibility, one can produce an almost infinite array of poses. Research in this field shows that from just a handful of primary motions (including contraction, elongation, bending and twisting – see Figure 4) an almost infinite range of complex motions can be achieved [30]–[32].

Figure 4. Primary motions in soft robotics. (A) Soft linear actuator elongating due to increasing pressure being supplied [33], (B) Soft robot segment bending due to strain-limiting material being used on one side of the actuator [30], (C) Soft robot segment rotating due to its toroidal geometry [31].

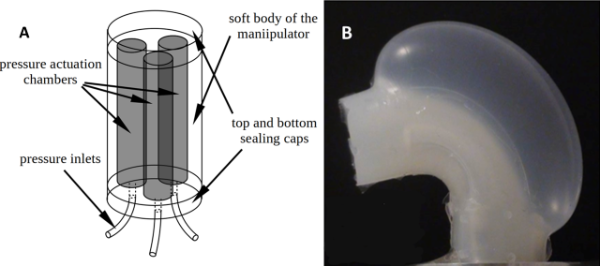

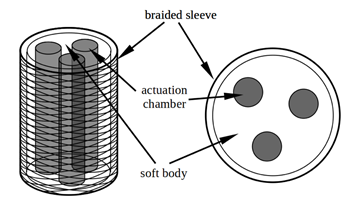

Although fluidic actuation has its merits, it also presents challenges. The STIFF-FLOP manipulator was designed as a modular device, each module providing three degrees of freedom (2D bending and elongation). Stacking two modules potentially allowed for 6 degrees of freedom, while increasing the area within which the device could operate. Three longitudinal pneumatic chambers were embedded into the cylindrical body of each module and arranged symmetrically around its main axis (Figure 5A). Pressurisation of one of the chambers while the other two remain ‘at rest’ caused the module to bend away from the pressurised chamber. Actuation of all three chambers resulted in elongation, and any combination of pressures resulted in a combined movement.
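The qualitative behaviour described above (bending away from a pressurised chamber, elongation when all three chambers are pressurised together) can be sketched as a simple vector sum. This is a purely illustrative, linear approximation and not the STIFF-FLOP actuation model: the chamber angles of 0°, 120° and 240°, the function name `actuation_tendency` and the unitless "drive" outputs are assumptions introduced for this example.

```python
import math

# Assumed angular positions of the three chambers around the module axis.
CHAMBER_ANGLES = (0.0, 2.0 * math.pi / 3.0, 4.0 * math.pi / 3.0)


def actuation_tendency(p1, p2, p3):
    """Return (bend_direction, bend_drive, elongation_drive).

    bend_direction   : angle in [0, 2*pi) towards which the module tends
                       to bend, or None when there is no net bending
    bend_drive       : magnitude of the pressure imbalance (same units
                       as the input pressures)
    elongation_drive : mean chamber pressure
    """
    pressures = (p1, p2, p3)
    # Pressure-weighted sum of the chamber position vectors.
    sx = sum(p * math.cos(a) for p, a in zip(pressures, CHAMBER_ANGLES))
    sy = sum(p * math.sin(a) for p, a in zip(pressures, CHAMBER_ANGLES))
    bend_drive = math.hypot(sx, sy)
    if bend_drive < 1e-9:
        bend_direction = None  # balanced pressures: pure elongation
    else:
        # The module bends away from the net pressurised side.
        bend_direction = math.atan2(-sy, -sx) % (2.0 * math.pi)
    elongation_drive = sum(pressures) / 3.0
    return bend_direction, bend_drive, elongation_drive
```

Under these assumptions, pressurising only the first chamber gives a bending direction of 180°, directly opposite that chamber, while equal pressures in all three chambers cancel the imbalance and leave only the elongation term.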

Figure 5. (A) Improved STIFF-FLOP arm design featuring three independent pneumatic actuators reinforced with fibres arranged around the central axis, embedded in a soft silicone body [28]; (B) Ballooning effect observed in a soft silicone structure upon inflating an embedded air chamber without fibre reinforcement [34].

The problem we experienced upon manufacture of the first prototypes was that although expansion produced the requisite elongation, it also led to ballooning or radial expansion (see Figure 5B). An initial solution was to counter that effect by reinforcing the device in such a way as to reduce radial expansion though allow the requisite elongation. An external braided sheath was therefore applied, the result of which was that ballooning was no longer apparent and bending motion was enhanced, see Figure 6.

Figure 6. Solution for the ballooning issue: schematic of a braided sleeve allowing for bending and elongation but constraining radial expansion [28].

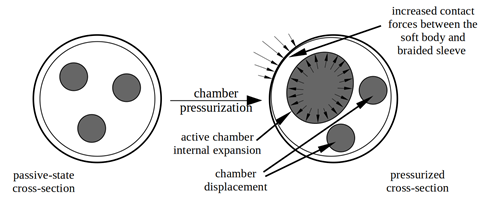

However, the integration of deformable shape sensors revealed that while the expansion from increased pressure was no longer externally observable in terms of radial expansion, it was nonetheless leading to other problematic consequences. These included nonlinear actuation, elevated sensor readings, very high hysteresis, and complex deformation that was difficult to measure, model or predict (Figure 7).

Nonlinearity of the actuation in the early prototype resulted mainly from two factors: chamber cross-section deformation and friction between the silicone body and braided sheath.

Figure 7. Deformation of module cross-section during actuation. Pressurization of any chamber causes it to expand within the braided sleeve that leads to significant deformation of the cross-section of the module. The deformation includes the expansion of the chamber itself, displacement of other chambers and increased pressure between the silicone body and the sleeve [28].

The pressure-induced force that stretches the chamber results from the interplay between the pressure and the area upon which it is acting. If the area expands along with the pressure, the force increases in a nonlinear fashion. As external reinforcement did not prevent the chambers from expanding internally, there was substantial growth of the internal cross-section area, resulting in nonlinear behaviour.
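The argument above can be made concrete with a small numerical sketch. The force is taken as pressure times cross-sectional area; the linear area-growth law and its `growth` coefficient are hypothetical, chosen only to show how a pressure-dependent area turns a linear force response into a nonlinear one, and are not measured values from the prototypes.

```python
def chamber_force(pressure, area0, growth=0.0):
    """Axial force produced by one chamber.

    pressure : chamber pressure (e.g. kPa)
    area0    : cross-sectional area at zero pressure (e.g. mm^2)
    growth   : assumed fractional area increase per unit pressure;
               0.0 models an ideally reinforced chamber
    With kPa and mm^2 the result is in mN (1 kPa * 1 mm^2 = 1 mN).
    """
    # Hypothetical linear growth of the cross-section with pressure.
    area = area0 * (1.0 + growth * pressure)
    return pressure * area
```

With `growth=0.0`, doubling the pressure doubles the force. With any positive `growth`, the force contains a term proportional to the square of the pressure, so doubling the pressure more than doubles the force, which is the nonlinear behaviour observed when the chambers were free to expand internally.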

Another issue observed was a high output dependence on the actuation sequence. That effect was also caused by the internal expansion of the actuation chambers. This was because the chamber that was first actuated could expand freely, but others thereafter encountered restrictive forces from those already actuated. Once expanded chambers were pressed against the reinforcement, the friction between the silicone and braided material hampered any subsequent change to the bending direction. An additional issue was the fact that internal deformation was having an effect on the workings of the shape and force sensors embedded into the module.

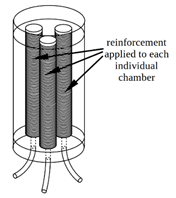

A robust and reliable solution to all the aforementioned issues was to introduce reinforcement directly where it was needed, i.e. around the actuation chambers themselves rather than the overall device (Figure 8). By reinforcing the pneumatic chambers within the silicone body as close as possible to where the pressure is created, we were able to limit the radial expansion of each individual chamber, without compromising their ability to bend and stretch. Also, by embedding fibres directly into the silicone material, we reduced the aforementioned friction issues, and this meant that the shape of the device would be solely a consequence of pressure values in the chambers, uninfluenced by the sequence in which they were activated.

Figure 8. Improved STIFF-FLOP design; three independent pneumatic actuators reinforced with fibres arranged around the central axis, embedded into the soft body of the device [28].

3.2. The Challenges of Sensor Integration

Given that the STIFF-FLOP arm is designed for MIS procedures, the ability to track its precise state in terms of pose is crucial. This is what enables us to close the control loop in order to provide the necessary feedback to the operator. Achieving this is dependent on the presence of sensors that can relay the requisite information.

Due to the fundamental operative differences between rigid and soft robots, traditional sensors cannot be transplanted into soft devices. Indeed, there are almost no commercially available sensors that are easily “re-engineerable” for soft device integration. Although there are certain useful and easily measurable values that can be obtained using sensors external to the robot body – air pressure for example – this data alone is insufficient to provide us with a clear picture of the robot’s shape and position. The route forward in this regard is therefore to embed soft/deformable sensors into the robot itself.

With regards to sensing, the use of an optics-based approach was central in the STIFF-FLOP project. Optical transmitter fibres were used to introduce light into the sensing element and receiver optical fibres would allow for the measurement of light intensity changes due to geometrical changes in the manipulator’s structure. This effectively provided the information needed to track its shape while also providing force or tactile information.

Since the measurements were based on light intensity change due to geometrical deformation, any additional deformation resulting from the actuation system was a source of significant disturbance in the measured values. For that reason, the individually reinforced chambers were a crucial design feature that drastically improved the sensing capabilities of the robot by limiting the ballooning effect.

4. Designing Sensors for Soft Robots

In this section, we aim to identify how we can increase sensing capability in soft manipulators within the context of MIS. Based on the types of manipulators described in section 3 and an outline of key robotic requirements from a surgical perspective (section 2), we have focused our work on one main measurand - the pose (proprioception). Determining the pose is what allows us to establish precisely where our robot end effector is located in relation to its base. Sensing tactile signals that provide information about physical interaction with the environment (exteroception) is also of great importance and being explored widely in the literature [4]–[7], [35], but is beyond the scope of this paper.

Expanding on the STIFF-FLOP project, our research continues to focus on optics-based sensor technologies. Here, the optics-based approach relates light intensity to the curvature of the soft robot module in which the sensor is integrated, and by measuring the former, one can estimate the latter. This approach can be realised by optical fibres transmitting light to the sensing point where light intensity is modulated by varying the distance between fibre tips as a function of the incurred curvature or by employing abraded optic fibres that lose light as a function of the incurred curvature – indeed we have successfully employed these techniques in the measurement of the pose of soft robots [36]–[38].

4.1. Optic Fibre Based Pose Sensor Design

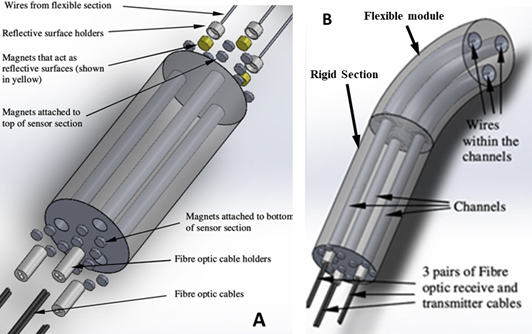

The optic fibre-based sensor described here measures the distance between a reflective surface and the tips of the optic fibres used for transmission and reception of light signals. Varying this distance as a function of the robot’s bending motion alters the intensity of the light reflected back, which in turn allows us to determine the robot’s pose. In this particular implementation, we use three light sources (in this case light emitting diodes (LEDs)) throwing light via three optic fibres onto three reflective surfaces that move according to the amount and direction of bending experienced by the soft robot. The reflected light (modulated by the robot motion) is subsequently collected by three receiving fibres and read out by an optoelectronics circuit at the base of the receiving fibres. As any change in pose affects those distances, there will be concurrent changes in the light intensity returned from these reflective surfaces. When compared to other optic-based sensing techniques (such as Fibre Bragg Grating (FBG) methods), light intensity modulated sensing is a relatively low-cost option that mitigates various complexities relating to system set-up, signal acquisition and processing.
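One simple way to turn a measured intensity into a fibre-tip-to-reflector distance is a calibration lookup with linear interpolation, sketched below. This is an assumption-laden illustration rather than the signal processing used in our system: the function name `distance_from_intensity`, the calibration pairs and the premise that the sensor operates in a range where intensity varies monotonically with distance are all hypothetical.

```python
from bisect import bisect_left


def distance_from_intensity(intensity, calibration):
    """Estimate reflector distance from a light-intensity reading.

    calibration : list of (intensity, distance_mm) pairs from a
                  hypothetical bench calibration; intensities must be
                  distinct and the relation monotonic in this range
    Readings outside the calibrated range are clamped to its ends.
    """
    points = sorted(calibration)              # sort by intensity
    intensities = [p[0] for p in points]
    if intensity <= intensities[0]:
        return points[0][1]
    if intensity >= intensities[-1]:
        return points[-1][1]
    i = bisect_left(intensities, intensity)   # first entry >= reading
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    # Linear interpolation between the two neighbouring entries.
    return y0 + (y1 - y0) * (intensity - x0) / (x1 - x0)


# Hypothetical calibration: a closer reflector returns more light.
EXAMPLE_CALIBRATION = [(100, 4.0), (200, 3.0), (400, 2.0), (800, 1.0)]
```

For instance, with the made-up table above, a reading of 300 falls midway between the entries for 200 and 400 and is mapped to 2.5 mm. In practice, one such table would be needed per fibre pair, since each channel has its own optical losses.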

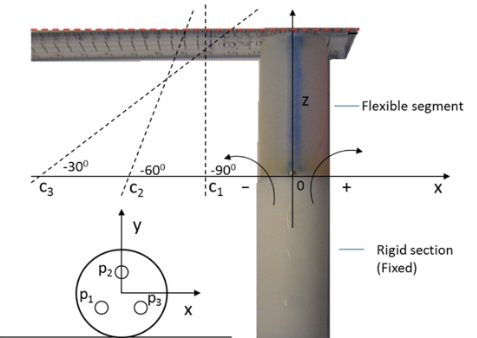

Our curvature sensor system was developed using three sets of optical fibres and inextensible wires, which allowed us to calculate the bending radius, the bending direction and the elongation of a soft manipulator section from the arc lengths of these wires. The wires were embedded along the longitudinal axis of a flexible, cylindrical module emulating the flexible portion of a soft robot arm. In our test rig, the flexible module itself was attached to a rigid section housing the optic fibres. To achieve this, three channels were introduced at 120° intervals across the cross section of each module, to house the required wires (in the flexible module) and transmitter/receiver optic fibres (in the rigid section), respectively, as per Figure 9. Within the flexible module, each wire is fixed to the far end of the module and, at the other end, where the module connects to the rigid section, to a small magnet. The magnets move when the flexible module bends in different directions. The surfaces of the magnets are highly reflective and are thus used to reflect the light from the tips of the transmitting fibres back to the receiving fibres. Because the moving elements are magnetic, additional magnets can be integrated within the rigid section to ensure that the measuring wires are always kept taut. Bending in the flexible segment causes changes to the length of the wires within the three channels, with the effect that the magnets are drawn towards or moved away from the top of the flexible module. As stated, the distances between transmitter, receiver and magnet affect the amount of reflected light, and so each transmitter/receiver pair and its magnet effectively act as a light intensity-modulation sensor. By measuring the length of all three wires, we can calculate, with some precision, the amount of bend, the direction of bend and the elongation of the flexible module.

In terms of prototype production, we used three Keyence Digital Fibre sensors FS N10 to transmit and receive light via three pairs of optic fibres (transmission and reception). The flexible module was constructed from Ecoflex™, a plastic resin commonly used in the fabrication of soft manipulators [17]. The flexible module length, pre-extension, was l = 55 mm and had a diameter of 25.5 mm. The channels in the flexible module are symmetrically positioned, each one d = 7.75 mm from the central axis, and with a radius of 3.5 mm taking into account its inner lining. Made from the articulator sections of drinking straws, this lining helps lubricate the channel surface for the fibres within.

Figure 9. Constituent parts of the test system. (A) The rigid section with the integrated sensing elements; (B) Assembled system comprising the rigid sensor section and the flexible module [38].

The rigid lower section of the test platform (see Figure 9B) houses the ends of the optic fibres and the magnets within its three chambers, such that the magnets are able to move up and down. The magnets with their reflective surfaces are connected to the wires travelling up through the flexible module of the robot. For each of the three fibre pairs, the two fibres (for transmission and reception) are held parallel and in contact with one another, by a small plastic guide. Additional, non-moving magnets are introduced in the rigid section to ensure, by repulsion and attraction, that the moving magnets are kept as close as possible to the tips of the three fibre pairs with the pose measurement wires being kept taut.

4.2. Modelling

In order to convert our designs into a working prototype, we also needed a model that would translate sensor gathered data into useful information about the assumed pose of the flexible robot module. This model would need to deduce the extent of bend in the flexible module from the changes of the length of the wires moving within the flexible module’s internal chambers. To do this, we assume that the flexible module is a continuous cylinder and that when in any bent position, the three channels trace an arc or partial circumference of a circle. We note that constant curvature is assumed [38]. The proposed model (introduced in [38]) is based on the geometric relations between the measured arc lengths and angle θ (amount of bending), β (bending direction) and the overall elongation of the flexible module. Figure 10A shows this circle, with its centre at (Rx, Ry, 0); R denoting the circle’s radius.

Oriented normal to the main axis of the flexible module, virtual planes, p1p2p3 and q1q2q3, are introduced to determine the bending radius, as shown in Figure 10A. The locations of these planes are defined by three points along the paths of the three wires. We assume that n1 and n2 denote the centred normal vectors of the introduced cross-sectional planes which are perpendicular to the cylinder’s main axis. We also assume that all the normal vectors project onto the same line (nxy) on the x-y plane.

For our considerations, we assume that the length of the flexible module in its unactuated, rested state is l. When the module is not bent (i.e., bending angle θ = 0), the planes are parallel and l/N apart. Integer N is a large number that can be chosen arbitrarily; for the calculations here, it was set to 100. We presume that when the flexible module bends it takes on the shape of an arc and that the two planes remain normal to the module’s main axis, so that the two planes always intersect at a point c = (Rx, Ry, 0), which is the centre of the achieved arc. As we can see from Figure 10A, the centre line of the flexible module is always the bending radius, R, away from the intersection point, c. Note that the location of intersection point c and the length of radius R change depending on the curvature experienced by the flexible module.

We introduce ∆s1, ∆s2, ∆s3 to describe the distances between the vertices of the triangles that describe the outlines of the virtual planes, p1q1, p2q2 and p3q3, respectively [38]:

$$
\begin{aligned}
\Delta s_1 &= \tfrac{1}{N}\,(l - l_1),\\
\Delta s_2 &= \tfrac{1}{N}\,(l - l_2),\\
\Delta s_3 &= \tfrac{1}{N}\,(l - l_3),
\end{aligned}
\tag{1}
$$

where, l1, l2 and l3 represent the change in length of the three wires in the flexible module, see Figure 10A.

Figure 10. (A) Sketch of the bending module with its three inner conduits within which the wires move depending on amount and direction of movement. The centre of the circle assumed by the constant-curvature bend is at point c; (B) The planes, p1p2p3 and q1q2q3, form a pentahedron from which the bending radius and the centre point c can be computed; (C) The coordinates of the three conduits at the base of the flexible module; points p1, p2 and p3 are assumed to be located in the centre of each conduit [38].

As one can see from Figure 10B, a pentahedron is formed by the two virtual planes and the connections between their vertices. With n1 being defined as the normal vector of the upper plane p1p2p3, and n2 as that of the lower plane q1q2q3, we can describe these as functions of the vertices of the two planes, as follows:

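$$
\begin{aligned}
n_1 &= \frac{\overrightarrow{p_3p_1} \times \overrightarrow{p_2p_1}}{\left\lVert \overrightarrow{p_3p_1} \times \overrightarrow{p_2p_1} \right\rVert},\\
n_2 &= \frac{\overrightarrow{q_3q_1} \times \overrightarrow{q_2q_1}}{\left\lVert \overrightarrow{q_3q_1} \times \overrightarrow{q_2q_1} \right\rVert}
\end{aligned}
\tag{2}
$$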

Defining n2 = [nx,ny,nz]T, and introducing angle ∆θ as the angle between the two planes and angle β as the angle that describes by how much the flexible module is turned away from the x-axis, it follows that

$$
\begin{aligned}
\Delta\theta &= \cos^{-1}\!\left(n_1^{T} n_2\right),\\
\beta &= \operatorname{atan2}(n_y,\, n_x)
\end{aligned}
\tag{3}
$$

Referring to Figure 10B, points p0, q0 and c define a triangle whose parameters can be computed using the law of cosines:

$$
2R^{2}\,(1 - \cos\Delta\theta) = \lvert p_0 q_0 \rvert^{2}
\tag{4}
$$

The above equation can be solved for radius R and bending angle θ, as follows:

$$
R = \frac{\lvert p_0 q_0 \rvert}{\sqrt{2\,(1 - \cos\Delta\theta)}}
\tag{5}
$$

$$
\theta = \frac{l}{R}
\tag{6}
$$

The centre of the circle around which bending takes place is then:

$$
c = (R\cos\beta,\; R\sin\beta,\; 0)
\tag{7}
$$

Since the distances between the two planes (in Figure 10B) are considered small, the three points on the plane can be approximated as follows:

$$
\begin{aligned}
q_1 &\approx p_1 + \Delta s_1\, n_1,\\
q_2 &\approx p_2 + \Delta s_2\, n_1,\\
q_3 &\approx p_3 + \Delta s_3\, n_1
\end{aligned}
\tag{8}
$$

Hence, a good approximation of normal vector n2 can be obtained from Eq. 2, using the approximated points of Eq. 8. Further, it is noted that for our calculations n1 is [0 0 1] and that the following simplification has been used:

$$
\lvert p_0 q_0 \rvert \approx \frac{\Delta s_1 + \Delta s_2 + \Delta s_3}{3}
\tag{9}
$$

The origin of the world frame is placed at the centre of the cylindrical modules where the rigid section meets the flexible module (z=0). The unactuated length of the flexible module is l = 55 mm. Because of the constant curvature assumption, coordinates p1, p2 and p3 can be placed anywhere along the three wires of the flexible module; here, for simplicity, at z = 0. The complete definition of p1, p2 and p3 is [38]:

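$$
\begin{aligned}
p_1 &= \left[-\tfrac{\sqrt{3}}{2}\,d \quad -\tfrac{1}{2}\,d \quad 0\right]^{T},\\
p_2 &= \left[\tfrac{\sqrt{3}}{2}\,d \quad -\tfrac{1}{2}\,d \quad 0\right]^{T},\\
p_3 &= \left[0 \quad d \quad 0\right]^{T}
\end{aligned}
\tag{10}
$$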

where d is the offset of the wires from the world frame origin (in our test rig: d = 7.75 mm). The three “delta”-distances (∆s1, ∆s2, ∆s3) between the vertices of the two planes can be determined from Eq.1, by inserting the wire length changes l1, l2 and l3, as obtained from the optic fibre measurements.
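The chain from wire length changes to pose (Eqs. 1 to 10) can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the implementation used in [38]: the function name `estimate_pose` and its return layout are hypothetical, and the operand order of the cross product is an assumption chosen so that an unbent module yields n2 = [0 0 1], in line with the value of n1 stated above. Sign conventions for β and for the wire length changes may differ from those of the test rig, so the sketch should not be expected to reproduce the tabulated results exactly.

```python
import numpy as np

def estimate_pose(l1, l2, l3, l=55.0, d=7.75, N=100):
    """Map wire length changes (mm) to (theta, beta, R, c) following Eqs. 1-10."""
    # Eq. 10: conduit centres on the base plane z = 0
    p = np.array([[-np.sqrt(3) / 2 * d, -d / 2, 0.0],
                  [np.sqrt(3) / 2 * d, -d / 2, 0.0],
                  [0.0, d, 0.0]])
    n1 = np.array([0.0, 0.0, 1.0])                 # normal of the base plane
    ds = (l - np.array([l1, l2, l3])) / N          # Eq. 1
    q = p + ds[:, None] * n1                       # Eq. 8
    # Eq. 2: operand order is an assumption, chosen so that an unbent
    # module gives n2 = [0, 0, 1]
    n2 = np.cross(q[1] - q[0], q[2] - q[0])
    n2 /= np.linalg.norm(n2)
    dtheta = np.arccos(np.clip(n1 @ n2, -1.0, 1.0))  # Eq. 3, angle between planes
    beta = np.arctan2(n2[1], n2[0])                  # Eq. 3, bending direction
    if dtheta < 1e-6:                                # planes parallel: straight module
        return 0.0, beta, np.inf, None
    p0q0 = ds.mean()                                 # Eq. 9
    R = p0q0 / np.sqrt(2.0 * (1.0 - np.cos(dtheta))) # Eq. 5
    theta = l / R                                    # Eq. 6 (radians)
    c = np.array([R * np.cos(beta), R * np.sin(beta), 0.0])  # Eq. 7
    return theta, beta, R, c
```

Calling `estimate_pose(0.0, 0.0, 0.0)` returns a zero bending angle and an infinite radius, which is the expected degenerate case for a straight module; any non-uniform set of wire length changes tilts the second plane and yields a finite R.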

4.3. Optic Fibre Sensor Calibration

Calibration was required to relate the measured signals from the optic fibre sensors to the length changes occurring in the three wires. We achieved this by setting the distance between transmitter/receiver and reflective plates and recording the reflected light intensity. A selection of distances was used, and for each distance multiple readings were collected and averaged. In this way, functions relating light intensity to distance could be derived, with an acceptable range of sensitivity in the relevant length change range of 2 mm to 30 mm [38].
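One way such a calibration could be turned into a lookup is sketched below. It is a minimal illustration, not the procedure of [38]: the helper `build_calibration` is hypothetical, the intensity curve and noise level are synthetic placeholders rather than measured data, and piecewise-linear interpolation is assumed in place of whatever fitting function was actually used.

```python
import numpy as np

def build_calibration(distances_mm, readings):
    """Average repeated readings per distance; return an intensity -> distance map."""
    distances_mm = np.asarray(distances_mm, dtype=float)
    mean_intensity = np.asarray(readings, dtype=float).mean(axis=1)
    order = np.argsort(mean_intensity)          # np.interp needs increasing x values
    xs, ys = mean_intensity[order], distances_mm[order]
    steps = np.diff(ys)
    # The map is only invertible if intensity varies monotonically with distance
    if np.any(np.diff(xs) <= 0) or not (np.all(steps < 0) or np.all(steps > 0)):
        raise ValueError("intensity must vary monotonically with distance")
    return lambda intensity: np.interp(intensity, xs, ys)

# Synthetic placeholder data (NOT measurements): 15 distances, 5 readings each
rng = np.random.default_rng(0)
distances = np.linspace(2.0, 30.0, 15)
true_curve = 1000.0 / (1.0 + distances) ** 2    # hypothetical intensity model
readings = true_curve[:, None] + rng.normal(0.0, 0.01, size=(15, 5))

to_distance = build_calibration(distances, readings)
```

The returned function converts a new intensity reading into a distance estimate, from which a wire length change can be derived relative to the reading at rest.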

4.4. Testing Procedures

During testing, we mechanically forced the flexible module to assume the bending angles of θ= ±30°, ±60° and ±90°, with direction angle β = 0°. Data from the light intensity modulated sensors were recorded, with channel lengths deduced from changes in reflected light intensity. As a benchmark for validation, the rigid section of the overall system was fixed to a flat tabletop, so that its main axis was parallel to that of the table. This was to keep any bend solely to the x-z plane. A ruler, attached to the top of the flexible module, enabled measurement of the bending angle (see Figure 11), testing angles from -90° to +90° at 30° intervals. Multiple tests (five) for each angle were conducted and the computed averages were used for further elaboration. At each angle, the centres of the bending circle were determined experimentally by finding the intersection between the ruler’s projection line and the x-axis which was marked out on the tabletop. In order to set each angle, the flexible module was manipulated manually, using the attached ruler [38].

Figure 11. Test rig for the evaluation of the bending sensor performance. The ruler attached at the top of the flexible module allowed measuring the bending angle. During calibration, the angular ruler positions were related to the fibre-optic measurements. After calibration the fibre-optic measurements were a good predictor of bending angles [38].

In order to assess how well the proposed model can predict bend in the flexible module, the changes in wire lengths l1, l2 and l3 for each tested bending angle were obtained (see Table 1) and then used in conjunction with Eqs. 2 and 8 to calculate the normal vector. Eqs. 3-7 were then employed to determine the corresponding bending circle centre, c, as well as angles θ and β. This approach allowed the comparison between the sensor data and the tabletop ground truth measurements.

Table 1. Measured wire length changes at shown angular positions [38].

| Bend angle θ (β = 0) | l1 (mm) | l2 (mm) | l3 (mm) |
|---|---|---|---|
| -90° | -6.5 | 15.0 | 5.1 |
| -60° | -4.0 | 9.5 | 3.0 |
| -30° | -2.0 | 6.0 | 2.0 |
| 30° | 4.9 | -2.3 | 1.1 |
| 60° | 10.1 | -4.0 | 1.7 |
| 90° | 14.9 | -5.3 | 5.0 |

Table 2. Overview of computed and measured sensor data. The centre of the bending circle Cx was measured using the ruler as well as calculated assuming constant curvature. The results indicated by “sensor” are the bending amount (θs), the bending direction (βs) and the bending radius (R), calculated from the sensor measurements [38].

| θm (β = 0) (measured) | Cx (measured, mm) | Cx (calculated, mm) | θs (sensor, °) | βs (sensor, °) | R (sensor, mm) |
|---|---|---|---|---|---|
| -90° | -26.5 | -35.0 | 84.1 | -177.3 | 37.4 |
| -60° | -51.0 | -52.5 | 54.6 | -178.7 | 57.7 |
| -30° | -99.5 | -105.0 | 32.9 | -180.1 | 95.7 |
| 30° | 100.5 | 105.0 | 30.1 | 1.8 | 104.8 |
| 60° | 53.5 | 52.5 | 57.5 | 6.3 | 54.7 |
| 90° | 28.5 | 35.0 | 79.5 | -0.6 | 40.1 |

A comparison of the experimental and sensor-predicted measurements is shown in Table 2. What is most apparent is that at bending angles of θm = (±30°, ±60°) there is close agreement between results. At θm = ±90° there is, however, increased deviation. We also find that the estimated bending radius R and angle θs have good accuracy for θm = (±30°, ±60°), with averaged errors of (4.1 mm, 3.9 mm) for R and (1.5°, 4.5°) for θs. These increase to 11 mm for R and 8.3° for θs at testing angles of ±90°. Also noteworthy is the good estimation of angle β, which corresponds to the orientation of the flexible section with respect to the x axis (see Table 2 and Figure 11). Analysis of the discrepancies suggests that this sensor system shows promise as a tool to estimate three-dimensional bending curvature. There are several possible reasons for the errors in R and θs estimation: the way in which the tests were conducted (partly manually), or the constant-curvature assumption becoming less applicable at higher bending angles, a point that needs further investigation. The relatively wide channel diameter of 3.5 mm, around a wire that is just 0.25 mm in diameter, could also add to the inaccuracy of the results. In this instance, a minimal amount of bend in the channel would not immediately lead to a corresponding length change in the wire, as there is too much ‘wiggle-room’ within the channel. Future designs would need to look at reducing channel diameter and checking that the material used in the channel lining is sufficiently flexible. In this way, we could ensure that the change in effective wire length genuinely mimics the curvature of each channel [38].
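The “Cx (calculated)” column of Table 2 follows directly from the constant-curvature relation of Eq. 6, rearranged as R = l/θ with l = 55 mm. The short sketch below, in which the function name `bending_centre_x` is purely illustrative, evaluates this for the six test angles; rounded to one decimal place, the values agree with the tabulated column.

```python
import math

def bending_centre_x(theta_deg, l=55.0):
    """Constant-curvature centre offset R = l / theta (mm), signed like theta."""
    return l / math.radians(theta_deg)

for theta_m in (-90, -60, -30, 30, 60, 90):
    print(theta_m, round(bending_centre_x(theta_m), 1))
```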

This new approach, using an optical fibre-based curvature sensor in conjunction with soft manipulators is demonstrably promising. The model has been tested and shows encouraging results as a tool with which to estimate the pose of a flexible cylindrical manipulator segment. Other advantages over those currently available include its potentially low-cost manufacture and ease of integration into a surgical system.

Although techniques that use standard optic fibres with mechanisms that displace a reflective surface in response to changes in the measurand are suitable for remote light-based sensing in flexible structures, there are still challenges in creating and integrating such structures into soft bodies. Issues such as large size of the channel compared to the optical fibre, the friction between the moving fibre optic cables and the surrounding sheath and compact integration require further study.

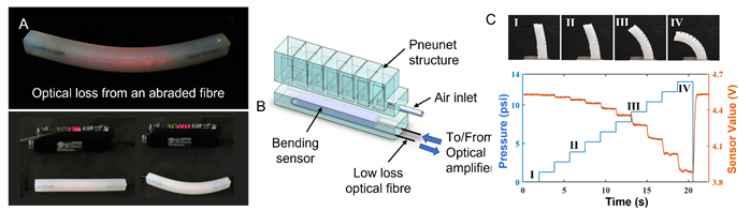

Since the STIFF-FLOP project, we have seen advances in many other approaches to soft-robot proprioception. Related to optical fibre-based sensing, an approach which involves the measurement of optical losses within a single optical fibre or lightguide to characterize the bending strain, or applied stimuli such as normal force, is emerging as a versatile technique for creating flexible and stretchable sensors that can be integrated into soft robots. Although most of the studies in relation to deformable lightguides for sensing focus on proprioception in soft structures or soft robotic hands, these techniques would be equally applicable to soft surgical manipulators. Hence, we present a brief overview and discussion of sensing using deformable lightguide-based sensors.

4.5. Emerging deformable optical-waveguide based sensing technologies

An optical fibre combines a core and a cladding, made of materials with different refractive indices. The core material has a considerably higher refractive index than the cladding and because of this a light ray incident on the cross-section of the core, within a certain range of incident angles, gets internally reflected and transmitted along the length of the fibre. Commercial optical fibres used in communications have very smooth surfaces and display negligible light loss over large distances. However, if the surface of the core-cladding interface becomes rough or the refractive index difference between the core and the cladding decreases, light loss in the fibre increases. It has also been observed that when the waveguide is bent or the cross-section of the core is changed due to an external force, light loss increases at the core-cladding interface. With sufficiently lossy optical fibres, it is possible to create optical fibres at centimetre scale that can be used to detect fibre bending by monitoring the change in the intensity of light transmitted through the fibre. Moreover, the core and cladding can also be fabricated from soft silicones with different refractive indices to make light wave-guide-based sensors that are stretchable and capable of detecting both fibre bending and externally applied forces [39], [40].
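The guiding condition described above can be made concrete with the standard step-index relations: light is totally internally reflected when it meets the core-cladding interface at more than the critical angle arcsin(n_clad/n_core), and the acceptance cone is characterised by the numerical aperture √(n_core² − n_clad²). The sketch below uses assumed, textbook-style refractive indices (about 1.49 for a PMMA core and about 1.40 for a fluorinated polymer cladding); these numbers are illustrative assumptions, not values taken from the studies cited here.

```python
import math

def critical_angle_deg(n_core, n_clad):
    """Smallest incidence angle (from the interface normal) for total internal reflection."""
    if n_clad >= n_core:
        raise ValueError("guiding requires n_core > n_clad")
    return math.degrees(math.asin(n_clad / n_core))

def numerical_aperture(n_core, n_clad):
    """Numerical aperture of a step-index guide."""
    return math.sqrt(n_core ** 2 - n_clad ** 2)

# Assumed illustrative indices (not from the cited work)
n_core, n_clad = 1.49, 1.40
print(critical_angle_deg(n_core, n_clad), numerical_aperture(n_core, n_clad))
```

Raising the cladding index towards that of the core shrinks the numerical aperture, which is one way of seeing why a reduced index difference makes a waveguide lossier.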

Many optical fibre sensors based on inherent light loss have been developed to monitor deformations in soft structures. Zhao et al. developed stretchable optical waveguides using two different silicone elastomers as core and cladding [39]. These waveguides can be stretched up to 85% strain and can detect stretch, bending and external forces, and have been utilised for proprioception and exteroception in soft robotic fingers. Teeple et al. have embedded stretchable optical waveguides into an underwater gripper to achieve bend sensing in an underwater robot, demonstrating the suitability of the technique in extreme conditions, in this case high hydrostatic pressure [40]. In contrast to sensors based on different core and cladding materials, To et al. developed gold-coated optical channels in which the light reflected off the gold coating was transmitted internally [41]. These optical waveguides were able to predict linear strain and indentation forces from the transmission losses.

One of the issues associated with optical waveguide-based soft sensors is their sensitivity to multiple stimuli (force, stretch and bending), and the difficulty in interpreting the data they provide. A sensor that was sensitive to bending and insensitive to pressure would enable us to measure true bending deformation and would therefore be useful for proprioception during interaction between a soft manipulator and its environment. This prompted us to research sensors based on flexible optical fibres that are insensitive to pressure. We developed a fibre-optic cable sensor that can be made from off-the-shelf materials using a simple and inexpensive method of fabrication [42]. The pressure-insensitive bend sensor was fabricated using the commercially available Super Eska™ Simplex Cable SH1001-1.0. These cables are composed of a thin optic fibre with a transparent core made of polymethyl methacrylate (PMMA), clad in a layer of fluorinated polymer. Through mechanical abrasion, the optic fibre was made lossier such that the light loss due to bending in a centimetre-scale cable is substantial enough to allow characterisation of the bending curvature. The abraded fibre was moulded inside a silicone structure for structural stability.

Figure 12. (A) Abraded fibre-based bending sensor shows optical loss through the cladding and decrease in light intensity measured by the receiver corresponds to the bending curvature, (B) Schematic of a pneumatically controlled soft finger sensorized by the fibre-based sensor (C) (top) the bending shape of the finger under different input pressures and (bottom) the sensor response during the bending process.

The fibre-based sensor was integrated into a pneumatically actuated soft finger (based on the PneuNets design, see Figure 12B) [42]. As the finger was actuated at different pressures, it gradually deformed from pose A to pose D (see Figure 12C) and the optical transmission losses in the sensor waveguide increased, leading to a decrease in sensor output values. Once the pressure was released, the soft finger returned to its original pose and the sensor reading returned to its base value (see Figure 12C). In comparison to an optical lightguide sensor made of two different silicone materials [42], this sensor with the integrated abraded fibre was found to be less sensitive to external pressure, making it practicable for identifying the pose when the soft structure is subjected to mechanical stimuli from the interaction with objects.

These abraded optical fibre sensors have limited stretchability as they have a plastic core. Consequently, their application may be limited to soft surgical tools that exhibit bending around a single axis analogous to concentric tube robots. For multi-axis bending or for manipulators that exhibit significant stretching, stretchable optical waveguides made entirely of stretchable silicones can be used. However, they need to be carefully designed to shield them from external forces during interactions; a challenge given the strict size constraints imposed by the requirements of minimally invasive surgery applications.

As the dexterity requirements in soft surgical manipulators increase, the number of optical fibre sensors that need to be integrated into the manipulator and the corresponding space requirements increase too. Reducing the number of independent fibre optic cables required for sensing would help in designing effective MIS manipulators. In a recent work, Bai et al. have developed a waveguide with different chromatic patterns along its length exhibiting wavelength-specific absorption of light, as a basis for multi-segment soft sensors that can detect mechanical stimuli at different locations [43]. This technique has excellent potential for addressing the canonical challenge of high degree-of-freedom sensing with minimal sensing structures.

This would be particularly helpful for MIS applications where it is not feasible to have 9 optical fibres to measure 3 DOF deformation in 3 segments. Moreover, the demarcation of deformation modes of the soft structure, to reduce the dimensionality of sensor requirements within a task, will be instrumental in meeting the challenges posed by proprioceptive sensing.

5. Insights for the development of proprioceptive soft MIS manipulators

The studies on the development and integration of sensing conducted during the STIFF-FLOP project have highlighted the complex challenges involved in developing soft surgical manipulators. The robotic requirements bring the technological challenges to the fore. The key technological challenges relating to actuation and sensing can be summarized as (a) enhancing dexterity, (b) achieving proprioception and exteroception, and (c) data fusion and information extraction for visualization and control. In the following synopsis, we elaborate on the research paths that lie ahead in terms of solving both the actuation and sensing challenges:

- Miniature and addressable actuation: Challenges still remain in terms of achieving dextrous soft manipulators that meet the requirements of minimally invasive surgical applications. A major technical challenge with respect to actuation can be summarised as the development of miniature soft actuators capable of achieving sufficient forces for procedures such as tissue biopsy powered by a compact and addressable fluidic system. Modular soft actuator systems that share a single fluidic source have the capability to reduce the size of fluidic channels connecting the power source to the actuators distant from the base of the manipulator [44]. Application specific solutions involving multi-stage deployable structures for anchoring may help to solve the force generation challenges in miniature surgical manipulators.

- Novel technologies for multi-modal and miniaturised sensing: Analogous to addressable actuation offering high dexterity, achieving high degree of freedom sensing within size constraints is also challenging. Multi-modal sensing from a single sensor system and distinguishing between multiple modes of sensor feedback will help to reduce the number of sensors needed, thereby reducing space requirements [6]. Novel technologies such as optical waveguides with chromatic patterns that discern the location of stimuli are crucial for sensorizing dexterous soft manipulators. Akin to capacitive and resistive tomography-based methods, researchers are also pursuing techniques involving multiple light sources and detectors to develop sensor skins [45], [46].

- Co-design of actuation and sensing structures: Designing the sensor layout and structure with intended function in mind is important to address the joint challenges of dexterity and proprioception. Effective use of pressure-insensitive sensors in conjunction with sensors that are responsive to interaction forces can help to distinguish between sensor readings due to pose change and external stimuli. Task-based selection of optimal sensor layout will be useful in reducing the number of sensors required [47]. Artificial Intelligence based approaches to learning the robot’s task with sparse sensor placement are also improving the effectiveness of available sensor data [26]. There is also scope for the development of algorithms that take into account the multi-stimuli responsiveness of soft lightguide-based sensors and achieve sensor selection and placement to enable proprioception in the event of interactions with the environment. Algorithms that can automatically co-design the actuator layout along with the sensor layout based on the specific surgical task requirements will be a significant step towards soft manipulators for MIS.

- Machine learning and AI for sensor-data processing: To tackle strict processing challenges, we need to adopt efficient data processing approaches. Camera-vision-based procedures that exploit high-dimensional data (multiple fibres, spectrum, and techniques such as imaging a multi-mode optical fibre) have the potential to enlarge the information obtained from a single fibre and need to be utilised. Machine learning has also recently been shown to fuse data from many optical sensor channels to measure complex physical deformations including bending and stretching [48]. Prior information about the deformation modalities can be used to fuse data from limited sensor streams and achieve high levels of proprioceptive information [49].

- Sensor-data processing for closed-loop position control: The key advantage of soft robots, their compliance, also presents a huge challenge. Any interaction with external objects causes deformations which, when left uncompensated, can lead to significant positioning errors. To solve this issue, the control loop has to be closed with appropriately designed sensory data. All available information from sensors measuring haptics, interaction forces and shape is useful in the creation of models that can then be used to compensate for positioning errors.

6. Conclusions

This paper highlights the various challenges of using soft robotic devices in minimally invasive surgery (MIS) while also offering a number of solutions to those challenges. Reviewing the main requirements of laparoscopic and robot-assisted tools, it becomes clear that there is a need for robotic systems that are more compliant and more dexterous than the traditional rigid-component robots currently used in surgical settings. An almost ideal candidate for minimally invasive surgery, it seems, is the soft robot approach. With their ability to deform easily and continuously across their entire structure, soft robots offer unparalleled dexterity along with the ability to naturally adapt to their environment.

Despite the clear advantages soft robots have over their rigid-component counterparts, there are technical challenges that need to be overcome before they become accepted as part of the surgeon’s toolkit in MIS. The paper identifies the difficulties in estimating a soft robot’s pose and the inability of soft robot arms to navigate accurately to the target location as the principal challenges. The paper hypothesises that through the appropriate integration of flexible shape sensors this hurdle can be overcome. Two optics-based shape sensor solutions that are made from flexible and soft materials are proposed and evaluated. The research shows that these sensors can determine the robot’s pose with sufficient accuracy and reliability. The interaction between the robot’s actuation and the integrated sensors is also examined and optimized for navigation control and measurement accuracy.

Future work will focus on sensor materials that are similar to those used in soft robots. Silicone based light guides will be explored as they have the potential to merge seamlessly with robot structures, fully preserving their compliant nature. Work will also extend into optics-based distributed soft sensors that are capable of measuring the tactile information across the entirety of the robot’s structure, as these would provide key insights into the physical interaction between robot and environment. Further work will also investigate the testing of proposed soft robotics systems in realistic clinical environments using animal models and human cadavers.

Author Contributions: Conceptualization, Kaspar Althoefer; Funding acquisition, Alberto Arezzo and Kaspar Althoefer; Writing – original draft, Abu Bakar Dawood, Jan Fras, Faisal Aljaber, Yoav Mintz, Alberto Arezzo, Hareesh Godaba and Kaspar Althoefer; Writing – review & editing, Abu Bakar Dawood, Jan Fras, Faisal Aljaber, Yoav Mintz, Alberto Arezzo, Hareesh Godaba and Kaspar Althoefer.

Funding: This work was supported by research grants from the Seventh Framework Programme of the European Commission in the framework of EU project STIFF-FLOP (ID: 287728), the National Centre for Nuclear Robotics (NCNR) funded by the Engineering and Physical Sciences Research Council (EPSRC) (ID: EP/R02572X/1), and the Alan Turing Institute funded project “Learning collaboration affordances for intuitive human-robot interaction” (ID: R-QMU-001).

Informed Consent Statement: N/A.

Data Availability Statement: None.

Acknowledgements: Thanks to Mish Toszeghi for proofreading the manuscript.

Conflicts of Interest: None.

This entry is adapted from the peer-reviewed paper 10.3390/app11146586

References

- [1] D. Jayne et al., “Effect of robotic-assisted vs conventional laparoscopic surgery on risk of conversion to open laparotomy among patients undergoing resection for rectal cancer: the ROLARR randomized clinical trial,” JAMA - J. Am. Med. Assoc., vol. 318, no. 16, 2017, doi: 10.1001/jama.2017.7219.

- [2] W. B. Roberts, K. Tseng, P. C. Walsh, and M. Han, “Critical appraisal of management of rectal injury during radical prostatectomy,” Urology, vol. 76, no. 5, 2010, doi: 10.1016/j.urology.2010.03.054.

- [3] A. Arezzo et al., “Total mesorectal excision using a soft and flexible robotic arm: a feasibility study in cadaver models,” Surg. Endosc., vol. 31, no. 1, pp. 264–273, 2017, doi: 10.1007/s00464-016-4967-x.

- [4] H. Wang, M. Totaro, and L. Beccai, “Toward perceptive soft robots: Progress and challenges,” Adv. Sci., vol. 5, no. 9, p. 1800541, 2018.

- [5] S. Denei, P. Maiolino, E. Baglini, and G. Cannata, “Development of an Integrated Tactile Sensor System for Clothes Manipulation and Classification Using Industrial Grippers,” IEEE Sens. J., vol. 17, no. 19, pp. 6385–6396, 2017, doi: 10.1109/JSEN.2017.2743065.

- [6] A. B. Dawood, H. Godaba, A. Ataka, and K. Althoefer, “Silicone-based Capacitive E-skin for Exteroception and Proprioception,” in 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2020, pp. 8951–8956, doi: 10.1109/IROS45743.2020.9340945.

- [7] C. Larson et al., “Highly stretchable electroluminescent skin for optical signaling and tactile sensing,” Science, vol. 351, no. 6277, pp. 1071–1074, Mar. 2016, doi: 10.1126/science.aac5082.

- [8] M. Morino, G. Benincà, G. Giraudo, G. M. Del Genio, F. Rebecchi, and C. Garrone, “Robot-assisted vs laparoscopic adrenalectomy: A prospective randomized controlled trial,” Surg. Endosc. Other Interv. Tech., vol. 18, no. 12, 2004, doi: 10.1007/s00464-004-9046-z.

- [9] M. Morino, L. Pellegrino, C. Giaccone, C. Garrone, and F. Rebecchi, “Randomized clinical trial of robot-assisted versus laparoscopic Nissen fundoplication,” Br. J. Surg., vol. 93, no. 5, 2006, doi: 10.1002/bjs.5325.

- [10] G. Scozzari, F. Rebecchi, P. Millo, S. Rocchietto, R. Allieta, and M. Morino, “Robot-assisted gastrojejunal anastomosis does not improve the results of the laparoscopic Roux-en-Y gastric bypass,” Surg. Endosc., vol. 25, no. 2, 2011, doi: 10.1007/s00464-010-1229-1.

- [11] M. Morino, F. Famiglietti, C. Giaccone, and F. Rebecchi, “Robot-assisted Heller myotomy for achalasia. Technique and results,” Ann. Ital. Chir., vol. 84, no. 5, 2013.

- [12] J. Crane, M. Hamed, J. P. Borucki, A. El-Hadi, I. Shaikh, and A. T. Stearns, “Complete mesocolic excision versus conventional surgery for colon cancer: A systematic review and meta-analysis,” Colorectal Disease. 2021, doi: 10.1111/codi.15644.

- [13] L. Xu et al., “Short-term outcomes of complete mesocolic excision versus D2 dissection in patients undergoing laparoscopic colectomy for right colon cancer (RELARC): a randomised, controlled, phase 3, superiority trial,” Lancet Oncol., vol. 22, no. 3, 2021, doi: 10.1016/S1470-2045(20)30685-9.

- [14] G. Anania et al., “Rise and fall of total mesorectal excision with lateral pelvic lymphadenectomy for rectal cancer: an updated systematic review and meta-analysis of 11,366 patients,” International Journal of Colorectal Disease. 2021, doi: 10.1007/s00384-021-03946-2.

- [15] P. L. Anderson, R. A. Lathrop, and R. J. Webster, “Robot-like dexterity without computers and motors: a review of hand-held laparoscopic instruments with wrist-like tip articulation,” Expert Review of Medical Devices, vol. 13, no. 7. 2016, doi: 10.1586/17434440.2016.1146585.

- [16] A. Saracino, T. J. C. Oude-Vrielink, A. Menciassi, E. Sinibaldi, and G. P. Mylonas, “Haptic Intracorporeal Palpation Using a Cable-Driven Parallel Robot: A User Study,” IEEE Trans. Biomed. Eng., vol. 67, no. 12, 2020, doi: 10.1109/TBME.2020.2987646.

- [17] P. Schleer, P. Kaiser, S. Drobinsky, and K. Radermacher, “Augmentation of haptic feedback for teleoperated robotic surgery,” Int. J. Comput. Assist. Radiol. Surg., vol. 15, no. 3, 2020, doi: 10.1007/s11548-020-02118-x.

- [18] H. L. Kaan and K. Y. Ho, “Robot-assisted endoscopic resection: Current status and future directions,” Gut and Liver, vol. 14, no. 2. 2020, doi: 10.5009/gnl19047.

- [19] D. B. Camarillo, T. M. Krummel, and J. K. Salisbury, “Robotic technology in surgery: Past, present, and future,” Am. J. Surg., vol. 188, no. 4 SUPPL. 1, 2004, doi: 10.1016/j.amjsurg.2004.08.025.

- [20] S. Haddadin, A. Albu-Schäffer, and G. Hirzinger, “Requirements for safe robots: Measurements, analysis and new insights,” Int. J. Rob. Res., vol. 28, no. 11–12, 2009, doi: 10.1177/0278364909343970.

- [21] N. S. Usevitch, Z. M. Hammond, M. Schwager, A. M. Okamura, E. W. Hawkes, and S. Follmer, “An untethered isoperimetric soft robot,” Sci. Robot., vol. 5, no. 40, 2020, doi: 10.1126/scirobotics.aaz0492.

- [22] J. Fras and K. Althoefer, “Soft Biomimetic Prosthetic Hand: Design, Manufacturing and Preliminary Examination,” 2018, doi: 10.1109/IROS.2018.8593666.

- [23] J. Shintake, V. Cacucciolo, D. Floreano, and H. Shea, “Soft Robotic Grippers,” Advanced Materials, vol. 30, no. 29. 2018, doi: 10.1002/adma.201707035.

- [24] G. Fang, C. D. Matte, R. B. N. Scharff, T. H. Kwok, and C. C. L. Wang, “Kinematics of Soft Robots by Geometric Computing,” IEEE Trans. Robot., vol. 36, no. 4, 2020, doi: 10.1109/TRO.2020.2985583.

- [25] R. J. Webster and B. A. Jones, “Design and kinematic modeling of constant curvature continuum robots: A review,” Int. J. Rob. Res., vol. 29, no. 13, pp. 1661–1683, 2010, doi: 10.1177/0278364910368147.

- [26] A. Spielberg, A. Amini, L. Chin, W. Matusik, and D. Rus, “Co-learning of task and sensor placement for soft robotics,” IEEE Robot. Autom. Lett., vol. 6, no. 2, 2021, doi: 10.1109/LRA.2021.3056369.

- [27] M. Ilami, H. Bagheri, R. Ahmed, E. O. Skowronek, and H. Marvi, “Materials, Actuators, and Sensors for Soft Bioinspired Robots,” Advanced Materials. 2020, doi: 10.1002/adma.202003139.

- [28] J. Fras, M. Macias, J. Czarnowski, M. Brancadoro, A. Menciassi, and J. Glowka, “Soft Manipulator Actuation Module – with Reinforced Chambers,” in Soft and Stiffness-controllable Robotics Solutions for Minimally Invasive Surgery: The STIFF-FLOP Approach, 2018, p. 47.

- [29] M. Cianchetti et al., “Soft Robotics Technologies to Address Shortcomings in Today’s Minimally Invasive Surgery: The STIFF-FLOP Approach,” Soft Robot., vol. 1, no. 2, 2014, doi: 10.1089/soro.2014.0001.

- [30] J. Fras, M. Macias, Y. Noh, and K. Althoefer, “Fluidical bending actuator designed for soft octopus robot tentacle,” in 2018 IEEE International Conference on Soft Robotics (RoboSoft), 2018, pp. 253–257, doi: 10.1109/ROBOSOFT.2018.8404928.

- [31] J. Fras, Y. Noh, H. Wurdemann, and K. Althoefer, “Soft fluidic rotary actuator with improved actuation properties,” in IEEE International Conference on Intelligent Robots and Systems, 2017, vol. 2017-September, doi: 10.1109/IROS.2017.8206448.

- [32] L. Manfredi, F. Putzu, S. Guler, Y. Huan, and A. Cuschieri, “4 DOFs hollow soft pneumatic actuator–hose,” Mater. Res. Express, vol. 6, no. 4, 2019, doi: 10.1088/2053-1591/aaebea.

- [33] J. Fras, J. Glowka, and K. Althoefer, “Instant soft robot: A simple recipe for quick and easy manufacturing,” in 2020 3rd IEEE International Conference on Soft Robotics (RoboSoft), 2020, pp. 482–488, doi: 10.1109/RoboSoft48309.2020.9115973.

- [34] T. Ranzani, I. de Falco, M. Cianchetti, and A. Menciassi, “Design of the Multi-module Manipulator,” in Soft and Stiffness-controllable Robotics Solutions for Minimally Invasive Surgery: The STIFF-FLOP Approach, 2018, p. 23.

- [35] A. B. Dawood, H. Godaba, and K. Althoefer, “Modelling of a soft sensor for exteroception and proprioception in a pneumatically actuated soft robot,” in Lecture Notes in Computer Science, 2019, vol. 11650 LNAI, pp. 99–110, doi: 10.1007/978-3-030-25332-5_9.

- [36] H. Godaba, I. Vitanov, F. Aljaber, A. Ataka, and K. Althoefer, “A bending sensor insensitive to pressure: Soft proprioception based on abraded optical fibres,” in 2020 3rd IEEE International Conference on Soft Robotics (RoboSoft), 2020, pp. 104–109, doi: 10.1109/RoboSoft48309.2020.9115984.

- [37] F. Aljaber and K. Althoefer, “Light Intensity-Modulated Bending Sensor Fabrication and Performance Test for Shape Sensing,” in Lecture Notes in Computer Science, 2019, vol. 11649 LNAI, doi: 10.1007/978-3-030-23807-0_11.

- [38] T. C. Searle, K. Althoefer, L. Seneviratne, and H. Liu, “An optical curvature sensor for flexible manipulators,” in Proceedings - IEEE International Conference on Robotics and Automation, 2013, pp. 4415–4420, doi: 10.1109/ICRA.2013.6631203.

- [39] H. Zhao, K. O’Brien, S. Li, and R. F. Shepherd, “Optoelectronically innervated soft prosthetic hand via stretchable optical waveguides,” Sci. Robot., 2016, doi: 10.1126/scirobotics.aai7529.

- [40] C. B. Teeple, K. P. Becker, and R. J. Wood, “Soft Curvature and Contact Force Sensors for Deep-Sea Grasping via Soft Optical Waveguides,” in IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2018, pp. 1621–1627, doi: 10.1109/IROS.2018.8594270.

- [41] C. To, T. Hellebrekers, J. Jung, S. J. Yoon, and Y. L. Park, “A Soft Optical Waveguide Coupled with Fiber Optics for Dynamic Pressure and Strain Sensing,” IEEE Robot. Autom. Lett., vol. 3, no. 4, 2018, doi: 10.1109/LRA.2018.2856937.

- [42] B. Mosadegh et al., “Pneumatic networks for soft robotics that actuate rapidly,” Adv. Funct. Mater., vol. 24, no. 15, 2014, doi: 10.1002/adfm.201303288.

- [43] H. Bai, S. Li, J. Barreiros, Y. Tu, C. R. Pollock, and R. F. Shepherd, “Stretchable distributed fiber-optic sensors,” Science, vol. 370, no. 6518, 2020, doi: 10.1126/science.aba5504.

- [44] M. A. Robertson and J. Paik, “New soft robots really suck: Vacuum-powered systems empower diverse capabilities,” Sci. Robot., vol. 2, no. 9, 2017, doi: 10.1126/scirobotics.aan6357.

- [45] P. A. Xu, A. K. Mishra, H. Bai, C. A. Aubin, L. Zullo, and R. F. Shepherd, “Optical lace for synthetic afferent neural networks,” Sci. Robot., vol. 4, no. 34, 2019, doi: 10.1126/scirobotics.aaw6304.

- [46] D. O. Amoateng, M. Totaro, M. Crepaldi, E. Falotico, and L. Beccai, “Intelligent Position, Pressure and Depth Sensing in a Soft Optical Waveguide Skin,” 2019, doi: 10.1109/ROBOSOFT.2019.8722775.

- [47] J. Tapia, E. Knoop, M. Mutný, M. A. Otaduy, and M. Bächer, “MakeSense: Automated Sensor Design for Proprioceptive Soft Robots,” Soft Robot., 2019, doi: 10.1089/soro.2018.0162.

- [48] I. M. Van Meerbeek, C. M. De Sa, and R. F. Shepherd, “Soft optoelectronic sensory foams with proprioception,” Sci. Robot., vol. 3, no. 24, p. eaau2489, 2018, doi: 10.1126/scirobotics.aau2489.

- [49] D. Lunni, G. Giordano, E. Sinibaldi, M. Cianchetti, and B. Mazzolai, “Shape estimation based on Kalman filtering: Towards fully soft proprioception,” 2018, doi: 10.1109/ROBOSOFT.2018.8405382.