This entry describes a lossy image compression method that combines principal component analysis (PCA), the discrete wavelet transform (DWT) and canonical Huffman coding (CHC). PCA is applied first as the lossy step, followed by DWT and CHC to improve compression efficiency. Compared with PCA alone, adding DWT and CHC yields reconstructed images with a better peak signal-to-noise ratio (PSNR).

- canonical Huffman coding (CHC)

- 2D discrete wavelet transform (2D DWT)

- hard thresholding

1. Introduction

With the phenomenal rise in the use of digital images in the Internet era, researchers are concentrating on image-processing applications [1][2]. The need for image compression has grown with the pressing need to reduce data size for transmission, particularly given the limited capacity of the Internet. The primary objectives of image compression are to store large amounts of data in a small memory space and to transfer data quickly [2].

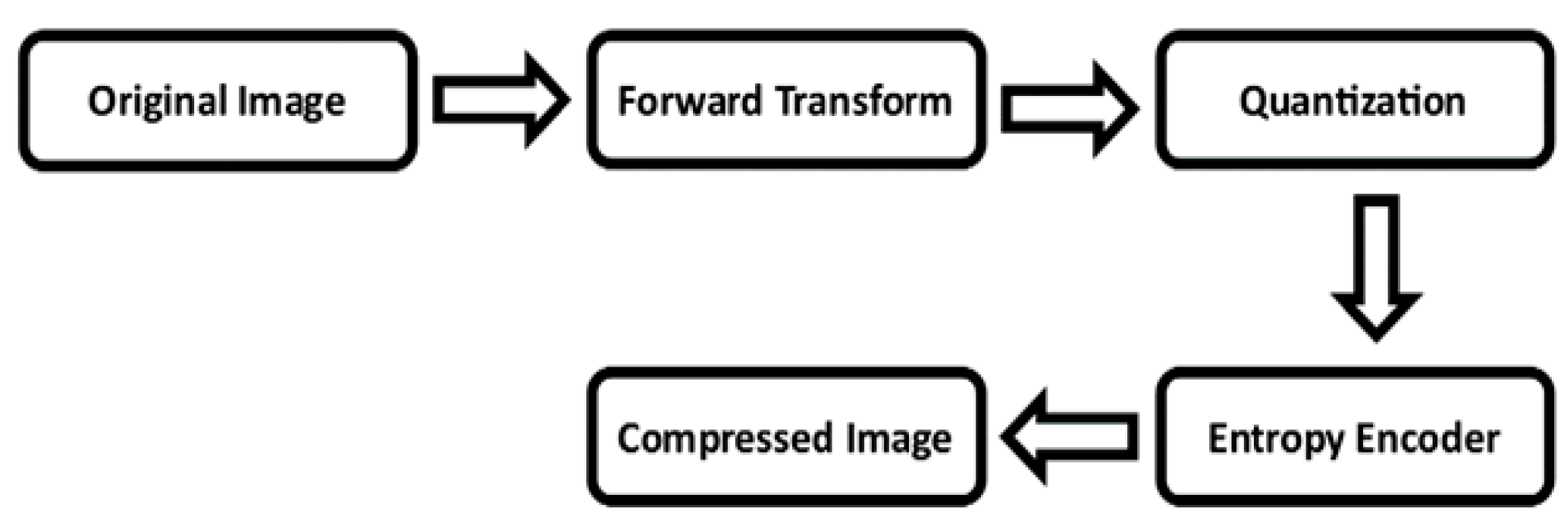

There are primarily two types of image compression methods: lossless and lossy. In lossless compression, the original and the reconstructed images remain exactly the same. On the other hand, in lossy compression, notwithstanding its extensive application in many domains, there can be data loss to a certain extent for greater reduction of redundancy. In lossy compression, the original image is first forward transformed before the final image is quantized. The compressed image is then produced using entropy encoding. This process is shown in Figure 1.

Figure 1. Lossy Image Compression Block Diagram in General.
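The three stages in Figure 1 can be illustrated with a minimal Python sketch. It is not the method of the entry: the pairwise (Haar-style) transform, the quantization step of 4, the sample pixel row and all function names are assumptions chosen for illustration, and the entropy estimate stands in for a real entropy encoder.

```python
import math
from collections import Counter

def haar_1d(signal):
    """Forward transform: pairwise averages followed by pairwise half-differences."""
    pairs = list(zip(signal[0::2], signal[1::2]))
    avg = [(a + b) / 2 for a, b in pairs]
    diff = [(a - b) / 2 for a, b in pairs]
    return avg + diff

def quantize(coeffs, step):
    """Uniform quantization: the lossy stage, because rounding discards detail."""
    return [round(c / step) for c in coeffs]

def entropy_bits(symbols):
    """Empirical Shannon entropy in bits per symbol (memoryless model).

    Used here as a stand-in for the cost of an ideal entropy encoder.
    """
    counts = Counter(symbols)
    n = len(symbols)
    return -sum((k / n) * math.log2(k / n) for k in counts.values())

pixels = [100, 102, 101, 99, 150, 152, 149, 151]  # hypothetical row of pixels
symbols = quantize(haar_1d(pixels), step=4)

print(symbols)                 # quantized transform coefficients
print(entropy_bits(pixels))    # bits per symbol before transform and quantization
print(entropy_bits(symbols))   # bits per symbol after transform and quantization
```

In this toy case the transform pushes the small differences toward zero, quantization merges nearby values, and the symbol stream therefore has lower entropy than the raw pixels.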

Compression techniques can be further divided into two groups. Firstly, there are direct image compression methods, which are applied to image samples in the spatial domain. These include block truncation techniques, namely block truncation coding (BTC) [5], absolute moment block truncation coding (AMBTC) [6], modified block truncation coding (MBTC) [7], improved block truncation coding using K-means quad clustering (IBTC-KQ) [8] and adaptive block truncation coding using an edge-based quantization approach (ABTC-EQ) [9], as well as vector quantization [10].

Secondly, there are image transformation methods, which include singular value decomposition (SVD) [11], principal component analysis (PCA) [12], discrete cosine transform (DCT) [13] and discrete wavelet transform (DWT) [14]. Through these methods, image samples are transformed from the spatial domain to the frequency domain, thereby concentrating the energy of the image in a small number of coefficients.
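A small sketch can make the energy-concentration claim concrete. It assumes an orthonormal single-level 2D Haar transform on a hypothetical smooth 4 × 4 patch; the function names, the patch and the threshold of 3 are illustrative assumptions, and sub-band naming (LH versus HL) varies between texts.

```python
def haar2d_level1(img):
    """Single-level orthonormal 2D Haar DWT of an image with even dimensions.

    Returns the sub-bands (LL, LH, HL, HH) as lists of rows.
    """
    ll, lh, hl, hh = [], [], [], []
    for r in range(0, len(img), 2):
        row_ll, row_lh, row_hl, row_hh = [], [], [], []
        for c in range(0, len(img[0]), 2):
            a, b = img[r][c], img[r][c + 1]
            d, e = img[r + 1][c], img[r + 1][c + 1]
            row_ll.append((a + b + d + e) / 2)  # approximation (local average)
            row_lh.append((a - b + d - e) / 2)  # differences across columns
            row_hl.append((a + b - d - e) / 2)  # differences across rows
            row_hh.append((a - b - d + e) / 2)  # diagonal differences
        ll.append(row_ll)
        lh.append(row_lh)
        hl.append(row_hl)
        hh.append(row_hh)
    return ll, lh, hl, hh

def energy(band):
    """Sum of squared values in a 2D band."""
    return sum(v * v for row in band for v in row)

def hard_threshold(band, t):
    """Zero every coefficient whose magnitude is below t; keep the rest unchanged."""
    return [[v if abs(v) >= t else 0.0 for v in row] for row in band]

# Hypothetical smooth patch: intensity rises gently along rows and columns.
img = [[10 + 2 * c + r for c in range(4)] for r in range(4)]
ll, lh, hl, hh = haar2d_level1(img)

total = sum(energy(b) for b in (ll, lh, hl, hh))
ll_share = energy(ll) / total  # fraction of the energy held by the LL band
details_kept = sum(
    v != 0
    for band in (lh, hl, hh)
    for row in hard_threshold(band, 3)
    for v in row
)
print(ll_share, details_kept)
```

For this patch almost all of the energy sits in the four LL coefficients, and hard thresholding removes every detail coefficient. Natural images are less smooth, so the share would be lower in practice.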

Researchers are increasingly turning to the DWT because of its pyramidal (dyadic) wavelet decomposition properties [15]. It enables high compression and helps produce high-quality reconstructed images. The present study demonstrates the benefits of a DWT-based strategy using canonical Huffman coding. In their preliminary work, the present authors described this entropy encoder [16]. In the course of that analysis, a comparison of canonical Huffman coding with basic Huffman coding showed that the former has a smaller code-book size and accordingly requires less processing time.
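The smaller code book of canonical Huffman coding follows from the fact that the codes can be rebuilt from the code lengths alone. The sketch below is a generic textbook construction, not the authors' implementation; the function names and the sample string are assumptions.

```python
import heapq
from collections import Counter

def huffman_code_lengths(symbols):
    """Return {symbol: code length} from a standard Huffman tree build."""
    freq = Counter(symbols)
    if len(freq) == 1:  # degenerate case: a single distinct symbol
        return {next(iter(freq)): 1}
    # Heap items: (weight, unique tie-breaker, {symbol: depth so far}).
    heap = [(w, i, {s: 0}) for i, (s, w) in enumerate(sorted(freq.items()))]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        w1, _, d1 = heapq.heappop(heap)
        w2, _, d2 = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol in them one level deeper.
        merged = {s: depth + 1 for s, depth in {**d1, **d2}.items()}
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]

def canonical_codes(lengths):
    """Assign canonical codes: sort by (length, symbol), then count upward."""
    code, prev_len, book = 0, 0, {}
    for sym, length in sorted(lengths.items(), key=lambda kv: (kv[1], kv[0])):
        code <<= length - prev_len  # append zeros when the code length grows
        book[sym] = format(code, "0{}b".format(length))
        code += 1
        prev_len = length
    return book

lengths = huffman_code_lengths("aaaabbbccd")  # hypothetical symbol stream
book = canonical_codes(lengths)
print(lengths)
print(book)
```

A decoder that receives only `lengths` can call `canonical_codes` and obtain the same `book`, so the bit patterns themselves never need to be stored or transmitted.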

In the present study, the problem of raising the compression ratio for standard test images was addressed while improving the quality of the reconstructed image, and the relevant parameters were analyzed thoroughly: PSNR, the structural similarity index measure (SSIM), the compression ratio (CR) and bits per pixel (BPP). PCA, DWT, normalization, thresholding and canonical Huffman coding were employed to achieve high compression with excellent image quality.
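Three of these parameters have simple closed forms, sketched below using their common textbook definitions. The entry does not state the exact formulas the authors used, so the definitions, the 8-bit peak value of 255, the function names and the sample values are assumptions. SSIM is omitted because it needs local window statistics.

```python
import math

def mse(original, reconstructed):
    """Mean squared error between two images given as lists of rows."""
    diffs = [
        (o - r) ** 2
        for row_o, row_r in zip(original, reconstructed)
        for o, r in zip(row_o, row_r)
    ]
    return sum(diffs) / len(diffs)

def psnr(original, reconstructed, peak=255):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(original, reconstructed)
    return math.inf if err == 0 else 10 * math.log10(peak ** 2 / err)

def compression_ratio(original_bits, compressed_bits):
    """CR: size of the original divided by size of the compressed data."""
    return original_bits / compressed_bits

def bits_per_pixel(compressed_bits, num_pixels):
    """BPP: average number of compressed bits spent on each pixel."""
    return compressed_bits / num_pixels

# Hypothetical 2 x 2 example: two pixels are off by one grey level.
original = [[100, 100], [100, 100]]
reconstructed = [[101, 99], [100, 100]]
print(psnr(original, reconstructed))

# Hypothetical sizes: an 8-bit 512 x 512 image compressed to 262,144 bits.
pixels = 512 * 512
print(compression_ratio(8 * pixels, 262144))  # 8.0
print(bits_per_pixel(262144, pixels))         # 1.0
```

With these definitions, CR and BPP are tied together for a fixed bit depth: an 8-bit image at CR = 8 is stored at 1 bit per pixel.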

During the study, the present authors developed a lossy compression technique using PCA [12], which proved to be marginally superior to the SVD method [17] and the DWT algorithm [16] for both grayscale and color images. Canonical Huffman coding [16] was then used to compress the reconstructed image further. The authors also compared the parameters obtained with their proposed method against those reported for the block truncation [18] and DCT-based approaches [19].

PCA-DWT-CHC extends the previously reported DWT-based work [16]. The proposed method uses the Haar wavelet transform to decompose images to a single level and then incorporates PCA with DWT to improve performance. In the previous work, images were decomposed up to three levels using a Haar wavelet, and PCA was not included as a pre-processing compression method. The proposed method yields high-quality images with a high compression ratio and requires less computing time (45% less on average) than the previously reported work. During the study, the authors examined several images that are frequently cited in the literature. Slice resolutions of 512 × 512 and 256 × 256 were used, which are considered to be the minimum standards in the industry [20]. The present authors also calculated the compression ratio and the PSNR values of their methods and compared them with other research findings [3][5][6][7][8][9][16][20].
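The PCA stage can be illustrated in isolation with a minimal sketch. The entry does not describe how PCA is arranged relative to the DWT, how many components are kept, or how the image is split into samples, so everything here is an assumption: rows are treated as samples, only the first principal component is kept, and it is found by power iteration to avoid external libraries.

```python
import math

def top_principal_component(rows, iters=100):
    """Return (mean, unit vector) for the first principal component of `rows`."""
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - mean[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d).
    cov = [
        [sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]
    v = [1.0] * d  # arbitrary start vector
    for _ in range(iters):  # power iteration toward the dominant eigenvector
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:  # zero variance, or a start vector orthogonal to the data
            break
        v = [x / norm for x in w]
    return mean, v

def compress(rows, mean, v):
    """Keep one score per row: its projection onto the component."""
    return [sum((r[j] - mean[j]) * v[j] for j in range(len(v))) for r in rows]

def reconstruct(scores, mean, v):
    """Rebuild approximate rows from the scores, the mean and the component."""
    return [[mean[j] + s * v[j] for j in range(len(v))] for s in scores]

# Hypothetical data whose rows all lie on one line, so one component suffices.
rows = [[1, 2, 3], [2, 4, 6], [3, 6, 9], [4, 8, 12]]
mean, v = top_principal_component(rows)
scores = compress(rows, mean, v)
restored = reconstruct(scores, mean, v)
max_error = max(abs(a - b) for ra, rb in zip(rows, restored) for a, b in zip(ra, rb))
print(scores, max_error)
```

Here twelve values are represented by four scores plus the mean and one component vector. For real images the rows do not lie on a single line, so discarding components introduces the loss that makes PCA a lossy step.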

2. 2D DWT and PCA with Canonical Huffman Encoding

Published work on this subject covers a variety of other methods. One approach that has gained considerable attention in recent years is a hybrid algorithm that combines DWT with other transformation tools [10]. Ahmed et al. [21] explained in detail their method of compressing ECG signals using a combination of SVD and DWT. Mathews et al. [8] presented an improved method for the block truncation coding of grayscale images known as IBTC-KQ, which uses K-means quad clustering to achieve better results. Boucetta and Melkemi [22] introduced a method for compressing color images using the DWT and genetic algorithms (GAs). Messaoudi et al. [3] proposed a technique called DCT-DLUT, which uses the discrete cosine transform together with a difference lookup table (DLUT) to encode the differences between indices. It is a quick and effective lossy method for compressing color images.

Ammah and Owusu [10] proposed a technique, DWT-VQ (discrete wavelet transform with vector quantization), that generates a YCbCr image from an RGB image. This technique compresses images while maintaining their perceptual quality in a clinical setting. Pandey et al. [23] presented a compression technique that uses the Haar wavelet transform to compress medical images. Eleiwy [24] introduced a method for compressing images using the discrete Laguerre wavelet transform (DLWT). However, this method concentrates only on the approximation coefficients from the four sub-bands obtained after DLWT decomposition, which may affect the quality of the reconstructed images. Maintaining good image quality while achieving a high compression rate can prove considerably challenging. Moreover, Eleiwy did not use the peak signal-to-noise ratio (PSNR) or the structural similarity index measure (SSIM) to evaluate the quality of the reconstructed image.

Alosta and Souri [25] examined arithmetic coding for data compression. They measured the compression ratio and the bit rate to determine the extent of the compression. However, their study did not assess the quality of the compressed images, specifically the PSNR or SSIM values that correspond to the reported compression ratio (CR) or bits per pixel (BPP) values.

This entry is adapted from the peer-reviewed paper 10.3390/e25101382

References

- Latha, P.M.; Fathima, A.A. Collective Compression of Images using Averaging and Transform coding. Measurement 2019, 135, 795–805.

- Farghaly, S.H.; Ismail, S.M. Floating-point discrete wavelet transform-based image compression on FPGA. AEU Int. J. Electron. Commun. 2020, 124, 153363–153373.

- Messaoudi, A.; Srairi, K. Colour image compression algorithm based on the dct transform using difference lookup table. Electron. Lett. 2016, 52, 1685–1686.

- Ge, B.; Bouguila, N.; Fan, W. Single-target visual tracking using color compression and spatially weighted generalized Gaussian mixture models. Pattern Anal. Appl. 2022, 25, 285–304.

- Delp, E.; Mitchell, O. Image Compression Using Block Truncation Coding. IEEE Trans. Commun. 1979, 27, 1335–1342.

- Lema, M.; Mitchell, O. Absolute Moment Block Truncation Coding and Its Application to Color Images. IEEE Trans. Commun. 1984, 32, 1148–1157.

- Mathews, J.; Nair, M.S.; Jo, L. Modified BTC algorithm for gray scale images using max-min quantizer. In Proceedings of the 2013 International Mutli-Conference on Automation, Computing, Communication, Control and Compressed Sensing (iMac4s), Kottayam, India, 22–23 March 2013; pp. 377–382.

- Mathews, J.; Nair, M.S.; Jo, L. Improved BTC Algorithm for Gray Scale Images Using K-Means Quad Clustering. In Proceedings of the 19th International Conference on Neural Information Processing, ICONIP 2012, Part IV, LNCS 7666, Doha, Qatar, 12–15 November 2012; pp. 9–17.

- Mathews, J.; Nair, M.S. Adaptive block truncation coding technique using edge-based quantization approach. Comput. Electr. Eng. 2015, 43, 169–179.

- Ammah, P.N.T.; Owusu, E. Robust medical image compression based on wavelet transform and vector quantization. Inform. Med. Unlocked 2019, 15, 100183.

- Kumar, R.; Patbhaje, U.; Kumar, A. An efficient technique for image compression and quality retrieval using matrix completion. J. King Saud. Univ.-Comput. Inf. Sci. 2022, 34, 1231–1239.

- Wei, Z.; Lijuan, S.; Jian, G.; Linfeng, L. Image compression scheme based on PCA for wireless multimedia sensor networks. J. China Univ. Posts Telecommun. 2016, 23, 22–30.

- Almurib, H.A.F.; Kumar, T.N.; Lombardi, F. Approximate DCT Image Compression Using Inexact Computing. IEEE Trans. Comput. 2018, 67, 149–159.

- Ranjan, R.; Kumar, P. An Efficient Compression of Gray Scale Images Using Wavelet Transform. Wirel. Pers. Commun. 2022, 126, 3195–3210.

- Cheremkhin, P.A.; Kurbatova, E.A. Wavelet compression of off-axis digital holograms using real/imaginary and amplitude/phase parts. Nat. Res. Sci. Rep. 2019, 9, 7561.

- Ranjan, R. Canonical Huffman Coding Based Image Compression using Wavelet. Wirel. Pers. Commun. 2021, 117, 2193–2206.

- Renkjumnong, W. SVD and PCA in Image Processing. Master’s Thesis, Department of Arts & Science, Georgia State University, Atlanta, GA, USA, 2007.

- Ranjan, R.; Kumar, P. Absolute Moment Block Truncation Coding and Singular Value Decomposition-Based Image Compression Scheme Using Wavelet. In Communication and Intelligent Systems; Lecture Notes in Networks and Systems; Sharma, H., Shrivastava, V., Kumari Bharti, K., Wang, L., Eds.; Springer: Singapore, 2022; Volume 461, pp. 919–931.

- Ranjan, R.; Kumar, P.; Naik, K.; Singh, V.K. The HAAR-the JPEG based image compression technique using singular values decomposition. In Proceedings of the 2022 2nd International Conference on Emerging Frontiers in Electrical and Electronic Technologies (ICEFEET), Patna, India, 24–25 June 2022; pp. 1–6.

- Boujelbene, R.; Boubchir, L.; Jemaa, Y.B. Enhanced embedded zerotree wavelet algorithm for lossy image coding. IET Image Process. 2019, 13, 1364–1374.

- Ahmed, S.M.; Al-Zoubi, Q.; Abo-Zahhad, M. A hybrid ECG compression algorithm based on singular value decomposition and discrete wavelet transform. J. Med. Eng. Technol. 2007, 31, 54–61.

- Boucetta, A.; Melkemi, K.E. DWT Based-Approach for Color Image Compression Using Genetic Algorithm. In Proceedings of the International Conference on Image and Signal Processing—ICISP 2012, Agadir, Morocco, 28–30 June 2012; Elmoataz, A., Mammass, D., Lezoray, O., Nouboud, F., Aboutajdine, D., Eds.; Springer: Berlin, Germany, 2012; pp. 476–484.

- Pandey, A.K.; Chaudhary, J.; Sharma, A.; Patel, H.C.; Sharma, P.D.; Baghel, V.; Kumar, R. Optimum Value of Scale and threshold for Compression of 99mTc-MDP bone scan image using Haar Wavelet Transform. Indian J. Nucl. Med. 2022, 37, 154–161.

- Eleiwy, J.A. Characterizing wavelet coefficients with decomposition for medical images. J. Intell. Syst. Internet Things 2021, 2, 26–32.

- Alosta, M.; Souri, A. Design of Effective Lossless Data Compression Technique for Multiple Genomic DNA Sequences. Fusion Pract. Appl. 2021, 6, 17–25.

- Skodras, A.; Christopoulos, C.; Ebrahimi, T. The JPEG2000 still image compression standard. IEEE Signal Process. Mag. 2001, 18, 36–58.

- Said, A.; Pearlman, W.A. A new, fast, and efficient image codec based on set partitioning in hierarchical trees. IEEE Trans. Circuits Syst. Video Technol. 1996, 6, 243–250.

- Singh, S.; Kumar, V. DWT–DCT hybrid scheme for medical image compression. J. Med. Eng. Technol. 2007, 31, 109–122.

- Wallace, G.K. The JPEG still picture compression standard. IEEE Trans. Consum. Electron. 1992, 38, xviii–xxxiv.