
Please note this is a comparison between Version 2 by Sirius Huang and Version 1 by Kerstin Thurow.

With the in-depth development of Industry 4.0 worldwide, mobile robots have become a research hotspot. Indoor localization has become a key component in many fields and the basis for all actions of mobile robots. Herein, 12 mainstream indoor positioning methods and related positioning technologies for mobile robots are introduced and compared in detail.

- indoor positioning

- mobile robots

- positioning technology

- SLAM

1. Introduction

With continuous innovation in sensor technology and information control technology, and under the impact of a new global industrial revolution, indoor mobile robot technology has developed rapidly. Mobile robots have produced huge economic benefits in laboratories, industry, warehousing and logistics, transportation, shopping, entertainment, and other fields [1]. They can take over manual tasks such as the transportation of goods, monitoring patrols, dangerous operations, and repetitive labor. In addition, mobile robots enable 24 h automated and unmanned operation of factories and laboratories [2]. Achieving high-precision positioning of mobile robots for these functions remains an urgent problem. Positioning refers to the estimation of the position and orientation of a mobile robot in its motion area, which is a prerequisite for robot navigation [3]. It is a difficult problem in the field of mobile robots and a focus of research.

The following sections detail the basic principles of the 12 positioning methods, the localization techniques used, and case studies. The 12 positioning methods are divided into Non-Radio Frequency (IMU, VLC, IR, Ultrasonic, Geomagnetic, LiDAR, and Computer Vision) [20][4] and Radio Frequency (Wi-Fi, Bluetooth, ZigBee, RFID, and UWB).

2. Non-Radio Frequency Technologies

Although radio localization is the most commonly used indoor localization method, LiDAR and Computer Vision are more attractive to researchers in the field of mobile robotics. They are more accurate and require no modification to the environment. This section details the non-radio methods: IMU, VLC, IR, Ultrasonic, Geomagnetic, LiDAR, and Computer Vision.

2.1. Inertial Measurement Unit

The IMU is the most basic sensor in mobile robots, and all mobile robots are equipped with an IMU. The IMU is composed of an accelerometer and a gyroscope, which measure the acceleration, angular velocity, and angle increment of the mobile robot. Real-time positioning of the robot is achieved by integrating these measurements to obtain the motion trajectory and pose. This method relies only on the internal information of the robot for autonomous navigation [23][5]. Therefore, the calibration accuracy of the IMU directly affects the positioning accuracy of the mobile robot.

The main advantage of inertial navigation is strong resistance to interference: the accuracy of inertial sensors is not reduced by external signals such as Wi-Fi or sound waves. The IMU also works fast, so inertial navigation is more suitable for fast-moving objects than other positioning technologies. The main disadvantage is that the positioning error of inertial navigation is cumulative: each positioning error carries over into the next position estimate, so without a correction process the error keeps growing as the robot runs. Therefore, researchers usually combine inertial navigation with other navigation methods. The combined method retains the strong anti-interference and high speed of inertial navigation while limiting the error to a certain range.

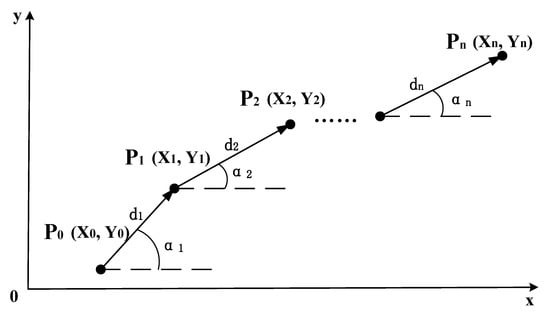

The positioning technique of the IMU is dead reckoning [12][6]. Starting from the known initial position and pose of the mobile robot, dead reckoning calculates the current position from the moving distance and rotation angle recorded by the IMU. The current position $P_n(X_n, Y_n)$ is given by Equation (1), and the schematic diagram of the dead reckoning algorithm is shown in Figure 1:

$$X_n = X_0 + \sum_{i=1}^{n} d_i \cos\alpha_i, \qquad Y_n = Y_0 + \sum_{i=1}^{n} d_i \sin\alpha_i \tag{1}$$

where $P_0(X_0, Y_0)$ is the starting position of the mobile robot, and $d_i$ and $\alpha_i$ are the moving distance and the rotation angle of the mobile robot at step $i$.

Figure 1. Schematic diagram of the IMU dead reckoning algorithm.
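The dead reckoning update can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the cited work: the function name `dead_reckoning`, the step format, and the assumption that each angle is an absolute heading measured from the x-axis are all chosen here for the example. The second part shows why the error is cumulative: a constant heading bias of 2 degrees is never corrected, so the position error keeps growing with the travelled distance.

```python
import math

def dead_reckoning(start, steps):
    """Accumulate (distance, heading) steps from a known start position.

    start: (x0, y0) in metres.
    steps: iterable of (d, alpha) pairs; d is the distance moved in metres and
           alpha the heading in radians (assumed absolute, from the x-axis).
    Returns the list of visited positions, starting with `start`.
    """
    x, y = start
    path = [(x, y)]
    for d, alpha in steps:
        x += d * math.cos(alpha)
        y += d * math.sin(alpha)
        path.append((x, y))
    return path

# Drive 1 m along x, then 1 m along y.
path = dead_reckoning((0.0, 0.0), [(1.0, 0.0), (1.0, math.pi / 2)])
final_x, final_y = path[-1]

# Fifty 1 m steps straight ahead, measured with a constant 2-degree heading bias.
bias = math.radians(2.0)
biased = dead_reckoning((0.0, 0.0), [(1.0, bias)] * 50)
# Distance between the biased estimate and the true end point (50, 0).
drift = math.hypot(biased[-1][0] - 50.0, biased[-1][1])
print(final_x, final_y, drift)
```

In this toy run the uncorrected bias leaves the estimate well over a meter away from the true end point after 50 m, which is the behaviour that motivates fusing the IMU with an absolute positioning method.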

The IMU is usually used as an auxiliary navigation method to assist other positioning methods to estimate the position of the robot. The anti-interference feature of the IMU can be used to correct the positioning information. In recent literature, IMU localization methods focus on how to perform data fusion. The fusion algorithms used are mainly based on filters, but machine learning is also used.

2.2. Visible Light Communication

Localization based on visible light is a new type of localization method. The key element of VLC is the LED light [16][7]. Information is encoded and transmitted by modulating the intensity of the LED, and a photosensitive sensor receives and decodes the high-frequency flickering signal. When photosensors are used to locate the position and orientation of the LEDs, the main localization techniques are RSSI, TOA, and AOA, as in radio methods [37][8]. Alternatively, a camera can be used to capture images of the LEDs [38][9], an approach that is similar to Computer Vision.

LEDs are energy-saving lighting devices characterized by low energy consumption, long life, environmental friendliness, and immunity to electromagnetic interference. Because LED lights are now widespread, the cost of modifying the environment for VLC positioning is low, as is the case for Wi-Fi. LED lights can be installed indoors in large numbers and serve both lighting and communication. Because the signal is modulated at a high frequency, information is transmitted without disturbing the illumination [39][10]. Moreover, light does not interfere with radio frequency equipment and can be safely used in places where radio frequency signals are prohibited. A major disadvantage of VLC is that it can only communicate within the line-of-sight (LOS) range.

Improvements to RSSI-based VLC positioning focus on optimizing the mapping from signal strength to position. An improved Bayesian-based fingerprint algorithm [37][8], as well as the optimization of a baseline smoother based on an extended Kalman filter and a central difference Kalman filter, have been described [40][11]. The VLC method can be fused with other localization methods, such as the IMU [16][7] and the encoder [41][12], and the corresponding fusion algorithms are the EKF and the particle filter. Similar to fingerprint recognition, which requires building a signal strength library in advance, LEDs require a prior calibration stage.

2.3. Infrared Detection Technologies

Infrared is an electromagnetic wave that is invisible to the human eye and has a longer wavelength than visible light. Infrared-based positioning of indoor mobile robots relies on artificial landmarks with known positions, which are classified as active or passive according to whether they require energy. With active landmarks, a mobile robot equipped with an infrared transmitter emits infrared rays, and a receiver installed in the environment receives them and calculates the position of the robot, which can achieve sub-meter accuracy. With passive landmarks, landmarks that reflect infrared light are arranged in the environment; the mobile robot receives the reflected infrared light and obtains the landmark ID, position, angle, and other information [15][13]. At present, infrared-based positioning technology is relatively mature, but infrared penetrates obstacles poorly, so it can only perform line-of-sight measurement and control. It is also susceptible to environmental influences such as sunlight and lighting, so its application is limited.

The Center for Life Science Automation (CELISCA) has developed a complete automatic transportation system for multiple mobile robots across floors [15][13]. Hagisonic’s StarGazer was selected as the positioning method. It performs indoor positioning based on infrared passive landmarks and obtains the relative position of the robot and the passive landmarks through a visual calculation to evaluate the robot’s own position and orientation. The average positioning accuracy is about 2 cm, and the maximum error is within 5 cm. Bernardes et al. designed an infrared-based active localization sensor [48][14]. Infrared LED lights are arranged on the ceiling, and the mobile robot is equipped with an infrared light receiver. Combined with an EKF, the pose of the mobile robot is calculated using the emission angle of the LED.

2.4. Ultrasonic Detection Technologies

Ultrasound is a sound wave with a vibration frequency above 20 kHz, which exceeds the upper limit of human hearing. At present, there are three ultrasonic ranging methods: phase detection, acoustic amplitude detection, and time detection. The phase detection method has the advantage of better detection accuracy. It calculates the distance from the ultrasonic wavelength and the phase difference between the transmitted and the received wave. Its drawback is that the measured phase difference is ambiguous up to multiples of 2π, so the measurement range must stay within one wavelength. The acoustic amplitude detection method measures the amplitude of the returned wave. It is easy to implement, but it is easily affected by factors such as the propagation medium, which makes the measurement inaccurate. Most practical applications use the time detection method, which is based on the TOF principle: the distance is obtained from the speed of sound and the time difference between the transmitter emitting the ultrasonic wave and the receiver detecting it, for example after reflection from an obstacle [17][15]. Although the positioning accuracy of ultrasonic waves can theoretically reach the centimeter level [49][16], the propagation speed of ultrasonic waves differs between media and changes with temperature and air pressure, so temperature compensation is sometimes required.
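As a rough sketch of the time detection method with temperature compensation, the following Python snippet converts a round-trip echo time into a distance. The linear formula for the speed of sound in dry air (331.3 + 0.606·T m/s, with T in degrees Celsius) is a common textbook approximation and is an assumption here, as are the function names; it is not taken from the cited works.

```python
def speed_of_sound(temp_c):
    """Approximate speed of sound in dry air (m/s) at temp_c degrees Celsius."""
    return 331.3 + 0.606 * temp_c

def echo_distance(round_trip_s, temp_c=20.0):
    """Distance to a reflecting obstacle from the round-trip time of an echo.

    The wave travels to the obstacle and back, hence the division by two.
    """
    return speed_of_sound(temp_c) * round_trip_s / 2.0

round_trip = 0.010  # 10 ms between sending the pulse and receiving the echo
d_20 = echo_distance(round_trip, temp_c=20.0)
d_0 = echo_distance(round_trip, temp_c=0.0)
print(d_20, d_0, d_20 - d_0)
```

For the same 10 ms echo, ignoring a 20 °C temperature difference shifts the result by about 6 cm, which is already larger than the centimeter-level accuracy mentioned above.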

2.5. Geomagnetic Field Detection Technologies

The earth is surrounded by a natural geomagnetic field formed between the north and south poles, and the strength of the magnetic field varies with latitude and longitude. At present, indoor positioning based on geomagnetism mainly uses geomagnetic fingerprints. Magnetic fingerprints are spatially unique, with the geomagnetic modulus values in a small contiguous region forming a fingerprint sequence [54][17]. Geomagnetic fingerprint positioning includes two stages: offline training and online positioning. During the offline training stage, a geomagnetic sensor collects the geomagnetic information at the sampling points, and the information is stored in the fingerprint database. In the online positioning stage, the geomagnetic information obtained at the current location is used to form a fingerprint, which is matched against the fingerprint database. The geomagnetic field is little affected by external factors, has no cumulative error, and has no NLOS problem, but the accuracy is only at the meter level. Generally, geomagnetism is used as an auxiliary positioning tool to correct accumulated errors, or together with other sensors for information fusion. The formula for geomagnetic indoor positioning is:

where P is the position of the device being located, B is the measured magnetic field strength at the device’s location, B0 is the magnetic field strength at a reference point, and B1 is the magnetic field strength at another reference point.
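The two-stage fingerprint procedure described above (offline survey, online matching of a fingerprint sequence) can be illustrated with a simple sliding-window match. This is only a sketch under stated assumptions: the survey values are invented, the samples are assumed to be equally spaced along a path, and the sum-of-squared-differences cost and the function name `match_sequence` are choices made for this example rather than the method of any cited paper.

```python
def match_sequence(fingerprint_map, observed):
    """Return (start_index, cost) of the map window that best matches `observed`.

    fingerprint_map: magnetic field magnitudes (e.g. in microtesla) recorded
                     offline at equally spaced points along a surveyed path.
    observed: a short sequence of magnitudes measured online.
    """
    n = len(observed)
    best_index, best_cost = None, float("inf")
    for i in range(len(fingerprint_map) - n + 1):
        window = fingerprint_map[i:i + n]
        # Sum of squared differences between the stored and measured sequence.
        cost = sum((m - o) ** 2 for m, o in zip(window, observed))
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index, best_cost

# Offline stage: hypothetical survey values along a corridor.
survey = [48.1, 47.6, 49.3, 52.0, 50.4, 46.9, 45.2, 47.8, 51.1, 49.0]
# Online stage: three noisy readings taken while the robot moves.
online = [52.2, 50.1, 47.0]
index, cost = match_sequence(survey, online)
print(index, cost)
```

Matching a sequence rather than a single reading reflects the point made above that the modulus values of a small contiguous region, not one isolated value, form the fingerprint.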

2.6. LiDAR Detection Technologies

LiDAR systems consist of an optical transmitter, a reflected light detector, and a data processing system. First, the optical transmitter emits discrete laser pulses into the surrounding environment. The laser pulses are reflected when they encounter obstacles. The reflected laser light is received by the reflected light detector, and the data are then sent to the data processing system to obtain a two-dimensional image or 3D information of the surrounding environment. LiDAR systems are active systems since they emit laser pulses and detect the reflected light. This feature allows the mobile robot to work in a dark environment, requires no modification of the environment, and makes LiDAR highly universal. In indoor situations, 2D LiDAR is mainstream, and 3D LiDAR is usually used in the field of unmanned driving [56][18].

2D LiDAR SLAM is the mainstream method for the indoor positioning of mobile robots. Without prior information, LiDAR SLAM uses LiDAR as a sensor to obtain data about the surrounding environment, estimate the robot's pose, and draw an environment map based on the pose information. In this way, simultaneous localization and mapping is realized. At present, there are two types of 2D LiDAR SLAM methods to solve the indoor positioning problem of mobile robots: filter-based and graph-based [57][19]. The problem to be solved is the estimation of a posterior probability distribution over the robot’s location given sensor measurements and control inputs. This can be stated as follows:

$$P(x_t \mid z_{1:t}, u_{1:t}) = \eta \, P(z_t \mid x_t) \int P(x_t \mid x_{t-1}, u_t) \, P(x_{t-1} \mid z_{1:t-1}, u_{1:t-1}) \, dx_{t-1}$$

where $x_t$ represents the robot’s state (position and orientation) at time $t$; $z_{1:t}$ represents the LiDAR observation data from time 1 to $t$; $u_{1:t}$ represents the robot control input from time 1 to $t$; $P(x_t \mid z_{1:t}, u_{1:t})$ represents the posterior probability of the robot’s state at time $t$, given the observation data and control input; $P(z_t \mid x_t)$ represents the likelihood of the observation data $z_t$, given the robot state $x_t$; $P(x_t \mid x_{t-1}, u_t)$ represents the transition probability of the current state $x_t$, given the previous state $x_{t-1}$ and the current control input $u_t$; and $\eta$ is a normalization factor that ensures the sum of probabilities is 1. By iterating this formula, the robot’s position and orientation can be estimated from the LiDAR observation data and the robot control input.
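The recursion described above alternates a prediction with the transition model and a correction with the observation likelihood, followed by normalization. A minimal discrete (histogram) version can be sketched in Python. Everything specific in it is an assumption made for illustration: the circular corridor of eight cells, the "door"/"wall" observations standing in for LiDAR scans, and the probabilities `p_move` and `p_hit`.

```python
def predict(belief, move, p_move=0.8):
    """Motion update: sum over previous states weighted by P(x_t | x_{t-1}, u_t).

    With probability p_move the robot advances `move` cells on a circular
    corridor; otherwise it stays in place (a deliberately crude motion model).
    """
    n = len(belief)
    return [p_move * belief[(i - move) % n] + (1 - p_move) * belief[i]
            for i in range(n)]

def correct(belief, world, z, p_hit=0.9):
    """Measurement update: weight by P(z_t | x_t), then normalise with eta."""
    weighted = [b * (p_hit if world[i] == z else 1 - p_hit)
                for i, b in enumerate(belief)]
    eta = 1.0 / sum(weighted)
    return [eta * w for w in weighted]

world = ["door", "wall", "wall", "door", "wall", "wall", "wall", "wall"]
belief = [1.0 / len(world)] * len(world)  # no prior knowledge of the position

# Sequence of (control input u_t in cells, observation z_t).
for u, z in [(0, "door"), (1, "wall"), (1, "wall"), (1, "door")]:
    belief = predict(belief, u)
    belief = correct(belief, world, z)

estimate = max(range(len(world)), key=lambda i: belief[i])
print(estimate, [round(b, 3) for b in belief])
```

After the first "door" observation the belief is split between the two door cells; only the later observations, combined with the motion commands, single out one cell. EKF and particle filter SLAM replace this histogram with a Gaussian or a set of samples, but follow the same predict and correct cycle.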

The filter-based SLAM method was proposed in the 1990s and has been widely studied and applied [58][20]. Its principle is to use recursive Bayesian estimation to iteratively update the posterior probability distribution of the robot pose, constructing the map incrementally and achieving localization. The most basic algorithms are EKF SLAM and particle filter SLAM. Gmapping SLAM and Hector SLAM are now commonly used methods.

EKF SLAM uses a first-order Taylor expansion to linearize the nonlinear robot motion model and observation model. Cadena et al. improved EKF SLAM based on directional endpoint features [58][20].

The particle filter RBPF SLAM, also known as sequential Monte Carlo, is a recursive algorithm that implements Bayesian filtering through a non-parametric Monte Carlo method [59,60][21][22]. Zhang et al. used the PSO algorithm to improve the particle filter [20][4]. Nie et al. added loop detection and correction functions [61][23]. Chen et al. proposed a heuristic Monte Carlo algorithm (HMCA) based on Monte Carlo localization and discrete Hough transform (DHT) [62][24]. Garrote et al. added reinforcement learning to particle filter-based localization [63][25]. Yilmaz and Temeltas employed self-adaptive Monte Carlo (SA-MCL) based on an ellipse-based energy model [64][26]. Based on the EKF and RBPF, FastSLAM combines the advantages of both. It uses a particle filter for path estimation and a Kalman filter for the maintenance of map state variables. FastSLAM has great improvements in computational efficiency and scalability. Yan and Wong integrated particle filter and FastSLAM algorithms to improve the localization operation efficiency [65][27].

In 2007, Gmapping SLAM was released as open source, which is an important achievement in the field of laser SLAM [66][28]. It is a particle filter-based method. On this basis, many companies and researchers have applied Gmapping in mobile robot products [67,68][29][30]. Norzam et al. studied the parameters in the Gmapping algorithm [69][31]. Shaw et al. combined Gmapping and particle swarm optimization [70][32].

Hector SLAM is also a classic open-source SLAM algorithm based on filtering. The main difference from Gmapping is that it does not require odometer data and can construct maps based on laser information alone [71][33]. Garrote et al. fused mobile Marvelmind beacons and Hector SLAM using PF [72][34]. Teskeredzic et al. created a low-cost, easily scalable Unmanned Ground Vehicle (UGV) system based on Hector SLAM [73][35].

The difference between graph-based SLAM and particle filter-based iterative methods is that graph-based SLAM estimates the pose and trajectory of mobile robots entirely based on observational information. All collected data are recorded, and finally, computational mapping is performed [74][36]. The pose graph represents the motion trajectory of the mobile robot. The pose of the robot is a node of the pose graph, and an edge is formed according to the relationship between the poses. This process of extracting feature points from LiDAR point cloud data is called the front end. The corresponding back end is to further optimize and adjust the edges connected by the node poses, and the optimization is based on the constraint relationship between the poses.

The methods of 2D LiDAR SLAM based on graph optimization include Karto SLAM [75][37], published by Konolige et al. in 2010, and the Cartographer algorithm, open-sourced by Google in 2016 [76][38].

Cartographer is currently the best open-source 2D laser SLAM algorithm in terms of mapping performance. Cartographer SLAM uses odometer and IMU data for trajectory estimation. Using the estimated value of the robot’s pose as the initial value, the LiDAR data are matched and the value of the pose estimator is updated. After a frame of LiDAR data is subjected to motion filtering, it is superimposed to form a submap. Finally, all submaps are formed into a complete environment map through loop closure detection and back-end optimization [76][38]. Deng et al. divided the environment into multiple subgraphs, reducing the computational cost of the Cartographer [77][39]. Sun et al. improved Cartographer’s boundary detection algorithm to reduce cost and error rate [57][19]. Gao et al. proposed a new graph-optimized SLAM method for orientation endpoint features and polygonal graphs, which experimentally proved to perform better than Gmapping and Karto [78][40].

LiDAR SLAM cannot provide color information, while vision can provide an informative visual map. Some researchers have integrated laser SLAM and visual SLAM to solve geometrically similar environmental problems, ground material problems, and global localization problems [26,79,80,81,82][41][42][43][44][45]. Visual-semantic SLAM maps are built based on laser SLAM maps [83,84][46][47]. There is also fusion with other localization methods: odometer [85][48], Wi-Fi [86,87][49][50], IMU [29,30][51][52], encoder, RTK, IMU, and UWB [36][53].

To solve the problem of a large amount of computation in SLAM, Sarker et al. introduced cloud servers into LiDAR SLAM [22][54]. Li et al. used cloud computing to optimize a Monte Carlo localization algorithm [88][55]. Recently, some researchers have also performed a lateral comparison between localization methods and algorithms. Rezende et al. compared attitude estimation algorithms based on wheel, vision, and LiDAR odometry, as well as ultra-wideband radio signals, all fused with the IMU [89][56]. The results strongly suggested that LiDAR has the best accuracy. Shen et al. compared Gmapping, Hector SLAM, and Cartographer SLAM, and the results indicate that Cartographer performs best in complex working environments, Hector SLAM is suitable for working in corridor-type environments, and Gmapping SLAM is most suitable for simple environments [90][57].

2.7. Computer Vision Detection Technologies

Computer Vision uses cameras to obtain environmental image information. It is designed to recognize and understand content in images/videos to help mobile robots localize. Computer Vision localization methods are divided into beacon-based absolute localization and visual odometry-based SLAM methods. Among them, visual SLAM is a hot spot for the indoor positioning of mobile robots.

Absolute positioning based on beacons is the most direct positioning method. The most common beacon is the QR code [91,92,93,94,95,96,97][58][59][60][61][62][63][64]. QR codes can provide location information directly in the video image. By distributing QR codes appropriately, the mobile robot can be positioned throughout the working environment. Avgeris et al. designed a cylinder-shaped beacon [98][65]. Typically, beacons are placed in the environment, whereas Song et al. instead put QR codes on the robots and mounted vision cameras on the ceiling [93][60]. The location of the mobile robot can then be determined by tracking the QR code. Lv et al. utilized grids formed by tile joints to assist mobile robot localization [99][66].

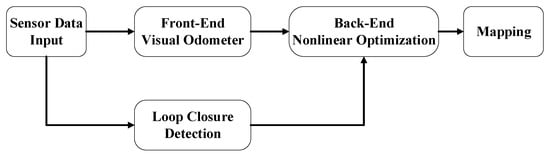

Visual SLAM is similar to LiDAR SLAM. Multiple cameras or stereo cameras are used to collect feature points with depth information in the surrounding environment, and feature point matching is performed to obtain the pose of the mobile robot. Visual SLAM systems use geometric features such as points, lines, and planes as landmarks to build maps. The visual SLAM flowchart is shown in Figure 2 [100][67].

Figure 2. Visual SLAM flowchart.

- Sensor data input: Environmental data collected by the camera. Occasionally, the IMU is used as a secondary sensor.

- Front-end visual odometry: Preliminary estimation of the camera pose based on the image information of adjacent video frames.

- Back-end nonlinear optimization: Optimization of the camera poses obtained by the front end to reduce the global error.

- Loop closure detection: Determining from the images whether the robot has returned to a previously visited position, in which case a closed loop is formed.

- Mapping: Building a map of the environment based on continuous pose estimates.

Visual SLAM can be divided into five types according to the type of camera: Mono SLAM based on a monocular camera [3,79,87,91,97,99,101,102,103,104,105][3][42][50][58][64][66][68][69][70][71][72], Stereo SLAM based on a binocular camera [27,106,107][73][74][75], RGB-D SLAM based on a depth camera [7,24,25,28,32,80,82,108,109,110,111,112,113,114,115,116,117,118,119,120][43][45][76][77][78][79][80][81][82][83][84][85][86][87][88][89][90][91][92][93], SLAM based on a fisheye camera [121][94], and SLAM based on an omnidirectional vision sensor [122][95]. Due to the lack of depth information, monocular cameras are usually used with beacons [91][58] or IMUs [101][68]. The algorithm used to fuse the monocular camera and IMUs in visual SLAM is called visual-inertial odometry (VIO). The current mainstream is RGB-D cameras, which can obtain depth information directly. The most common RGB-D camera model in the papers is the Kinect, developed by Microsoft.

Currently, many researchers are working on assigning specific meanings to objects in visual SLAM, which is called semantic SLAM. Semantic SLAM builds maps with semantic entities, which contain not only the spatial structure information of traditional visual SLAM but also the semantic information of objects in the workspace [123][96]. This helps to speed up loop closure detection, establish human–computer interaction, and perform complex tasks.

In semantic SLAM for dynamic scenes, Han and Xi utilized PSPNet to divide video frames into static, latent dynamic, and prior dynamic regions [109][82]. Zhao et al. proposed to combine semantic segmentation and multi-view geometric constraints to exclude dynamic feature points and only use static feature points for state estimation [101][68]. Yang et al. introduced a new dynamic feature detection method called semantic and geometric constraints to filter dynamic features [114][87].

Object detection and recognition based on deep learning is now mainstream in the field of Computer Vision. Lee et al. used YOLOv3 to remove dynamic objects from dynamic environments [124][97]. Maolanon et al. demonstrated that the YOLOv3 network can be enhanced by using a CNN [21][98]. Xu and Ma simplified the number of convolutional layers of YOLOv3’s darknet53 to speed up recognition [125][99]. Zhao et al. used a Mask-RCNN network to detect moving objects [123][96].

For the division of semantic objects, Rusli et al. used RoomSLAM, which is a method of constructing a room through wall recognition by using the room as a special semantic identifier for loop closure detection [126][100]. Liang et al. identified house numbers and obtained room division information [84][47].

With the use of sensor data fusion, more precise positioning can be obtained. The localization methods fused with visual SLAM have been introduced in the previous sections and are therefore only listed here: LiDAR [79,80,81,83,84,95][42][43][44][46][47][62], IMU [7,24,27,28,97][64][73][76][77][79], wheel odometer [99,124][66][97], IMU and UWB [32][80], LiDAR and Odometer [82][45], LiDAR Odometer and IMU [26][41], and IMU and wheel odometer [25][78].

The image information obtained by visual SLAM has the largest amount of data among all positioning technologies. The inability of onboard computers to meet the computational demands of visual SLAM, in particular deep learning-based visual SLAM, is one of the factors that currently limit its development [105,107][72][75]. Corotan et al. developed a mobile robot system based on Google’s ARCore platform [127][101]. Zheng et al. built a cloud-based visual SLAM framework [102][69]. Keyframes are first extracted and submitted to the cloud server, saving bandwidth.

3. Radio Frequency Technologies

Radio frequency-based positioning is a common type of positioning system that covers many fields. The technologies used include Wi-Fi, Bluetooth, ZigBee, RFID, and UWB. It is very convenient to extend them from their original communication function to a positioning function, which offers the advantages of low energy consumption and low cost.

3.1. Radio Frequency Technology Positioning Algorithms

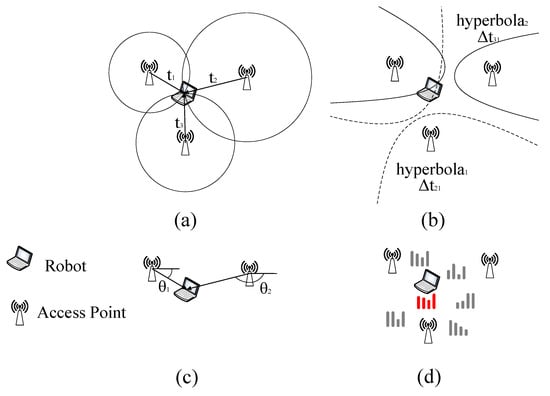

This section introduces several of the classic radio frequency technology positioning algorithms: RSSI, TOA, TDOA, and AOA, as shown in Figure 3.

Figure 3. Radio frequency technologies. (a) TOA; (b) TDOA; (c) AOA; (d) RSSI.

RF positioning algorithms can be divided into two categories according to whether they are based on received signal strength indication (RSSI).

There are two main kinds of positioning techniques based on RSSI: triangulation and fingerprint positioning [128][102]. RSSI-based triangulation assumes that the position of each AP (access point) is known [129][103]. The distance between the robot and each AP is calculated from the received signal strength according to a signal attenuation model, and a circle with that radius is drawn around each AP. The intersection point of the circles is the mobile robot’s position. This method requires the AP locations to be measured in advance, so it is not suitable for situations where the environment is changing.
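The two steps described above, converting RSSI to distance and intersecting the circles, can be sketched as follows. The log-distance path-loss model with a reference power of -40 dBm at 1 m and a path-loss exponent of 2 is an assumption chosen for illustration (real environments need calibrated values), and the function names are hypothetical. The circle intersection is computed by subtracting the first circle equation from the other two, which leaves a 2x2 linear system.

```python
import math

def rssi_to_distance(rssi, rssi_at_1m=-40.0, path_loss_exp=2.0):
    """Invert the log-distance path-loss model to get a distance in metres."""
    return 10 ** ((rssi_at_1m - rssi) / (10.0 * path_loss_exp))

def distance_to_rssi(d, rssi_at_1m=-40.0, path_loss_exp=2.0):
    """Forward model, used here only to simulate noise-free measurements."""
    return rssi_at_1m - 10.0 * path_loss_exp * math.log10(d)

def trilaterate(aps, dists):
    """Intersect three circles centred on the APs with the given radii."""
    (x1, y1), (x2, y2), (x3, y3) = aps
    r1, r2, r3 = dists
    # Subtracting circle 1 from circles 2 and 3 removes the quadratic terms.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("access points are collinear")
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - a21 * b1) / det

aps = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]   # known AP positions
true_pos = (3.0, 4.0)
rssi = [distance_to_rssi(math.dist(true_pos, ap)) for ap in aps]
dists = [rssi_to_distance(r) for r in rssi]
x, y = trilaterate(aps, dists)
print(x, y)
```

With noise-free simulated readings the true position is recovered. In practice RSSI fluctuates, so the three circles rarely meet in a single point and a least-squares fit over more APs is commonly used instead.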

Fingerprint positioning is more accurate than triangulation. It is divided into two stages: an offline training stage and an online localization stage [130][104]. In the training stage, the room is divided into small blocks to establish a series of sampling points. An RF receiver samples these points one by one, and the RSSI value and AP address obtained at each point are recorded in a database. During online positioning, the mobile robot carries the RF receiver, obtains the current RSSI and AP address, and transmits this information to the server, where it is matched against the database to obtain the estimated position. Collecting fingerprints in advance requires significant effort, and the fingerprints need to be re-collected after the signal-receiving device moves.
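The online matching step can be illustrated with a k-nearest-neighbour lookup, which is one common way to match a reading against a fingerprint database. The database entries, AP names, and the -100 dBm placeholder for an AP that was not heard are all invented for this sketch and do not come from the cited papers.

```python
import math

def knn_locate(database, reading, k=3):
    """Estimate the position as the mean of the k closest fingerprints.

    database: list of ((x, y), {ap_address: rssi_dbm}) from the offline stage.
    reading:  {ap_address: rssi_dbm} measured online by the robot.
    """
    def signal_distance(fingerprint):
        aps = set(fingerprint) | set(reading)
        # Assumption: an AP missing from one side is treated as -100 dBm.
        return math.sqrt(sum(
            (fingerprint.get(a, -100.0) - reading.get(a, -100.0)) ** 2
            for a in aps))

    nearest = sorted(database, key=lambda entry: signal_distance(entry[1]))[:k]
    x = sum(pos[0] for pos, _ in nearest) / len(nearest)
    y = sum(pos[1] for pos, _ in nearest) / len(nearest)
    return x, y

# Offline stage: hypothetical sampling points along a corridor.
database = [
    ((0.0, 0.0), {"ap1": -40, "ap2": -70}),
    ((2.0, 0.0), {"ap1": -50, "ap2": -62}),
    ((4.0, 0.0), {"ap1": -58, "ap2": -55}),
    ((6.0, 0.0), {"ap1": -65, "ap2": -47}),
    ((8.0, 0.0), {"ap1": -71, "ap2": -40}),
]
# Online stage: the current reading of the robot.
reading = {"ap1": -57, "ap2": -56}
nearest_point = knn_locate(database, reading, k=1)
smoothed = knn_locate(database, reading, k=3)
print(nearest_point, smoothed)
```

Averaging over several neighbours trades some resolution for robustness against RSSI fluctuation; the learning-based methods cited later in this entry can be seen as more elaborate replacements for this matching step.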

CSI (Channel State Information) can be regarded as an upgraded version of RSSI. Multipath fading is the main problem of RSSI; it is difficult to solve and greatly reduces the positioning accuracy [131][105]. CSI describes the amplitude and phase response of the channel at different frequencies. In the frequency domain, CSI is fine-grained compared to the coarse-grained RSSI and is resistant to multipath fading [132][106]. CSI contains more than 10 times as much information as RSSI and thus has better stability and positioning accuracy [4][107]. However, the amount of data transmitted for CSI is huge, which requires more equipment and transmission time.

The positioning methods not based on RSSI mainly include Time of Arrival (TOA), Angle of Arrival (AOA), and Time Difference of Arrival (TDOA) algorithms. These methods are easy to calculate but are generally affected by problems, such as multipath and non-line-of-sight (NLOS) problems of the signal.

The most basic time-based RF positioning method is the Time of Arrival (TOA) [31][108]. The distance between the target and the access point (AP) is obtained by recording the time it takes for a signal to travel between them. Conventional TOA positioning requires at least three APs with known positions to participate in the measurement. The positioning model can be established according to the triangulation method or a geometric formula to solve for the target position. The TOA algorithm relies completely on time to calculate the target position and requires exact time synchronization between the target and the AP. The formula for TOA can be described as:

$$d = v \, (t_r - t_s)$$

where $d$ is the distance between the transmitter and the device, $v$ is the speed of the signal, $t_r$ is the time at which the signal is received by the device, and $t_s$ is the time at which the signal is transmitted by the transmitter.
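The TOA relation (distance equals signal speed multiplied by the difference between reception and transmission time) also shows why exact synchronization matters. In the short sketch below, the function name and the example timestamps are illustrative assumptions; the signal speed is the speed of light, as for any radio signal.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s, propagation speed of radio signals

def toa_distance(t_sent, t_received, v=SPEED_OF_LIGHT):
    """Distance travelled by a signal sent at t_sent and received at t_received."""
    return v * (t_received - t_sent)

# A flight time of about 33.4 ns corresponds to roughly 10 m.
d = toa_distance(0.0, 33.356e-9)

# Ranging error caused by a clock offset of a single nanosecond.
error_per_ns = toa_distance(0.0, 1e-9)
print(d, error_per_ns)
```

A clock offset of one nanosecond between the target and the AP already shifts the range by about 30 cm, so sub-meter TOA positioning needs nanosecond-level synchronization.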

TDOA is a time difference positioning algorithm that improves on TOA [133][109]. It records the differences between the times at which the target's signal arrives at different APs and converts them into differences in the distance from the target to those APs. Like TOA, at least three APs with known locations are required to participate in the measurement. One base station is selected as the reference, and each pairing of the reference with another base station defines a hyperbola whose foci are the two base stations. Along this hyperbola, the difference between the distances to the two foci is constant and equals the signal speed multiplied by the arrival time difference. The intersection of the hyperbolas is the position of the target. Since the signal transmission time does not need to be known, TDOA does not require time synchronization between the target and the APs; it only requires time synchronization among the APs. The formula for TDOA can be described as:

where d is the distance between the transmitter and the device. v is the speed of signals. Δt is the time difference between signals received at two receivers. Δf is the frequency difference between the two signals.
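As an illustration of the hyperbolic principle, the following Python sketch converts arrival-time differences into range differences and locates the target by a brute-force grid search. It is only a sketch under stated assumptions: a 2D scene, synchronized APs, noise-free timestamps, and a propagation speed equal to the speed of light. The helper names and the grid-search strategy are hypothetical and are not taken from the cited works, which typically rely on closed-form or iterative solvers.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s, assumed propagation speed v


def range_differences(arrival_times, v=SPEED_OF_LIGHT):
    """Range differences relative to the first (reference) AP.

    Only differences of arrival times are used, so the unknown
    transmission time of the target cancels out.
    """
    t_ref = arrival_times[0]
    return [v * (t - t_ref) for t in arrival_times[1:]]


def tdoa_grid_search(aps, arrival_times, size=10.0, step=0.1):
    """Return the grid point whose predicted range differences best
    match the measured ones (brute force, for illustration only)."""
    measured = range_differences(arrival_times)
    best, best_cost = None, float("inf")
    n = int(round(size / step))
    for i in range(n + 1):
        for j in range(n + 1):
            p = (i * step, j * step)
            d_ref = math.dist(p, aps[0])
            cost = sum(
                (math.dist(p, ap) - d_ref - m) ** 2
                for ap, m in zip(aps[1:], measured)
            )
            if cost < best_cost:
                best, best_cost = p, cost
    return best
```

Because every arrival time is shifted by the same unknown emission time, adding an arbitrary offset to all timestamps leaves the estimate unchanged, which is the property that removes the need for target-to-AP synchronization.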

AOA estimates the target position by obtaining the relative angle of the target to the AP [134][110]. AOA requires at least two APs, each equipped with an array antenna or a directional antenna, to obtain the angle between the target and the AP. AOA has a simpler structure than the above two algorithms but is seriously affected by non-line-of-sight conditions. The positioning accuracy depends on the accuracy of the antenna array, which requires regular inspection and maintenance. The formula for AOA can be described as:

$$\theta = \arctan\left(\frac{y - y_s}{x - x_s}\right)$$

where $\theta$ is the angle of arrival of the signal, $(x, y)$ is the position of the device being located, and $(x_s, y_s)$ is the position of the signal source. AOA is a direction-based localization technique that requires multiple antennas or an antenna array to estimate the angle of arrival accurately.
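The two-AP geometry of AOA can be sketched in Python as the intersection of two bearing lines. The sketch assumes a 2D scene, exact angle measurements expressed in a common global frame, and line-of-sight propagation; the names `bearing` and `aoa_intersection` are hypothetical.

```python
import math


def bearing(ap, target):
    """Angle of arrival seen at the AP, from the coordinate offsets."""
    return math.atan2(target[1] - ap[1], target[0] - ap[0])


def aoa_intersection(ap1, theta1, ap2, theta2):
    """Intersect the two bearing lines that start at ap1 and ap2."""
    # Unit direction vectors of the two rays.
    c1, s1 = math.cos(theta1), math.sin(theta1)
    c2, s2 = math.cos(theta2), math.sin(theta2)
    det = c1 * s2 - s1 * c2  # equals sin(theta2 - theta1)
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel; no unique intersection")
    dx, dy = ap2[0] - ap1[0], ap2[1] - ap1[1]
    # Distance along the first ray to the intersection point.
    r1 = (dx * s2 - dy * c2) / det
    return ap1[0] + r1 * c1, ap1[1] + r1 * s1
```

When the target lies close to the line joining the two APs, the determinant approaches zero and small angle errors produce large position errors, which is one reason additional APs are often deployed.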

3.2. Wi-Fi

Wi-Fi is the 802.11 wireless local area network standard defined by the Institute of Electrical and Electronics Engineers (IEEE). Research on Wi-Fi-based indoor positioning started in 2000 [10][111]. Wi-Fi devices are widely used in modern indoor settings, and Wi-Fi is mainly used for mobile robot communication in indoor environments. It is currently the preferred method in the field of indoor positioning [135][112]. Wi-Fi-based indoor positioning is relatively simple to deploy in an existing building and requires no additional hardware. Wi-Fi operates in the 2.4 GHz and 5 GHz frequency bands, of which the 5 GHz band has the advantages of less interference, lower noise, and faster speed. The main purpose of Wi-Fi today is communication. If Wi-Fi is to be used for robot positioning, the Wi-Fi signals require further processing to improve the positioning accuracy.

In the screened papers, all of the Wi-Fi-based approaches applied the RSSI technique. Although techniques such as TOA and AOA are not uncommon, the RSSI technique has a higher positioning accuracy ceiling. RSSI is less affected by non-line-of-sight conditions and is better suited to the actual working environment of mobile robots. Its main problems are multipath fading and frequent signal fluctuation. With the introduction of filters, neural networks, and deep learning, a growing number of schemes build on RSSI. Wang et al. used a kernel extreme learning machine (K-ELM) to improve the accuracy of a fingerprint recognition algorithm [12][6]. Cui et al. proposed a robust principal component analysis–extreme learning machine (RPCA-ELM) algorithm to improve the localization accuracy of mobile robot RSSI fingerprints [136][113]. Because fluctuations in Wi-Fi signal strength strongly affect fingerprint positioning accuracy, Thewan et al. proposed the Weight-Average of the Top-n Populated (WATP) filtering method. Compared with Kalman filtering, it reduces the calculation time while satisfying the positioning accuracy: the mean square error (MSE) of Kalman filtering is 13.65 dBm, whereas that of the proposed method is 0.12 dBm [11][114]. Sun et al. applied cellular automata to the indoor RSS positioning of AGVs to achieve low-cost filtering of noise caused by environmental factors and mechanical errors [137][115]. Zhang et al. optimized Wi-Fi-based RSS localization with a Deep Fuzzy Forest [138][116]. The first step in using RSSI is to collect Wi-Fi fingerprints at various indoor locations and establish a database. This step is labor-intensive, and the database must be rebuilt whenever the locations of the devices change. Lee et al. [87][50] and Zou et al. [86][49] combined the LiDAR method with the Wi-Fi method and used SLAM technology to collect Wi-Fi fingerprints.

A comparison of CSI and RSSI indicates that the positioning error of CSI is about 2% smaller than that of RSSI, which mitigates the multipath problem of RSSI [132][106].
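The fingerprint database idea described above can be illustrated with a plain k-nearest-neighbor (k-NN) matcher in Python. This is a generic baseline, not the K-ELM, RPCA-ELM, or WATP methods of the cited papers; the database layout, the RSSI values, and the function name `knn_fingerprint` are hypothetical.

```python
import math

# Hypothetical offline database: (position in m, RSSI in dBm from three APs).
database = [
    ((0.0, 0.0), [-40.0, -70.0, -65.0]),
    ((5.0, 0.0), [-55.0, -50.0, -72.0]),
    ((0.0, 5.0), [-58.0, -75.0, -45.0]),
    ((5.0, 5.0), [-68.0, -57.0, -52.0]),
]


def knn_fingerprint(db, observed, k=3):
    """Estimate a position as the mean of the k reference points whose
    stored RSSI vectors are closest (Euclidean) to the observed one."""
    ranked = sorted(db, key=lambda entry: math.dist(entry[1], observed))
    nearest = ranked[:k]
    x = sum(pos[0] for pos, _ in nearest) / len(nearest)
    y = sum(pos[1] for pos, _ in nearest) / len(nearest)
    return x, y
```

Calling `knn_fingerprint(database, [-54.0, -52.0, -70.0], k=1)` returns the stored position whose fingerprint is closest to the online measurement. The learning-based methods cited above replace this nearest-neighbor lookup with a trained regression or classification model.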

3.3. Bluetooth

Bluetooth uses the 802.15.1 standard developed by the IEEE. The worldwide universal Bluetooth 4.0 protocol was announced in 2010 [139][117]. Bluetooth communicates using radio waves with frequencies between 2.402 GHz and 2.480 GHz. The Bluetooth low-energy (BLE) version offers high speed, low cost, and low power consumption, so it is widely used in the Internet of Things (IoT). Bluetooth positioning is mainly based on RSSI and uses the broadcast function of Bluetooth beacons to measure signal strength. A Bluetooth beacon installed in the working environment continuously sends broadcast messages. After the terminal mounted on the mobile robot receives the transmitted signal, it estimates the pose of the mobile robot with a positioning algorithm. Because of its low cost and low operating expenses, Bluetooth is widely used for indoor positioning in the IoT. However, it is susceptible to noise interference and has insufficient stability, and the positioning accuracy of unprocessed Bluetooth signals is only at the meter level, so it is rarely used for the indoor positioning of mobile robots.
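RSSI-based ranging of the kind described above is commonly modeled with the log-distance path-loss model, which can be inverted to obtain a distance from a beacon. The Python sketch below assumes that model; the reference power of -59 dBm at 1 m and the path-loss exponent of 2.0 are illustrative assumptions that must be calibrated for each beacon and environment, and the function name is hypothetical.

```python
def rssi_to_distance(rssi_dbm, tx_power_dbm=-59.0, path_loss_exponent=2.0):
    """Invert the log-distance path-loss model.

    Model: rssi = tx_power - 10 * n * log10(d), where tx_power is the
    RSSI expected at 1 m and n is the path-loss exponent.
    """
    return 10 ** ((tx_power_dbm - rssi_dbm) / (10.0 * path_loss_exponent))
```

Because the distance depends exponentially on the RSSI, a fluctuation of a few dB translates into a large range error at longer distances, which is consistent with the meter-level accuracy of unprocessed Bluetooth signals noted above.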

The iBeacon developed by Apple in 2012 and the Eddystone released by Google in 2015 greatly promoted the development of BLE [140][118]. Although the positioning accuracy of the existing Bluetooth 4.0 technology cannot meet the high-precision requirements of mobile robots, Bluetooth 5.0 is expected to meet this demand. Compared with Bluetooth 4.0, Bluetooth 5.0 has a longer effective distance, a faster data transmission rate, and lower energy consumption [141][119]. Most importantly, the localization accuracy is improved to the sub-meter level [142][120]. Bluetooth 5.0 has not yet been formally applied to mobile robots, but it is a promising indoor positioning technology for them in the future.

The trend in Bluetooth-based indoor positioning is to combine optimization algorithms with positioning technology. Mankotia et al. implemented Bluetooth localization of a mobile robot based on iterative trilateration and the cuckoo search (CS) algorithm, evaluated in a Monte Carlo simulation [143][121]. In the simulation, the CS algorithm (mean error 0.265 m) was more accurate than the particle filter (mean error 0.335 m), reducing the overall error by 21%.

3.4. ZigBee

ZigBee uses the IEEE 802.15.4 standard specification. Its positioning principle is similar to that of Wi-Fi and Bluetooth, and it also relies mainly on RSSI to estimate the distance between devices. ZigBee is characterized by low cost, wide signal range, high reliability, low data rate, and good topology capabilities. Its disadvantages are poor stability, susceptibility to environmental interference, and meter-level positioning accuracy.

Wang et al. designed a mobile robot positioning system based on ZigBee positioning technology and combined the centroid method with the least squares method to improve the positioning accuracy of the RSSI algorithm in complex indoor environments [144][122]. The mobile robot is both the coordination node and the mobile node of the network, which increases the mobility and flexibility of the ZigBee network. Since RSSI-based positioning is easily affected by environmental changes, Luo et al. proposed a ZigBee-based adaptive wireless indoor localization system (ILS) for dynamic environments [128][102]. The system works in two steps. First, the mobile robot uses LiDAR SLAM to collect RSSI values in the working environment and update the fingerprint database in real time. Second, an adaptive signal model fingerprinting (ASMF) algorithm is applied. The signal attenuation model of the ASMF can reduce noise, evaluate the confidence of RSSI measurement values, and reduce the interference of abnormal RSSI values.
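The centroid idea mentioned above can be illustrated with a weighted centroid estimator in Python. This is a generic textbook variant and is not claimed to be the exact combination of centroid and least squares methods used by Wang et al.; the inverse-distance weighting and the function name `weighted_centroid` are assumptions made for illustration.

```python
def weighted_centroid(anchors, distances):
    """Weighted centroid of the anchor nodes.

    Each anchor is weighted by the inverse of its RSSI-derived distance,
    so that closer anchors pull the estimate more strongly.
    """
    weights = [1.0 / max(d, 1e-6) for d in distances]
    total = sum(weights)
    x = sum(w * ax for w, (ax, _) in zip(weights, anchors)) / total
    y = sum(w * ay for w, (_, ay) in zip(weights, anchors)) / total
    return x, y
```

The estimate always lies inside the convex hull of the anchors, which makes the method robust to outliers but biased near the edges of the deployment area; a least squares refinement is one way to reduce that bias.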

3.5. Radio Frequency Identification

RFID positioning technology uses radio frequency signals to transmit the location of the target. The hardware consists of three parts: a reader, an electronic tag, and an antenna. The reader is the core of the RFID system and is mainly composed of data processing modules. It communicates with the electronic tag through the antenna and reads information such as the ID, RSSI, and phase of the electronic tag [145][123]. RFID tags are divided into active and passive tags according to whether they need a power supply [146][124]. Active tags can continuously transmit wireless signals to the environment, while passive tags only respond to the wireless signals emitted by readers. Active tags have wider coverage, but passive tags are used more often in indoor positioning because of their low cost and easy deployment and maintenance. RFID operates at different frequencies, mainly low frequency (LF) (9–135 kHz), high frequency (HF) (13.553–15.567 MHz), and ultra-high frequency (UHF) (860–930 MHz) [147][125]. At present, UHF-RFID is mostly used in the indoor positioning of mobile robots. Compared with LF and HF, UHF-RFID has the advantages of a simple structure, low cost, and high data transmission rate. Most of the screened RFID-based papers selected UHF-RFID.

As with many RF schemes, applying filters to RFID signals is a common method. DiGiampaolo et al. [148][126] and Wang et al. [146][124] both chose Rao-Blackwellized particle filters as the algorithm for RFID SLAM. The reflection and divergence problem of RFID tags is serious. Magnago et al. proposed an Unscented Kalman Filter (UKF) to solve the phase ambiguity of the RF signal backscattered by UHF-RFID tags [149][127]. Bernardini et al. equipped a mobile robot with dual reader antennas to reduce signal loss and tag backscatter, and used the PSO algorithm to optimize the synthetic aperture radar (SAR) process [150][128]. Gareis et al. also used SAR RFID for localization, adding eight channels for monitoring [151][129]. The positioning accuracy reached the centimeter level. Tzitzis et al. introduced a multi-antenna localization method based on the K-means algorithm [152][130].
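The phase ambiguity mentioned above arises because the phase of a backscattered signal is only known modulo 2π, so a single reading is consistent with many distances spaced half a wavelength apart. The Python sketch below illustrates this with an idealized model that ignores the phase offsets introduced by the tag, cables, and reader; the function names are hypothetical, and the sketch is not the UKF method of the cited work.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def phase_from_distance(d, freq_hz):
    """Idealized round-trip backscatter phase, wrapped to [0, 2*pi)."""
    wavelength = SPEED_OF_LIGHT / freq_hz
    return (4.0 * math.pi * d / wavelength) % (2.0 * math.pi)


def candidate_distances(phase, freq_hz, max_range):
    """All distances up to max_range that are consistent with one phase."""
    wavelength = SPEED_OF_LIGHT / freq_hz
    base = phase * wavelength / (4.0 * math.pi)
    out = []
    n = 0
    while base + n * wavelength / 2.0 <= max_range:
        out.append(base + n * wavelength / 2.0)
        n += 1
    return out
```

At a carrier of 865 MHz, for example, the candidates are roughly 17 cm apart, so resolving the ambiguity requires additional information such as robot motion, multiple antennas, or a filter that tracks the tag over time.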

3.6. Ultra-Wide Band

As a carrierless communication technology, UWB uses non-sinusoidal narrow pulses with very short periods to transmit data, which enables high-speed, large-bandwidth, and high time-resolution communication over short ranges [153][131]. The extremely short pulses greatly reduce the effects of multipath problems. The low transmission power avoids interference with Wi-Fi, BLE, and similar devices. UWB also penetrates walls better than Wi-Fi and BLE. Recently, UWB has become a focus in the field of indoor positioning because of its stable performance and centimeter-level positioning accuracy, making it one of the ideal choices for the positioning of mobile robots. However, high-precision positioning at the centimeter level requires numerous anchor points and higher carrier and sampling frequencies, resulting in relatively high hardware and computational costs.

UWB can achieve localization using RSS, TOA, AOA, and TDOA techniques [154,155,156][132][133][134]. Traditional UWB localization systems are mostly based on mathematical methods and filters [157,158][135][136]. Lim et al. proposed a three-layered bidirectional Long Short-term Memory (Bi-LSTM) neural network to optimize UWB localization [159][137]. Sutera et al. used reinforcement learning to reduce noise in UWB localization and correct localization errors [160][138]. Recently, data fusion has become the trend in UWB positioning. Li and Wang proposed a Constraint Robust Iterate Extended Kalman filter (CRIEKF) algorithm to fuse the UWB and IMU [33][139]. A Sage-Husa Fuzzy Adaptive Filter (SHFAF) has also been used to fuse the UWB and IMU [31][108]. Cano et al. proposed a Kalman filter-based anchor synchronization system to compensate for clock drift [154][132]. UWB has also been combined with SLAM in hybrid localization methods that fuse the data using filter algorithms [32,36][53][80]. Liu et al. studied how to arrange anchor points systematically and proposed a UWB-based multi-base station fusion positioning method [161][140]. In the actual robot positioning process, the arrangement of the base stations directly determines the positioning accuracy and the area in which positioning is reliable.
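The basic idea behind UWB/IMU fusion can be illustrated with a scalar Kalman filter in Python. This is a deliberately simplified sketch, not the CRIEKF or SHFAF algorithms cited above: it assumes a one-dimensional position, a displacement obtained from IMU dead reckoning, and fixed, hypothetical noise variances `q` and `r`.

```python
def kalman_step(x, p, u, z, q=0.01, r=0.04):
    """One predict/update cycle of a scalar Kalman filter.

    x, p : prior position estimate and its variance
    u    : displacement predicted from IMU dead reckoning
    z    : position measured by UWB
    q, r : assumed process and measurement noise variances
    """
    # Predict with the IMU increment; the uncertainty grows by q.
    x_pred = x + u
    p_pred = p + q
    # Update with the UWB fix; the gain k balances the two sources.
    k = p_pred / (p_pred + r)
    x_new = x_pred + k * (z - x_pred)
    p_new = (1.0 - k) * p_pred
    return x_new, p_new
```

The IMU supplies smooth short-term motion but drifts over time, whereas the UWB fix is drift-free but noisy; the gain weights each source according to its assumed variance. The adaptive filters cited above go further by adjusting these noise parameters online.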

4. Comparison of 12 Indoor Positioning Technologies for Mobile Robots

A comparison of 12 positioning technologies based on the accuracy, cost, scalability, advantages, and disadvantages of positioning technology is shown in Table 1.

Table 1. Comparison of 12 indoor positioning technologies for mobile robots.

| Technology | Accuracy Level | Hardware Costs | Computational Costs | Advantages | Disadvantages |
|---|---|---|---|---|---|
| IMU | 0.2 m [162][141] | Low | Low | Wide application; easy to use; anti-interference | Accumulated error |
| VLC | <0.05 m [44][142] | Low | Medium | Easy to deploy; no electromagnetic interference | Only line-of-sight communication |
| Ultrasonic | 0.012 m [49][16] | Low | Low | Mature technology; high positioning accuracy | Short-distance measurement; signal attenuation |
| IR | <0.1 m [48][14] | Medium | Low | Mature technology | Only line-of-sight communication; need environmental transformation; affected by sunlight |
| Geomagnetic | <0.21 m [55][143] | Low | Low | No cumulative error; no need for environmental transformation | Low accuracy; need to build a geomagnetic database |
| LiDAR | <0.025 m [90][57] | High | High | Strong adaptability; strong stability; no need for environmental transformation | High requirements for algorithms; affected by glass objects; suffers in weak-feature environments |
| Computer Vision | 0.09 m [108][81] | Medium | High | Collection of rich information; strong adaptability; no need for environmental transformation | High requirements for algorithms and computing performance; affected by light; suffers in weak-feature environments |
| Wi-Fi | 2.31 m [136][113] | Medium | Medium | Widely used; mature technology; easy to deploy | Easily interfered with; multipath problem |
| Bluetooth | 0.27 m [143][121] | Low | Low | Wide application; low power consumption | Path loss; easily interfered with |
| ZigBee | 0.71 m [128][102] | Low | Low | Low power consumption; good topology | Poor stability; easily interfered with |
| RFID | <0.01 m [150][128] | Low | Low | High positioning accuracy; easy to deploy | Need environmental transformation; multipath problem |
| UWB | <0.1 m [161][140] | High | Medium | High positioning accuracy; anti-interference; high multipath resolution | High cost |

The accuracy level column shows the data with the highest accuracy of the corresponding method in the literature. The hardware and computational cost may vary depending on the specific implementation, the environment, and other factors. This table is only intended to provide a rough comparison of the relative costs of different indoor localization techniques. The cost considered here refers to the expenses required to implement high-precision positioning solutions for this type of technology.

References

- Umetani, T.; Kondo, Y.; Tokuda, T. Rapid development of a mobile robot for the nakanoshima challenge using a robot for intelligent environments. J. Robot. Mechatron. 2020, 32, 1211–1218.

- Karabegović, I.; Karabegović, E.; Mahmić, M.; Husak, E.; Dašić, P. The Implementation of Industry 4.0 Supported by Service Robots in Production Processes. In Proceedings of the 4th International Conference on Design, Simulation, Manufacturing: The Innovation Exchange, Lviv, Ukraine, 8–11 June 2021; pp. 193–202.

- Lee, T.J.; Kim, C.H.; Cho, D.I.D. A Monocular Vision Sensor-Based Efficient SLAM Method for Indoor Service Robots. IEEE Trans. Ind. Electron. 2019, 66, 318–328.

- Zhang, Q.B.; Wang, P.; Chen, Z.H. An improved particle filter for mobile robot localization based on particle swarm optimization. Expert Syst. Appl. 2019, 135, 181–193.

- Borodacz, K.; Szczepański, C.; Popowski, S. Review and selection of commercially available IMU for a short time inertial navigation. Aircr. Eng. Aerosp. Technol. 2022, 94, 45–59.

- Wang, H.; Li, J.; Cui, W.; Lu, X.; Zhang, Z.; Sheng, C.; Liu, Q. Mobile Robot Indoor Positioning System Based on K-ELM. J. Sens. 2019, 2019, 7547648.

- Liang, Q.; Liu, M. A Tightly Coupled VLC-Inertial Localization System by EKF. IEEE Robot. Autom. Lett. 2020, 5, 3129–3136.

- Cui, Z.; Wang, Y.; Fu, X. Research on Indoor Positioning System Based on VLC. In Proceedings of the 2020 Prognostics and Health Management Conference, PHM-Besancon 2020, Besancon, France, 4–7 May 2020; pp. 360–365.

- Guan, W.; Chen, S.; Wen, S.; Tan, Z.; Song, H.; Hou, W. High-Accuracy Robot Indoor Localization Scheme Based on Robot Operating System Using Visible Light Positioning. IEEE Photonics J. 2020, 12, 7901716.

- Louro, P.; Vieira, M.; Vieira, M.A. Bidirectional visible light communication. Opt. Eng. 2020, 59, 127109.

- Zhuang, Y.; Wang, Q.; Shi, M.; Cao, P.; Qi, L.; Yang, J. Low-Power Centimeter-Level Localization for Indoor Mobile Robots Based on Ensemble Kalman Smoother Using Received Signal Strength. IEEE Internet Things J. 2019, 6, 6513–6522.

- Amsters, R.; Holm, D.; Joly, J.; Demeester, E.; Stevens, N.; Slaets, P. Visible light positioning using bayesian filters. J. Light. Technol. 2020, 38, 5925–5936.

- Thurow, K.; Zhang, L.; Liu, H.; Junginger, S.; Stoll, N.; Huang, J. Multi-floor laboratory transportation technologies based on intelligent mobile robots. Transp. Saf. Environ. 2019, 1, 37–53.

- Bernardes, E.; Viollet, S.; Raharijaona, T. A Three-Photo-Detector Optical Sensor Accurately Localizes a Mobile Robot Indoors by Using Two Infrared Light-Emitting Diodes. IEEE Access 2020, 8, 87490–87503.

- Grami, T.; Tlili, A.S. Indoor Mobile Robot Localization based on a Particle Filter Approach. In Proceedings of the 19th International Conference on Sciences and Techniques of Automatic Control and Computer Engineering, STA 2019, Sousse, Tunisia, 24–26 March 2019; pp. 47–52.

- Tsay, L.W.J.; Shiigi, T.; Zhao, X.; Huang, Z.; Shiraga, K.; Suzuki, T.; Ogawa, Y.; Kondo, N. Static and dynamic evaluations of acoustic positioning system using TDMA and FDMA for robots operating in a greenhouse. Int. J. Agric. Biol. Eng. 2022, 15, 28–33.

- Pérez-Navarro, A.; Torres-Sospedra, J.; Montoliu, R.; Conesa, J.; Berkvens, R.; Caso, G.; Costa, C.; Dorigatti, N.; Hernández, N.; Knauth, S.; et al. Challenges of fingerprinting in indoor positioning and navigation. In Geographical and Fingerprinting Data for Positioning and Navigation Systems: Challenges, Experiences and Technology Roadmap; Academic Press: Cambridge, MA, USA, 2018; pp. 1–20.

- Habich, T.L.; Stuede, M.; Labbe, M.; Spindeldreier, S. Have I been here before? Learning to close the loop with lidar data in graph-based SLAM. In Proceedings of the IEEE/ASME International Conference on Advanced Intelligent Mechatronics, AIM, Delft, The Netherlands, 12–16 July 2021; pp. 504–510.

- Sun, Z.; Wu, B.; Xu, C.Z.; Sarma, S.E.; Yang, J.; Kong, H. Frontier Detection and Reachability Analysis for Efficient 2D Graph-SLAM Based Active Exploration. In Proceedings of the IEEE International Conference on Intelligent Robots and Systems, Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 2051–2058.

- Cadena, C.; Carlone, L.; Carrillo, H.; Latif, Y.; Scaramuzza, D.; Neira, J.; Reid, I.; Leonard, J.J. Past, present, and future of simultaneous localization and mapping: Toward the robust-perception age. IEEE Trans. Robot. 2016, 32, 1309–1332.

- Li, Y.; Shi, C. Localization and Navigation for Indoor Mobile Robot Based on ROS. In Proceedings of the 2018 Chinese Automation Congress, Xi’an, China, 30 November–2 December 2018; pp. 1135–1139.

- Talwar, D.; Jung, S. Particle Filter-based Localization of a Mobile Robot by Using a Single Lidar Sensor under SLAM in ROS Environment. In Proceedings of the International Conference on Control, Automation and Systems, Jeju, Republic of Korea, 15–18 October 2019; pp. 1112–1115.

- Nie, F.; Zhang, W.; Yao, Z.; Shi, Y.; Li, F.; Huang, Q. LCPF: A Particle Filter Lidar SLAM System with Loop Detection and Correction. IEEE Access 2020, 8, 20401–20412.

- Chen, D.; Weng, J.; Huang, F.; Zhou, J.; Mao, Y.; Liu, X. Heuristic Monte Carlo Algorithm for Unmanned Ground Vehicles Realtime Localization and Mapping. IEEE Trans. Veh. Technol. 2020, 69, 10642–10655.

- Garrote, L.; Torres, M.; Barros, T.; Perdiz, J.; Premebida, C.; Nunes, U.J. Mobile Robot Localization with Reinforcement Learning Map Update Decision aided by an Absolute Indoor Positioning System. In Proceedings of the IEEE International Conference on Intelligent Robots and Systems, Macau, China, 3–8 November 2019; pp. 1620–1626.

- Yilmaz, A.; Temeltas, H. ROS Architecture for Indoor Localization of Smart-AGVs Based on SA-MCL Algorithm. In Proceedings of the 11th International Conference on Electrical and Electronics Engineering, Bursa, Turkey, 28–30 November 2019; pp. 885–889.

- Yan, Y.P.; Wong, S.F. A navigation algorithm of the mobile robot in the indoor and dynamic environment based on the PF-SLAM algorithm. Clust. Comput. 2019, 22, 14207–14218.

- Liu, Z.; Cui, Z.; Li, Y.; Wang, W. Parameter optimization analysis of gmapping algorithm based on improved RBPF particle filter. In Proceedings of the Journal of Physics: Conference Series, Guiyang, China, 14–15 August 2020; Volume 1646, p. 012004.

- Wu, C.Y.; Lin, H.Y. Autonomous mobile robot exploration in unknown indoor environments based on rapidly-exploring random tree. In Proceedings of the IEEE International Conference on Industrial Technology, Melbourne, Australia, 13–15 February 2019; pp. 1345–1350.

- Camargo, A.B.; Liu, Y.; He, G.; Zhuang, Y. Mobile Robot Autonomous Exploration and Navigation in Large-scale Indoor Environments. In Proceedings of the 10th International Conference on Intelligent Control and Information Processing, ICICIP 2019, Marrakesh, Morocco, 14–19 December 2019; pp. 106–111.

- Norzam, W.A.S.; Hawari, H.F.; Kamarudin, K. Analysis of Mobile Robot Indoor Mapping using GMapping Based SLAM with Different Parameter. In Proceedings of the IOP Conference Series: Materials Science and Engineering, Pulau Pinang, Malaysia, 26–27 August 2019; Volume 705, p. 012037.

- Shaw, J.-S.; Liew, C.J.; Xu, S.-X.; Zhang, Z.-M. Development of an AI-enabled AGV with robot manipulator. In Proceedings of the 2019 IEEE Eurasia Conference on IOT, Communication and Engineering (ECICE), Yunlin, Taiwan, 3–6 October 2019; pp. 284–287.

- Nagla, S. 2D Hector SLAM of Indoor Mobile Robot using 2D Lidar. In Proceedings of the IEEE 2nd International Conference on Power, Energy, Control and Transmission Systems, Proceedings, Chennai, India, 10–11 December 2020; pp. 1–4.

- Garrote, L.; Barros, T.; Pereira, R.; Nunes, U.J. Absolute Indoor Positioning-aided Laser-based Particle Filter Localization with a Refinement Stage. In Proceedings of the IECON (Industrial Electronics Conference), Lisbon, Portugal, 14–17 October 2019; pp. 597–603.

- Teskeredzic, E.; Akagic, A. Low cost UGV platform for autonomous 2D navigation and map-building based on a single sensory input. In Proceedings of the 7th International Conference on Control, Decision and Information Technologies, Prague, Czech Republic, 29 June–2 July 2020; pp. 988–993.

- Wilbers, D.; Merfels, C.; Stachniss, C. A comparison of particle filter and graph-based optimization for localization with landmarks in automated vehicles. In Proceedings of the 2019 Third IEEE International Conference on Robotic Computing (IRC), Naples, Italy, 25–27 February 2019; pp. 220–225.

- Papadimitriou, A.; Kleitsiotis, I.; Kostavelis, I.; Mariolis, I.; Giakoumis, D.; Likothanassis, S.; Tzovaras, D. Loop Closure Detection and SLAM in Vineyards with Deep Semantic Cues. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 2251–2258.

- Hess, W.; Kohler, D.; Rapp, H.; Andor, D. Real-time loop closure in 2D LIDAR SLAM. In Proceedings of the IEEE International Conference on Robotics and Automation, Stockholm, Sweden, 16–21 May 2016; pp. 1271–1278.

- Deng, Y.; Shan, Y.; Gong, Z.; Chen, L. Large-Scale Navigation Method for Autonomous Mobile Robot Based on Fusion of GPS and Lidar SLAM. In Proceedings of the 2018 Chinese Automation Congress, CAC 2018, Xi’an, China, 30 November–2 December 2018; pp. 3145–3148.

- Gao, H.; Zhang, X.; Wen, J.; Yuan, J.; Fang, Y. Autonomous Indoor Exploration Via Polygon Map Construction and Graph-Based SLAM Using Directional Endpoint Features. IEEE Trans. Autom. Sci. Eng. 2019, 16, 1531–1542.

- Zali, A.; Bozorg, M.; Masouleh, M.T. Localization of an indoor mobile robot using decentralized data fusion. In Proceedings of the ICRoM 2019—7th International Conference on Robotics and Mechatronics, Tehran, Iran, 20–21 November 2019; pp. 328–333.

- Chan, S.H.; Wu, P.T.; Fu, L.C. Robust 2D Indoor Localization Through Laser SLAM and Visual SLAM Fusion. In Proceedings of the 2018 IEEE International Conference on Systems, Man, and Cybernetics, SMC 2018, Miyazaki, Japan, 7–10 October 2018; pp. 1263–1268.

- Xu, Y.; Ou, Y.; Xu, T. SLAM of Robot based on the Fusion of Vision and LIDAR. In Proceedings of the 2018 IEEE International Conference on Cyborg and Bionic Systems, Shenzhen, China, 25–27 October 2018; pp. 121–126.

- Xu, S.; Chou, W.; Dong, H. A robust indoor localization system integrating visual localization aided by CNN-based image retrieval with Monte Carlo localization. Sensors 2019, 19, 249.

- Wang, C.; Wang, J.; Li, C.; Ho, D.; Cheng, J.; Yan, T.; Meng, L.; Meng, M.Q.H. Safe and robust mobile robot navigation in uneven indoor environments. Sensors 2019, 19, 2993.

- Chen, H.; Huang, H.; Qin, Y.; Li, Y.; Liu, Y. Vision and laser fused SLAM in indoor environments with multi-robot system. Assem. Autom. 2019, 39, 297–307.

- Liang, J.; Song, W.; Shen, L.; Zhang, Y. Indoor semantic map building for robot navigation. In Proceedings of the 2019 IEEE 8th Joint International Information Technology and Artificial Intelligence Conference, Chongqing, China, 24–26 May 2019; pp. 794–798.

- Li, H.; Mao, Y.; You, W.; Ye, B.; Zhou, X. A neural network approach to indoor mobile robot localization. In Proceedings of the 2020 19th Distributed Computing and Applications for Business Engineering and Science, Xuzhou, China, 16–19 October 2020; pp. 66–69.

- Zou, H.; Chen, C.L.; Li, M.; Yang, J.; Zhou, Y.; Xie, L.; Spanos, C.J. Adversarial Learning-Enabled Automatic WiFi Indoor Radio Map Construction and Adaptation with Mobile Robot. IEEE Internet Things J. 2020, 7, 6946–6954.

- Lee, G.; Moon, B.C.; Lee, S.; Han, D. Fusion of the slam with wi-fi-based positioning methods for mobile robot-based learning data collection, localization, and tracking in indoor spaces. Sensors 2020, 20, 5182.

- Yan, X.; Guo, H.; Yu, M.; Xu, Y.; Cheng, L.; Jiang, P. Light detection and ranging/inertial measurement unit-integrated navigation positioning for indoor mobile robots. Int. J. Adv. Robot. Syst. 2020, 17, 1729881420919940.

- Baharom, A.K.; Abdul-Rahman, S.; Jamali, R.; Mutalib, S. Towards modelling autonomous mobile robot localization by using sensor fusion algorithms. In Proceedings of the 2020 IEEE 10th International Conference on System Engineering and Technology, Shah Alam, Malaysia, 9 November 2020; pp. 185–190.

- Wang, Y.X.; Chang, C.L. ROS-base Multi-Sensor Fusion for Accuracy Positioning and SLAM System. In Proceedings of the 2020 International Symposium on Community-Centric Systems, Islamabad, Pakistan, 21–22 November 2018; pp. 1–6.

- Pena Queralta, K.J.; Gia, T.N.; Tenhunen, H.; Westerlund, T. Offloading SLAM for Indoor Mobile Robots with Edge-Fog-Cloud Computing. In Proceedings of the 1st International Conference on Advances in Science, Engineering and Robotics Technology 2019, Dhaka, Bangladesh, 3–5 May 2019; pp. 1–6.

- Li, I.-h.; Wang, W.-Y.; Li, C.-Y.; Kao, J.-Z.; Hsu, C.-C. Cloud-based improved Monte Carlo localization algorithm with robust orientation estimation for mobile robots. Eng. Comput. 2018, 36, 178–203.

- Rezende, A.M.C.; Junior, G.P.C.; Fernandes, R.; Miranda, V.R.F.; Azpurua, H.; Pessin, G.; Freitas, G.M. Indoor Localization and Navigation Control Strategies for a Mobile Robot Designed to Inspect Confined Environments. In Proceedings of the IEEE International Conference on Automation Science and Engineering, Hong Kong, China, 20–21 August 2020; pp. 1427–1433.

- Shen, D.; Xu, Y.; Huang, Y. Research on 2D-SLAM of indoor mobile robot based on laser radar. In Proceedings of the ACM International Conference Proceeding Series, Shenzhen, China, 19–21 July 2019; pp. 1–7.

- Muñoz-Salinas, R.; Marín-Jimenez, M.J.; Medina-Carnicer, R. SPM-SLAM: Simultaneous localization and mapping with squared planar markers. Pattern Recognit. 2019, 86, 156–171.

- Tanaka, H. Ultra-High-Accuracy Visual Marker for Indoor Precise Positioning. In Proceedings of the IEEE International Conference on Robotics and Automation, Paris, France, 31 May 2020–31 August 2020; pp. 2338–2343.

- Song, K.T.; Chang, Y.C. Design and Implementation of a Pose Estimation System Based on Visual Fiducial Features and Multiple Cameras. In Proceedings of the 2018 International Automatic Control Conference, Taoyuan, Taiwan, 4–7 November 2018; pp. 1–6.

- Mantha, B.R.K.; Garcia de Soto, B. Designing a reliable fiducial marker network for autonomous indoor robot navigation. In Proceedings of the 36th International Symposium on Automation and Robotics in Construction, Banff, AB, Canada, 21–24 May 2019; pp. 74–81.

- Nitta, Y.; Bogale, D.Y.; Kuba, Y.; Tian, Z. Evaluating slam 2d and 3d mappings of indoor structures. In Proceedings of the 37th International Symposium on Automation and Robotics in Construction, ISARC 2020: From Demonstration to Practical Use—To New Stage of Construction Robot, Kitakyushu, Japan, 27–28 October 2020; pp. 821–828.

- Magnago, V.; Palopoli, L.; Passerone, R.; Fontanelli, D.; Macii, D. Effective Landmark Placement for Robot Indoor Localization with Position Uncertainty Constraints. IEEE Trans. Instrum. Meas. 2019, 68, 4443–4455.

- Li, Y.; He, L.; Zhang, X.; Zhu, L.; Zhang, H.; Guan, Y. Multi-sensor fusion localization of indoor mobile robot. In Proceedings of the 2019 IEEE International Conference on Real-Time Computing and Robotics, Irkutsk, Russia, 4–9 August 2019; pp. 481–486.

- Avgeris, M.; Spatharakis, D.; Athanasopoulos, N.; Dechouniotis, D.; Papavassiliou, S. Single vision-based self-localization for autonomous robotic agents. In Proceedings of the 2019 International Conference on Future Internet of Things and Cloud Workshops, Istanbul, Turkey, 26–28 August 2019; pp. 123–129.

- Lv, W.; Kang, Y.; Qin, J. FVO: Floor vision aided odometry. Sci. China Inf. Sci. 2019, 62, 12202.

- Duan, C.; Junginger, S.; Huang, J.; Jin, K.; Thurow, K. Deep Learning for Visual SLAM in Transportation Robotics: A review. Transp. Saf. Environ. 2019, 1, 177–184.

- Zhao, X.; Wang, C.; Ang, M.H. Real-Time Visual-Inertial Localization Using Semantic Segmentation towards Dynamic Environments. IEEE Access 2020, 8, 155047–155059.

- Zheng, Y.; Chen, S.; Cheng, H. Real-time cloud visual simultaneous localization and mapping for indoor service robots. IEEE Access 2020, 8, 16816–16829.

- Sun, M.; Yang, S.; Liu, H. Convolutional neural network-based coarse initial position estimation of a monocular camera in large-scale 3D light detection and ranging maps. Int. J. Adv. Robot. Syst. 2019, 16, 1729881419893518.

- Ran, T.; Yuan, L.; Zhang, J.B. Scene perception based visual navigation of mobile robot in indoor environment. ISA Trans. 2021, 109, 389–400.

- Da Silva, S.P.P.; Almeida, J.S.; Ohata, E.F.; Rodrigues, J.J.P.C.; De Albuquerque, V.H.C.; Reboucas Filho, P.P. Monocular Vision Aided Depth Map from RGB Images to Estimate of Localization and Support to Navigation of Mobile Robots. IEEE Sens. J. 2020, 20, 12040–12048.

- Xu, Y.; Liu, T.; Sun, B.; Zhang, Y.; Khatibi, S.; Sun, M. Indoor Vision/INS Integrated Mobile Robot Navigation Using Multimodel-Based Multifrequency Kalman Filter. Math. Probl. Eng. 2021, 2021, 6694084.

- Syahputra, K.A.; Sena Bayu, D.B.; Pramadihanto, D. 3D Indoor Mapping Based on Camera Visual Odometry Using Point Cloud. In Proceedings of the International Electronics Symposium: The Role of Techno-Intelligence in Creating an Open Energy System Towards Energy Democracy, Surabaya, Indonesia, 27–28 September 2019; pp. 94–99.

- Kanayama, H.; Ueda, T.; Ito, H.; Yamamoto, K. Two-mode Mapless Visual Navigation of Indoor Autonomous Mobile Robot using Deep Convolutional Neural Network. In Proceedings of the 2020 IEEE/SICE International Symposium on System Integration, Honolulu, HI, USA, 12–15 January 2020; pp. 536–541.

- Chen, C.; Zhu, H.; Wang, L.; Liu, Y. A stereo visual-inertial SLAM approach for indoor mobile robots in unknown environments without occlusions. IEEE Access 2019, 7, 185408–185421.

- Gao, M.; Yu, M.; Guo, H.; Xu, Y. Mobile robot indoor positioning based on a combination of visual and inertial sensors. Sensors 2019, 19, 1773.

- Al Khatib, E.I.; Jaradat, M.A.K.; Abdel-Hafez, M.F. Low-Cost Reduced Navigation System for Mobile Robot in Indoor/Outdoor Environments. IEEE Access 2020, 8, 25014–25026.

- Liu, R.; Shen, J.; Chen, C.; Yang, J. SLAM for Robotic Navigation by Fusing RGB-D and Inertial Data in Recurrent and Convolutional Neural Networks. In Proceedings of the 2019 IEEE 5th International Conference on Mechatronics System and Robots, Singapore, 3–5 May 2019; pp. 1–6.

- Xu, X.; Liu, X.; Zhao, B.; Yang, B. An extensible positioning system for locating mobile robots in unfamiliar environments. Sensors 2019, 19, 4025.

- Fu, Q.; Yu, H.; Lai, L.; Wang, J.; Peng, X.; Sun, W.; Sun, M. A robust RGB-D SLAM system with points and lines for low texture indoor environments. IEEE Sens. J. 2019, 19, 9908–9920.

- Han, S.; Xi, Z. Dynamic Scene Semantics SLAM Based on Semantic Segmentation. IEEE Access 2020, 8, 43563–43570.

- Sun, Q.; Yuan, J.; Zhang, X.; Duan, F. Plane-Edge-SLAM: Seamless Fusion of Planes and Edges for SLAM in Indoor Environments. IEEE Trans. Autom. Sci. Eng. 2021, 18, 2061–2075.

- Liao, Z.; Wang, W.; Qi, X.; Zhang, X. RGB-D object SLAM using quadrics for indoor environments. Sensors 2020, 20, 5150.

- Yang, S.; Fan, G.; Bai, L.; Li, R.; Li, D. MGC-VSLAM: A meshing-based and geometric constraint VSLAM for dynamic indoor environments. IEEE Access 2020, 8, 81007–81021.

- Wang, H.; Zhang, C.; Song, Y.; Pang, B.; Zhang, G. Three-Dimensional Reconstruction Based on Visual SLAM of Mobile Robot in Search and Rescue Disaster Scenarios. Robotica 2020, 38, 350–373.

- Yang, S.; Fan, G.; Bai, L.; Zhao, C.; Li, D. SGC-VSLAM: A semantic and geometric constraints VSLAM for dynamic indoor environments. Sensors 2020, 20, 2432.

- Zhang, F.; Li, Q.; Wang, T.; Ma, T. A robust visual odometry based on RGB-D camera in dynamic indoor environments. Meas. Sci. Technol. 2021, 32, 044033.

- Maffei, R.; Pittol, D.; Mantelli, M.; Prestes, E.; Kolberg, M. Global localization over 2D floor plans with free-space density based on depth information. In Proceedings of the IEEE International Conference on Intelligent Robots and Systems, Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 4609–4614.

- Tian, Z.; Guo, C.; Liu, Y.; Chen, J. An Improved RRT Robot Autonomous Exploration and SLAM Construction Method. In Proceedings of the 5th International Conference on Automation, Control and Robotics Engineering, Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 612–619.

- Hu, B.; Huang, H. Visual odometry implementation and accuracy evaluation based on real-time appearance-based mapping. Sens. Mater. 2020, 32, 2261–2275.

- Martín, F.; Matellán, V.; Rodríguez, F.J.; Ginés, J. Octree-based localization using RGB-D data for indoor robots. Eng. Appl. Artif. Intell. 2019, 77, 177–185.

- Yang, L.; Dryanovski, I.; Valenti, R.G.; Wolberg, G.; Xiao, J. RGB-D camera calibration and trajectory estimation for indoor mapping. Auton. Robot. 2020, 44, 1485–1503.

- Liu, Y.; Zhu, D.; Peng, J.; Wang, X.; Wang, L.; Chen, L.; Li, J.; Zhang, X. Real-Time Robust Stereo Visual SLAM System Based on Bionic Eyes. IEEE Trans. Med. Robot. Bionics 2020, 2, 391–398.

- Cebollada, S.; Paya, L.; Roman, V.; Reinoso, O. Hierarchical Localization in Topological Models Under Varying Illumination Using Holistic Visual Descriptors. IEEE Access 2019, 7, 49580–49595.

- Zhao, X.; Zuo, T.; Hu, X. OFM-SLAM: A Visual Semantic SLAM for Dynamic Indoor Environments. Math. Probl. Eng. 2021, 2021, 5538840.

- Lee, C.; Peng, J.; Xiong, Z. Asynchronous fusion of visual and wheel odometer for SLAM applications. In Proceedings of the IEEE/ASME International Conference on Advanced Intelligent Mechatronics, AIM, Boston, MA, USA, 6–9 July 2020; pp. 1990–1995.

- Maolanon, P.; Sukvichai, K.; Chayopitak, N.; Takahashi, A. Indoor Room Identify and Mapping with Virtual based SLAM using Furnitures and Household Objects Relationship based on CNNs. In Proceedings of the 10th International Conference on Information and Communication Technology for Embedded Systems, Bangkok, Thailand, 25–27 March 2019; pp. 1–6.

- Xu, M.; Ma, L. Object Semantic Annotation Based on Visual SLAM. In Proceedings of the 2021 Asia-Pacific Conference on Communications Technology and Computer Science, Shenyang, China, 22–24 January 2021; pp. 197–201.

- Rusli, I.; Trilaksono, B.R.; Adiprawita, W. RoomSLAM: Simultaneous localization and mapping with objects and indoor layout structure. IEEE Access 2020, 8, 196992–197004.

- Corotan, A.; Irgen-Gioro, J.J.Z. An Indoor Navigation Robot Using Augmented Reality. In Proceedings of the 2019 5th International Conference on Control, Automation and Robotics, Beijing, China, 19–22 April 2019; pp. 111–116.

- Luo, R.C.; Hsiao, T.J. Dynamic wireless indoor localization incorporating with an autonomous mobile robot based on an adaptive signal model fingerprinting approach. IEEE Trans. Ind. Electron. 2019, 66, 1940–1951.

- Rusli, M.E.; Ali, M.; Jamil, N.; Din, M.M. An Improved Indoor Positioning Algorithm Based on RSSI-Trilateration Technique for Internet of Things (IOT). In Proceedings of the 6th International Conference on Computer and Communication Engineering: Innovative Technologies to Serve Humanity, Kuala Lumpur, Malaysia, 26–27 July 2016; pp. 12–77.

- Villacrés, J.L.C.; Zhao, Z.; Braun, T.; Li, Z. A Particle Filter-Based Reinforcement Learning Approach for Reliable Wireless Indoor Positioning. IEEE J. Sel. Areas Commun. 2019, 37, 2457–2473.

- Zafari, F.; Papapanagiotou, I.; Devetsikiotis, M.; Hacker, T. An iBeacon based proximity and indoor localization system. arXiv 2017, arXiv:1703.07876.

- Khoi Huynh, M.; Anh Nguyen, D. A Research on Automated Guided Vehicle Indoor Localization System Via CSI. In Proceedings of the 2019 International Conference on System Science and Engineering, Dong Hoi, Vietnam, 20–21 July 2019; pp. 581–585.

- Geok, T.K.; Aung, K.Z.; Aung, M.S.; Soe, M.T.; Abdaziz, A.; Liew, C.P.; Hossain, F.; Tso, C.P.; Yong, W.H. Review of indoor positioning: Radio wave technology. Appl. Sci. 2021, 11, 279.

- Liu, J.; Pu, J.; Sun, L.; He, Z. An approach to robust INS/UWB integrated positioning for autonomous indoor mobile robots. Sensors 2019, 19, 950.

- Bottigliero, S.; Milanesio, D.; Saccani, M.; Maggiora, R. A low-cost indoor real-time locating system based on TDOA estimation of UWB pulse sequences. IEEE Trans. Instrum. Meas. 2021, 70, 5502211.

- Yang, T.; Cabani, A.; Chafouk, H. A Survey of Recent Indoor Localization Scenarios and Methodologies. Sensors 2021, 21, 8086.

- Obeidat, H.; Shuaieb, W.; Obeidat, O.; Abd-Alhameed, R. A Review of Indoor Localization Techniques and Wireless Technologies. Wirel. Pers. Commun. 2021, 119, 289–327.

- Sonnessa, A.; Saponaro, M.; Alfio, V.S.; Capolupo, A.; Turso, A.; Tarantino, E. Indoor Positioning Methods—A Short Review and First Tests Using a Robotic Platform for Tunnel Monitoring. In Proceedings of the International Conference on Computational Science and Its Applications, Cagliari, Italy, 1–4 July 2020; pp. 664–679.

- Cui, W.; Liu, Q.; Zhang, L.; Wang, H.; Lu, X.; Li, J. A robust mobile robot indoor positioning system based on Wi-Fi. Int. J. Adv. Robot. Syst. 2020, 17, 1729881419896660.

- Thewan, T.; Seksan, C.; Pramot, S.; Ismail, A.H.; Terashima, K. Comparing WiFi RSS filtering for wireless robot location system. Procedia Manuf. 2019, 30, 143–150.

- Sun, J.; Fu, Y.; Li, S.; Su, Y. Automated guided vehicle indoor positioning method based cellular automata. In Proceedings of the 2018 Chinese Intelligent Systems Conference, Wenzhou, Zhejiang, China, 13–14 October 2018; pp. 615–630.

- Zhang, L.; Chen, Z.; Cui, W.; Li, B.; Chen, C.; Cao, Z.; Gao, K. WiFi-Based Indoor Robot Positioning Using Deep Fuzzy Forests. IEEE Internet Things J. 2020, 7, 10773–10781.

- Yu, N.; Zhan, X.; Zhao, S.; Wu, Y.; Feng, R. A Precise Dead Reckoning Algorithm Based on Bluetooth and Multiple Sensors. IEEE Internet Things J. 2018, 5, 336–351.

- Zafari, F.; Gkelias, A.; Leung, K.K. A Survey of Indoor Localization Systems and Technologies. IEEE Commun. Surv. Tutor. 2019, 21, 2568–2599.

- Sreeja, M.; Sreeram, M. Bluetooth 5 beacons: A novel design for Indoor positioning. In Proceedings of the ACM International Conference Proceeding Series, Tacoma, DC, USA, 18–20 August 2017.

- Alexandr, A.; Anton, D.; Mikhail, M.; Ilya, K. Comparative analysis of indoor positioning methods based on the wireless sensor network of bluetooth low energy beacons. In Proceedings of the 2020 International Conference Engineering and Telecommunication, Dolgoprudny, Russia, 25–26 November 2020; pp. 1–5.

- Parhi, D. Advancement in navigational path planning of robots using various artificial and computing techniques. Int. Robot. Autom. J. 2018, 4, 133–136.

- Wang, Z.; Liu, M.; Zhang, Y. Mobile localization in complex indoor environment based on ZigBee wireless network. J. Phys. Conf. Ser. 2019, 1314, 012214.

- Wang, J.; Takahashi, Y. Particle Smoother-Based Landmark Mapping for the SLAM Method of an Indoor Mobile Robot with a Non-Gaussian Detection Model. J. Sens. 2019, 2019, 3717298.

- Patre, S.R. Passive Chipless RFID Sensors: Concept to Applications A Review. IEEE J. Radio Freq. Identif. 2022, 6, 64–76.

- Ibrahim, A.A.A.; Nisar, K.; Hzou, Y.K.; Welch, I. Review and Analyzing RFID Technology Tags and Applications. In Proceedings of the 13th IEEE International Conference on Application of Information and Communication Technologies, AICT 2019, Baku, Azerbaijan, 23–25 October 2019; pp. 1–4.

- Martinelli, F. Simultaneous Localization and Mapping Using the Phase of Passive UHF-RFID Signals. J. Intell. Robot. Syst. Theory Appl. 2019, 94, 711–725.

- Magnago, V.; Palopoli, L.; Fontanelli, D.; Macii, D.; Motroni, A.; Nepa, P.; Buffi, A.; Tellini, B. Robot localisation based on phase measures of backscattered UHF-RFID signals. In Proceedings of the 2019 IEEE International Instrumentation and Measurement Technology Conference, Auckland, New Zealand, 20–23 May 2019; pp. 1–6.

- Bernardini, F.; Motroni, A.; Nepa, P.; Buffi, A.; Tripicchio, P.; Unetti, M. Particle swarm optimization in multi-antenna SAR-based localization for UHF-RFID tags. In Proceedings of the 2019 IEEE International Conference on RFID Technology and Applications, Pisa, Italy, 25–27 September 2019; pp. 291–296.

- Gareis, M.; Hehn, M.; Stief, P.; Korner, G.; Birkenhauer, C.; Trabert, J.; Mehner, T.; Vossiek, M.; Carlowitz, C. Novel UHF-RFID Listener Hardware Architecture and System Concept for a Mobile Robot Based MIMO SAR RFID Localization. IEEE Access 2021, 9, 497–510.

- Tzitzis, A.; Megalou, S.; Siachalou, S.; Emmanouil, T.G.; Filotheou, A.; Yioultsis, T.V.; Dimitriou, A.G. Trajectory Planning of a Moving Robot Empowers 3D Localization of RFID Tags with a Single Antenna. IEEE J. Radio Freq. Identif. 2020, 4, 283–299.

- Yadav, R.; Malviya, L. UWB antenna and MIMO antennas with bandwidth, band-notched, and isolation properties for high-speed data rate wireless communication: A review. Int. J. RF Microw. Comput. Aided Eng. 2020, 30, e22033.

- Cano, J.; Chidami, S.; Le Ny, J. A Kalman filter-based algorithm for simultaneous time synchronization and localization in UWB networks. In Proceedings of the IEEE International Conference on Robotics and Automation, Montreal, QC, Canada, 20–24 May 2019; pp. 1431–1437.

- Sbirna, S.; Sbirna, L.S. Optimization of indoor localization of automated guided vehicles using ultra-wideband wireless positioning sensors. In Proceedings of the 2019 23rd International Conference on System Theory, Control and Computing, Sinaia, Romania, 9–11 October 2019; pp. 504–509.

- Xin, J.; Gao, K.; Shan, M.; Yan, B.; Liu, D. A Bayesian Filtering Approach for Error Mitigation in Ultra-Wideband Ranging. Sensors 2019, 19, 440.

- Shi, D.; Mi, H.; Collins, E.G., Jr.; Wu, J. An Indoor Low-Cost and High-Accuracy Localization Approach for AGVs. IEEE Access 2020, 8, 50085–50090.

- Zhu, X.; Yi, J.; Cheng, J.; He, L. Adapted Error Map Based Mobile Robot UWB Indoor Positioning. IEEE Trans. Instrum. Meas. 2020, 69, 6336–6350.

- Lim, H.; Park, C.; Myung, H. RONet: Real-time Range-only Indoor Localization via Stacked Bidirectional LSTM with Residual Attention. In Proceedings of the IEEE International Conference on Intelligent Robots and Systems, Macau, China, 3–8 November 2019; pp. 3241–3247.

- Sutera, E.; Mazzia, V.; Salvetti, F.; Fantin, G.; Chiaberge, M. Indoor point-to-point navigation with deep reinforcement learning and ultra-wideband. In Proceedings of the 13th International Conference on Agents and Artificial Intelligence, Online Streaming, 4–6 February 2021; pp. 38–47.

- Li, X.; Wang, Y. Research on the UWB/IMU fusion positioning of mobile vehicle based on motion constraints. Acta Geod. Geophys. 2020, 55, 237–255.

- Liu, Y.; Sun, R.; Liu, J.; Fan, Y.; Li, L.; Zhang, Q. Research on the positioning method of autonomous mobile robot in structure space based on UWB. In Proceedings of the 2019 International Conference on High Performance Big Data and Intelligent Systems, Shenzhen, China, 9–11 May 2019; pp. 278–282.