Distributed edge intelligence is a disruptive research area that enables machine learning and deep learning (ML/DL) algorithms to run close to where data are generated. Edge devices are more resource-constrained and heterogeneous than typical cloud servers. As a result, several obstacles must be overcome to fully realize the potential benefits of this approach, such as data-in-motion analytics.

- machine learning

- artificial intelligence

- distributed

- edge intelligence

- fog intelligence

- Internet of Things

1. Introduction

Executing ML/DL at the edge helps overcome several limitations of cloud-centric processing:

- High latency [8]: offloading intelligence tasks to the edge enables faster inference because it avoids the inherent delay of transmitting data through the network backbone;

- Security and privacy issues [9][10]: sensitive data can be used for training and inference entirely at the edge. This prevents their risky propagation through the network, where they are susceptible to attacks. Moreover, edge intelligence can derive non-sensitive information that can then be submitted to the cloud without further processing;

- The need for a continuous internet connection: ML/DL tasks can still be carried out in locations where connectivity is poor or intermittent;

- Bandwidth degradation: edge computing can perform part of the processing on raw data and transmit only the resulting (filtered/aggregated/pre-processed) data to the cloud, thus saving network bandwidth. Transmitting large amounts of data to the cloud burdens the network and degrades the overall Quality of Service (QoS) [11];

- Power waste [12]: transmitting unnecessary raw data over the internet consumes power, which lowers energy efficiency on a large scale.
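The following minimal Python sketch makes the bandwidth argument concrete. It is an illustration rather than a method taken from the surveyed works. An edge device aggregates a hypothetical stream of raw sensor readings into per-window (mean, min, max) summaries. The sketch then compares the upload size of the raw data against the summaries, assuming every value is sent as a 4-byte float32. The function names `summarize_windows` and `payload_bytes`, the window size, and the synthetic readings are all assumptions chosen for illustration.

```python
import struct
from statistics import mean


def summarize_windows(samples, window):
    """Aggregate raw readings into one (mean, min, max) tuple per fixed-size window."""
    summaries = []
    for start in range(0, len(samples), window):
        chunk = samples[start:start + window]
        summaries.append((mean(chunk), min(chunk), max(chunk)))
    return summaries


def payload_bytes(values):
    """Size of the values when packed as little-endian float32 (assumed wire format)."""
    return len(struct.pack(f"<{len(values)}f", *values))


if __name__ == "__main__":
    # Hypothetical temperature readings produced by one sensor.
    raw = [20.0 + (i % 50) * 0.1 for i in range(10_000)]
    summaries = summarize_windows(raw, window=100)
    flat = [value for triple in summaries for value in triple]
    raw_size = payload_bytes(raw)    # what a cloud-only design would upload
    edge_size = payload_bytes(flat)  # what the edge node uploads after pre-processing
    print(raw_size, edge_size, raw_size / edge_size)
```

With 10,000 readings and a window of 100, the edge node uploads 300 values instead of 10,000, a reduction of roughly 33×. The saving in a real deployment depends on which statistics the cloud-side application actually needs.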

2. Related Work

Several surveys addressing edge intelligence have been published recently. However, their perspectives differ from the one adopted in this SLR. Al-Rakhami et al. [13] propose and analyze a framework based on the distributed edge/cloud paradigm. The framework uses Docker, which provides a very lightweight and effective virtualization solution. This solution can be used to manage, deploy, and distribute applications onto clusters (e.g., of small-board devices such as the Raspberry Pi). It combines various benefits with the lower cost of processing data at the edge instead of on central servers. However, the authors rely on experiments to support the proposal of a new framework, and the work does not mention any of the nine groups of techniques the researchers present in this work. The survey by Wang et al. [14] centers on the connection between deep learning and the edge, either applying DL to optimize the edge or using the edge to run DL algorithms. The study is divided into five fronts: DL applications on the edge; DL inference at the edge; edge computing for DL; DL training at the edge; and DL for optimizing the edge. The paper discusses hardware and virtualization aspects. Concerning (groups of) techniques and strategies, it is largely restricted to Federated Learning and the optimization of the edge with DL. Xu et al. [10] take a very comprehensive approach to edge intelligence, covering edge caching, edge training, edge inference, and edge offloading. The authors discuss all these aspects and also explore additional techniques and strategies related to pre-processing, federated learning, and scheduling. This paper therefore intersects with the researchers' work in three of the groups of techniques presented here (Federated Learning, Edge Pre-processing, and Scheduling). However, the researchers extend the discussion to more groups of techniques.
The work presented by Zhou et al. [15] covers artificial intelligence to edge AI, showing a generalized representation of application architecture used in the lifecycle management of ML. In the edge layer: sensors/actuators; edge analytics; logging and monitoring. In the fog layer: visualization; live streaming engines; batch processing; data ingestion; storage and ML model development platforms and libraries. The researchers' respapearchr approaches several more domains in which edge intelligence is used, which are not present in this survey. Compared to these other surveys, the researchers analyze the literature more comprehensively, including a discussion on application domains of edge intelligence and their correlation with identified techniques. Verbraeken et al. [16] provide an extensive overview of the current state-of-the-art in terms of outlining the challenges and opportunities of distributed machine learning over conventional machine learning, discussing the techniques used for distributed machine learning. The paper follows the same line of research of Wang et al. [14], with a focus on machine learning applied to the distributed environment. To this end, it makes inroads into the various types of algorithms to solve problems using ML. Table 1 shows the comparison between the researchers' work and the other surveys mentioned in this section. In summary, the main gaps of the analyzed works are focused on aspects such as “Techniques and Strategies” on the edge. The table also shows the aspects of “Challenges”and “Different Application Domains”, where edge intelligence can be used.Scope | |||||||||

|---|---|---|---|---|---|---|---|---|---|

References | Groups of | Techniques or | Works That Tackle the Challenges | Strategies | Comments | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Paper | Challenges | Group | of Techniques | Different Application | Domains | ||||||||||||

Al-Rakhami et al. [13] | 0/6 | Running ML/DL on devices with limited resources | |||||||||||||||

CH1 | |||||||||||||||||

2/8 | Model Partitioning | [14][24][27][28][29][30][31][32][33][34][35][36][37][38][39][40][41][42] |

Lightweight scheduler to automatically partition DNN computation between edge devices and cloud at the granularity of NN layers | 1/6 | |||||||||||||

] | [ | ||||||||||||||||

JointDNN [73] | |||||||||||||||||

Model Partitioning | JointDNN provides an energy- and performance-efficient method of querying some layers on the mobile device and some layers on the cloud server. | CH3 | |||||||||||||||

H. Li et al. [31] | Model Partitioning | ||||||||||||||||

They divide the NN layers and deploy the part with the lower ones (closer to the input) into edge servers and the part with higher layers (closer to the output) into the cloud for offloading processing. They also propose an offline and an online algorithm that schedules tasks in Edge servers. | CH4 | ||||||||||||||||

Musical chair [74] | [ |

Model Partitioning | |||||||||||||||

Musical Chair aims at alleviating the compute cost and overcoming the resource barrier by distributing their computation: data parallelism and model parallelism. | CH5 | – | |||||||||||||||

CH6 |

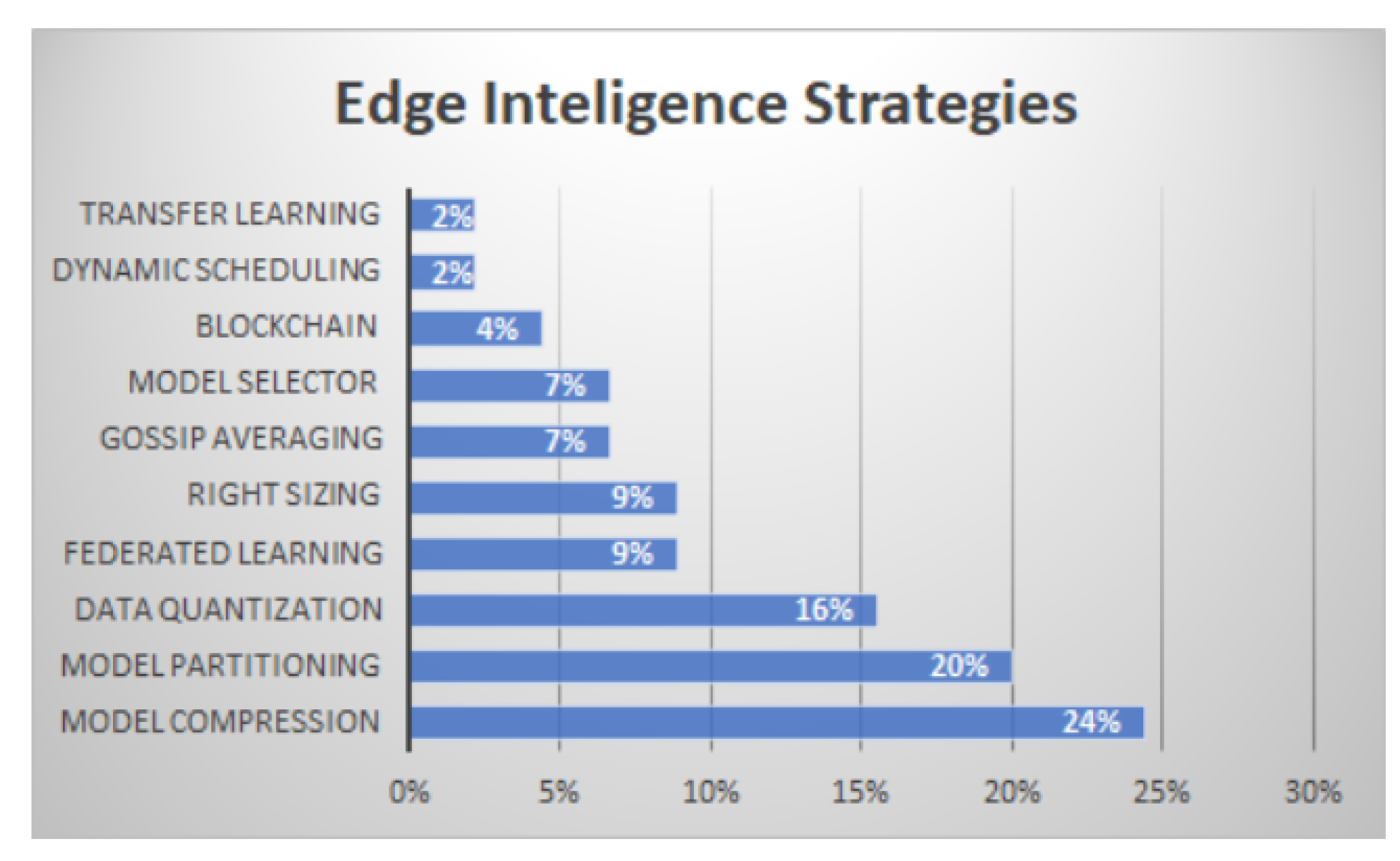

3.2. RQ2—Techniques and Strategies

Here, the researchers focus on three main aspects, namely: (i) the system architecture, (ii) how the ML tasks are distributed among the devices, and (iii) the underlying adopted techniques. The researchers classify the several approaches used in distributed learning based on these three aspects. The researchers identified nine groups of techniques and strategies, described in what follows: Federated learning; Model partitioning; Right-sizing; Edge pre-processing; Scheduling; Cloud pre-training; Edge only; Model Compression; and Other techniques.3.3. RQ3—Frameworks for Edge Intelligence

This section describes the studies that provided answers to the RQ3 of this survey. Table 4 lists the main frameworks currently used in distributed ML applications. The table also correlates each framework with the corresponding EI group of techniques or the main related strategy.Framework | |||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

[ | ][44][45][46][47][48][49][50][51][52][53][54][55][56][57][58][59][60][61][62] | ||||||||||||||||

Wang et al. [14] | CH2 | 1/6 | Ensuring energy efficiency without compromising the accuracy | ||||||||||||||

CH2 | 4/8 | 4/6 | |||||||||||||||

] | [33 |

Verbraeken et al. [16] | CH3 | 1/6 | 0/8 | 0/6 | |||||||||||

Communication efficiency | Zhou et al. [15] | 2/6 | |||||||||||||||

Intelligent Transport Systems (ITS) (13) | 4/8 | ||||||||||||||||

CH4 | Ensuring data privacy and security | 0/6 | |||||||||||||||

Dianlei Xu et al. [10] | 6/6 | ||||||||||||||||

CH5 | Handling failure in edge devices | 3/8 | 0/6 | ||||||||||||||

The researchers' work | 6/6 | 8/8 | 6/6 |

3. Answering the RQs

3.1. RQ1—Research Challenges in Edge Intelligence (EI)

In this section, the researchers summarize the challenges faced by the Edge Intelligence (EI) paradigm that the analyzed studies either mentioned or aimed to tackle. The discussion presented in this section aims to provide answers to RQ1: What are the main challenges and open issues in the distributed learning field? As mentioned earlier, performing ML techniques at the edge of the network promises to bring several benefits, but it raises several challenges. As this field is still in its beginning, solutions to such challenges are still being investigated. The surveyed studies tackle several challenges, which can be broadly grouped into six categories, displayed in Table 2 and described in what follows.Challenges | |||||

|---|---|---|---|---|---|

CH1 | |||||

CH6 | |||||

Heterogeneity and low quality of data | |||||

| AAIoT [75] | Model Partitioning | Accurately segments NNs under multi-layer IoT architectures. |
| MobileNet [42] | Model Compression, Model Selector | Presented by Google Inc.; its two hyperparameters allow the model builder to choose the right-sized model for the specific application. |
| SqueezeNet | Model Compression | A reduced DNN that achieves AlexNet-level accuracy with 50 times fewer parameters. |
| Tiny-YOLO | Model Compression | A very light NN that is therefore suitable for running on edge devices. Its accuracy is comparable to that of the standard AlexNet for small numbers of classes, but it is much faster. |
| BranchyNet | Right-Sizing | Open-source DNN training framework that supports the early-exit mechanism. |
| TeamNet [76] | Model Compression, Transfer Learning | Trains shallower models using a similar but downsized architecture of a given state-of-the-art (SOTA) deep model. The master node compares its uncertainty with that of the workers and selects the result with the least uncertainty as the final result. |
| OpenEI [42] | Model Compression, Data Quantization, Model Selector | The algorithms are optimized by compressing the model and quantizing its weights. The model selector chooses the most suitable model based on the developer's requirement (accuracy by default) and the currently available computing resources. |
| TensorFlow Lite [77] | Data Quantization | TensorFlow's lightweight solution, designed for mobile and edge devices. It leverages many optimization techniques, including quantized kernels, to reduce latency. |
| QNNPACK (Quantized Neural Networks PACKage) [78] | Data Quantization | Developed by Facebook, it is a mobile-optimized library for high-performance NN inference that implements common NN operators on quantized 8-bit tensors. |
| ProtoNN [79] | Model Compression | Inspired by k-Nearest Neighbors (KNN); can be deployed on edge devices with limited storage and computational power. |
| EMI-RNN [80] | Right-Sizing | Requires 72 times less computation than standard Long Short-Term Memory (LSTM) networks while improving accuracy by 1%. |
| Core ML [81] | Model Compression, Data Quantization | Published by Apple, it is a deep learning package optimized for on-device performance that minimizes memory footprint and power consumption. It allows users to integrate trained machine learning models into Apple products, such as Siri, Camera, and QuickType. |
| DroNet [33] | Model Compression, Data Quantization | The DroNet topology was inspired by residual networks and reduced in size to minimize the bare image-processing (inference) time. The numerical representation of weights and activations is reduced from the native 32-bit floating point (Float32) to 16-bit fixed point (Fixed16). |
| Stratum [82] | Model Selector, Dynamic Scheduling | Stratum can select the best model by evaluating a series of user-built models. A resource-monitoring framework within Stratum tracks resource utilization and triggers actions to elastically scale resources and migrate tasks, as needed, to meet the ML workflow's Quality of Service (QoS). ML modules can be placed at the edge or in the cloud layer, depending on user requirements and capacity analysis. |
| Efficient distributed deep learning (EDDL) [53] | Model Compression, Model Partitioning, Right-Sizing | A systematic and structured scheme based on balanced incomplete block design (BIBD), used when the dataflows in DNNs are sparse. Vertical and horizontal model partitioning and grouped convolution reduce computation and memory, and BranchyNet is used to speed up inference. |
| In-Edge AI [5] | Federated Learning | Uses collaboration among devices and edge nodes to exchange learning parameters for better training and inference of the models. |
| Edgence [83] | Blockchain | Edgence (EDGe + intelligENCE) is a blockchain-enabled edge-computing platform proposed to intelligently manage massive decentralized applications in IoT use cases. |
| FederatedAveraging (FedAvg) [84] | Federated Learning | Combines local stochastic gradient descent (SGD) on each client with a server that performs model averaging. |
| SSGD | Federated Learning | System that enables multiple parties to jointly learn an accurate neural network model for a given objective without sharing their input datasets. |
| BlockFL [86] | Blockchain, Federated Learning | Mobile devices' local model updates are exchanged and verified by leveraging blockchain. |
| Edgent [6] | Model Partitioning, Right-Sizing | Adaptively partitions DNN computation between the device and the edge to leverage nearby hybrid computation resources for real-time DNN inference. DNN right-sizing accelerates inference through an early exit at an appropriate intermediate DNN layer, further reducing computation latency. |
| PipeDream [87] | Model Partitioning | Keeps all available GPUs productive by systematically partitioning DNN layers among them to balance work and minimize communication. |
| GoSGD [88] | Gossip Averaging | Method for sharing information between different threads based on gossip algorithms, with good consensus convergence properties. |
| Gossiping SGD [89] | Gossip Averaging | Asynchronous method that replaces the all-reduce collective operation of synchronous training with a gossip aggregation algorithm. |
| GossipGraD [90] | Gossip Averaging | Communicates gradients asynchronously to further reduce communication cost. |
| INCEPTIONN [91] | Data Quantization | Lossy compression algorithm for floating-point gradients. The framework reduces communication time by 70.9–80.7% and offers a 2.2–3.1× speedup over the conventional training system while achieving the same level of accuracy. |
| Minerva [92] | Data Quantization, Model Compression | Quantization analysis minimizes bit widths without exceeding a strict prediction error bound. Compared to a 16-bit fixed-point baseline, Minerva reduces power consumption by 1.5×. Minerva also identifies operands that are close to zero and removes them from the prediction computation without affecting model accuracy. This selective pruning reduces power consumption by a further 2.0× on top of bit-width quantization. |
| AdaDeep [93] | Model Compression | Automatically selects, for a given DNN, the combination of compression techniques that best balances user-specified performance goals and resource constraints. AdaDeep achieves up to 9.8× latency reduction, 4.3× energy-efficiency improvement, and 38× storage reduction in DNNs while incurring negligible accuracy loss. |
| JALAD [94] | Data Quantization, Model Partitioning | Compresses data by jointly considering compression rate and model accuracy. It employs a latency-aware deep decoupling strategy to minimize overall execution latency, splitting a deep NN so that one part runs on edge devices and the other part in the conventional cloud. |
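The FederatedAveraging (FedAvg) entry above combines local SGD on each client with a server that averages the resulting models. The following is a minimal pure-Python sketch of that loop. It assumes a toy one-dimensional linear model, full client participation in every round, and single-sample SGD updates. The client data, learning rate, number of rounds, and function names (`local_sgd`, `fedavg_round`, `make_client`) are hypothetical choices for illustration. The original FedAvg algorithm additionally samples a fraction of clients per round and trains on minibatches.

```python
import random


def local_sgd(weights, data, lr=0.01, epochs=5):
    """Client side: plain SGD on squared loss for the toy model y = w*x + b."""
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # prediction error on one sample
            w -= lr * err * x      # gradient of 0.5*err^2 w.r.t. w
            b -= lr * err          # gradient of 0.5*err^2 w.r.t. b
    return (w, b)


def fedavg_round(global_weights, client_datasets, lr=0.01, epochs=5):
    """Server side: every client trains from the global model; the server
    averages the returned models, weighting each by its share of samples."""
    total = sum(len(d) for d in client_datasets)
    new_w = new_b = 0.0
    for data in client_datasets:
        w, b = local_sgd(global_weights, data, lr, epochs)
        share = len(data) / total
        new_w += share * w
        new_b += share * b
    return (new_w, new_b)


def make_client(n, rng):
    """Hypothetical private client data drawn from y = 3x + 1 plus small noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.05)) for x in xs]


if __name__ == "__main__":
    rng = random.Random(0)
    clients = [make_client(n, rng) for n in (20, 50, 80)]  # unequal dataset sizes
    weights = (0.0, 0.0)
    for _ in range(30):
        weights = fedavg_round(weights, clients, lr=0.05, epochs=2)
    print(weights)  # approaches (3, 1) without pooling the raw data
```

Each client's model is weighted by its share of the total samples, which mirrors the n_k/n weighting used by FedAvg. Only model parameters leave the clients, never the raw (x, y) pairs. This is the privacy property that motivates federated learning at the edge.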

3.4. RQ4—Edge Intelligence Application Domains

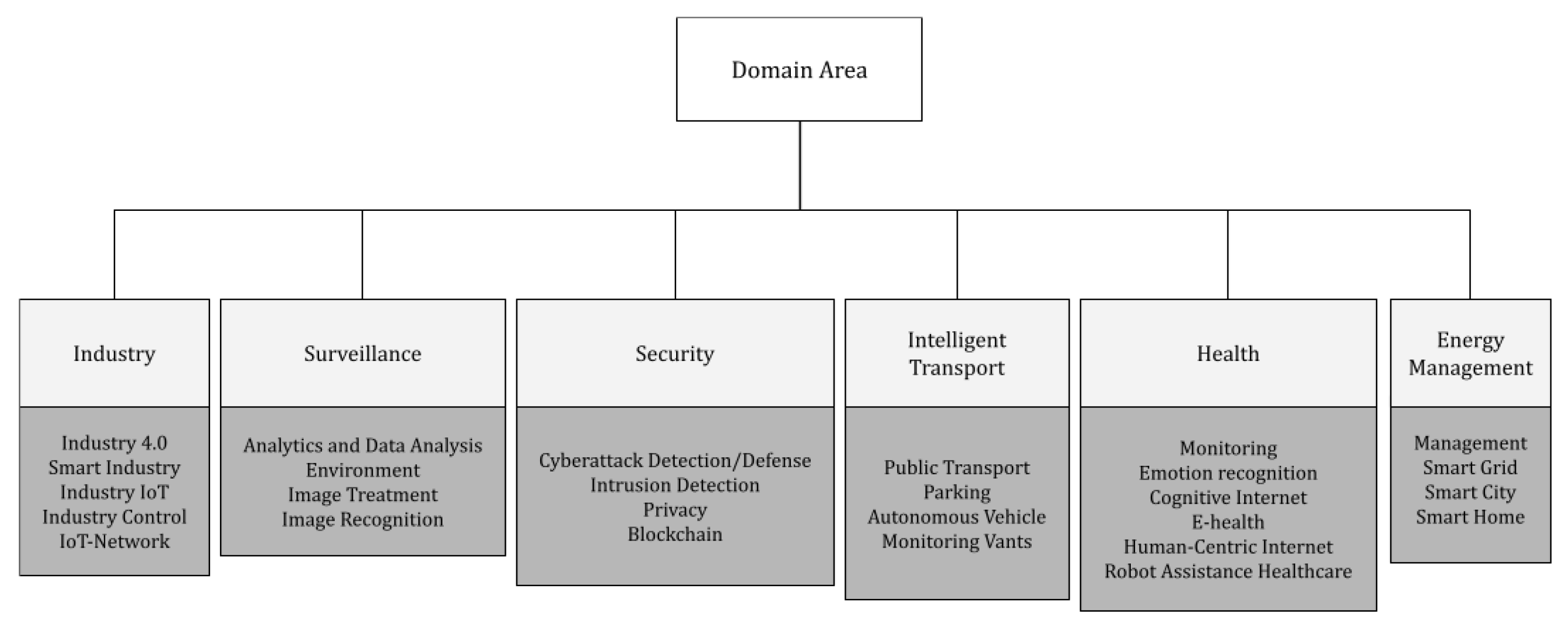

In this section, the researchers present a taxonomy that characterizes the application domains in which EI has been adopted, providing input to answer RQ4. The researched articles could be grouped into six main domains: (i) Industry, (ii) Surveillance, (iii) Security, (iv) Intelligent Transport, (v) Health, and (vi) Energy Management. This does not preclude new domains from emerging as research progresses. Figure 2 illustrates this taxonomy up to the third level. Table 5 shows the works that tackle these domains. Figure 3 summarizes the publication statistics for each of the six domains.

References

- AI and MEMS Sensors: A Critical Pairing | SEMI. Available online: https://www.semi.org/en/blogs/technology-trends/ai-and-mems-sensors (accessed on 1 March 2022).

- Dion, G.; Mejaouri, S.; Sylvestre, J. Reservoir computing with a single delay-coupled non-linear mechanical oscillator. J. Appl. Phys. 2018, 124, 152132.

- Rafaie, M.; Hasan, M.H.; Alsaleem, F.M. Neuromorphic MEMS sensor network. Appl. Phys. Lett. 2019, 114, 163501.

- Hasan, M.H.; Al-Ramini, A.; Abdel-Rahman, E.; Jafari, R.; Alsaleem, F. Colocalized Sensing and Intelligent Computing in Micro-Sensors. Sensors 2020, 20, 6346.

- Wang, X.; Han, Y.; Wang, C.; Zhao, Q.; Chen, X.; Chen, M. In-edge ai: Intelligentizing mobile edge computing, caching and communication by federated learning. IEEE Netw. 2019, 33, 156–165.

- Li, E.; Zhou, Z.; Chen, X. Edge intelligence: On-demand deep learning model co-inference with device-edge synergy. In Proceedings of the 2018 Workshop on Mobile Edge Communications, Budapest, Hungary, 20 August 2018; pp. 31–36.

- Wang, Z.; Cui, Y.; Lai, Z. A first look at mobile intelligence: Architecture, experimentation and challenges. IEEE Netw. 2019, 33, 120–125.

- Zhang, Y.; Huang, H.; Yang, L.X.; Xiang, Y.; Li, M. Serious challenges and potential solutions for the industrial Internet of Things with edge intelligence. IEEE Netw. 2019, 33, 41–45.

- Qureshi, K.N.; Iftikhar, A.; Bhatti, S.N.; Piccialli, F.; Giampaolo, F.; Jeon, G. Trust management and evaluation for edge intelligence in the Internet of Things. Eng. Appl. Artif. Intell. 2020, 94, 103756.

- Xu, D.; Li, T.; Li, Y.; Su, X.; Tarkoma, S.; Jiang, T.; Crowcroft, J.; Hui, P. Edge Intelligence: Architectures, Challenges, and Applications. arXiv 2020, arXiv:2003.12172.

- Wang, J.; Zhang, J.; Bao, W.; Zhu, X.; Cao, B.; Yu, P.S. Not just privacy: Improving performance of private deep learning in mobile cloud. In Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, London, UK, 19–23 August 2018; pp. 2407–2416.

- Zhang, W.; Zhang, Z.; Zeadally, S.; Chao, H.C.; Leung, V.C. MASM: A multiple-algorithm service model for energy-delay optimization in edge artificial intelligence. IEEE Trans. Ind. Inform. 2019, 15, 4216–4224.

- Al-Rakhami, M.; Alsahli, M.; Hassan, M.M.; Alamri, A.; Guerrieri, A.; Fortino, G. Cost efficient edge intelligence framework using docker containers. In Proceedings of the 2018 IEEE 16th International Conference on Dependable, Autonomic and Secure Computing Congress (DASC/PiCom/DataCom/CyberSciTech), Athens, Greece, 12–15 August 2018; pp. 800–807.

- Wang, X.; Han, Y.; Leung, V.C.; Niyato, D.; Yan, X.; Chen, X. Convergence of edge computing and deep learning: A comprehensive survey. IEEE Commun. Surv. Tutorials 2020, 22, 869–904.

- Zhou, Z.; Chen, X.; Li, E.; Zeng, L.; Luo, K.; Zhang, J. Edge intelligence: Paving the last mile of artificial intelligence with edge computing. Proc. IEEE 2019, 107, 1738–1762.

- Verbraeken, J.; Wolting, M.; Katzy, J.; Kloppenburg, J.; Verbelen, T.; Rellermeyer, J.S. A survey on distributed machine learning. ACM Comput. Surv. (CSUR) 2020, 53, 1–33.

- Valerio, L.; Passarella, A.; Conti, M. Accuracy vs. traffic trade-off of learning iot data patterns at the edge with hypothesis transfer learning. In Proceedings of the 2016 IEEE 2nd International Forum on Research and Technologies for Society and Industry Leveraging a better tomorrow (RTSI), Bologna, Italy, 7–9 September 2016; pp. 1–6.

- Zou, Z.; Jin, Y.; Nevalainen, P.; Huan, Y.; Heikkonen, J.; Westerlund, T. Edge and fog computing enabled AI for IoT-an overview. In Proceedings of the 2019 IEEE International Conference on Artificial Intelligence Circuits and Systems (AICAS), Hsinchu, Taiwan, 18–20 March 2019; pp. 51–56.

- Hossain, M.S.; Muhammad, G.; Amin, S.U. Improving consumer satisfaction in smart cities using edge computing and caching: A case study of date fruits classification. Future Gener. Comput. Syst. 2018, 88, 333–341.

- Sharma, A.; Sabitha, A.S.; Bansal, A. Edge analytics for building automation systems: A review. In Proceedings of the 2018 International Conference on Advances in Computing, Communication Control and Networking (ICACCCN), Greater Noida, India, 12–13 October 2018; pp. 585–590.

- Wan, S.; Gu, Z.; Ni, Q. Cognitive computing and wireless communications on the edge for healthcare service robots. Comput. Commun. 2020, 149, 99–106.

- Elbamby, M.S.; Perfecto, C.; Liu, C.F.; Park, J.; Samarakoon, S.; Chen, X.; Bennis, M. Wireless edge computing with latency and reliability guarantees. Proc. IEEE 2019, 107, 1717–1737.

- Rausch, T.; Dustdar, S. Edge intelligence: The convergence of humans, things, and ai. In Proceedings of the 2019 IEEE International Conference on Cloud Engineering (IC2E), Prague, Czech Republic, 24–27 June 2019; pp. 86–96.

- Fasciano, C.; Vitulano, F. Artificial Intelligence on Edge Computing: A Healthcare Scenario in Ambient Assisted Living. In Proceedings of the Artificial Intelligence for Ambient Assisted Living (AI*AAL.it 2019), Rende, Italy, 20–23 November 2019.

- Wang, Y.; Meng, W.; Li, W.; Liu, Z.; Liu, Y.; Xue, H. Adaptive machine learning-based alarm reduction via edge computing for distributed intrusion detection systems. Concurr. Comput. Pract. Exp. 2019, 31, e5101.

- Yazici, M.T.; Basurra, S.; Gaber, M.M. Edge machine learning: Enabling smart internet of things applications. Big Data Cogn. Comput. 2018, 2, 26.

- Chen, S.; Gong, P.; Wang, B.; Anpalagan, A.; Guizani, M.; Yang, C. EDGE AI for heterogeneous and massive IoT networks. In Proceedings of the 2019 IEEE 19th International Conference on Communication Technology (ICCT), Xi’an, China, 16–19 October 2019; pp. 350–355.

- Zhou, J.; Dai, H.N.; Wang, H. Lightweight convolution neural networks for mobile edge computing in transportation cyber physical systems. ACM Trans. Intell. Syst. Technol. (TIST) 2019, 10, 1–20.

- Ali, M.; Anjum, A.; Yaseen, M.U.; Zamani, A.R.; Balouek-Thomert, D.; Rana, O.; Parashar, M. Edge enhanced deep learning system for large-scale video stream analytics. In Proceedings of the 2018 IEEE 2nd International Conference on Fog and Edge Computing (ICFEC), Washington, DC, USA, 1–3 May 2018; pp. 1–10.

- Li, E.; Zeng, L.; Zhou, Z.; Chen, X. Edge AI: On-demand accelerating deep neural network inference via edge computing. IEEE Trans. Wirel. Commun. 2019, 19, 447–457.

- Li, H.; Ota, K.; Dong, M. Learning IoT in edge: Deep learning for the Internet of Things with edge computing. IEEE Netw. 2018, 32, 96–101.

- Hassan, M.A.; Xiao, M.; Wei, Q.; Chen, S. Help your mobile applications with fog computing. In Proceedings of the 2015 12th Annual IEEE International Conference on Sensing, Communication, and Networking-Workshops (SECON Workshops), Seattle, WA, USA, 22–25 June 2015; pp. 1–6.

- Liu, C.; Cao, Y.; Luo, Y.; Chen, G.; Vokkarane, V.; Yunsheng, M.; Chen, S.; Hou, P. A new deep learning-based food recognition system for dietary assessment on an edge computing service infrastructure. IEEE Trans. Serv. Comput. 2017, 11, 249–261.

- Liu, Y.; Yang, C.; Jiang, L.; Xie, S.; Zhang, Y. Intelligent edge computing for IoT-based energy management in smart cities. IEEE Netw. 2019, 33, 111–117.

- Nishio, T.; Yonetani, R. Client selection for federated learning with heterogeneous resources in mobile edge. In Proceedings of the ICC 2019-2019 IEEE International Conference on Communications (ICC), Shanghai, China, 20–24 May 2019; pp. 1–7.

- Bura, H.; Lin, N.; Kumar, N.; Malekar, S.; Nagaraj, S.; Liu, K. An edge based smart parking solution using camera networks and deep learning. In Proceedings of the 2018 IEEE International Conference on Cognitive Computing (ICCC), San Francisco, CA, USA, 2–7 July 2018; pp. 17–24.

- Wei, J.; Cao, S. Application of edge intelligent computing in satellite Internet of Things. In Proceedings of the 2019 IEEE International Conference on Smart Internet of Things (SmartIoT), Tianjin, China, 9–11 August 2019; pp. 85–91.

- Moon, J.; Kum, S.; Lee, S. A heterogeneous IoT data analysis framework with collaboration of edge-cloud computing: Focusing on indoor PM10 and PM2.5 status prediction. Sensors 2019, 19, 3038.

- Ke, R.; Zhuang, Y.; Pu, Z.; Wang, Y. A smart, efficient, and reliable parking surveillance system with edge artificial intelligence on IoT devices. IEEE Trans. Intell. Transp. Syst. 2021, 22, 4962–4974.

- Ma, Z.; Liu, Y.; Liu, X.; Ma, J.; Ren, K. Lightweight privacy-preserving ensemble classification for face recognition. IEEE Internet Things J. 2019, 6, 5778–5790.

- Palossi, D.; Loquercio, A.; Conti, F.; Flamand, E.; Scaramuzza, D.; Benini, L. A 64-mW DNN-based visual navigation engine for autonomous nano-drones. IEEE Internet Things J. 2019, 6, 8357–8371.

- Zhang, X.; Wang, Y.; Lu, S.; Liu, L.; Xu, L.; Shi, W. OpenEI: An open framework for edge intelligence. In Proceedings of the 2019 IEEE 39th International Conference on Distributed Computing Systems (ICDCS), Dallas, TX, USA, 7–10 July 2019; pp. 1840–1851.

- Xu, C.; Dong, M.; Ota, K.; Li, J.; Yang, W.; Wu, J. Sceh: Smart customized e-health framework for countryside using edge ai and body sensor networks. In Proceedings of the 2019 IEEE global communications conference (GLOBECOM), Waikoloa, HI, USA, 9–13 December 2019; pp. 1–6.

- Gómez-Carmona, O.; Casado-Mansilla, D.; Kraemer, F.A.; López-de Ipiña, D.; García-Zubia, J. Exploring the computational cost of machine learning at the edge for human-centric Internet of Things. Future Gener. Comput. Syst. 2020, 112, 670–683.

- Lu, S.; Sengupta, A. Exploring the connection between binary and spiking neural networks. Front. Neurosci. 2020, 14, 535.

- Guo, R.; Xiang, Y.; Mao, Z.; Yi, Z.; Zhao, X.; Shi, D. Artificial Intelligence Enabled Online Non-intrusive Load Monitoring Embedded in Smart Plugs. In Proceedings of the International Symposium on Signal Processing and Intelligent Recognition Systems, Trivandrum, India, 18–21 December 2019; Springer: Singapore, 2019; pp. 23–36.

- Zhang, Y.; Ma, X.; Zhang, J.; Hossain, M.S.; Muhammad, G.; Amin, S.U. Edge intelligence in the cognitive Internet of Things: Improving sensitivity and interactivity. IEEE Netw. 2019, 33, 58–64.

- Plastiras, G.; Terzi, M.; Kyrkou, C.; Theocharides, T. Edge intelligence: Challenges and opportunities of near-sensor machine learning applications. In Proceedings of the 2018 IEEE 29th International Conference on Application-Specific Systems, Architectures and Processors (ASAP), Milan, Italy, 10–12 July 2018; pp. 1–7.

- Zeng, L.; Li, E.; Zhou, Z.; Chen, X. Boomerang: On-demand cooperative deep neural network inference for edge intelligence on the industrial Internet of Things. IEEE Netw. 2019, 33, 96–103.

- Xie, F.; Xu, A.; Jiang, Y.; Chen, S.; Liao, R.; Wen, H. Edge intelligence based co-training of cnn. In Proceedings of the 2019 14th International Conference on Computer Science & Education (ICCSE), Toronto, ON, Canada, 19–21 August 2019; pp. 830–834.

- Liu, J.; Zhang, J.; Ding, Y.; Xu, X.; Jiang, M.; Shi, Y. Binarizing Weights Wisely for Edge Intelligence: Guide for Partial Binarization of Deconvolution-Based Generators. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2020, 39, 4748–4759.

- Wu, H.; Lyu, F.; Zhou, C.; Chen, J.; Wang, L.; Shen, X. Optimal UAV caching and trajectory in aerial-assisted vehicular networks: A learning-based approach. IEEE J. Sel. Areas Commun. 2020, 38, 2783–2797.

- Chang, Y.; Huang, X.; Shao, Z.; Yang, Y. An efficient distributed deep learning framework for fog-based IoT systems. In Proceedings of the 2019 IEEE Global Communications Conference (GLOBECOM), Waikoloa, HI, USA, 9–13 December 2019; pp. 1–6.

- Montino, P.; Pau, D. Environmental Intelligence for Embedded Real-time Traffic Sound Classification. In Proceedings of the 2019 IEEE 5th International forum on Research and Technology for Society and Industry (RTSI), Florence, Italy, 9–12 September 2019; pp. 45–50.

- Yang, Y.; Mai, X.; Wu, H.; Nie, M.; Wu, H. POWER: A Parallel-Optimization-Based Framework Towards Edge Intelligent Image Recognition and a Case Study. In Proceedings of the International Conference on Algorithms and Architectures for Parallel Processing, Guangzhou, China, 15–17 November 2018; Springer: Berlin/Heidelberg, Germany, 2018; pp. 508–523.

- Munir, M.S.; Abedin, S.F.; Hong, C.S. Artificial intelligence-based service aggregation for mobile-agent in edge computing. In Proceedings of the 2019 20th Asia-Pacific Network Operations and Management Symposium (APNOMS), Matsue, Japan, 18–20 September 2019; pp. 1–6.

- Gonzalez-Guerrero, P.; Tracy II, T.; Guo, X.; Stan, M.R. Towards low-power random forest using asynchronous computing with streams. In Proceedings of the 2019 Tenth International Green and Sustainable Computing Conference (IGSC), Alexandria, VA, USA, 21–24 October 2019; pp. 1–5.

- Sanchez, J.; Soltani, N.; Chamarthi, R.; Sawant, A.; Tabkhi, H. A novel 1d-convolution accelerator for low-power real-time cnn processing on the edge. In Proceedings of the 2018 IEEE High Performance extreme Computing Conference (HPEC), Waltham, MA, USA, 25–27 September 2018; pp. 1–8.

- Fong, S.; Li, T.; Mohammed, S. Data Stream Mining in Fog Computing Environment with Feature Selection Using Ensemble of Swarm Search Algorithms. In Bio-Inspired Algorithms for Data Streaming and Visualization, Big Data Management, and Fog Computing; Springer: Berlin/Heidelberg, Germany, 2021; pp. 43–65.

- Ullrich, K.; Meeds, E.; Welling, M. Soft weight-sharing for neural network compression. arXiv 2017, arXiv:1702.04008.

- Chakraborty, I.; Roy, D.; Garg, I.; Ankit, A.; Roy, K. Constructing energy-efficient mixed-precision neural networks through principal component analysis for edge intelligence. Nat. Mach. Intell. 2020, 2, 43–55.

- Zhang, S.; Li, Y.; Liu, B.; Fu, S.; Liu, X. Enabling Adaptive Intelligence in Cloud-Augmented Multiple Robots Systems. In Proceedings of the 2019 IEEE International Conference on Service-Oriented System Engineering (SOSE), San Francisco, CA, USA, 4–9 April 2019; pp. 338–3385.

- Cao, Y.; Chen, S.; Hou, P.; Brown, D. FAST: A fog computing assisted distributed analytics system to monitor fall for stroke mitigation. In Proceedings of the 2015 IEEE International Conference on Networking, Architecture and Storage (NAS), Boston, MA, USA, 6–7 August 2015; pp. 2–11.

- Li, L.; Ota, K.; Dong, M. Deep learning for smart industry: Efficient manufacture inspection system with fog computing. IEEE Trans. Ind. Inform. 2018, 14, 4665–4673.

- Kamath, G.; Agnihotri, P.; Valero, M.; Sarker, K.; Song, W.Z. Pushing analytics to the edge. In Proceedings of the 2016 IEEE Global Communications Conference (GLOBECOM), Washington, DC, USA, 4–8 December 2016; pp. 1–6.

- Morshed, A.; Jayaraman, P.P.; Sellis, T.; Georgakopoulos, D.; Villari, M.; Ranjan, R. Deep osmosis: Holistic distributed deep learning in osmotic computing. IEEE Cloud Comput. 2017, 4, 22–32.

- Abeshu, A.; Chilamkurti, N. Deep learning: The frontier for distributed attack detection in fog-to-things computing. IEEE Commun. Mag. 2018, 56, 169–175.

- Lyu, L.; Bezdek, J.C.; He, X.; Jin, J. Fog-embedded deep learning for the Internet of Things. IEEE Trans. Ind. Inform. 2019, 15, 4206–4215.

- Jiang, X.; Yu, F.R.; Song, T.; Ma, Z.; Song, Y.; Zhu, D. Blockchain-enabled cross-domain object detection for autonomous driving: A model sharing approach. IEEE Internet Things J. 2020, 7, 3681–3692.

- Zhou, Z.; Liao, H.; Gu, B.; Huq, K.M.S.; Mumtaz, S.; Rodriguez, J. Robust mobile crowd sensing: When deep learning meets edge computing. IEEE Netw. 2018, 32, 54–60.

- Ferdowsi, A.; Challita, U.; Saad, W. Deep learning for reliable mobile edge analytics in intelligent transportation systems: An overview. IEEE Veh. Technol. Mag. 2019, 14, 62–70.

- Kang, Y.; Hauswald, J.; Gao, C.; Rovinski, A.; Mudge, T.; Mars, J.; Tang, L. Neurosurgeon: Collaborative intelligence between the cloud and mobile edge. ACM SIGARCH Comput. Archit. News 2017, 45, 615–629.

- Eshratifar, A.E.; Abrishami, M.S.; Pedram, M. JointDNN: An efficient training and inference engine for intelligent mobile cloud computing services. IEEE Trans. Mob. Comput. 2021, 20, 565–576.

- Hadidi, R.; Cao, J.; Woodward, M.; Ryoo, M.S.; Kim, H. Musical chair: Efficient real-time recognition using collaborative IoT devices. arXiv 2018, arXiv:1802.02138.

- Zhou, J.; Wang, Y.; Ota, K.; Dong, M. AAIoT: Accelerating artificial intelligence in IoT systems. IEEE Wirel. Commun. Lett. 2019, 8, 825–828.

- Fang, Y.; Jin, Z.; Zheng, R. TeamNet: A Collaborative Inference Framework on the Edge. In Proceedings of the 2019 IEEE 39th International Conference on Distributed Computing Systems (ICDCS), Dallas, TX, USA, 7–10 July 2019; pp. 1487–1496.

- TensorFlow Lite - Deploy Machine Learning Models on Mobile and IoT Devices. Available online: https://www.tensorflow.org/lite (accessed on 20 January 2021).

- Dukhan, M.; Wu, Y.; Lu, H. QNNPACK: Open Source Library for Optimized Mobile Deep Learning. 2018. Available online: https://engineering.fb.com/2018/10/29/ml-applications/qnnpack (accessed on 20 January 2021).

- Gupta, C.; Suggala, A.S.; Goyal, A.; Simhadri, H.V.; Paranjape, B.; Kumar, A.; Goyal, S.; Udupa, R.; Varma, M.; Jain, P. ProtoNN: Compressed and accurate kNN for resource-scarce devices. In Proceedings of the International Conference on Machine Learning, Sydney, Australia, 6–11 August 2017; PMLR: Fort Lauderdale, FL, USA, 2017; pp. 1331–1340.

- Dennis, D.K.; Pabbaraju, C.; Simhadri, H.V.; Jain, P. Multiple Instance Learning for Efficient Sequential Data Classification on Resource-Constrained Devices; NeurIPS: Montreal, QC, Canada, 2018; pp. 10976–10987.

- Core ML | Apple Developer Documentation. Available online: https://developer.apple.com/documentation/coreml (accessed on 20 January 2021).

- Bhattacharjee, A.; Barve, Y.; Khare, S.; Bao, S.; Gokhale, A.; Damiano, T. Stratum: A serverless framework for the lifecycle management of machine learning-based data analytics tasks. In Proceedings of the 2019 USENIX Conference on Operational Machine Learning (OpML 19), Santa Clara, CA, USA, 20 May 2019; pp. 59–61.

- Xu, J.; Wang, S.; Zhou, A.; Yang, F. Edgence: A blockchain-enabled edge-computing platform for intelligent IoT-based dApps. China Commun. 2020, 17, 78–87.

- McMahan, B.; Moore, E.; Ramage, D.; Hampson, S.; y Arcas, B.A. Communication-efficient learning of deep networks from decentralized data. In Artificial Intelligence and Statistics; PMLR: Fort Lauderdale, FL, USA, 2017; pp. 1273–1282.

- Shokri, R.; Shmatikov, V. Privacy-preserving deep learning. In Proceedings of the 22nd ACM SIGSAC Conference on Computer and Communications Security, Denver, CO, USA, 12–16 October 2015; pp. 1310–1321.

- Kim, H.; Park, J.; Bennis, M.; Kim, S.L. Blockchained on-device federated learning. IEEE Commun. Lett. 2019, 24, 1279–1283.

- Harlap, A.; Narayanan, D.; Phanishayee, A.; Seshadri, V.; Devanur, N.; Ganger, G.; Gibbons, P. PipeDream: Fast and efficient pipeline parallel DNN training. arXiv 2018, arXiv:1806.03377.

- Blot, M.; Picard, D.; Cord, M.; Thome, N. Gossip training for deep learning. arXiv 2016, arXiv:1611.09726.

- Daily, J.; Vishnu, A.; Siegel, C.; Warfel, T.; Amatya, V. GossipGraD: Scalable deep learning using gossip communication based asynchronous gradient descent. arXiv 2018, arXiv:1803.05880.

- Li, Y.; Park, J.; Alian, M.; Yuan, Y.; Qu, Z.; Pan, P.; Wang, R.; Schwing, A.; Esmaeilzadeh, H.; Kim, N.S. A network-centric hardware/algorithm co-design to accelerate distributed training of deep neural networks. In Proceedings of the 2018 51st Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Fukuoka, Japan, 20–24 October 2018; pp. 175–188.

- Reagen, B.; Whatmough, P.; Adolf, R.; Rama, S.; Lee, H.; Lee, S.K.; Hernández-Lobato, J.M.; Wei, G.Y.; Brooks, D. Minerva: Enabling low-power, highly-accurate deep neural network accelerators. In Proceedings of the 2016 ACM/IEEE 43rd Annual International Symposium on Computer Architecture (ISCA), Seoul, Korea, 18–22 July 2016; pp. 267–278.

- Liu, S.; Lin, Y.; Zhou, Z.; Nan, K.; Liu, H.; Du, J. On-demand deep model compression for mobile devices: A usage-driven model selection framework. In Proceedings of the 16th Annual International Conference on Mobile Systems, Applications, and Services, Munich, Germany, 10–15 June 2018; pp. 389–400.

- Li, H.; Hu, C.; Jiang, J.; Wang, Z.; Wen, Y.; Zhu, W. JALAD: Joint accuracy- and latency-aware deep structure decoupling for edge-cloud execution. In Proceedings of the 2018 IEEE 24th International Conference on Parallel and Distributed Systems (ICPADS), Singapore, 11–13 December 2018; pp. 671–678.

- Jeong, H.J.; Lee, H.J.; Shin, C.H.; Moon, S.M. IONN: Incremental offloading of neural network computations from mobile devices to edge servers. In Proceedings of the ACM Symposium on Cloud Computing, Carlsbad, CA, USA, 11–13 October 2018; pp. 401–411.

- Sun, W.; Liu, J.; Yue, Y. AI-enhanced offloading in edge computing: When machine learning meets industrial IoT. IEEE Netw. 2019, 33, 68–74.

- Brik, B.; Bettayeb, B.; Sahnoun, M.; Duval, F. Towards predicting system disruption in industry 4.0: Machine learning-based approach. Procedia Comput. Sci. 2019, 151, 667–674.

- Kulkarni, S.; Guha, A.; Dhakate, S.; Milind, T. Distributed Computational Architecture for Industrial Motion Control and PHM Implementation. In Proceedings of the 2019 IEEE International Conference on Prognostics and Health Management (ICPHM), San Francisco, CA, USA, 17–20 June 2019; pp. 1–8.

- Zhang, S.; Li, W.; Wu, Y.; Watson, P.; Zomaya, A. Enabling edge intelligence for activity recognition in smart homes. In Proceedings of the 2018 IEEE 15th International Conference on Mobile Ad Hoc and Sensor Systems (MASS), Chengdu, China, 9–12 October 2018; pp. 228–236.

- Shao, B.E.; Lu, C.H.; Huang, S.S. Lightweight image de-raining for IoT-enabled cameras. In Proceedings of the 2019 IEEE International Conference on Consumer Electronics-Taiwan (ICCE-TW), Yilan, Taiwan, 20–22 May 2019; pp. 1–2.

- Muhammad, K.; Khan, S.; Palade, V.; Mehmood, I.; De Albuquerque, V.H.C. Edge intelligence-assisted smoke detection in foggy surveillance environments. IEEE Trans. Ind. Inform. 2019, 16, 1067–1075.

- Yang, K.; Ma, H.; Dou, S. Fog intelligence for network anomaly detection. IEEE Netw. 2020, 34, 78–82.

- Zhang, J.; Letaief, K.B. Mobile edge intelligence and computing for the internet of vehicles. Proc. IEEE 2019, 108, 246–261.

- Liu, L.; Zhang, X.; Zhang, Q.; Weinert, A.; Wang, Y.; Shi, W. AutoVAPS: An IoT-enabled public safety service on vehicles. In Proceedings of the Fourth Workshop on International Science of Smart City Operations and Platforms Engineering, Montreal, QC, Canada, 15 April 2019; pp. 41–47.

- Yang, H.; Wen, J.; Wu, X.; He, L.; Mumtaz, S. An efficient edge artificial intelligence multipedestrian tracking method with rank constraint. IEEE Trans. Ind. Inform. 2019, 15, 4178–4188.

- Lee, C.; Park, S.; Yang, T.; Lee, S.H. Smart parking with fine-grained localization and user status sensing based on edge computing. In Proceedings of the 2019 IEEE 90th Vehicular Technology Conference (VTC2019-Fall), Honolulu, HI, USA, 22–25 September 2019; pp. 1–5.

- Hossain, M.S.; Muhammad, G. An audio-visual emotion recognition system using deep learning fusion for a cognitive wireless framework. IEEE Wirel. Commun. 2019, 26, 62–68.

- Cao, Y.; Hou, P.; Brown, D.; Wang, J.; Chen, S. Distributed analytics and edge intelligence: Pervasive health monitoring at the era of fog computing. In Proceedings of the 2015 Workshop on Mobile Big Data, Hangzhou, China, 22–25 June 2015; pp. 43–48.

- Al-Rakhami, M.; Gumaei, A.; Alsahli, M.; Hassan, M.M.; Alamri, A.; Guerrieri, A.; Fortino, G. A lightweight and cost effective edge intelligence architecture based on containerization technology. World Wide Web 2020, 23, 1341–1360.

- Rincon, J.A.; Julian, V.; Carrascosa, C. Towards the Edge Intelligence: Robot Assistant for the Detection and Classification of Human Emotions. In Proceedings of the International Conference on Practical Applications of Agents and Multi-Agent Systems, L’Aquila, Italy, 13–15 July 2020; Springer: Berlin/Heidelberg, Germany, 2020; pp. 31–41.

- Maitra, A.; Kuntagod, N. A novel mobile application to assist maternal health workers in rural India. In Proceedings of the 2013 5th International Workshop on Software Engineering in Health Care (SEHC), San Francisco, CA, USA, 20–21 May 2013; pp. 75–78.

- Gupta, A.; Mukherjee, N. A cloudlet platform with virtual sensors for smart edge computing. IEEE Internet Things J. 2019, 6, 8455–8462.

- Lin, Y.H. Novel smart home system architecture facilitated with distributed and embedded flexible edge analytics in demand-side management. Int. Trans. Electr. Energy Syst. 2019, 29, e12014.