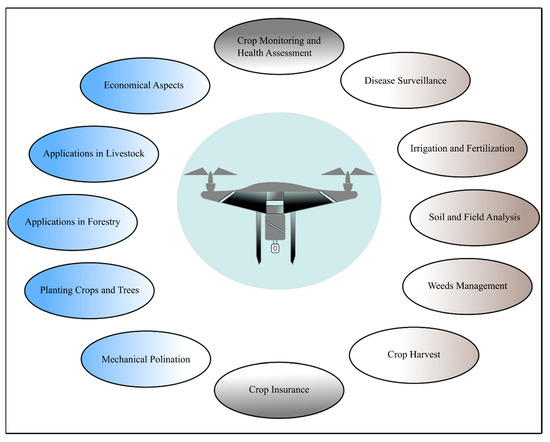

Significant applications of RPAs in livestock, forestry, crop monitoring, disease surveillance, irrigation, soil analysis, fertilization, crop harvest, weed management, mechanical pollination, crop insurance and tree plantation are discussed in light of the currently available literature in this domain.

- Drone

- Precision Agriculture

- Remote sensing

- RPA


1. Introduction

The world is going through rapid technological shifts and innovations, and the agriculture sector has been benefiting from such advancements for many years. Innovation is considered an indispensable way of accomplishing more while using fewer resources and less effort [1]. It has been argued that enriching raw materials through innovation ensures production efficiency and contributes to economic growth, food safety and food security [2].

In recent years, the use of technology in agriculture has gained momentum, and GIS (Geographic Information System), satellites, aerial vehicles, autonomous robots, GPS (Global Positioning System) and various other communication technologies have made their way into farming. With the innovation and implementation of such technologies, new terms such as “precision agriculture”, “precision farming”, “precision approach”, “digital farming” and “agriculture 4.0” have appeared on the horizon. Precision agriculture is defined as an information- and technology-based agricultural production system used to analyze, determine and manage field factors such as spatial and temporal variability in order to obtain maximum sustainability, profit and environmental protection [3].

Precision agriculture, which paves the way for efficient planning of pest control, harvest, irrigation, disease control and optimum fertilization, is an emerging technology concerned with developing tools for obtaining and analysing data, which in turn lead to the implementation of adequate solutions [3,4]. Remote sensing (a technique for collecting information about objects without any physical contact with them [5]) has proven to be an integral part of precision farming. It was initially linked to photogrammetry and to the use of balloons for aerial observation; the first aerial photographs date back to 1858 and were captured aboard a hot-air balloon [6]. The platforms used for remote sensing can be classified as aerial platforms (e.g. planes, helicopters, drones, balloons) and space platforms (e.g. satellites), which carry sensors that measure the electromagnetic radiation reflected or emitted by the object under study. These sensors can in turn be classified, according to the radiation they register, as passive (cameras, scanners, etc.) or active (radar and LiDAR). The former only collect the electromagnetic energy reflected or emitted by the surface, while the latter emit radiation towards the observed surface and collect the energy reflected back. A definition of remote sensing suited to the scope of this article is thus: a set of techniques that analyse data obtained by sensors on aerial or space platforms, covering the acquisition of radiation emitted or reflected from the Earth's surface, its subsequent reception, correction and distribution, and its final treatment by experts to extract useful information on which the end user can base decisions.

Satellites and drones are the most commonly used tools in precision farming. A new era of remote sensing began with the launch of the Landsat-1 satellite in 1972 [7]. Given recent technological advancements, however, the use of drones has become widespread and is gaining popularity owing to the benefits they offer, notably integrated sensors and imaging systems [2,8]. A Remotely Piloted Aircraft (RPA), commonly known as a drone, is a remotely controlled or autonomously flown unmanned aircraft based on complex dynamic automation systems [3]. The incorporation of drones into precision farming is a growing agricultural trend with the potential to open new agricultural and economic paths. Current research is oriented towards novel tools and sensors capable of remotely monitoring soil properties and crops in quasi-real time [3].

To ensure global food security for a growing world population, there is an immense need to close the gap between actual and potential crop yields. The most prominent factors contributing to this gap are the interactions among crop genotype, environment and management: G × E × M [9]. For instance, differences in soil affect fertilizer uptake even when the crop response to fertilizer application is known, thereby contributing to the yield gap. Similarly, in practice farmers often apply more fertilizer than required, even in areas of high potential yield; the excess accumulates in the soil and deteriorates water quality [10,11]. International regulations on the use of fertilizers in agriculture protect both human health and the environment, so it is very important not to exceed these limits by over-fertilizing the land. Because nitrogen (N) is the most limiting crop nutrient, N-based fertilizers are applied frequently to improve crop yield [12]. However, this also increases N losses to the environment through leaching or gaseous emissions. For example, leaching of fertilizer nitrate (NO₃⁻) pollutes surface and ground water [13], and this nitrate ultimately ends up in our diet. In the human body, NO₃⁻ is converted to nitrite (NO₂⁻) and eventually, in an acidic environment (specifically the stomach), to nitrosamine compounds and NO. These compounds have been associated with methemoglobinemia, cancers, diabetes and thyroid disorders [14]. Precision agriculture offers a way to address this problem at its source: it provides site-specific information and optimized solutions, and drones are anticipated to play a key role in it, minimizing yield gaps while widening the room for scientific exploration and development [15].

RPAs also help in this domain by providing estimates of total N concentration in water, so that only the required amount of N fertilizer is applied, avoiding potentially harmful impacts and economic losses for farmers. One practical example, using a drone equipped with a hyperspectral camera to assess N concentration in water, has recently been reported [13]. However, precision technologies have been adopted at lower rates than expected, owing to various factors including economic ones [15,16].

2. Remotely Piloted Aircraft (RPA)

Remotely Piloted Aircraft (RPA) or Unmanned Aerial Vehicle (UAV) refers to auto-piloted multipurpose aircraft. The term UAV, however, is considered obsolete because of the way aviation organizations use it and the operational complexity these aircraft represent [17], whereas the term RPA is accepted in Europe [18]. Other terms frequently used for drones include Dynamic Remotely Operated Navigation Equipment (DRONE), Remotely Piloted Vehicles (RPV), Remotely Piloted Aircraft Systems (RPAS), Remotely Operated Aircraft (ROA) and Unmanned Aircraft Systems (UAS) [17].

In the 1930s, RPAs or drones were known as “Queen Bees” [19]; they were initially used by the military and were later adopted for civil use [20]. One of the earliest recorded uses of unmanned aircraft dates back to July 1849, when the Austrians launched around two hundred unmanned, bomb-carrying hot-air balloons against the city of Venice [21]. In an agricultural context, the use of RPAs for monitoring forest fires in Montana was tested in 1986, followed in 1994 by the documentation of the enhanced image resolution captured with an RPA named “Predator” [20]. The first RPA model for pest control and crop monitoring applications, the “Yamaha RMAX”, was developed by Yamaha [22]. Given the current uses of drones, from hobbyists to industry, their market was anticipated to reach up to $200 billion by 2020 [23], although the COVID-19 pandemic is likely to affect these estimates.

Currently, RPAs are gaining popularity as an integral part of precision agriculture and of ensuring agricultural sustainability [24]. The agriculture sector demands RPAs with diverse features to improve crop yields and to overcome several challenges faced by farmers [23]. In forestry and agriculture, RPAs are increasingly used in remote sensing and imaging applications, with simultaneous analysis of the data to map spatial variability in the field, thereby paving the way for improved farm productivity [25]. For example, a quadcopter has been reported to conduct crop scouting, map field tile drainage and monitor fertilizer trials [26]. Furthermore, the use of RPAs to monitor biophysical variables (e.g. to determine chlorophyll content and biomass) is of particular interest [25].

The many advantages that RPAs offer explain their increasing demand in the agriculture sector; accessibility, flexibility and efficiency are their most promising features. For example, compared with satellites and traditional aerial photography, RPAs are the cheapest means of land monitoring, providing high-resolution images (up to 0.2 m) with complete spatial coverage and no cloud interference [4]. Similarly, 3D maps for soil and field analysis help farmers manage irrigation and nitrogen levels for better yields [23]. RPAs thus offer a prominent advantage over other means of aerial imagery, i.e. satellites and airplanes. Images taken by an RPA have been described as 44 times better than satellite images, with RPA cameras offering over 40,000 times better resolution. Satellites and planes are also equally susceptible to bad weather and clouds. Furthermore, RPAs offer freedom in flight scheduling and the flexibility to re-fly as needed [27]. Additionally, low cost, agility, maneuverability, real-time data acquisition for better yields, time savings through greatly reduced inspection times, geographic information system (GIS) mapping for input cost management, high-resolution imagery that overcomes the mixed-pixel problem, yield increase and resource management, and the use of infrared, normalized difference vegetation index (NDVI) and multispectral sensors for monitoring crop health are a few of the salient features that make RPAs attractive and sustainable in agriculture [3,19,23].

2.1. Classification of RPAs

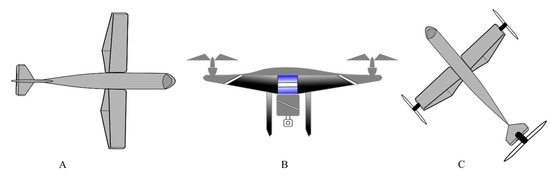

Various RPAs, including military ones, are on the market, but those used in agriculture are largely categorized as rotary-wing or fixed-wing RPAs (Figure 1). Both kinds have their own benefits and limitations. For example, structurally simple fixed-wing RPAs cannot hover and require a runway for take-off and landing, but offer high-speed flights of longer duration. Structurally complex rotary-wing RPAs, by contrast, fly at low speeds for shorter durations but are capable of hovering, vertical take-off and landing, and nimble maneuvering [22].

Figure 1. Illustration of basic RPA types (A) Fixed wing RPA (B) Rotary wing RPA (C) Combinational concepts.

By type of control, Pino [21] classifies RPAs as follows:

- Autonomous: An autonomous RPA doesn't need a human pilot to control it from the ground. It is guided by its own integrated sensors and systems.

- Monitored: In this case, a human technician is needed. The job of this person is to provide information and control the feedback of the RPA. The drone directs its own flight plan and the technician can decide what action to take. This system is common in precision agriculture and photogrammetry work.

- Supervised: It is piloted by an operator, although it can perform some tasks autonomously.

- Preprogrammed: It follows a previously designed flight plan and there is no way to modify it to accommodate possible changes.

- Remotely controlled (R / C): It is piloted directly by a technician through a console.

Vroegindeweij et al. [19], however, categorize RPAs into the following types:

- Fixed-wing and flying-wing RPAs (limited maneuverability), which use a jet engine for thrust and wings for lift (Figure 1A).

- Vertical take-off and landing (VTOL) RPAs (highly maneuverable), which use a rotor system for thrust and lift.

- Micro RPAs, which, as their name indicates, are very small (in the range of centimeters). They may use either rotors or flapping wings for thrust and lift.

- Airships and parafoils (lower maneuverability), which use balloons or parachutes for flight.

- Novel concepts and combinations based on the previous principles to obtain the desired benefits (Figure 1C).

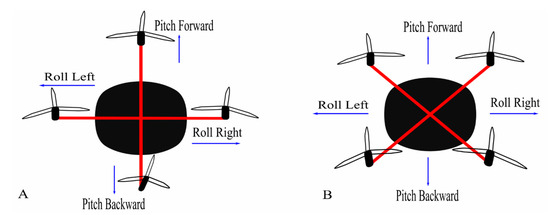

There is also a notable difference between the landing gear of fixed-wing and rotary-wing RPAs: the former may use wheels or magnetic levitation, while the latter have simple supporting structures. Rotary-wing RPAs can be further classified as helicopters, quadcopters, hexacopters or octocopters, based on the number of rotors they have. The rotors generate the lift of these copters; in a quadcopter, two of the four rotors spin clockwise and the other two anticlockwise, so that their reaction torques cancel each other. Two configurations, plus (+) and cross (X), are used in quadcopters, of which the latter is more stable and more common [22] (Figure 2).

Figure 2. Configuration models of quadcopter (A) Plus configuration (B) Cross configuration.
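Because the rotors spin in opposite pairs, a flight controller can steer a quadcopter purely by varying individual motor speeds. The Python sketch below shows a simple "mixer" for the cross (X) configuration. The motor names, body-frame axes and sign conventions are hypothetical choices for illustration (real autopilot firmware defines its own motor ordering and conventions), and commands are assumed to be normalized so that 0 is motor off and 1 is full power.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Motor:
    name: str
    x: float   # +1 = front arm, -1 = rear arm (hypothetical convention)
    y: float   # +1 = right arm, -1 = left arm
    spin: int  # +1 = clockwise, -1 = anticlockwise, viewed from above


# Hypothetical X layout: diagonal motors share a spin direction, so the
# reaction torques of the clockwise pair and the anticlockwise pair cancel.
X_QUAD = (
    Motor("front_right", x=+1, y=+1, spin=-1),
    Motor("rear_left", x=-1, y=-1, spin=-1),
    Motor("front_left", x=+1, y=-1, spin=+1),
    Motor("rear_right", x=-1, y=+1, spin=+1),
)


def mix(throttle, roll, pitch, yaw, motors=X_QUAD):
    """Map normalized commands to per-motor outputs clipped to [0, 1].

    roll > 0 rolls right (left motors speed up), pitch > 0 raises the nose
    (front motors speed up), and yaw > 0 turns the body clockwise: the
    anticlockwise-spinning motors speed up, because their reaction torque
    on the airframe is clockwise.
    """
    outputs = {}
    for m in motors:
        value = throttle - roll * m.y + pitch * m.x - yaw * m.spin
        outputs[m.name] = min(1.0, max(0.0, value))
    return outputs
```

For example, `mix(0.5, 0.0, 0.0, 0.1)` raises the two anticlockwise motors to 0.6 and lowers the two clockwise motors to 0.4, so total thrust is unchanged while the net reaction torque yaws the drone. Clipping to [0, 1] is the simplest saturation strategy; production firmware typically rescales commands to preserve attitude control when motors saturate.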

2.2. Basic Architecture of an Agricultural RPA

The basic components of an RPA intended for agricultural use are usually the following [28]:

- Frame

- Brush-less motors

- Electronic Speed Control (ESC) modules

- A control board

- An Inertial Navigation System (INS) and Global Navigation Satellite System (GNSS)

- Payload sensors (e.g. Light Detection and Ranging (LiDAR) systems, thermal cameras, multispectral cameras, RGB cameras) and altimeters (e.g. ultrasonic sensors, laser altimeters, barometers)

- Transmitter and receiver modules

All of these components are necessary not only for steady flight but also for field monitoring and collecting various field data. Parameters such as the normalized difference vegetation index (NDVI), leaf water content, ground cover, leaf area index (LAI) and chlorophyll content are quantified using multispectral cameras mounted on drones [28]. For example, a drone carrying a thermal camera (Thermovision A40M) and a multispectral sensor (Tetracam MCA-6) has been reported as a practical system for vegetation monitoring [29].
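NDVI, the first parameter listed above, is conventionally computed per pixel from near-infrared (NIR) and red reflectance as (NIR − Red) / (NIR + Red). A minimal Python sketch, assuming the two bands have already been radiometrically calibrated to reflectance and co-registered into equally sized grids (the function name is hypothetical):

```python
def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red) for two equally sized bands.

    nir, red: lists of rows of reflectance values. Pixels where
    NIR + Red == 0 have no defined index and are returned as None.
    """
    if len(nir) != len(red) or any(len(a) != len(b) for a, b in zip(nir, red)):
        raise ValueError("bands must have the same shape")
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            row.append(None if total == 0 else (n - r) / total)
        result.append(row)
    return result
```

Values fall between −1 and 1; healthy, dense vegetation reflects strongly in the NIR and absorbs red light, so it yields high positive values. For example, a pixel with NIR = 0.5 and red = 0.1 gives 0.4 / 0.6 ≈ 0.67.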

Similarly, components such as LiDAR systems and RGB cameras are used to obtain a Digital Surface Model (DSM) or a Digital Terrain Model (DTM), i.e. a digitization of the terrain surface of the monitored area. Examples of the use of these tools have been reported previously [30,31].

Software programs for data processing and image analysis are not usually considered physical components of a drone, but they play a crucial role in management, decision making and planning [32]. Various software packages, both open-source and commercial, are available, developed according to their vendors' policies. Key features that such programs should have include: data collection (assembling images and videos from the drone and storing them in a database), analysis and reporting (producing valuable information, such as yield predictions, from the data), map generation (creating 3D field models and high-resolution maps), and flight planning and automation (real-time flight planning, scheduling and route optimization within the program) [32].

2.3. Choosing an Appropriate Drone

A wide range of drones with various features is available on the market. The simplest drones come with a digital camera (e.g. Canon or GoPro) along with different filters. However, the choice of an appropriate drone depends on many factors. For example, an orchard grower is more interested in crop status than in weed pressure, whereas for a cash-crop farmer the opposite is true [27].

To be used for precision agriculture (PA), a drone should be capable of flying along defined waypoints, controlling its flight altitude, landing automatically according to its battery status, sensing and avoiding obstacles during flight, and acquiring stabilized images. The Parrot Bluegrass has been reported to fulfil these requirements and is expected to be employed in PA practices [28]. A few commercially available drones for agricultural use are summarized in Table 1.
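As a rough illustration of the requirement that a PA drone land automatically based on its battery status, the Python sketch below chooses between continuing the mission, returning home and landing immediately. It is a simplified, hypothetical model (constant current draw, a straight-line return at constant ground speed, a fixed reserve in mAh), not the failsafe logic of any particular autopilot or of the Parrot Bluegrass.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue mission"
    RETURN_HOME = "return to home"
    LAND_NOW = "land immediately"


def battery_action(remaining_mah, current_draw_a, distance_home_m,
                   ground_speed_ms, reserve_mah):
    """Choose a failsafe action from the battery state (hypothetical model).

    Assumes a constant current draw on a straight-line flight home at a
    constant ground speed, and keeps `reserve_mah` for descent and landing.
    """
    if ground_speed_ms <= 0:
        raise ValueError("ground speed must be positive")
    # Flight time home in hours, then the charge it consumes in mAh (A x h x 1000).
    hours_home = distance_home_m / ground_speed_ms / 3600.0
    needed_mah = current_draw_a * hours_home * 1000.0
    if remaining_mah <= needed_mah:
        return Action.LAND_NOW      # cannot reach home: land where it is
    if remaining_mah - needed_mah <= reserve_mah:
        return Action.RETURN_HOME   # turn back before the reserve is used
    return Action.CONTINUE
```

For example, with a 20 A draw, 1800 m from home at 10 m/s, the return takes 180 s and about 1000 mAh, so `battery_action(1800, 20, 1800, 10, 1000)` returns `Action.RETURN_HOME`. Real systems also account for wind, voltage sag and descent time, which is why the reserve is kept separate from the estimated return cost.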

Table 1. Characteristics of some drones used in agriculture [23].

| Drone | Parameter | Value |
|---|---|---|
| Honeycomb AgDrone | Drone type | Fixed wing |
| | Material | Kevlar Exoskeleton |
| | Wingspan and Battery | 1.2 m; 8 Ah LiPo |
| | Coverage | 34,722,000 m² |
| | Trigger Method | Automatic Dual Camera Electrical Signal |
| | Flight Specifications | Cruise Speed: 12.7 m s⁻¹; Max Speed: 22.7 m s⁻¹ |
| DJI Matrice 100 | Drone Type | Quadcopter |
| | Battery | 5.7 Ah LiPo 6S |
| | Video Output | USB, mini-HDMI |
| | Flight Specifications | Max Ascent Speed: 5 m s⁻¹; Max Descent Speed: 4 m s⁻¹ |
| | Operating Temperature | −10 ℃ to 40 ℃ |
| | Others | Intelligent Flight Battery, Advanced Flight Navigation System |
| DJI T600 Inspire | Material | Carbon Fiber |
| | Interface Type | Detachable |
| | Battery | 4.5 Ah LiPo 6S |
| | Camera Features | Image: 4000 × 3000; ISO Range: 100–3200 (Video); Photography Modes: Single, Burst, Auto Exposure, Time-Lapse; Video Modes: UHD, FHD, HD; File Formats: JPEG, DNG, MP4, MOV; Memory Card: 64 GB (Max) |
| | Flight Operations | Max Ascent Speed: 5 m s⁻¹; Max Descent Speed: 4 m s⁻¹ |
| | Flight Time | 18 min / 40 min with additional battery |
| | Others | Easy Navigation |
| DJI Agras MG-1 | Drone Type | Octocopter |
| | Material | High Performance Engineered Plastics |
| | Coverage | 4000–6000 m² in 10 min |
| | Liquid Tank | 10 kg (Payload), 10 L (Volume) |
| | Nozzles | 4 |
| | Battery | MG-12000 |
| | Flight Parameters | Max Take-Off Weight: 42.5 kg; Max Operating Speed: 8 m s⁻¹; Max Flying Speed: 22 m s⁻¹; Flight Modes: Smart, Manual Plus and Manual |
| | Operating Temperature | 0 to 40 ℃ |
| | Others | Y-type Folding Structure |
| senseFly eBee SQ | Drone Type | Detachable Wings with Low-Noise, Brushless, Electric Motor |
| | Flight Operations | Max Flight Time: 55 min; Linear Landing within ~5 m; Flight Planning Software: eMotion Ag |
| | Sensors | 4 Spectral Sensors, GPS, IMU, Magnetometer, SD Card |
| | Camera | 4 × 1.2 MP Spectral Camera (1 fps); 16 MP RGB Camera |
| | Others | Automatic 3D Flight Planning, Problem Identification During Flight |
| PrecisionHawk Lancaster 5 | CPU | 720 MHz Dual Core Linux |
| | Interface | Analog, Digital, Wi-Fi, Ethernet, USB |
| | Wing | Fixed Wing with Single Electric Motor |
| | Battery | 7 Ah |
| | Sensors | Humidity, Temperature, Pressure, Incident Light Plug and Play Sensors |
| | Flight Parameters | Altitude: 2500 m; Max Speed: 21.9 m s⁻¹; Survey Span: 50–300 m |
| | Operating Temperature | 40 ℃ |
| | Others | Smart Flight Controls, Open Source Technology |
| SOLO AGCO Edition | Flight Controller | Pixhawk |
| | Material | Self-Tightening Glass-Fortified Nylon Props |
| | CPU | 1 GHz On-board Computer |
| | Video | Full HD Streaming to Mobile Devices |
| | Flight Parameters | Max Speed: 24.5 m s⁻¹; Flight Time: 25 min; Auto Take-Off and Landing |
| | Camera | 2 Cameras: GoPro Hero4 Silver (RGB) and NIR GoPro |
| | Others | Field Health Mapping (NDVI), Management Zone Mapping |

Moreover, one need not rely solely on commercially built drone packages (RPAs with cameras); RPAs can be customized with the cameras required. For example, at different crop stages a farmer may be interested in different crop data (such as irrigation needs or health status), for which a thermal, multispectral or hyperspectral camera might be required, and the desired camera can then be mounted on the RPA. The most commonly used RPA cameras, as reported previously [6], and their fundamental characteristics are listed in Table 2.

Table 2. Representative cameras for RPAs.

Visible band cameras

| Name | Pixel size (µm) | Sensor type and resolution (MPx) | Sensor size (mm × mm) | Weight (kg) | Frame rate (fps) | Shutter speed (s⁻¹) |
|---|---|---|---|---|---|---|
| iXA 180 | 5.2 | CCD 80 | 53.7 × 40.4 | 1.70 | 0.7 | 4000 (fp), 1600 (ls) |
| IQ180 | 5.2 | CCD 80 | 53.7 × 40.4 | 1.50 | - | 1000 (ls) |
| H4D-60 | 6.0 | CCD 60 | 53.7 × 40.2 | 1.80 | 0.7 | 800 (ls) |
| NEX-7 | 3.9 | CMOS 24.3 | 23.5 × 15.6 | 0.35 | 2.3 | 4000 (fp) |
| GXR A16 | 4.8 | CMOS 16.2 | 23.6 × 15.7 | 0.35 | 3 | 3200 (fp) |

fp: focal-plane shutter; ls: leaf shutter.

Multispectral cameras

| Name | Pixel size (µm) | Sensor type and resolution (MPx) | Sensor size (mm × mm) | Weight (kg) | Spectral range (nm) |
|---|---|---|---|---|---|
| MiniMCA-6 | 5.2 × 5.2 | CMOS 1.3 | 6.66 × 5.32 | 0.7 | 450–1050 |
| Condor-5 UAV-285 | 7.5 × 8.1 | CCD 1.4 | 10.2 × 8.3 | 0.8 | 400–1000 |

Hyperspectral cameras

| Name | Pixel size (µm) | Sensor type | Sensor size (mm × mm) | Weight (kg) | Spectral range (nm) | Spectral bands and resolution |
|---|---|---|---|---|---|---|
| Hyperspectral Camera (Rikola Ltd.) | 5.5 | CMOS | 5.6 × 5.6 | 0.6 | 500–900 | 40 |
| Micro-Hyperspec X-series NIR | 30 | InGaAs | 9.6 × 9.6 | 1.025 | 900–1700 | 62 |

Thermal cameras

| Name | Pixel size (µm) | Resolution (pixels) | Sensor size (mm × mm) | Weight (kg) | Spectral range (µm) | Thermal sensitivity (mK) |
|---|---|---|---|---|---|---|
| FLIR TAU 2 640 | 17 | 640 × 512 | 10.8 × 8.7 | 0.07 | 7.5–13.5 | ≤50 |
| Miricle 307K-25 | 25 | 640 × 480 | 16 × 12.8 | 0.105 | 8–12 | ≤50 |

Laser scanners

| Name | Scanning pattern | Angular res. (deg) | FOV (deg) | Weight (kg) | Range (m) | Laser class and λ (nm) |
|---|---|---|---|---|---|---|
| IBEO LUX | 4 scanning parallel lines | (H) 0.125 | (H) 110 | 1 | 200 | Class A |
| HDL-32E | 32 laser/detector pairs | (H) – | (H) 360 | 2 | 100 | Class A |
| VQ-820-GU | 1 scanning line | (H) 0.01 | (H) 60 | - | ≥1000 | Class 3B |

2.4. Flight Planning and Data Collection

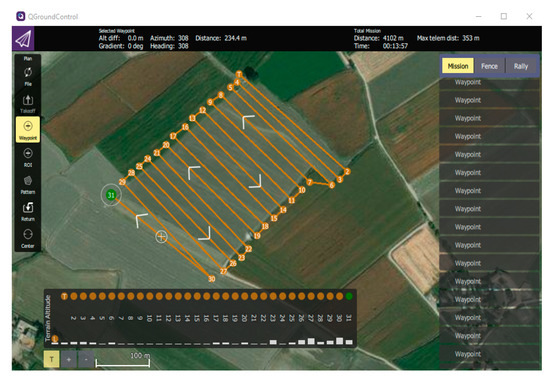

Flight planning is an important preliminary step for acquiring quality data, and there are various ways to accomplish it. For example, software can be used to design a flight plan and send it to the drone, a step referred to as downlinking [17]. Applications on smartphones and tablets can also be used for this purpose, allowing missions to be planned even minutes before the flight. These applications and software act as the ground control station (GCS) for the drone. Generally, compatible software or applications for planning and executing missions are supplied with the drone by its manufacturer. For example, one study used eMotion 2, Mission Planner and the DJI Phantom application for the eBee, X8 and Phantom 2 drones, respectively [17]. There are also plenty of free and open-source GCS programs available online, and users can choose according to their needs. Figure 3 shows the interface of QGroundControl, an open-source, MAVLink-enabled GCS, installed on Windows.

Figure 3. Interface of QGroundControl (open-source software); the current window shows waypoints being set for flight planning.
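Ground control stations such as QGroundControl let the user place waypoints on a map, and a common pattern for mapping a field is a back-and-forth "lawnmower" (boustrophedon) survey. The Python sketch below generates such waypoints for a rectangular field. The function name, the local metric frame (east/north offsets in metres from the field's south-west corner) and the north-south orientation of the flight lines are assumptions for illustration; converting the offsets to latitude/longitude and uploading them to the drone (for example over MAVLink) is outside the scope of the sketch.

```python
import math


def lawnmower_waypoints(width_m, height_m, max_spacing_m, altitude_m):
    """Back-and-forth survey waypoints over a width x height rectangle.

    Returns (east, north, altitude) tuples. Flight lines run south-north and
    alternate direction; the number of lines is rounded up so the actual
    spacing never exceeds `max_spacing_m`.
    """
    if max_spacing_m <= 0 or width_m < 0 or height_m <= 0:
        raise ValueError("invalid field size or line spacing")
    n_lines = math.ceil(width_m / max_spacing_m) + 1
    spacing = width_m / (n_lines - 1) if n_lines > 1 else 0.0
    waypoints = []
    for i in range(n_lines):
        x = i * spacing
        south = (x, 0.0, altitude_m)
        north = (x, height_m, altitude_m)
        # Even passes fly south -> north, odd passes north -> south.
        waypoints.extend([south, north] if i % 2 == 0 else [north, south])
    return waypoints
```

For a 100 m × 200 m field with a maximum line spacing of 30 m, this produces five lines spaced 25 m apart (ten waypoints). Spreading the lines evenly, rather than stopping at the last multiple of the spacing, keeps the actual lateral overlap at or above the planned value right up to the field edge.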

Other important factors in flight planning include estimating the flight area and its surroundings, identifying potential hazards, preparing and configuring the equipment, and checking the weather conditions. Weather, in particular wind speed, can strongly influence the drone's flight. Similarly, poles, trees, windmills, nearby roads, vehicles and populated areas must be considered before flying. It is also important to comply with the local and national laws regulating drone flights.

To ensure data accuracy and quality, images are generally captured with overlap, although some software does not support lateral and forward overlap settings. In this context, one study endorsed greater overlap (50% lateral and 80% forward) for orthomosaic preparation [17]. However, greater overlap increases image-capture time and yields larger point clouds, and therefore longer processing times. Siebert and Teizer [33] recommended at least 70% longitudinal and 40% transverse overlap. In any case, the required overlap should be evaluated according to the drone used and its application. Once completed, the flight plan should be saved; the mission can then be executed by connecting a tablet or phone to the drone's remote control.
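Overlap percentages translate directly into how far apart exposures and flight lines can be. The Python sketch below uses the standard pinhole-camera relation for ground sampling distance (GSD = pixel size × flight height / focal length), assuming flat terrain and a nadir-pointing camera; the camera parameters in the example are hypothetical, and the image's long side is assumed to lie across the flight direction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Camera:
    pixel_size_um: float    # physical pixel pitch on the sensor
    focal_length_mm: float
    width_px: int           # image width, assumed across the flight line
    height_px: int          # image height, assumed along the flight line


def ground_sampling_distance_m(camera, altitude_m):
    """Ground size of one pixel (m) for a nadir image over flat terrain."""
    return (camera.pixel_size_um * 1e-6) * altitude_m / (camera.focal_length_mm * 1e-3)


def survey_spacing(camera, altitude_m, forward_overlap, lateral_overlap):
    """Return (GSD, distance between exposures, distance between lines) in m."""
    for overlap in (forward_overlap, lateral_overlap):
        if not 0.0 <= overlap < 1.0:
            raise ValueError("overlap must be a fraction in [0, 1)")
    gsd = ground_sampling_distance_m(camera, altitude_m)
    along_track_m = gsd * camera.height_px    # image footprint along the line
    across_track_m = gsd * camera.width_px    # image footprint across the line
    photo_spacing = along_track_m * (1.0 - forward_overlap)
    line_spacing = across_track_m * (1.0 - lateral_overlap)
    return gsd, photo_spacing, line_spacing
```

With a hypothetical 4.0 µm pixel, 8 mm lens and 4000 × 3000 pixel image at 80 m, `survey_spacing(Camera(4.0, 8.0, 4000, 3000), 80.0, 0.80, 0.50)` gives a GSD of about 4 cm, an image footprint of 160 m × 120 m, roughly 24 m between exposures and 80 m between flight lines. Raising the forward overlap shortens the exposure interval and increases the number of images, which is the processing-time trade-off described above.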

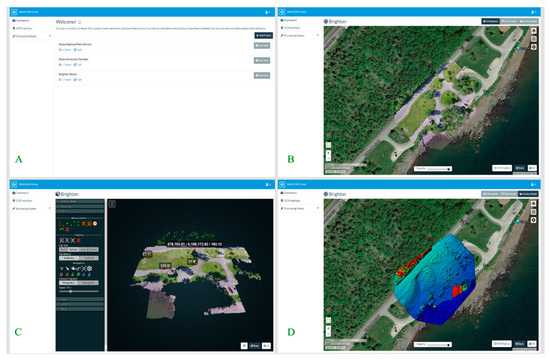

2.5. Image Processing and Software

Various open-source and commercial software packages are available for pre-processing images and automatically assembling orthomosaics; they even allow users without prior expertise to extract meaningful information, such as Digital Elevation Models (DEM), orthomosaics and DSMs, in less time than conventional photogrammetry [17]. For example, OpenDroneMap (ODM) is open-source image processing software that allows users to create and visualize orthomosaics, 3D models, point clouds, DEMs and other products (Figure 4).

Figure 4. Screenshots of the interface of the open-source image processing software WebODM (demo version). (A) User interface; (B) orthophoto of an area of Brighton Beach; (C) 3D model of the terrain; (D) DSM of an area of Brighton Beach [34].

According to one study, flight planning and image acquisition, ground control point (GCP) collection and photogrammetric processing take approximately 25%, 15% and 60% of the total time, respectively. This indicates a pressing need for better, faster and more automated software, especially for processing [6].

Drone images are processed with a semi-automatic workflow that performs camera calibration, image alignment and point-cloud generation, ultimately producing the DEM and the DSM. These models are then used for 3D modelling, for acquiring metric information (e.g. heights, areas, volumes) and for producing orthomosaics [17].
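Heights and volumes of the kind mentioned above are commonly derived by differencing two elevation models: a DSM records the top surface (canopy, buildings), while a bare-earth terrain model records the ground, so their difference gives height above ground. A minimal Python sketch, assuming both rasters are already co-registered grids of elevations in metres with square cells of known size; the function names are hypothetical, and this is not the specific workflow of [17].

```python
def canopy_height_model(dsm, dtm):
    """Per-cell height above ground: surface model minus bare-earth model.

    Negative differences (typically noise where the surface is bare ground)
    are clamped to zero.
    """
    if len(dsm) != len(dtm) or any(len(a) != len(b) for a, b in zip(dsm, dtm)):
        raise ValueError("DSM and DTM must be co-registered grids of equal shape")
    return [[max(s - t, 0.0) for s, t in zip(s_row, t_row)]
            for s_row, t_row in zip(dsm, dtm)]


def volume_above_ground(chm, cell_size_m):
    """Approximate volume (m^3) as the sum of cell heights times cell area."""
    return sum(sum(row) for row in chm) * cell_size_m ** 2
```

For example, a 2 × 2 grid with heights of 1.5, 0.2, 2.0 and 0 m at a 0.5 m cell size gives a volume of 3.7 m × 0.25 m² ≈ 0.93 m³. Clamping negative values is a simple design choice; an alternative is to mask them as no-data.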

Supervised classification techniques can be applied to the obtained data to analyze images and extract information, e.g. land-use classification through object-oriented image analysis or through examination of the spectral bands of the images [35,36]. Studies of map generation using vegetation indices have also been reported [37,38].
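As a concrete, deliberately simple instance of supervised classification, the Python sketch below implements a minimum-distance-to-means classifier: the analyst labels sample pixels for each class, the mean spectrum of each class is computed, and every pixel is assigned to the class with the nearest mean (Euclidean distance). This is not the object-oriented method of [35,36]; the class names, band order and any reflectance values used with it are illustrative assumptions.

```python
import math


def train_class_means(samples):
    """samples: {class_name: [spectral vectors]} -> {class_name: mean vector}."""
    means = {}
    for label, vectors in samples.items():
        if not vectors:
            raise ValueError(f"class {label!r} has no training samples")
        n_bands = len(vectors[0])
        means[label] = [sum(v[b] for v in vectors) / len(vectors)
                        for b in range(n_bands)]
    return means


def classify_pixel(pixel, means):
    """Assign the class whose mean spectrum is nearest to the pixel."""
    return min(means, key=lambda label: math.dist(pixel, means[label]))
```

For example, with illustrative (green, red, NIR) training pixels for "vegetation" (high NIR), "bare_soil" (higher red) and "water" (low in all bands), a pixel such as `[0.09, 0.05, 0.47]` is assigned to "vegetation". Applying `classify_pixel` to every pixel of a co-registered multispectral orthomosaic yields a class map; more robust classifiers (maximum likelihood, random forests) follow the same train-then-predict structure.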

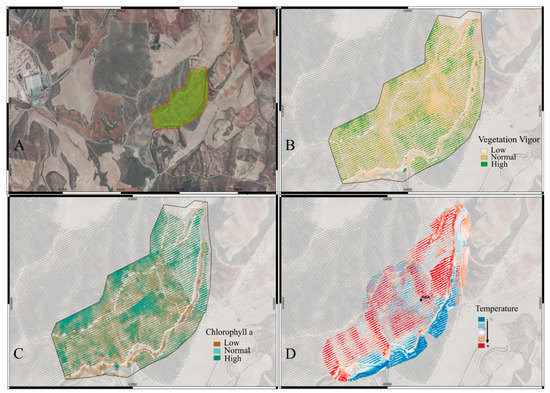

Drones achieve high spatial resolution at low altitudes but cannot cover areas as large as orbital platforms can. Covering larger fields therefore requires a large number of high-resolution images, and this extensive data set must be pre-processed to generate a mosaic of the field. An automated method that performs this pre-processing has been developed to reduce the cumbersome and lengthy processing time of mosaic preparation [17]. Figure 5 shows processed images of an olive crop acquired with a multispectral camera (Parrot Sequoia) and a thermal sensor mounted on a Yuneec Typhoon H hexacopter, flown at heights of 40 m and 80 m, respectively. Mission Planner was used for flight planning, followed by orthomosaic generation in Pix4D and, finally, QGIS to generate the NDVI (image B), normalized difference red edge (NDRE, image C) and thermal (image D) maps.

Figure 5. Images taken using multispectral (A, B and C) and thermal (D) cameras for olive crop using Yuneec Typhoon H hexacopter drone. (Images facilitated by MC Biofertilizantes).

Geo-referencing is used for various field operations (e.g. planting, spraying, nutrient application). A method of automatic geo-referencing with 0.90 m accuracy has been reported [11]; since this 0.90 m margin can lead to errors, further studies are suggested. Ground control points (GCPs), i.e. representative points of the terrain such as building corners and road crossings, are indispensable for improving map accuracy and for the geometric correction of remotely sensed data. A GPS receiver with Post-Processed Kinematic (PPK) or Real Time Kinematic (RTK) positioning is recommended for surveying their coordinates. Using an adequate minimum number of GCPs favors good results. For example, position errors of around ±0.20 m vertically and ±0.05 m horizontally were reported for a 30 × 50 m area when 24 GCPs were surveyed with dual-frequency RTK GPS [39]. Similarly, when 23 GCPs were surveyed with dual-frequency RTK GPS for a 125 × 60 m area in another study, root mean square errors of around 0.03–0.04 m vertically and 0.04–0.05 m horizontally were reported [40].
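Root mean square error figures such as those above are computed by comparing coordinates measured on the drone products with coordinates surveyed in the field, ideally on independent check points rather than on the GCPs used for georeferencing. A minimal Python sketch of horizontal and vertical RMSE, assuming both point lists are in the same projected, metric coordinate system and in the same order; the function name is hypothetical.

```python
import math


def rmse_errors(measured, reference):
    """Return (horizontal RMSE, vertical RMSE) in metres.

    measured, reference: lists of (x, y, z) tuples in the same order, e.g.
    check-point coordinates read from the orthomosaic/DSM versus coordinates
    surveyed with RTK or PPK GNSS.
    """
    if len(measured) != len(reference) or not measured:
        raise ValueError("need two non-empty point lists of equal length")
    n = len(measured)
    sq_horizontal = sq_vertical = 0.0
    for (xm, ym, zm), (xr, yr, zr) in zip(measured, reference):
        sq_horizontal += (xm - xr) ** 2 + (ym - yr) ** 2
        sq_vertical += (zm - zr) ** 2
    return math.sqrt(sq_horizontal / n), math.sqrt(sq_vertical / n)
```

For two check points with offsets of (0.03, 0.04, 0.10) m and (0, 0, −0.10) m, this gives a horizontal RMSE of about 0.035 m and a vertical RMSE of 0.10 m. Some studies report separate x and y RMSE values instead; the horizontal value here equals the square root of the sum of their squares.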

3. Applications of Drones in Farming

With developing technologies and the invention of novel sensors, drones are finding numerous applications in agriculture. The ease of use and autonomy that RPAs offer are prominent features: they can be flown manually or along GPS-programmed, predetermined paths, learning to pilot them takes no more than a few hours, and one-touch take-off and ground steering are possible. Self-leveling programs further increase their autonomy by helping to acquire stabilized images while adjusting the drone to the wind [41]. A few of their most common applications, along with novel areas of application, are discussed below.

3.1. Crop Monitoring and Health Assessment

RPAs have been proposed for counting plants, monitoring growth and phenology, and measuring chlorophyll, among other potential applications [21]. For this purpose, RPAs such as senseFly's eBee Ag, equipped with NDVI or near-infrared (NIR) sensors, have replaced conventional farm scouting, significantly reducing human error [42]. RPAs are also highly efficient for monitoring crops, especially in hilly areas that are otherwise challenging to scout conventionally [24].

The following figure represents a schematic diagram of the applications of drones in agriculture.

Figure 6. A schematic diagram of the applications of drones in agriculture.

For a detailed review with practical examples of RPAs, see https://doi.org/10.3390/agronomy11010007.

References

- Avşar, D.; Avşar, G. Yeni Tarım Düzeninin Tarımsal Üretim Üzerindeki Etkileri ve Türkiye’deki Uygulamalar. Platf. 2014, 379–385.

- Ozdogan, B.; Gacar, A.; Aktas, H. Digital agriculture practices in the context of agriculture 4.0. Econ. Financ. Account. 2017, 4, 186–193.

- Unal, I.; Topakci, M. A review on using drones for precision farming applications. In Proceedings of 12th International Congress on Agricultural Mechanization and Energy, Nevsehir, Turkey, 3–6 September 2014; pp. 276–283.

- Urbahs, A.; Jonaite, I. Features of the use of unmanned aerial vehicles for agriculture applications. Aviation 2013, 17, 170–175.

- Avery, T.E.; Berlin, G.L. Fundamentals of Remote Sensing and Airphoto Interpretation; Macmillan: Stuttgart, Germany, 1992.

- Colomina, I.; Molina, P. Unmanned aerial systems for photogrammetry and remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2014, 92, 79–97.

- Toth, C.; Jóźków, G. Remote sensing platforms and sensors: A survey. ISPRS J. Photogramm. Remote Sens. 2016, 115, 22–36.

- Aerocamaras. Available online: https://aerocamaras.es/ (accessed on 29 October 2020).

- Hunt, E.R.; Daughtry, C.S. What good are unmanned aircraft systems for agricultural remote sensing and precision agriculture? Int. J. Remote Sens. 2018, 39, 5345–5376.

- Oliver, M.; Bishop, T.; Marchant, B. An Overview of Precision Agriculture; Routledge: London, UK, 2013.

- Banger, K.; Yuan, M.; Wang, J.; Nafziger, E.D.; Pittelkow, C.M. A vision for incorporating environmental effects into nitrogen management decision support tools for US maize production. Front. Plant Sci. 2017, 8, 1270.

- Rigby, H.; Clarke, B.O.; Pritchard, D.L.; Meehan, B.; Beshah, F.; Smith, S.R.; Porter, N.A. A critical review of nitrogen mineralization in biosolids-amended soil, the associated fertilizer value for crop production and potential for emissions to the environment. Sci. Total Environ. 2016, 541, 1310–1338.

- Wang, J.; Shi, T.; Yu, D.; Teng, D.; Ge, X.; Zhang, Z.; Yang, X.; Wang, H.; Wu, G. Ensemble machine-learning-based framework for estimating total nitrogen concentration in water using drone-borne hyperspectral imagery of emergent plants: A case study in an arid oasis, NW China. Environ. Pollut. 2020, 266, 115412.

- Moazeni, M.; Heidari, Z.; Golipour, S.; Ghaisari, L.; Sillanpää, M.; Ebrahimi, A. Dietary intake and health risk assessment of nitrate, nitrite, and nitrosamines: A Bayesian analysis and Monte Carlo simulation. Environ. Sci. Pollut. Res. 2020, 1–13.

- Daponte, P.; De Vito, L.; Glielmo, L.; Iannelli, L.; Liuzza, D.; Picariello, F.; Silano, G. A review on the use of drones for precision agriculture. In Proceedings of the IOP Conference Series: Earth and Environmental Science, Graz, Austria, 11–14 September 2019; p. 012022.

- Schimmelpfennig, D. Farm Profits and Adoption of Precision Agriculture; United States Department of Agriculture (USDA): Washington, DC, USA, 2016.

- Santos, L.M.D.; Barbosa, B.D.S.; Andrade, A.D. Use of remotely piloted aircraft in precision agriculture: A review. DYNA 2019, 86, 284–291.

- Gallardo-Saavedra, S.; Hernández-Callejo, L.; Duque-Perez, O. Technological review of the instrumentation used in aerial thermographic inspection of photovoltaic plants. Renew. Sustain. Energy Rev. 2018, 93, 566–579.

- Vroegindeweij, B.A.; van Wijk, S.W.; van Henten, E. Autonomous unmanned aerial vehicles for agricultural applications. In Proceedings of the AgEng 2014, Zurich, Switzerland, 7 June–7 October 2014.

- Muchiri, N.; Kimathi, S. A review of applications and potential applications of UAV. In Proceedings of the Sustainable Research and Innovation Conference, London, UK, 24–26 April 2013; pp. 280–283.

- Pino, E. Los drones una herramienta para una agricultura eficiente: Un futuro de alta tecnología. Idesia (Arica) 2019, 37, 75–84.

- Mogili, U.R.; Deepak, B. Review on application of drone systems in precision agriculture. Procedia Comput. Sci. 2018, 133, 502–509.

- Puri, V.; Nayyar, A.; Raja, L. Agriculture drones: A modern breakthrough in precision agriculture. J. Stat. Manag. Syst. 2017, 20, 507–518.

- Rani, A.; Chaudhary, A.; Sinha, N.; Mohanty, M.; Chaudhary, R. Drone: The green technology for future agriculture. Dhara 2019, 2, 3–6.

- Negash, L.; Kim, H.-Y.; Choi, H.-L. Emerging UAV Applications in Agriculture. In Proceedings of the 2019 7th International Conference on Robot Intelligence Technology and Applications (RiTA), Daejeon, Korea, 1–3 November 2019; pp. 254–257.

- Zhang, W.; Wu, J.; Chen, S. Based on the UAV of land and resources of low level remote sensing applications research. In Proceedings of the 2014 International Conference on Artificial Intelligence and Software Engineering (AISE 2014), Phuket, Thailand, 11–12 January 2014; pp. 27–30.

- Stehr, N.J. Drones: The newest technology for precision agriculture. J. Nat. Resour. Life Sci. Educ. 2015, 44, 89–91.

- Saha, A.K.; Saha, J.; Ray, R.; Sircar, S.; Dutta, S.; Chattopadhyay, S.P.; Saha, H.N. IOT-based drone for improvement of crop quality in agricultural field. In Proceedings of the 2018 IEEE 8th Annual Computing and Communication Workshop and Conference (CCWC), Las Vegas, NV, USA, 8–10 January 2018; pp. 612–615.

- Reinecke, M.; Prinsloo, T. The influence of drone monitoring on crop health and harvest size. In Proceedings of the 2017 1st International Conference on Next Generation Computing Applications (NextComp), Mauritius, 19–21 July 2017; pp. 5–10.

- De Rango, F.; Palmieri, N.; Santamaria, A.F.; Potrino, G. A simulator for UAVs management in agriculture domain. In Proceedings of the 2017 International Symposium on Performance Evaluation of Computer and Telecommunication Systems (SPECTS), Seattle, WA, USA, 9–12 July 2017; pp. 1–8.

- Yallappa, D.; Veerangouda, M.; Maski, D.; Palled, V.; Bheemanna, M. Development and evaluation of drone mounted sprayer for pesticide applications to crops. In Proceedings of the 2017 IEEE Global Humanitarian Technology Conference (GHTC), San Jose, CA, USA, 19–22 October 2017; pp. 1–7.

- Kulbacki, M.; Segen, J.; Knieć, W.; Klempous, R.; Kluwak, K.; Nikodem, J.; Kulbacka, J.; Serester, A. Survey of drones for agriculture automation from planting to harvest. In Proceedings of the 2018 IEEE 22nd International Conference on Intelligent Engineering Systems (INES), Las Palmas de Gran Canaria, Spain, 21–23 June 2018; pp. 000353–000358.

- Siebert, S.; Teizer, J. Mobile 3D mapping for surveying earthwork projects using an Unmanned Aerial Vehicle (UAV) system. Autom. Constr. 2014, 41, 1–14.

- WebODM. Available online: https://www.opendronemap.org/webodm/ (accessed on 30 September 2020).

- Rango, A.; Laliberte, A.; Steele, C.; Herrick, J.E.; Bestelmeyer, B.; Schmugge, T.; Roanhorse, A.; Jenkins, V. Using unmanned aerial vehicles for rangelands: Current applications and future potentials. Environ. Pract. 2006, 8, 159–168.

- Laliberte, A.S.; Herrick, J.E.; Rango, A.; Winters, C. Acquisition, orthorectification, and object-based classification of unmanned aerial vehicle (UAV) imagery for rangeland monitoring. Photogramm. Eng. Remote Sens. 2010, 76, 661–672.

- Torres-Sánchez, J.; Pena, J.M.; de Castro, A.I.; López-Granados, F. Multi-temporal mapping of the vegetation fraction in early-season wheat fields using images from UAV. Comput. Electron. Agric. 2014, 103, 104–113.

- Primicerio, J.; Di Gennaro, S.F.; Fiorillo, E.; Genesio, L.; Lugato, E.; Matese, A.; Vaccari, F.P. A flexible unmanned aerial vehicle for precision agriculture. Precis. Agric. 2012, 13, 517–523.

- Wallace, L.; Lucieer, A.; Malenovský, Z.; Turner, D.; Vopěnka, P. Assessment of forest structure using two UAV techniques: A comparison of airborne laser scanning and structure from motion (SfM) point clouds. Forests 2016, 7, 62.

- Turner, D.; Lucieer, A.; De Jong, S.M. Time series analysis of landslide dynamics using an unmanned aerial vehicle (UAV). Remote Sens. 2015, 7, 1736–1757.

- Stehr, N.J. Drones: The newest technology for precision agriculture. J. Nat. Resour. Life Sci. Educ. 2015, 44, 89–91.

- Natu, A.S.; Kulkarni, S. Adoption and utilization of drones for advanced precision farming: A review. Int. J. Recent Innov. Trends Comput. Commun. 2016, 4, 563–565.