

Remote Health Monitoring Systems (RHMS) can manage, maintain and monitor a specific set of tasks efficiently over a network with reduced cost and errors. Wearable sensors and vision-based sensors detect abnormalities in the patient’s behavior and prompt caregivers or doctors to take the necessary measures without delay.

- remote health monitoring system

- smart health

- health edge computing

- activity recognition for healthcare

1. Introduction

Remote Health Monitoring Systems (RHMS) can manage, maintain and monitor a specific set of tasks efficiently over a network with reduced cost and errors. This network can be an Internet-of-Things (IoT) system or a local network system with a series of connected devices [1]. Such systems are scalable and provide multiple opportunities to implement changes. They form a network from either vision-based devices such as cameras or sensor-based devices such as accelerometers or gyroscopes. The selection of devices for these systems depends on the environment, requirements, and application [2].

Remote Monitoring Systems (RMS) are often used in sensor-based technologies with applications such as radars, satellites, and aeroplanes. Another impactful application of RMS is in the health sector, where it is usually labelled RHMS. Real-time health monitoring of patients by a doctor from a remote location has a significant impact on the avoidance of irregularities, because it allows first aid to be provided in time. These systems show great promise, especially for elderly and physically disabled patients [3].

Wearable sensors and health-monitoring devices, including heart rate sensors, pulse sensors, oxygen sensors, and blood pressure sensors, play a crucial role in remote health monitoring systems for observing patients, whether in open or closed environments [4]. These sensors detect abnormalities in the patient’s behavior and prompt caregivers or doctors to take the necessary measures without delay. In addition to these wearable sensors, vision-based sensors are also employed to monitor the health conditions of patients. For instance, a camera mounted in the patient’s vicinity keeps track of the patient’s movement. If the system detects any abnormal movement by the patient, it triggers an alarm to notify the caretaker [5]. By combining wearable and vision-based sensors, healthcare providers can comprehensively monitor and respond to the well-being of patients in real time.
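As a minimal sketch of the alerting behaviour described above, the following Python example checks incoming wearable-sensor readings against fixed ranges and collects an alert message for each out-of-range value. The metric names, the numeric ranges, and the `Reading`, `check_reading`, and `collect_alerts` names are illustrative assumptions, not taken from the cited systems, and the ranges are not clinical guidance.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Hypothetical acceptable ranges per metric (illustration only, not clinical guidance).
NORMAL_RANGES: Dict[str, Tuple[float, float]] = {
    "heart_rate_bpm": (50.0, 110.0),
    "spo2_percent": (92.0, 100.0),
    "systolic_mmhg": (90.0, 150.0),
}


@dataclass
class Reading:
    """One measurement from a wearable sensor (assumed record layout)."""
    patient_id: str
    metric: str
    value: float


def check_reading(reading: Reading) -> Optional[str]:
    """Return an alert message if the value is out of range, otherwise None."""
    bounds = NORMAL_RANGES.get(reading.metric)
    if bounds is None:
        return None  # unknown metric: no range to compare against
    low, high = bounds
    if low <= reading.value <= high:
        return None
    return (
        f"ALERT patient={reading.patient_id} "
        f"{reading.metric}={reading.value} outside [{low}, {high}]"
    )


def collect_alerts(readings: List[Reading]) -> List[str]:
    """Check every reading and keep only the alert messages."""
    alerts = []
    for reading in readings:
        message = check_reading(reading)
        if message is not None:
            alerts.append(message)
    return alerts
```

In a deployed system the alert list would be forwarded to a caregiver or doctor; fixed thresholds are only the simplest possible rule, and the approaches surveyed below rely on learned models instead.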

2. RHMS for Elderly People

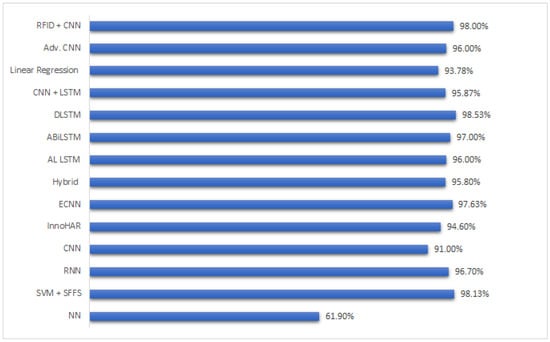

A smart healthcare monitoring system is proposed in [6]. This system can contribute substantially to providing a comfortable and safe environment for elderly and disabled people. Through continuous monitoring of their health, it can help them live independently without fear of an emergency or critical healthcare situation. The proposed framework collects patients’ physiological data with wearable sensors and transmits them to a cloud server for analysis and processing. Any change or sign of disorder in a patient’s health data is detected and reported to the patient’s doctor through the hospital’s cloud platform. The framework is a simple technology based on a flexible architecture that can be scaled and easily expanded, thus providing stable and cost-efficient systems to monitor elderly patients remotely. In addition, the results show that the system could contribute efficiently to improving healthcare services by monitoring patients’ health and detecting symptoms remotely and in real time.

Because of its powerful effect on physical and mental health and its robust association with many rehabilitation programs, Physical Activity Recognition and Monitoring (PARM) has been considered a key paradigm for smart healthcare [7]. Traditional methods for PARM used controlled environments, aiming to increase the types of identifiable activities and to improve recognition accuracy and system robustness using novel body-worn sensors or advanced learning algorithms. With the spread of cost-effective heterogeneous wearable devices and mobile applications, PARM has moved to uncontrolled and open environments. However, these technologies and their results with traditional PARM are currently less well known.

To help understand the use of IoT technology in PARM studies, the review in [7] inspects PARM studies systematically from a typical IoT layer-based perspective. First, it summarizes the modern techniques in traditional PARM methodologies as used in the healthcare domain, including sensory, feature extraction, and recognition techniques. Second, it identifies new research trends and challenges in PARM studies in the IoT environment. Finally, it includes a few successful studies that bring PARM into industrial applications. In the last two decades, several studies have addressed critical issues of PARM because of its importance in healthcare support for a variety of chronic diseases, musculoskeletal rehabilitation, independent living of the elderly, and fitness goals for active lifestyles. The contribution of that work is framed from the perspective of the Internet of Things (IoT): it sequentially covers the sensing layer, network layer, processing layer, and application layer, distinctively and systematically summarizing existing primary PARM devices, methods, and environments. Wearable and portable sensors/devices, inertial signal data processing, and classification/clustering approaches are described and compared in the light of physical activity types, subjects, accuracy, flexibility, and energy. Typical research and project applications regarding PARM are also introduced.

In [8], the use of RFID sensors and accelerometers has been proposed to recognize a user’s daily activity. A decision tree is employed to classify five human body states using two wireless accelerometers, and detection of RFID-tagged objects with hand movements provides additional instrumental activity information. The system has already developed tagging and visualization tools, making it widely applicable for caring for elderly people’s health.

Tao et al. [9] proposed a CNN-based elderly monitoring system. The approach utilizes vision-based devices to capture movement data. They ensure sensitive data protection by introducing a key-based authorization module with the CNN. The experimental evaluation reveals the system to be robust against explicit breach attempts. The proposed framework was tested on the publicly available UCI-HAR dataset with six basic and six transitional activities and achieved an overall accuracy of 92.02%.

Yang et al. [10] proposed an RFID-based CNN model for the posture detection of elderly people. Kasteren’s dataset is used to evaluate the approach. The dataset consists of 245 action instances for seven different activities over 28 days, sensed using RFID technology. They recorded four daily life activities, which include “brushing teeth, taking a bath, eating and getting dressed”. The CNN utilized was a dense network built from dense layers, which made the proposed model complicated and prone to errors. The model demonstrated an accuracy of 82.78%, which showed the effectiveness of the proposed approach. However, in real-time scenarios, this approach may not produce substantial results.

To compare the aforementioned approaches, it helps to look at each more closely. In one approach [6], a smart healthcare monitoring system is proposed, utilizing wearable sensors to collect patients’ physiological data, which is then transmitted to a cloud server for analysis. Changes in the patients’ health data are detected and reported to their doctors, enabling real-time monitoring and detection of symptoms. This scalable and cost-efficient system aims to improve healthcare services for elderly patients remotely. Another approach [7] focuses on PARM in uncontrolled environments using IoT technology. It provides a systematic review of traditional PARM methodologies, discussing sensory, feature extraction, and recognition techniques. It also explores new research trends and challenges in PARM studies within the IoT environment. Additionally, RFID sensors and accelerometers are employed in an approach [8] to recognize daily activities of users, using a decision tree for classification. This system combines wearable sensors and RFID technology to provide valuable information about user activities. A CNN-based elderly monitoring system [9] utilizes vision-based devices and sensitive data protection measures, achieving high accuracy in activity recognition. This approach ensures privacy and security while effectively monitoring elderly individuals’ movements. Finally, a CNN model for posture detection of elderly people is proposed [10] using RFID technology, demonstrating good effectiveness but potential limitations in real-time scenarios. This system focuses on posture detection, which is crucial for maintaining the health and safety of elderly individuals. Each of these approaches brings unique features and advantages to the field of healthcare monitoring and human activity recognition, catering to different scenarios and requirements.

Table 1 summarizes this section and points out the strengths of the referenced approaches. Figure 1 presents a summarized accuracy-comparison graph, which visually represents the information provided in the table. The arrangement of the approaches in the graph takes into consideration both the publication year and the novelty of their architectures. Notably, the graph excludes certain approaches from the reference table that exclusively encompassed a systematic review of diverse frameworks. The graph shows that the mean accuracy of the various approaches lies within the range of 90% to 95%. This range signifies a customary level of accuracy attained with neural network architectures. However, the majority of these approaches prioritize improving model training time rather than solely emphasizing accuracy. The significance of model training time should not be disregarded, particularly when formulating an approach that concentrates on unsupervised data.
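The decision-tree classification reported for the accelerometer-based approach [8] can be illustrated with a small hand-written tree. The sketch below is an example under stated assumptions rather than the cited method: the five state names, the waist and thigh sensor placement, the axis convention (z along the body segment, readings in g), and every threshold are hypothetical, and a real system would learn its splits from labelled data.

```python
import math
import statistics
from typing import List, Tuple

Sample = Tuple[float, float, float]  # (x, y, z) acceleration in g

# Hypothetical thresholds, chosen for illustration only.
WALK_MOTION_G = 0.1      # spread of waist acceleration magnitude
RUN_MOTION_G = 0.5
LYING_TILT_DEG = 60.0    # waist tilt away from vertical
SITTING_TILT_DEG = 45.0  # thigh tilt away from vertical


def magnitude(sample: Sample) -> float:
    """Euclidean norm of one acceleration sample."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def tilt_deg(sample: Sample) -> float:
    """Angle between the sensor z axis and the measured acceleration, in degrees."""
    norm = magnitude(sample)
    if norm == 0.0:
        return 0.0
    cos_angle = max(-1.0, min(1.0, sample[2] / norm))
    return math.degrees(math.acos(cos_angle))


def classify_window(waist: List[Sample], thigh: List[Sample]) -> str:
    """Classify one non-empty window of samples from two accelerometers."""
    motion = statistics.pstdev([magnitude(s) for s in waist])
    waist_tilt = statistics.mean([tilt_deg(s) for s in waist])
    thigh_tilt = statistics.mean([tilt_deg(s) for s in thigh])

    # Root split: dynamic versus static activity.
    if motion > RUN_MOTION_G:
        return "running"
    if motion > WALK_MOTION_G:
        return "walking"
    # Static branch: posture from the orientation of each sensor.
    if waist_tilt > LYING_TILT_DEG:
        return "lying"
    if thigh_tilt > SITTING_TILT_DEG:
        return "sitting"
    return "standing"
```

For example, a window in which both sensors read roughly `(0, 0, 1)` is classified as standing, while a horizontal thigh with an upright waist is classified as sitting. The RFID object-usage information described in [8] is not modelled here.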

Figure 1. Comparison of machine learning and deep learning techniques in healthcare systems [8][9][11][12][13][14][15][16][17][18][19][20][21][22].

Table 1. Summary of the referenced approaches and their strengths.

| Approach | Type | Strength |
|---|---|---|
| [6] | Wearable sensors | Detects abnormalities in elderly people and can be employed in real-time scenarios |
| [8] | RFID + wearable sensors | Tracking hand motion allows RFID-tagged objects to be detected, which provides additional pattern data for efficient human activity recognition |
| [9] | CNN + vision devices | Introduces an authentication-based access network to prevent unwanted access to or breaches of the network |
| [10] | RFID + CNN | A dense CNN with an RFID unit brings forward a novel RSS-based approach; however, no solid strengths were presented in the research work |

References

1. Rashid, M.M.; Khan, S.U.; Eusufzai, F.; Redwan, M.A.; Sabuj, S.R.; Elsharief, M. A Federated Learning-Based Approach for Improving Intrusion Detection in Industrial Internet of Things Networks. Network 2023, 3, 158–179.

2. Blount, M.; Batra, V.M.; Capella, A.N.; Ebling, M.R.; Jerome, W.F.; Martin, S.M.; Nidd, M.; Niemi, M.R.; Wright, S.P. Remote health-care monitoring using Personal Care Connect. IBM Syst. J. 2007, 46, 95–113.

3. Kalid, N.; Zaidan, A.; Zaidan, B.; Salman, O.H.; Hashim, M.; Muzammil, H. Based real time remote health monitoring systems: A review on patients prioritization and related "big data" using body sensors information and communication technology. J. Med. Syst. 2018, 42, 30.

4. Mohammed, K.; Zaidan, A.; Zaidan, B.; Albahri, O.S.; Alsalem, M.; Albahri, A.S.; Hadi, A.; Hashim, M. Real-time remote-health monitoring systems: A review on patients prioritisation for multiple-chronic diseases, taxonomy analysis, concerns and solution procedure. J. Med. Syst. 2019, 43, 223.

5. Rahman, H.; Ahmed, M.U.; Begum, S. Vision-based remote heart rate variability monitoring using camera. In Proceedings of the Internet of Things (IoT) Technologies for HealthCare: 4th International Conference, HealthyIoT 2017, Angers, France, 24–25 October 2017; Proceedings 4. Springer: Berlin/Heidelberg, Germany, 2018; pp. 10–18.

6. Al-Khafajiy, M.; Baker, T.; Chalmers, C.; Asim, M.; Kolivand, H.; Fahim, M.; Waraich, A. Remote health monitoring of elderly through wearable sensors. Multimed. Tools Appl. 2019, 78, 24681–24706.

7. Qi, J.; Yang, P.; Waraich, A.; Deng, Z.; Zhao, Y.; Yang, Y. Examining sensor-based physical activity recognition and monitoring for healthcare using Internet of Things: A systematic review. J. Biomed. Inform. 2018, 87, 138–153.

8. Hong, Y.J.; Kim, I.J.; Ahn, S.C.; Kim, H.G. Mobile health monitoring system based on activity recognition using accelerometer. Simul. Model. Pract. Theory 2010, 18, 446–455.

9. Tao, M.; Li, X.; Wei, W.; Yuan, H. Jointly optimization for activity recognition in secure IoT-enabled elderly care applications. Appl. Soft Comput. 2021, 99, 106788.

10. Yang, J.; Lee, J.; Choi, J. Activity recognition based on RFID object usage for smart mobile devices. J. Comput. Sci. Technol. 2011, 26, 239–246.

11. Cheng, L.; Guan, Y.; Zhu, K.; Li, Y. Recognition of human activities using machine learning methods with wearable sensors. In Proceedings of the 2017 IEEE 7th Annual Computing and Communication Workshop and Conference (CCWC), Las Vegas, NV, USA, 9–11 January 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 1–7.

12. Ahmed, N.; Rafiq, J.I.; Islam, M.R. Enhanced human activity recognition based on smartphone sensor data using hybrid feature selection model. Sensors 2020, 20, 317.

13. Murad, A.; Pyun, J.Y. Deep recurrent neural networks for human activity recognition. Sensors 2017, 17, 2556.

14. Wan, S.; Qi, L.; Xu, X.; Tong, C.; Gu, Z. Deep learning models for real-time human activity recognition with smartphones. Mob. Netw. Appl. 2020, 25, 743–755.

15. Wang, J.; Chen, Y.; Hao, S.; Peng, X.; Hu, L. Deep learning for sensor-based activity recognition: A survey. Pattern Recognit. Lett. 2019, 119, 3–11.

16. Ignatov, A. Real-time human activity recognition from accelerometer data using Convolutional Neural Networks. Appl. Soft Comput. 2018, 62, 915–922.

17. Zhou, X.; Liang, W.; Wang, K.I.-K.; Wang, H.; Yang, L.T.; Jin, Q. Deep-learning-enhanced human activity recognition for Internet of healthcare things. IEEE Internet Things J. 2020, 7, 6429–6438.

18. Xu, C.; Chai, D.; He, J.; Zhang, X.; Duan, S. InnoHAR: A deep neural network for complex human activity recognition. IEEE Access 2019, 7, 9893–9902.

19. Chen, Z.; Zhang, L.; Jiang, C.; Cao, Z.; Cui, W. WiFi CSI based passive human activity recognition using attention based BLSTM. IEEE Trans. Mob. Comput. 2018, 18, 2714–2724.

20. Zhu, Q.; Chen, Z.; Soh, Y.C. A novel semisupervised deep learning method for human activity recognition. IEEE Trans. Ind. Inform. 2018, 15, 3821–3830.

21. Wang, H.; Zhao, J.; Li, J.; Tian, L.; Tu, P.; Cao, T.; An, Y.; Wang, K.; Li, S. Wearable sensor-based human activity recognition using hybrid deep learning techniques. Secur. Commun. Netw. 2020, 2020, 2132138.

22. Dritsas, E.; Trigka, M. Stroke risk prediction with machine learning techniques. Sensors 2022, 22, 4670.
