Automated emotion evaluation (AEE) is an important issue in various fields of activity that use human emotional reactions as a signal, such as marketing, technical equipment, and human-robot interaction. This paper analyzes a broad body of scientific research and technical papers on sensor use, in which various methods have been implemented or investigated. It covers several classes of sensors for detecting human emotions and measuring their intensity: contactless methods, contact electrodes, and skin-penetrating electrodes. The analysis identifies the methods applicable to each type of emotion or its intensity and proposes a classification of them. The resulting classification of emotion sensors shows the area of application and the expected outcome of each method, as well as its limitations. This paper should be of interest to researchers who need to evaluate and analyze human emotions and must choose a suitable method for their purposes or find an alternative solution.

With the rapid increase in the use of smart technologies in society and the development of industry, the need for technologies capable of assessing the needs of a potential customer and choosing the most appropriate solution for them is increasing dramatically. Automated emotion evaluation (AEE) is particularly important in areas such as robotics [[1]], marketing [[2]], education [[3]], and the entertainment industry [[4]]. AEE is applied to achieve various goals:

(i) in robotics: to design smart collaborative or service robots which can interact with humans [[5][6][7]];

(ii) in marketing: to create specialized adverts, based on the emotional state of the potential customer [[8][9][10]];

(iii) in education: to improve learning processes, knowledge transfer, and perception methodologies [[11][12][13]];

(iv) in the entertainment industry: to propose the most appropriate entertainment for the target audience [[14][15][16][17]].

The scientific literature presents numerous attempts to classify emotions and to set boundaries between emotions, affect, and mood [[18][19][20][21]]. From the perspective of automated emotion recognition and evaluation, the most convenient classification is presented in [[3],[22]]. According to this classification, the main terms are defined as follows:

(i) “emotion” is a response of the organism to a particular stimulus (person, situation, or event). It is usually an intense, short-duration experience of which the person is typically well aware;

(ii) “affect” is a result of the effect caused by emotion and includes their dynamic interaction;

(iii) “feeling” is always experienced in relation to a particular object of which the person is aware; its duration depends on the length of time that the representation of the object remains active in the person’s mind;

(iv) “mood” tends to be subtler, longer lasting, less intense, and more in the background, but it can shift a person’s affective state in a positive or negative direction.

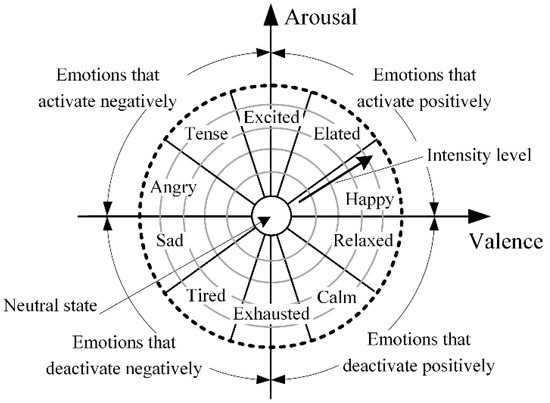

According to the research by Feidakis, Daradoumis and Cabella [[21]], which presents a classification of emotions based on fundamental models, there are 66 emotions, which can be divided into two groups: ten basic emotions (anger, anticipation, distrust, fear, happiness, joy, love, sadness, surprise, trust) and 56 secondary emotions. Evaluating such a large number of emotions is extremely difficult, especially if automated recognition and evaluation is required. Moreover, similar emotions can overlap in the parameters that are measured. To handle this issue, the majority of emotion evaluation studies focus on other classifications [[3],[21]], which include dimensions of emotions, in most cases valence (negative/positive activation) and arousal (high/low) [[23][24][25]], and analyze only basic emotions, which can be defined more easily. Most studies use variations of Russell’s circumplex model of emotions (Figure 1), which distributes basic emotions in a two-dimensional space with respect to valence and arousal. Such an approach allows a desired emotion to be defined and its intensity evaluated by analyzing just two dimensions.

Figure 1. Russell’s circumplex model of emotions.
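The two-dimensional idea behind the circumplex model can be illustrated with a minimal Python sketch. It is not the method of any cited study: the function name `locate`, the normalization of both axes to [-1, 1], and the use of the distance from the origin as a proxy for intensity are all assumptions made for illustration.

```python
import math
from typing import NamedTuple


class CircumplexPoint(NamedTuple):
    quadrant: str      # coarse region of the valence-arousal plane
    angle_deg: float   # direction of the point, 0-360 degrees from the +valence axis
    intensity: float   # distance from the neutral origin (assumed intensity proxy)


def locate(valence: float, arousal: float) -> CircumplexPoint:
    """Place a (valence, arousal) pair on a circumplex-style plane.

    Assumption: both inputs are already normalized to [-1, 1], with 0 as the
    neutral point. Real sensor data would need calibration to reach this scale.
    """
    if not (-1.0 <= valence <= 1.0 and -1.0 <= arousal <= 1.0):
        raise ValueError("valence and arousal must lie in [-1, 1]")

    # Assumed intensity proxy: Euclidean distance from the neutral origin.
    intensity = math.hypot(valence, arousal)
    if intensity == 0.0:
        return CircumplexPoint("neutral", 0.0, 0.0)

    # Angle measured counter-clockwise from the positive valence axis.
    angle_deg = math.degrees(math.atan2(arousal, valence)) % 360.0

    valence_part = "positive valence" if valence >= 0 else "negative valence"
    arousal_part = "high arousal" if arousal >= 0 else "low arousal"
    return CircumplexPoint(f"{valence_part}, {arousal_part}", angle_deg, intensity)
```

For example, `locate(0.6, 0.8)` falls in the positive-valence, high-arousal quadrant, while `locate(-0.5, -0.5)` falls in the negative-valence, low-arousal quadrant. Mapping an angle to a named emotion would require anchor positions taken from a specific variant of the model, which this sketch deliberately leaves out.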

Using the model described above, the classification and evaluation of emotions becomes clear, but many issues related to the assessment of emotions remain, especially the selection of measurement and result evaluation methods and of measurement hardware and software. Moreover, emotion recognition and evaluation is complicated by its interdisciplinary nature: emotion recognition and strength evaluation are the subject of psychology, the measurement and evaluation of human body parameters belong to the medical sciences and measurement engineering, and sensor data analysis and the resulting solutions are the subject of mechatronics.

This review focuses on the hardware and methods used for automated emotion recognition that are applicable to machine learning procedures, which analyze the experimental data obtained and support automated solutions based on the results of these analyses. This study also analyzes the idea of humanizing the Internet of Things and affective computing systems, which has been validated by systems developed by the authors of this research [[26][27][28][29]].

Intelligent machines with empathy for humans could make the world a better place. The IoT field is progressing in its understanding of human emotion thanks to achievements in human emotion recognition (sensors and methods), computer vision, speech recognition, deep learning, and related technologies [[30]].