Autonomous robots that assist humans in day-to-day living tasks are becoming increasingly popular. Autonomous mobile robots operate by sensing and perceiving their surrounding environment in order to make accurate driving decisions. A combination of several different sensors, such as LiDAR, radar, ultrasound sensors and cameras, is used to sense the environment around autonomous vehicles. These heterogeneous sensors simultaneously capture different physical attributes of the environment. This multimodality and redundancy of sensing must be exploited through sensor data fusion to achieve reliable and consistent perception of the environment. However, the multimodal sensor data streams differ from each other in many ways, such as temporal and spatial resolution, data format, and geometric alignment. For the subsequent perception algorithms to benefit from the diversity offered by multimodal sensing, the data streams need to be spatially, geometrically and temporally aligned with each other. A typical approach is to use a geometrical model to spatially align the two sensor outputs, followed by a Gaussian Process (GP) regression-based resolution matching algorithm that interpolates the missing data with quantifiable uncertainty.
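The geometric alignment step can be illustrated with a minimal sketch that projects LiDAR points into a spherical image. It assumes an equirectangular image layout, a camera frame with x forward, y left and z up, and a known rotation and translation from the LiDAR frame to the camera frame. None of these conventions are stated in the source, and the function name `project_to_equirectangular` is hypothetical.

```python
import numpy as np

def project_to_equirectangular(points_lidar, rotation, translation, width, height):
    """Project 3D LiDAR points into pixel coordinates of a spherical image.

    Assumed conventions (not taken from the source): camera frame with
    x forward, y left, z up; equirectangular image of size width x height.
    """
    # Rigid transform from the LiDAR frame into the camera frame.
    points_cam = points_lidar @ rotation.T + translation
    ranges = np.linalg.norm(points_cam, axis=1)

    # Drop points at the camera origin, where the direction is undefined.
    valid = ranges > 1e-9
    points_cam, ranges = points_cam[valid], ranges[valid]

    # Spherical angles of each point as seen from the camera.
    azimuth = np.arctan2(points_cam[:, 1], points_cam[:, 0])
    elevation = np.arcsin(np.clip(points_cam[:, 2] / ranges, -1.0, 1.0))

    # Forward maps to the image centre; left maps to smaller u, up to smaller v.
    u = (0.5 - azimuth / (2.0 * np.pi)) * width
    v = (0.5 - elevation / np.pi) * height
    return np.stack([u, v], axis=1), ranges

# Example: three points, identity extrinsics, 2048 x 1024 image.
points = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 2.0]])
pixels, ranges = project_to_equirectangular(points, np.eye(3), np.zeros(3), 2048, 1024)
```

Each projected pixel carries the measured range, so the result is a sparse depth image on the camera's pixel grid. The gaps between the projected points are what the resolution matching step has to fill.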

For example, De Silva et al. (2018) address the problem of fusing the outputs of a Light Detection and Ranging (LiDAR) scanner and a wide-angle monocular image sensor for free space detection. The outputs of the LiDAR scanner and the image sensor have different spatial resolutions and need to be aligned with each other. The results indicate that the proposed sensor data fusion framework significantly aids the subsequent perception steps, as illustrated by the performance improvement of an uncertainty-aware free space detection algorithm.
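The resolution mismatch can be bridged by GP regression, which predicts a value at every pixel together with a variance that grows away from the measurements. The following is a minimal NumPy sketch, assuming a squared-exponential kernel and a constant prior mean. The kernel choice, the hyperparameter values and the function names are illustrative assumptions and are not taken from the cited work.

```python
import numpy as np

def rbf_kernel(a, b, length_scale, variance):
    """Squared-exponential kernel between point sets of shape (n, d) and (m, d)."""
    sq_dist = (np.sum(a**2, axis=1)[:, None]
               + np.sum(b**2, axis=1)[None, :]
               - 2.0 * a @ b.T)
    return variance * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)

def gp_interpolate(x_train, y_train, x_query,
                   length_scale=2.0, variance=1.0, noise=1e-4):
    """Return the GP posterior mean and variance at the query locations."""
    # Use the sample mean as a constant prior mean (an assumption).
    prior_mean = y_train.mean()
    y_centered = y_train - prior_mean

    k_tt = rbf_kernel(x_train, x_train, length_scale, variance)
    k_tt += noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train, length_scale, variance)

    # Cholesky factorisation for a numerically stable solve.
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_centered))
    mean = prior_mean + k_qt @ alpha

    v = np.linalg.solve(chol, k_qt.T)
    var = variance - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)

# Sparse depth samples at four pixel locations (hypothetical values, in metres).
x_train = np.array([[0.0, 0.0], [0.0, 4.0], [4.0, 0.0], [4.0, 4.0]])
y_train = np.array([2.0, 2.2, 2.1, 2.3])
# Query at a measured pixel, between measurements, and far from all of them.
x_query = np.array([[0.0, 0.0], [2.0, 2.0], [20.0, 20.0]])
mean, var = gp_interpolate(x_train, y_train, x_query)
```

The variance is smallest at the measured pixel, larger between measurements, and close to the prior variance far from any LiDAR return. This is the quantity an uncertainty-aware perception step can use to decide how much to trust the interpolated depth.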

Figure 1: Projection of LiDAR data onto the spherical video frame.

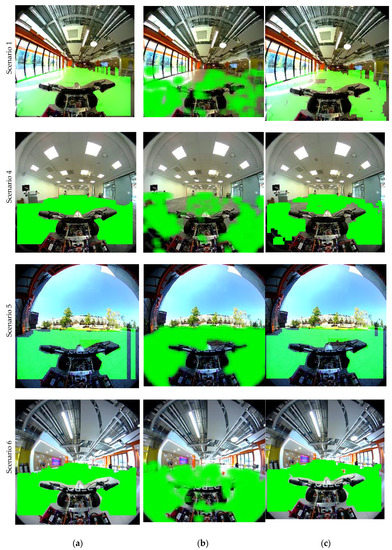

Figure 2: Comparison of free-space maps for different algorithms for three different scenarios. (a) LiDAR only; (b) Image classifier only; (c) Fusion based approach.

The benefits of the proposed fusion-based approach to free space detection (FSD) are illustrated in Figure 2. For example, in Figure 2a, for Scenario 1, when the image-based classifier fails to detect an extremely bright area as free space, the fusion approach also treats it as non-free space. This area corresponds to a space in the LiDAR’s blind spot. Similarly, for Scenario 6 in Figure 2, the ball that is thrown across the vehicle falls within the LiDAR’s blind spot. However, the ball is detected as an obstacle by the image-based FSD algorithm, so when the results are fused, the ball is still captured as an obstacle. Scenario 1 corresponds to a situation where the image-based FSD does not perform well. In this example, due to high saturation and mirroring on the floor, the area just next to the glass windows is not classified as free space (i.e., the classifier fails). However, the LiDAR information in this region has high confidence, and hence the fused image combines the two areas based on their uncertainty. Scenario 5 illustrates a different situation where, due to an airborne obstacle (e.g., the experimenter’s hand) that is visible only to the LiDAR, the LiDAR-based FSD reports an obstacle on the floor. However, the image-based FSD performs very well on this occasion, and the fused image retains the best of the individual results.
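The behaviour described in these scenarios, where the more confident sensor dominates each region, can be sketched as a per-pixel inverse-variance weighting of two free-space probability maps. This rule is a common stand-in chosen for illustration and is not necessarily the fusion rule used in the cited work. The function name `fuse_free_space` and all numerical values are hypothetical.

```python
import numpy as np

def fuse_free_space(p_lidar, var_lidar, p_image, var_image, eps=1e-6):
    """Fuse two per-pixel free-space probability maps by inverse-variance weighting.

    A sensor with a large variance at a pixel (e.g. a LiDAR blind spot)
    contributes almost nothing to the fused value at that pixel.
    """
    w_lidar = 1.0 / (var_lidar + eps)
    w_image = 1.0 / (var_image + eps)
    fused = (w_lidar * p_lidar + w_image * p_image) / (w_lidar + w_image)
    fused_var = 1.0 / (w_lidar + w_image)
    return fused, fused_var

# Pixel 0: LiDAR is confident the floor is free, the image classifier is not
#          (e.g. a saturated region next to a window).
# Pixel 1: LiDAR blind spot, the image classifier confidently sees an obstacle.
p_lidar = np.array([0.9, 0.5])
var_lidar = np.array([0.01, 1e6])
p_image = np.array([0.2, 0.1])
var_image = np.array([1.0, 0.01])

fused, fused_var = fuse_free_space(p_lidar, var_lidar, p_image, var_image)
free_space_mask = fused > 0.5  # hypothetical decision threshold
```

With these inputs the first pixel follows the LiDAR and is marked as free space, while the second follows the image classifier and is marked as an obstacle.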

The advantages of the proposed data fusion framework are illustrated through a performance analysis of a free space detection algorithm. It was demonstrated that perception tasks in autonomous vehicles and mobile robots can be significantly improved by multimodal data fusion, compared with single-sensor perception. Compared with extrinsic calibration methods, the main novelty of the proposed approach is its ability to fuse multimodal sensor data while accounting for the different forms of uncertainty associated with the different sensor data streams (resolution mismatches, missing data, and blind spots). [1]